SoftMimicGen: A Data Generation System for Scalable Robot Learning in Deformable Object Manipulation

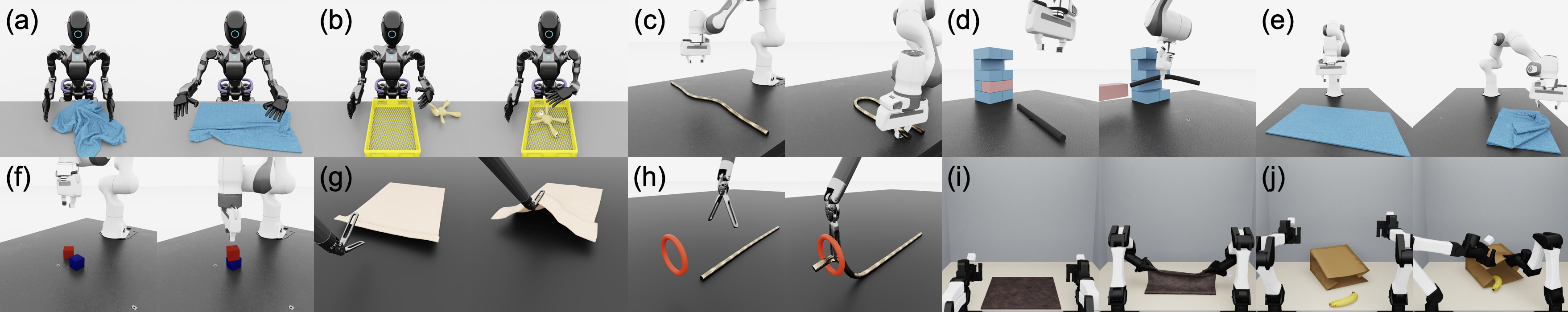

Abstract: Large-scale robot datasets have facilitated the learning of a wide range of robot manipulation skills, but these datasets remain difficult to collect and scale further, owing to the intractable amount of human time, effort, and cost required. Simulation and synthetic data generation have proven to be an effective alternative to fuel this need for data, especially with the advent of recent work showing that such synthetic datasets can dramatically reduce real-world data requirements and facilitate generalization to novel scenarios unseen in real-world demonstrations. However, this paradigm has been limited to rigid-body tasks, which are easy to simulate. Deformable object manipulation encompasses a large portion of real-world manipulation and remains a crucial gap to address towards increasing adoption of the synthetic simulation data paradigm. In this paper, we introduce SoftMimicGen, an automated data generation pipeline for deformable object manipulation tasks. We introduce a suite of high-fidelity simulation environments that encompasses a wide range of deformable objects (stuffed animal, rope, tissue, towel) and manipulation behaviors (high-precision threading, dynamic whipping, folding, pick-and-place), across four robot embodiments: a single-arm manipulator, bimanual arms, a humanoid, and a surgical robot. We apply SoftMimicGen to generate datasets across the task suite, train high-performing policies from the data, and systematically analyze the data generation system. Project website: https://softmimicgen.github.io

Explain it Like I'm 14

What is this paper about?

This paper introduces SoftMimicGen, a system that helps robots learn to handle soft, bendy things—like towels, ropes, and threads—by creating lots of practice examples in a computer simulator. Instead of needing thousands of hours from human operators, the system starts with just a few human demonstrations and automatically generates many more high‑quality examples. Robots then learn from these examples and can even use what they learn in the real world.

What are the main goals?

The researchers set out to:

- Build a way to automatically generate robot training data for soft (deformable) objects, not just hard (rigid) ones.

- Create realistic simulated tasks involving different soft objects and different types of robots (one‑arm, two‑arm, humanoid, and a surgical robot).

- Train robot policies (the robot’s “brains” for how to move) using the generated data and see how well they perform in both simulation and the real world.

- Compare their system to older methods and study what makes it work better.

How does SoftMimicGen work? (Method, in simple terms)

Think of teaching a friend to fold a towel: you show them once or twice, then they practice in many different towel shapes and starting positions until they get good. SoftMimicGen does this for robots.

Here’s the basic idea:

- A human teleoperates a robot a few times to show how to do a task (like folding a towel or threading a string).

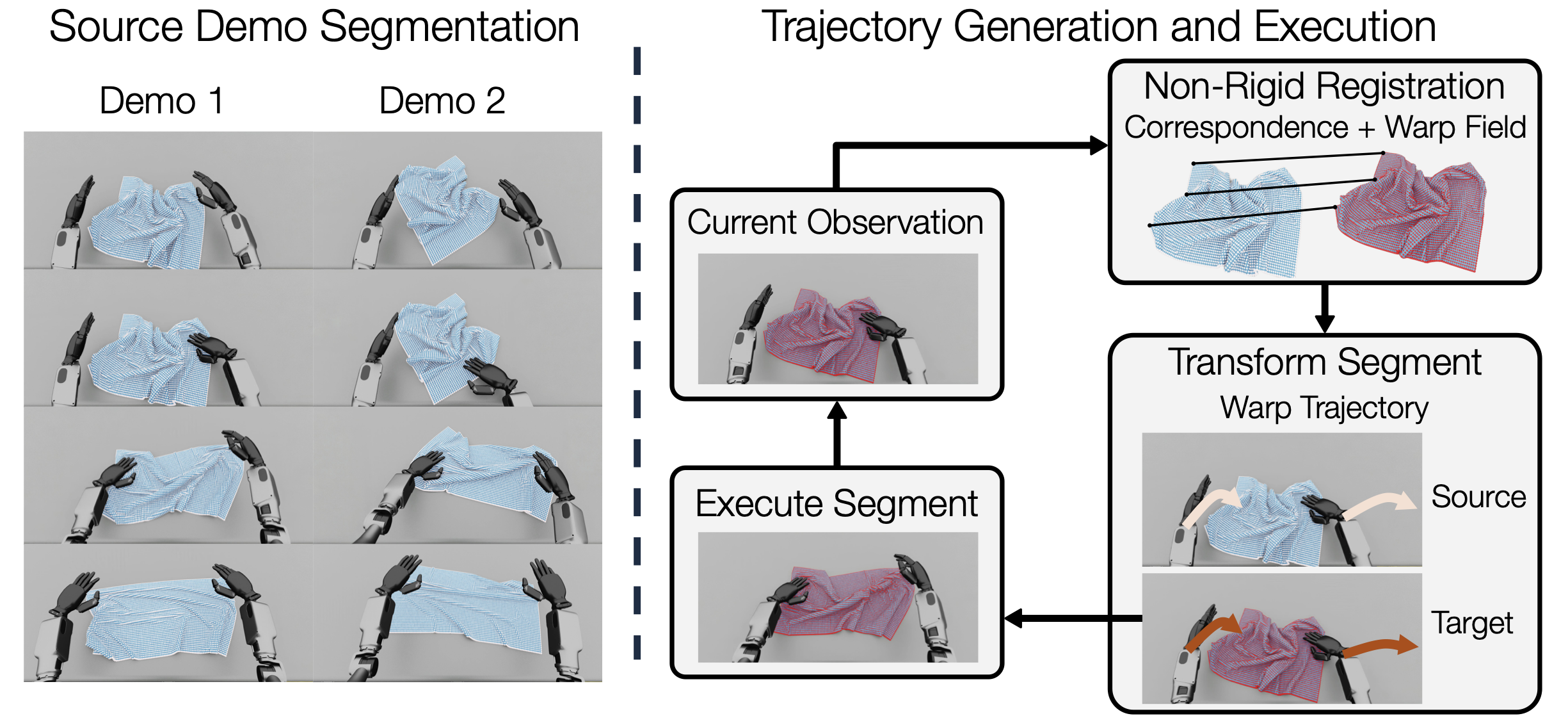

- The system breaks each demonstration into smaller steps (subtasks), like “reach the towel,” “grasp,” “lift,” “fold.”

- In a new scene (where the towel or rope might be in a different shape), the system “matches” the new soft object to the one from the original demo using non‑rigid registration.

What’s “non‑rigid registration”? Imagine a transparent, stretchy grid laid over a crumpled towel. To match one towel shape to another, you stretch and slide the grid so points on one towel line up with points on the other. This creates a “warp field” that tells you how every point moves from the old shape to the new shape. SoftMimicGen uses this warp to bend and adjust the robot’s hand path too—so it still performs the right motions even though the object’s shape has changed.
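As a rough illustration of the warp-field idea, the sketch below fits a smooth displacement field to matched points with Gaussian radial basis functions and applies it to a gripper waypoint. It assumes the point correspondences are already known, which real non-rigid registration has to estimate; the kernel choice, `sigma`, `reg`, and all function names are illustrative assumptions, not the registration method used in the paper.

```python
import numpy as np

def fit_warp(src, dst, sigma=0.2, reg=1e-8):
    """Fit a smooth warp f with f(src[i]) ~= dst[i] using Gaussian RBFs.

    Assumes src[i] and dst[i] are already matched; real non-rigid
    registration has to estimate these correspondences.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    # Kernel matrix between all pairs of source points.
    d2 = ((src[:, None, :] - src[None, :, :]) ** 2).sum(axis=-1)
    K = np.exp(-d2 / (2.0 * sigma**2))
    # Weights that reproduce the observed displacements (tiny ridge for stability).
    W = np.linalg.solve(K + reg * np.eye(len(src)), dst - src)

    def warp(points):
        # Accepts one point or an (n, 3) array; always returns an (n, 3) array.
        pts = np.atleast_2d(np.asarray(points, dtype=float))
        d2q = ((pts[:, None, :] - src[None, :, :]) ** 2).sum(axis=-1)
        return pts + np.exp(-d2q / (2.0 * sigma**2)) @ W

    return warp

# Toy example: a straight rope (source) versus a gently curved rope (target).
x = np.linspace(0.0, 1.0, 6)
zeros = np.zeros_like(x)
source_rope = np.stack([x, zeros, zeros], axis=1)
target_rope = source_rope + np.stack([zeros, 0.2 * x**2, zeros], axis=1)

warp = fit_warp(source_rope, target_rope)
# A gripper waypoint hovering just above the rope's far end follows the bend.
warped_waypoint = warp([1.0, 0.0, 0.05])[0]
```

In this toy setup the waypoint shifts sideways by nearly the same amount as the rope end beneath it, while points far from the rope are left essentially untouched because the Gaussian kernel decays with distance.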

Putting it together:

- Start with a few human examples.

- For each new randomized scene, compute how the soft object’s new shape relates to the old one (the warp).

- Warp the robot’s original motions to fit the new situation.

- Execute and record the new successful run as a fresh training example.

- Repeat to generate a large dataset.
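The loop described in the steps above can be sketched as follows. This is a minimal toy under stated assumptions: `generate_dataset`, `sample_scene`, `register`, and `execute` are hypothetical names, the "registration" is a translation-only stand-in for non-rigid registration, and the success check is a made-up workspace test rather than a simulator rollout.

```python
import random

def generate_dataset(source_demos, sample_scene, register, execute,
                     num_target, max_attempts=10_000, seed=0):
    """Warp source demos into random scenes and keep only successful runs."""
    rng = random.Random(seed)
    dataset, attempts = [], 0
    while len(dataset) < num_target and attempts < max_attempts:
        attempts += 1
        scene = sample_scene(rng)                 # new randomized scene
        demo = rng.choice(source_demos)           # pick a human demo
        warp = register(demo["object_points"], scene["object_points"])
        trajectory = [warp(p) for p in demo["trajectory"]]  # adapt the motion
        if execute(scene, trajectory):            # keep successes only
            dataset.append({"scene": scene, "trajectory": trajectory})
    return dataset, attempts

# --- Toy stand-ins (assumptions for illustration only) ---
def sample_scene(rng):
    dx = rng.uniform(-1.0, 1.0)
    return {"object_points": [(x + dx, 0.0) for x in (0.0, 0.5, 1.0)]}

def register(src_pts, dst_pts):
    # Translation-only alignment; a real system would estimate a non-rigid warp.
    n = len(src_pts)
    tx = sum(d[0] - s[0] for s, d in zip(src_pts, dst_pts)) / n
    ty = sum(d[1] - s[1] for s, d in zip(src_pts, dst_pts)) / n
    return lambda p: (p[0] + tx, p[1] + ty)

def execute(scene, trajectory):
    # Success if the motion stays in a toy workspace and ends on the object.
    gx, gy = scene["object_points"][0]
    fx, fy = trajectory[-1]
    in_workspace = all(abs(px) <= 0.8 for px, _ in trajectory)
    return in_workspace and abs(fx - gx) < 1e-6 and abs(fy - gy) < 1e-6

source_demos = [{
    "object_points": [(0.0, 0.0), (0.5, 0.0), (1.0, 0.0)],
    "trajectory": [(0.0, 0.5), (0.0, 0.1), (0.0, 0.0)],
}]
dataset, attempts = generate_dataset(source_demos, sample_scene, register,
                                     execute, num_target=20)
```

Because failed attempts are discarded, `attempts` is at least as large as the number of kept demonstrations; the gap between the two is one simple way to track generation success rate.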

Finally, the team trains robot policies using imitation learning (think “monkey see, monkey do” for robots): the policy looks at sensor inputs (like images or points) and learns to output the right motions, copying the good examples it’s seen.
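To make the "copy the examples" idea concrete, here is a minimal behavioral-cloning sketch that fits a linear policy by least squares. The paper's policies (BC-RNN-GMM and Diffusion Policy) are far more expressive; the linear model, the synthetic "expert," and every name below are assumptions chosen only to keep the example short. Least squares corresponds to maximum likelihood under a fixed-variance Gaussian action model.

```python
import numpy as np

def fit_linear_bc_policy(observations, actions):
    """Least-squares behavioral cloning with a linear policy a = [o, 1] @ W."""
    obs = np.asarray(observations, dtype=float)
    act = np.asarray(actions, dtype=float)
    obs_aug = np.hstack([obs, np.ones((len(obs), 1))])  # append a bias feature
    weights, *_ = np.linalg.lstsq(obs_aug, act, rcond=None)

    def policy(o):
        return np.append(np.asarray(o, dtype=float), 1.0) @ weights

    return policy

# Synthetic demonstrations from a made-up linear "expert" (illustration only).
rng = np.random.default_rng(0)
demo_obs = rng.uniform(-1.0, 1.0, size=(200, 3))
expert_gain = np.array([[1.0, 0.0], [0.0, -2.0], [0.5, 0.5]])
expert_bias = np.array([0.1, -0.1])
demo_actions = demo_obs @ expert_gain + expert_bias

policy = fit_linear_bc_policy(demo_obs, demo_actions)
predicted_action = policy(demo_obs[0])
```

With noise-free demonstrations from a linear expert, the fitted policy reproduces the expert's actions; with real demonstrations, more data covering more starting conditions is what lets the learned policy generalize.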

What did they find, and why is it important?

Main findings:

- With only 1–3 human demos per task, SoftMimicGen can automatically generate about 1,000 successful training examples per task across many different starting conditions.

- Policies trained on this synthetic data perform much better than policies trained only on the few human demos—often jumping from very low success to high success (for many tasks, from single digits to 70–100% in simulation).

- The method works across many robots and tasks: folding towels, shaping ropes, threading a ring, gently manipulating tissue with a surgical robot, moving a plush toy, even classic rigid tasks like stacking a cube.

- Compared to an earlier approach (MimicGen), SoftMimicGen does far better on soft-object tasks because it can “warp” motions for changing shapes. For example, in a rope‑shaping task, the older method succeeded in only 4/50 cases, while SoftMimicGen succeeded in 49/50.

- More data helps: larger generated datasets generally lead to better policies.

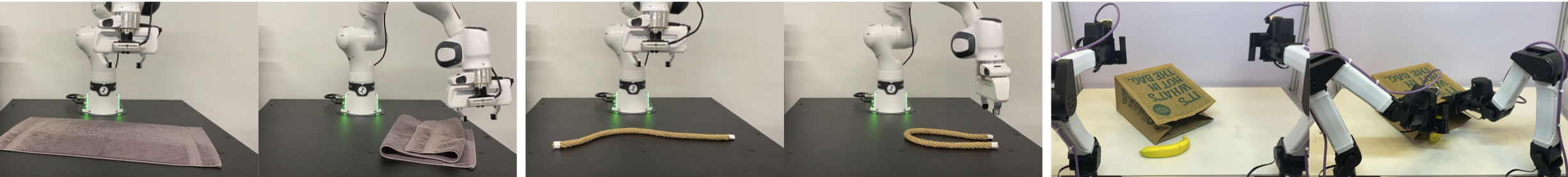

- Real‑world tests show that policies trained on SoftMimicGen’s simulated data can work without extra real‑world training (zero‑shot transfer) and get even better when mixed with a small amount of real data (co‑training).

Why it matters:

- Collecting robot demonstrations by hand is slow and expensive. SoftMimicGen cuts down the human time needed by turning a few demos into thousands of practice runs.

- Soft objects are everywhere in daily life (clothes, cables, bags, food items) and in hospitals (tissue, sutures). Making robots better at handling them is a big step toward useful home, factory, and medical robots.

- The team also releases a set of high‑quality simulated tasks, which helps other researchers train and test their own systems.

What’s the impact and what could come next?

- Faster progress: By making data collection cheaper and easier, this approach can speed up research in robot learning and help build more capable “foundation models” for robots that handle both hard and soft objects.

- Broader use: The same method works on different robots (one‑arm, two‑arm, humanoid, surgical), so it’s flexible for many settings.

- Real‑world readiness: Showing zero‑shot transfer and improvements with small real datasets suggests a practical path to deployable systems.

Limitations and future directions:

- Right now, the tasks are broken into a fixed sequence of steps. Many real jobs are messier and may require retrying steps or changing plans on the fly. Extending the system to handle flexible, branching task structures is a promising next step.

In short, SoftMimicGen helps robots learn tricky, contact‑rich skills with soft objects by turning a handful of human demos into a huge, useful practice set—making robot training faster, cheaper, and more effective.

Knowledge Gaps

Key knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains uncertain or unexplored in the paper and could guide future research.

- Dependence on ground-truth deformable state in simulation: Data generation assumes access to full, noise-free nodal positions of deformables (A3). How to perform registration and trajectory warping from partial, noisy RGB-D point clouds (with occlusions) in both sim and real, and what is the resulting degradation in generation quality?

- Fixed subtask sequences and limited task structure: SoftMimicGen assumes a predetermined subtask ordering and single-object focus per subtask (A2). How to extend to flexible task graphs with conditional branching, retries, and failure recovery, especially for less-structured deformable tasks?

- Open-loop execution of warped trajectories: The system warps and then executes trajectories with minimal feedback (consecutive segments are connected by linear interpolation). How to incorporate closed-loop, reactive adaptation (e.g., online re-registration, vision-based corrections, force feedback) to handle unmodeled dynamics and disturbances during execution?

- Robustness of non-rigid registration: The paper does not analyze registration failure modes under large deformations, self-contact, topology changes (e.g., knotting, threading through constrained rings), or substantial geometry/material differences. What are the conditions under which the warp field becomes inaccurate, and how can registration be stabilized or constrained?

- Computational scalability and throughput: No runtime/complexity analysis is provided for registration and trajectory warping at dataset scale. What is the time and memory cost per segment, per demo, and per 1,000 demos; how does it scale with mesh/point density; and what GPU/CPU optimizations are required?

- Generalization across object instances and materials: It is unclear how well warped trajectories transfer across varying cloth sizes/thicknesses, rope lengths, stiffness, damping, or friction. What parameter randomizations and calibrations are necessary to achieve robust sim-to-real for deformables with diverse material properties?

- Handling dynamic, contact-rich tasks: For tasks like whipping, the method replays warped kinematics without guaranteeing dynamic feasibility or timing alignment. How to ensure time scaling, phase alignment, and dynamic constraints (e.g., momentum, impact) are respected after warping?

- Kinematic feasibility and collision safety: Warped poses may be unreachable or induce collisions. How to integrate motion planning or constraint solving (e.g., with SkillMimicGen-style planners) to project warped trajectories into the robot’s feasible, collision-free space while preserving task-relevant deformation correspondences?

- Multi-arm and multi-object coordination: The approach assumes per-subtask single-object focus; coordination across two manipulators acting on the same deformable (or multiple deformables/rigids) is not analyzed. How to derive consistent correspondences and joint warps for simultaneous, coordinated manipulation?

- Finger and hand configuration adaptation: For embodiments with dexterous hands, the paper does not specify how finger joint trajectories are adapted. How to warp hand shape/grasps (not just wrist pose) to preserve contact geometry and grasp stability across varying object deformations?

- Automatic segmentation and correspondence discovery: Segmentation relies on manual annotations or heuristics. How to automatically discover object-centric subtasks and correspondences for deformables from raw teleop data, and how sensitive is generation quality to segmentation errors?

- Source segment selection at scale: Selection uses registration cost as a nearest-neighbor metric. How to design learned retrieval or indexing that scales to large libraries and balances similarity, coverage, and diversity; and how does selection policy affect downstream policy performance?

- Data quality and failure analytics: The paper reports policy performance but not per-task generation success rates, failure modes, or filters used to remove bad demos. How to automatically detect, diagnose, and correct failed or low-quality generated demonstrations at scale?

- Comparison breadth and ablations: Empirical comparisons are limited (e.g., vs. MimicGen on one rope task). How does SoftMimicGen compare against alternative registration methods (CPD variants), learned deformation fields, trajectory optimization/planning baselines, or generative action models across tasks, and how sensitive is performance to registration hyperparameters?

- Sensing and perception pipeline robustness (real-world): Real-world deployment uses Point Bridge for point extraction, but robustness to clutter, lighting changes, and occlusions is not quantified. How does perception quality affect control performance, and what redundancy or self-supervised refinement can mitigate perception errors?

- Policy learning scope and metrics: Only BC-RNN-GMM and Diffusion Policy are tested, and success is reported as max over seeds (not mean/variance). How do other learning paradigms (e.g., VLA models, offline RL, world models) perform on SoftMimicGen data; how stable are results across seeds; and what is the sample efficiency vs. number of source demos?

- Domain randomization and visual realism: The paper does not specify visual/physics randomizations used in simulation or their impact on sim-to-real. What combinations of appearance, lighting, camera, and physics randomizations most improve real-world transfer for deformables?

- Topology-aware registration for constrained tasks: Non-rigid registration assumes consistent topology; threading through a ring or self-contact introduces constraints. How to incorporate topological/constraint-aware registration or correspondence pruning to avoid invalid warps?

- Real-world breadth and embodiment coverage: Real-world tests are limited to three tasks and do not include humanoid or surgical robots. How well does the approach transfer to these embodiments and more complex deformable tasks in the physical world?

- Orientation warping and Jacobian conditioning: Orientation is derived via Jacobian-based orthonormalization, but behavior near singular/ill-conditioned regions is not discussed. How to regularize or reparameterize orientation updates to prevent flips/instabilities in warped poses?

- Force/tactile considerations: The approach uses pose/gripper commands without modeling force, impedance, or tactile feedback—critical for deformables. How to incorporate force/impedance control and tactile sensing during generation and policy learning to improve robustness?

- Multiple deformables and granular/fluids: The task suite focuses on cloth, rope, tissue, and a plush. Extending to interactions with multiple deformables simultaneously, granular media, gels, or fluids remains unaddressed. What adaptations to representation and registration are needed?

- Reproducibility of registration choices: The paper references prior non-rigid registration work but omits concrete algorithmic choices, parameter settings, and implementation details. What configurations are necessary for reproducibility across tasks and embodiments?

- Online generation and lifelong learning: The pipeline generates datasets offline. Can warped, closed-loop generation be used online to continually adapt to new instances/scenes and incrementally expand the dataset without resets?
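The orientation-warping item in the list above mentions Jacobian-based orthonormalization. One plausible reading, sketched below under stated assumptions, is to push the end-effector rotation through the warp's local Jacobian and project the result back onto a valid rotation. The finite-difference Jacobian, the SVD-based projection, and the function names are illustrative choices; the paper's exact procedure is not specified here.

```python
import numpy as np

def numerical_jacobian(warp, p, eps=1e-5):
    """Central-difference Jacobian of a warp mapping a 3-vector to a 3-vector."""
    p = np.asarray(p, dtype=float)
    J = np.zeros((3, 3))
    for k in range(3):
        step = np.zeros(3)
        step[k] = eps
        J[:, k] = (warp(p + step) - warp(p - step)) / (2.0 * eps)
    return J

def warp_pose(warp, position, rotation):
    """Warp a pose: move the position, then re-orthonormalize J @ R via SVD."""
    J = numerical_jacobian(warp, position)
    U, _, Vt = np.linalg.svd(J @ np.asarray(rotation, dtype=float))
    # Flip the last axis if needed so the result is a proper rotation (det = +1).
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(U @ Vt))])
    return warp(np.asarray(position, dtype=float)), U @ D @ Vt

# Toy warp: rotate 90 degrees about z, scale by 2, then translate.
Rz = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
t = np.array([0.1, 0.2, 0.3])
toy_warp = lambda p: 2.0 * Rz @ p + t

new_position, new_rotation = warp_pose(toy_warp, [0.5, 0.0, 0.0], np.eye(3))
```

For this toy warp the scaling is discarded and the recovered orientation is the pure 90-degree rotation. When the Jacobian is nearly singular (for example, `np.linalg.cond(J)` is very large), the projection becomes sensitive to small perturbations, which is the conditioning concern the item raises.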

Practical Applications

Immediate Applications

- Reduce data-collection costs for deformable manipulation

  - Sectors: robotics software, R&D operations (industry and academia)

  - What it enables: Replace months of teleoperation with 1–10 seed demos plus large-scale synthetic generation to train performant policies for deformable tasks

  - Tools/workflows: SoftMimicGen + Isaac Lab simulation suite; BC-RNN-GMM or Diffusion Policy training; optional co-training with limited real data

  - Assumptions/dependencies: Access to GPU-accelerated simulator with soft-body support; small number of high-quality teleop demos; subtask segmentation; compute budget for large-scale generation/training

- Rapid training for warehouse/retail bagging and order fulfillment (bag opening, stuffing soft goods)

  - Sectors: logistics, retail automation, e-commerce fulfillment

  - What it enables: Train bimanual policies for opening bags and loading deformable items (clothing, produce) with minimal real demos

  - Tools/workflows: YAM bimanual task templates; SoftMimicGen data generation; sim-to-real transfer via point-cloud pipelines (e.g., Point Bridge)

  - Assumptions/dependencies: Robust perception for thin, glossy bags; gripper/end-effector able to achieve bag opening; domain randomization to cover bag shapes/materials

- Household service tasks: towel folding/unfolding, tidying soft toys

  - Sectors: consumer/home robotics, hospitality

  - What it enables: Train household robot skills for laundry and tidying using synthetic data from diverse initial states; reduce on-robot data collection

  - Tools/workflows: Franka/GR1 towel and teddy tasks; SoftMimicGen-generated datasets; vision-based BC/Diffusion policies

  - Assumptions/dependencies: Reliable RGB-D sensing; safety/interaction constraints in home; material and texture gap between sim and real mitigated via point-based representations and domain randomization

- Cable/rope shaping for assembly (e.g., routing hoses, wiring harnesses)

  - Sectors: manufacturing (automotive, aerospace, electronics)

  - What it enables: Train rope-like manipulation for routing and bundling using simulation breadth (varying lengths, stiffness, clutter)

  - Tools/workflows: Rope manipulation task; large-scale generation across randomized initial states; fine-tune on a small, supervised real dataset

  - Assumptions/dependencies: Accurate modeling of friction/stiffness; specialized fixtures and grippers for slender objects; safety interlocks

- Surgical skill pretraining and benchmarking (tissue retraction, threading)

  - Sectors: healthcare/medical robotics, surgical training

  - What it enables: Pretrain bimanual surgical policies and assess controllers on standardized deformable benchmarks before benchtop or animal/cadaver studies

  - Tools/workflows: dVRK tissue and threading tasks; SoftMimicGen generation; offline BC; protocolized evaluation

  - Assumptions/dependencies: Anatomical realism and material parameters; regulatory oversight; careful sim-to-real validation; use for training/assistive autonomy rather than unsupervised fully autonomous care

- Augment robot foundation models with deformable manipulation data

  - Sectors: AI for robotics, foundation model development

  - What it enables: Co-train VLA/flow models on rigid + deformable synthetic datasets to improve generalization and coverage

  - Tools/workflows: Batch generation across task suite; dataset curation and labeling; integration with existing corpora (e.g., Open X-Embodiment)

  - Assumptions/dependencies: Data schemas compatible with current FMs; domain gap bridging strategies (e.g., point-cloud or image augmentations)

- QA and stress testing of manipulation policies

  - Sectors: robotics QA, safety and reliability engineering

  - What it enables: Generate hard edge-case initial states (crumpled towels, tangled ropes) to evaluate robustness pre-deployment

  - Tools/workflows: SoftMimicGen scenario generation; automated regression tests; structured success/failure logging

  - Assumptions/dependencies: Coverage of realistic failure modes; accurate contact modeling; defined pass/fail metrics per task

- Gripper and end-effector design iteration for deformable tasks

  - Sectors: robotics hardware design

  - What it enables: Simulate and compare candidate grippers on standardized deformable tasks using identical synthetic datasets and policies

  - Tools/workflows: Parametric gripper models; SoftMimicGen-generated training data; A/B tests via trained visuomotor controllers

  - Assumptions/dependencies: Contact and compliance fidelity in sim; manufacturability and safety of chosen designs

- Academic benchmarking and coursework

  - Sectors: academia (robotics, control, ML)

  - What it enables: Reproducible benchmarks and assignments on deformable manipulation with provided environments and a clear data-generation pipeline

  - Tools/workflows: Isaac Lab environments; SoftMimicGen codebase; standard BC/Diffusion implementations; open protocols for reporting

  - Assumptions/dependencies: Access to GPUs; licensing for simulator assets; instructors provide seed demos or adopt shared seeds

- In-lab operator training with low-friction teleoperation

  - Sectors: workforce training (labs, startups)

  - What it enables: Use AR/VR (e.g., Apple Vision Pro) to collect the minimal seed demos that bootstrap large synthetic datasets; shorten ramp-up

  - Tools/workflows: Teleoperation capture of wrist/gripper/finger trajectories; automated segmentation into subtasks; SoftMimicGen expansion

  - Assumptions/dependencies: Teleop hardware availability; safe human-in-the-loop procedures; basic segmentation heuristics or light annotations

Long-Term Applications

- General-purpose household robots reliably manipulating diverse textiles and soft goods

  - Sectors: consumer robotics, eldercare, hospitality

  - What it enables: Robust, broad-coverage handling of clothing, bedding, plush toys via foundation models co-trained on large deformable corpora

  - Tools/workflows: Multi-embodiment SoftMimicGen datasets; VLA/flow models; continual sim-to-real adaptation

  - Assumptions/dependencies: Improved perception of thin/reflective fabrics; richer material models; long-horizon task structure beyond fixed subtask sequences

- Assistive autonomy in surgery for soft-tissue tasks (e.g., retraction, suturing assistance)

  - Sectors: healthcare

  - What it enables: Certified assistive controllers that reduce surgeon workload by handling repeatable subtasks learned from massive synthetic + limited real data

  - Tools/workflows: High-fidelity anatomical sims; SoftMimicGen generation; closed-loop safety monitors; human-in-the-loop oversight

  - Assumptions/dependencies: Regulatory approval, rigorous validation, sim fidelity to patient variability, reliable sensing and fail-safes

- Standardized community benchmarks and datasets for deformable manipulation

  - Sectors: academia, industry consortia

  - What it enables: Cross-lab comparable evaluation; progress tracking similar to rigid benchmarks; shared assets and leaderboards

  - Tools/workflows: Curated tasks and metrics; public SoftMimicGen datasets; open-source evaluation suites

  - Assumptions/dependencies: Community governance, licensing of realistic assets, sustained maintenance

- Cloud “Synthetic Data-as-a-Service” for deformable manipulation

  - Sectors: robotics platforms, data providers

  - What it enables: On-demand generation of task- and embodiment-specific deformable datasets for startups and labs

  - Tools/workflows: Hosted SoftMimicGen pipelines; asset libraries; API for demo upload and dataset retrieval; optional domain randomization tuning

  - Assumptions/dependencies: Compute costs and data egress; IP around assets and customer demos; privacy/security

- Co-optimization of hardware and policy using synthetic deformable tasks

  - Sectors: robotics hardware, product engineering

  - What it enables: Joint search over gripper geometry, compliance, and control policies against diverse deformable workloads

  - Tools/workflows: Parametric CAD-to-sim loop; automated data generation; Bayesian optimization or differentiable design

  - Assumptions/dependencies: Differentiable or surrogate models of contact; sim fidelity; integration with manufacturing constraints

- Real-time non-rigid registration controllers on robots

  - Sectors: robotics control, perception

  - What it enables: Deploy warp-field based closed-loop controllers (not just for data generation) using robust online point-cloud registration

  - Tools/workflows: Depth/RGB-D sensing; fast, noise-robust non-rigid registration; hybrid model-predictive control

  - Assumptions/dependencies: Perception improvements for thin/textureless materials; compute at the edge; fusion of tactile/vision for stability

- Digital twins for textile and soft-goods manufacturing and laundry automation

  - Sectors: manufacturing, commercial laundry, circular economy

  - What it enables: Plan, simulate, and train for SKU-specific fabric handling (folding, sorting, packing) before plant deployment

  - Tools/workflows: Plant-scale digital twins; SoftMimicGen scenario generation; pipeline for rapid on-site fine-tuning

  - Assumptions/dependencies: Accurate material databases; integration with MES/ERP; robust robotization of legacy stations

- Policy and standards for synthetic-data validation in safety-critical robotics

  - Sectors: policy/regulation, standards bodies

  - What it enables: Guidance on acceptable use of synthetic data for certification, testing protocols for deformable manipulation

  - Tools/workflows: Reference suites, reproducible scenarios, traceable metrics; audit trails for dataset provenance

  - Assumptions/dependencies: Consensus on metrics; stakeholder engagement (manufacturers, regulators, clinicians)

- On-site rapid adaptation: few real demos + cloud generation loop

  - Sectors: field robotics, deployment services

  - What it enables: Robots encounter new soft objects (novel garments, packaging); collect a handful of demos; auto-generate thousands in the cloud and redeploy within hours

  - Tools/workflows: Edge teleop capture; cloud SoftMimicGen; continuous integration for policy updates

  - Assumptions/dependencies: Reliable connectivity; MLOps for robotics; safe hot-swapping of policies

- Multi-embodiment robot foundation models with strong deformable skills

  - Sectors: AI/ML, platform robotics

  - What it enables: Train unified models (arms, humanoids, surgical robots) that transfer deformable skills across embodiments and tasks

  - Tools/workflows: Cross-embodiment SoftMimicGen datasets (Franka, YAM, GR1, dVRK); standardized action spaces or embodiment adapters

  - Assumptions/dependencies: Scalable training on multimodal/multi-embodiment data; alignment with existing open datasets; evaluation across varied hardware

Notes on key dependencies impacting feasibility across applications:

- Simulator fidelity to real material properties and contacts, and ability to randomize textures/geometries

- Quality and diversity of seed demonstrations and subtask segmentation

- Robust perception for thin, glossy, and textureless deformables; point-cloud extraction and masking at deployment

- Suitable end-effectors (compliance, tactile sensing) and controller bandwidth for dynamic, contact-rich tasks

- Safety, regulatory, and ethical considerations for sensitive domains (healthcare, home environments)

- Compute budgets for large-scale generation and training, and MLOps to manage continuous dataset curation and deployment

Glossary

- Behavioral Cloning (BC): An imitation learning approach that trains a policy to mimic expert actions via supervised learning. "Policies are trained using Behavioral Cloning~\cite{pomerleau1989alvinn} via the maximum likelihood objective"

- bimanual: Involving two arms manipulating simultaneously. "DexMimicGen~\cite{jiang2024dexmimicgen} extended this approach to bimanual manipulation"

- co-training (sim–real co-training): Jointly training policies on both simulated and real datasets to improve performance. "and are further improved via sim-real co-training."

- contact-rich manipulation: Tasks requiring sustained or complex physical contact interactions between robot and objects. "tasks that require dynamic, contact-rich manipulation."

- continuous deformation field: A smooth, spatially varying mapping that warps one shape configuration to another. "and how the resultant continuous deformation field can be used to warp source demonstrations"

- deformable object manipulation: Robotic handling of objects whose shapes change under forces, like cloth or rope. "In this paper, we introduce SoftMimicGen, an automated data generation pipeline for deformable object manipulation tasks."

- Diffusion Policy: A visuomotor policy class that generates actions by denoising from a diffusion process. "Each resulting dataset is used to train visuomotor policies using two imitation learning approaches: BC-RNN-GMM~\cite{mandlekar2021matters} and Diffusion Policy~\cite{chi2023diffusion}."

- end-effector: The robot’s tool or gripper at the arm’s tip that interacts with objects. "Each of these segments is a sequence of end-effector control poses"

- forceps-style grippers: Surgical grippers resembling forceps, used for precise tissue manipulation. "The surgical robot uses forceps-style grippers to grasp the tissue and retract it upward."

- foundation models (robot foundation models): Large, pre-trained models that aim to generalize across many robot tasks. "hinders the broader development of robot foundation models."

- Gaussian Splatting: A 3D scene representation/rendering method using anisotropic Gaussian primitives. "Most recent approaches build upon SDFs~\cite{qiao2022neuphysics}, NeRF~\cite{feng2024pie}, or Gaussian Splatting~\cite{zhong2024reconstruction} to support flexible physical digital twin creation."

- graph-based representations: Modeling objects or particles as nodes and edges for learning dynamics. "neural methods of dynamics learning using graph-based representations have been used to learn the dynamics of various types of deformable objects such as plasticine~\cite{shi2023robocook}, cloth~\cite{li2020causal}, and fluid~\cite{sanchez2020learning}."

- humanoid: A robot with a human-like body configuration (torso, arms, legs). "They encompass a range of deformable objects (stuffed animal, rope, tissue, towel) and manipulation behaviors ... across four robot embodiments (Franka and YAM robot arms, humanoid, surgical robot)."

- imitation learning: Learning policies from expert demonstrations rather than explicit reward signals. "We train visuomotor policies via imitation learning on generated data"

- initial state distribution: The probability distribution over starting states for episodes in a task. "Each episode starts in an initial state sampled from the initial state distribution ."

- Isaac Lab: A GPU-accelerated simulation framework for robot learning environments. "We introduce a suite of high-fidelity deformable object manipulation tasks (Fig. \ref{fig:fig3}) implemented in Isaac Lab~\cite{mittal2025isaac}."

- Jacobian: The matrix of first-order partial derivatives describing local linearization of a vector-valued function. "where is the Jacobian of evaluated at "

- maximum likelihood objective: A training objective that maximizes the likelihood of observed actions under the policy. "via the maximum likelihood objective $\arg\max_{\theta} \mathbb{E}_{(s, o, a) \sim \mathcal{D}} [\log \pi_{\theta}(a \mid o)]$"

- NeRF (Neural Radiance Fields): A neural 3D representation used for novel view synthesis and reconstruction. "Most recent approaches build upon SDFs~\cite{qiao2022neuphysics}, NeRF~\cite{feng2024pie}, or Gaussian Splatting~\cite{zhong2024reconstruction} to support flexible physical digital twin creation."

- nearest-neighbor source segment selection: Choosing a demonstration segment by minimizing a similarity cost to the current scene. "nearest-neighbor source segment selection strategy from MimicGen \cite{mandlekar2023mimicgen}"

- non-rigid registration: Aligning two shapes by estimating a smooth, non-linear deformation between them. "Non-rigid registration finds a smooth function that maps points from the first configuration to the second"

- object-centric invariance: The principle that manipulation motions are invariant when expressed relative to the manipulated object. "MimicGen \cite{mandlekar2023mimicgen} is a data generation framework that exploits object-centric invariance to generate new trajectories."

- object reference frame: A coordinate frame attached to an object used to express relative motions. "by leveraging a static object reference frame that remains constant between the source dataset and the new task instance."

- Partially Observable Markov Decision Process (POMDP): A formal model for decision-making where the agent observes incomplete information about the true state. "We model each manipulation task as a Partially Observable Markov Decision Process (POMDP)."

- photorealistic rendering: Rendering that aims to produce images closely resembling real photographs. "due to the availability of high-fidelity physics simulators and photorealistic rendering"

- point clouds: Sets of 3D points representing surfaces or volumes, typically from depth sensors. "These approaches typically rely on point clouds from depth sensors to register objects from a source demonstration to a new context."

- retargets (motion retargeting): Mapping recorded human motions to a robot’s kinematics and constraints. "Our teleoperation pipeline retargets human hand motions to either a parallel-jaw gripper or a dexterous robotic hand"

- SE(3) transform: A rigid 3D transformation consisting of rotation and translation. "MimicGen carries out this transformation using a constant SE(3) transform ."

- Signed Distance Functions (SDFs): Functions that give the signed distance to the nearest surface, used to represent geometry. "Most recent approaches build upon SDFs~\cite{qiao2022neuphysics}, NeRF~\cite{feng2024pie}, or Gaussian Splatting~\cite{zhong2024reconstruction} to support flexible physical digital twin creation."

- sim-to-real transfer: Deploying a policy trained in simulation on real robots; "zero-shot" transfer means doing so without additional real-world training. "Policies trained on SoftMimicGen-generated data achieve zero-shot sim-to-real transfer"

- teleoperation: Human remote control of a robot, often used to collect demonstrations. "These datasets are often collected via robot teleoperation by large teams of human operators"

- visuomotor policies: Policies that map visual observations directly to motor actions. "We train visuomotor policies via imitation learning on generated data"

- warp field: A spatial displacement field used to warp geometry or trajectories from one configuration to another. "performs non-rigid registration to establish correspondence and a warp field between the source and target geometries"
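The glossary entry on nearest-neighbor source segment selection can be illustrated with a short sketch: score each candidate source segment against the current object state and pick the lowest cost. The cost below (mean squared distance after centroid alignment, with points assumed to be in matching order) is a simple stand-in for a registration cost, and `alignment_cost`, `select_source_segment`, and the toy data are hypothetical names and values.

```python
import numpy as np

def alignment_cost(source_points, target_points):
    """Mean squared distance after centroid alignment (stand-in for a registration cost)."""
    src = np.asarray(source_points, dtype=float)
    dst = np.asarray(target_points, dtype=float)
    src = src - src.mean(axis=0)
    dst = dst - dst.mean(axis=0)
    return float(((src - dst) ** 2).sum(axis=-1).mean())

def select_source_segment(segments, target_points, cost_fn=alignment_cost):
    """Return the index of the lowest-cost segment and all costs."""
    costs = [cost_fn(seg["object_points"], target_points) for seg in segments]
    return int(np.argmin(costs)), costs

# Two candidate source segments: one recorded on a straight rope, one on a curved rope.
x = np.linspace(0.0, 1.0, 5)
segments = [
    {"name": "straight_demo", "object_points": np.stack([x, np.zeros_like(x)], axis=1)},
    {"name": "curved_demo", "object_points": np.stack([x, 0.3 * x**2], axis=1)},
]
# The new scene has a shifted, slightly less curved rope.
target = np.stack([x + 0.4, 0.25 * x**2], axis=1)

best_index, costs = select_source_segment(segments, target)
```

Here the curved demonstration is selected because its shape is closer to the target once the overall shift is removed. A linear scan like this is fine for a handful of demos; larger libraries would likely need indexing or learned retrieval.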
