The Kitchen Loop: User-Spec-Driven Development for a Self-Evolving Codebase

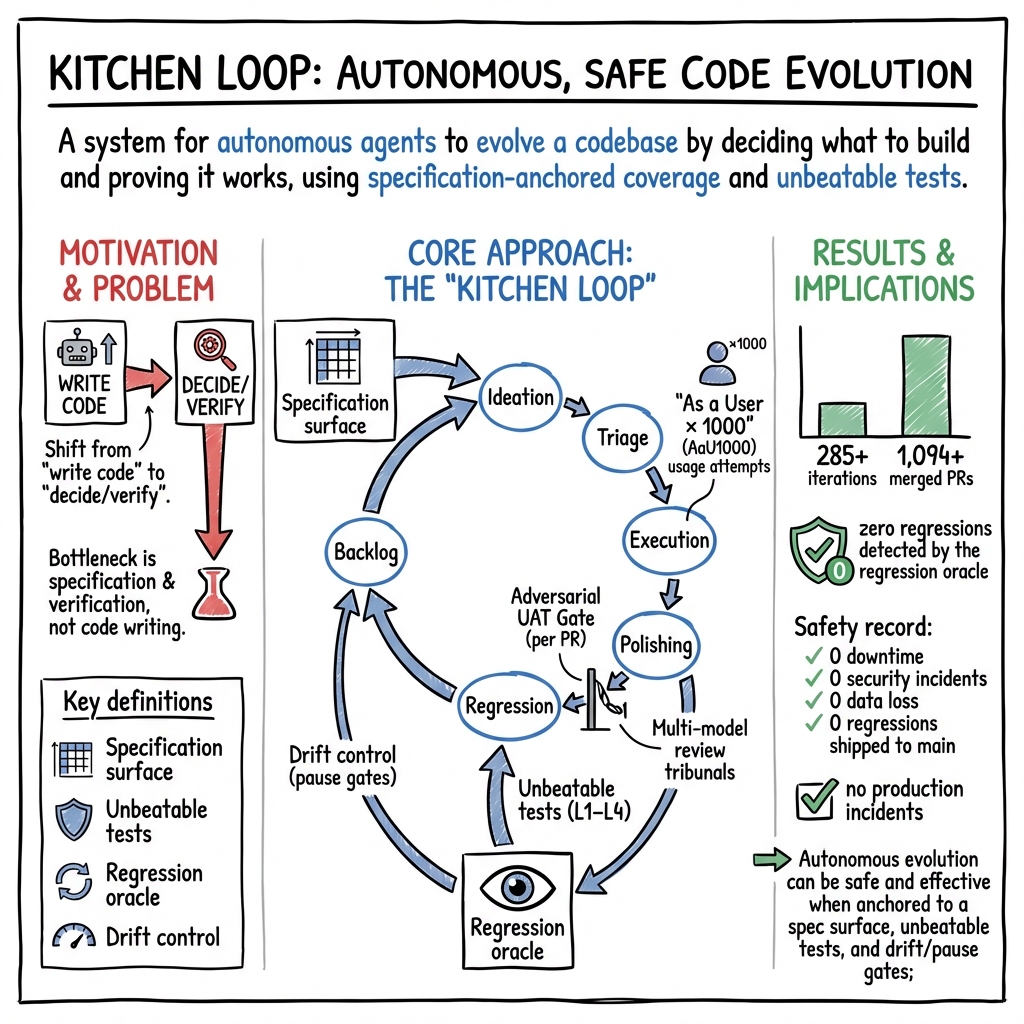

Abstract: Code production is now a commodity; the bottleneck is knowing what to build and proving it works. We present the Kitchen Loop, a framework for autonomous, self-evolving software built on a unified trust model: (1) a specification surface enumerating what the product claims to support; (2) 'As a User x 1000', where an LLM agent exercises that surface as a synthetic power user at 1,000x human cadence; (3) Unbeatable Tests, ground-truth verification the code author cannot fake; and (4) Drift Control, continuous quality measurement with automated pause gates. We validate across two production systems over 285+ iterations, producing 1,094+ merged pull requests with zero regressions detected by the regression oracle (methodology in Section 6.1). We observe emergent properties at scale: multi-iteration self-correction chains, autonomous infrastructure healing, and monotonically improving quality gates. The primitives are not new; our contribution is their composition into a production-tested system with the operational discipline that makes long-running autonomous evolution safe.

Explain it Like I'm 14

What This Paper Is About

This paper introduces the Kitchen Loop, a way to let software “improve itself” safely and continuously. The main idea is that writing code is easy now (AI can help a lot), but deciding what to build and proving it actually works are the real bottlenecks. The Kitchen Loop uses AI agents to act like super-fast users, run real tests that can’t be faked, and stop the process if quality starts to slip.

The Big Questions the Authors Asked

The authors focused on a few simple questions:

- How can we make software improve itself without breaking things?

- How do we verify AI-written code without reading every line?

- How can we catch quality drift (when things slowly get worse) before users notice?

- Can we connect all of this into one trusted, repeatable process that runs for weeks or months?

How Their System Works

The Kitchen Loop ties together four key ideas into one continuous cycle.

The Specification Surface: “What do we claim works?”

Think of a product’s features as a menu. The “specification surface” is the full menu: every feature, on every platform, with the actions it should support. Instead of fixing random bugs, the loop goes through this menu methodically to see if reality matches the claims.
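The menu corresponds to what the paper calls a coverage matrix: every combination of feature, platform, and action type that the product claims to support. Below is a minimal Python sketch. It assumes every combination is claimed; a real surface would drop unsupported cells. The feature, platform, and action names are hypothetical placeholders, not the paper's actual surface.

```python
from itertools import product

# Hypothetical specification surface; a real product would load this from its spec.
FEATURES = ["swap", "lend", "bridge"]
PLATFORMS = ["ethereum", "arbitrum"]
ACTIONS = ["open", "close"]


def coverage_matrix(features, platforms, actions):
    """Every (feature, platform, action) cell the product claims to support."""
    return list(product(features, platforms, actions))


def coverage_gaps(matrix, verified):
    """Cells that no passing scenario has exercised yet."""
    return [cell for cell in matrix if cell not in verified]


matrix = coverage_matrix(FEATURES, PLATFORMS, ACTIONS)
verified = {("swap", "ethereum", "open"), ("swap", "ethereum", "close")}
gaps = coverage_gaps(matrix, verified)
print(len(matrix), len(gaps))  # 12 10
```

The loop works through the gap list, not whichever bug happened to surface last, and that is what makes the walk through the menu methodical.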

“As a User × 1000”: AI as a super-fast user

An AI agent pretends to be a power user and:

- Picks a realistic scenario from the menu (specification surface).

- Tries it end to end like a real person would (writes code, runs it, observes what breaks).

- Files clear tickets when things go wrong (with steps to reproduce and clues about the cause).

- Makes a fix, adds tests, and opens a pull request.

- Checks that the fix didn’t break anything else.

It repeats this cycle continuously. The “×1000” is not a measured ratio; it means “far faster than a human tester,” and the team reports large speedups in practice.

Unbeatable Tests: Tests that check real outcomes

Not all tests give the same confidence. The loop uses four layers of checks, building up trust:

- Level 1 (Unit): Does a function’s logic work in isolation?

- Level 2 (API/Adapter): Do methods behave correctly when talking to real dependencies?

- Level 3 (Integration): Does the full pipeline run against a real environment, and do we see the right outcomes?

- Level 4 (End-to-End): Does a complete user journey succeed like it would in real life?

The critical difference is that Levels 3 and 4 verify “ground truth.” For example, they don’t just check that a function ran; they compare “before and after” state to prove the right change actually happened. That makes them “unbeatable”: the code author can’t fake real-world outcomes.

A simple analogy: don’t just read a recipe (unit test) or check you have ingredients (API test). Actually cook the dish (integration) and taste it (end-to-end). Only then are you sure it works.
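Here is a minimal sketch of a state-delta check. It assumes state can be snapshotted as a dictionary of numeric balances. `transfer`, `state_delta`, and `assert_state_delta` are hypothetical names for illustration. In the paper's setting, the snapshots would come from a real environment such as a chain fork, not an in-memory dict.

```python
from copy import deepcopy


def transfer(ledger: dict[str, int], src: str, dst: str, amount: int) -> None:
    """Hypothetical operation under test: move funds between two accounts."""
    if ledger[src] < amount:
        raise ValueError("insufficient funds")
    ledger[src] -= amount
    ledger[dst] += amount


def state_delta(before: dict[str, int], after: dict[str, int]) -> dict[str, int]:
    """Per-key change between two snapshots, omitting keys that did not change."""
    keys = set(before) | set(after)
    return {k: after.get(k, 0) - before.get(k, 0)
            for k in keys if after.get(k, 0) != before.get(k, 0)}


def assert_state_delta(action, state: dict[str, int], expected: dict[str, int]) -> dict[str, int]:
    """Run the action against live state and check what actually changed."""
    before = deepcopy(state)               # snapshot "before"
    action(state)                          # exercise the real operation
    observed = state_delta(before, state)  # measure "after" minus "before"
    assert observed == expected, f"expected {expected}, observed {observed}"
    return observed


ledger = {"alice": 100, "bob": 5}
delta = assert_state_delta(lambda s: transfer(s, "alice", "bob", 30), ledger,
                           {"alice": -30, "bob": 30})
```

A test that only checked whether `transfer` returned without raising would also pass for a version that silently did nothing. The delta check fails in that case.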

Regression Oracle and Drift Control: “Are we at least as good as last time?”

After every change, an automated system (the regression oracle) runs a trusted set of tests and asks one question: “Is the system at least as good as before?” If quality trends down (drift), the loop automatically pauses (“pause gates”) so problems don’t pile up.
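The oracle's question and the pause gate can be sketched as a comparison against the last accepted run. The paper does not specify its drift metrics or thresholds. The "any previously passing test now fails" rule and the "pass rate strictly falling over three accepted runs" rule below are assumptions, and `RegressionOracle` is a hypothetical name.

```python
from dataclasses import dataclass, field


@dataclass
class RegressionOracle:
    """Hypothetical oracle: compares each run of a fixed suite with the last accepted run."""
    baseline: dict[str, bool]                           # test name -> passed?
    history: list[float] = field(default_factory=list)  # pass rate of accepted runs
    window: int = 3  # assumed drift window (the paper does not specify one)

    def regressions(self, results: dict[str, bool]) -> list[str]:
        """Tests that passed in the baseline but now fail or are missing."""
        return sorted(t for t, ok in self.baseline.items() if ok and not results.get(t, False))

    def evaluate(self, results: dict[str, bool]) -> str:
        """'PAUSE' on any regression or a sustained fall in pass rate, else 'CONTINUE'."""
        if self.regressions(results):
            return "PAUSE"
        rate = sum(results.values()) / max(len(results), 1)
        recent = (self.history + [rate])[-self.window:]
        # Assumed drift rule: pass rate strictly falling across the whole window.
        if len(recent) == self.window and all(a > b for a, b in zip(recent, recent[1:])):
            return "PAUSE"
        self.history.append(rate)
        self.baseline = dict(results)  # "at least as good as before" becomes the new bar
        return "CONTINUE"


oracle = RegressionOracle(baseline={"swap": True, "lend": True})
print(oracle.evaluate({"swap": True, "lend": True, "bridge": True}))   # CONTINUE
print(oracle.evaluate({"swap": True, "lend": False, "bridge": True}))  # PAUSE
```

The second rule catches slow drift that no single run would flag. Newly added scenarios may keep failing without any old test breaking, which pulls the pass rate down run after run.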

The Three-Tier Strategy: What to test next

To cover the product fairly, the loop splits its effort:

- Foundation (about 30%): Basic, simple cases. These must always work perfectly.

- Composition (about 50%): Combine features together. This finds bugs at the “seams.”

- Frontier (about 20%): Try useful things just beyond today’s abilities. This produces gap reports that suggest what to build next.

Over time, this grows coverage in a healthy way: as new features are added, there are more combinations to test, and the system keeps pushing the boundary forward.
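The paper gives the tier proportions but does not formalize how scenarios are scheduled; the knowledge-gaps list below flags this. Weighted random sampling is one simple option and is sketched here as an assumption. The scenario names are hypothetical.

```python
import random
from collections import Counter

# Approximate split from the Three-Tier strategy: Foundation/Composition/Frontier = 30/50/20.
TIER_WEIGHTS = {"foundation": 0.30, "composition": 0.50, "frontier": 0.20}

# Hypothetical scenario pool per tier.
SCENARIOS = {
    "foundation": ["single swap on one chain"],
    "composition": ["swap then lend the proceeds", "bridge then swap"],
    "frontier": ["rebalance across three chains in one request"],
}


def pick_tier(rng: random.Random) -> str:
    """Sample a tier with probability proportional to its weight."""
    tiers = list(TIER_WEIGHTS)
    return rng.choices(tiers, weights=[TIER_WEIGHTS[t] for t in tiers], k=1)[0]


def pick_scenario(rng: random.Random, scenarios_by_tier: dict) -> tuple[str, str]:
    """Choose a tier first, then a scenario uniformly within that tier."""
    tier = pick_tier(rng)
    return tier, rng.choice(scenarios_by_tier[tier])


rng = random.Random(0)  # seeded so runs are reproducible
counts = Counter(pick_tier(rng) for _ in range(10_000))
print({tier: round(n / 10_000, 2) for tier, n in counts.items()})
```

Sampling keeps every tier reachable on every iteration. A quota-based scheduler would hit the ratios exactly over short windows instead. Either approach fits the 30/50/20 description.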

The UAT Gate and Multi-Model Review: Trust, but verify

Before merging a change, the author must write a “sealed test card” that any user could follow: exact steps, exact commands, and exact expected outputs. Then a separate, weaker AI (acting like a “dumb user”) follows those steps in a clean environment. It can’t edit product files and must show evidence for every step. If it passes, great. If it fails, the change doesn’t ship.
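Here is a minimal sketch of the gate's mechanics. It assumes each card step is an exact command with an exact expected output, and that a throwaway directory stands in for the clean worktree. The verdict names come from the gate labels listed under Practical Applications. Reading UAT_SPEC_FAIL as "the card itself cannot be followed" is an assumption. A plain script plays the evaluator here, where the paper uses a weak LLM.

```python
import hashlib
import subprocess
import sys
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class Step:
    command: list[str]    # exact command the evaluator runs
    expected_stdout: str  # exact expected output (compared after stripping whitespace)


@dataclass(frozen=True)
class SealedTestCard:
    steps: tuple[Step, ...]


def snapshot(root: Path) -> dict[str, str]:
    """Hash every file under root so edits to product files can be detected."""
    return {str(p.relative_to(root)): hashlib.sha256(p.read_bytes()).hexdigest()
            for p in sorted(root.rglob("*")) if p.is_file()}


def run_card(card: SealedTestCard, worktree: Path) -> tuple[str, list[dict]]:
    """Follow the card literally and return (verdict, evidence)."""
    if not card.steps or any(not step.command for step in card.steps):
        return "UAT_SPEC_FAIL", []  # assumed meaning: the card itself cannot be followed
    before = snapshot(worktree)
    evidence: list[dict] = []  # per-step record; kept outside the worktree
    verdict = "PASS"
    for step in card.steps:
        proc = subprocess.run(step.command, cwd=worktree, capture_output=True, text=True)
        evidence.append({"command": step.command, "exit": proc.returncode, "stdout": proc.stdout})
        if proc.returncode != 0 or proc.stdout.strip() != step.expected_stdout.strip():
            verdict = "PRODUCT_FAIL"
            break
    if snapshot(worktree) != before:
        return "EVAL_CHEAT_FAIL", evidence  # any product file modification
    return verdict, evidence


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "app.py").write_text("print('hello')\n")  # hypothetical product entry point
    card = SealedTestCard(steps=(Step([sys.executable, "app.py"], "hello"),))
    verdict, evidence = run_card(card, root)
    print(verdict)  # PASS
```

The gate hashes files before and after the run instead of trusting the evaluator's own report, and that is what makes the "no product file edits" rule enforceable. A real gate would also need to guard against side channels outside the worktree, as the knowledge-gaps list notes.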

The code is also checked by multiple independent AI reviewers. If two or three agree on a problem, it’s prioritized. No model’s output is trusted blindly, especially not the one that wrote the change.
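The agreement rule can be sketched as a vote count over findings. The labels follow the consensus/majority/solo split mentioned under Practical Applications. This sketch assumes findings have already been normalized to shared keys; matching free-text findings is the hard part in practice. The reviewer names and findings are hypothetical.

```python
from collections import defaultdict


def aggregate_findings(reviews: dict[str, set[str]]) -> dict[str, list[str]]:
    """Bucket findings by how many independent reviewers raised them.

    With the paper's three reviewers, "solo" means exactly one reviewer;
    with more reviewers it means any minority.
    """
    votes: dict[str, set[str]] = defaultdict(set)
    for reviewer, findings in reviews.items():
        for finding in findings:
            votes[finding].add(reviewer)
    n = len(reviews)
    buckets: dict[str, list[str]] = {"consensus": [], "majority": [], "solo": []}
    for finding, who in sorted(votes.items()):
        if len(who) == n:
            buckets["consensus"].append(finding)  # every reviewer agrees
        elif len(who) > n / 2:
            buckets["majority"].append(finding)   # more than half agree
        else:
            buckets["solo"].append(finding)
    return buckets


reviews = {
    "reviewer_a": {"missing-null-check", "unused-import"},
    "reviewer_b": {"missing-null-check", "race-in-retry"},
    "reviewer_c": {"missing-null-check", "race-in-retry"},
}
print(aggregate_findings(reviews))
# {'consensus': ['missing-null-check'], 'majority': ['race-in-retry'], 'solo': ['unused-import']}
```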

A real example from the paper: a feature had 38 passing unit tests but still didn’t work when used for real. The UAT gate caught it because the end-to-end steps failed.

What They Found

The team ran the Kitchen Loop on two real products for about seven weeks and reported:

- 285+ iterations and 1,094+ merged pull requests.

- 13,000+ tests run.

- Zero regressions caught by their regression oracle after merges.

- Quality gates improved to 100% over time.

- No production incidents.

- Very low cost per merged pull request (about $0.38) and roughly $350/month in model costs due to flat-rate plans.

- Iteration speed ranged from minutes (quick checks) to a couple of hours (full scenarios).

They also saw surprising “emergent” behavior: the system chained self-corrections across multiple iterations, fixed parts of its own infrastructure, and steadily tightened its quality checks.

Why this matters:

- It shows AI can safely help evolve a codebase if you anchor it to real-world tests and strict gates.

- It keeps speed gains without sacrificing quality.

- It makes long-running automation safer by stopping itself when quality dips.

Why This Approach Is Important

- Code writing is no longer the main problem. Knowing what to build and proving it works is.

- Traditional testing can miss real-world failures. “Unbeatable tests” focus on outcomes that can’t be faked.

- Reading every line of AI-written code doesn’t scale. Verifying behavior does.

- Many teams skip or weaken QA when building with AI. This approach enforces QA structurally instead of relying on personal discipline.

What This Could Change Going Forward

- Teams can run continuous “user-like” testing at scale, catching problems before users do.

- Backlogs become clearer because every failure produces precise tickets and evidence.

- Software can improve itself in a controlled loop, with built-in brakes to prevent damage.

- Quality can improve monotonically (only getting better), even as speed increases.

In short, the Kitchen Loop shows a practical way to let AI help build and improve software while staying safe: define what you claim to support, act like a fast, honest user to try it, verify with ground truth, and stop automatically if things drift.

Knowledge Gaps, Limitations, and Open Questions

The paper proposes the Kitchen Loop and reports promising early evidence. The following are concrete gaps and unresolved questions that, if addressed, would strengthen the empirical basis and broaden applicability.

- External validity across domains and scales: Results are from two production systems over ~7 weeks. How does the loop perform in other domains (web/app UI, backend services, mobile, embedded, safety-critical), large monorepos, and longer horizons (quarters/years)? Design a multi-domain, longitudinal evaluation.

- Oracle coverage and detection power: “Zero regressions” depends on the regression oracle’s suite. What is the oracle’s recall and false-negative rate? Use mutation testing, seeded regressions, and fault-injection to quantify detection power.

- Spec surface completeness and drift: How is the specification surface enumerated, maintained, and validated for completeness and correctness over time? Develop methods (telemetry mining, user log mining, model-based spec inference) to detect missing or outdated spec cells and spec drift.

- Ground truth in domains without clear state deltas: Unbeatable tests hinge on real state-delta verification. How can equivalent “ground truth” be defined for domains with stochastic, subjective, or visually-validated outcomes (e.g., UI/UX flows, recommendations, LLM outputs beyond signals)? Provide domain-specific patterns and benchmarks.

- Non-determinism handling beyond signals: For APIs/services with inherent variability, what statistical oracles, tolerance bands, or reference distributions should be used to avoid flaky verdicts without masking regressions? Validate robust comparison methods.

- Flakiness and environment variability: How are flaky tests and transient infra failures distinguished from true regressions at scale? Specify retry policies, hermetic test environments, and statistical confidence procedures; report flake rates and mitigation efficacy.

- Drift control metrics and pause-gate logic: The precise drift metrics, thresholds, and change-point detection methods are not specified. Define the metrics, control limits, and gate algorithms; quantify false positive/negative rates and recovery behavior.

- “Weakest-evaluator” validity: Using a weak LLM as a user proxy may under/overestimate real user success. What is the correlation between weakest-model pass rates and human user pass rates? Run user studies to calibrate evaluator choice.

- Adversarial robustness of anti-cheating safeguards: The UAT anti-cheating checks (worktree diffs, evidence directories) may be bypassable (e.g., side-channel actions, OS-level state). Define the threat model and conduct red-team evaluations to identify and harden against evasion strategies.

- Multi-model tribunal independence and stability: Tribunal reviewers may share training data or biases, and model updates may shift behavior. Quantify reviewer independence, inter-rater reliability, and the impact of model version drift; compare tribunal vs single-model baselines.

- Ablation study of loop components: The contribution of each element (UAT gate, tribunals, anti-signal canaries, four-layer tests, pause gates) is not quantified. Perform ablations to measure marginal impact on defect detection and regression prevention.

- Head-to-head baselines: No controlled comparisons with existing agentic systems (e.g., SWE-Agent, OpenHands, Devin-like) on identical repos/time budgets. Run randomized, controlled head-to-head trials with shared evaluation oracles.

- Cost accounting and resource usage: The reported ~$0.38 per PR omits compute, infra, and human oversight time. Provide a full cost model (LLM tokens, CI minutes, storage, human review) and energy use; analyze cost-quality trade-offs.

- Throughput “1000×” claim vs current single-thread reality: Parallelization is proposed but not demonstrated. Evaluate N-parallel loops per product: contention on shared resources, race conditions, and aggregate flake/regression impacts.

- Non-functional requirements (NFRs): The framework emphasizes correctness; coverage of performance, latency, resource usage, resilience, accessibility, and security is unclear. Extend the test stack with NFR oracles and report NFR drift control.

- Security and governance of autonomous commits: How are permissions, secrets, SBOM, dependency integrity, code signing, and rollback handled? Specify guardrails (e.g., policy-as-code, provenance attestation) and validate against supply-chain attack scenarios.

- Human-in-the-loop ergonomics and outcomes: How does the loop affect developer workflow, PR review burden, ticket quality, and acceptance? Measure developer satisfaction, time-to-merge, and defect escape rates pre/post adoption.

- Managing LLM model/version drift: How are quality gates preserved when underlying LLMs change? Define version pinning, canarying, and rollback strategies for model updates; quantify impact on tribunal and evaluator behavior.

- Overfitting to canaries and tests: Monotonic improvements may reflect specific canary/test tuning rather than generalized quality. Introduce rotating, adversarially-generated, and holdout canaries; track generalization to unseen failure modes.

- Handling large refactors and spec changes: How does the loop maintain or adapt tests when architecture changes or spec deprecates features? Propose migration strategies, test refactoring policies, and spec versioning.

- Cross-repo and dependency management: How does the loop coordinate across multi-repo dependencies or monorepos with shared modules? Define version pinning, integration test matrices, and coordinated release oracles.

- Property-based and invariant testing: Beyond enumerated scenarios, how are invariants enforced across compositions and negative tests? Incorporate property-based tests and global invariants; measure incremental defect discovery.

- Coverage quantification of the matrix: The size of the N×M×K coverage matrix and actual coverage achieved are not reported. Provide coverage metrics over time and saturation/plateau analysis.

- Ticket and “gap analysis” quality: The precision, actionability, and prioritization accuracy of automatically generated tickets/gap reports are unmeasured. Create a rubric and have independent reviewers score samples; correlate with time-to-fix and impact.

- Reproducibility artifacts: While code is open-sourced, full logs, configs, and datasets for the 285 iterations are not detailed. Release run logs, oracle outputs, and exact model versions/prompts for independent replication.

- Long-horizon stability and debt: Short-term “zero incidents” may not capture accruing complexity or brittle tests. Track complexity metrics (e.g., cognitive complexity, duplication), maintenance cost of tests, and incident rates over months.

- Risk of Goodharting on spec coverage: Optimizing for coverage matrix completion might miss unmodeled user needs. Incorporate user telemetry and feedback loops to adjust the spec; validate user value realized by Frontier recommendations.

- UI/visual verification: The approach to visual and interaction-heavy flows (browser/mobile) is not specified. Define visual oracles (e.g., perceptual diffs, accessibility checks) and integrate into the L3/L4 stack.

- Internationalization/localization and timezones: How are locale/time-dependent behaviors validated to prevent false positives/negatives? Add locale-aware test matrices and oracles.

- Data privacy and safety in tests: How is PII handled in test artifacts and logs? Specify anonymization, data retention, and compliance controls.

- Legal/licensing risks of generated code/tests: What policies govern license compliance for AI-generated artifacts? Propose automated license scanning and provenance tracking.

- Safety of state-delta tests in production-like environments: For destructive operations, how is isolation guaranteed (e.g., chain forks vs mainnet drift)? Validate that fork behavior represents production and document divergence risks.

- Cold-start guidance: How to bootstrap when there is little to no spec or tests? Provide a cookbook for initial spec elicitation and minimal unbeatable tests.

- Scenario scheduling and prioritization: The exact algorithm for selecting scenarios across tiers is not formalized. Compare heuristics (risk-based, coverage gaps) vs learned policies; report discovery rates per strategy.

- Interaction with CI/CD and developer velocity: The impact of loop gating on pipeline times and developer throughput is not reported. Measure end-to-end cycle times and queues, and assess optimal gating granularity.

- Accountability and ethics: With autonomous merges and limited human review, how is accountability assigned for defects? Outline governance models and escalation policies; assess organizational readiness and ethical implications.
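The oracle-coverage item above suggests seeded regressions and mutation testing to measure detection power. The toy version below shows how a detection rate would be estimated. The `add` function, suite, and mutants are all hypothetical.

```python
from typing import Callable

BinaryOp = Callable[[int, int], int]


def regression_suite(fn: BinaryOp) -> bool:
    """Hypothetical oracle suite: fixed input/expected-output checks for an add function."""
    cases = [((2, 3), 5), ((0, 0), 0), ((-1, 1), 0)]
    return all(fn(*args) == expected for args, expected in cases)


def correct_add(a: int, b: int) -> int:
    return a + b


# Seeded regressions ("mutants"): small, deliberate faults.
MUTANTS: dict[str, BinaryOp] = {
    "sub_instead_of_add": lambda a, b: a - b,
    "off_by_one": lambda a, b: a + b + 1,
    "drop_second_arg": lambda a, b: a,
    "abs_of_sum": lambda a, b: abs(a + b),
}


def detection_rate(suite, mutants) -> tuple[float, list[str]]:
    """Fraction of mutants the suite rejects, plus the names of survivors."""
    survived = [name for name, mutant in mutants.items() if suite(mutant)]
    return (len(mutants) - len(survived)) / len(mutants), survived


assert regression_suite(correct_add)  # the suite must accept the real code first
print(detection_rate(regression_suite, MUTANTS))  # (0.75, ['abs_of_sum'])
```

The surviving mutant exposes a blind spot: no case has a negative sum, so the suite cannot tell `a + b` from `abs(a + b)`. Any survivors mean that "zero regressions detected" is weaker evidence than it sounds.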

Practical Applications

Immediate Applications

Below are concrete ways organizations and individuals can deploy the Kitchen Loop’s methods today, using existing tools and infrastructure.

- Adopt “Unbeatable Tests” in CI/CD

- Sectors: software, fintech/DeFi, web, cloud/SaaS

- What: Add 4-layer, ground-truth test harnesses (compile → execute → parse → state deltas) to your CI so every PR is validated against reality, not just unit tests.

- Tools/workflows:

- CI jobs that run L3/L4 tests against real dependencies (e.g., headless browser, sandboxed services, chain forks).

- “State delta” assertions as first-class artifacts (before/after DB rows, balances, files, DOM).

- Assumptions/dependencies: Reliable access to near-production test environments and test data; ability to observe state transitions deterministically.

- Introduce the Adversarial UAT Gate with sealed test cards

- Sectors: software, QA, SaaS, open-source

- What: Require every feature PR to include a sealed, step-by-step user test card; execute it in a clean worktree by a “weakest model” evaluator that has zero implementation context.

- Tools/workflows:

- GitHub/GitLab action that validates card format, spins clean checkout, runs commands, captures exit codes and outputs, and enforces a “no product file edits by evaluator” rule.

- Assumptions/dependencies: Clear product entry points (CLI/HTTP/UI) and ability to script end-to-end usage without manual editing.

- Multi-model review tribunals for PRs and design docs

- Sectors: software, devtools, security

- What: Enforce “no self-review by the authoring model.” Use at least two independent LLMs (plus a human as needed) to critique code, tests, and design decisions with consensus rules (consensus/majority/solo).

- Tools/workflows: PR bot that routes diffs to multiple models (e.g., GPT, Claude, Gemini), aggregates findings, and gates merges on tribunal outcomes.

- Assumptions/dependencies: Access to multiple LLM APIs and cost controls; guardrails to prevent leaking secrets to external models.

- Regression oracle with drift control and pause gates

- Sectors: software, fintech/DeFi, ML platforms, data products

- What: After every merge, run a bounded regression oracle (repeatable suites tied to the specification surface). Track trend metrics; auto-pause merges when quality degrades.

- Tools/workflows: CI pipeline stage “Oracle” + a dashboard that plots pass/fail trends and triggers “Pause Gate” status checks that block further PRs.

- Assumptions/dependencies: Clearly scoped oracle suites; organizational willingness to accept automatic pauses.

- Coverage matrix management (spec surface → tests)

- Sectors: product engineering, QA

- What: Enumerate the product’s “specification surface” (features × platforms × actions) and convert it into a coverage matrix that drives test generation and prioritization (P0→P3).

- Tools/workflows: Issue-tracker plugin (Jira/Linear) to store the matrix, label tickets by matrix cells, and visualize coverage/gaps.

- Assumptions/dependencies: A maintained, explicit product capability catalog; shared definition of “claim we support.”

- “As a User × 1000” (AaU1000) synthetic usage loops for QA and triage

- Sectors: SaaS, SDKs, APIs, web apps

- What: Run an agent that continuously attempts realistic user scenarios, files actionable tickets (with repro steps and file-level hypotheses), and proposes fixes as PRs.

- Tools/workflows: A scheduled bot that:

- Picks scenarios using the Three-Tier plan (Foundation/Composition/Frontier = 30/50/20).

- Executes scenarios, logs failures as tickets, and proposes patches that must pass the UAT gate and oracle.

- Assumptions/dependencies: Stable local run or sandbox; responsible rate-limiting for external services; human triage lane for ambiguous items.

- Anti-signal canaries for content, recommendation, and alerting systems

- Sectors: media, finance (signals/alerts), education tech, trust & safety

- What: Continuously inject known-bad or adversarial items to validate that quality gates catch them (e.g., stale, misleading, mixed true/false content).

- Tools/workflows: Canary generator + evaluator pipeline with deterministic rules (e.g., “STALE_NARRATIVES”) and LLM tribunal fallback.

- Assumptions/dependencies: Clear acceptance criteria; labeled canaries; deterministic checks where feasible.

- DeFi/fintech “state-delta” execution harnesses

- Sectors: finance, crypto/DeFi

- What: Validate strategies and SDK actions on chain forks; assert exact balance and state changes to ensure correctness beyond compilation.

- Tools/workflows: Anvil/Hardhat/Ganache environments, transaction receipts parsing, pre/post ledger snapshots as test evidence.

- Assumptions/dependencies: Reliable fork infra; up-to-date ABIs; deterministic seeding of funds and accounts.

- Open-source governance upgrade: PR gating without human toil

- Sectors: open-source, community projects

- What: Enable bots to submit high-quality PRs while guaranteeing safety via UAT gate, oracle runs, and tribunals so maintainers can focus on intent alignment rather than line-by-line review.

- Tools/workflows: GitHub App that enforces the Kitchen Loop gates and labels (PASS/PRODUCT_FAIL/UAT_SPEC_FAIL/EVAL_CHEAT_FAIL).

- Assumptions/dependencies: Maintainers agree to enforce gates; contributors adopt sealed cards.

- Course modules and labs for “Test as the product”

- Sectors: academia, professional training

- What: Teach the 4-level pyramid, spec surfaces, AaU1000, and drift control through hands-on labs that test real services, not mocks.

- Tools/workflows: Classroom repositories with oracle suites, sealed test card assignments, and multi-model review exercises.

- Assumptions/dependencies: Sandbox credentials; reproducible environments for students.

- Procurement and internal compliance checklists for AI-coded changes

- Sectors: enterprise IT, GRC

- What: Require vendors or internal teams to demonstrate: spec surface coverage, presence of unbeatable tests, “no same-model review,” and active pause gates.

- Tools/workflows: Lightweight evidence package (coverage matrix, oracle logs, tribunal reports, UAT artifacts) attached to change requests.

- Assumptions/dependencies: Policy updates; audit trails integrated with CI logs.
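The "compile → execute → parse → state deltas" harness from the first item can be sketched as a chain of gates that reports the first layer to fail. Everything here is an assumption for illustration. The strategy is Python source defining a hypothetical `run(ledger)` that returns a JSON receipt, and an in-memory dict stands in for a chain fork or database. A real harness would run untrusted code in a sandbox, not with `exec` in-process.

```python
import json


def four_layer_check(source: str, ledger: dict[str, int], expected_delta: dict[str, int]) -> str:
    """Hypothetical harness: return the first failing layer, or 'PASS'."""
    # Layer 1 (compile): the strategy must at least be valid Python.
    try:
        code = compile(source, "<strategy>", "exec")
    except SyntaxError:
        return "FAIL@compile"
    # Layer 2 (execute): it must define run() and run without raising.
    namespace: dict = {}
    before = dict(ledger)
    try:
        exec(code, namespace)
        receipt_text = namespace["run"](ledger)
    except Exception:
        return "FAIL@execute"
    # Layer 3 (parse): its receipt must be well-formed JSON reporting success.
    try:
        receipt = json.loads(receipt_text)
    except (TypeError, json.JSONDecodeError):
        return "FAIL@parse"
    if not isinstance(receipt, dict) or receipt.get("status") != "ok":
        return "FAIL@parse"
    # Layer 4 (state delta): the ledger must actually have changed as claimed.
    keys = set(before) | set(ledger)
    observed = {k: ledger.get(k, 0) - before.get(k, 0) for k in keys}
    observed = {k: v for k, v in observed.items() if v != 0}
    return "PASS" if observed == expected_delta else "FAIL@state_delta"


GOOD = '''
import json
def run(ledger):
    ledger["alice"] -= 10
    ledger["pool"] += 10
    return json.dumps({"status": "ok"})
'''

# Claims success in its receipt but never touches the ledger.
LIAR = '''
import json
def run(ledger):
    return json.dumps({"status": "ok"})
'''

print(four_layer_check(GOOD, {"alice": 50, "pool": 0}, {"alice": -10, "pool": 10}))  # PASS
print(four_layer_check(LIAR, {"alice": 50, "pool": 0}, {"alice": -10, "pool": 10}))  # FAIL@state_delta
```

The `LIAR` strategy gets through the first three layers. Only the state-delta layer catches it, which is the point of putting ground truth last in the chain.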

Long-Term Applications

These opportunities require additional research, scaling, or standardization before widespread deployment.

- Autonomous, self-evolving product lines under spec-anchored governance

- Sectors: software, cloud/SaaS, SDKs

- What: Multiple Kitchen Loops running in parallel on different spec regions, shipping continuous improvements while bounded by oracles and pause gates.

- Potential products: “Self-evolving SDKs” and “self-hardening platforms” with verifiable release artifacts.

- Dependencies: Robust parallelization (containerized environments, port isolation), stronger change-impact analysis, and budget-aware model routing.

- Safety certifications based on unbeatable tests and regression oracles

- Sectors: safety-critical systems (healthcare, automotive, robotics, industrial)

- What: Third-party certifications that rely on 4-layer, ground-truth verification suites and canary batteries instead of documentation-heavy audits alone.

- Potential products: “Oracle-certified” labels; reference test suites per domain (e.g., medical device interfaces, robot task libraries).

- Dependencies: Domain standards bodies; regulatory buy-in; high-fidelity simulators/digital twins for ground-truth measurement.

- Digital-twin–backed Kitchen Loops for robotics and IoT

- Sectors: robotics, manufacturing, smart infrastructure

- What: Use digital twins to provide state-delta truth (pose, force, energy) for L3/L4 tests; AaU1000 scenarios reflect real operational tasks.

- Potential products: Robotic “scenario cards,” simulator-integrated oracles, and pause gates that prevent unsafe deployments.

- Dependencies: Accurate simulators; sim2real calibration; safe rollout protocols.

- “Spec Surface Manager” platforms and standards

- Sectors: devtools, PM tooling

- What: Dedicated systems to define, evolve, and audit specification surfaces and coverage matrices across microservices and platforms.

- Potential products: Spec ledger with APIs; cross-repo coverage heatmaps; code-to-claim traceability.

- Dependencies: Common schemas for capabilities; mapping tests to claims; organizational adoption.

- Industry-wide “no same-model review” and tribunal norms

- Sectors: software, security

- What: Policy norms or regulations requiring independent-model (or model-family) review for agent-authored changes in critical systems.

- Potential products: Attestation services that record authoring model, reviewers, and consensus outcomes.

- Dependencies: Model provenance tooling; enterprise LLM governance; privacy-preserving review pipelines.

- Drift control as a compliance obligation (automated pause gates)

- Sectors: finance, healthcare, critical infrastructure

- What: Mandate continuous drift monitoring with automatic halt-on-degradation in deployment pipelines for regulated workloads.

- Potential products: “Pause Gate as a Service” with regulator-facing dashboards and tamper-evident logs.

- Dependencies: Regulator guidance; standardized drift metrics per domain; strong SRE/DevOps integration.

- Marketplaces for sealed test cards and canary suites

- Sectors: devtools, QA, open-source ecosystems

- What: Community-vetted libraries of user scenarios (sealed cards) and domain-specific canaries that teams can purchase or adopt.

- Potential products: Curated scenario packs for popular stacks (React, Django, Kafka), DeFi protocol canaries, content-quality canaries for T&S.

- Dependencies: Incentive models for creators; versioning against evolving products; IP/licensing.

- IDE-native AaU1000 and UAT authoring assistants

- Sectors: software, developer experience

- What: IDE plugins that help authors draft sealed test cards, simulate weakest-evaluator runs locally, and suggest coverage-matrix targets while coding.

- Potential products: “Test Card Copilot,” “Coverage Navigator,” “Spec-to-Test” generators.

- Dependencies: Local sandbox orchestration; lightweight oracles; model-in-IDE with privacy controls.

- Cross-organizational quality sharing via “oracle artifacts”

- Sectors: enterprise platforms, ecosystems

- What: Publish machine-readable oracle results (state-delta proofs, canary catch rates) with releases to help integrators assess risk without re-testing everything.

- Potential products: Oracle artifact registries; scorecards embedded in SBOM/SLSA supply chain metadata.

- Dependencies: Interoperable result formats; cryptographic attestations; ecosystem validators.

- Evidence-first regulation and audits for AI-assisted development

- Sectors: public policy, certification bodies

- What: Shift from process narratives to executable evidence (sealed cards, oracle logs, drift trends) as the core of audits for AI-generated code.

- Potential products: Audit toolchains that replay oracles against tagged commits; “minimum evidence” standards.

- Dependencies: Policy updates; auditor training; secure replay environments.

- Education: “Coverage-exhaustion engineering” curricula

- Sectors: academia, professional certification

- What: Degrees and certificates that treat specification, oracles, and drift control as core engineering disciplines, not adjunct QA topics.

- Potential products: Capstones built around spec surfaces and self-evolving repos; shared teaching labs across universities.

- Dependencies: Shared reference stacks; sustainable sandbox infra; alignment with accreditation bodies.

Notes on Assumptions and Dependencies (common across applications)

- Ground truth access: Feasibility hinges on being able to observe real or high-fidelity simulated state changes. Where reality is costly or unsafe, digital twins must be sufficiently accurate.

- Model ecology and governance: Multi-model tribunals assume access to diverse models and policies that prevent self-review and data leakage.

- Organizational readiness: Pause gates, spec surfaces, and sealed cards require cultural adoption; teams must accept automatic halts and invest in maintaining the spec ledger.

- Cost and performance: Running L3/L4 oracles and tribunals increases CI time and compute cost; prioritize by risk (P0→P3) and use deterministic rules where possible to reduce LLM calls.

- Data and privacy: For regulated domains, synthetic or de-identified data and secure evaluation environments are necessary to run AaU1000 and UAT at scale.
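The cost note above recommends deterministic rules where possible to cut LLM calls. The anti-signal canary item likewise pairs deterministic rules (such as “STALE_NARRATIVES”) with an LLM tribunal fallback. Below is a minimal sketch of that ordering. The rules, the 30-day cutoff, and the stubbed model judge are all hypothetical.

```python
from typing import Callable, Optional

Item = dict
Rule = Callable[[Item], Optional[str]]  # returns a verdict, or None if undecided


def stale_rule(item: Item) -> Optional[str]:
    """Hypothetical deterministic rule in the spirit of a STALE_NARRATIVES check."""
    return "REJECT" if item.get("age_days", 0) > 30 else None  # 30 days is an assumed cutoff


def missing_source_rule(item: Item) -> Optional[str]:
    """Hypothetical rule: reject items without a cited source."""
    return "REJECT" if not item.get("source") else None


def judge(item: Item, rules: list[Rule], llm_judge: Callable[[Item], str],
          fallback_log: list[Item]) -> str:
    """Apply cheap deterministic rules first; call the model only when none decides."""
    for rule in rules:
        verdict = rule(item)
        if verdict is not None:
            return verdict
    fallback_log.append(item)  # record which items needed a model call
    return llm_judge(item)


def fake_llm_judge(item: Item) -> str:
    """Stand-in for a real tribunal call; always accepts."""
    return "ACCEPT"


items = [
    {"id": 1, "age_days": 90, "source": "feed"},
    {"id": 2, "age_days": 1, "source": ""},
    {"id": 3, "age_days": 2, "source": "feed"},
]
llm_calls: list[Item] = []
verdicts = {it["id"]: judge(it, [stale_rule, missing_source_rule], fake_llm_judge, llm_calls)
            for it in items}
print(verdicts, len(llm_calls))  # {1: 'REJECT', 2: 'REJECT', 3: 'ACCEPT'} 1
```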

Glossary

- AaU1000 (As a User × 1000): A method where an agent exercises the product like a power user at high cadence to uncover issues and drive improvements. "The “As a User x 1000” Method (AaU1000)"

- Adversarial UAT Gate: A strict, user-perspective verification step executed by a fresh evaluator to prevent biased or trivial tests. "The Adversarial UAT Gate: “How Would a User Test This?”"

- Agent Evaluator: A multi-agent design pattern where a dedicated agent evaluates other agents’ outputs or system state. "and Agent Evaluator (regression oracle)"

- Anvil chain forks: Local Ethereum blockchain forks used to run deterministic on-chain tests. "executing demo strategies on Anvil chain forks and verifying 4-layer state deltas"

- Anti-signal canaries: Intentionally bad inputs injected to ensure quality checks catch failures in non-deterministic systems. "the Kitchen Loop uses anti-signal canaries: intentionally crafted bad inputs injected alongside real ones to verify the quality gate catches what it should."

- API degradation canaries: Tests that simulate partial failures of external APIs to verify graceful degradation and detection. "Additionally, 3 API degradation canaries test resilience to partial external API failures"

- Canary escapes: Instances where intentionally bad inputs pass quality gates, signaling a critical testing gap. "Tier 1 canary escapes are treated as a critical warning signal for operator review."

- Coverage-exhaustion mode: An operating regime that systematically exercises all spec combinations until gaps approach zero. "The Kitchen Loop operates in coverage-exhaustion mode: given a specification surface, systematically exercise every combination until the gap between spec and reality approaches zero."

- Coverage matrix: The grid of all feature × platform × action combinations the product claims to support, used to drive exhaustive testing. "The specification defines a coverage matrix: every combination of feature, platform, and action type that the product claims to support."

- Discussion Manager: A structured deliberation system for multi-round debate and synthesis among agents. "Section 7 extends this concept into a full structured deliberation system — the Discussion Manager — with multi-round debate"

- Drift control: Continuous monitoring of quality trends with mechanisms to pause evolution when metrics degrade. "a regression oracle with drift control and automatic pause gates"

- E2E Scenario Tests: End-to-end tests that verify full user journeys across the system boundary. "Level 4: E2E Scenario Tests"

- EVAL_CHEAT_FAIL: A verdict signaling that the evaluator improperly modified product files during UAT, indicating process cheating. "Any product file modification = EVAL_CHEAT_FAIL."

- Goodharting: Optimizing a proxy metric in a way that harms the true objective or unmeasured dimensions. "This prevents the “goodharting” failure mode where an agent optimizes a proxy metric while the product degrades in unmeasured dimensions."

- Ground-Truth Verification: A design principle emphasizing tests that verify outcomes against real, unfakeable state. "P1. Ground-Truth Verification"

- LLM tribunal: A panel of different LLMs used to adjudicate or review outputs for higher-confidence judgments. "the L4 LLM tribunal (GPT/Claude/Gemini judgment)"

- Multi-model tribunals: Parallel, independent reviews by multiple models with consensus/majority synthesis to reduce error. "the Kitchen Loop uses multi-model tribunals: three independent AI reviewers evaluate the same artifact in parallel."

- Pause gates: Automated stop mechanisms that halt the loop when quality metrics regress. "automated pause gates that halt the loop when metrics degrade"

- Pass@k: A benchmark metric reporting whether a correct solution appears within k generated attempts. "Dominant benchmarks (HumanEval, MBPP) rely on Pass@k metrics that overlook semantic correctness"

- Production-parity ticket management layer: A process where AI-generated and human tickets share the same system and priority to prevent divergence. "a production-parity ticket management layer (Linear) where human and AI tickets are treated identically"

- Quality gates: Structured checks that code must pass before merge, targeting different defect classes. "monotonically improving quality gates"

- Ralph Wiggum loops: Autonomous continuous-commit loops that generate large volumes of changes with minimal oversight. "Ralph Wiggum loops (Huntley, 2025; snarktank/ralph) demonstrated that autonomous agents can produce 1,000+ commits overnight in a continuous loop."

- Regression oracle: A repeatable, bounded test suite that answers whether the system is at least as good as before. "zero regressions detected by the regression oracle"

- Sealed test card: A precise, user-oriented test script created by the implementer, executed by a fresh evaluator without context. "the implementing agent must write a sealed test card — a step-by-step recipe that any user could follow to verify the feature works."

- Specification surface: The enumerable set of capabilities the product claims to support; the basis for coverage. "a specification surface enumerating what the product claims to support"

- State deltas: Measured differences in system state before and after an action, used to verify real outcomes. "State Deltas (ground truth)"

- Three-tier strategy model (Foundation/Composition/Frontier): A scenario-generation approach balancing basic, combined, and forward-looking tests. "the three-tier strategy model (Foundation/Composition/Frontier) that ensures balanced coverage"

- UAT gate: An adversarial user-acceptance test run by a fresh, low-context evaluator to validate user-visible behavior. "the Kitchen Loop's spec surface and UAT gate provide structural guardrails."

- Unified trust model: The interlocking combination of spec surface, unbeatable tests, and regression/drift controls that makes autonomy safe. "Autonomous evolution requires a unified trust model."

- Weakest-Evaluator: A principle requiring that tests be executable by the least capable evaluator to ensure real usability. "P2. Weakest-Evaluator"
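The anti-signal canary and canary-escape entries describe a concrete mechanism. Here is a minimal sketch, assuming a quality gate is a function that returns True when it accepts an item. The gate, item fields, and canaries are hypothetical, loosely modeled on the stale and mixed true/false content examples in the applications list. The canary flag stays out-of-band so the gate cannot see it.

```python
import random


def inject_canaries(real_items: list[dict], canaries: list[dict],
                    rng: random.Random) -> list[tuple[dict, bool]]:
    """Mix known-bad canaries into a batch; the is_canary flag never reaches the gate."""
    batch = [(item, False) for item in real_items] + [(c, True) for c in canaries]
    rng.shuffle(batch)
    return batch


def canary_report(batch: list[tuple[dict, bool]], quality_gate) -> dict:
    """Run the gate on every item and collect canaries it wrongly accepted (escapes)."""
    results = [(item, is_canary, quality_gate(item)) for item, is_canary in batch]
    escapes = [item for item, is_canary, accepted in results if is_canary and accepted]
    total = sum(1 for _, is_canary, _ in results if is_canary)
    return {
        "catch_rate": (total - len(escapes)) / total if total else 1.0,
        "escapes": escapes,
        "alert": bool(escapes),  # any escape is escalated for operator review
    }


def gate(item: dict) -> bool:
    """Hypothetical quality gate: accept only fresh items with a cited source."""
    return not item["stale"] and bool(item["source"])


real = [{"id": "r1", "stale": False, "source": "exchange feed"}]
canaries = [
    {"id": "c_stale", "stale": True, "source": "exchange feed"},
    {"id": "c_unsourced", "stale": False, "source": ""},
    {"id": "c_mixed_truth", "stale": False, "source": "blog", "contradicts_itself": True},
]
report = canary_report(inject_canaries(real, canaries, random.Random(7)), gate)
print(round(report["catch_rate"], 2), [c["id"] for c in report["escapes"]])  # 0.67 ['c_mixed_truth']
```

The mixed-truth canary escapes because this gate never checks internal consistency. That escape is exactly the kind of gap the canaries exist to surface.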
