ARC-AGI-3: A New Challenge for Frontier Agentic Intelligence

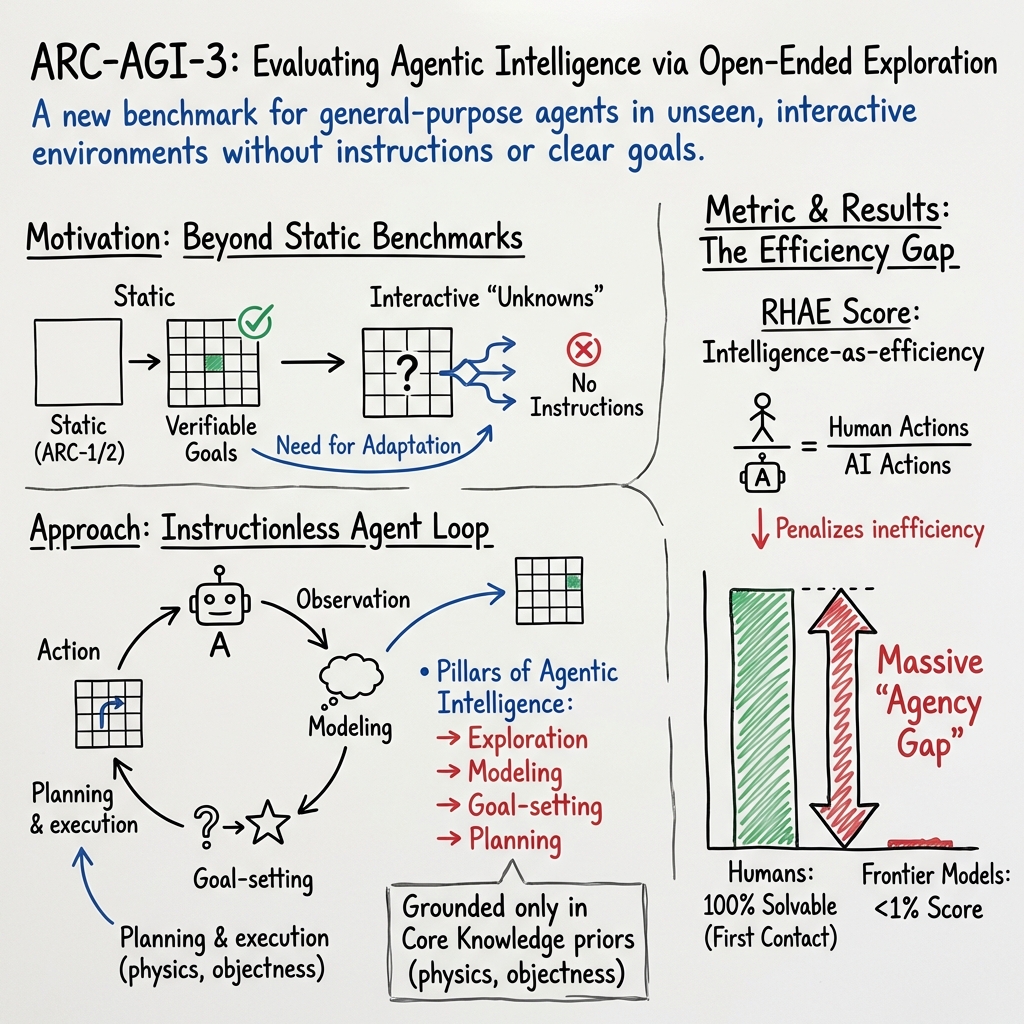

Abstract: We introduce ARC-AGI-3, an interactive benchmark for studying agentic intelligence through novel, abstract, turn-based environments in which agents must explore, infer goals, build internal models of environment dynamics, and plan effective action sequences without explicit instructions. Like its predecessors ARC-AGI-1 and 2, ARC-AGI-3 focuses entirely on evaluating fluid adaptive efficiency on novel tasks, while avoiding language and external knowledge. ARC-AGI-3 environments only leverage Core Knowledge priors and are difficulty-calibrated via extensive testing with human test-takers. Our testing shows humans can solve 100% of the environments, in contrast to frontier AI systems which, as of March 2026, score below 1%. In this paper, we present the benchmark design, its efficiency-based scoring framework grounded in human action baselines, and the methodology used to construct, validate, and calibrate the environments.


Explain it Like I'm 14

Overview: What is this paper about?

This paper introduces ARC-AGI-3, a new kind of test that looks like a set of small, turn‑based “video game” puzzles. The goal is to measure a kind of intelligence called agentic intelligence: the ability to explore an unfamiliar situation, figure out the goal without being told, learn how the world works, and plan smart moves to win. The test compares how efficiently humans and AI systems can beat these puzzles the very first time they see them.

The big questions the researchers asked

Here are the simple questions behind the work:

- Can an AI walk into a brand‑new puzzle world, discover the rules by itself, and figure out what “winning” means—without any instructions?

- How well can an AI explore smartly instead of randomly poking around?

- How quickly can it build an internal “mental model” of the world so it can plan instead of guessing?

- What is a fair, simple way to score “intelligence” that works for both humans and AIs?

How they built the challenge

To answer those questions, the team designed many short, turn‑based puzzle environments with strict rules that focus on reasoning, not reflexes or trivia.

The “game worlds” and what the player can do

- Each puzzle shows a 64×64 grid of colored squares. Think of it like a tiny board with pieces.

- You take turns. On your turn, you make one move (like pressing a key or selecting a cell). The world only changes when you act—nothing happens in between your turns.

- Crucially, you are never told the goal. Like opening a board game box with no instruction booklet, you must figure out what makes you “win” by experimenting and thinking.

- The puzzles only use basic, universal “common‑sense” ideas: objects, shapes, movement, collisions, simple physics‑like behaviors, and the idea that some things “act with purpose.” There are no words, numbers, or cultural symbols.

Why this design? It removes shortcuts like memorizing trivia or using language knowledge. Success depends on reasoning and adaptation.
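The turn-based contract described above can be pictured with a small sketch. Everything in it is a hypothetical illustration rather than the benchmark's actual engine or API: the `ToyEnvironment` class, the four action names, the color indices, and the "reach the target cell" win rule are all assumptions chosen to show the shape of the interaction (observe a 64×64 frame, take one action, and see the world change only in response).

```python
from dataclasses import dataclass

GRID_SIZE = 64  # the text describes a 64x64 grid of colored cells

# Hypothetical action names; the real action set is not specified here.
MOVES = {"up": (0, -1), "down": (0, 1), "left": (-1, 0), "right": (1, 0)}


@dataclass
class ToyEnvironment:
    """Illustrative turn-based world: steer a marker onto an unlabeled target."""

    pos: tuple = (0, 0)
    target: tuple = (3, 2)  # the win condition is never announced to the player
    actions_taken: int = 0

    def observe(self):
        """Return the current frame as a 64x64 grid of color indices."""
        frame = [[0] * GRID_SIZE for _ in range(GRID_SIZE)]
        frame[self.target[1]][self.target[0]] = 2  # target cell
        frame[self.pos[1]][self.pos[0]] = 1        # player marker, drawn last
        return frame

    def step(self, action):
        """Apply one action. State changes only here, never between turns."""
        dx, dy = MOVES[action]
        x = min(max(self.pos[0] + dx, 0), GRID_SIZE - 1)
        y = min(max(self.pos[1] + dy, 0), GRID_SIZE - 1)
        self.pos = (x, y)
        self.actions_taken += 1
        won = self.pos == self.target
        return self.observe(), won


env = ToyEnvironment()
for action in ["right", "right", "right", "down", "down"]:
    frame, won = env.step(action)
print(won, env.actions_taken)
```

The player only ever sees frames and a win signal, so the goal has to be inferred from how the frames respond to actions.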

Making sure puzzles are well‑designed and fair

The team set up a studio to build these environments and used a custom, fast Python engine so they could iterate quickly. They used two kinds of automated checks:

- Large‑scale random play: Run up to 1,000,000 random moves to ensure puzzles aren’t accidentally solvable by luck. If random play can often win, the puzzle gets reworked.

- Map of the state space: Imagine drawing a map where each dot is a possible screen and each arrow is a legal move. Building this “graph” helps spot loopholes, dead ends, and rare edge cases, and lets them estimate the chance a random player could stumble into a win. Puzzles were only accepted if random success was extremely unlikely.
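The two checks above can be sketched on a toy world. This is a minimal illustration under stated assumptions, not the team's validation tooling: the 4×4 board, the `transition` function, and the names `build_graph` and `random_win_probability` are invented for the example, and the toy state is fully visible (it has no hidden variables). Nodes are keyed by state in a dictionary, so different move sequences that reach the same state merge into one node, and the chance that a uniformly random player wins within a given number of moves is computed exactly by pushing probability mass along the edges.

```python
from collections import deque

# Hypothetical toy world: a marker on a 4x4 board must reach the far corner.
ACTIONS = ("up", "down", "left", "right")
MOVES = {"up": (0, -1), "down": (0, 1), "left": (-1, 0), "right": (1, 0)}
SIZE = 4
START, WIN = (0, 0), (3, 3)


def transition(state, action):
    """Deterministic move; bumping into a wall leaves the marker in place."""
    dx, dy = MOVES[action]
    x = min(max(state[0] + dx, 0), SIZE - 1)
    y = min(max(state[1] + dy, 0), SIZE - 1)
    return (x, y)


def build_graph(start):
    """Breadth-first build of the reachable state graph (state -> {action: next})."""
    graph = {}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        if state in graph:
            continue  # already expanded: trajectories merge here
        if state == WIN:
            graph[state] = {}  # terminal node, no outgoing edges
            continue
        graph[state] = {a: transition(state, a) for a in ACTIONS}
        for nxt in graph[state].values():
            if nxt not in graph:
                queue.append(nxt)
    return graph


def random_win_probability(graph, start, max_actions):
    """Exact chance that a uniform random policy wins within max_actions moves."""
    mass = {start: 1.0}  # probability of being in each non-terminal state
    won = 0.0
    for _ in range(max_actions):
        next_mass = {}
        for state, p in mass.items():
            edges = graph[state]
            share = p / len(edges)
            for target in edges.values():
                if target == WIN:
                    won += share  # absorbed: the level is over
                else:
                    next_mass[target] = next_mass.get(target, 0.0) + share
        mass = next_mass
    return won


graph = build_graph(START)
print(len(graph), random_win_probability(graph, START, 6))
```

On this board a win takes at least six moves, and only 20 of the 4^6 six-move sequences reach the corner. A puzzle this small would fail a strict random-play threshold once longer move budgets are allowed, which is the kind of weakness this check is meant to expose.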

Human testing and “easy for humans”

Every included environment had to be solvable by ordinary people seeing it for the first time, usually within a few minutes and always within one short session. Each puzzle was tested by 10 different people at a San Francisco testing center. If too many people got stuck, the puzzle was redesigned. The idea: keep puzzles challenging for AIs but fair and understandable for humans.

Public vs. private puzzle sets

- Public demo set: A small, easier set that showcases how the puzzles look and feel. It’s for learning and community fun, not for official scoring.

- Private sets (semi‑private and fully private): Larger, harder, and more varied. These are the real tests used for official evaluations and competitions. They’re kept out of public view to prevent overfitting or memorization.

How scoring works: measuring “action efficiency”

Instead of asking “did you win?”, ARC-AGI-3 asks “how efficiently did you win on first try?” Efficiency here means the number of moves you needed:

- Fewer moves = more efficient = higher intelligence score.

- Your moves are compared to human baselines. Specifically, each level’s score compares the AI’s move count to the second‑best human’s move count to avoid a single superstar skewing the scores.

- To discourage brute force, the comparison is squared. For example, if a human needs 10 moves and the AI needs 100, the raw ratio is 0.1; squaring it gives 0.01, or 1% for that level.

- Later levels in a puzzle count more than early “tutorial” levels. That’s because later levels test deeper understanding of the mechanics.

- A safety rule caps any single level at “human‑level” maximum credit so one weird shortcut can’t dominate the score.

- To control evaluation cost, AIs are cut off at 5× the human move count per level.

Together, these rules form the RHAE metric (Relative Human Action Efficiency)—a single score between 0% and 100% that says how close an AI is to human‑level first‑try efficiency across all puzzles.
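The scoring rules above can be sketched in a few lines of Python. This is an illustrative reading of the description, not the paper's reference formula. The function names, the linear level weights (level 1 counts once, level 2 twice, and so on), the treatment of a cut-off or unsolved level as zero, and the plain average across environments are all assumptions.

```python
def level_score(human_actions, ai_actions, solved, cutoff_multiplier=5):
    """Squared, human-relative efficiency for one level, in [0, 1].

    ASSUMPTION: an unsolved level, or one that exceeds the action cutoff,
    scores zero. Pass cutoff_multiplier=None to disable the cutoff.
    """
    if not solved:
        return 0.0
    if ai_actions < 1:
        raise ValueError("a solved level needs at least one action")
    if cutoff_multiplier is not None and ai_actions > cutoff_multiplier * human_actions:
        return 0.0
    ratio = min(1.0, human_actions / ai_actions)  # cap at human-level credit
    return ratio ** 2                             # squaring penalizes brute force


def environment_score(levels):
    """Weighted mean over levels; later levels count more.

    `levels` is a list of (human_actions, ai_actions, solved) in level order.
    ASSUMPTION: weights grow linearly with the level index.
    """
    weights = range(1, len(levels) + 1)
    total = sum(w * level_score(*lvl) for w, lvl in zip(weights, levels))
    return total / sum(weights)


def rhae(environments):
    """ASSUMPTION: the overall score is the plain mean of environment scores."""
    return sum(environment_score(env) for env in environments) / len(environments)


# The squaring example from the text: 10 human moves versus 100 AI moves.
print(level_score(10, 100, True, cutoff_multiplier=None))
# A two-level environment: human-level on level 1, twice the moves on level 2.
print(environment_score([(10, 10, True), (10, 20, True)]))
```

Taken literally, a 5× cutoff would stop a 100-move attempt against a 10-move baseline before it finished, so the sketch exposes the cutoff as a parameter that can be disabled to reproduce the squaring arithmetic on its own.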

What they found and why it matters

- Humans can solve 100% of these puzzles with no instructions. Most do so in minutes.

- As of March 2026, top AI systems score below 1% on this efficiency‑based measure in the semi‑private test. In other words, today’s best AIs rarely figure out brand‑new, instruction‑less worlds efficiently on first try.

- Earlier ARC tests (ARC-AGI‑1 and 2) already showed that simple memorization or just scaling up training data is not enough for true generalization. ARC-AGI‑3 raises the bar by requiring exploration, goal‑finding, and planning in interactive worlds—skills AIs still struggle with.

- The paper also highlights a real risk with public benchmarks: AIs can “overfit” by training on millions of similar tasks or even learning tiny details like specific color‑number codes. ARC-AGI‑3 counters that by keeping the real test set private and different from the demos.

Why is this important? It shows a clear, measurable gap between current AI and human‑level general intelligence in open‑ended, hands‑on problem solving. It also gives researchers a concrete way to track progress.

What this could lead to

ARC-AGI-3 pushes AI research toward systems that:

- explore smarter and learn faster from very little experience,

- infer goals and rules without being told,

- build internal models of the world and use them to plan efficient actions.

Those abilities are key for future AI assistants, robots, and scientific tools that must handle unfamiliar situations safely and efficiently. At the same time, the current results keep expectations grounded: while AI has become very good at tasks with clear rules and lots of training data (like coding with instant feedback), it still struggles to adapt like humans do when everything is new.

In short: ARC-AGI-3 is a fresh, fair way to measure whether AI can think and act like a curious, resourceful problem‑solver—learning new worlds the way people can.

Knowledge Gaps

Below is a single, concrete list of the paper’s unresolved knowledge gaps, limitations, and open questions across design, scoring, human calibration, evaluation, and validity:

- Inconsistent level count assumptions: environments are said to have “at least six levels,” yet the scoring section hard-codes n=5 and weights levels 1–5. Clarify the intended number of levels per environment and reconcile weighting and aggregation accordingly.

- Broken/malformed equations in the scoring section (missing brackets/denominators) prevent unambiguous implementation of RHAE; provide corrected, reference-tested formulas and code.

- Small and potentially unrepresentative human baseline (n=10 per environment) with “second-best” as the benchmark may be unstable; quantify variance, sensitivity to outliers, and confidence intervals for h_{l,e}.

- Apparent contradiction between “100% solvable by humans” in the abstract and the inclusion criterion of “at least two of ten” solving an environment; define the exact solvability standard and report the full distribution of human outcomes per environment.

- Human participant pool is geographically constrained (SF) and demographically skewed; assess generalizability and run replications with larger, more diverse samples.

- Exclusion of “low-effort” sessions is subjective and may bias baselines; pre-register exclusion rules and report how many/which sessions were excluded per environment.

- Lack of robustness checks for the “second-best human” baseline choice; compare against median, trimmed mean, or model-based estimates and report how leaderboard rankings change.

- Hard per-level cutoff at 5× human actions introduces censoring bias; quantify its impact on scores and explore alternatives (e.g., capped but continuous penalties rather than truncation).

- Power-law (square) penalty is chosen without ablation; provide sensitivity analyses across exponents and justify the selected exponent against desired discriminative properties.

- Actions are treated as uniformly costly, though “Undo,” grid-click selection, and directional moves likely have different operational or cognitive cost; evaluate whether weighting actions changes conclusions.

- No explicit treatment of resets in action counting for scoring (resets are allowed in human tests); clarify whether resets count and assess their prevalence and impact on comparability.

- The metric conflates exploration and execution, obscuring where agents fail; consider reporting separate exploration cost (to infer mechanics) versus execution cost (to achieve goal) per level.

- Lack of per-pillar evaluation (exploration, modeling, goal-setting, planning); propose diagnostic sub-metrics or ablations to attribute failure modes to specific agentic components.

- Official evaluation forbids harnesses/tools, yet many frontier models are designed for tool use; quantify how forbidding tools affects external validity and whether a “tool-enabled” track is warranted.

- Single fixed prompt across models may interact differently with provider-specific safety/formatting; report prompt adherence rates, parsing failures, and prompt-robustness experiments.

- Score uncertainty is not reported (no run-to-run variance, no confidence intervals), despite stochastic model sampling and non-determinism; standardize seeds, trial counts, and uncertainty reporting.

- Leaderboard comparability is confounded by differing provider-side hidden tools/test-time compute; specify compute budgets, temperature, and sampling policies to enable fair comparison.

- The claim that perception is “not limiting” is based on a few harness experiments; run systematic ablations (e.g., object overlays, downsampling, color jitter) to quantify perception vs. reasoning bottlenecks across the full set.

- OOD guarantees between public and private sets are not quantified; provide formal diversity metrics, mechanic overlap analysis, and nearest-neighbor distances to justify OOD claims.

- The novelty criterion (“single program solves two envs with ≥50% code savings”) is ad hoc; develop a principled and reproducible novelty measure (e.g., behavior embeddings, mechanic taxonomy distance).

- Data leakage risk for semi-private and fully private sets is acknowledged but not mitigated with concrete safeguards; adopt canaries/watermarks, usage monitoring, and periodic dataset refresh policies.

- No plan for benchmark longevity against synthetic environment pretraining; articulate update cadence, red-teaming strategy, and mechanisms to detect/prevent domain-specific overfitting at scale.

- State-graph validation includes hidden state in hashing, which agents do not observe; assess whether using hidden state for validation mischaracterizes effective branching factors from the agent’s perspective.

- Random-policy acceptance threshold (≤1e-4 success rate) conflicts with the example (P_win = 1/355); clearly separate tutorial vs. non-tutorial thresholds and report per-level random solve rates.

- Determinism vs. stochasticity is unclear; specify whether transitions are deterministic, what randomness sources exist, and how seeds are controlled for both development and evaluation.

- Non-interactive animations occur “between turns” while the environment “does not change” without actions; clarify the semantics of animations, whether they encode critical information, and how agents should parse them.

- Core Knowledge prior adherence is asserted but not audited; create and publish a checklist or reviewer rubric to verify no inadvertent language, cultural symbols, or prior-specific cues leak in.

- Multiple-mechanics requirement lacks operationalization; define a mechanic taxonomy and provide per-environment labels so researchers can analyze composition and transfer.

- Mechanics coverage of the private sets is not reported; provide aggregate statistics on mechanic diversity and composition depth, enabling targeted generalization research.

- Environment difficulty calibration beyond “easy for humans” lacks detail; publish per-level human action distributions, failure patterns, and time-to-first-insight to guide agent design.

- Reproducibility details are incomplete: exact environment versions, seeds, frame encodings, and serialization formats for replays are not specified; publish a locked evaluation kit with checksums.

- The Python engine’s 1000 FPS claim is unbenchmarked; provide micro/macro-benchmarks and guidance on hardware requirements for large-scale evaluation.

- Official scores on a small semi-private sample are reported without sample size per model or CIs; include the number of evaluated environments/levels and uncertainty bounds for each score.

- External validity to real-world agentic tasks is untested; run correlation studies between ARC-AGI-3 performance and downstream agent benchmarks (e.g., program synthesis with exploration, robotics sims).

- The benchmark does not isolate knowledge dependence; design environments that vary required prior knowledge systematically to quantify “knowledge vs. reasoning” reliance in LRMs.

- No analysis of exploit classes (e.g., clipping through walls, unintended state merges) beyond capping per-level score; implement exploit detection, patching protocols, and publish known-issue lists.

- Memory handling for models (carry-over of entire replies) is not standardized across providers with different context windows; document context lengths used and their effects on performance.

- Ethical/IRB considerations for human testing (consent, privacy in video replays, data retention) are not discussed; clarify compliance and whether human data will be shared for research.

- Licensing and access for semi-private/fully private sets are not detailed; outline partner selection criteria, audit trails, and terms preventing downstream data contamination.

- Lack of ablation on agent action spaces (e.g., removing Undo, altering click granularity) to see how control affordances shape measured “intelligence”; provide variants to stress-test robustness.

- No diagnostics for per-level failure modes (e.g., goal inference vs. dynamics modeling vs. long-horizon planning); release structured logs and failure annotations to aid targeted improvements.
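Several of the gaps above concern how stable a "second-best of ten" baseline is. One way to probe that is sketched below, using only the Python standard library. The action counts are made-up illustrative numbers, not data from the paper, and the helper names are hypothetical. The sketch compares the second-best count with the median and a trimmed mean, then checks how far each estimate moves when any single participant is dropped.

```python
import statistics


def second_best(counts):
    """Second-lowest action count (the baseline choice described in the paper)."""
    return sorted(counts)[1]


def trimmed_mean(counts, trim=1):
    """Mean after dropping the `trim` lowest and `trim` highest counts."""
    kept = sorted(counts)[trim:len(counts) - trim]
    return statistics.mean(kept)


def leave_one_out_range(counts, estimator):
    """Min and max of the estimator when each participant is removed in turn."""
    values = [estimator(counts[:i] + counts[i + 1:]) for i in range(len(counts))]
    return min(values), max(values)


# HYPOTHETICAL action counts for ten testers on one level (illustration only).
counts = [18, 22, 25, 27, 30, 31, 36, 40, 55, 90]

for name, estimator in [
    ("second-best", second_best),
    ("median", statistics.median),
    ("trimmed mean", trimmed_mean),
]:
    low, high = leave_one_out_range(counts, estimator)
    print(f"{name}: {estimator(counts)} (leave-one-out range {low} to {high})")
```

Because the score squares the ratio to the baseline, even a small shift in the baseline changes every score that depends on it. Reporting ranges like these per level would make that sensitivity visible.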

Practical Applications

Overview

ARC-AGI-3 introduces an interactive, turn-based benchmark for “agentic intelligence” that stresses four capabilities on never-seen tasks without instructions: exploration, modeling, goal-setting, and planning/execution. It contributes (1) a standardized environment format (64×64 grid, small action space), (2) an efficiency-centered metric (RHAE) grounded in human baselines, (3) a production pipeline and Python engine for fast environment creation/validation, (4) automated graph-based state-space analysis to detect trivial paths and estimate random win probability, and (5) governance practices to mitigate overfitting (public vs. private sets; official vs. community leaderboards). Below are concrete applications derived from these findings and methods.

Immediate Applications

The following applications can be piloted or deployed now, often with modest adaptation of the paper’s tools and practices.

- Agent capability evaluation for AI labs and vendors

  - Sector: AI/software

  - Tools/Workflows: Use ARC-AGI-3’s RHAE metric and “official leaderboard” rules to evaluate general-purpose models via a uniform system prompt; leverage the semi-private set or create internal OOD, verifiable, turn-based tasks.

  - Assumptions/Dependencies: Access to curated/guarded OOD tasks; API cost constraints (apply 5× human-action cap); acceptance that action efficiency is a proxy for adaptation quality.

- Pre-deployment QA for autonomous software agents and RPA

  - Sector: enterprise software, DevOps, IT automation

  - Tools/Workflows: Build ARC-AGI-3-like micro-environments that simulate novel UIs or workflows; score agents by first-contact action efficiency before release; reuse the Python engine for rapid testbed creation.

  - Assumptions/Dependencies: Ability to discretize actions and define verifiers for target workflows; human baselines for calibration.

- Robotics benchmarking with action-efficiency metrics

  - Sector: robotics, warehousing, logistics

  - Tools/Workflows: Wrap simulators in turn-based interfaces; adapt RHAE (akin to SPL) to navigation/manipulation tasks; compare agent action counts to expert or second-best human baselines.

  - Assumptions/Dependencies: Discretization of continuous control into “turns”; safe and accurate verifiers; bridging sim-to-real gaps.

- Game QA and content integrity checks

  - Sector: gaming

  - Tools/Workflows: Apply graph-based state-space construction to detect degenerate win paths, estimate random-policy win probability (<1e−4), and fuzz transitions; use novelty tests (program-length criterion) to avoid repetitive mechanics.

  - Assumptions/Dependencies: Integration with a game’s state representation and action space; adequate hashing/merging for state identity.

- Benchmark governance and anti-overfitting policy

  - Sector: AI governance, evaluation policy, standards bodies

  - Tools/Workflows: Adopt dual leaderboards (official vs. community harness) to separate generalization from handcrafted strategies; maintain private OOD test sets to reduce leakage; codify action caps to control cost.

  - Assumptions/Dependencies: Sustained curation of private datasets; clear submission rules and auditability.

- Human-in-the-loop evaluation protocol for interactive reasoning

  - Sector: UX research, edtech assessment, product design

  - Tools/Workflows: Run frequent small-cohort tests, set per-environment time limits, capture replays, and analyze per-level drop-offs; use “second-best” human as a stable baseline for efficiency.

  - Assumptions/Dependencies: Participant recruitment, IRB/ethics compliance, and replay capture/analysis tooling.

- Training and education in problem-solving and exploration

  - Sector: education, corporate training

  - Tools/Workflows: Deploy Core-Knowledge-based, instruction-less puzzles to teach exploration and planning; measure student/trainee action efficiency over levels with weighted contributions.

  - Assumptions/Dependencies: Alignment of puzzles with curricular goals; accessibility considerations; norms for repeated exposure.

- Developer tools for fast environment prototyping

  - Sector: AI research, RL, simulation

  - Tools/Workflows: Use the Python engine (∼1,000 FPS) to build turn-based agents and test-time adaptation benchmarks; integrate with RL frameworks; batch-run automated validation.

  - Assumptions/Dependencies: Python ecosystem fit; licensing and maintenance; engineering capacity to build verifiers.

- Leakage and overfitting diagnostics for model developers

  - Sector: AI lab operations, model auditing

  - Tools/Workflows: Probing prompts and trace inspection (e.g., unexpected use of ARC color maps) to detect contamination; maintain OOD tasks and monitor reasoning-chain artifacts across runs.

  - Assumptions/Dependencies: Access to model chain-of-thought or intermediate traces (or synthetic probes); cooperation from providers.

- Cost-aware evaluation operations

  - Sector: MLOps, evaluation engineering

  - Tools/Workflows: Enforce per-level action caps (e.g., 5× human actions) in evaluation runners; report RHAE and cost together (2D leaderboard).

  - Assumptions/Dependencies: Known human baseline; standardized runner and logging.

- Content creation pipelines for reasoning-focused games

  - Sector: edutainment, indie games

  - Tools/Workflows: Adopt studio pipeline (spec → internal → external → done), human solvability gates, multi-mechanic level design, and tutorial-level pattern; automate validation to avoid triviality.

  - Assumptions/Dependencies: Designer capacity for novelty; playtesting resources.
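For the cost-aware evaluation idea in the list above, a runner that enforces a per-level action cap might look like the following sketch. The `run_level` function, its arguments, and the toy counter level are hypothetical stand-ins rather than any published evaluation kit. The only behavior taken from the text is the cap at a multiple of the human action count (5× by default here).

```python
def run_level(step, initial_state, policy, human_actions, cap_multiplier=5):
    """Run one level until a win or until the action budget is spent.

    step(state, action) -> (next_state, won) and policy(state) -> action are
    caller-supplied callables (hypothetical interface for this sketch).
    """
    budget = cap_multiplier * human_actions
    state = initial_state
    for used in range(1, budget + 1):
        state, won = step(state, policy(state))
        if won:
            return {"solved": True, "actions": used}
    # Budget exhausted: stop here so evaluation cost stays bounded.
    return {"solved": False, "actions": budget}


def toy_step(state, action):
    """Toy level: a counter that must be raised from 0 to 4."""
    state = max(0, state + (1 if action == "inc" else -1))
    return state, state == 4


# An agent that has understood the level versus one that never makes progress.
print(run_level(toy_step, 0, lambda s: "inc", human_actions=4))
print(run_level(toy_step, 0, lambda s: "dec", human_actions=4))
```

Logging the action count alongside the solved flag gives both axes of a score-versus-cost report from a single run.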

Long-Term Applications

These applications are promising but require further research, scaling, or ecosystem development.

- Regulatory and procurement standards for autonomy levels

  - Sector: policy, public sector, defense procurement

  - Tools/Workflows: Certify autonomy via ARC-AGI-3-like tests emphasizing first-contact efficiency, goal inference, and OOD robustness; mandate private test suites for vendor evaluations.

  - Assumptions/Dependencies: Consensus on metrics and thresholds; independent test centers; anti-gaming enforcement.

- General-purpose agentic assistants for software and OSs

  - Sector: consumer and enterprise software

  - Tools/Workflows: Agents that explore unfamiliar applications, infer user intent/goals without instructions, and plan efficiently; prioritize verifiable sub-tasks to bound error.

  - Assumptions/Dependencies: Robust perception of diverse UIs; verifiers for high-stakes actions; safe fallback/undo.

- Autonomous science and R&D agents

  - Sector: pharmaceuticals, materials science, physics, chemistry

  - Tools/Workflows: Agents that explore novel simulators/labs, infer objectives (e.g., maximize yield while respecting constraints), and act with action-efficiency budgets; integrate test-time adaptation.

  - Assumptions/Dependencies: High-quality simulators and verifiable objectives; data/compute budgets; safety oversight.

- Embodied robots with goal discovery in unknown environments

  - Sector: industrial automation, service robotics

  - Tools/Workflows: Turn-based planning layers for real-time controllers; action-efficiency objectives to penalize trial-and-error; curriculum of OOD training/eval tasks for generalization.

  - Assumptions/Dependencies: Reliable perception-to-state mapping; safety and risk budgets; sim-to-real transfer.

- Standardized QA of interactive systems in regulated industries

  - Sector: healthcare IT, finance, aviation software

  - Tools/Workflows: ARC-AGI-3-like testbeds to validate agent behavior in unfamiliar workflows (EHRs, trading UIs) before deployment; efficiency thresholds as acceptance criteria.

  - Assumptions/Dependencies: Strong privacy/compliance controls; robust verifiers; human oversight.

- Scalable assessment platforms for problem-solving education

  - Sector: edtech

  - Tools/Workflows: Large-scale interactive exams that grade students on exploration/planning efficiency; weighted level design to emphasize concept integration.

  - Assumptions/Dependencies: Fairness across demographics; content validity studies; proctoring/integrity tooling.

- Security and red-teaming suites for autonomous agents

  - Sector: cybersecurity, AI safety

  - Tools/Workflows: OOD “sandbox” environments to test how agents explore under uncertainty, detect unsafe goal-setting, and measure action budgets; integrate with containment policies.

  - Assumptions/Dependencies: Accepted risk metrics tied to action counts; standardized reporting; avoidance of benchmark overfitting.

- Internal “benchmark studios” for OOD dataset production

  - Sector: AI labs and large enterprises

  - Tools/Workflows: Build in-house pipelines (spec → test → validate) for private, novelty-tested environments to evaluate generalization of agents before fielding.

  - Assumptions/Dependencies: Sustained investment in content creation; expert reviewers; secrecy to prevent leakage.

- ROI estimation for open-ended task automation

  - Sector: enterprise strategy/operations

  - Tools/Workflows: Use human-relative action-efficiency to forecast time/cost savings when deploying agents in unfamiliar processes; prioritize domains with verifiers.

  - Assumptions/Dependencies: Transferability from benchmark to domain; stable human baselines; cost models tied to action counts.

- Procedural curriculum generation guided by graph analytics

  - Sector: AI training platforms, RL research

  - Tools/Workflows: Automated generation of novel tasks with guaranteed non-triviality (random-win <1e−4), diverse mechanics, and compositional difficulty; use graph metrics to scaffold curricula.

  - Assumptions/Dependencies: High-quality generators; scalable validation; avoidance of overfitting via synthetic task saturation.

Glossary

- Action efficiency: A scalar metric counting how many actions an agent takes to solve an environment on first contact, used to evaluate efficiency. "Action efficiency is the number of moves or 'turns' required to solve a new environment upon first contact with it."

- Action space: The set of discrete actions an agent can execute in an environment. "Each environment offers a different action space, which is a subset of:"

- Agentic intelligence: The capability of an agent to autonomously explore, model, set goals, and plan/execute without explicit instructions. "ARC-AGI-3 shifts to targeting agentic intelligence."

- Agentness: A Core Knowledge prior referring to recognizing entities that act with intent and pursue goals. "Agentness: Recognizing that certain objects act with intent and pursue goals."

- Chain-of-Thought prompting: A technique that elicits step-by-step reasoning in LLMs to improve problem solving at inference time. "Chain-of-Thought prompting was discovered in 2022 (Wei et al., 2022) as a way to improve test-time reasoning abilities of LLMs"

- Compositional reasoning: Integrating multiple learned concepts or mechanics to solve harder tasks. "deeper compositional reasoning"

- Core Knowledge priors: Innate cognitive structures (e.g., objectness, basic physics) that environments rely on rather than learned cultural knowledge. "ARC-AGI-3 environments only leverage Core Knowledge priors"

- Directed graph: A graph where edges have direction, used here to model state transitions via actions. "Exploration models the environment as an explicit directed graph over reachable states"

- Domain-specific overfitting: Tuning systems or harnesses specifically for a benchmark domain such that performance doesn’t generalize. "Domain-specific overfitting. This includes any agent that is created specifically to play ARC-AGI-3 environments in general"

- Fuzzing harness: A testing setup that injects randomized inputs to uncover edge-case crashes and inconsistencies. "This combined performance and fuzzing harness testing."

- Graph-based state space: A representation of all reachable environment states and transitions as a graph to analyze structure and solvability. "Exploring graph-based state space construction and win probability estimation"

- Harness: An external control wrapper that orchestrates strategies, tools, or prompts around a model to boost task performance. "using a harness that is handcrafted or specifically configured by someone with knowledge of the public environments."

- Hash-based: Using hash functions to uniquely identify states for merging equivalent nodes during exploration. "Node identity is hash-based, allowing the builder to merge distinct trajectories that arrive at the same underlying state."

- Hidden state: Internal environment variables not directly visible in the observation that influence transitions. "a successor node is constructed from the returned frame and hidden state"

- Human baseline: The reference performance used for normalization, defined as the second-best human action count. "We defined the human baseline as the second-best human by number of actions used."

- Interpolative retrieval: Recovering or interpolating patterns from training data rather than truly generalizing to novel tasks. "memorization and interpolative retrieval of patterns found in their training data"

- Large Reasoning Model (LRM): A paradigm emphasizing test-time reasoning and adaptation beyond static pretraining. "giving rise to the LRM (Large Reasoning Model) paradigm."

- Merge density: A statistic tracking how often distinct trajectories converge to identical states in the constructed graph. "The implementation also tracks merge density, maximum observed depth, cycle detection, and whether the reachable graph has been fully explored."

- Out-of-distribution (OOD): Data that differs from the distribution of available or training data, used to assess true generalization. "Going forward, benchmark designers will need to steer private datasets to be out-of-distribution (OOD) from any publicly available demonstration data if they want to test true generalization."

- Pretraining scaling: The approach of improving model performance by increasing training data and compute without architectural changes. "This is the approach known as pretraining scaling, which was the dominant paradigm of AGI research from 2019 to 2024."

- Power-law scoring: A scoring method that applies a power (squaring) to relative efficiency to more strongly penalize inefficiency. "ARC-AGI-3 uses a power-law efficiency term rather than a linear one."

- Random policy: An agent policy that selects actions uniformly or stochastically without using knowledge of the environment. "the system can still produce mathematically grounded bounds on the probability that a random policy will solve the environment."

- Reachability: The property describing which states can be accessed from the start under valid action sequences. "The graph reveals structural properties of each environment, exposes cycles, estimates reachability, and quantifies stochastic solvability in a reproducible manner."

- Recording-based playback: Replaying stored action traces to verify determinism, reproducibility, and for debugging. "Finally, developer-provided recording-based playback verifies reproducibility."

- Relative Human Action Efficiency (RHAE): The per-level, human-normalized efficiency metric used for ARC-AGI-3 scoring. "This scoring function is called RHAE (Relative Human Action Efficiency), pronounced 'Ray'."

- SPL (Success weighted by Path Length): A robotics metric that evaluates efficiency of successful paths, inspiring ARC-AGI-3’s formulation. "This formulation is inspired by the robotics navigation Success weighted by Path Length (SPL) metric (Anderson et al., 2018)"

- State-space approximation: A compact representation of the explored state graph that merges equivalent states to reduce redundancy. "into a compact state-space approximation rather than a collection of independent rollouts."

- Stochastic solvability: The probability that an environment is solved by chance, for example by a policy that picks actions at random. "quantifies stochastic solvability"

- Test-time adaptation: Adjusting a model’s reasoning or behavior at inference time using additional compute or feedback. "led to a second scaling paradigm using test-time adaptation (or test-time compute)."

- Test-time training: Updating or specializing a model during inference using the current test distribution or synthetic data. "Test-time training emerged as a breakthrough technique, reaching a score of 53.5% on the private ARC-AGI-1 test set."

- Turn-based interface: An interaction mode where the environment advances only in response to discrete agent actions. "The benchmark utilizes a turn-based interface designed to prioritize offline reasoning over real-time sensorimotor 'reflexes.'"

- Verifiable domains: Task domains that provide exact automatic correctness checks for solutions. "such domains are known as 'verifiable domains'."

- Win condition: The terminal condition that ends a level when achieved. "A level ends when a win condition (terminal frame) is reached."
