- The paper introduces XR Blocks, a modular open-source framework that abstracts spatial computing complexities for rapid, LLM-driven XR prototyping.

- It uses Gemini to generate XR Blocks scripts from natural-language prompts, reaching a one-shot pass rate of up to 95.5% with high-capacity models.

- The approach accelerates mixed-reality experience creation for education, simulation, and gaming on commodity WebXR platforms.

Vibe Coding XR: Intent-Driven, LLM-Augmented Prototyping for WebXR

Motivation and Context

The intersection of generative AI and spatial computing has generated significant demand for workflows that allow rapid prototyping of intelligent XR experiences from high-level intent descriptions. However, mainstream XR development remains highly fragmented, often requiring low-level sensor API integration, complex game engines, and domain-specific knowledge of perception pipelines. Popular LLM-driven prototyping environments have democratized 2D/3D web app creation via “vibe coding,” but these capabilities have not translated successfully to the XR domain due to the lack of high-level, human-centered abstractions that allow large models to reliably synthesize spatial behaviors.

Vibe Coding XR addresses this deficiency by introducing the open-source XR Blocks framework, which abstracts spatial computing and interaction complexities into high-level primitives accessible to both human creators and generative models. This framework is coupled with an LLM-driven workflow leveraging Gemini, facilitating an end-to-end, prompt-based authoring pipeline for XR experiences deployable on commodity WebXR platforms.

System Architecture: XR Blocks Framework

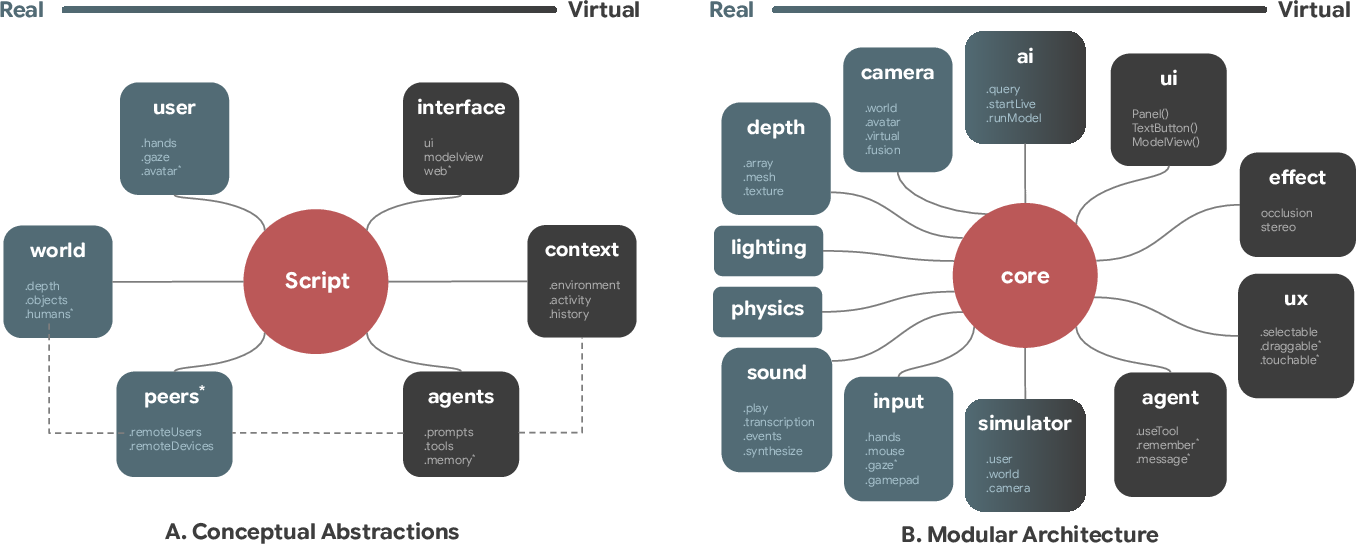

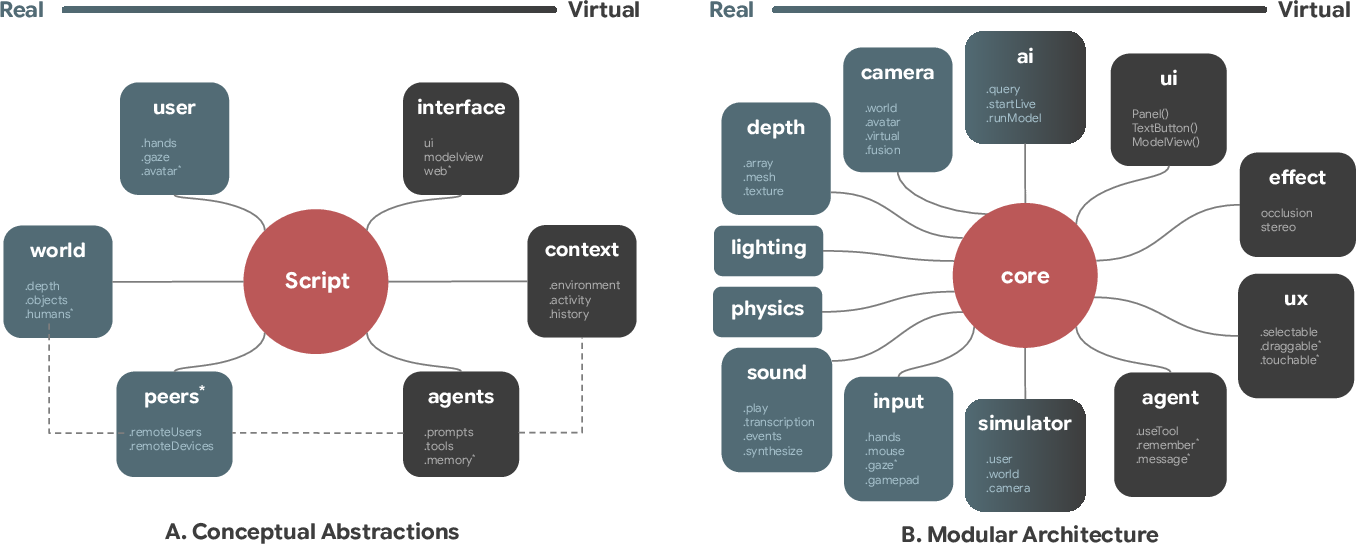

XR Blocks is designed to absorb the incidental complexity of spatial computing (sensor fusion, perception pipelines, and multimodal interaction) by exposing the core user, world, and agent entities as explicitly configurable semantic primitives. Its web-based engine is layered on top of WebXR, three.js, and TensorFlow.js. The Reality Model formalizes the compositional semantics of spatial environments and supports modular, extensible subsystems for environmental perception, physics, cross-device collaboration, and agentic behavior.

Figure 1: The XR Blocks Framework comprises a conceptual Reality Model for users, the world, and agents, and a modular architecture exposing distinct subsystems for perception, physics, interaction, and agent reasoning.
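To make the idea of configurable user, world, and agent primitives concrete, the following is a minimal, language-agnostic sketch written in Python. It is not the XR Blocks API: every class, field, and method name here (`User`, `World`, `Agent`, `RealityModel`, `enabled_subsystems`) is a hypothetical stand-in chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class User:
    """Hypothetical user primitive: which input modalities are enabled."""
    hands: bool = True
    gaze: bool = False


@dataclass
class World:
    """Hypothetical world primitive: which environment subsystems are enabled."""
    depth_sensing: bool = False
    physics: bool = False


@dataclass
class Agent:
    """Hypothetical agent primitive: an AI collaborator with a named backend."""
    name: str
    model: str = "gemini"


@dataclass
class RealityModel:
    """Illustrative composition of the three entity types named in the text."""
    user: User = field(default_factory=User)
    world: World = field(default_factory=World)
    agents: List[Agent] = field(default_factory=list)

    def enabled_subsystems(self) -> List[str]:
        """Return the subsystems a runtime would need to start for this config."""
        flags = {
            "hands": self.user.hands,
            "gaze": self.user.gaze,
            "depth": self.world.depth_sensing,
            "physics": self.world.physics,
            "agents": bool(self.agents),
        }
        return [name for name, on in flags.items() if on]


# A scene with hand input (the default), physics, and one tutoring agent.
scene = RealityModel(world=World(physics=True), agents=[Agent(name="tutor")])
print(scene.enabled_subsystems())  # ['hands', 'physics', 'agents']
```

The point of the sketch is the shape of the abstraction: a creator (or an LLM) declares which entities and capabilities a scene needs, and the framework decides which perception or physics subsystems to start.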

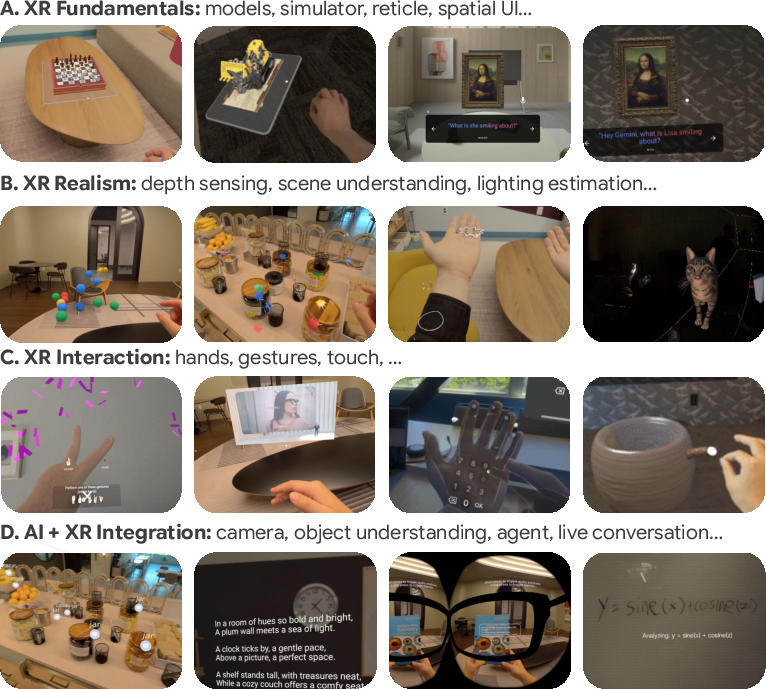

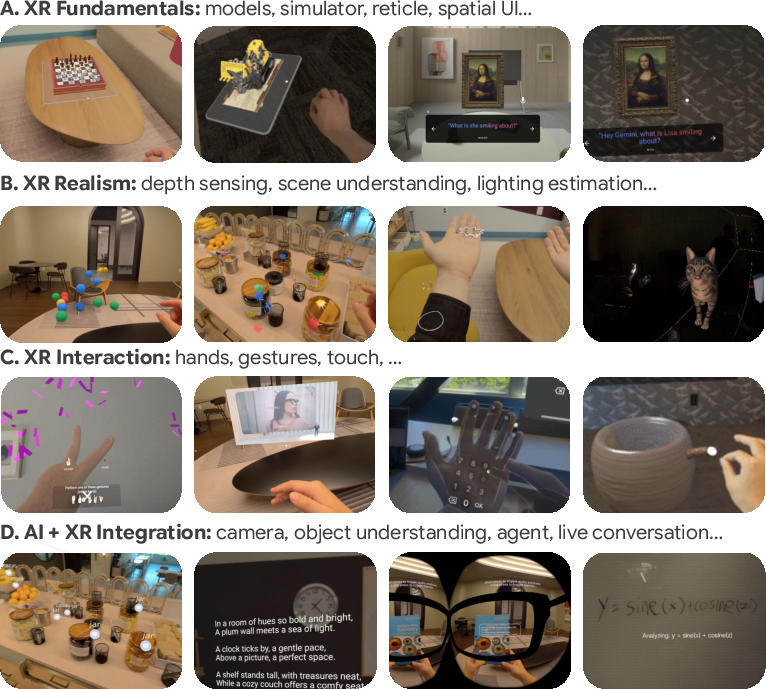

Framework extensibility is achieved through curated templates and human-coded exemplars (see Figure 2), which ground LLM-synthesized code in best practices and reduce API hallucinations. The web-first design makes rapid iteration and shareable deployment possible on both desktop simulated environments and Android XR headsets.

Workflow: LLM-Augmented Vibe Coding XR

The Vibe Coding XR workflow contextualizes a generalist LLM (Gemini) with a carefully constructed system prompt that encodes the core XR Blocks documentation, template patterns, and package grounding. Users enter prompts such as "create a dandelion that reacts to hand" in a web-based interface, and Gemini responds with a runnable XR Blocks script. Given this prompt context, the LLM acts as an expert XR designer and produces code that conforms to the spatial, semantic, and ergonomic constraints of room-scale XR.
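The prompt-assembly step described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the `GroundingContext` container, the section labels, the placeholder strings, and the request dictionary layout are all hypothetical, and no model is actually called.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class GroundingContext:
    """Hypothetical container for the three grounding sources named in the text."""
    core_docs: str
    templates: List[str]
    packages: List[str]


def build_system_prompt(ctx: GroundingContext) -> str:
    """Join documentation, templates, and allowed packages into one system prompt."""
    sections = [
        "You are an expert XR designer. Write a runnable XR Blocks script.",
        "[Core documentation]\n" + ctx.core_docs.strip(),
        "[Templates]\n" + "\n\n".join(t.strip() for t in ctx.templates),
        "[Allowed packages]\n" + "\n".join(f"- {p}" for p in ctx.packages),
    ]
    return "\n\n".join(sections)


def build_request(ctx: GroundingContext, user_prompt: str) -> Dict[str, str]:
    """Pair the fixed system prompt with the user's natural-language intent."""
    return {"system": build_system_prompt(ctx), "user": user_prompt.strip()}


# Placeholder grounding material; real documentation and templates would go here.
ctx = GroundingContext(
    core_docs="(framework documentation text)",
    templates=["(human-coded template source)"],
    packages=["three.js"],
)
request = build_request(ctx, "create a dandelion that reacts to hand")
print(request["system"])
```

Keeping the grounding material in a fixed system prompt and passing only the user's intent as the variable part means every generation sees the same documentation and templates, which is the mechanism the text credits for reducing hallucinated API calls.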

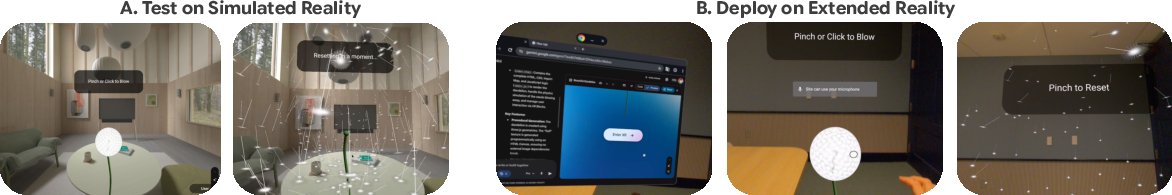

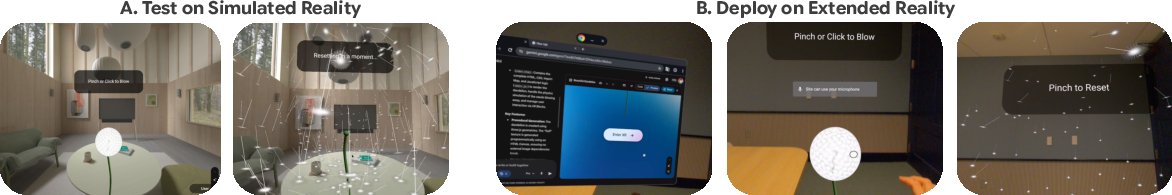

Figure 3: Vibe Coding XR accelerates prototyping by supporting both desktop simulated reality testing and instant deployment to Android XR headsets for direct, embodied interactions.

Generated scripts are evaluated interactively in desktop simulators or directly on headsets, enabling rapid iterative development cycles where speculative and complex spatial behaviors are synthesized in minutes.

Empirical Evaluation

The preliminary technical evaluation introduces VCXR60, a 60-item dataset collected from structured workshops, to benchmark generation success rates and inference latency. Benchmarking with different Gemini backends highlights a clear scaling effect: higher-capacity models with longer-context reasoning (Gemini Pro, “high thinking”) reach a 95.5% one-shot pass rate with a median latency of 86 s, while lower-context or lighter-weight backends fail more often or produce suboptimal behaviors. The pass rates indicate that larger LLMs matter most when an XR application requires intricate perception and interaction logic.
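The two reported metrics can be computed from per-prompt trial records as in the sketch below. The `Trial` record and the sample values are illustrative assumptions and are not data from the paper; the sketch also assumes that "one-shot pass" means the first generated script ran successfully without a retry, which the summary does not spell out.

```python
from dataclasses import dataclass
from statistics import median
from typing import List


@dataclass
class Trial:
    """Hypothetical record of one generation attempt for one benchmark prompt."""
    prompt_id: str
    passed: bool       # assumed meaning: first generated script ran successfully
    latency_s: float   # wall-clock generation time in seconds


def one_shot_pass_rate(trials: List[Trial]) -> float:
    """Fraction of trials whose first generation passed."""
    if not trials:
        raise ValueError("at least one trial is required")
    return sum(t.passed for t in trials) / len(trials)


def median_latency(trials: List[Trial]) -> float:
    """Median generation latency in seconds across all trials."""
    return median(t.latency_s for t in trials)


# Made-up sample data, for illustration only.
trials = [
    Trial("p01", True, 80.0),
    Trial("p02", True, 90.0),
    Trial("p03", False, 100.0),
    Trial("p04", True, 70.0),
]
print(one_shot_pass_rate(trials))  # 0.75
print(median_latency(trials))      # 85.0
```

Reporting the median rather than the mean keeps a few unusually slow generations from dominating the latency figure.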

Demonstrated Application Scenarios

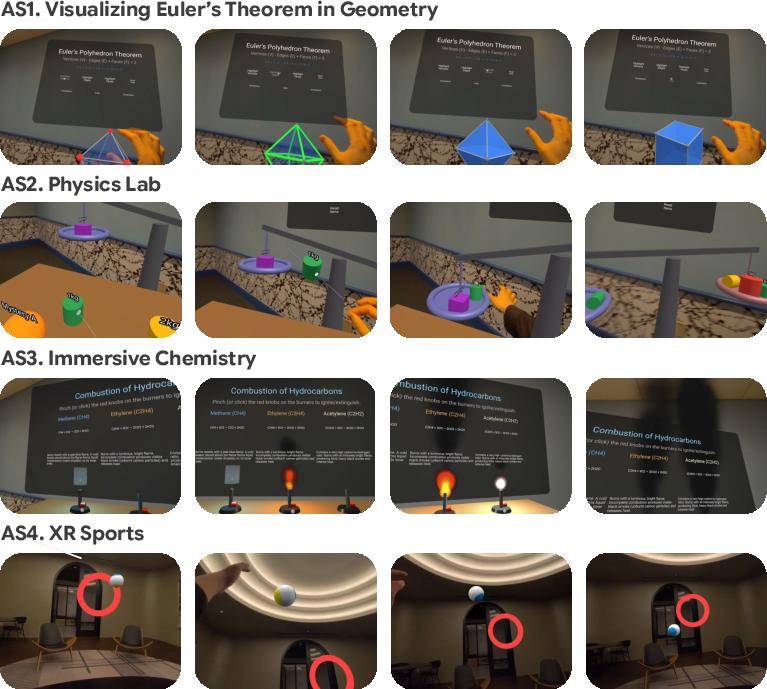

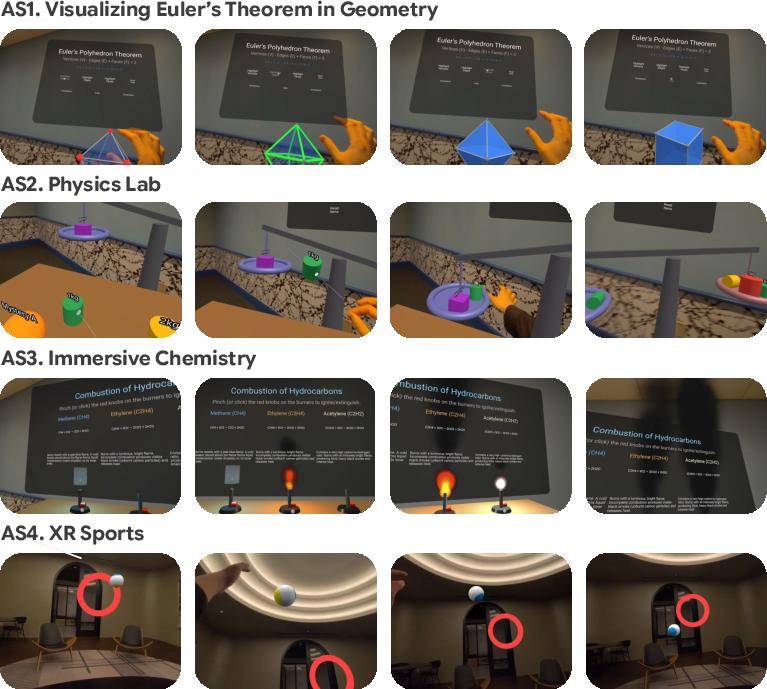

XR Blocks and Vibe Coding XR were repeatedly validated across a broad range of use cases:

- Educational AI+XR: Math and chemistry tutors, where LLMs autonomously select instructional graphics and implement them as interactive, hand-driven objects.

- Physics-Aware XR: Sports and mechanics simulations, utilizing physics modules for realistic hand, environment, and rigid-body interactions.

- Rapid Game Prototyping: Procedural content generation (e.g., voxel-based games, starmaps, city layouts) within minutes, reducing traditional XR development time by orders of magnitude.

Figure 4: AI-generated XR applications for rapid prototyping of educational and physical activity use cases.

Figure 2: Human-coded XR Blocks templates serve as grounding for best practices and templated LLM generation.

Technical and Practical Limitations

WebXR-based systems, while maximizing accessibility and platform reach, are inherently limited in rendering performance and responsiveness relative to native engines (e.g., Unity, Unreal). Network-bound LLM inference introduces variable latency. Furthermore, current abstractions prioritize audio-visual modalities, with future extensions anticipated for richer, multi-sensory experiences (e.g., haptic, biosignal integration via WebUSB and IoT).

VCXR60 is a preliminary benchmark. Comprehensive, large-scale benchmarks that cover XR abstractions in detail, measure LLM grounding efficiency (e.g., reduced token count), and include agentic, human-in-the-loop evaluation have yet to be developed.

Implications and Forward Directions

The Vibe Coding XR pipeline establishes a repeatable pattern for intent-based, natural language-driven prototyping in high-complexity, spatially-embedded domains. The demonstration that LLM-augmented, open, web-based frameworks can generate and operationalize mixed-reality interactions from abstract prompt intent within minutes has direct implications for democratizing 3D software creation, education, and research.

Future theoretical work may examine the compositional abstraction of spatial environments as a “semantic substrate” for agentic reasoning, and how large models can traverse these abstraction layers efficiently and safely. On the practical side, the ecosystem may grow to include LLM-driven cross-compilers targeting native XR engines, structured benchmarks akin to HumanEval or LMArena adapted for spatial behaviors, and multimodal prompt inputs spanning gesture, gaze, or biosignal streams.

The modular, open-source XR Blocks framework is positioned as a community substrate for continuously advancing LLM+XR workflows, propelling practical advances in authoring, accessibility, and agentic co-creation across platforms.

Conclusion

Vibe Coding XR operationalizes a generalist, LLM-powered approach to spatial software prototyping, systematically lowering technical barriers for AI+XR creators while maintaining expressivity, modularity, and empirical reliability. The findings signal a paradigm shift for spatial computing—moving from low-level, tool-bound practice toward intent-driven, rapid authoring mediated by scalable, semantic abstractions and code generation workflows (2603.24591). The paper’s open-source contribution serves as an extensible foundation for future research on agentic spatial reasoning, multimodal interaction, and formalized benchmarking in AI-augmented XR.