Forecasting and Manipulating the Forecasts of Others

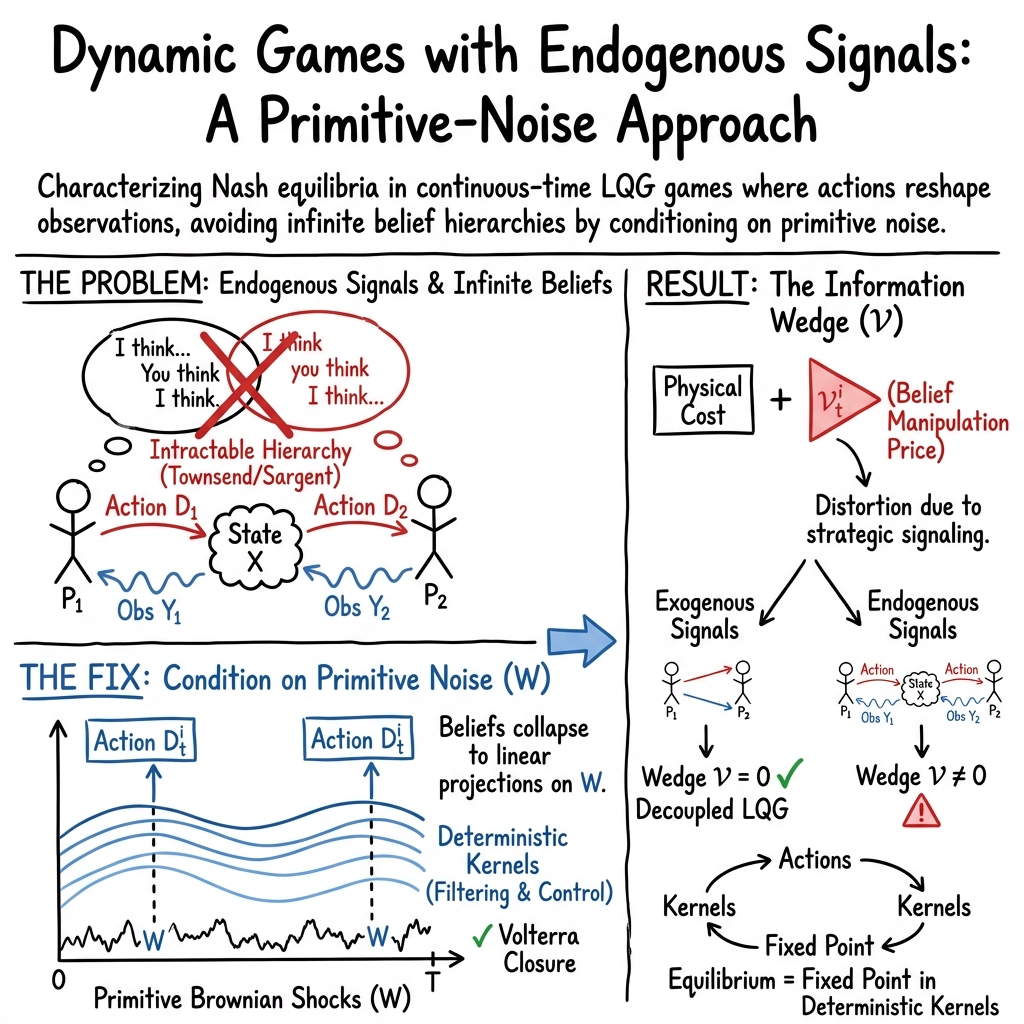

Abstract: In strategic environments with private information, evaluating a change in policy requires predicting how the equilibrium responds -- but when actions reshape opponents' signals, each agent's optimal response depends on an infinite hierarchy of beliefs about beliefs that has resisted exact analysis for four decades. We provide the first exact equilibrium characterization of finite-player continuous-time LQG games with endogenous signals. Conditioning on primitive Brownian shocks rather than the physical state -- a dynamic analogue of Harsanyi's common-prior construction -- collapses the belief hierarchy onto deterministic two-time kernels, reducing Nash equilibrium to a deterministic fixed point with no truncation and no large-population limit. The characterization yields an explicit information wedge $\mathcal{V}^i_t$ -- a deterministic Volterra process -- that prices the marginal value of shifting opponents' posteriors. The wedge vanishes precisely when signals are exogenous to controls, formally delineating the boundary where strategic belief manipulation matters, and provides a closed-form mapping from information primitives to equilibrium outcomes.

Explain it Like I'm 14

Overview

This paper studies a tricky kind of “guessing game” where several players act over time while watching noisy clues about a hidden situation—and their actions also change the clues that others see. The main goal is to predict what everyone will do when the rules about information (like how precise signals are, or how often announcements arrive) change. The big contribution is an exact way to solve these games without approximations, even though they involve an “infinite mirror” of everyone forecasting everyone else’s forecasts.

What questions does the paper answer?

The paper asks, in simple terms:

- How can we predict what people will do when their actions not only change the world but also change what others learn about it?

- Can we collapse the “infinite chain” of beliefs-about-beliefs-about-beliefs into something we can compute exactly?

- Can we measure how much a person gains by nudging what others believe (that is, by strategically shaping others’ forecasts)?

- How do changes in information quality (like more precise reports) flow through the system to change outcomes?

How does the approach work? (Plain-language explanation)

Think of a group project where the “true progress” is hidden behind a curtain. Each teammate gets a blurry gauge (a noisy signal) of the project’s progress. Everyone also moves the project forward by acting. Here’s the twist: your actions not only change the project’s true progress, they also change what your teammates’ gauges display. So to choose your action, you need to guess the project’s true state and also guess how others are guessing, which depends on what they see, which depends on your action… That’s the infinite-mirror problem.
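To make the twist concrete, here is a minimal toy sketch in Python. It is a discrete-time illustration under assumed dynamics, not the paper's continuous-time model: the function name `simulate_gauge`, the additive progress rule, and the noise levels are all hypothetical. It compares a gauge that reads true progress (so it moves with your actions) against a gauge that reads only the outside shocks (so it does not).

```python
import random

def simulate_gauge(my_actions, shocks, gauge_noise, endogenous=True):
    """Return the gauge readings a teammate sees over time.

    Toy assumption (not the paper's model):
        progress[t+1] = progress[t] + my_action[t] + shock[t]
    Endogenous gauge: reads true progress plus noise, so it moves with my actions.
    Exogenous gauge: reads only the accumulated shocks plus noise.
    """
    progress = 0.0
    shock_sum = 0.0
    readings = []
    for action, shock, noise in zip(my_actions, shocks, gauge_noise):
        progress += action + shock
        shock_sum += shock
        observed = progress if endogenous else shock_sum
        readings.append(observed + noise)
    return readings

rng = random.Random(0)
steps = 5
shocks = [rng.gauss(0.0, 1.0) for _ in range(steps)]
gauge_noise = [rng.gauss(0.0, 0.5) for _ in range(steps)]

lazy = [0.0] * steps  # I do nothing
busy = [1.0] * steps  # I push by one unit each step

def shift(endogenous):
    """How much my teammate's gauge moves when I switch from lazy to busy."""
    low = simulate_gauge(lazy, shocks, gauge_noise, endogenous)
    high = simulate_gauge(busy, shocks, gauge_noise, endogenous)
    return [h - l for l, h in zip(low, high)]

endo_shift = shift(endogenous=True)
exo_shift = shift(endogenous=False)
print("endogenous gauge shift:", endo_shift)
print("exogenous gauge shift: ", exo_shift)
```

With the endogenous gauge, the teammate's readings drift upward by roughly one unit per step when you work harder; with the exogenous gauge they do not move at all. That feedback from your action into someone else's clue is what starts the infinite-mirror loop.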

The paper solves this by making three simplifying, but powerful, choices:

- Linear–Quadratic–Gaussian (LQG) setting

- Linear: the system responds like a straight line (double the push, double the change).

- Quadratic: costs are like “sum of squared errors” (e.g., how far you are from a target plus effort cost).

- Gaussian: randomness is like the jittery wiggle of a “Brownian motion” (think tiny random bumps each moment).

- Focus on the source of randomness instead of the moving state

- Instead of directly tracking the hidden state (which mixes nature’s randomness and everyone’s actions), the paper tracks the underlying “primitive shocks” (the common random wiggles driving everything).

- Each player’s “noise-state” is their best running estimate of those random wiggles based on their private signals. It’s like reconstructing the dice throws that shook the room, rather than just watching the table that moved.

- Fixed recipes over time (“Volterra kernels”)

- A player’s strategy becomes a fixed, time-indexed recipe that says, “how much to react now to each past moment of the estimated random wiggles.”

- These recipes are deterministic (pre-calculated) functions of time, so the tangled belief hierarchy collapses into solving a set of deterministic equations.

- This yields two key “closure” results:

- Filtering closure: how players update their beliefs can be written exactly using fixed, two-time recipes.

- Best-response closure: the best thing to do against others using these recipes is to use the same kind of recipe yourself.

- With both closures, finding a Nash equilibrium becomes finding a fixed point in these recipes—no truncation, no large-population approximation.
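The "fixed recipe" idea above can be sketched in a few lines of Python. This is a discretized illustration only: the function `volterra_action`, the exponential kernel `fading_memory_kernel`, and its coefficients are hypothetical choices, not the equilibrium kernels derived in the paper. The point is that today's action is a weighted sum of past estimated wiggles, with weights that depend only on the two times involved.

```python
import math
import random

def volterra_action(kernel, shock_estimates, dt):
    """Discretized Volterra strategy: u[t] = sum over s <= t of kernel(t, s) * w_hat[s]."""
    actions = []
    for t in range(len(shock_estimates)):
        u_t = sum(kernel(t * dt, s * dt) * shock_estimates[s] for s in range(t + 1))
        actions.append(u_t)
    return actions

def fading_memory_kernel(t, s):
    """Hypothetical deterministic recipe: lean against shocks, weighting recent ones more."""
    return -0.8 * math.exp(-2.0 * (t - s))

rng = random.Random(1)
dt = 0.1
# Stand-in for a player's estimated shock increments (assumed, for illustration).
shock_estimates = [rng.gauss(0.0, math.sqrt(dt)) for _ in range(20)]

actions = volterra_action(fading_memory_kernel, shock_estimates, dt)

# Linearity: doubling every estimated wiggle doubles every action.
doubled = volterra_action(fading_memory_kernel, [2.0 * w for w in shock_estimates], dt)

# Causality: changing only the last wiggle leaves all earlier actions untouched.
perturbed_input = shock_estimates[:-1] + [shock_estimates[-1] + 1.0]
perturbed = volterra_action(fading_memory_kernel, perturbed_input, dt)

print("first three actions:", actions[:3])
print("linear:", all(abs(d - 2.0 * a) < 1e-12 for a, d in zip(actions, doubled)))
print("causal:", perturbed[:-1] == actions[:-1])
```

Because the kernel is an ordinary function of two times, nothing random has to be solved for: in this picture, finding an equilibrium means finding the right kernel for each player, which is a deterministic problem.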

What are the main findings and why do they matter?

- Exact equilibrium in a hard class of games

- The paper gives the first exact characterization of equilibria in finite-player, continuous-time LQG games where signals depend on actions (“endogenous signals”).

- This means we can predict how equilibrium changes when information policies (like disclosure precision) change—without resorting to rough approximations.

- The “information wedge”: putting a price on belief manipulation

- The paper uncovers a new object called the information wedge, written as $\mathcal{V}^i_t$ for player $i$.

- Intuition: it’s a “price tag” on how valuable it is to tweak what others believe. If your action makes your opponent think the hidden situation is different, that changes how they act, which changes your future situation. The wedge measures that marginal value.

- It comes in two parts:

- Mean wedge: shifts average behavior.

- Kernel wedge: changes how players react to shocks over time.

- Crucially, the wedge is exactly zero when signals do not depend on actions (exogenous signals). That cleanly draws the line between cases where strategic belief manipulation matters and where it doesn’t.

- Clear map from information quality to outcomes

- The framework provides a closed-form mapping from “precision paths” (how accurate signals are over time) to the resulting equilibrium actions and payoffs.

- This lets a policymaker see how making reports more or less precise changes behavior—not only because people estimate the state better, but also because it changes how much they try to influence each other’s beliefs.

- Decomposing costs and optimizing attention

- The total cost for a player splits into:

- A certainty-equivalent part (what you’d pay if you saw the underlying shocks perfectly).

- An information cost (how much extra you pay because you’re estimating).

- Because the system is Gaussian and linear, the information cost is a clean, deterministic function of information precision, which means choosing how much attention/precision to buy is a standard optimization problem.

- Special cases and extensions

- If signals are exogenous, the strategic channel disappears and the game becomes a set of independent single-agent LQG problems.

- The approach extends to risk-sensitive players and to long-run, time-homogeneous environments (where it connects to transfer functions and frequency-domain tools).
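The claim in the cost-decomposition bullet, that the information cost is a deterministic function of precision, has a well-known single-agent analogue that can be sketched in Python. The example below is the textbook scalar Kalman-Bucy filter, not the paper's multi-player formula. It assumes a hidden state driven by shocks of size `sigma` and a signal with a given `precision`, so the estimation-error variance `P` follows the Riccati equation `dP/dt = sigma**2 - precision * P**2`. The function name and parameter values are illustrative.

```python
import math

def posterior_variance_path(precision, sigma=1.0, p0=1.0, horizon=10.0, dt=0.001):
    """Euler-integrate the scalar Riccati ODE dP/dt = sigma^2 - precision * P^2.

    P is the variance of the estimation error. No random numbers appear:
    the whole path is pinned down by sigma, precision, and the starting variance.
    """
    steps = int(round(horizon / dt))
    p = p0
    path = [p]
    for _ in range(steps):
        p += (sigma ** 2 - precision * p ** 2) * dt
        path.append(p)
    return path

final_variances = {}
for precision in (1.0, 4.0, 16.0):
    final_variances[precision] = posterior_variance_path(precision)[-1]
    steady_state = 1.0 / math.sqrt(precision)  # sigma / sqrt(precision) with sigma = 1
    print(f"precision={precision:5.1f}  long-run error variance={final_variances[precision]:.4f}"
          f"  (theory: {steady_state:.4f})")
```

In this toy setting, sharper signals lower the long-run error variance to `sigma / sqrt(precision)`, and the whole variance path can be computed before any data arrive. That is the sense in which choosing how much precision to buy reduces to an ordinary optimization problem.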

Why this matters:

- It shows precisely when and how changing the flow of information changes strategic behavior—not just via better estimates, but via the desire to steer what others believe.

- It replaces an intractable “infinite mirrors” problem with a solvable fixed-point system.

What could this change in practice?

This framework helps people who design information policies or decentralized systems:

- Central banks and public communication

- If a central bank releases more precise, faster information, firms not only react to the news—they also adjust knowing their actions will influence others’ readings. The method shows exactly how this plays out, which helps design better disclosure rules.

- Financial markets and transparency

- In trading, orders move prices, and prices inform others. The information wedge explains the strategic part of “price impact.” This can inform market design and regulation.

- Contracts and teams with hidden effort

- When several agents work on a shared project and infer each other’s effort from outcomes, their actions shape what others infer. The framework applies directly, guiding contract and incentive design.

- Robotics and multi-agent control

- When robots (or autonomous vehicles) have different sensors and their actions change what others can sense, the method gives a way to coordinate without assuming everyone shares all information.

- Research benchmarks

- The paper offers an exact solution as a benchmark to evaluate simpler approximations that cut off the belief hierarchy.

In short, the paper turns a long-standing “beliefs-about-beliefs” tangle into a concrete, computable system. It shows how to predict the effects of information policies in strategic settings and pinpoints when strategic belief manipulation matters—and by how much.
