- The paper introduces DropAnSH-GS, which uses anchor-based dropout and SH dropout to reduce spatial redundancy and overfitting in sparse-view 3D Gaussian Splatting.

- It demonstrates that dropping entire clusters of Gaussians generates stronger gradients, enhancing global context encoding and improving metrics like PSNR and SSIM.

- The technique enables efficient model compression through post-training SH truncation while incurring minimal computational overhead (<2.8% increase in training time).

Dropping Anchor and Spherical Harmonics for Sparse-view Gaussian Splatting: An Authoritative Summary

Motivation and Problem Statement

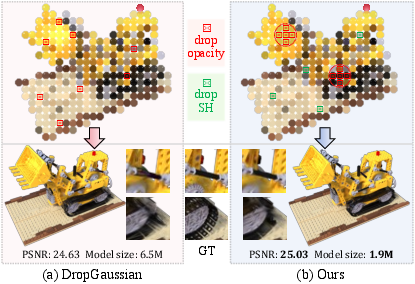

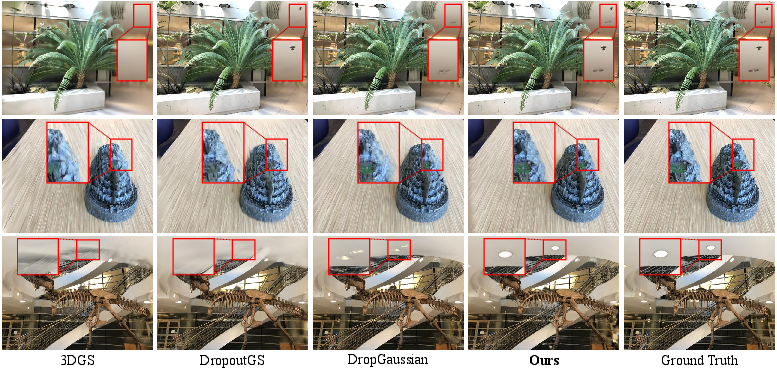

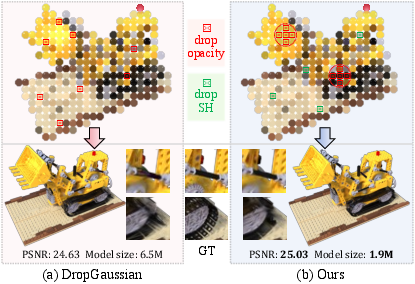

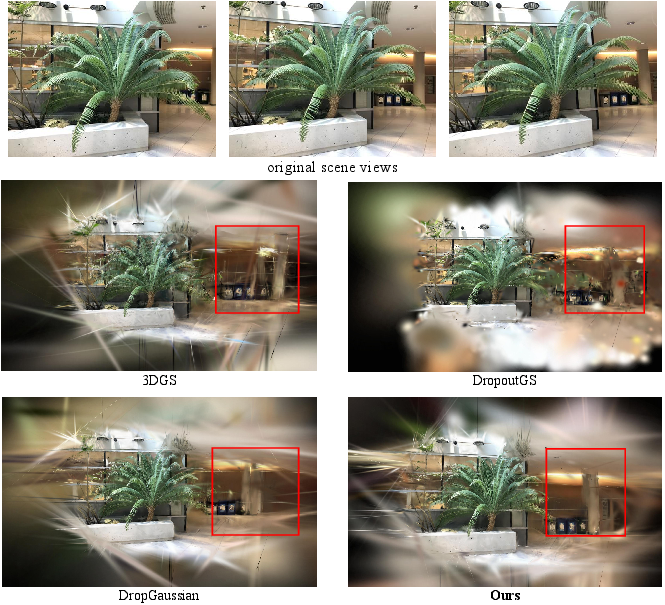

The efficacy of 3D Gaussian Splatting (3DGS) for realistic novel view synthesis depends on the density of input views. Under sparse-view conditions, 3DGS models are prone to severe overfitting, which shows up as hallucinated details, artifacts, and poor generalization to unseen views. Previous dropout-based regularizers, including DropGaussian [park2025dropgaussian] and DropoutGS [xu2025Dropoutgs], mitigate overfitting by randomly nullifying the opacity of individual Gaussians. However, these approaches neglect structural redundancy and the compensation effect: neighboring Gaussians tend to fill the void left by a dropped component, which weakens the regularization. Prior methods also overlook the role of high-degree spherical harmonic (SH) coefficients in overfitting, especially when few views are available.

Figure 1: DropGaussian nullifies individual Gaussian opacities, while DropAnSH-GS drops anchor Gaussians and their spatial neighbors, additionally applying SH Dropout; this eliminates contiguous 3D regions and strengthens regularization.

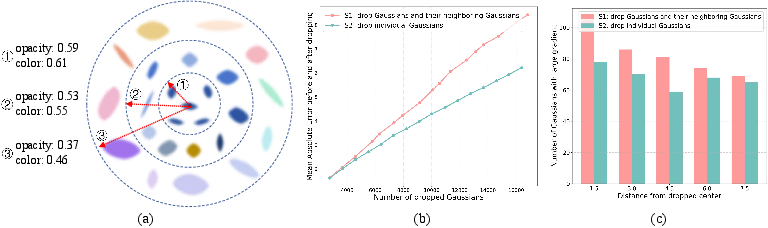

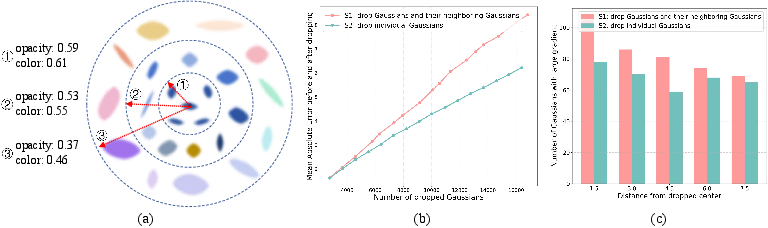

Analysis of Spatial Redundancy and SH Overfitting

A central finding of this work is the spatial autocorrelation of opacity and color among adjacent Gaussians, measured with Moran's I. The pilot study shows that both attributes are highly similar when Gaussians are close together, which indicates redundant local information. Dropping isolated Gaussians therefore often changes the rendered appearance only trivially, because their neighbors compensate. In contrast, dropping whole clusters of Gaussians creates substantial information voids, which produce stronger gradients during optimization and push the model to encode more global information. The pilot study quantifies this compensation effect and uses it to motivate cluster-level dropout as a stronger regularizer.

Figure 2: Spatial autocorrelation reveals local redundancy; grouped dropout induces larger rendering change and stronger gradients than independent dropout.
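
As a rough illustration of the autocorrelation measurement described above, the sketch below computes Moran's I for a per-Gaussian attribute using binary k-nearest-neighbor weights. The weighting scheme, the value of `k`, and the synthetic data are assumptions made for the example; the summary does not state how the paper builds its spatial weights. It is written in plain Python to stay dependency-free, whereas a real pipeline would operate on GPU tensors.

```python
import math
import random

def k_nearest(points, k):
    """Brute-force k nearest neighbours (excluding self) for each point."""
    neighbors = []
    for i, p in enumerate(points):
        dists = sorted(
            (math.dist(p, q), j) for j, q in enumerate(points) if j != i
        )
        neighbors.append([j for _, j in dists[:k]])
    return neighbors

def morans_i(values, neighbors):
    """Moran's I with binary weights: w_ij = 1 if j is one of i's neighbours."""
    n = len(values)
    mean = sum(values) / n
    z = [v - mean for v in values]
    cross = sum(z[i] * z[j] for i in range(n) for j in neighbors[i])
    total_weight = sum(len(nb) for nb in neighbors)
    return (n / total_weight) * cross / sum(zi * zi for zi in z)

# Synthetic stand-in for Gaussian centres (not data from the paper).
rng = random.Random(0)
centres = [(rng.random(), rng.random(), rng.random()) for _ in range(300)]
nbrs = k_nearest(centres, k=8)

smooth_attr = [c[0] for c in centres]         # varies smoothly in space
noise_attr = [rng.random() for _ in centres]  # spatially uncorrelated

print(morans_i(smooth_attr, nbrs))  # high: neighbours carry similar values
print(morans_i(noise_attr, nbrs))   # near zero: no spatial structure
```

A value near 1 means that neighboring Gaussians carry nearly the same attribute, which is the kind of local redundancy that lets neighbors compensate for a single dropped Gaussian.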

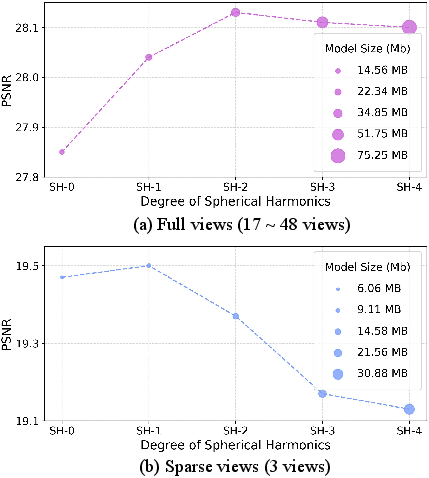

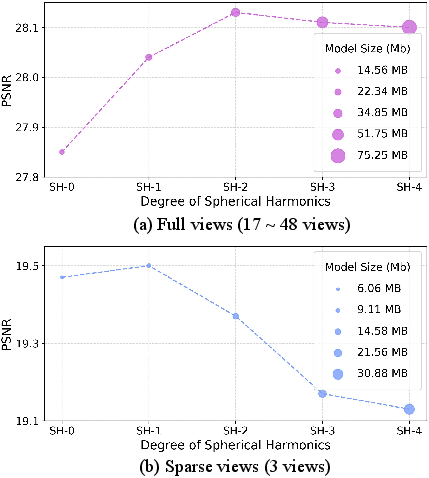

The study also finds that high-degree SH coefficients exacerbate overfitting. Under sparse views, raising the SH degree increases model size while lowering rendering quality, because the additional coefficients overfit to fine-grained color variation in the few available training views. The result is degraded performance together with a parameter count larger than the scene requires.

Figure 3: High-degree SH terms improve performance under dense views, but under sparse conditions they induce overfitting and inflate model size.

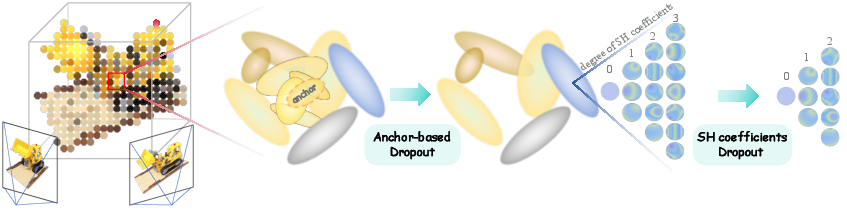

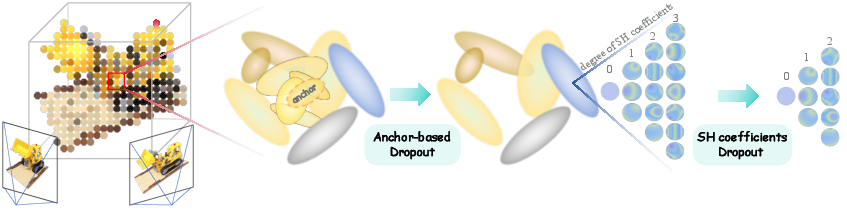

Anchor-Based Dropout and SH Dropout Methodology

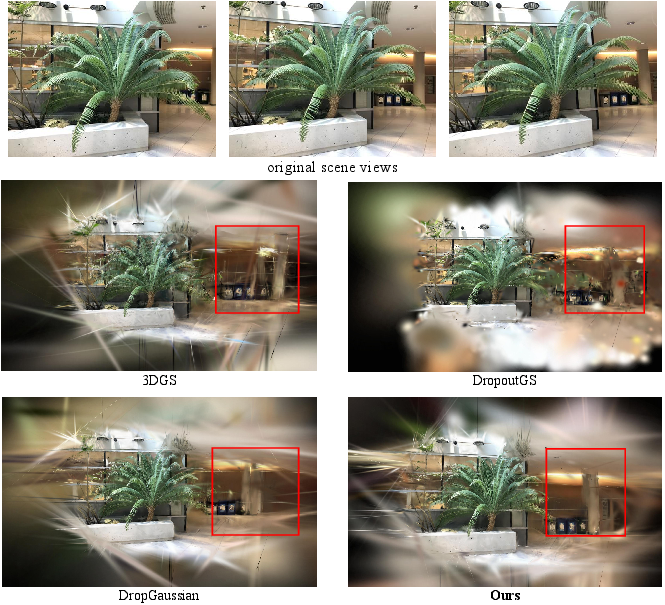

To counteract spatial redundancy and SH-induced overfitting, DropAnSH-GS introduces an anchor-based dropout mechanism. A subset of anchor Gaussians is selected randomly, and their spatial neighbors (identified via nearest-neighbor search) are simultaneously nullified. This structured dropout eliminates entire local clusters, actively disrupting spatial coherence and preventing local compensation. The impacted region receives heightened gradients, encouraging the model to encode broader context for recovery.
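
The anchor-and-neighbors selection described above can be sketched as follows. This is a minimal, dependency-free illustration, not the authors' implementation: the names `anchor_ratio` and `k` are hypothetical, the neighbor search is brute force rather than GPU-accelerated, and dropping is modelled as zeroing opacity, following how the earlier dropout methods are described.

```python
import math
import random

def anchor_dropout_mask(centres, anchor_ratio, k, rng):
    """Return a keep-mask (1.0 = keep, 0.0 = dropped) for one training step.

    anchor_ratio and k are illustrative hyperparameter names, not the
    paper's notation.
    """
    n = len(centres)
    num_anchors = max(1, round(anchor_ratio * n))
    anchors = rng.sample(range(n), num_anchors)

    keep = [1.0] * n
    for a in anchors:
        # Brute-force k nearest neighbours of the anchor (excluding itself).
        by_dist = sorted(
            (j for j in range(n) if j != a),
            key=lambda j: math.dist(centres[a], centres[j]),
        )
        keep[a] = 0.0
        for j in by_dist[:k]:
            keep[j] = 0.0
    return keep

# Synthetic Gaussian centres and opacities (not data from the paper).
rng = random.Random(0)
centres = [(rng.random(), rng.random(), rng.random()) for _ in range(200)]
opacity = [rng.random() for _ in centres]

keep = anchor_dropout_mask(centres, anchor_ratio=0.02, k=5, rng=rng)
# Training-time opacities: dropped clusters contribute nothing to rendering.
train_opacity = [o * m for o, m in zip(opacity, keep)]
print(keep.count(0.0), "of", len(keep), "Gaussians dropped this step")
```

Because every anchor removes its whole neighborhood, the mask zeroes contiguous regions rather than scattered points, which is what prevents nearby Gaussians from covering for the dropped ones.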

Concurrently, SH Dropout is applied: high-degree SH coefficients are randomly dropped during training, enforcing more concentrated appearance encoding in low-degree SH. The degree of SH retained increases over iterations to allow incremental detail acquisition. Notably, post-hoc truncation of SH coefficients is possible without retraining, yielding significant model compression and controlled trade-off between compactness and rendering accuracy.
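
A possible shape for SH Dropout and post-hoc truncation is sketched below. The summary does not give the exact sampling rule or the schedule by which the retained degree grows, so `sample_kept_degree`, its linear schedule, and `drop_prob` are assumptions made purely for illustration. The only fixed fact used is that SH degrees 0 through d span (d + 1)^2 basis functions per color channel.

```python
import random

MAX_DEGREE = 3  # common 3DGS setting: 16 coefficients per colour channel

def coeffs_up_to(degree):
    """Number of SH basis functions for degrees 0..degree: (degree + 1)^2."""
    return (degree + 1) ** 2

def sample_kept_degree(step, total_steps, drop_prob, rng):
    """Pick the highest SH degree kept for this training step.

    Hypothetical schedule: the allowed degree grows linearly with training
    progress, and with probability drop_prob a lower degree is sampled.
    """
    allowed = min(MAX_DEGREE, int((MAX_DEGREE + 1) * step / total_steps))
    if allowed > 0 and rng.random() < drop_prob:
        return rng.randrange(allowed)  # drop everything above a lower degree
    return allowed

def apply_sh_mask(sh, kept_degree):
    """Zero every coefficient above kept_degree (sh: list of RGB triples)."""
    n_keep = coeffs_up_to(kept_degree)
    return [c if i < n_keep else (0.0, 0.0, 0.0) for i, c in enumerate(sh)]

def truncate_sh(sh, degree):
    """Post-training truncation: discard the higher-degree terms entirely."""
    return sh[:coeffs_up_to(degree)]

rng = random.Random(0)
# Placeholder coefficients for one Gaussian (not values from the paper).
sh = [(0.1 * (i + 1),) * 3 for i in range(coeffs_up_to(MAX_DEGREE))]

kept = sample_kept_degree(step=5000, total_steps=10000, drop_prob=0.2, rng=rng)
masked = apply_sh_mask(sh, kept)  # used for this training step only
compact = truncate_sh(sh, 0)      # stored model keeps only the DC term
print(kept, len(masked), len(compact))
```

Masking keeps the tensor shape fixed during training, while truncation actually removes storage afterwards. Truncation is only safe if training has already pushed most of the appearance into the low-degree terms, which is the stated purpose of SH Dropout.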

Figure 4: DropAnSH-GS randomly drops anchor Gaussians and their neighbors, and applies SH Dropout; this is enforced during training to disrupt redundancy and encourage global context learning.

Experimental Evaluation and Numerical Outcomes

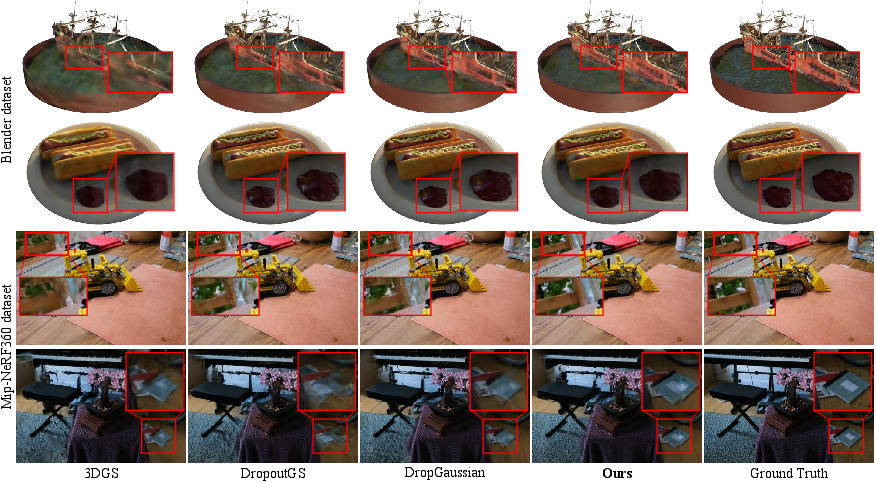

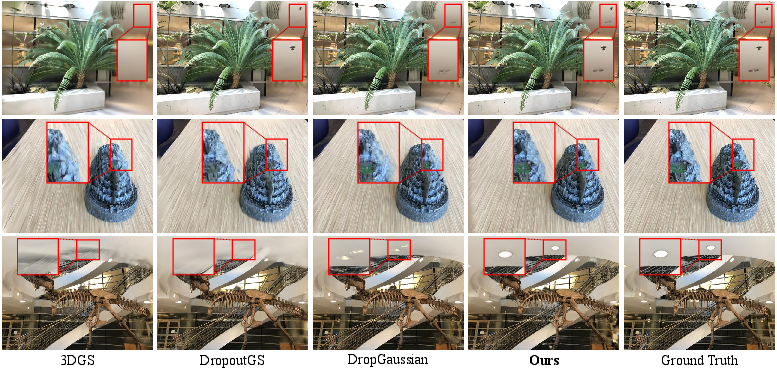

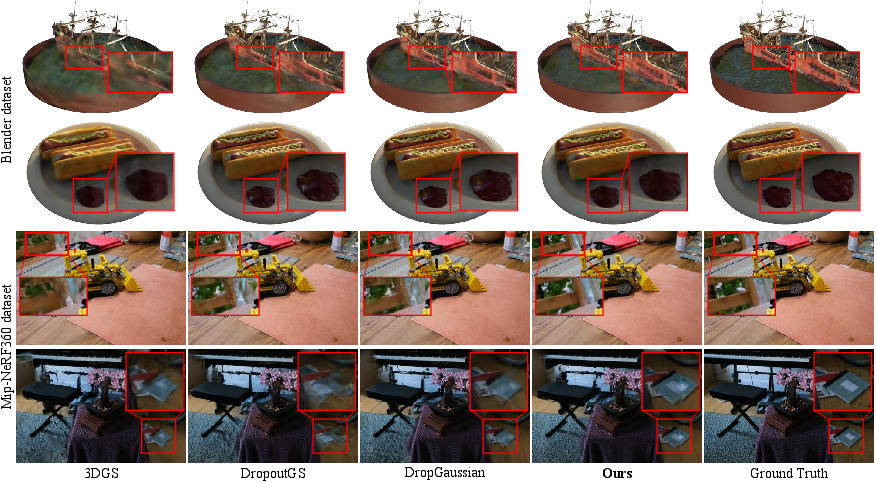

Experiments are conducted on the LLFF, MipNeRF-360, and Blender datasets in extremely sparse-view settings. On LLFF with 3 views, DropAnSH-GS reports PSNR = 20.68, SSIM = 0.724, and LPIPS = 0.194, which the paper presents as substantial improvements over state-of-the-art sparse-view baselines (e.g., DropGaussian, CoR-GS). The advantage persists as the number of input views increases and holds across both real and synthetic datasets.

DropAnSH-GS enables post-training SH truncation for model compression. On MipNeRF-360 (12 views), the model with only zeroth-degree SH (Ours-SH_0) outperforms vanilla 3DGS, while reducing size from 143.4 MB to 33.8 MB; progressive SH degree retention yields an adjustable balance between accuracy and size.
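
The size reduction from SH truncation can be sanity-checked with a back-of-the-envelope calculation. The sketch below assumes a common 3DGS per-Gaussian layout (position, scale, rotation quaternion, opacity, and RGB SH coefficients, all stored as floats), an unchanged Gaussian count, and that the 143.4 MB figure corresponds to a degree-3 model. None of these assumptions is confirmed by the summary.

```python
def floats_per_gaussian(sh_degree):
    """Float count per Gaussian under an assumed 3DGS layout:
    3 position + 3 scale + 4 rotation + 1 opacity + 3 * (degree + 1)^2 SH."""
    return 3 + 3 + 4 + 1 + 3 * (sh_degree + 1) ** 2

full_size_mb = 143.4  # size quoted above for MipNeRF-360 (12 views)
full = floats_per_gaussian(3)

for degree in range(4):
    ratio = floats_per_gaussian(degree) / full
    print(f"SH degree {degree}: {floats_per_gaussian(degree)} floats per "
          f"Gaussian, ~{full_size_mb * ratio:.1f} MB")
```

Under these assumptions a degree-0 model keeps 14 of 59 floats per Gaussian, giving an estimate of about 34 MB, which is in the same range as the 33.8 MB quoted above. This is only a consistency check, since the actual storage format and Gaussian counts may differ.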

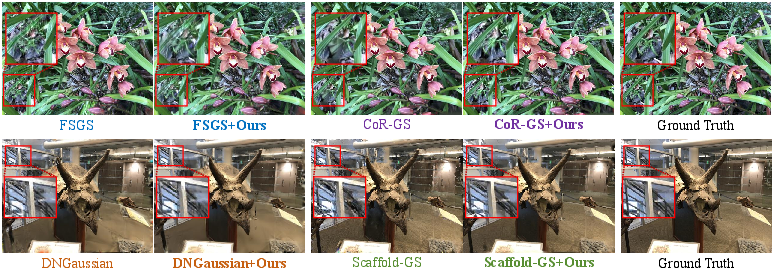

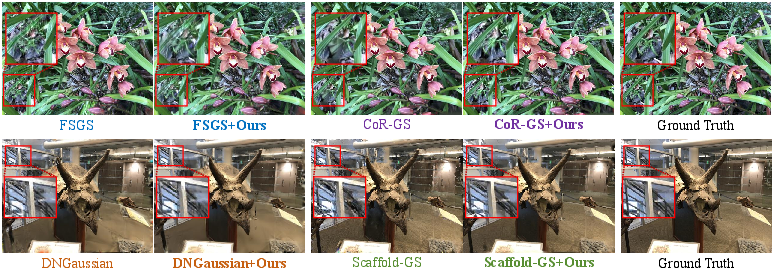

DropAnSH-GS is shown to be compatible with diverse 3DGS variants, including FSGS, CoR-GS, DNGaussian, and Scaffold-GS, consistently boosting their sparse-view performance.

Figure 5: Qualitative comparison on LLFF (3 views) illustrates that DropAnSH-GS yields structurally faithful and artifact-free reconstructions compared to baselines.

Figure 6: Qualitative comparisons on Blender and MipNeRF-360 reveal superior object boundaries and background fidelity for DropAnSH-GS.

Figure 7: DropAnSH-GS integration with different 3DGS variants consistently improves performance on LLFF (3 views).

Figure 8: Visualization of entire reconstructed scenes demonstrates that DropAnSH-GS achieves more natural, coherent, and complete structures under sparse-view conditions.

Ablation and Sensitivity

Ablation studies show that Anchor-based Dropout and SH Dropout are complementary: each component contributes to regularization on its own, and combining them gives the best performance. Sensitivity analyses over the anchor ratio, the neighbor count, and the SH dropout probability indicate that the method is robust to these hyperparameters, and the paper identifies a well-performing range for each.

Computational Efficiency and Practical Implications

DropAnSH-GS adds little computational overhead (less than a 2.8% increase in training time over vanilla 3DGS), because the anchor-neighbor search is implemented with GPU-accelerated nearest-neighbor algorithms. Since both dropout schemes are applied only during training, they leave the rendering path unchanged, which makes the approach straightforward to integrate into existing 3DGS pipelines.

The anchor-based mechanism fundamentally compels global context learning and structural representations, while SH dropout regularizes color encoding and enables compression. The implications are broad: improved robustness of sparse-view rendering, enhanced adaptability for real-time applications, and scalable model size for deployment in resource-constrained environments.

Theoretical and Future Directions

Identifying and mitigating the compensation effect and SH overfitting suggests new regularization strategies for explicit scene representations. Future work could explore adaptive or importance-driven anchor selection, more advanced neighbor selection metrics (incorporating intrinsic Gaussian attributes or view dependency), and cross-modal dropout to further improve generalization in under-constrained view synthesis.

Conclusion

DropAnSH-GS introduces anchor-based structural dropout and SH attribute dropout for 3D Gaussian Splatting under sparse-view supervision, addressing both spatial compensation and appearance overfitting. The method achieves significant improvements in accuracy, robustness, and model size across benchmarks, and is compatible with existing 3DGS variants. The proposed strategies lay the groundwork for scalable, high-fidelity novel view synthesis from limited observations, with substantial theoretical and practical implications for graphics and vision applications.

(2602.20933)