- The paper demonstrates that transformation-based PPFR systems, while visually obfuscating, still leak identity information through robust extraction methods.

- FaceLinkGen introduces a two-phase attack combining identity extraction and face regeneration, achieving over 97% success in recovering faces.

- Findings reveal that current pixel-level evaluation metrics are insufficient, urging a shift towards identity-centric privacy measures including soft biometric leakage analysis.

FaceLinkGen: Identity Leakage in Privacy-Preserving Face Recognition

Introduction and Problem Statement

The paper "FaceLinkGen: Rethinking Identity Leakage in Privacy-Preserving Face Recognition with Identity Extraction" (2602.02914) critically examines the security assumptions underlying transformation-based privacy-preserving face recognition (PPFR) systems. These systems are designed to enable user verification without exposing raw facial data by releasing transformed templates instead of original images. The prevailing evaluation paradigm in the field centers on resistance to pixel-level reconstruction, quantified by metrics such as PSNR and SSIM. However, this work demonstrates that such visual distortion is neither necessary nor sufficient for preventing identity leakage, as identity-relevant information can often be extracted even when pixel-wise similarity with the original is low.

The authors introduce FaceLinkGen, an attack methodology targeting identity information extraction for both template linkage and face regeneration. Through empirical results across several state-of-the-art PPFR methods, the paper reveals that current evaluation strategies significantly underestimate the risk of identity leakage and calls for a paradigm shift towards identity-centric evaluation and protection standards.

Inadequacy of Pixel-Level Metrics

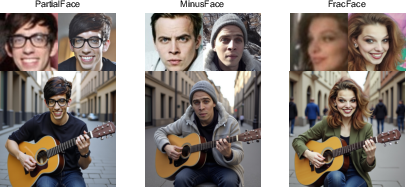

The core argument is that pixel-level metrics (such as PSNR and SSIM) fail to capture the identity information retained in protected templates. Two images can have high pixel similarity but represent different identities, or conversely, images with low pixel similarity can actually share the same identity—a distinction especially relevant for face biometrics, where identity is not a direct function of raw pixel composition. The authors empirically show that recent PPFR models (PartialFace, MinusFace, FracFace) fail to mask identity under identity-centric attacks, despite achieving strong visual obfuscation.

Figure 1: Traditional metrics like SSIM and PSNR do not reliably correspond to the level of identity correlation between images, and pixel- or StyleGAN-guided reconstructions can yield visually plausible but identity-inconsistent results.
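A toy sketch can make the pixel-versus-identity gap concrete. The code computes PSNR for two edits of the same synthetic image. One is a 3-pixel circular shift, which leaves what is depicted unchanged. The other is a uniform brightness increase. The random-texture image and the helper names (`psnr`, `shift_right`) are illustrative assumptions, not taken from the paper. Random texture exaggerates the effect compared with a smooth face crop, but the underlying point is the same: PSNR measures pixel alignment, not content.

```python
import math
import random


def psnr(a, b, max_val=255.0):
    """Peak signal-to-noise ratio (dB) between two equal-sized grayscale images given as lists of rows."""
    n = sum(len(row) for row in a)
    mse = sum((pa - pb) ** 2 for ra, rb in zip(a, b) for pa, pb in zip(ra, rb)) / n
    if mse == 0:
        return float("inf")
    return 10 * math.log10(max_val ** 2 / mse)


def shift_right(img, k):
    """Circularly shift every row by k pixels: identical content, different pixel positions."""
    return [row[-k:] + row[:-k] for row in img]


random.seed(0)
# Toy 32x32 grayscale "image"; random texture stands in for a face crop (assumption).
img = [[random.randint(0, 255) for _ in range(32)] for _ in range(32)]

shifted = shift_right(img, 3)                                   # same content, misaligned by 3 px
brightened = [[min(255, p + 10) for p in row] for row in img]   # small uniform intensity change

print(f"PSNR(shifted)    = {psnr(img, shifted):.1f} dB")
print(f"PSNR(brightened) = {psnr(img, brightened):.1f} dB")
```

The shifted copy scores far below the brightened one, even though a face recognizer would typically treat both as the same person.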

Threat Model

The attack model assumes an adversary with oracle access to the template conversion function, meaning the adversary can submit face images and observe the resulting protected templates. This matches realistic scenarios: malicious or curious service providers, who possess full knowledge of their own systems, and external attackers who may obtain templates through leaks or eavesdropping. The adversary is not presumed to know the internal architecture or secrets of the PPFR transformation. The attack therefore requires weaker assumptions than much of prior work, which strengthens its implications.

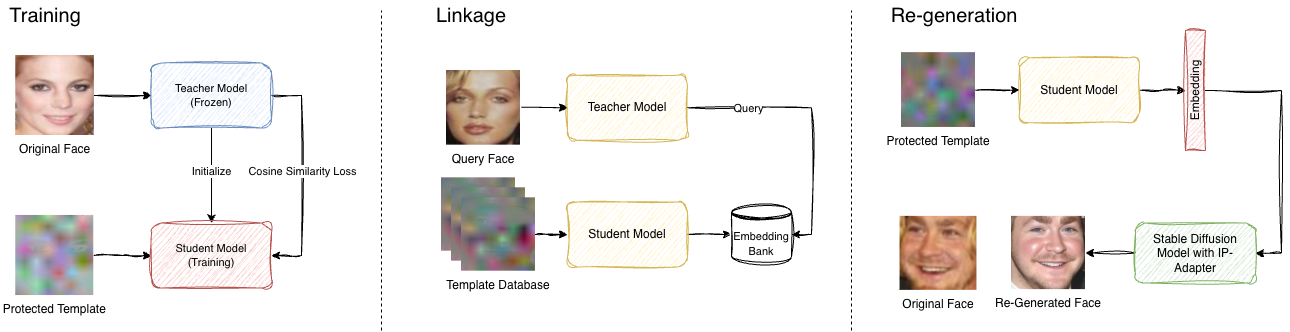

FaceLinkGen Attack Methodology

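The FaceLinkGen pipeline consists of two phases: identity extraction, followed by linkage or regeneration attacks. The core insight is that PPFR systems must preserve some identity-discriminative information for verification, so it is possible to train a student network via distillation to map protected templates back into a standard face-embedding space (e.g., ArcFace). This identity representation can then be used for:

- Linkage: matching protected templates to original images, or to templates produced by other PPFR methods, by comparing recovered embeddings.

- Regeneration: feeding recovered embeddings to an identity-conditioned face generator (Arc2Face in the experiments) to synthesize a face of the same person.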

The method is agnostic to the specific template structure or recognition backbone, relying only on the premise that templates must maintain features discriminative for identity—a standard requirement for PPFR utility.
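The identity-extraction step can be sketched with a toy stand-in. In this sketch, faces are random 8-dimensional vectors. The hypothetical `ppfr_template` function plays the black-box template oracle from the threat model: a secret mixing matrix plus noise that the adversary may call but cannot inspect. `teacher_embed` stands in for a public recognizer such as ArcFace. The student is a linear map fitted by ridge regression, not the deep network the paper distills. All names, sizes, and the linear model are assumptions made for illustration only.

```python
import math
import random

DIM = 8                     # toy embedding size (ArcFace uses 512-d embeddings)
rng = random.Random(42)


def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


# Hypothetical PPFR transform: a secret mixing matrix plus noise.
# The adversary may call ppfr_template() (oracle access) but never reads SECRET.
SECRET = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(DIM)]


def ppfr_template(face):
    return [t + rng.gauss(0, 0.01) for t in matvec(SECRET, face)]


def teacher_embed(face):
    # Stand-in for a public recognizer such as ArcFace; toy faces are their own embeddings.
    return list(face)


def solve(a, b):
    """Solve a @ x = b (a square, b a matrix) by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    m = [ra[:] + rb[:] for ra, rb in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        p = m[col][col]
        m[col] = [x / p for x in m[col]]
        for r in range(n):
            if r != col:
                f = m[r][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [row[n:] for row in m]


def fit_student(templates, targets, ridge=1e-3):
    """Linear 'student' W minimising ||T W - E||^2 + ridge * ||W||^2 via the normal equations."""
    d, k = len(templates[0]), len(targets[0])
    xtx = [[sum(t[i] * t[j] for t in templates) + (ridge if i == j else 0.0) for j in range(d)]
           for i in range(d)]
    xty = [[sum(t[i] * e[j] for t, e in zip(templates, targets)) for j in range(k)] for i in range(d)]
    w = solve(xtx, xty)     # d x k weight matrix
    return lambda t: [sum(t[i] * w[i][j] for i in range(d)) for j in range(k)]


# Identity extraction: query the oracle on faces the adversary already owns, then distill.
train_faces = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(200)]
student = fit_student([ppfr_template(f) for f in train_faces],
                      [teacher_embed(f) for f in train_faces])

# Victims: the adversary observes only their protected templates.
victims = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(50)]
sims = [cosine(student(ppfr_template(v)), teacher_embed(v)) for v in victims]
print(f"mean cosine(recovered, true embedding) = {sum(sims) / len(sims):.3f}")
```

The adversary never reads `SECRET`. It only needs (template, embedding) pairs that it can generate itself, which mirrors the premise that templates must stay identity-discriminative to be useful.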

Experimental Findings

The attack is evaluated against three representative, recent PPFR methods: PartialFace [ICCV 2023], MinusFace [CVPR 2024], and FracFace [NeurIPS 2025]. Distillation from protected templates to identity embeddings is shown to be efficient, requiring minimal computational resources.

Linkage Attack Results

The recovered embeddings match templates to original images (and templates generated by different PPFR methods to each other) with high accuracy: top-1 closed-set linkage rates exceed 80%, and 1-to-1 verification rates approach those of the original ArcFace on standard datasets (CASIA-WebFace, LFW). Performance remains robust even under cross-domain and distribution-shift scenarios.
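The two linkage metrics can be computed from embeddings alone. In this sketch, top-1 closed-set linkage counts a probe as a hit when its most similar gallery entry is its true source. 1-to-1 verification accepts a pair when cosine similarity clears a threshold. The toy embeddings and the `threshold=0.5` value are assumptions; real evaluations usually calibrate the threshold to a target false-accept rate.

```python
import math
import random


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def top1_linkage_rate(probes, gallery):
    """Closed-set linkage: probe i counts as a hit if its most similar gallery entry is gallery[i]."""
    hits = 0
    for i, p in enumerate(probes):
        best = max(range(len(gallery)), key=lambda j: cosine(p, gallery[j]))
        hits += best == i
    return hits / len(probes)


def verification_rate(pairs, same_identity, threshold):
    """1:1 verification accuracy: a pair is accepted when its cosine similarity >= threshold."""
    correct = sum((cosine(a, b) >= threshold) == same
                  for (a, b), same in zip(pairs, same_identity))
    return correct / len(pairs)


rng = random.Random(7)
gallery = [[rng.gauss(0, 1) for _ in range(16)] for _ in range(100)]   # embeddings of original images
probes = [[x + rng.gauss(0, 0.3) for x in g] for g in gallery]         # embeddings recovered from templates

# Genuine pairs (probe i, its own source) followed by impostor pairs (probe i, someone else).
pairs = [(probes[i], gallery[i]) for i in range(100)] + \
        [(probes[i], gallery[(i + 1) % 100]) for i in range(100)]
labels = [True] * 100 + [False] * 100

print("top-1 linkage:", top1_linkage_rate(probes, gallery))
print("verification accuracy:", verification_rate(pairs, labels, threshold=0.5))
```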

Regeneration Attack Results

For the regeneration attack, the recovered embeddings are used to generate faces with Arc2Face. Matching these generated faces against their originals with commercial face-verification APIs (Face++, Amazon) succeeds in over 97% of cases on the first attempt and in 98–100% of cases across multiple stochastic samples, even under strict verification thresholds. Notably, the attack remains effective in a constrained-knowledge regime, where the adversary does not know the PPFR transformation, by exploiting the typical frequency-domain obfuscation structure.
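The first-attempt and multi-sample success figures differ only in how stochastic regenerations are counted. A minimal sketch follows, assuming each victim has several regenerated faces, each scored by an external verifier against the victim's real photo. The function name and the hand-made scores are hypothetical and are not data from the paper.

```python
from typing import List, Tuple


def regeneration_success(scores: List[List[float]], threshold: float) -> Tuple[float, float]:
    """scores[i][k]: verifier score for the k-th stochastic regeneration of victim i
    against that victim's real photo. Returns (first-attempt rate, any-of-k rate)."""
    first = sum(s[0] >= threshold for s in scores) / len(scores)
    any_k = sum(any(x >= threshold for x in s) for s in scores) / len(scores)
    return first, any_k


# Hand-made toy scores for 4 victims x 3 samples (not data from the paper).
toy_scores = [
    [0.90, 0.80, 0.95],
    [0.60, 0.85, 0.70],
    [0.30, 0.40, 0.20],
    [0.99, 0.97, 0.90],
]
print(regeneration_success(toy_scores, threshold=0.8))  # (0.5, 0.75)
```

Raising `threshold` models a stricter verification setting. The any-of-k rate can never fall below the first-attempt rate.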

Figure 3: Regeneration attack examples: Original (left) and face regenerated by FaceLinkGen (right) for each PPFR method. Visual distortion does not prevent identity recovery.

Figure 4: Controlled scenario: the regenerated identity can be used as a prompt for models like DreamO, enabling synthesis of semantically plausible images (bottom) that are verified by commercial APIs, even with degraded registration images.

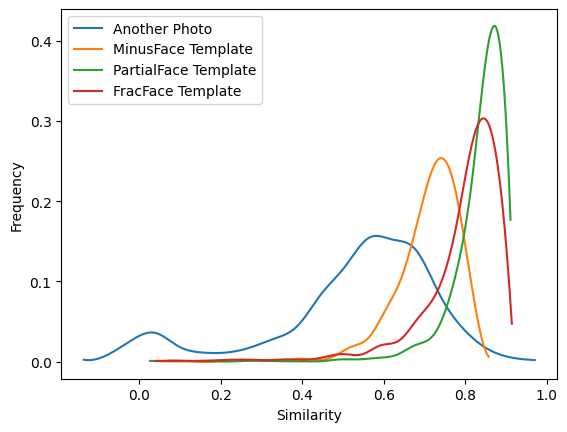

Similarity Analysis

Cosine similarities in embedding space further corroborate that protected templates retain more instance-specific identity information than alternate images of the same subject do, confirming linkage at the level of individual images rather than merely individual people.

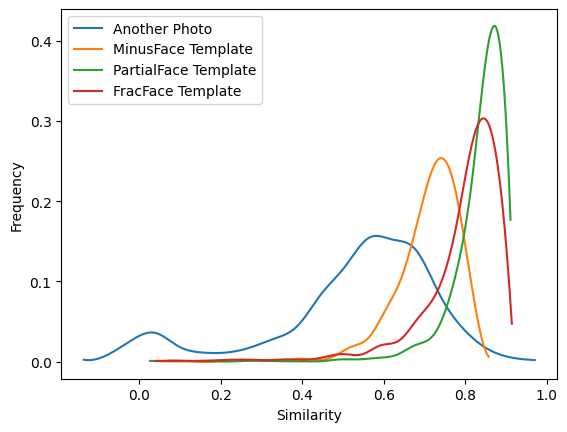

Figure 5: Distribution of similarity scores indicates preserved identity linkage between templates and corresponding original images.
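The similarity analysis compares three score populations for each recovered embedding. These are its own source photo, another photo of the same subject, and an unrelated person. The toy generator below builds in the reported ordering purely to show how the populations are formed. The dimensionality, noise levels, and helper names are arbitrary assumptions.

```python
import math
import random
from statistics import mean


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


rng = random.Random(3)
DIM = 32


def jitter(vec, sd):
    """Add isotropic Gaussian noise with standard deviation sd."""
    return [x + rng.gauss(0, sd) for x in vec]


own, same_subject, other_subject = [], [], []
for _ in range(200):
    subject = [rng.gauss(0, 1) for _ in range(DIM)]    # hypothetical identity centre
    image_a = jitter(subject, 0.6)                     # photo whose template is released
    image_b = jitter(subject, 0.6)                     # a different photo of the same person
    stranger = [rng.gauss(0, 1) for _ in range(DIM)]   # an unrelated person
    recovered = jitter(image_a, 0.3)                   # embedding recovered from image_a's template
    own.append(cosine(recovered, image_a))
    same_subject.append(cosine(recovered, image_b))
    other_subject.append(cosine(recovered, stranger))

for name, xs in [("own source", own), ("same subject", same_subject), ("other subject", other_subject)]:
    print(f"{name:>13}: mean cosine {mean(xs):.3f}")
```

Plotting the three lists as histograms gives a figure of the same kind as Figure 5.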

Soft Biometric Leakage

In addition to hard identity recovery, FaceLinkGen enables leakage of sensitive soft-biometrics (age, gender, race) with notable accuracy (e.g., gender accuracy > 82%, age MAE ~6–7 years), facilitating unauthorized profiling.
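Soft-biometric leakage is usually measured by training simple probes on the recovered embeddings. This sketch uses a nearest-centroid probe for gender and k-nearest-neighbour regression for age. They are scored by accuracy and by mean absolute error (MAE) against a predict-the-mean baseline. The toy embedding generator, the choice of probes, and `k=15` are assumptions; the paper's own attribute predictors may differ.

```python
import random
from statistics import mean

rng = random.Random(11)
DIM = 8


def make_person():
    """Toy recovered embedding where dim 0 weakly encodes gender and dim 1 encodes age."""
    gender = rng.choice([0, 1])
    age = rng.uniform(18, 70)
    emb = [rng.gauss(0, 1) for _ in range(DIM)]
    emb[0] += 1.5 if gender else -1.5
    emb[1] += (age - 44) / 5
    return emb, gender, age


def dist2(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))


def fit_centroids(embs, labels):
    """Nearest-centroid probe: one mean embedding per class label."""
    return {lab: [mean(col) for col in zip(*[e for e, l in zip(embs, labels) if l == lab])]
            for lab in set(labels)}


def knn_age(train_embs, train_ages, emb, k=15):
    """k-nearest-neighbour age regressor in embedding space."""
    nearest = sorted(range(len(train_embs)), key=lambda i: dist2(train_embs[i], emb))[:k]
    return mean(train_ages[i] for i in nearest)


train_e, train_g, train_a = zip(*[make_person() for _ in range(400)])
test_e, test_g, test_a = zip(*[make_person() for _ in range(100)])

centroids = fit_centroids(train_e, train_g)
pred_g = [min(centroids, key=lambda lab: dist2(centroids[lab], e)) for e in test_e]
gender_acc = sum(p == g for p, g in zip(pred_g, test_g)) / len(test_g)

pred_a = [knn_age(train_e, train_a, e) for e in test_e]
age_mae = mean(abs(p - a) for p, a in zip(pred_a, test_a))
avg_age = mean(train_a)
baseline_mae = mean(abs(avg_age - a) for a in test_a)   # always guess the training mean

print(f"gender accuracy {gender_acc:.2f}, age MAE {age_mae:.1f} y (mean-age baseline {baseline_mae:.1f} y)")
```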

Implications for Future PPFR Research

The paper asserts the structural inadequacy of current frequency-domain and visual-distortion-centric PPFR mechanisms. Because utility (i.e., recognition) requires the survival of identity-relevant information in the templates, purely obfuscatory transformations are fundamentally limited. Template secrecy cannot be guaranteed as long as recognition rates must remain high.

Potential defenses discussed include cryptographic protection, template randomization with secrets, or stronger formal privacy mechanisms—each of which entails trade-offs in usability or latency. The findings also highlight the need for identity- and attribute-level privacy evaluations, not just pixel-based metrics, and call for adversarial analysis that is grounded in the practical attack surface accessible to both internal and external actors.
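Template randomization with secrets is often realized as a keyed transform, in the spirit of cancelable biometrics. The sketch below is a generic illustration, not a scheme evaluated in the paper. It derives an orthonormal matrix from a per-user key. Under the same key, cosine similarity, and therefore matching accuracy, is preserved exactly. Re-issuing the key yields a template that no longer resembles the old one. Its protection presumably rests entirely on the key staying hidden. An insider who holds the key, or who can query the keyed transform, again faces a fixed mapping of the kind a distillation attack targets. The names `keyed_orthonormal` and `protect` are hypothetical.

```python
import math
import random


def keyed_orthonormal(key: int, dim: int):
    """Orthonormal matrix derived from a user-specific key (Gram-Schmidt on Gaussian rows).
    Note: random.Random is not cryptographic; a real system would use a proper key-derivation function."""
    rng = random.Random(key)
    rows = []
    while len(rows) < dim:
        v = [rng.gauss(0, 1) for _ in range(dim)]
        for r in rows:
            proj = sum(a * b for a, b in zip(v, r))
            v = [a - proj * b for a, b in zip(v, r)]
        norm = math.sqrt(sum(a * a for a in v))
        if norm > 1e-8:
            rows.append([a / norm for a in v])
    return rows


def protect(embedding, key):
    """Hypothetical cancelable template: rotate the embedding with the key's matrix."""
    m = keyed_orthonormal(key, len(embedding))
    return [sum(a * b for a, b in zip(row, embedding)) for row in m]


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


rng = random.Random(5)
enrol = [rng.gauss(0, 1) for _ in range(16)]
probe = [x + rng.gauss(0, 0.2) for x in enrol]          # same person, new photo

raw = cosine(enrol, probe)
same_key = cosine(protect(enrol, 1234), protect(probe, 1234))
cross_key = cosine(protect(enrol, 1234), protect(enrol, 9999))  # same face, two different keys

print(f"raw {raw:.3f} | same key {same_key:.3f} | re-issued key {cross_key:.3f}")
```

Because the transform is an orthonormal rotation, `same_key` equals `raw` up to floating-point error. This illustrates the usability side of the trade-off, while the security side depends on key management.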

Conclusion

This work systematically demonstrates that transformation-based PPFR systems, evaluated only against pixel-level reconstruction, are insufficiently robust to identity extraction attacks such as FaceLinkGen. Even without detailed architectural knowledge, an adversary can recover identity-discriminative features and regenerate faces from protected templates with high fidelity and at low cost, and soft biometric attributes are similarly exposed. These results signal a fundamental limitation of visual-obfuscation defenses. Privacy frameworks should instead protect identity semantics directly at the representational level, rather than relying solely on visual distortion or information loss for “privacy-by-obscurity.” Future research must focus on privacy mechanisms that are provably resistant to both internal and external identity extraction, with rigorous, identity-centric adversarial evaluation as a baseline requirement.