- The paper presents a novel CFG-based framework that leverages formal syntax and semantic constraints to efficiently structure the search space for alpha factor discovery.

- It employs a Tree-Structured Linguistic MDP with RL-guided MCTS and Tree-LSTM encodings to enhance interpretability and accelerate convergence.

- Empirical results demonstrate superior information coefficients, improved Sharpe ratios, and significantly reduced search complexity over traditional methods.

AlphaCFG: Grammar-Guided Symbolic Search for Alpha Factor Discovery

Problem Motivation and Limitations of Existing Alpha Discovery Methods

Formulaic alpha factor discovery is a foundational problem in quantitative finance: it seeks explicit symbolic functions that robustly predict future returns from historical features (prices, volumes, etc.). Previous approaches, ranging from heuristic and expert-driven methods to black-box data-driven models (tree ensembles, deep learning) and genetic programming, trade off interpretability, scalability, and computational cost. Heuristic and expert-driven approaches scale poorly and lose their edge once arbitraged away; data-driven models sacrifice interpretability and are prone to overfitting; formulaic methods, while interpretable, search inefficiently over unstructured or poorly constrained spaces.

More fundamentally, existing formulaic methods neglect formal linguistic constraints: they do not adequately structure the search over mathematical expression spaces, which leads to widespread semantic redundancy and low sample efficiency. Many syntactically distinct expressions are semantically equivalent (for example, a + b and b + a), so without explicit grammatical structure the search wastes computation on duplicates, which degrades learning and slows convergence.

AlphaCFG Framework: Grammar-Based Search Space Design

AlphaCFG approaches alpha discovery from a formal-language perspective, using context-free grammars (CFGs) both to define the syntactic and semantic search space rigorously and to enable efficient traversal, representation, and optimization. The space of all possible expressions is structured into three nested grammar levels:

- α-Syn: Ensures syntactic validity through recursive CFG rules enforcing operator arity and prefix notation, yielding strictly well-formed abstract syntax representations (ASRs).

- α-Sem: Adds semantic restrictions to exclude ill-posed or nonsensical factors, incorporating domain-specific rules for temporal consistency, meaningful window sizing, and operand validity.

- α-Sem-k: Bounds derivation length to cap combinatorial explosion, producing a practical, finite set $L_{\mathrm{sem}}^{\le K}$ amenable to exhaustive yet efficient search and learning.
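The three grammar levels can be illustrated with a small enumerator. This is a minimal sketch under stated assumptions, not the paper's grammar: the symbol sets (`FEATURES`, `UNARY`, `BINARY`, `ROLLING`, `WINDOWS`), the two semantic rules in `is_semantic`, and the use of token count as the length bound are all hypothetical choices made for illustration.

```python
from functools import lru_cache

# Hypothetical symbol sets; the paper's actual operators and features may differ.
FEATURES = ("close", "volume")
UNARY = ("Abs", "Log")
BINARY = ("Add", "Sub")
ROLLING = ("Mean", "Std")   # rolling operators take (expression, window)
WINDOWS = (5, 20)           # restricted window sizes (a semantic-style constraint)

@lru_cache(maxsize=None)
def syn(n):
    """All syntactically valid expressions with exactly n tokens (alpha-Syn analogue)."""
    if n < 1:
        return ()
    if n == 1:
        return tuple((f,) for f in FEATURES)
    out = []
    for op in UNARY:
        out += [(op, e) for e in syn(n - 1)]
    for op in ROLLING:
        out += [(op, e, w) for e in syn(n - 2) for w in WINDOWS]
    for op in BINARY:
        for k in range(1, n - 1):
            out += [(op, a, b) for a in syn(k) for b in syn(n - 1 - k)]
    return tuple(out)

def is_semantic(e):
    """Hypothetical alpha-Sem rules: reject x - x and Abs(Abs(x)), recursively."""
    if len(e) == 1:
        return True
    op, *args = e
    if op == "Sub" and args[0] == args[1]:
        return False
    if op == "Abs" and args[0][0] == "Abs":
        return False
    return all(is_semantic(a) for a in args if isinstance(a, tuple))

def sem_k(K):
    """Alpha-Sem-k analogue: semantically valid expressions with at most K tokens."""
    return [e for n in range(1, K + 1) for e in syn(n) if is_semantic(e)]

def to_prefix(e):
    """Render a nested-tuple expression as a prefix-notation string."""
    return " ".join(to_prefix(t) if isinstance(t, tuple) else str(t) for t in e)
```

With these toy symbol sets, `sem_k(3)` is a finite list that can be scanned exhaustively, and `to_prefix(("Mean", ("close",), 5))` renders one member as the prefix string `Mean close 5`.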

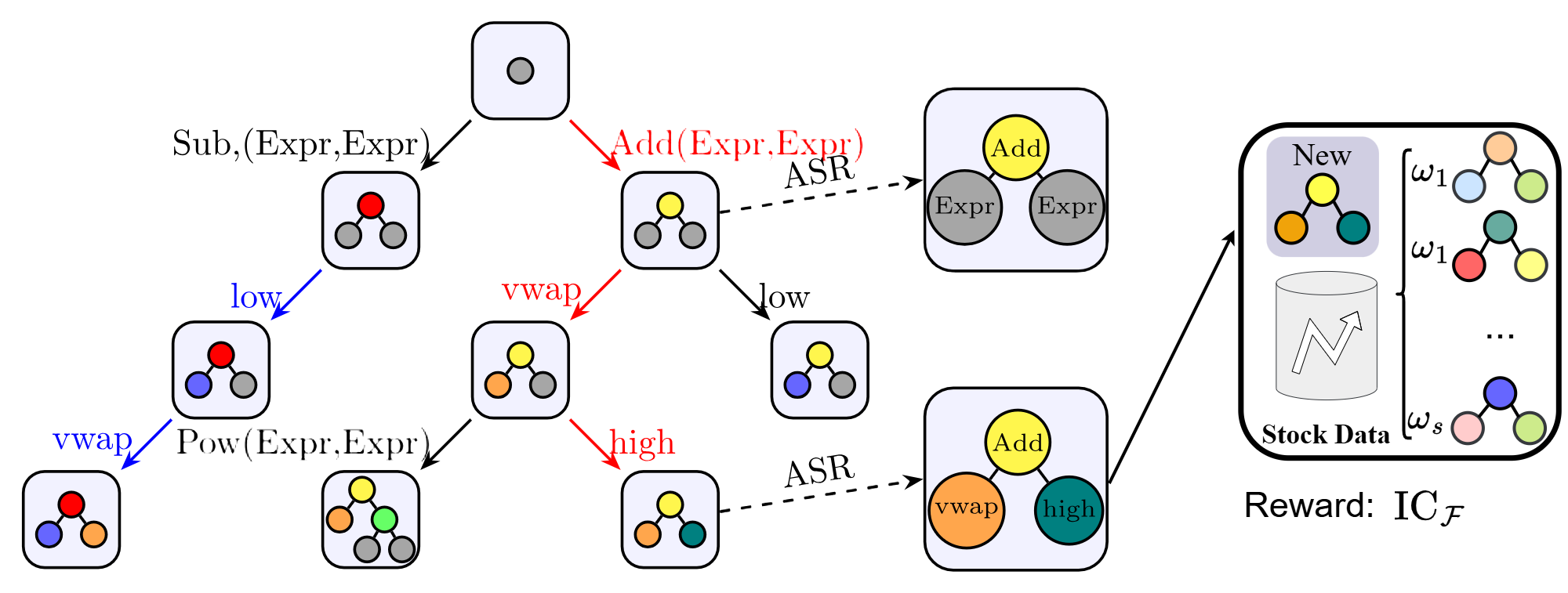

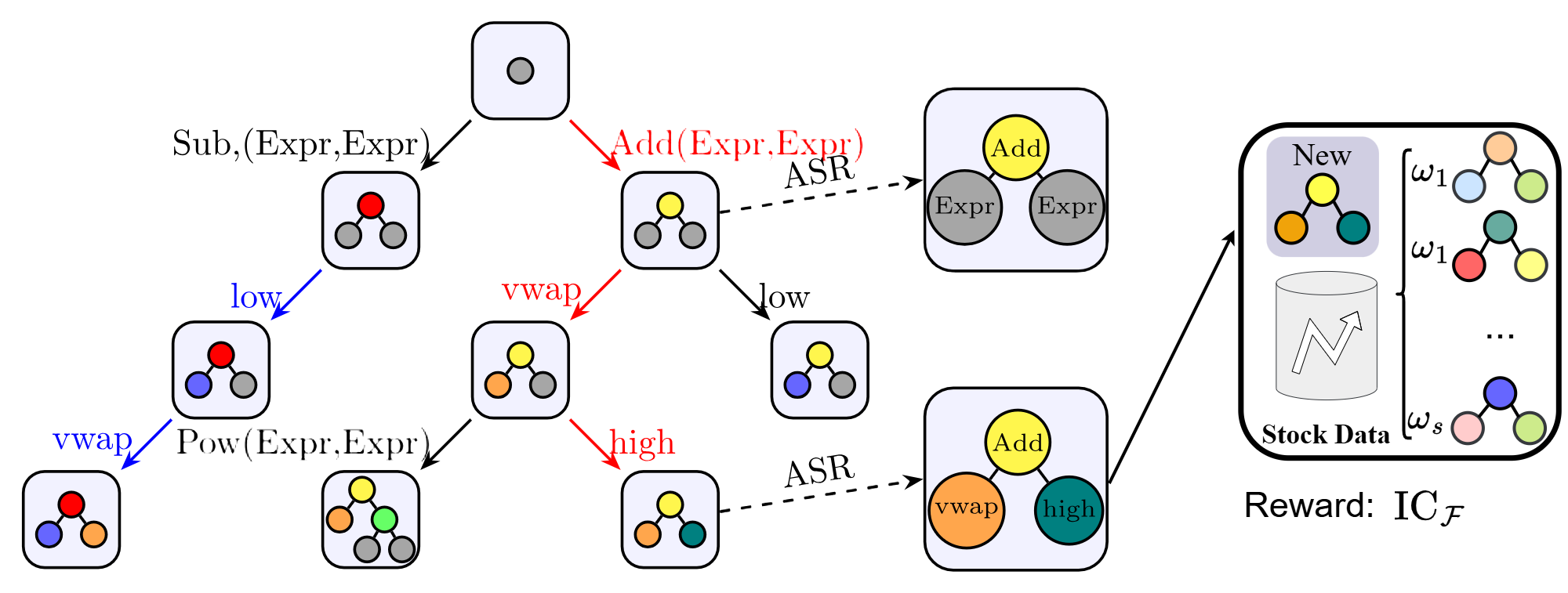

This CFG-based design decomposes the search space into a nested tree (Figure 1), in which each node represents a partial expression, each edge applies a production rule, and leaf nodes correspond to complete, evaluable alpha factors. The tree-structured representation directly supports structural encoding and efficient search.

Figure 1: The tree-structured search space, showing recursive construction of alpha expressions via grammar expansion.

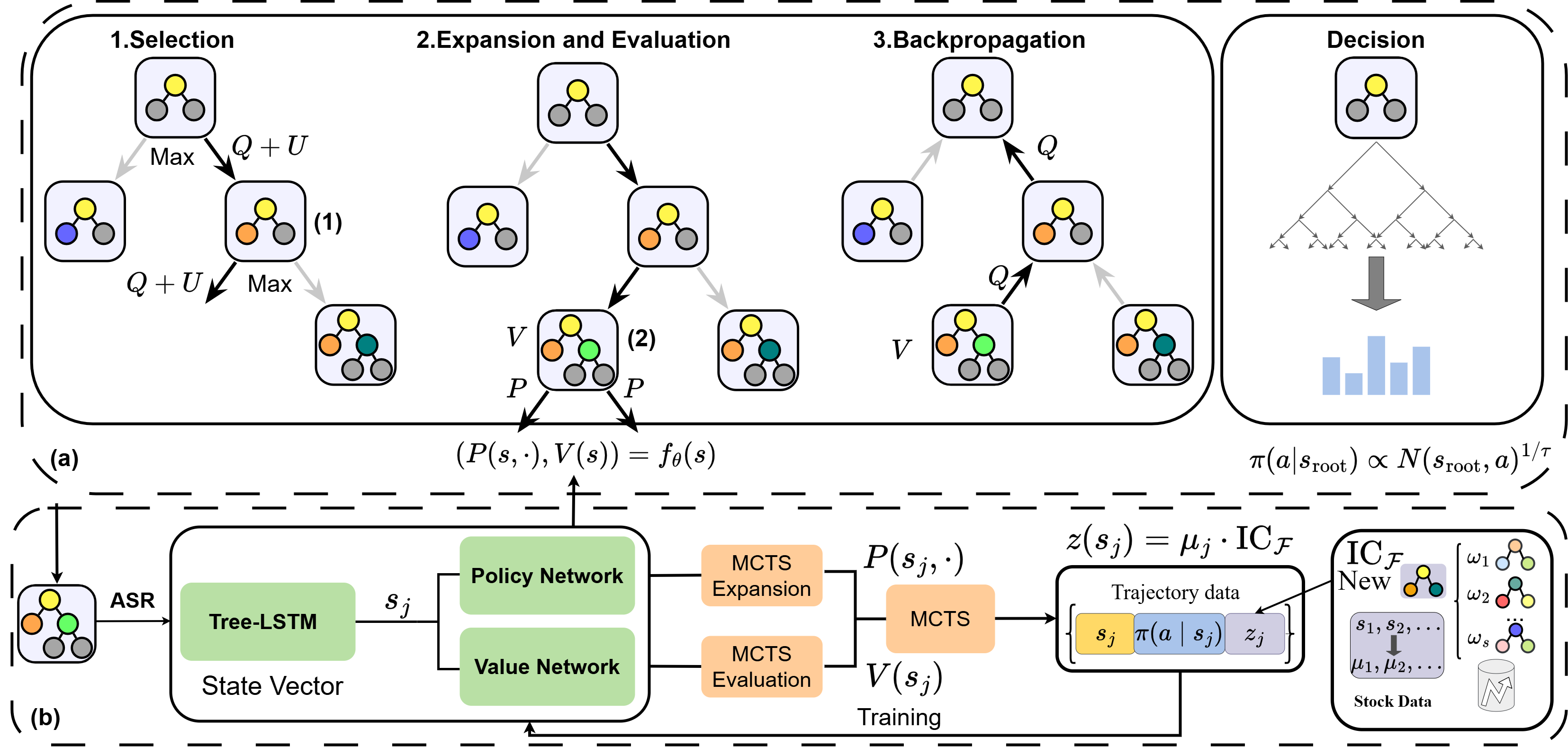

Tree-Structured Linguistic MDP and RL-Guided MCTS

AlphaCFG casts alpha factor discovery as a Tree-Structured Linguistic Markov Decision Process (TSL-MDP), in which states correspond to partial ASRs and actions to grammar production rules. Rewards are sparse and delayed: only a completed expression receives one, given by its empirical information coefficient (IC) evaluated on real market data. This sequential decision formulation turns the combinatorial search problem into a principled RL setting.

To navigate the vast, non-uniform search tree, AlphaCFG integrates grammar-aware Monte Carlo Tree Search (MCTS) with syntax-sensitive neural policy and value estimation.
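The MDP formulation above can be sketched in a few functions. This is an illustrative toy under stated assumptions, not the paper's environment: the symbol table `ARITY`, the `max_len` budget, and the choice of IC as the mean daily cross-sectional Pearson correlation are hypothetical, and actions here are single prefix tokens rather than full production rules.

```python
import numpy as np

# Hypothetical symbol set: name -> arity (0 marks a terminal feature).
ARITY = {"Add": 2, "Sub": 2, "Neg": 1, "close": 0, "volume": 0}

def pending_slots(tokens):
    """Operand slots still unfilled in a (partial) prefix expression."""
    return 1 + sum(ARITY[t] - 1 for t in tokens)

def legal_actions(tokens, max_len=7):
    """Symbols that keep the expression completable within max_len tokens."""
    need, room = pending_slots(tokens), max_len - len(tokens)
    if need == 0:
        return []  # terminal state: the expression is complete
    return [t for t, a in ARITY.items() if need - 1 + a <= room - 1]

def evaluate(tokens, data):
    """Evaluate a complete prefix expression to an array of shape (days, stocks)."""
    def rec(i):
        t = tokens[i]
        if ARITY[t] == 0:
            return data[t], i + 1
        x, j = rec(i + 1)
        if t == "Neg":
            return -x, j
        y, k = rec(j)
        return (x + y if t == "Add" else x - y), k
    return rec(0)[0]

def information_coefficient(factor, future_ret):
    """Mean over days of the cross-sectional Pearson correlation."""
    f = factor - factor.mean(axis=1, keepdims=True)
    r = future_ret - future_ret.mean(axis=1, keepdims=True)
    denom = np.sqrt((f ** 2).sum(axis=1) * (r ** 2).sum(axis=1))
    safe = np.where(denom > 0, denom, 1.0)  # avoid division by zero
    daily = np.where(denom > 0, (f * r).sum(axis=1) / safe, 0.0)
    return float(daily.mean())

def step(tokens, action, data, future_ret):
    """Apply one symbol; the reward stays zero until the expression is complete."""
    nxt = tokens + (action,)
    done = pending_slots(nxt) == 0
    reward = information_coefficient(evaluate(nxt, data), future_ret) if done else 0.0
    return nxt, reward, done
```

An episode starts from the empty tuple `()` and calls `step` with symbols drawn from `legal_actions` until `done` is true; every intermediate reward is zero, which mirrors the sparse, delayed reward structure described above.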

This mechanism achieves sample-efficient, diversity-aware factor generation and enables the system not only to discover new composite alphas but also to refine classical factors by structurally masking and reoptimizing operator trees.
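One way grammar-aware selection might look is sketched below. The source does not state the selection rule, so this assumes an AlphaZero-style PUCT score with policy priors renormalized over grammar-legal actions only; `Node`, `masked_priors`, `expand`, `select`, `backup`, and the constant `c_puct` are hypothetical names and values.

```python
import math
from dataclasses import dataclass, field

@dataclass
class Node:
    prior: float
    visits: int = 0
    value_sum: float = 0.0
    children: dict = field(default_factory=dict)

    def q(self):
        """Mean backed-up value; zero for an unvisited node."""
        return self.value_sum / self.visits if self.visits else 0.0

def masked_priors(logits, legal):
    """Softmax restricted to grammar-legal actions; illegal ones get no mass."""
    m = max(logits[a] for a in legal)
    exps = {a: math.exp(logits[a] - m) for a in legal}
    z = sum(exps.values())
    return {a: e / z for a, e in exps.items()}

def expand(node, logits, legal):
    """Create one child per legal action, with its masked policy prior."""
    for a, p in masked_priors(logits, legal).items():
        node.children[a] = Node(prior=p)

def select(node, c_puct=1.5):
    """Pick the child maximizing Q + U (assumed PUCT rule)."""
    total = sum(ch.visits for ch in node.children.values())
    def score(item):
        _, ch = item
        # +1 under the square root keeps the exploration term nonzero at first visit
        return ch.q() + c_puct * ch.prior * math.sqrt(total + 1) / (1 + ch.visits)
    return max(node.children.items(), key=score)[0]

def backup(path, value):
    """Propagate a terminal reward (for example an IC) up the visited path."""
    for n in path:
        n.visits += 1
        n.value_sum += value
```

In a full search loop, `logits` would come from the policy network and `legal` from the grammar; masking before the softmax means the tree never spends simulations on ill-formed expansions.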

Empirical Results and Quantitative Evaluation

AlphaCFG outperforms state-of-the-art baselines across multiple metrics, including information coefficient and Sharpe ratio, on two datasets (CSI 300 and S&P 500).

AlphaCFG also improves legacy factors: structural reoptimization significantly raises the predictive IC of classical heuristics and of factors from the GTJA 191 and Alpha101 libraries.

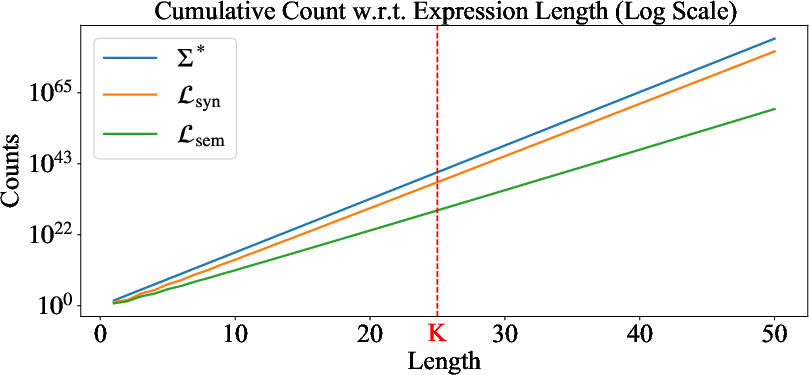

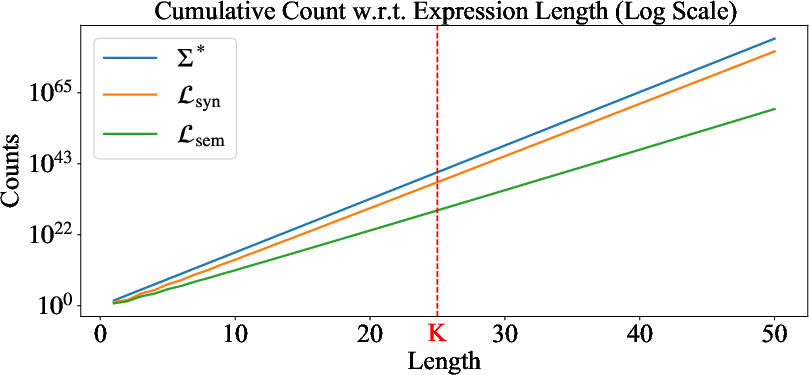

Search Space Efficiency and Grammar Level Comparison

Theoretical and empirical analysis of search space sizes (Figure 4) shows that syntactic and semantic grammar constraints sharply reduce practical search complexity, and that bounding derivation length turns exponential combinatorial growth into a finite, tractable space. α-Sem-k therefore yields a search space that is both manageable and expressive, a critical advance for symbolic regression in finance.

Figure 4: Comparison of cumulative search space sizes of grammar-constrained methods, demonstrating drastic reduction in combinatorial burden.
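The effect of grammar constraints and length bounding on search space size can be checked with a small counting recurrence. This is a sketch under assumptions: the vocabulary sizes `N_FEAT`, `N_UNARY`, and `N_BINARY` are made up, length is measured in tokens, and the numbers are illustrative rather than those reported in Figure 4.

```python
from functools import lru_cache

# Hypothetical vocabulary sizes, chosen only for illustration.
N_FEAT, N_UNARY, N_BINARY = 6, 10, 5

@lru_cache(maxsize=None)
def count_exact(n):
    """Number of well-formed prefix expressions with exactly n tokens."""
    if n < 1:
        return 0
    if n == 1:
        return N_FEAT
    total = N_UNARY * count_exact(n - 1)
    total += N_BINARY * sum(
        count_exact(k) * count_exact(n - 1 - k) for k in range(1, n - 1)
    )
    return total

def count_unconstrained(n, vocab=N_FEAT + N_UNARY + N_BINARY):
    """Number of arbitrary token strings of length n, ignoring the grammar."""
    return vocab ** n

def cumulative(K):
    """Size of the length-bounded space: all well-formed expressions up to K tokens."""
    return sum(count_exact(n) for n in range(1, K + 1))

for K in (3, 5, 7):
    print(K, cumulative(K), sum(count_unconstrained(n) for n in range(1, K + 1)))
```

Both columns grow quickly with `K`, but the grammar-constrained count is a small fraction of the unconstrained one, and fixing `K` makes the space finite. Semantic rules and canonicalization of equivalent expressions would shrink it further.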

Syntax-Aware Representation Learning

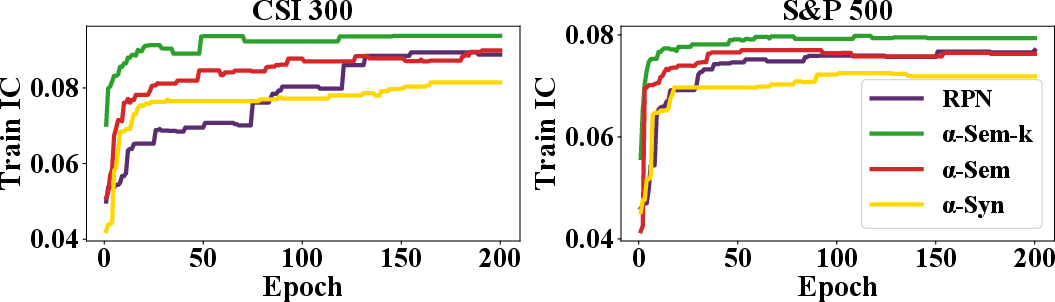

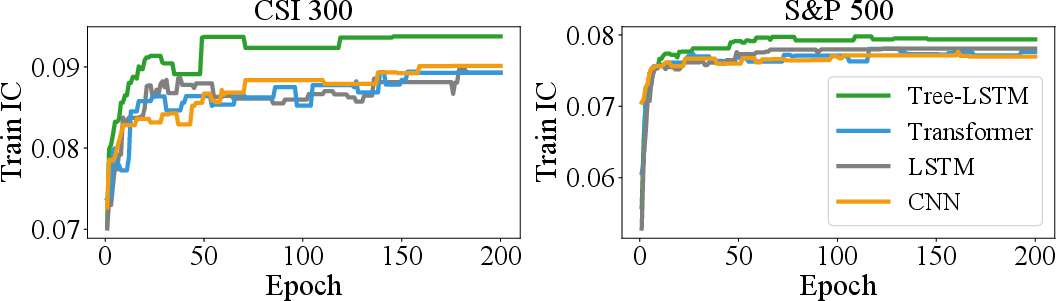

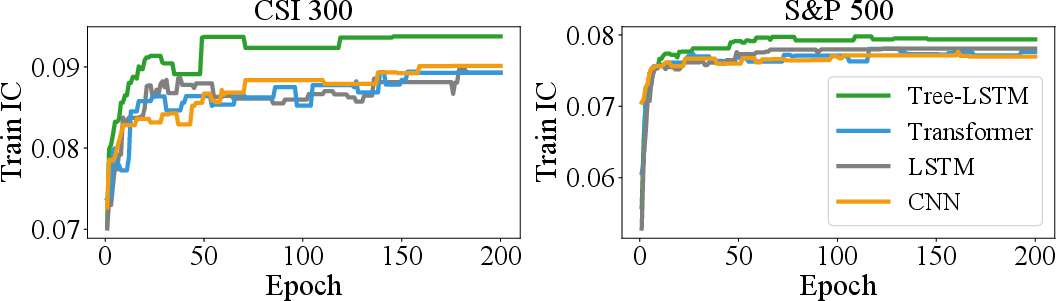

Tree-LSTM-based state encodings outperform sequence-based models (LSTM, Transformer, CNN) by capturing ASR structure and addressing isomorphic redundancy (Figure 5). The order-sensitive and order-invariant aggregation strategies correctly reflect operator semantics, thus supporting accurate estimation and efficient MCTS exploration.

Figure 5: Training curve comparison for different network architectures, establishing Tree-LSTM superiority in syntax-sensitive representation.
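The difference between order-invariant and order-sensitive aggregation can be seen in a stripped-down recursive encoder. This is a simplified sketch, not the paper's network: it omits the LSTM gates and memory cell, uses random untrained weights, and the names `COMMUTATIVE`, `EMBED`, `W_SUM`, `W_LEFT`, and `W_RIGHT` are hypothetical. Summing child states follows the spirit of child-sum Tree-LSTMs, while position-specific weights follow the spirit of N-ary Tree-LSTMs.

```python
import numpy as np

rng = np.random.default_rng(0)
DIM = 8
COMMUTATIVE = {"Add", "Mul"}          # operand order does not matter
SYMBOLS = ["Add", "Mul", "Sub", "Div", "close", "volume"]

# Random, untrained parameters: enough to show the symmetry properties.
EMBED = {s: rng.normal(size=DIM) for s in SYMBOLS}
W_SUM = rng.normal(size=(DIM, DIM)) / np.sqrt(DIM)
W_LEFT = rng.normal(size=(DIM, DIM)) / np.sqrt(DIM)
W_RIGHT = rng.normal(size=(DIM, DIM)) / np.sqrt(DIM)

def encode(tree):
    """Encode a feature name or an (op, left, right) tuple into a DIM-vector."""
    if isinstance(tree, str):
        return np.tanh(EMBED[tree])
    op, left, right = tree
    h_left, h_right = encode(left), encode(right)
    if op in COMMUTATIVE:
        # Order-invariant: children are summed before the shared transform.
        agg = W_SUM @ (h_left + h_right)
    else:
        # Order-sensitive: each child position has its own weights.
        agg = W_LEFT @ h_left + W_RIGHT @ h_right
    return np.tanh(EMBED[op] + agg)

same = np.allclose(encode(("Add", "close", "volume")),
                   encode(("Add", "volume", "close")))
diff = not np.allclose(encode(("Sub", "close", "volume")),
                       encode(("Sub", "volume", "close")))
print(same, diff)
```

Swapping the operands of a commutative operator leaves the encoding unchanged, so isomorphic trees map to one state, while swapping the operands of `Sub` produces a different vector, as the operator's semantics require.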

Practical and Theoretical Implications, Future Directions

AlphaCFG provides a unified, extensible grammar-guided framework for interpretable factor discovery, symbolic regression, and refinement in finance. Beyond trading, the formalized approach generalizes to asset pricing, portfolio construction, and any domain requiring interpretable symbolic model search under domain-encoded constraints.

Integrating AlphaCFG with foundation models trained on programs and syntax trees, combining high-capacity learned priors with grammar-driven inductive bias, is a promising direction for further accelerating search, improving generalization, and applying structured reasoning in high-dimensional scientific domains.

Conclusion

AlphaCFG demonstrates that grammar-guided, syntax-tree–structured search with reinforcement learning and neural MCTS yields efficient, interpretable, and highly effective alpha factor discovery. By encoding financial domain knowledge directly in the hypothesis space, AlphaCFG fundamentally shifts formulaic alpha mining from ad hoc trial-and-error to principled, scalable symbolic reasoning. Its modular, extensible design suggests broad relevance for interpretable machine learning well beyond quantitative finance.