From basins to safe sets: a machine learning perspective on chaotic dynamics

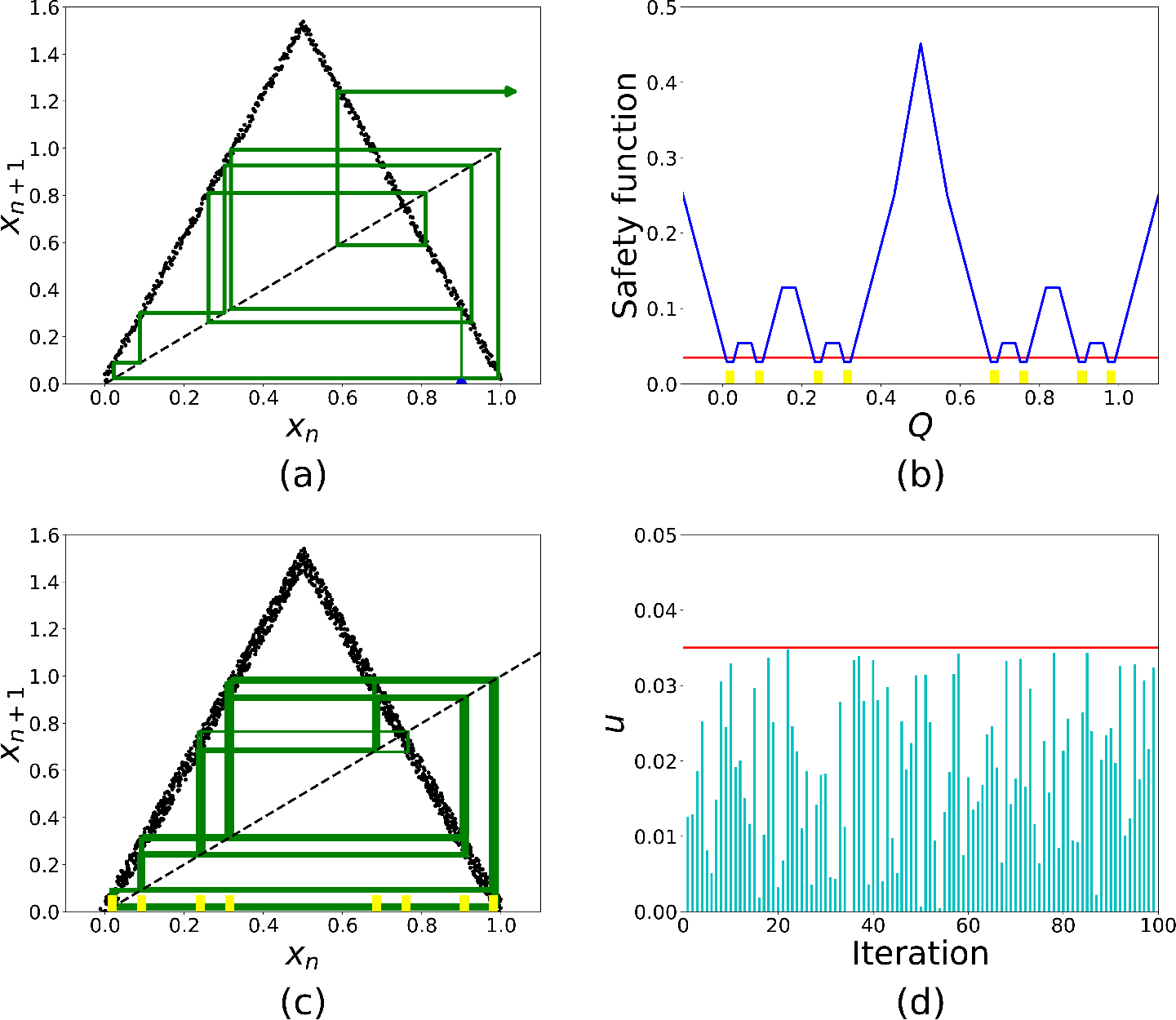

Abstract: The study of chaos has long relied on computationally intensive methods to quantify unpredictability and design control strategies. Recent advances in machine learning, from convolutional neural networks to transformer architectures, provide new ways to analyze complex phase space structures and enable real time action in chaotic dynamics. In this perspective article, we highlight how data driven approaches can accelerate classical tasks such as estimating basin characterization metrics, or partial control of transient chaos, while opening new possibilities for scalable and robust interventions in chaotic systems. In recent studies, convolutional networks have reproduced classical basin metrics with negligible bias and low computational cost, while transformer based surrogates have computed accurate safety functions within seconds, bypassing the recursive procedures required by traditional methods. We discuss current opportunities, remaining challenges, and future directions at the intersection of nonlinear dynamics and artificial intelligence.

Explain it Like I'm 14

Explaining “From Basins to Safe Sets: A Machine Learning Perspective on Chaotic Dynamics”

Overview: What is this paper about?

This paper looks at chaos, where small changes can lead to big, surprising differences, and shows how modern machine learning (ML) can help us understand and control it faster. Instead of relying on slow, traditional math and computer methods, the authors explain how tools like convolutional neural networks (CNNs) and transformers can quickly measure how unpredictable a system is and help keep it from going into bad or unwanted states. It’s a “perspective” article, which means it reviews recent progress, explains why it matters, and suggests where the field should go next.

Key Questions: What are the researchers trying to figure out?

The paper focuses on three easy-to-grasp questions:

- Can machine learning speed up the way we measure how unpredictable chaotic systems are?

- Can ML help us control chaos in real time, especially when a system might suddenly switch to an undesired state?

- What are the biggest opportunities and remaining challenges when combining traditional chaos theory with modern AI?

Methods and Ideas: How do they approach the problem?

To make sense of chaos, scientists use the idea of a “basin of attraction.” Imagine dropping a marble on a table with several buckets. Depending on where you drop it, the marble rolls into one bucket. A basin of attraction is the “map” that tells you which starting points end up in which buckets. In chaotic systems, the borders between these basins can be very twisty and complex—like a coastline full of bays and inlets—making outcomes hard to predict.

Here are the main concepts, explained simply:

- Basins of attraction: A colored map where each color shows where a starting point ends up (which “bucket”).

- Fractal boundaries: Edges that are super detailed and complicated at every scale—like zooming into a coastline and seeing endless squiggles.

- Basin entropy: A number that tells you how uncertain the map is; higher means more unpredictability.

- Wada property: A wild situation where three or more basins share the same boundary; a tiny move can flip your outcome from one basin to another.

- Partial control: Instead of fully stopping chaos, you nudge the system just enough to keep it in a safe zone.

- Safety function: For any spot in the system, it tells the minimum “push” needed to keep the system from escaping to a bad state.

- Safe set: All the spots where a push no larger than a chosen limit is enough to keep things safe.
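To make basin entropy concrete, here is a minimal Python sketch. It assumes the usual box-based definition (split the map into small boxes, compute the entropy of the colors inside each box, then average), and it uses Newton's method on z³ = 1 purely as a stand-in system that is not taken from the paper. The function names, grid size, and box size are illustrative choices.

```python
import numpy as np

def newton_basins(n=200, iters=40, lim=1.5):
    """Color each grid point by the root of z**3 = 1 that Newton's method reaches."""
    xs = np.linspace(-lim, lim, n)
    z = xs[None, :] + 1j * xs[:, None]
    with np.errstate(all="ignore"):            # a grid point may land on z = 0
        for _ in range(iters):
            z = z - (z**3 - 1) / (3 * z**2)    # Newton step
    roots = np.exp(2j * np.pi * np.arange(3) / 3)
    return np.argmin(np.abs(z[..., None] - roots), axis=-1)

def basin_entropy(labels, box=5):
    """Return (basin entropy, boundary basin entropy) for an integer label image."""
    n = (labels.shape[0] // box) * box
    m = (labels.shape[1] // box) * box
    # Cut the image into non-overlapping box x box tiles, one row per tile.
    tiles = labels[:n, :m].reshape(n // box, box, m // box, box).swapaxes(1, 2)
    tiles = tiles.reshape(-1, box * box)
    n_colors = int(labels.max()) + 1
    # p[i, c] = fraction of tile i that carries color c.
    p = np.stack([(tiles == c).mean(axis=1) for c in range(n_colors)], axis=1)
    with np.errstate(divide="ignore", invalid="ignore"):
        s = -np.where(p > 0, p * np.log(p), 0.0).sum(axis=1)   # entropy per tile
    mixed = (p > 0).sum(axis=1) > 1            # tiles containing more than one color
    sb = s.mean()                              # average over all tiles
    sbb = s[mixed].mean() if mixed.any() else 0.0   # average over boundary tiles only
    return sb, sbb

if __name__ == "__main__":
    sb, sbb = basin_entropy(newton_basins())
    print(f"basin entropy = {sb:.3f}, boundary basin entropy = {sbb:.3f}")
```

A map with a single color gives zero for both values, while a map whose boxes all contain an even mix of two colors gives ln 2 for both.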

Traditionally, measuring things like fractal boundaries or calculating the safety function takes a lot of time and computer power. The paper explains how ML speeds this up:

- CNNs (a type of ML good at looking at images) can “look” at basin maps and instantly estimate measures like fractal dimension and basin entropy—much faster than old methods.

- Transformers (a type of ML good at learning from sequences, like how your phone predicts the next word) can learn to estimate the safety function from short trajectory samples, skipping slow, nested calculations.
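For reference, the kind of classical calculation a CNN surrogate would be trained to reproduce can be sketched with box counting. This is a minimal sketch, not the paper's pipeline: it assumes the basin map is an integer label image, treats any pixel that differs from its right or lower neighbor as boundary, and fits the slope of log(count) against log(box size). The names `boundary_mask` and `box_counting_dimension` and the list of box sizes are illustrative.

```python
import numpy as np

def boundary_mask(labels):
    """True where a pixel differs from its right or lower neighbor."""
    b = np.zeros(labels.shape, dtype=bool)
    b[:, :-1] |= labels[:, :-1] != labels[:, 1:]
    b[:-1, :] |= labels[:-1, :] != labels[1:, :]
    return b

def box_counting_dimension(mask, sizes=(1, 2, 4, 8, 16)):
    """Estimate the box-counting dimension of the True pixels in a non-empty mask."""
    counts = []
    for s in sizes:
        n = (mask.shape[0] // s) * s
        m = (mask.shape[1] // s) * s
        # A box is occupied if any pixel inside it is True.
        occupied = mask[:n, :m].reshape(n // s, s, m // s, s).any(axis=(1, 3))
        counts.append(occupied.sum())
    # counts ~ size**(-D), so D is minus the slope in log-log coordinates.
    slope, _ = np.polyfit(np.log(sizes), np.log(counts), 1)
    return -slope

if __name__ == "__main__":
    labels = np.zeros((256, 256), dtype=int)
    labels[:128, :] = 1                        # two basins split by a straight line
    print(box_counting_dimension(boundary_mask(labels)))
```

A straight boundary should come out with dimension close to 1 and a filled region close to 2; fractal boundaries fall in between, and resolving them reliably needs fine grids, which is where the cost of the classical route comes from.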

Main Findings: What did they discover, and why does it matter?

The authors highlight recent results showing:

- CNNs can estimate basin metrics (like fractal dimension and basin entropy) quickly and accurately from images of the basins. Once trained, they can produce good answers in under a second per map, much faster than traditional methods.

- Transformer models can estimate safety functions directly from data (short snippets of how the system behaves) without needing full knowledge of the system’s equations. These estimates are accurate and fast, enabling near real-time decisions about keeping the system in a safe set.
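For comparison, the recursive calculation that the transformer surrogates are meant to bypass can be sketched for a one-dimensional map. This is a minimal sketch under stated assumptions, not the paper's code: it assumes dynamics of the form q_{n+1} = f(q_n) + ξ_n + u_n with a bounded disturbance |ξ_n| ≤ ξ0 and a control u_n chosen after the disturbance is known, and it uses a max-min-max recursion of the kind commonly described in the partial control literature. The slope-3 tent map, the grid sizes, and the names `safety_function`, `tent`, `xi0`, and `u0` are illustrative choices.

```python
import numpy as np

def tent(x):
    """Slope-3 tent map: points near x = 0.5 are thrown out of [0, 1]."""
    return 3.0 * np.minimum(x, 1.0 - x)

def safety_function(f, q, xi0, n_dist=21, max_iter=200, tol=1e-12):
    """Iterate U <- max over disturbances of min over targets of max(push, U[target])."""
    xi = np.linspace(-xi0, xi0, n_dist)             # sampled disturbances
    images = f(q)[:, None] + xi[None, :]            # disturbed images, shape (N, S)
    # Push needed to move each disturbed image onto each grid point: shape (N, S, N).
    push = np.abs(images[:, :, None] - q[None, None, :])
    U = np.zeros_like(q)
    for _ in range(max_iter):
        # Landing on point j costs the push now or the push j needs later, whichever is larger.
        cost = np.maximum(push, U)
        U_next = cost.min(axis=2).max(axis=1)       # best target, worst disturbance
        converged = np.max(np.abs(U_next - U)) < tol
        U = U_next
        if converged:
            break
    return U

if __name__ == "__main__":
    q = np.linspace(0.0, 1.0, 201)                  # grid on the region to stay in
    U = safety_function(tent, q, xi0=0.05)
    u0 = U.min()                                    # smallest control bound that works on this grid
    safe = U <= u0 + 1e-12                          # grid points in the corresponding safe set
    print(f"minimum control bound on this grid: {u0:.4f}, safe points: {safe.sum()}")
```

The three nested reductions over starting points, disturbances, and landing points are what make this calculation expensive as the grid grows or the state dimension increases.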

Why this is important:

- Speed: Many chaotic systems change quickly. Faster analysis and control could prevent failures in things like power grids or keep models of climate systems more stable.

- Scalability: As systems get bigger and more complex, traditional methods can become too slow. ML can handle more data and higher dimensions more efficiently.

- Flexibility: ML can work with noisy data and doesn’t always need the exact equations of the system, which is helpful in real-world situations.

Implications: What impact could this have?

This work points to a future where:

- Scientists can analyze chaos in real time, making quicker decisions in fields like engineering, climate science, and biology.

- Controllers can keep systems within safe zones using minimal interventions—like gentle taps to keep the marble away from a bad bucket—without spending huge amounts of time computing complex functions.

- Hybrid approaches become standard: use ML for speed and scale, and traditional methods for rigorous safety checks and guarantees.

The authors also outline what still needs work:

- Automatically detecting tricky structures (like riddled basins or KAM islands) that are hard to spot even for experts.

- Scaling safety-function learning to higher-dimensional systems (more variables), which is still challenging.

- Building trust in ML models with uncertainty estimates (how confident the model is) and interpretability (clear reasons for its predictions).

- Creating shared datasets and benchmarks so researchers can fairly compare methods and improve them together.

Simple Takeaway

Chaos is tricky because tiny differences can lead to totally different outcomes. This paper shows that machine learning can act like a fast, smart “assistant” that:

- Reads complex maps of outcomes (basins) quickly.

- Estimates safe zones and the minimum push needed to keep a system from going wrong.

- Helps scientists and engineers make faster, better decisions in complex, changing environments.

The bottom line: ML doesn’t replace traditional chaos theory—it turbocharges it. Combining both makes it possible to understand and steer chaotic systems more effectively, especially when time and computing power are limited.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following points summarize what remains missing, uncertain, or unexplored in the paper, framed as concrete, actionable items for future research.

- Cross-system generalization of CNN basin-metric surrogates is untested: systematically evaluate across diverse dynamical families (maps and flows), including delayed and high-dimensional systems, not just those used for training.

- Robustness to data representation is unknown: quantify sensitivity of CNN predictions to image resolution, noise, color mapping, coordinate transformations, zoom level, and discretization choices; establish invariance or correction procedures.

- Uncertainty calibration for ML-estimated basin metrics is absent: develop calibrated predictive intervals (e.g., deep ensembles, conformal prediction) and reliability diagrams to quantify confidence and failure modes.

- No rigorous certification for ML-inferred properties (e.g., Wada): design hybrid pipelines where ML proposes candidates and classical methods certify them, with quantified coverage and failure rates.

- Missing standardized benchmarks: release public datasets of basins, trajectories, and safety-function labels spanning parameter ranges, dimensions, and noise levels; define evaluation tasks, metrics, and baselines.

- Compute amortization is not demonstrated: perform end-to-end accounting of training-label generation plus training time versus classical analysis across parameter sweeps and hardware to quantify when ML is truly cheaper.

- Domain shift and portability are unaddressed: test whether models trained on synthetic basins generalize to experimentally measured basins with measurement noise and partial observability; develop domain adaptation/transfer methods.

- Interpretability remains aspirational: specify and validate physics-grounded attribution methods that link model outputs to phase-space features (e.g., boundary filaments, Wada junctions) with quantitative benchmarks.

- Transformer safety-function surrogates are validated mainly on 1D maps: demonstrate scalability to 2D/3D maps and continuous-time ODEs with explicit scaling curves for runtime, memory, and accuracy.

- Formal control guarantees are missing: derive bounds that relate surrogate error in the estimated safety function to guaranteed confinement; implement conservative safety margins (e.g., operate on a tightened safe set whose margin covers a provable error bound) and certify closed-loop invariance.

- Safety under distribution shift is not treated: build online uncertainty monitoring, change detection, and fallback-to-certified control strategies when disturbances, parameters, or noise bounds drift.

- Partial observability is ignored: develop observers or learn surrogates that map measured outputs (not full states) to safe controls, robust to sensor noise, latency, and sampling artifacts.

- Training-sample strategy for safety-function surrogates is unspecified: implement active learning focused on boundaries and rare escape regions to reduce sample complexity and improve boundary fidelity.

- Delayed and non-Markovian dynamics are unaddressed: extend safe-set definitions and ML training to systems with delays or memory effects; evaluate on canonical delayed systems.

- Continuous-time control formulation is lacking: translate safety-function/partial-control concepts to flows with event-triggered control and step-size dependence; test on Lorenz, Rössler, and other ODEs under external noise.

- Multi-objective control is unexplored: beyond minimizing maximal control, study average energy, intervention sparsity, and risk-sensitive objectives; quantify trade-offs and their impact on safe-set geometry.

- Real-time and hardware constraints are unspecified: characterize sensing-to-actuation latency, throughput, and memory footprints on embedded platforms; verify performance under strict timing budgets.

- Robustness to adversarial or unmodeled disturbances is untested: analyze worst-case deviations and control saturation; design fail-safe policies and graceful degradation mechanisms.

- Ground-truth label fidelity is uncertain: quantify how grid resolution and numerical tolerances in classical safety-function and basin-metric labels affect training and evaluation; propagate label uncertainty to model calibration.

- Comparative baselines are incomplete: include strong non-ML baselines (adaptive multiresolution meshing, interval arithmetic, verified numerics) to delineate regimes where ML truly dominates.

- Evaluation metrics are limited: adopt task-relevant metrics (escape rate under closed-loop control, certified safe-set volume, false-positive/negative rates at boundaries) beyond MSE and point estimates.

- Generative augmentation is unvalidated: if using synthetic basins to augment training, ensure physical plausibility and conservation of invariants; create tests to reject nonphysical samples.

- Reproducibility is not ensured: provide open-source code, trained models, and datasets for CNN/transformer surrogates, with documented protocols for independent verification.

- Theoretical understanding of learned representations is lacking: investigate whether embeddings capture invariants (Lyapunov structure, stable/unstable manifolds) and identify conditions under which this emerges.

- Hybrid ML–classical integration is not operationalized: define decision rules for when to trust surrogates versus trigger classical verification; quantify cost–accuracy trade-offs in adaptive pipelines.

- Speed claims need standardization: perform apples-to-apples runtime comparisons (including training costs) across hardware and software stacks to substantiate “real-time” advantages.
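As one illustration of the uncertainty-calibration item in the list above, split conformal prediction turns held-out errors of a surrogate into a prediction interval. The sketch below is a generic version of that technique and is not something the paper implements; it assumes calibration and test samples are exchangeable, and the name `conformal_halfwidth` and the example numbers are hypothetical.

```python
import numpy as np

def conformal_halfwidth(y_cal, yhat_cal, alpha=0.1):
    """Half-width h such that yhat +/- h covers the truth with probability >= 1 - alpha."""
    scores = np.sort(np.abs(np.asarray(y_cal, dtype=float) - np.asarray(yhat_cal, dtype=float)))
    n = scores.size
    k = int(np.ceil((n + 1) * (1 - alpha)))    # rank of the calibration score to use
    if k > n:
        return np.inf                          # too few calibration points for this level
    return scores[k - 1]

if __name__ == "__main__":
    # Hypothetical classical labels and surrogate predictions on a calibration set.
    y_cal = np.array([1.20, 1.35, 1.50, 1.62, 1.71, 1.80, 1.25, 1.44, 1.58])
    yhat_cal = np.array([1.22, 1.30, 1.53, 1.60, 1.75, 1.78, 1.20, 1.47, 1.55])
    h = conformal_halfwidth(y_cal, yhat_cal, alpha=0.2)
    print(f"report each new prediction as yhat +/- {h:.3f}")
```

The guarantee is marginal, averaged over systems like those in the calibration set, so it does not by itself address the domain-shift items in the same list.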

Glossary

- Amortization: In machine learning, shifting expensive computation from inference to training by learning a reusable mapping. "through amortization, the costly search is performed once during training"

- Autoencoders: Neural networks that learn compact latent representations by reconstructing inputs. "through autoencoders, diffusion maps, or Koopman based coordinates"

- Basin entropy: A metric quantifying unpredictability in basins by measuring the mixing of outcomes in small regions. "basin entropy and boundary basin entropy"

- Basin of attraction: The set of initial conditions that asymptotically approach a particular attractor. "Basins of attraction in a two dimensional dynamical system."

- Basin stability: A measure of the robustness of an attractor based on the volume of its basin under perturbations. "such as basin stability"

- Boundary basin entropy: A variant of basin entropy focused on uncertainty concentrated at basin boundaries. "basin entropy and boundary basin entropy"

- Boundary filaments: Thin, filament-like structures in basin boundaries that reflect intricate separations between outcomes. "linked to boundary filaments and Wada junctions"

- Box-counting: A numerical method to estimate fractal dimension by covering a set with boxes at different scales. "box-counting for estimating fractal dimension"

- Chaotic attractor: An attractor supporting chaotic dynamics, characterized by sensitivity to initial conditions and fractal structure. "embedded within the chaotic attractor"

- Chaotic saddle: A non-attracting invariant set that organizes transient chaotic motion before escape or settling. "typically containing the chaotic saddle"

- Constrained optimization layers: Neural network components that embed and differentiate through optimization problems with constraints. "constrained optimization layers"

- Diffusion maps: A manifold learning technique that constructs low-dimensional embeddings based on diffusion processes on data. "through autoencoders, diffusion maps, or Koopman based coordinates"

- Escape sets: Regions of phase space from which trajectories leave a target set under the dynamics or disturbances. "distance to escape sets"

- Feature attribution: Methods that assign importance scores to input features to explain model predictions. "feature attribution analyses"

- Fractal dimension: A non-integer dimension quantifying the complexity of fractal structures, such as irregular basin boundaries. "box-counting for estimating fractal dimension"

- Grid method: A computational procedure for Wada detection that analyzes basin structure on progressively refined grids. "the grid method"

- KAM islands: Stable, quasi-periodic regions (Kolmogorov–Arnold–Moser structures) embedded within chaotic seas of Hamiltonian-like systems. "riddled basins or KAM islands"

- Koopman based coordinates: Representations derived from approximations of the Koopman operator to linearize nonlinear dynamics in a higher-dimensional space. "Koopman based coordinates"

- Local linearization: Approximating a nonlinear system by its linearization around a point to analyze stability or design control. "accessed through local linearization"

- Merging method: A Wada-testing approach that examines the effect of progressively merging basins to reveal shared boundaries. "the merging method"

- Model free control: Control strategies that do not rely on explicit system models, instead using data-driven policies or surrogates. "enabling real time, model free control"

- Monte Carlo sweeps: Repeated randomized sampling procedures used to estimate quantities like metrics on basins. "box-counting or Monte Carlo sweeps"

- Multistability: The coexistence of multiple stable attractors for the same parameter set in a dynamical system. "multistability, resilience, and failure"

- Nusse–Yorke method: A technique for analyzing basin topology and detecting Wada structures using topological constructions. "the Nusse–Yorke method"

- Ott–Grebogi–Yorke (OGY) method: A chaos control method that stabilizes unstable periodic orbits via small, timed perturbations. "Ott–Grebogi–Yorke (OGY) method"

- Partial control: A framework that maintains trajectories within a target region using bounded interventions weaker than disturbances. "One such framework is partial control"

- Phase space: The space of all possible states of a system, where dynamics are represented as trajectories. "partition the phase space into regions"

- Physics-guided regularization: Incorporating physical constraints or priors into learning objectives to enforce scientifically consistent predictions. "physics-guided regularization"

- Physics-informed neural networks (PINNs): Neural networks trained to satisfy governing differential equations by penalizing residuals during learning. "physics-informed neural networks (PINNs)"

- Riddled basins: Basins in which every neighborhood contains points from other basins, leading to extreme unpredictability. "riddled basins or KAM islands"

- Safe set: The set of states from which trajectories can be kept within a target region using control bounded by a given threshold. "the safe set is the yellow region"

- Safety function: A function assigning the minimal control needed at each state to prevent escape from a target region. "the safety function"

- Saddle-straddle method: A numerical technique for detecting Wada basins by tracking trajectories that straddle basin boundaries. "the saddle-straddle method"

- Saliency maps: Visual explanations that highlight input regions most influential to a model’s output. "saliency maps"

- Sparse equation discovery: Techniques that identify parsimonious governing equations from data using sparse regression. "sparse equation discovery techniques"

- Symbolic regression: Searching over mathematical expressions to fit data, yielding interpretable models of dynamics. "Symbolic regression and sparse equation discovery techniques"

- Transient chaos: Chaotic behavior that persists for a finite time before trajectories settle into regular dynamics or escape. "in the context of transient chaos"

- Unstable periodic orbits: Periodic solutions that repel nearby trajectories and often structure chaotic dynamics. "unstable periodic orbits embedded within the chaotic attractor"

- Wada junctions: Points on a basin boundary where three or more basins meet, characteristic of Wada structures. "Wada junctions"

- Wada property: A basin boundary property where every boundary point is shared by three or more basins. "the Wada property"

Practical Applications

Immediate Applications

The following applications can be deployed now or with minimal integration effort, leveraging the paper’s demonstrated use of convolutional neural networks for basin characterization and transformer-based surrogates for safety functions in low-dimensional systems.

- Fast basin metric estimation for large parameter sweeps (Academia, Energy, Software)

  - Use CNN surrogates to compute fractal dimension, basin entropy, and boundary basin entropy from basin images at orders-of-magnitude lower cost than classical methods.

  - Tools/workflows: Python/Julia plugins wrapping trained CNNs to post-process basin images; integration into existing packages (e.g., PyDSTool, DynamicalSystems.jl) to accelerate uncertainty maps and bifurcation studies.

  - Assumptions/dependencies: Availability of representative training data for the target system; basins rendered at sufficient resolution; model generalization across nearby parameter regimes; classical spot checks for validation.

- Real-time partial control in low-dimensional testbeds (Physics labs, Electronics, Education)

  - Apply transformer-estimated safety functions to confine trajectories within safe sets using bounded interventions in 1D maps and simple electronic chaotic circuits (e.g., Chua’s circuit).

  - Tools/workflows: Microcontroller or FPGA implementations running lightweight transformer inference; sensor feedback loop estimating the safety function from short trajectory windows; simple bounded actuation policies.

  - Assumptions/dependencies: Low latency sensing and actuation; stationary noise bounds; model-free assumption holds for the observed dynamics; safe operation envelope determined experimentally.

- Online unpredictability monitoring in experimental setups (Academia, Industry R&D)

  - Build dashboards that track basin metrics over time to detect changes in unpredictability (e.g., due to parameter drift) and trigger alarms or more expensive classical analyses when thresholds are crossed.

  - Tools/workflows: Stream processing of basin snapshots via CNN inference; ensemble/MC dropout for uncertainty; alerting integrated into lab control software.

  - Assumptions/dependencies: Continuous or periodic access to basin images or equivalent summaries; calibrated confidence thresholds; human-in-the-loop validation.

- Hybrid ML–classical pipelines to triage compute (Academia, Software)

  - Use ML surrogates to prioritize where to run expensive box-counting or Wada verification, focusing classical methods on high-uncertainty regions.

  - Tools/workflows: Orchestration pipelines that route samples between fast ML inference and rigorous algorithms; audit logs of ML vs. classical decisions.

  - Assumptions/dependencies: Reliable uncertainty estimates (ensembles, dropout); coverage guarantees based on conservative thresholds; reproducible baselines.

- Rapid safety margin mapping for simple mechanical/robotic subsystems (Robotics)

  - Estimate safe sets in subsystems exhibiting transient chaos (e.g., compliant joints under intermittent contact) to keep states within bounded regions during testing.

  - Tools/workflows: Transformer inference embedded in test stands; bounded control laws derived from the estimated safety function; visualization of bottlenecks in safe sets.

  - Assumptions/dependencies: Low-dimensional effective state representations; adequate sensing; domain adaptation of models to the specific mechanism.

- Interactive teaching tools for chaos metrics (Education)

  - Classroom/lab apps that render basins and instantly compute unpredictability metrics, demonstrating fractal boundaries and sensitivity to initial conditions.

  - Tools/workflows: Web-based frontends with GPU-backed CNN inference; curated datasets and sliders for parameter exploration.

  - Assumptions/dependencies: Pre-trained models; examples aligned with curricula; lightweight compute (consumer GPUs or cloud).

- Model-free screening for robustness in small microgrids and inverter testbeds (Energy R&D)

  - Use basin metrics to compare controller settings, identifying parameter regions with lower basin entropy (higher predictability) before field deployment.

  - Tools/workflows: Offline experiments that generate basin-like outcome maps (e.g., start-up/fault scenarios) and ML-based metric scoring.

  - Assumptions/dependencies: Faithful experimental surrogates of operational conditions; mapping outcomes to “attractors” is well-defined; transferability of lab insights to field devices.

- Quality assurance for numerical studies (Academia, Software)

  - Replace repeated box-counting/Monte Carlo sweeps with fast CNN checks to detect obvious errors or regressions in pipeline updates.

  - Tools/workflows: CI hooks that run ML-based metric estimators on canonical basins; thresholds triggering deeper classical recalculation.

  - Assumptions/dependencies: Stable reference datasets; conservative thresholds to avoid false confidence; occasional full re-computation for drift detection.
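The hybrid triage idea in the list above (let fast surrogates answer where they agree, and send the rest to classical algorithms) can be sketched in a few lines. This is only an illustration under assumptions: it presumes an ensemble of surrogate predictions is already available, uses the ensemble standard deviation as the uncertainty signal, and the name `triage` and the threshold value are hypothetical rather than taken from the paper.

```python
import numpy as np

def triage(ensemble_preds, threshold):
    """Split samples into 'trust the surrogate' and 'rerun classically' by ensemble spread."""
    preds = np.asarray(ensemble_preds, dtype=float)   # shape (n_models, n_samples)
    mean = preds.mean(axis=0)                         # surrogate estimate per sample
    spread = preds.std(axis=0)                        # disagreement between ensemble members
    needs_classical = spread > threshold              # route these to the rigorous method
    return mean, spread, needs_classical

if __name__ == "__main__":
    # Hypothetical fractal-dimension predictions from three models on four basin maps.
    preds = [[1.31, 1.80, 1.52, 1.10],
             [1.30, 1.62, 1.50, 1.11],
             [1.32, 1.95, 1.51, 1.09]]
    mean, spread, flag = triage(preds, threshold=0.05)
    print("rerun classically for maps:", np.flatnonzero(flag))
```

The threshold sets the trade-off: a lower value sends more maps to the classical method and costs more compute, while a higher value relies more heavily on the surrogate.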

Long-Term Applications

These applications require further research, scaling to high-dimensional systems, robust uncertainty quantification, or integration with domain constraints and certification.

- Real-time safety-function controllers for high-dimensional infrastructure (Energy)

  - Confine grid states within safe sets during disturbances to reduce frequency/voltage collapses and avoid undesirable attractors.

  - Tools/workflows: Transformer/RL controllers with dimensionality reduction (autoencoders, Koopman coordinates); SCADA/PLC integration; physics-guided regularization.

  - Assumptions/dependencies: Scalable training with representative trajectories; trustworthy uncertainty bounds; cyber-physical security; regulatory acceptance.

- Early-warning and intervention planning for tipping points (Policy, Climate)

  - Map basin stability and unpredictability around critical transitions to guide mitigation policies and stress tests for climate subsystems.

  - Tools/workflows: ML-accelerated parameter sweep engines for Earth-system components; hybrid validation with classical methods; visualization for decision-makers.

  - Assumptions/dependencies: Validated models of subsystems; data accessibility; interpretable uncertainty; caution to avoid overreliance on surrogate outputs.

- Neuromodulation for seizure and arrhythmia control (Healthcare)

  - Use safety functions to design bounded stimulation policies that prevent escape to pathological attractors in neural/cardiac dynamics.

  - Tools/workflows: Closed-loop implantables with transformer inference; safety-set-aware pulse scheduling; clinical trial frameworks.

  - Assumptions/dependencies: Accurate patient-specific models or data-driven embeddings; stringent safety constraints; regulatory approvals; robust real-time sensing.

- Adaptive controllers combining transformers and reinforcement learning (Robotics, Industrial automation)

  - Self-tuning policies that maintain confinement under noise, wear, or parameter drift, reducing intervention costs while preserving safety.

  - Tools/workflows: Multi-agent RL with safety-function shaping; domain adaptation across machines; uncertainty-aware exploration policies.

  - Assumptions/dependencies: Safe exploration protocols; scalable simulation-to-real transfer; interpretability for certification.

- Robust navigation in turbulent/chaotic environments (Aerospace, Autonomous systems)

  - Plan trajectories that remain within safe sets in strongly perturbed flows (e.g., UAVs in urban canyons, spacecraft attitude in complex regimes).

  - Tools/workflows: Onboard embeddings of flow dynamics; safety-set-informed guidance; PINN-constrained surrogates for physical consistency.

  - Assumptions/dependencies: Reliable state estimation; compute budgets on edge hardware; validated models across operating envelopes.

- Automated detection of riddled basins and KAM structures (Academia, Software)

  - Train classifiers to flag fine-scale phase-space features, accelerating exploratory analysis and hypothesis testing.

  - Tools/workflows: Labeling campaigns and benchmark datasets; multi-scale CNNs/transformers; saliency tools linking predictions to geometry.

  - Assumptions/dependencies: High-quality labeled data across systems; generalization across parameter ranges; explainability to avoid spurious detections.

- Sector-wide benchmarking and certification pipelines (Cross-sector, Policy)

  - Standardized datasets and hybrid validation protocols that establish confidence in ML-based chaos analysis/control before deployment.

  - Tools/workflows: Open repositories of basins, trajectories, safety functions; reference implementations; certification checklists for uncertainty and robustness.

  - Assumptions/dependencies: Community coordination; funding for dataset curation; governance for updates and provenance.

- Physics-informed and constrained ML controllers (Energy, Aerospace, Healthcare)

  - Embed invariants, symmetries, or conservation laws into learning to ensure consistent and safe behaviors in safety-critical domains.

  - Tools/workflows: PINNs, differentiable optimization layers (OptNet), physics-guided losses; formal verification interfaces.

  - Assumptions/dependencies: Availability of hard/soft constraints; scalable training; compatibility with verification toolchains.

- Risk analysis for regime-switching systems (Finance, Operations research)

  - Use basin metrics as qualitative markers of unpredictability to inform stress testing and contingency planning for systems with multiple attractors.

  - Tools/workflows: ML-assisted scans over policy/parameter choices; dashboards flagging high-entropy regions; hybrid audits.

  - Assumptions/dependencies: Meaningful mapping from outcomes to attractors; careful interpretation to avoid overfitting complex market dynamics; governance controls.

- Fleet-scale deployment with cybersecurity and safety guarantees (Industry)

  - Roll out safe-set controllers and unpredictability monitors across devices (e.g., inverters, robots), with secure updates and fail-safes.

  - Tools/workflows: Edge inference pipelines; secure model management; fallback classical routines; continuous monitoring and incident response.

  - Assumptions/dependencies: Robust telemetry; compliance frameworks; resilience to adversarial inputs; lifecycle maintenance plans.
