- The paper introduces a novel cross-subject domain adaptation framework that integrates Filter-Bank Euclidean Alignment (FBEA) with a dual-stage self-training paradigm (CSST) for robust SSVEP classification.

- The paper reports higher classification accuracy and information transfer rate (ITR) than five established baselines on the Benchmark and BETA datasets, with the clearest gains at short signal lengths.

- The paper incorporates Time-Frequency Augmented Contrastive Learning (TFA-CL) to improve feature discrimination and mitigate label noise, supporting practical deployment in BCI applications.

Rethinking Self-Training Based Cross-Subject Domain Adaptation for SSVEP Classification

Introduction

This work presents a comprehensive framework for cross-subject domain adaptation in steady-state visually evoked potential (SSVEP)-based brain–computer interface (BCI) classification. SSVEP-based BCIs are widely used because of their robustness and high signal-to-noise ratio, but cross-subject variability and the cost of user-specific annotation make it hard to build decoding models that generalize. Traditional domain generalization and adaptation strategies address part of this variability, yet they make limited use of unlabeled target data. Recent advances in self-training and domain-adversarial learning open the way to more effective cross-subject adaptation.

Methodological Innovations

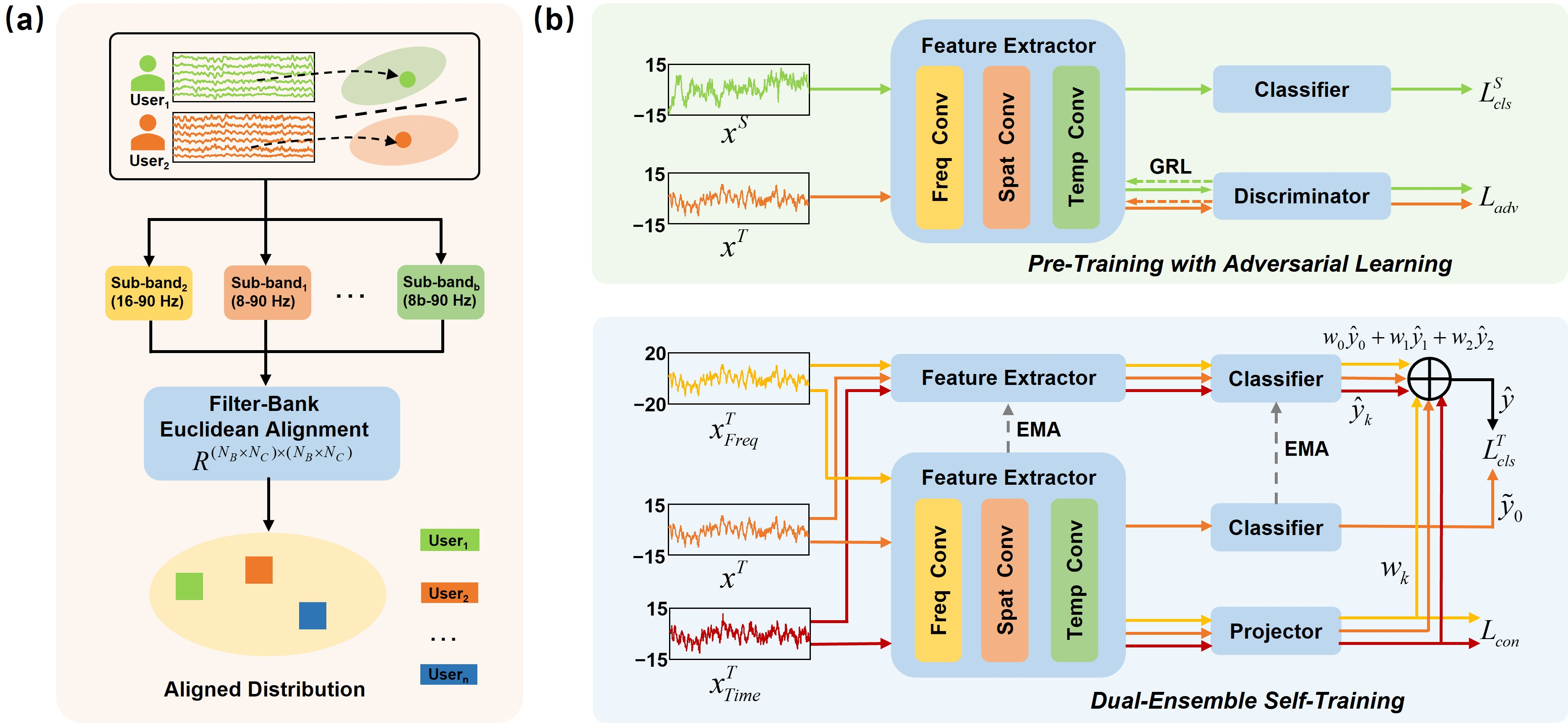

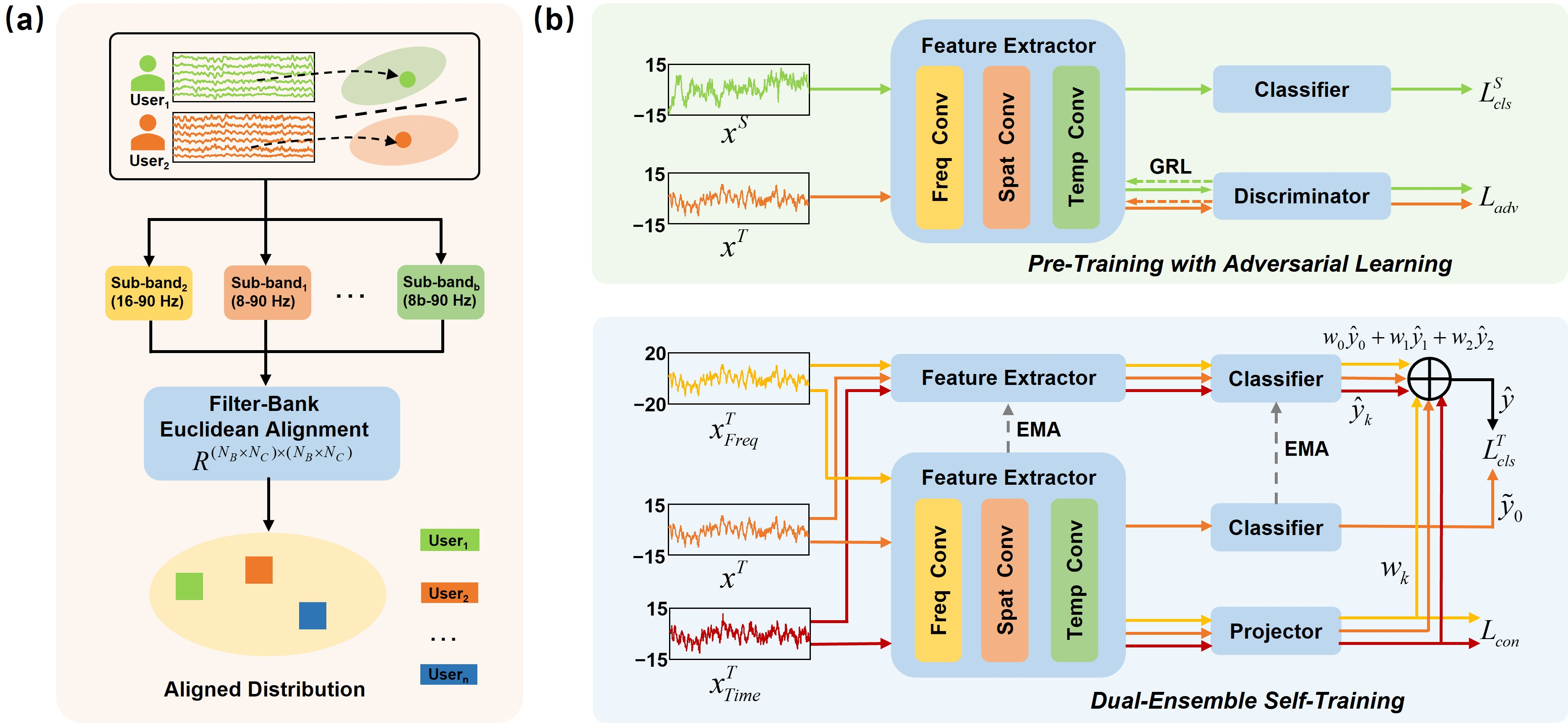

The proposed framework synthesizes three key algorithmic contributions: Filter-Bank Euclidean Alignment (FBEA), a Cross-Subject Self-Training (CSST) paradigm comprising Pre-Training with Adversarial Learning (PTAL) and Dual-Ensemble Self-Training (DEST), and a Time-Frequency Augmented Contrastive Learning (TFA-CL) module.

FBEA directly addresses the domain shift caused by variation in signal magnitude and harmonic structure across subjects. By extending traditional Euclidean alignment to operate on multi-band filter bank representations, FBEA leverages the full frequency spectrum intrinsic to SSVEPs for precise whitening across both spatial and spectral dimensions, thereby aligning source and target distributions more effectively.
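The alignment step described above can be sketched as follows. This is a minimal illustration, not the paper's implementation: it assumes that FBEA amounts to applying standard Euclidean alignment (whitening by the inverse square root of the mean trial covariance) independently within each sub-band. The function names, the FFT-mask band-pass filter, and the band edges in the usage note are all hypothetical choices made for the example.

```python
import numpy as np

def bandpass_fft(x, fs, low, high):
    """Zero out FFT bins outside [low, high] Hz along the last (time) axis."""
    freqs = np.fft.rfftfreq(x.shape[-1], d=1.0 / fs)
    spec = np.fft.rfft(x, axis=-1)
    spec[..., (freqs < low) | (freqs > high)] = 0.0
    return np.fft.irfft(spec, n=x.shape[-1], axis=-1)

def euclidean_align(trials, eps=1e-10):
    """Whiten trials of shape (n_trials, n_channels, n_samples).

    The reference matrix is the mean spatial covariance over trials; every
    trial is multiplied by its inverse square root.
    """
    covs = np.einsum("tcs,tds->tcd", trials, trials) / trials.shape[-1]
    ref = covs.mean(axis=0)
    vals, vecs = np.linalg.eigh(ref)
    # V diag(1/sqrt(lambda)) V^T is the inverse matrix square root of ref
    inv_sqrt = (vecs / np.sqrt(np.maximum(vals, eps))) @ vecs.T
    return np.einsum("cd,tds->tcs", inv_sqrt, trials)

def filter_bank_ea(trials, fs, bands):
    """Assumed FBEA sketch: align each sub-band separately.

    Returns an array of shape (n_trials, n_bands, n_channels, n_samples).
    """
    aligned = [euclidean_align(bandpass_fft(trials, fs, lo, hi)) for lo, hi in bands]
    return np.stack(aligned, axis=1)
```

Calling `filter_bank_ea(trials, fs=250, bands=[(8, 90), (16, 90), (24, 90)])` on one subject's trials would give that subject an identity mean covariance in every sub-band. Applying the same procedure to each source and target subject is what brings their second-order statistics into agreement, and it needs no labels.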

CSST comprises two specialized self-training stages. In PTAL, adversarial learning based on the gradient reversal layer aligns feature distributions via domain confusion, improving target generalization prior to pseudo-labeling. DEST further refines pseudo-labels using a mean-teacher strategy: a temporally-ensembled teacher generates multi-view pseudo-labels (via data augmentations), which are fused based on cosine similarity of feature embeddings, enhancing pseudo-label reliability and reducing error propagation.
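The DEST stage can be illustrated with a short sketch covering the teacher update and the pseudo-label fusion only. The exponential-moving-average update is the standard mean-teacher rule. The fusion rule is an assumption made for illustration: the summary states only that views are fused based on cosine similarity of feature embeddings, so the consensus weighting below, along with the names `ema_update`, `fuse_pseudo_labels`, and the `temperature` parameter, is hypothetical.

```python
import numpy as np

def ema_update(teacher, student, decay=0.99):
    """Mean-teacher update: teacher <- decay * teacher + (1 - decay) * student."""
    return {k: decay * teacher[k] + (1.0 - decay) * student[k] for k in teacher}

def fuse_pseudo_labels(probs, feats, temperature=0.1):
    """Fuse teacher predictions from several augmented views.

    probs: (n_views, n_samples, n_classes) softmax outputs of the teacher
    feats: (n_views, n_samples, dim) feature embeddings of the same views
    Assumed rule: a view is weighted by its mean cosine similarity to the
    other views, so views that disagree with the consensus count less.
    """
    unit = feats / np.linalg.norm(feats, axis=-1, keepdims=True)
    sim = np.einsum("vnd,wnd->nvw", unit, unit)  # (n_samples, n_views, n_views)
    n_views = feats.shape[0]
    # subtract the self-similarity (always 1) before averaging
    agree = (sim.sum(axis=-1) - 1.0) / max(n_views - 1, 1)
    weights = np.exp(agree / temperature)
    weights /= weights.sum(axis=-1, keepdims=True)  # (n_samples, n_views)
    fused = np.einsum("nv,vnc->nc", weights, probs)
    return fused.argmax(axis=-1), fused.max(axis=-1)
```

The second return value is the fused confidence, which could be thresholded to discard uncertain pseudo-labels before they reach the student. That filtering step is also an assumption rather than something the summary specifies.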

TFA-CL mitigates the continuing risk of noisy labels by imposing a supervised contrastive loss over temporally and spectrally augmented views, further promoting discriminative, clusterable representations even when pseudo-labels are imperfect.
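A supervised contrastive loss of the kind referred to above can be sketched in a few lines. This follows the widely used SupCon formulation, in which every other sample sharing the anchor's label is a positive. Whether TFA-CL uses exactly this variant, and the `temperature` value, are assumptions. The function name is hypothetical.

```python
import numpy as np

def supcon_loss(z, labels, temperature=0.1):
    """Supervised contrastive loss.

    z: (n, dim) embeddings of all augmented views stacked together
    labels: (n,) integer (pseudo-)labels, repeated for each view of a trial
    """
    z = z / np.linalg.norm(z, axis=1, keepdims=True)
    logits = z @ z.T / temperature
    n = z.shape[0]
    not_self = ~np.eye(n, dtype=bool)
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    exp = np.exp(logits) * not_self                      # exclude the anchor itself
    log_prob = logits - np.log(exp.sum(axis=1, keepdims=True))
    pos = (labels[:, None] == labels[None, :]) & not_self
    n_pos = pos.sum(axis=1)
    valid = n_pos > 0                                    # anchors with >= 1 positive
    if not valid.any():
        return 0.0
    mean_log_prob_pos = (log_prob * pos).sum(axis=1)[valid] / n_pos[valid]
    return float(-mean_log_prob_pos.mean())
```

In a TFA-CL-style setup, `z` would hold the embeddings of the temporally and spectrally augmented views of each trial, with the trial's pseudo-label repeated for every view. The loss then pulls same-class views together regardless of which augmentation produced them.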

Figure 1: An overview of the cross-subject domain adaptation approach: (a) shows Filter-Bank Euclidean Alignment (FBEA) for frequency-aware alignment; (b) illustrates the CSST framework with Pre-Training with Adversarial Learning (PTAL) and Dual-Ensemble Self-Training (DEST), with Time-Frequency Augmented Contrastive Learning (TFA-CL) applied in the second stage.

Experimental Evaluation

The approach was tested on two large-scale, publicly available datasets, Benchmark (35 subjects) and BETA (70 subjects), both of which represent real-world SSVEP-based BCI spelling tasks with a variety of stimulation frequencies and typical cross-subject variability. Evaluation followed a strict leave-one-subject-out (LOSO) protocol, in which each subject in turn is held out as the target, so that every score reflects generalization to an unseen user. The core performance metrics were classification accuracy and information transfer rate (ITR), a BCI-relevant measure that combines decoding accuracy and speed.
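For reference, ITR is conventionally computed with the Wolpaw formula, sketched below. The summary does not state the number of targets or the selection time used, so the values in the usage note (40 targets and a 0.5 s gaze-shift interval added to the signal length) are assumptions based on common practice for these datasets. The function name is hypothetical.

```python
import math

def itr_bits_per_min(accuracy, n_targets, selection_time_s):
    """Wolpaw ITR in bits/min for one selection lasting selection_time_s seconds."""
    p, n = accuracy, n_targets
    if p <= 1.0 / n:
        return 0.0  # convention: accuracy at or below chance carries no information
    bits = math.log2(n)
    if p < 1.0:
        bits += p * math.log2(p) + (1.0 - p) * math.log2((1.0 - p) / (n - 1))
    return bits * 60.0 / selection_time_s
```

Under those assumptions, a 1 s signal length would be evaluated as `itr_bits_per_min(acc, n_targets=40, selection_time_s=1.5)`. ITR is usually computed per subject and then averaged, so plugging a mean accuracy into this function will generally not reproduce a reported mean ITR exactly.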

The proposed method was compared to established baselines, including:

- tt-CCA (template-based generalization)

- Ensemble-DNN (ensemble transfer learning)

- OACCA (online adaptive CCA)

- SUTL (cross-subject transfer learning)

- SFDA (source-free domain adaptation)

Results

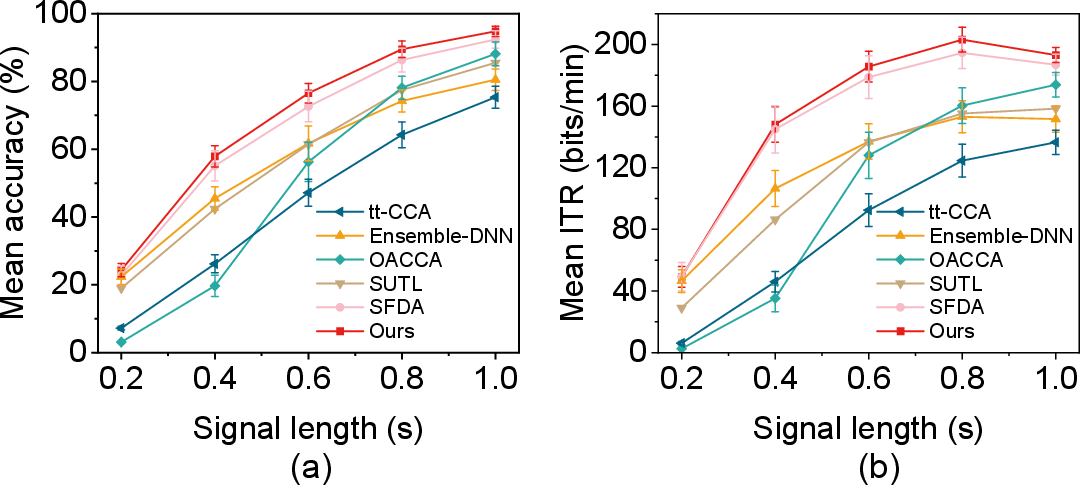

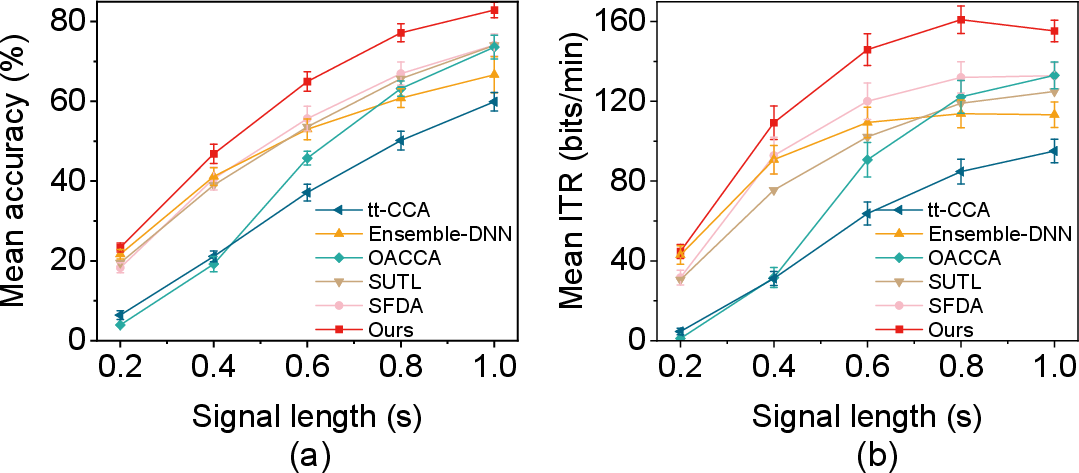

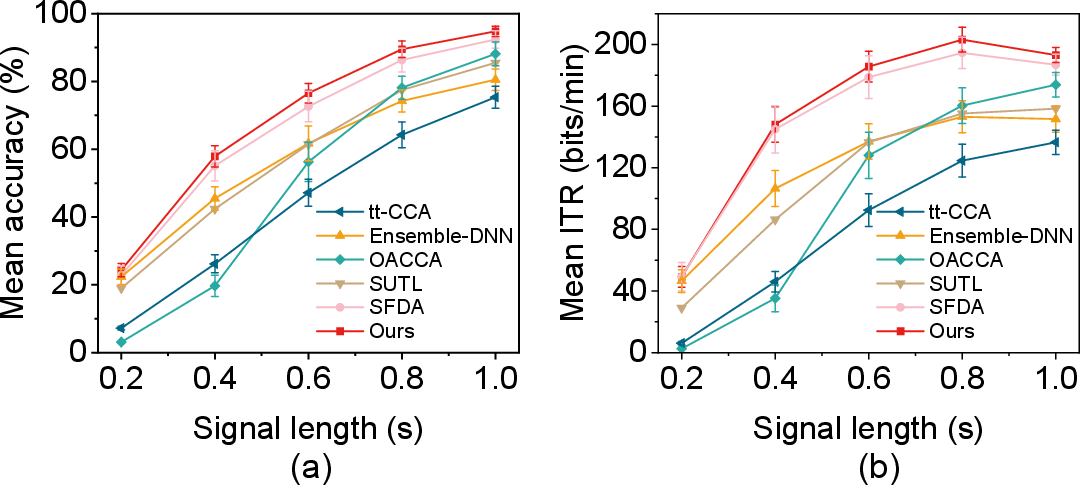

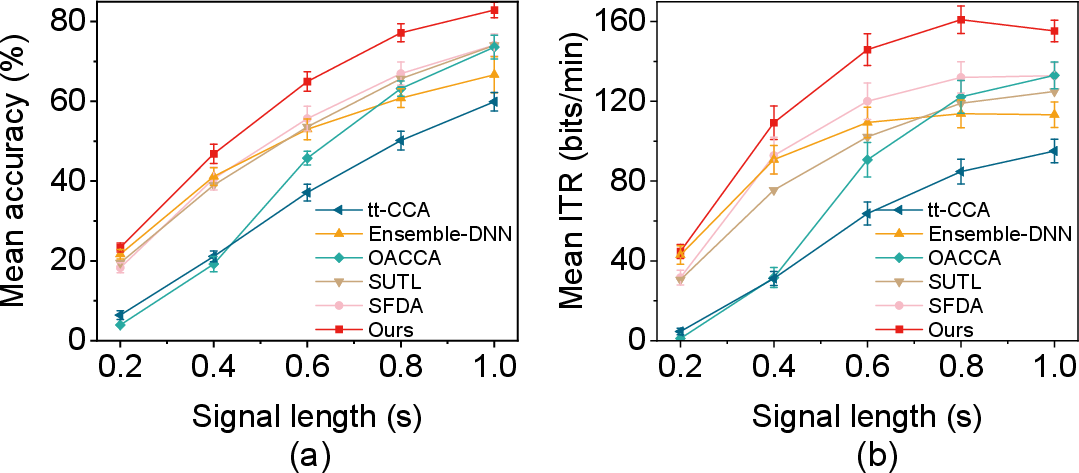

Across all metrics and datasets, the proposed approach consistently outperformed existing techniques, particularly for short signal lengths (which are most relevant for high-speed BCI applications). On the Benchmark dataset, the method achieved an ITR of 203.1±8.03 bits/min at a signal length of 0.8 s, significantly higher than the next-best method (SFDA: 194.54±10.07 bits/min, p<0.01). On BETA, the peak ITR was 160.93±6.93 bits/min (at 0.8 s), again substantially exceeding SFDA (131.99±7.86 bits/min, p<0.001).

Figure 2: Classification accuracy and ITR comparison of six approaches on the Benchmark dataset; proposed method leads in all settings.

Figure 3: Classification accuracy and ITR comparison on BETA dataset; proposed method maintains a superior margin, especially for rapid decoding intervals.

Ablation analysis established the additive contribution of each module: FBEA improved the self-training baseline by 0.95% in accuracy, PTAL (adversarial learning) raised performance by a further 7.22%, and DEST added another 1.07% through robust pseudo-label fusion. TFA-CL delivered the final improvement, bringing the full model to 94.80% accuracy and an ITR of 193.12 bits/min at a signal length of 1 s. Notably, channel/trial normalization and channel-based Euclidean alignment offered smaller gains than FBEA, underscoring the critical role of frequency-aware alignment in cross-subject adaptation for SSVEP.

Implications and Future Outlook

The integration of multi-band frequency domain alignment with robust, dual-ensemble self-training and contrastive learning marks a substantial advancement for domain adaptation in BCIs. By addressing harmonics and subject-specific noise head-on, and validating via robust large-cohort experiments, the approach presents a scalable pathway for practical SSVEP-BCI deployment without per-user calibration.

Theoretically, the results support the value of combining adversarial learning with mean-teacher pseudo-labeling and contrastive embedding for challenging non-stationary biomedical data. Practically, this framework could be extended to other EEG paradigms and related biosignal transfer learning domains. Future work may focus on further reducing training time, online adaptation in real-time settings, and integration with evolving deep sequential models for temporal EEG decoding.

Conclusion

This paper introduces an effective cross-subject domain adaptation pipeline for SSVEP-based BCI classification, combining FBEA for harmonics-aligned pre-processing, a two-stage CSST paradigm for robust adaptation, and TFA-CL for resilient representations under label noise. Empirical results demonstrate substantial improvements over established methods in both accuracy and ITR, affirming the framework’s superiority for high-throughput, cross-subject SSVEP-BCI scenarios. The modular design provides a foundation for future research aimed at subject-agnostic neural decoding and real-world BCI deployment.

Reference: "Rethinking Self-Training Based Cross-Subject Domain Adaptation for SSVEP Classification" (2601.21203)