Anticipation Before Action: EEG-Based Implicit Intent Detection for Adaptive Gaze Interaction in Mixed Reality

Abstract: Mixed Reality (MR) interfaces increasingly rely on gaze for interaction, yet distinguishing visual attention from intentional action remains difficult, leading to the Midas Touch problem. Existing solutions require explicit confirmations, while brain-computer interfaces may provide an implicit marker of intention using Stimulus-Preceding Negativity (SPN). We investigated how Intention (Select vs. Observe) and Feedback (With vs. Without) modulate SPN during gaze-based MR interactions. During realistic selection tasks, we acquired EEG and eye-tracking data from 28 participants. SPN was robustly elicited and sensitive to both factors: observation without feedback produced the strongest amplitudes, while intention to select and expectation of feedback reduced activity, suggesting SPN reflects anticipatory uncertainty rather than motor preparation. Complementary decoding with deep learning models achieved reliable person-dependent classification of user intention, with accuracies ranging from 75% to 97% across participants. These findings identify SPN as an implicit marker for building intention-aware MR interfaces that mitigate the Midas Touch.

Explain it Like I'm 14

Anticipation Before Action: What This Paper Is About

A quick overview

This paper looks at how to tell whether someone using a mixed reality headset wants to interact with something they’re looking at—or is just looking. The goal is to fix a common problem called the “Midas Touch,” where the system accidentally treats every glance as a command. The researchers used brain signals (EEG) to detect a kind of “anticipation signal” in the brain, hoping to understand a user’s intention before the system acts.

The big questions the researchers asked

- Can we spot a reliable brain signal that shows what a user intends to do while they use gaze (eye) control in mixed reality?

- Does this brain signal change depending on whether the person plans to select something or just observe it?

- Does getting visual feedback (like a quick pop-up) change that signal?

- Can a computer learn to tell “select” vs. “observe” just from brain data?

How they tested it (explained simply)

Think of mixed reality (MR) as wearing special glasses that place apps and controls into the real world around you. Now imagine you control those apps by looking at them.

- 28 people wore an MR headset and an EEG cap (which measures tiny electrical changes in the brain through the scalp; it is safe and non-invasive).

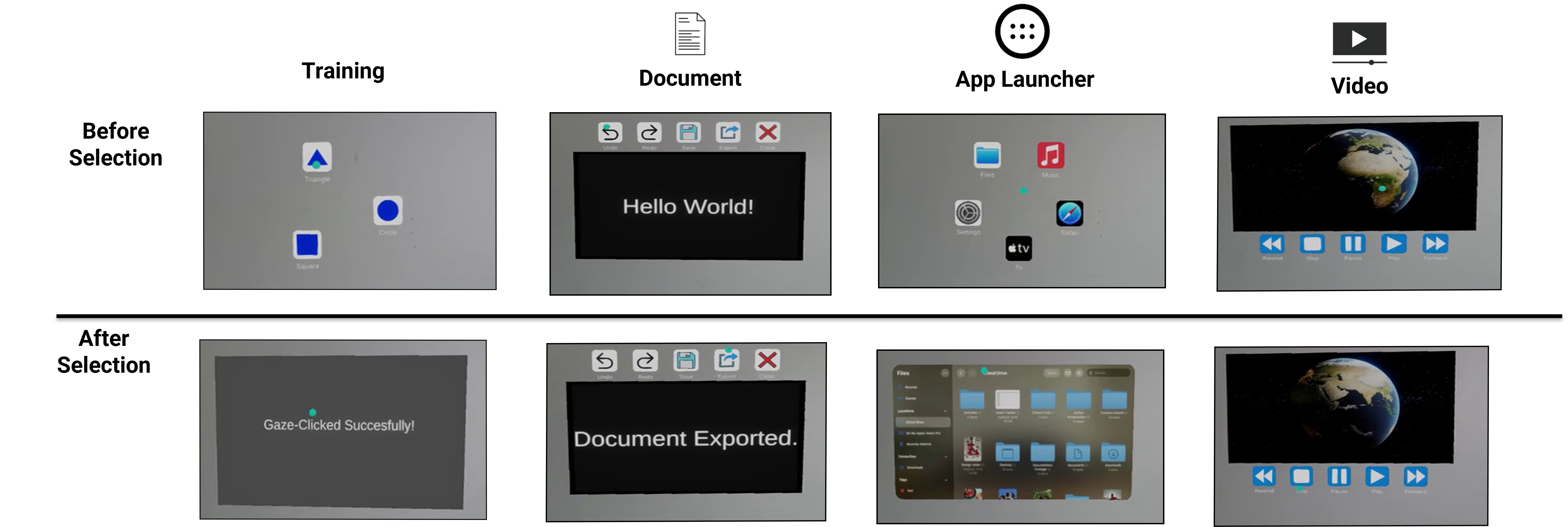

- They did tasks in three everyday scenes: picking an app from a launcher, using buttons in a document editor, and controlling a video player.

- Each trial asked them to:

  - either Select (look at an icon to make something happen) or Observe (look at it but do nothing),

  - with Feedback (a short confirmation pop-up) or No Feedback.

- Everyone did all four kinds of trials:

  - Select + Feedback

  - Select + No Feedback

  - Observe + Feedback

  - Observe + No Feedback

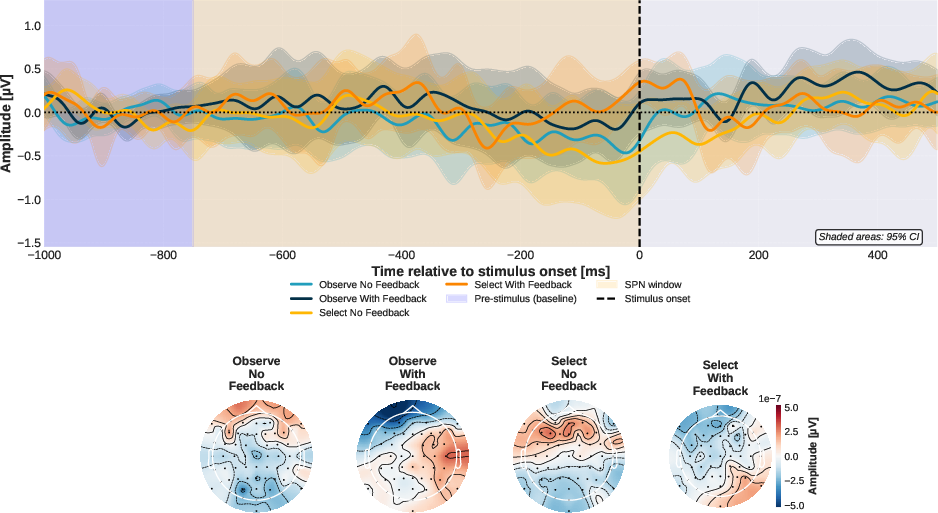

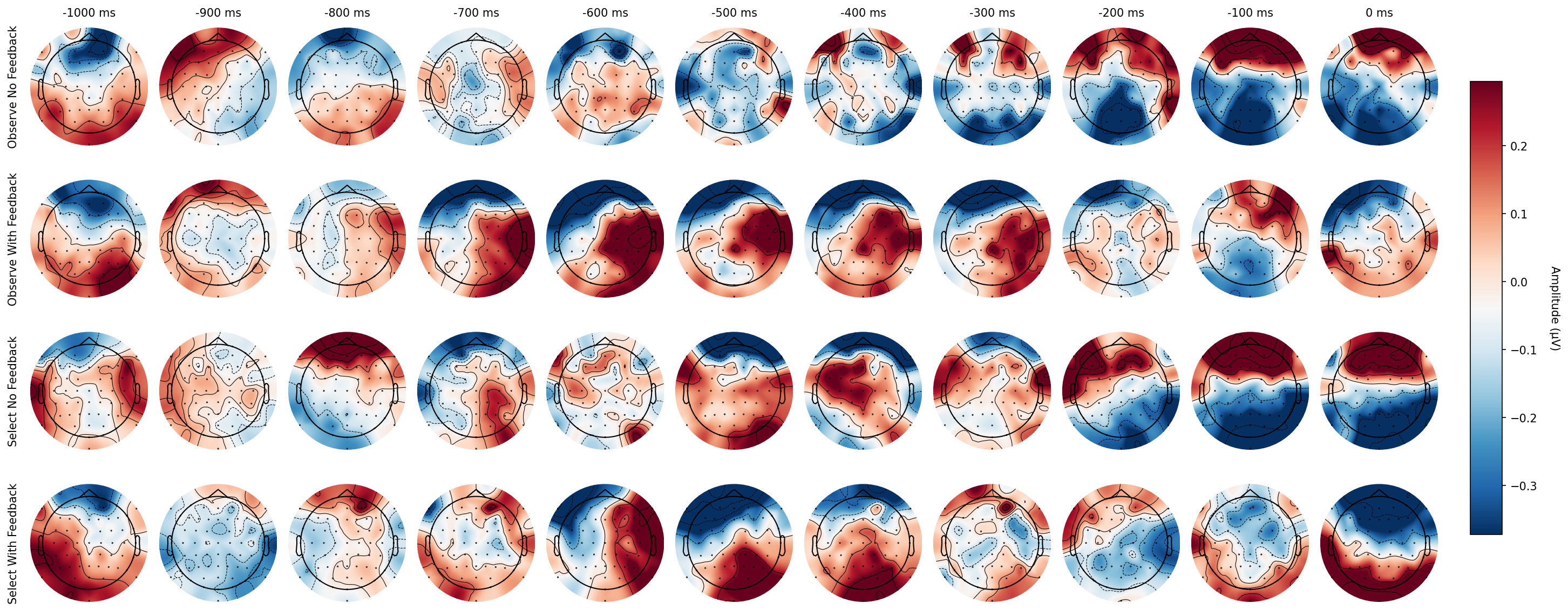

- While they looked at icons, the EEG recorded a pattern called SPN (Stimulus-Preceding Negativity). You can think of SPN as the brain’s “getting ready” signal that builds up before something expected happens—like the feeling right before a surprise, test result, or outcome. The researchers checked how strong this signal was just before the system would respond.

- They also trained machine learning models (a kind of pattern-recognizing AI) on each person’s EEG data to see if the computer could tell when the person intended to select vs. just observe.
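To make "checking how strong the signal was" more concrete, here is a minimal sketch of one common way to measure an anticipation signal like SPN: average many trials together, then take the mean voltage in a short window just before the system responds. The function names, the toy numbers, and the choice of a mean-amplitude measure are illustrative assumptions; the paper's own measurement may differ. The −750 to 0 ms window is borrowed from the applications section further down this page.

```python
from statistics import fmean


def average_trials(trials):
    """Average equal-length single-trial waveforms sample by sample."""
    if not trials:
        raise ValueError("need at least one trial")
    n_samples = len(trials[0])
    if any(len(trial) != n_samples for trial in trials):
        raise ValueError("all trials must have the same number of samples")
    return [fmean([trial[i] for trial in trials]) for i in range(n_samples)]


def window_mean(waveform, times_ms, start_ms, end_ms):
    """Mean amplitude of the samples whose time lies in [start_ms, end_ms]."""
    if len(waveform) != len(times_ms):
        raise ValueError("waveform and times_ms must have the same length")
    selected = [v for v, t in zip(waveform, times_ms) if start_ms <= t <= end_ms]
    if not selected:
        raise ValueError("window contains no samples")
    return fmean(selected)


# Toy data (made up for illustration): two trials in microvolts, one sample
# every 250 ms, with time 0 marking the moment the system would respond.
times_ms = [-1000, -750, -500, -250, 0]
trials_uv = [
    [0.0, -1.0, -2.0, -3.0, -4.0],
    [0.0, -3.0, -4.0, -5.0, -6.0],
]

erp_uv = average_trials(trials_uv)               # averaged waveform
spn_uv = window_mean(erp_uv, times_ms, -750, 0)  # mean amplitude before the event
print(erp_uv)
print(spn_uv)
```

With these toy numbers the averaged waveform drifts downward and the window mean is −3.5 µV. A more negative value means a stronger anticipation signal, which is how the conditions can be compared.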

What they found (and why it matters)

Here are the main results:

- The brain’s “anticipation” signal (SPN) showed up clearly in all conditions. That means this signal is reliable during realistic MR tasks—not just in simple lab setups.

- The SPN was strongest when people were only observing and there was no feedback. In other words, when users didn’t plan to act and weren’t expecting a confirmation, their brain showed more “anticipatory uncertainty.”

- When people intended to select—or when they expected feedback—the SPN got smaller. That suggests the brain felt more certain about what would happen next.

- This pattern means SPN is more about uncertainty and anticipation of what’s coming than about getting the muscles ready to move.

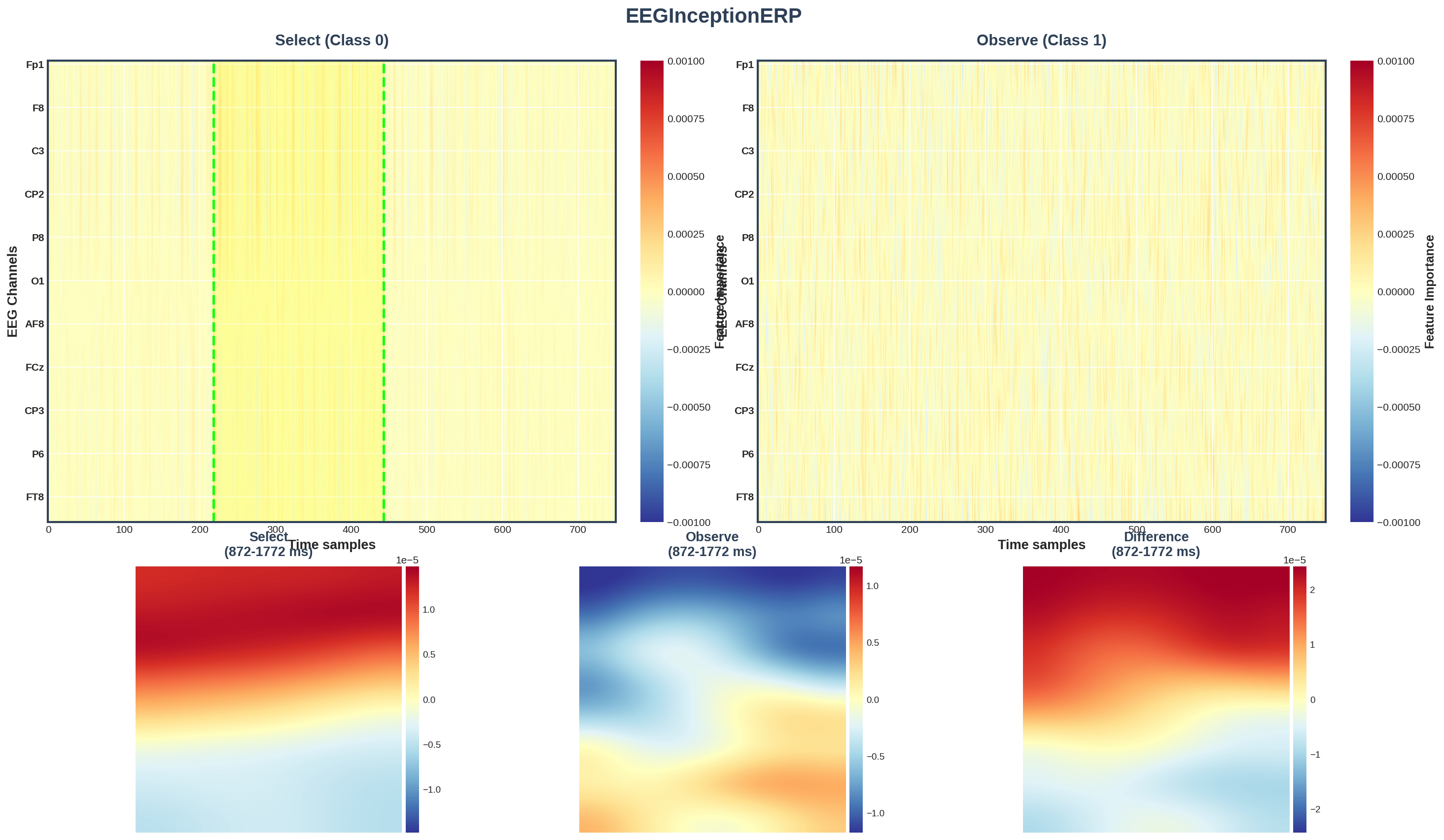

- The AI models could tell “select” vs. “observe” from the EEG quite well for each person, with accuracies between about 75% and 97%. That’s promising for future systems that adapt to you in real time (though it works best with personal calibration).

Why this is important:

- If a system can sense whether you’re just looking or actually intending to act, it can avoid accidental clicks—the Midas Touch problem. That means smoother, more trustworthy eye-controlled interfaces.

What this could lead to

- Smarter gaze controls: MR interfaces could wait until your brain shows an “I’m about to act” pattern before they confirm a selection, reducing misclicks.

- Fewer extra gestures: Instead of always needing a pinch, blink, or voice command to confirm a choice, the system could use your brain’s anticipation signal as an implicit confirmation.

- Personalized systems: Because the AI worked best per person, future MR devices might include a short setup session to learn your brain patterns.

- Safer, more accessible interactions: Hands-free, intention-aware controls could help in situations where speaking or using hands isn’t convenient, and could improve accessibility for users with motor limitations.

In short

The study shows that a specific brain signal (SPN) can help tell when you mean to act versus when you’re just looking in mixed reality. This could make gaze-based controls more reliable and natural—helping computers understand you before you even click.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a focused list of what remains missing, uncertain, or unexplored in the paper, framed as concrete, actionable items for future research.

- Real-world, continuous use without explicit trial instructions: The study induces intention via on-screen instructions per trial; it remains unknown whether SPN-based intent can be reliably detected in continuous, self-paced MR use without discrete task cues or dwell-time constraints.

- Cross-user generalization: Decoding results are person-dependent; evaluate cross-participant and few-shot/generalization performance to quantify how much personalization is needed for practical deployment.

- Cross-session and longitudinal stability: Assess whether SPN modulations and trained models remain stable across days/sessions, fatigue, and headset re-positioning, and quantify recalibration costs.

- Practicality with low-density or wearable EEG: Determine minimal channel sets, sensor types (dry, textile, in-HMD electrodes), and placements that preserve intent decoding accuracy in mobile MR.

- Movement robustness: Test performance during natural head/body movement and walking to evaluate resilience to motion artifacts typical in real-world MR.

- Real-time pipeline and latency: Quantify end-to-end detection latency (EEG acquisition, preprocessing, classification) and whether it meets MR interaction timing constraints without harming usability.

- Uncertainty manipulation beyond feedback: Directly manipulate outcome probabilities (e.g., 0–50–100%) and collect subjective uncertainty ratings to validate the “anticipatory uncertainty” account of SPN in MR.

- Feedback modality and timing effects: Systematically compare visual vs auditory vs haptic feedback, vary timing (pre- vs post-action), and duration to map their specific impact on SPN and intent decoding.

- Trial structure alignment confounds: Clarify and standardize the event anchoring used for ERP segmentation across conditions (e.g., “UI display,” “feedback onset,” “ISI onset”) to avoid condition-specific timing confounds in SPN estimation.

- ERP quantification choices: Compare negative peak vs mean-amplitude measures and apply mass-univariate/cluster-permutation analyses to ensure the SPN effect is robust to analysis choices.

- Component dissociation: Disentangle SPN from CNV, lambda responses, and other slow cortical potentials via topographical, source, and time–frequency analyses to confirm the specific neural generator(s) underlying observed effects.

- Icon semantics and visual properties: Test whether icon meaning, complexity, color schemes, and visual salience systematically influence SPN amplitude and classifier performance (beyond the fixed design used).

- Dwell-time sensitivity: Vary dwell thresholds (e.g., 250–1000 ms) to model how selection timing constraints affect SPN and decoding, and derive optimal parameters for intention-aware dwell policies.

- Eye-tracking drift and accuracy: Quantify how gaze calibration drift, fixation precision, and tracking robustness impact SPN measurement and classification, and design drift-aware adaptive pipelines.

- Model evaluation metrics and baselines: Report AUC/F1/precision–recall and confusion matrices, and compare deep learning to strong classical baselines (e.g., Riemannian/LDA, shallow ConvNets), including multimodal fusion baselines.

- Multimodal fusion with ocular/physiological signals: Integrate eye-tracking features (fixation dynamics, pupil size), EDA, and heart rate to test whether multimodal models improve cross-user generalization and robustness.

- Individual differences and state effects: Examine how traits (e.g., anxiety) and transient states (fatigue, workload, affect) modulate SPN and decoding accuracy, and develop normalization/adaptation strategies.

- Effect of repeated exposure and learning: Model habituation or learning over the 75-minute session, and test whether SPN attenuates with repeated stimulus–outcome contingencies.

- Scenario generalizability: Explicitly report and analyze SPN and decoding across the three MR scenarios; determine whether effects are scenario-specific and identify boundary conditions for transfer.

- Ethical, privacy, and user acceptance: Investigate user perceptions, consent, and trust regarding implicit brain-signal-driven interactions, and define guidelines for transparent, controllable SPN-based intent gating.

- Midas Touch mitigation in practice: Build and evaluate a working adaptive MR prototype that uses SPN gating to reduce false selections, measuring real-world error rates, task performance, and user experience.

- False positives/negatives and safety: Quantify operating points for classifiers (ROC/DET), assess failure modes, and design safeguards for high-consequence interactions.

- Data and code transparency: Provide datasets, preprocessing pipelines, and model code for reproducibility and to enable cross-lab benchmarking on intent detection in MR.
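The evaluation-metrics and false-positive items above can be illustrated with a small sketch that derives a confusion matrix, precision, recall, F1, and false-positive rate from Select/Observe predictions. The labels, function names, and example predictions are hypothetical and are not taken from the paper. Treating "select" as the positive class means the false-positive rate corresponds to unintended selections, which is the Midas Touch case.

```python
def confusion_counts(y_true, y_pred, positive="select"):
    """Count true/false positives and negatives for a binary intent label."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for truth, guess in zip(y_true, y_pred):
        if guess == positive:
            counts["tp" if truth == positive else "fp"] += 1
        else:
            counts["fn" if truth == positive else "tn"] += 1
    return counts


def _ratio(numerator, denominator):
    """Safe division that returns 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def binary_metrics(y_true, y_pred, positive="select"):
    """Summary metrics derived from the confusion counts."""
    c = confusion_counts(y_true, y_pred, positive)
    precision = _ratio(c["tp"], c["tp"] + c["fp"])
    recall = _ratio(c["tp"], c["tp"] + c["fn"])
    return {
        "accuracy": _ratio(c["tp"] + c["tn"], sum(c.values())),
        "precision": precision,
        "recall": recall,
        "f1": _ratio(2 * precision * recall, precision + recall),
        # Share of "observe" trials wrongly treated as selections.
        "false_positive_rate": _ratio(c["fp"], c["fp"] + c["tn"]),
    }


# Hypothetical predictions for eight trials of one participant.
y_true = ["select"] * 4 + ["observe"] * 4
y_pred = ["select", "select", "select", "observe",
          "observe", "observe", "observe", "select"]

print(confusion_counts(y_true, y_pred))
print(binary_metrics(y_true, y_pred))
```

Reporting the false-positive rate next to accuracy matters here because a classifier with high overall accuracy can still trigger too many accidental selections for high-consequence actions.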

Practical Applications

Immediate Applications

The paper demonstrates that SPN (Stimulus‑Preceding Negativity) reliably indexes anticipatory uncertainty in gaze-based MR tasks and that person-dependent deep learning can classify intention (Select vs. Observe) with high accuracy. The following are deployable or pilot-ready uses that build on these findings.

- Adaptive anti–Midas Touch gating in MR interfaces

- What: Use online SPN amplitude to suppress accidental gaze selections when anticipatory uncertainty is high (e.g., Observe without Feedback), and allow rapid selection when uncertainty is low.

- Sectors: Software (XR/AR/MR), Enterprise productivity, Gaming.

- Tools/workflows: MR plugin that streams EEG via LSL, computes windowed SPN over occipital–parietal sensors, and gates dwell-triggered actions; person-dependent calibration using models like EEGInceptionERP.

- Assumptions/dependencies: Requires EEG sensor integration with HMD, low-latency preprocessing (1–15 Hz band), person-specific calibration, and robust artifact handling during head movement.

- Dynamic dwell-time adjustment driven by SPN

- What: Increase dwell thresholds when SPN indicates high uncertainty; decrease when SPN indicates firm intent, reducing false positives without extra input modalities.

- Sectors: Software (XR UX), Assistive technology.

- Tools/workflows: Dwell controller that samples SPN in the −750–0 ms pre-action window and adjusts thresholds per target.

- Assumptions/dependencies: Must balance responsiveness with stability; requires synchronized eye tracking and EEG.

- Feedback timing and modality tuning based on uncertainty

- What: Inject lightweight visual confirmation when SPN suggests high anticipatory uncertainty to stabilize interaction; reduce feedback when SPN/intent is clear.

- Sectors: Software (UX), Training/education apps, Gaming.

- Tools/workflows: Real-time FRP (feedback response policy) that modulates presence/duration of highlights/pop-ups.

- Assumptions/dependencies: SPN attenuates with expected feedback; design must avoid over-feedback and distraction.

- Person-dependent intent classifiers for MR applications

- What: Incorporate short calibration (few minutes) to train per-user EEG deep models (e.g., EEGInceptionERP) that discriminate Select vs. Observe and drive interface policy.

- Sectors: Software (XR), Research tools.

- Tools/workflows: Onboarding pipeline guiding users through labeled Select/Observe blocks; on-device or edge inference.

- Assumptions/dependencies: Classifier is person-dependent (75–97% accuracy per paper); cross-user generalization not yet supported.

- Multimodal uncertainty sensing (EEG + eye tracking + EDA/pupil)

- What: Fuse SPN with pupillometry/EDA to improve intent discrimination and robustness in operational environments.

- Sectors: HCI research, Applied R&D, Enterprise deployments needing higher robustness.

- Tools/workflows: Multimodal sensor fusion in MR; late fusion ensemble for gating/thresholding.

- Assumptions/dependencies: Additional sensors increase setup complexity; fusion must be latency-aware.

- UX evaluation metric for MR prototypes

- What: Use SPN amplitude as an objective metric for anticipatory uncertainty to compare UI designs, feedback strategies, or selection techniques.

- Sectors: Academia, Industry UX labs.

- Tools/workflows: MNE-Python pipeline for ERP extraction over O/PO/P electrodes; A/B testing dashboards.

- Assumptions/dependencies: Requires controlled tasks and consistent timing; SPN sensitive to task context and relevance.

- Hands-free assistive control without explicit confirmations

- What: Combine gaze pointing with SPN-based intent gating to enable more fluid, low-effort control for users with limited motor abilities.

- Sectors: Healthcare (assistive tech), Accessibility.

- Tools/workflows: MR/AR accessibility mode enabling implicit confirmation via SPN instead of blink/voice/pinch.

- Assumptions/dependencies: Comfort and wearability of EEG; training and caregiver support; clinical validation not yet established.

- Training and guidance overlays that react to user uncertainty

- What: Trigger in-situ hints or micro-tutorials when SPN indicates uncertainty before an action in MR training modules.

- Sectors: Education, Corporate training, Field support.

- Tools/workflows: In-app uncertainty detector that cues tooltips or highlights contextual next steps.

- Assumptions/dependencies: False positives must be contained; content needs to be non-intrusive.

- Safety buffers in industrial AR to prevent unintended commands

- What: Require low-uncertainty (attenuated SPN) before executing hazardous or high-stakes actions (e.g., machine stop/start).

- Sectors: Manufacturing, Energy, Maintenance operations.

- Tools/workflows: Intent gate integrated with SOPs; logs for compliance audits.

- Assumptions/dependencies: Reliability requirements are high; certification and fail-safe fallbacks required.

- Game mechanics leveraging intent/uncertainty

- What: Use implicit intent signals to unlock special actions or adapt difficulty, making gaze interactions feel deliberate.

- Sectors: Gaming/Entertainment (XR).

- Tools/workflows: SDK hooks mapping SPN-derived states to gameplay events.

- Assumptions/dependencies: Noise tolerance must be tuned to maintain fun; privacy notice required.

- Ethical-by-design workflows for neuroadaptive MR

- What: Adopt consent, data minimization, and on-device processing for EEG-derived intent; user control over neurodata.

- Sectors: Policy/Compliance, Product management.

- Tools/workflows: Consent templates specific to EEG intent; opt-in toggles; data retention policies; DPIA checklists.

- Assumptions/dependencies: Jurisdiction-specific privacy laws (e.g., GDPR) apply; security measures for neural data.
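As a rough illustration of the gating and dynamic dwell-time ideas in this list, the sketch below maps a per-user classifier's estimated probability of "select" intent to a dwell threshold, then fires a selection only when gaze dwell exceeds that threshold. Everything here is an assumption for illustration: the function names, the linear mapping, and the 250 to 1000 ms bounds (borrowed from the dwell-time range suggested in the knowledge-gaps list) are not the paper's method.

```python
def adaptive_dwell_ms(p_select, min_ms=250.0, max_ms=1000.0):
    """Map the estimated probability of 'select' intent to a dwell threshold.

    High confidence in 'select' gives a short threshold (fast selection);
    low confidence gives a long threshold (fewer accidental selections).
    The linear mapping and the default bounds are illustrative assumptions.
    """
    if not 0.0 <= p_select <= 1.0:
        raise ValueError("p_select must be within [0, 1]")
    if min_ms > max_ms:
        raise ValueError("min_ms must not exceed max_ms")
    return max_ms - p_select * (max_ms - min_ms)


def should_select(gaze_dwell_ms, p_select, min_ms=250.0, max_ms=1000.0):
    """Trigger a selection only if gaze dwell reaches the adapted threshold."""
    return gaze_dwell_ms >= adaptive_dwell_ms(p_select, min_ms, max_ms)


# Hypothetical classifier outputs for the same 600 ms fixation.
for p in (0.9, 0.5, 0.2):
    threshold = adaptive_dwell_ms(p)
    print(f"p_select={p:.1f} threshold={threshold:.0f} ms "
          f"select={should_select(600.0, p)}")
```

A continuous mapping is used instead of a hard on/off gate so that an uncertain classifier output only slows selection down. The user can still select by looking longer, which keeps a fallback available when the EEG estimate is wrong.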

Long-Term Applications

These opportunities depend on further research, hardware integration, cross-user modeling, extensive validation, or regulatory pathways.

- Integrated dry-EEG in headsets for everyday neuroadaptive XR

- What: Seamless, low-setup EEG sensors embedded in AR/MR headsets for continuous SPN-based intent/uncertainty sensing.

- Sectors: Consumer XR, Enterprise XR.

- Dependencies: Comfortable, reliable dry electrodes; stable signal during motion; battery and compute constraints.

- Cross-user/generalizable intent models

- What: Domain-adaptive or self-supervised models reducing or eliminating per-user calibration for SPN-based intent detection.

- Sectors: Software, Platform providers.

- Dependencies: Larger datasets, transfer learning, federated learning, handling individual variability and state dependence.

- On-device, low-latency inference stacks

- What: Deploy intent/uncertainty classifiers on mobile XR silicon (NPUs) for sub-100 ms closed-loop control.

- Sectors: XR hardware/software.

- Dependencies: Optimized pipelines (filtering, ICA alternatives), energy-efficient models, real-time certification for critical uses.

- Intent-aware collaborative robotics and teleoperation

- What: Gate robot commands and adapt autonomy levels based on operator uncertainty in AR teleoperation.

- Sectors: Robotics, Industrial automation, Defense.

- Dependencies: Motion artifact resilience, human-factors validation, liability frameworks.

- Clinical and rehabilitation applications

- What: Hands-free, intention-aware communication and MR interfaces for ALS, spinal injury, or stroke rehab; detect engagement/uncertainty to tailor therapy.

- Sectors: Healthcare.

- Dependencies: Clinical trials, medical device approval, safety and efficacy studies, infection control for sensors.

- Automotive AR HUD and infotainment control

- What: Prevent unintended gaze selections and adapt HUD content based on driver’s uncertainty/intent.

- Sectors: Automotive.

- Dependencies: Motion robustness, automotive-grade hardware, driver distraction regulations and safety certification.

- Knowledge work in spatial computing (intent-driven window management)

- What: Automatically prioritize, snap, or switch virtual windows/spaces when low-uncertainty intent is detected, reducing mode errors.

- Sectors: Enterprise productivity, Software platforms.

- Dependencies: Reliability in diverse tasks, user acceptance, privacy in workplaces.

- Financial and trading MR terminals with intent confirmations

- What: Require “clear-intent” neural confirmation for high-stakes actions (e.g., order placement), reducing costly slips.

- Sectors: Finance.

- Dependencies: Compliance approval, auditability, opt-in policies; human override and logs.

- Education and assessment with adaptive difficulty

- What: Adjust content pacing and challenge in MR lessons/labs when SPN indicates uncertainty, to optimize learning curves.

- Sectors: Education/EdTech.

- Dependencies: Pedagogical validation; safeguards against over-adaptation; equity considerations.

- Smart home and IoT control via gaze with implicit intent

- What: AR glasses enabling reliable, hands-free control of appliances, confirmed implicitly by low-uncertainty states.

- Sectors: Consumer IoT.

- Dependencies: Affordable EEG-in-glasses, interoperability standards, family/shared-device privacy norms.

- Privacy-preserving neural intent processing

- What: Differential privacy, on-device encryption, and secure enclaves for EEG intent inference; standard APIs for consented use.

- Sectors: Policy/Standards, Platform security.

- Dependencies: Industry standards (ISO/IEEE), regulatory frameworks for neural data, developer tooling.

- Regulatory and standards development for neuroadaptive interfaces

- What: Guidelines on measurement accuracy, fail-safes, human oversight, and data governance for EEG-enabled MR interfaces.

- Sectors: Standards bodies, Regulators, Industry consortia.

- Dependencies: Multi-stakeholder coordination, evidence from longitudinal deployments.

Key Assumptions and Dependencies Across Applications

- Hardware and signal quality: Reliable, comfortable EEG capture during natural head/body movement; minimal setup time; stable electrode–skin contact; synchronized eye tracking.

- Model performance and personalization: Current results are person-dependent; cross-user generalization requires further R&D and larger datasets.

- Cognitive and affective influences: SPN reflects anticipatory uncertainty and is sensitive to task relevance and feedback; fatigue, stress, and medication can modulate signals.

- Latency and robustness: Real-time systems must maintain low latency with robust artifact mitigation in field conditions.

- Ethics, privacy, and consent: EEG data are sensitive; strong privacy controls, transparency, and opt-in user agency are prerequisites.

- Safety and compliance: Safety-critical and medical uses demand rigorous validation, fail-safes, auditability, and regulatory approval.

Glossary

- Akaike Information Criterion (AIC): A model selection metric that balances goodness of fit with model complexity. "selected with the lowest Akaike Information Criterion (AIC)"

- Balanced Latin Williams square design: A counterbalancing scheme that controls order effects by rotating condition sequences across participants. "using a balanced Latin Williams square design with four levels"

- Bayesian optimization: A method for tuning parameters (e.g., thresholds) by modeling the objective function and selecting promising candidates probabilistically. "using Bayesian optimization for threshold selection"

- Brain–Computer Interface (BCI): Systems that infer user states or intents directly from brain signals to enable interaction. "brain-computer interface (BCI) approaches have shown promise in inferring cognitive states in MR"

- Common Average Reference (CAR): An EEG re-referencing technique that subtracts the average of all channels to reduce noise. "re-referenced to the common average reference (CAR)"

- Dwell-based techniques: Gaze interaction methods that treat sustained fixation for a set duration as a selection command. "Dwell-based techniques, where gaze fixations are interpreted as selections after a fixed duration"

- EEGInceptionERP: A deep learning architecture tailored for classifying event-related EEG signals. "with EEGInceptionERP performing best"

- Electrodermal activity (EDA): Skin conductance changes reflecting autonomic arousal, often used as an implicit physiological marker. "electrodermal activity (EDA)"

- Electroencephalography (EEG): Recording of electrical brain activity from the scalp to study neural processes. "we acquired EEG and eye-tracking data from 28 participants."

- Electromyographic (EMG): Muscle electrical activity measurements used to detect movement-related states. "time-domain EEG and electromyographic (EMG) signals can reveal idle versus pre-movement states"

- Event-Related Potential (ERP): Time-locked EEG responses to specific events, averaged across trials to reveal component structure. "guidelines specific to ERP research"

- Fixation-related Lambda response: A visual evoked EEG component linked to eye fixation onset, typically over occipital sites. "due to the fixation-related Lambda response resulting from the first fixation on the target stimulus"

- Free energy principle: A theoretical framework positing that the brain minimizes prediction error (free energy) by updating generative models. "predictive coding and the free energy principle"

- G*Power: A software tool for conducting statistical power analyses and sample size planning. "An a priori power analysis was conducted using G*Power (version 3.1)"

- ICLabel: An automated classifier for labeling ICA components (e.g., brain, eye, muscle) to aid artifact rejection. "via the MNE plugin 'ICLabel'"

- Independent Component Analysis (ICA): A blind source separation technique to unmix EEG into statistically independent components. "We applied an Independent Component Analysis (ICA) for artifact detection and correction with extended Infomax algorithm"

- Infomax algorithm (extended Infomax): An ICA optimization approach that maximizes information transfer to separate sources, adapted for EEG artifacts. "extended Infomax algorithm"

- Inter-stimulus interval (ISI): The time between successive trials or stimuli used to reset attention and reduce carryover. "a 1-second inter-stimulus interval (ISI)"

- Lab Streaming Layer Framework: A middleware for time-synchronized streaming of multimodal signals (e.g., EEG, eye tracking). "we employed the Lab Streaming Layer Framework"

- Lateralized Readiness Potential (LRP): An ERP component indexing effector-specific motor preparation over motor cortices. "The LRP refines this further by indexing effector-specific preparation over motor cortices"

- Likelihood ratio tests: Statistical comparisons between nested models based on the ratio of their likelihoods. "likelihood ratio tests"

- Linear mixed models: Regression models that include both fixed effects (e.g., conditions) and random effects (e.g., participants). "We compared a set of linear mixed models predicting SPN amplitude"

- Midas Touch problem: An HCI issue where systems mistakenly treat mere looking as intentional action, causing false activations. "commonly referred to as the Midas Touch problem"

- Mixed Reality (MR): Interfaces that blend virtual elements with the real world to enable spatial, context-aware interaction. "Mixed reality (MR) interfaces are advancing usability and accessibility"

- MNE-Python Toolbox: A Python library for EEG/MEG preprocessing, analysis, and visualization. "we used the MNE-Python Toolbox"

- Notch filter: A frequency-domain filter that removes a narrow band (e.g., 50 Hz line noise) from signals. "We then applied a notch filter (50 Hz)"

- Predictive coding: A theory that the brain generates predictions and minimizes prediction error through hierarchical inference. "contemporary neurocognitive theories based on predictive coding"

- Random sample consensus (RANSAC): A robust estimation method that identifies and excludes outliers when fitting models. "random sample consensus (RANSAC) method"

- Raycasting: A technique that projects rays from gaze or head orientation to determine interactive targets in 3D environments. "raycasting, where gaze or head orientation guides a virtual pointer"

- Readiness Potential (RP): A slow negative EEG shift preceding voluntary movement, reflecting motor preparation. "The RP is a slow negative fluctuation emerging up to two seconds before voluntary movement"

- Source localization: Methods estimating the brain regions generating observed EEG activity. "with source localization implicating prefrontal areas related to uncertainty processing."

- Spherical splines: Mathematical functions used to interpolate scalp potentials and estimate channels in EEG processing. "of spherical splines for estimating scalp potential"

- Stimulus-Preceding Negativity (SPN): A slow anticipatory EEG potential that increases before expected, task-relevant events, sensitive to uncertainty. "Stimulus-Preceding Negativity (SPN), a slow cortical potential that builds up before anticipated events"

- Supplementary motor areas: Medial frontal cortex regions involved in planning and initiating movements. "It originates in the supplementary motor areas"

- Varjo XR-4: A high-resolution mixed reality headset used for immersive spatial computing experiments. "Participants wore the Varjo XR-4 headset"

- Visual angles: The angular size of visual stimuli as projected on the retina, affecting perceptual performance. "targets subtended visual angles of –"

- Water-based electrodes: EEG sensors that use saline or water for conductivity instead of gel, enabling faster setup. "from 64 water-based electrodes from the R-Net elastic cap"
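To illustrate the balanced Latin Williams square entry, here is a minimal sketch of the standard construction for an even number of conditions, applied to the four Select/Observe × Feedback conditions. The function name and the rotating assignment of participants to rows are assumptions for illustration; the page does not describe how the authors generated or assigned their sequences.

```python
def williams_square(n):
    """Build a Williams design for an even number of conditions.

    Each condition appears once in every position, and every ordered pair
    of distinct conditions appears exactly once as immediate neighbours,
    which balances first-order carryover effects.
    """
    if n < 2 or n % 2 != 0:
        raise ValueError("this sketch only handles an even n >= 2")
    first_row = [0]
    low, high = 1, n - 1
    take_low = True
    while len(first_row) < n:
        if take_low:
            first_row.append(low)
            low += 1
        else:
            first_row.append(high)
            high -= 1
        take_low = not take_low
    # Every further row shifts the first row by one condition (mod n).
    return [[(c + shift) % n for c in first_row] for shift in range(n)]


conditions = [
    "Select + Feedback",
    "Select + No Feedback",
    "Observe + Feedback",
    "Observe + No Feedback",
]
square = williams_square(len(conditions))

# Assumed assignment scheme: participant k gets row k mod 4.
for participant in range(4):
    order = [conditions[i] for i in square[participant % len(square)]]
    print(participant, order)
```

Under this assumed rotation, 28 participants would spread evenly over the four sequences, with 7 participants per sequence.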
