- The paper presents evidence that, after the release of ChatGPT, the median number of submissions per student increased by 50%, while total and average edit distances rose by 500% and 300%, respectively.

- The methodology employs a quasi-longitudinal analysis of 2,066 submissions using metrics such as submission count, edit distances, and project scores.

- The findings reveal higher team project scores but lower individual project scores, raising concerns about over-reliance on AI tools.

Introduction

The study "Changes in Coding Behavior and Performance Since the Introduction of LLMs" investigates how large language models (LLMs) influence student coding behavior and performance. Conducted over ten semesters in a graduate-level cloud computing course at Carnegie Mellon University, this quasi-longitudinal study compares student activity and achievement before and after the release of ChatGPT in late 2022. The analysis reveals notable shifts in coding practice and academic outcomes, with implications for computer science education in the era of AI-assisted tools.

Methodology and Metrics

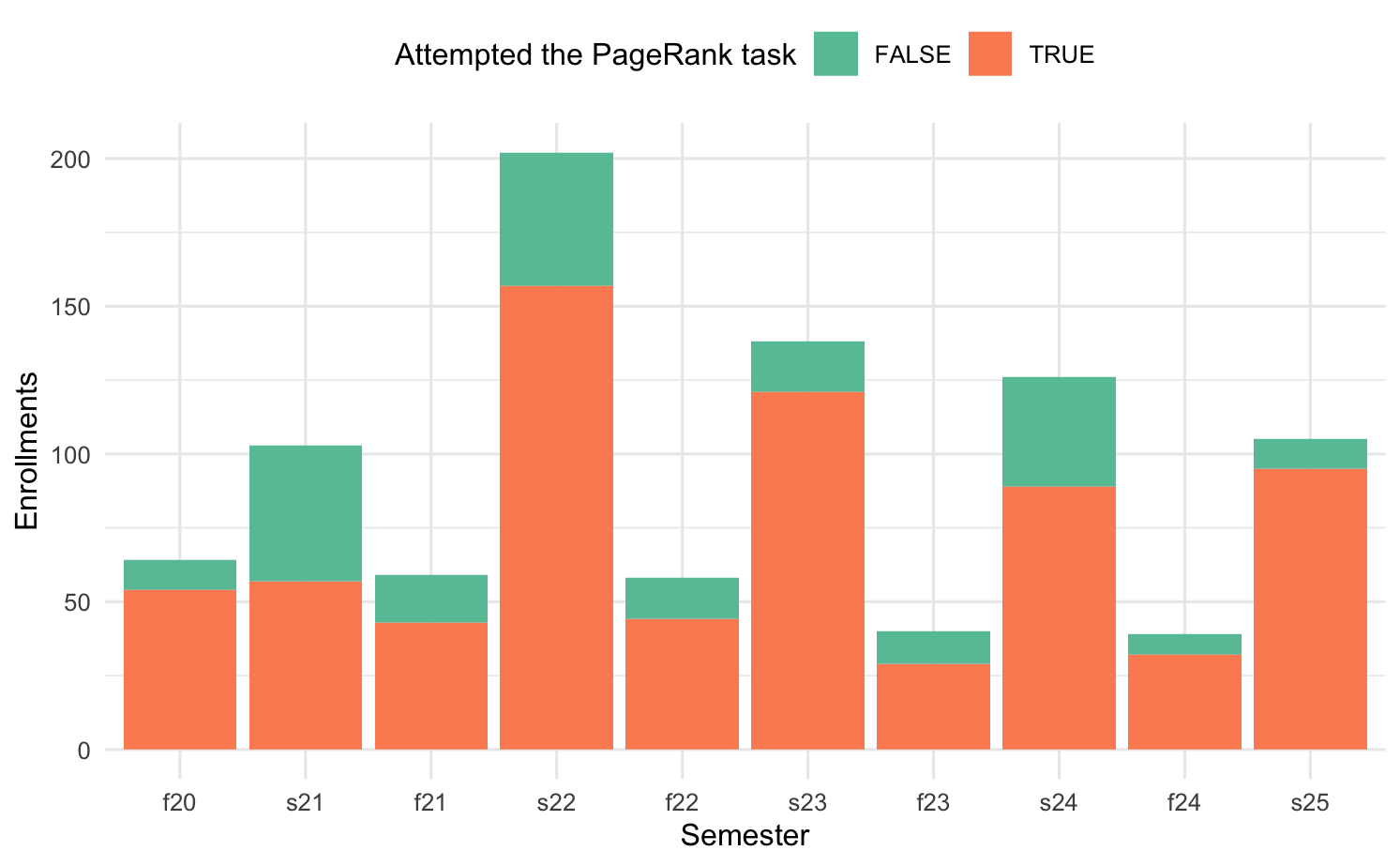

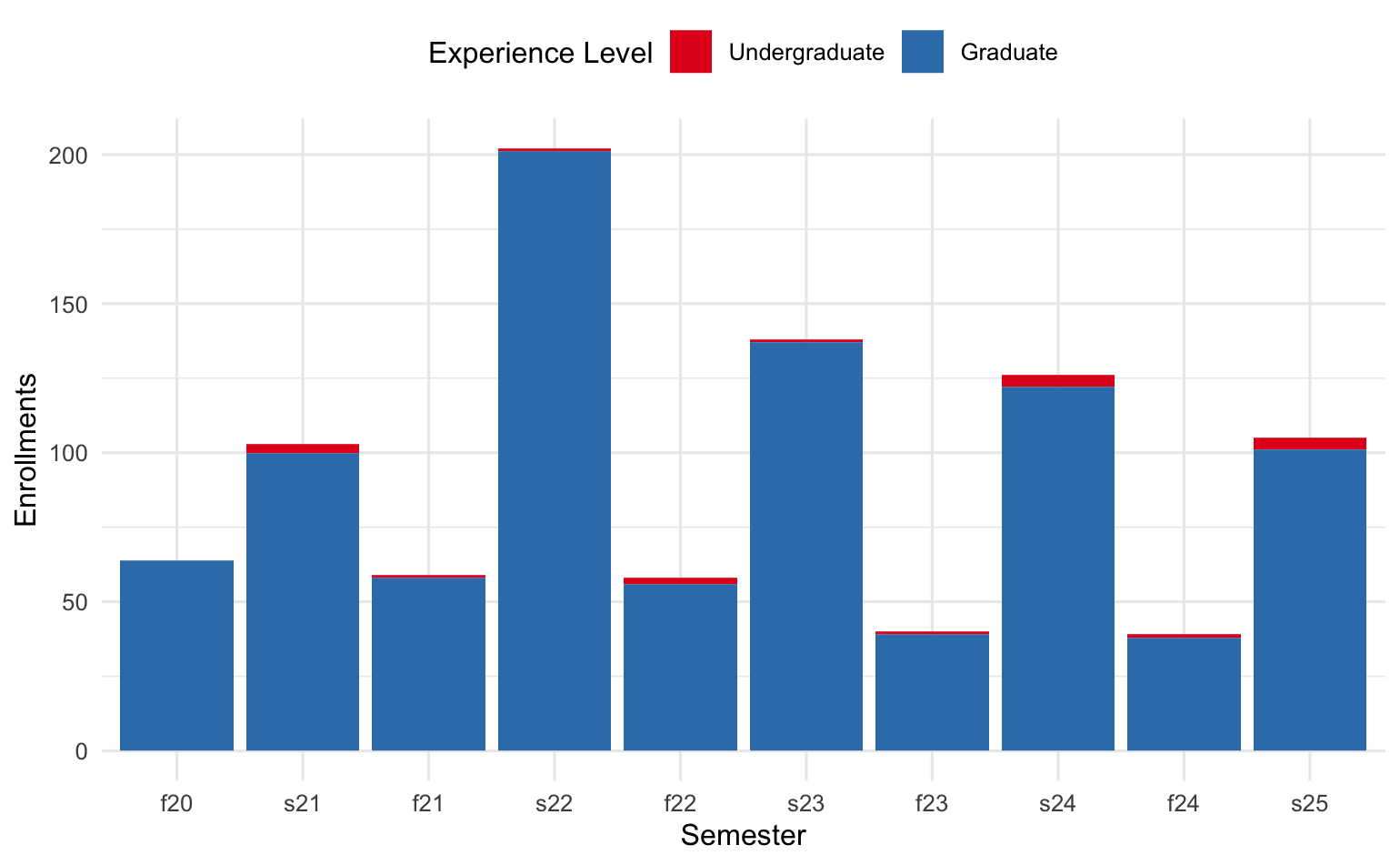

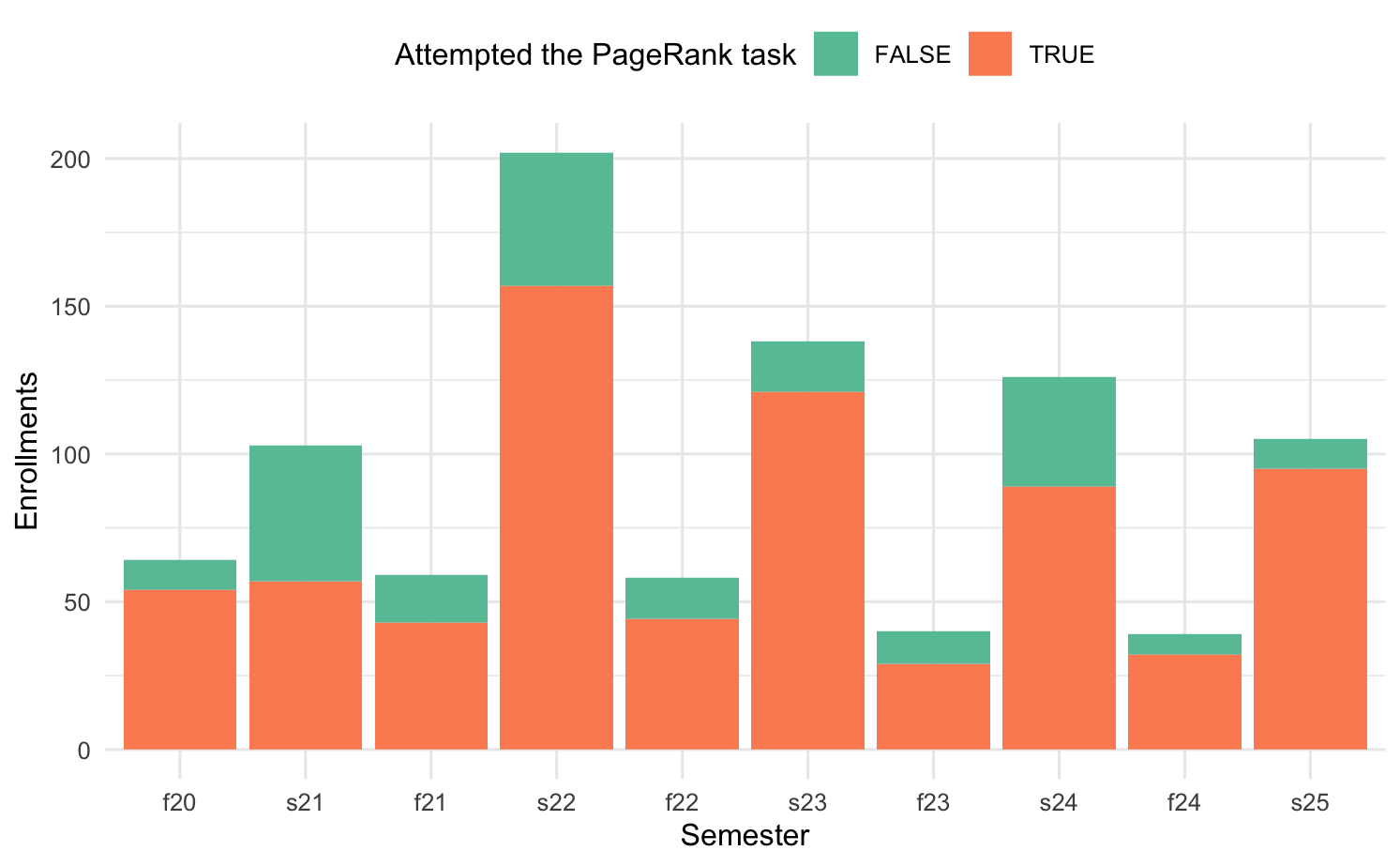

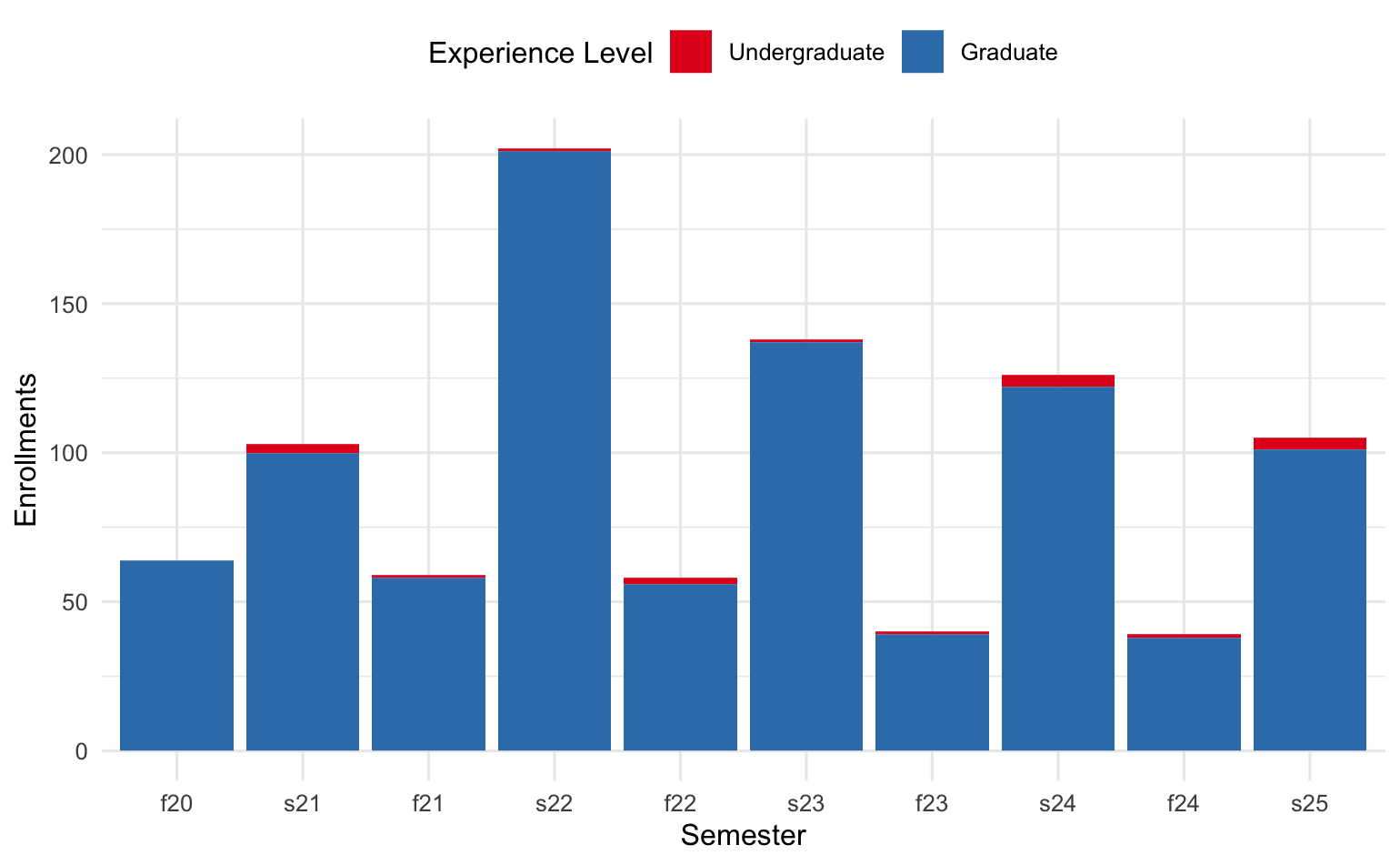

The research analyzed 2,066 student submissions from 721 enrollments between Fall 2020 and Spring 2025. It focuses on a single assignment that remained unchanged throughout, so that differences between semesters are not caused by changes to the task itself. Coding behavior is quantified using the number of submissions, total edit distance, and average edit distance. Performance outcomes are measured by task scores, individual project scores (IP scores), and team project scores (TP scores).

The methodology provides an empirical basis for examining how LLM use affects both the process (as seen in coding behavior) and the product (as seen in academic performance).
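The behavior metrics described above can be illustrated with a short sketch. It rests on assumptions that are not confirmed by the summary: edit distance is taken to be Levenshtein distance computed over lines, measured between consecutive submissions by the same student, and the average is taken over those consecutive pairs. The helper names `levenshtein` and `behavior_metrics` are hypothetical and are not the study's code.

```python
from typing import Dict, List, Sequence


def levenshtein(a: Sequence, b: Sequence) -> int:
    """Levenshtein distance between two sequences (strings or lists of lines)."""
    if len(a) < len(b):
        a, b = b, a  # the distance is symmetric; keep the DP row short
    previous = list(range(len(b) + 1))
    for i, item_a in enumerate(a, start=1):
        current = [i]
        for j, item_b in enumerate(b, start=1):
            cost = 0 if item_a == item_b else 1
            current.append(min(
                previous[j] + 1,         # drop item_a
                current[j - 1] + 1,      # add item_b
                previous[j - 1] + cost,  # keep or substitute
            ))
        previous = current
    return previous[-1]


def behavior_metrics(submissions: List[str]) -> Dict[str, float]:
    """Summarize one student's ordered submission history.

    Assumption: distances are measured line by line between each
    submission and the one that follows it.
    """
    distances = [
        levenshtein(old.splitlines(), new.splitlines())
        for old, new in zip(submissions, submissions[1:])
    ]
    total = sum(distances)
    average = total / len(distances) if distances else 0.0
    return {
        "submission_count": len(submissions),
        "total_edit_distance": total,
        "average_edit_distance": average,
    }


# Made-up history of three submissions, for illustration only.
history = ["a\nb\n", "a\nc\n", "a\nc\nd\n"]
metrics = behavior_metrics(history)
```

Under these assumptions, a student who pastes in large generated blocks would show a high average edit distance even with few submissions, whereas incremental debugging would show many submissions with small distances between them.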

Changes in Coding Behavior

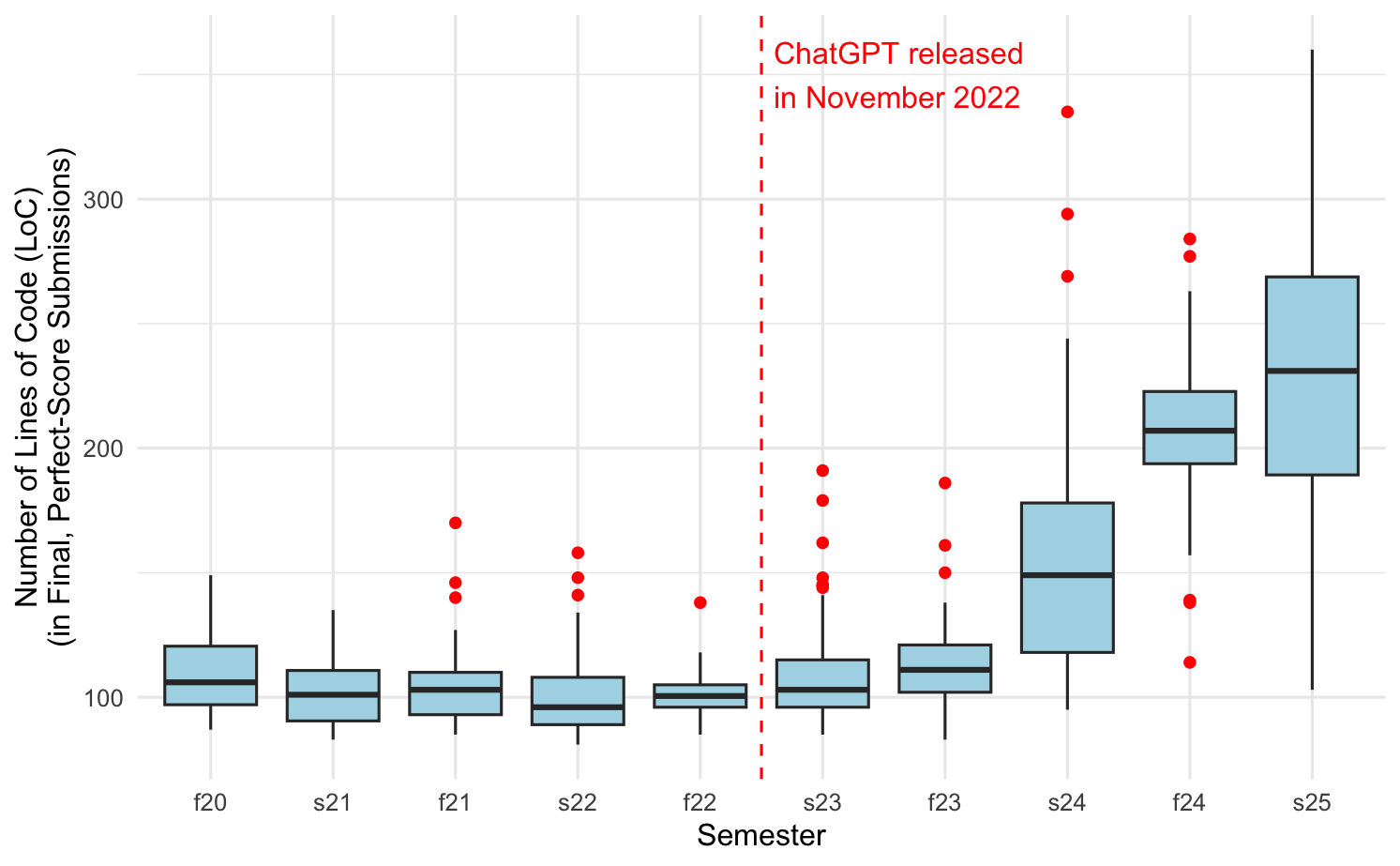

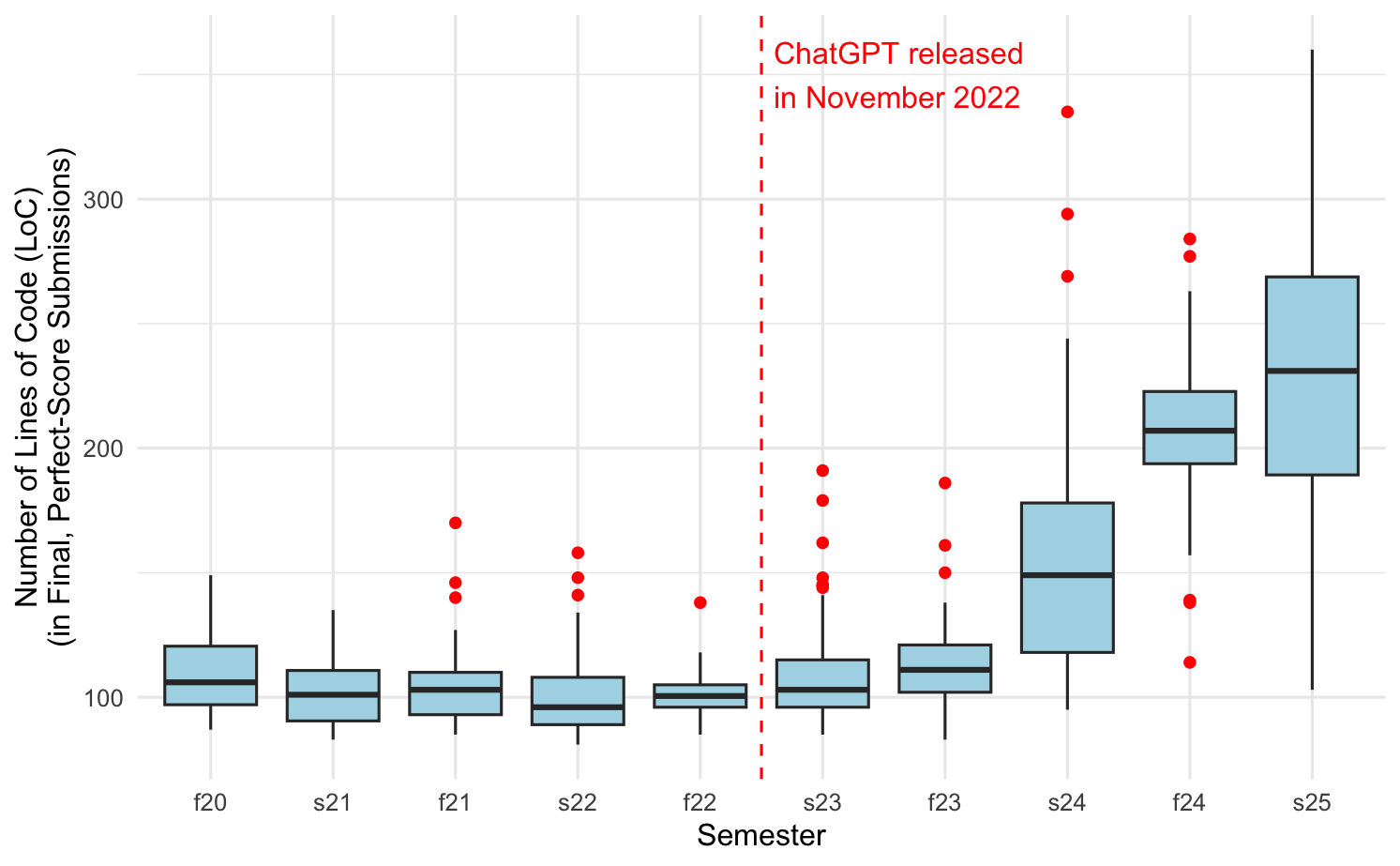

Post-ChatGPT semesters show a significant increase in both the length of student submissions (Figure 1) and their edit distances, indicating that students are making more extensive changes to their code. The median number of submissions per student increased by 50%, total edit distance increased by 500%, and average edit distance rose by 300%.

Figure 1: Lines of code in final, perfect-score submissions have increased significantly since Fall 2022. Note: the y-axis does not start at zero.
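To read the reported percentages correctly, it helps to recall that an increase of 500% means six times the baseline, not five. The sketch below assumes the figures are relative changes between a pre-ChatGPT and a post-ChatGPT median, which the summary does not state explicitly. The function names and the sample values are hypothetical and are not data from the study.

```python
from statistics import median


def percent_increase(before: float, after: float) -> float:
    """Relative change from `before` to `after`, expressed in percent."""
    return (after - before) / before * 100.0


def multiplier_from_percent(pct_increase: float) -> float:
    """Convert a percent increase into a multiple of the baseline."""
    return 1.0 + pct_increase / 100.0


# Made-up per-student submission counts, for illustration only.
pre_counts = [2, 3, 4, 5, 6]
post_counts = [4, 5, 6, 7, 8]

submission_change = percent_increase(median(pre_counts), median(post_counts))

# How the reported increases translate into multiples of the baseline.
total_edit_multiple = multiplier_from_percent(500)
average_edit_multiple = multiplier_from_percent(300)
```

With these made-up counts the medians are 4 and 6, giving a 50% increase. The reported 500% and 300% increases correspond to 6 times and 4 times the baseline, respectively.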

This trend aligns with the hypothesis that students are leveraging LLMs for code generation, leading to more substantial modifications in the submission process. Such behavior may suggest over-reliance on AI tools, potentially at the expense of a deeper understanding of underlying algorithms and coding principles.

The relationship between these behavior changes and performance presents a mixed picture. Task scores have remained nearly perfect across all semesters, suggesting that the task is not difficult. Nonetheless, individual project scores have noticeably decreased while team project scores have increased since LLMs became available.

Figure 2: Individual Projects (IP) scores by semester.

Figure 3: Team Project (TP) scores by semester. The low scores in Fall 2020 (f20) are likely an outlier, due to COVID.

The decline in IP scores could indicate weaker individual learning outcomes, potentially due to over-reliance on LLM assistance. In contrast, the rise in TP scores might reflect AI's role in supporting team collaboration, although it may also mask unequal contributions among team members.

Implications and Future Directions

The findings raise the question of how to assess genuine learning when AI tools are part of the student workflow. The study suggests that more extensive edits, while helping students complete tasks, often do not correspond to improved understanding, reflecting a shift from writing code to relying on AI-generated content.

For educators, the challenge lies in adapting curricula to foster genuine coding and problem-solving skills in an AI-augmented context, emphasizing collaboration and strategic thinking over rote code generation. The centaur model in chess, in which a human working with an AI outperforms either alone, serves as a relevant analogy. It suggests that evaluation methods may need to be redesigned to account for human-AI partnerships.

Conclusion

The study provides substantial empirical evidence that LLMs have significantly altered student coding behavior without correspondingly enhancing individual learning outcomes. While LLMs facilitate task completion and collaborative projects, they might obscure true individual competence and learning. These insights call for a redefinition of programming mastery and pedagogical strategies to prepare students for the complexities of AI-enhanced roles, ensuring the enduring value of human intelligence in synergy with AI tools.