Large Language Model Agent for User-friendly Chemical Process Simulations

Abstract: Modern process simulators enable detailed process design, simulation, and optimization; however, constructing and interpreting simulations is time-consuming and requires expert knowledge. This limits early exploration by inexperienced users. To address this, an LLM agent is integrated with AVEVA Process Simulation (APS) via the Model Context Protocol (MCP), allowing natural-language interaction with rigorous process simulations. An MCP server toolset enables the LLM to communicate programmatically with APS using Python, allowing it to execute complex simulation tasks from plain-language instructions. Two water-methanol separation case studies assess the framework across different task complexities and interaction modes. The first shows the agent autonomously analyzing flowsheets, finding improvement opportunities, iteratively optimizing, extracting data, and presenting results clearly. The framework benefits both education, by translating technical concepts and demonstrating workflows, and experienced practitioners, by automating data extraction, speeding up routine tasks, and supporting brainstorming. The second case study assesses autonomous flowsheet synthesis through both a step-by-step dialogue and a single prompt, demonstrating its potential for novices and experts alike. The step-by-step mode gives reliable, guided construction suitable for educational contexts; the single-prompt mode constructs fast baseline flowsheets for later refinement. While current limitations such as oversimplification, calculation errors, and technical hiccups mean expert oversight is still needed, the framework's capabilities in analysis, optimization, and guided construction suggest LLM-based agents can become valuable collaborators.

Explain it Like I'm 14

A simple explanation of “LLM Agent for User‑friendly Chemical Process Simulations”

What this paper is about

This paper shows how an AI assistant (a large language model, or LLM) can help people build and understand computer simulations of chemical processes just by using everyday language. Instead of clicking through complicated menus or writing code, a user can type, “Open the methanol–water distillation model and tell me how to improve it,” and the AI will do the hard work in the background.

The researchers connected an AI to a real industrial simulator called AVEVA Process Simulation (APS) using a standard “bridge” called the Model Context Protocol (MCP). They tested it on a common chemical task: separating water and methanol using a distillation column.

What questions the researchers asked

The team wanted to know:

- Can an AI agent make professional chemical simulators easier to use for beginners and faster for experts?

- Can it read, explain, and improve existing simulations in clear language?

- Can it build a new simulation flowsheet from a plain-language description?

- Can it do all this while keeping the accuracy and safety expected in chemical engineering?

How they did it (in everyday terms)

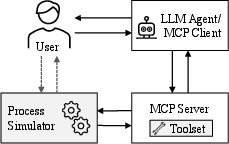

Think of three parts working together:

- The “brain” (LLM agent): This is the AI that understands your plain-language requests, plans the steps, and decides what to do next.

- The “translator” (MCP server): This turns the AI’s ideas into precise actions the simulator understands and turns the simulator’s results back into readable summaries.

- The “engine” (APS simulator): This is the professional-grade tool that performs real calculations about chemical processes.

Here’s an analogy: You tell a smart assistant, “Show me how this factory separates water and methanol and suggest improvements.” The assistant asks its translator to press the right buttons, pull the right levers, and read the gauges inside the simulator. The simulator does the math. The assistant then explains the results in simple language.
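To make the brain/translator/engine split concrete, here is a minimal Python sketch of the translator layer, assuming MCP's JSON-RPC 2.0 `tools/call` message shape. The tool names `var_get_multiple`, `var_set_multiple`, and `sim_status` come from the paper's toolset, but their arguments, the `FakeSimulator` class standing in for APS, and variable paths such as `C1.RefluxRatio` are hypothetical. The paper's real server is built with FastMCP on top of the APS Python API, which this sketch does not reproduce.

```python
import json


class FakeSimulator:
    """Hypothetical stand-in for the APS engine: variable paths mapped to values."""

    def __init__(self):
        self.vars = {"C1.RefluxRatio": 1.5, "C1.Stages": 20, "Feed.T_K": 340.0}
        self.converged = True


SIM = FakeSimulator()
TOOLS = {}  # tool name -> Python function (the "translator" registry)


def tool(fn):
    """Register a function as a callable tool under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def var_get_multiple(paths):
    return {p: SIM.vars[p] for p in paths}


@tool
def var_set_multiple(values):
    SIM.vars.update(values)
    return {"updated": sorted(values)}


@tool
def sim_status():
    return {"converged": SIM.converged}


def handle(raw):
    """Answer one JSON-RPC 2.0 "tools/call" request with the tool result as text content."""
    req = json.loads(raw)
    params = req.get("params", {})
    fn = TOOLS.get(params.get("name"))
    if req.get("method") != "tools/call" or fn is None:
        # -32601 is JSON-RPC's "Method not found"; reused here for unknown tools too.
        return json.dumps({"jsonrpc": "2.0", "id": req.get("id"),
                           "error": {"code": -32601, "message": "unknown method or tool"}})
    result = fn(**params.get("arguments", {}))
    return json.dumps({"jsonrpc": "2.0", "id": req.get("id"),
                       "result": {"content": [{"type": "text", "text": json.dumps(result)}]}})
```

An agent would send a request such as `{"jsonrpc": "2.0", "id": 1, "method": "tools/call", "params": {"name": "var_get_multiple", "arguments": {"paths": ["C1.RefluxRatio"]}}}` and read the value back from the `text` field of the returned content. The point of the design is that the LLM only ever produces and reads structured messages; the numbers themselves always come from the simulator.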

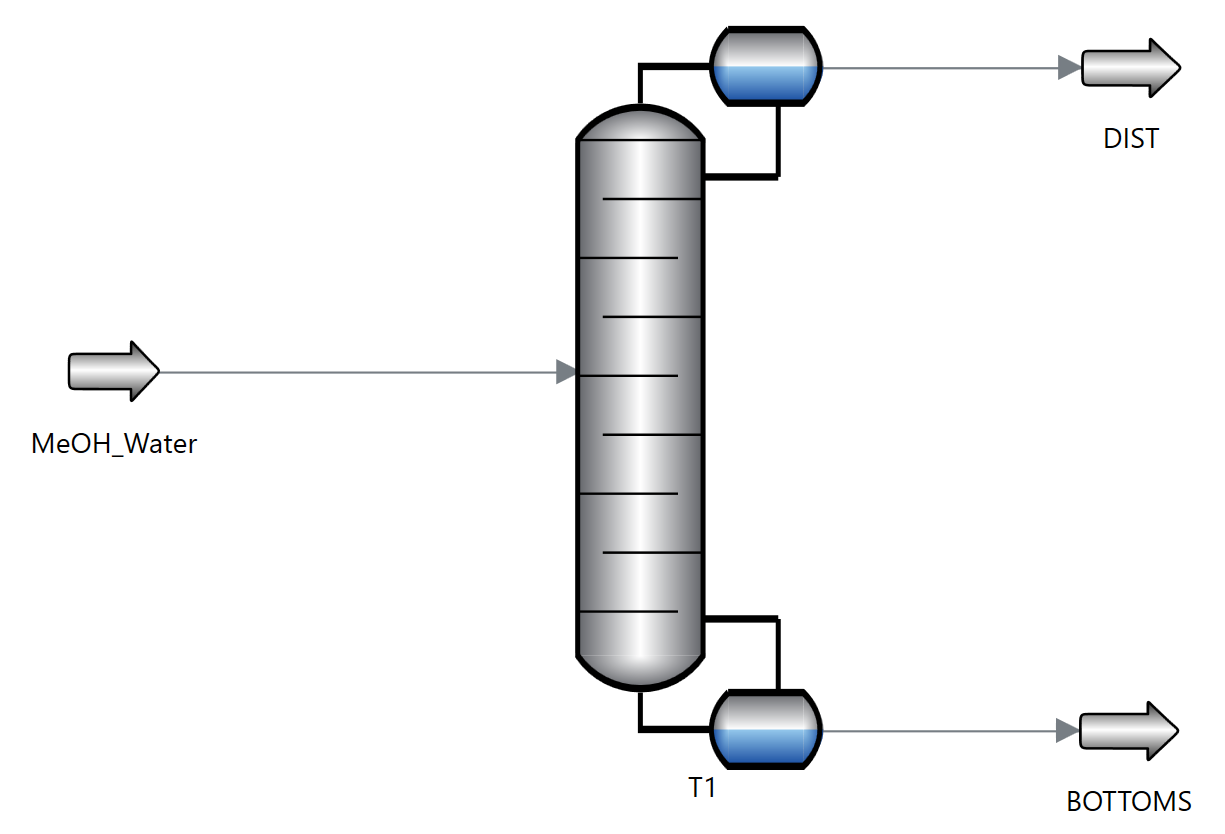

To test the system, the researchers used two kinds of tasks on a water–methanol distillation process (a standard setup where a tall tower separates liquids based on different boiling points):

- Analysis: The AI opened an existing model, explored all the parts (like the column, feed and product streams), collected key numbers (like temperatures, flow rates, energy use), and summarized what was going on. It also suggested ways to improve performance.

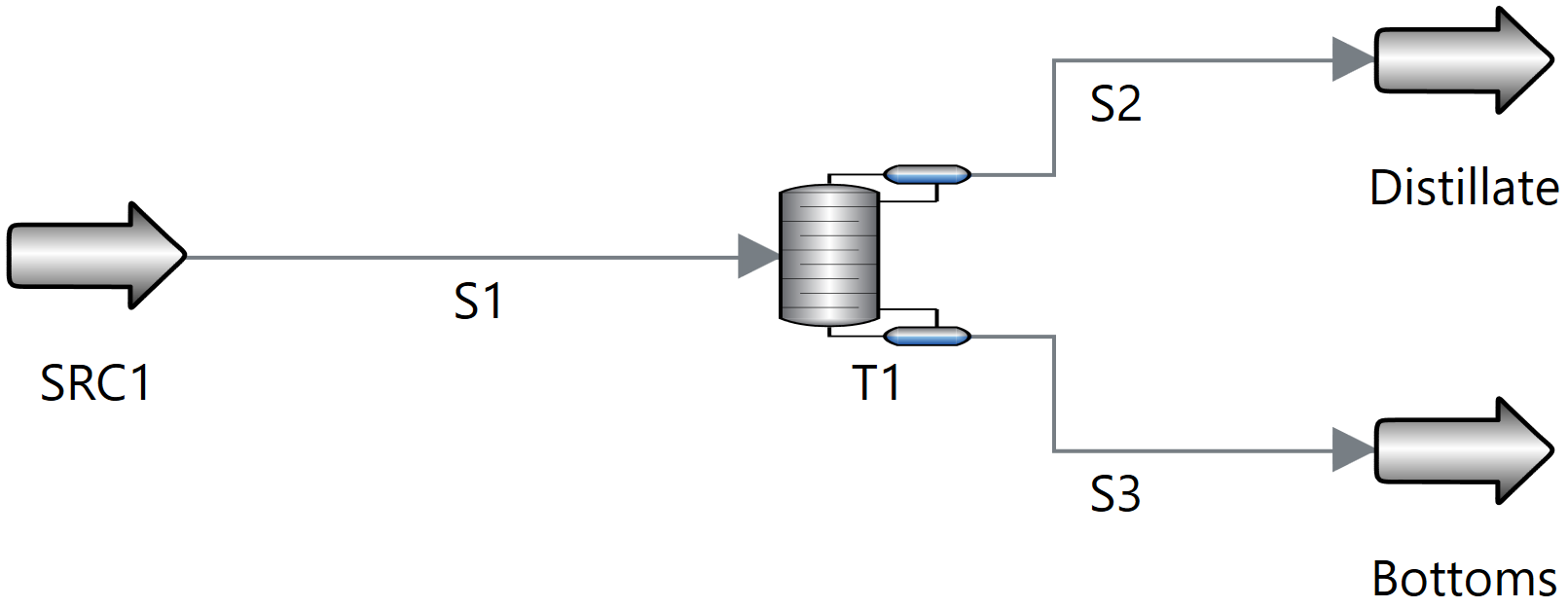

- Synthesis: The AI built a new simulation from scratch in two ways:

- Step-by-step: the user guided it through each action (good for learning and reliability).

- Single prompt: the user gave one short description, and the AI did the whole build (fast, good for a first draft).
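The practical difference between the two build modes is where the user can step in. This Python sketch runs the same hypothetical build plan either with an approval check before every step (step-by-step) or all at once (single prompt). The tool names `fluid_create`, `model_add`, `models_connect`, and `fluid_to_source` are those listed for the paper's toolset; the plan contents, argument names, and the `execute`/`approve` helpers are illustrative assumptions, not the paper's code.

```python
from typing import Callable, List

# Hypothetical build plan for a water-methanol column. Tool names follow the
# paper's MCP toolset; every argument below is illustrative only.
PLAN = [
    {"tool": "fluid_create", "args": {"components": ["water", "methanol"], "method": "NRTL"}},
    {"tool": "model_add", "args": {"type": "Source", "name": "Feed"}},
    {"tool": "model_add", "args": {"type": "Column", "name": "C1"}},
    {"tool": "models_connect", "args": {"from": "Feed.Out", "to": "C1.Feed"}},
    {"tool": "fluid_to_source", "args": {"source": "Feed"}},
]


def execute(plan: List[dict],
            call_tool: Callable[[str, dict], object],
            stepwise: bool,
            approve: Callable[[dict], bool] = lambda step: True) -> List[str]:
    """Run a build plan and return the names of the tools actually called.

    Step-by-step mode asks `approve` before each step and stops at the first
    rejection; single-prompt mode ignores `approve` and runs every step.
    """
    done = []
    for step in plan:
        if stepwise and not approve(step):
            break
        call_tool(step["tool"], step["args"])
        done.append(step["tool"])
    return done
```

In step-by-step mode a human (or a checker) sees each action before it touches the model, which is why that mode is easier to monitor; single-prompt mode trades that visibility for speed.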

What they found and why it matters

From the analysis task:

- The AI could connect to the simulator, list equipment and streams, read important settings, check whether the model had converged (i.e., the solver found a solution that satisfies all the model's equations), and give a clear summary.

- It proposed reasonable improvements, like adjusting the number of stages in the column, the feed location, the reflux ratio (how much liquid is sent back down the column), the pressure, and adding heat integration to save energy.

- It communicated clearly and chose the most relevant numbers to show.

- But it sometimes overstated things (for example, calling a setting “optimal” without testing it) or made small judgment mistakes. This shows the AI is helpful but still needs expert oversight.

From the synthesis (building) task:

- Step-by-step mode: reliable and easy to monitor. Great for students or careful design work because you can check each move.

- Single-prompt mode: fast at getting a workable baseline flowsheet that experts can later refine.

- In both modes, the AI handled many routine steps, which can save time and reduce clicking and scripting.

Why this matters:

- For students and newcomers, talking to the simulator in plain language makes learning much easier.

- For experienced engineers, the AI can automate tedious tasks, quickly extract data, and provide starting points for design and brainstorming.

What this could change in the future

If developed further, this approach could make powerful engineering tools more accessible, like having a friendly guide inside complex software. It could:

- Speed up early-stage design and “what-if” studies.

- Help in classrooms by explaining concepts and showing standard workflows.

- Reduce time spent on repetitive tasks like data extraction and report preparation.

However, there are important limits today:

- The AI can oversimplify or make calculation mistakes.

- Technical hiccups can happen when connecting tools.

- Safety and accuracy standards mean a human expert must still review decisions.

Quick explanations of key terms

- LLM (large language model): An AI that understands and writes text, like a super-smart chatbot.

- Agent: An AI that can plan and carry out multi-step tasks using tools.

- Model Context Protocol (MCP): A standard “plug” that lets the AI talk to outside tools in a reliable way.

- AVEVA Process Simulation (APS): Professional software used to design and analyze chemical processes.

- Flowsheet: A diagram showing the “map” of a process—equipment (like columns and heat exchangers) and the streams connecting them.

- Distillation column: A tall tower that separates liquids by boiling; “stages” are like floors where vapor and liquid meet to improve separation.

- Reflux ratio: How much condensed liquid is sent back down the column to help separation.

- Heat integration: Using hot parts of the process to warm cold parts, saving energy.
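A worked example of the reflux ratio, assuming a total condenser (all vapor leaving the top of the column is condensed) and flow rates chosen purely for illustration. Here $V$ is the vapor flow condensed at the top, $L$ the liquid sent back down the column, and $D$ the distillate taken out as product:

$$
R = \frac{L}{D}, \qquad V = L + D
$$

$$
V = 100~\text{kmol/h},\quad L = 60~\text{kmol/h} \;\Rightarrow\; D = 100 - 60 = 40~\text{kmol/h},\quad R = \frac{60}{40} = 1.5
$$

For a fixed distillate rate, raising $R$ means more vapor, $V = (R + 1)D$, must be boiled up and condensed, so better separation is paid for with higher reboiler and condenser duties. That is the trade-off behind the AI's reflux-ratio suggestions.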

Bottom line

This paper shows that an AI assistant, connected through a standardized, well-structured bridge, can make chemical process simulations more user-friendly. It can read models, explain them clearly, suggest improvements, and even build new ones from scratch. It’s not perfect yet and still needs expert checks, but it’s a promising step toward faster, clearer, and more accessible chemical engineering work.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

The paper introduces an LLM–MCP framework integrated with AVEVA Process Simulation (APS) and demonstrates it on two water–methanol case studies. The following concrete gaps and open questions remain for future work:

- Benchmark breadth and generalization:

- The evaluation is limited to a single binary distillation system (water–methanol); performance on diverse unit operations (reactors, absorbers, extractors, compressors), recycle networks, reactive systems, electrolytes, solids handling, and multi-column configurations is not assessed.

- No tests on dynamic simulations (start-up/shutdown, control loops), non-ideal thermodynamics beyond NRTL (e.g., electrolyte models, EOS for hydrocarbons), or severe convergence scenarios (tear streams, complex recycles).

- Quantitative evaluation and metrics:

- Results are “qualitative”; there are no quantitative metrics for correctness, task success rate, latency, tool-call efficiency, number of retries, or cost (token/tool-use/compute).

- No comparative baseline against human experts or scripted automation (time saved, error rates, solution quality).

- No statistical replication across multiple runs to assess variability or robustness of the agent’s behavior.

- User studies and human factors:

- Claims about educational benefits are not validated through controlled classroom/user studies (learning gains, usability, cognitive load, error comprehension).

- No assessment of expert satisfaction or trust calibrated against error frequency; no analysis of prompt design best practices for novices (stepwise vs single-prompt trade-offs).

- Safety, guardrails, and compliance:

- The framework lacks explicit safety constraints (e.g., pressure/temperature limits, composition bounds, material compatibility), constraint checking before tool execution, and fail-safe defaults.

- No auditability requirements (complete logs of agent plans, tool calls, parameter changes) are specified for regulated or safety-critical settings.

- No discussion of access control, permissions, or prevention of destructive operations (e.g., deleting models, overwriting simulations) via MCP tools.

- Error taxonomy and mitigation:

- Observed “oversimplifications” and “misleading statements” are not systematically cataloged; error types (numerical, unit/scale, specification, reasoning, domain) and their rates remain unknown.

- Absent mitigation mechanisms such as automatic validation checks (unit consistency, feasibility checks), domain rule enforcement, or review cycles before applying changes.

- No recovery strategies for APS solver failures (initialization heuristics, warm starts, tear-stream handling, targeted relaxation).

- Toolset completeness and portability:

- The MCP toolset is limited to a subset of APS functionality; coverage of optimization, sensitivity analysis, dynamics, controller configuration, and advanced convergence tools is missing.

- Portability to other simulators (Aspen Plus/HYSYS, Pro/II, gPROMS, DWSIM) is untested; mapping requirements, differences in thermodynamic libraries, and adapter design are not specified.

- Versioning and compatibility across APS releases (2025 and beyond) and across OS environments are not discussed.

- Optimization integration:

- No integration with deterministic optimization (e.g., APS optimizers, sensitivity sweeps, surrogate modeling); suggested improvements are heuristic and not validated via formal optimization.

- Experimental design for parameter sweeps (reflux, pressure, feed stage) and multi-objective optimization (purity, energy, economics) is not implemented or benchmarked.

- Thermodynamics and property-model selection:

- The agent’s capability to select appropriate property models (e.g., NRTL vs UNIQUAC vs EOS) based on feed composition and conditions is not evaluated; criteria for model selection remain unspecified.

- Procedures for validating property predictions (VLE consistency checks, azeotrope detection, activity coefficient trends) are absent.

- Reliability and scaling:

- No analysis of performance on large flowsheets (tens of unit ops, thousands of variables) regarding tool-call overhead, memory/context window pressure, and latency.

- Handling of conflicting or missing data, ambiguous variable paths, and schema evolution in APS projects is not covered.

- Stepwise vs single-prompt synthesis:

- The paper describes both modes but does not quantify differences in accuracy, failure modes, coverage, or time-to-completion; criteria for when to prefer each mode are missing.

- No catalogue of common failure cases in single-prompt synthesis (missing specifications, incorrect unit choices, misconnected ports) and targeted guardrails to prevent them.

- Provenance, reproducibility, and change management:

- Methods to ensure reproducibility (pinning tool versions, APS version, environment capture) and to record provenance (prompts, tool invocations, pre/post state diffs) are not specified.

- No workflow for rollbacks, change reviews, or approvals in multi-user environments.

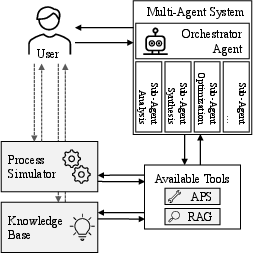

- Multi-agent architectures:

- The benefits of multi-agent peer review (e.g., critique loops to reduce hallucinations) versus single-agent latency are not empirically explored; orchestration strategies and coordination costs remain open.

- Integration with external data and documentation:

- The agent does not use retrieval-augmented generation for APS documentation, thermodynamic references, or plant standards; a plan for integrating RAG to reduce domain errors is not provided.

- Economic analysis and trade-offs:

- Although suggested, there is no integrated cost/utility module; economic trade-offs (CAPEX/OPEX, energy cost vs purity, heat integration ROI) are neither computed nor benchmarked.

- Security and enterprise deployment:

- Security posture of MCP servers (authentication, authorization, encryption), network deployment models, and handling of proprietary models/data are not addressed.

- Policies for sandboxing tool actions and preventing prompt injection or tool misuse are not detailed.

- Internationalization and accessibility:

- The agent’s performance across languages (non-English prompts), unit systems (SI vs imperial), and localization requirements is untested.

- Coverage and completeness of analysis:

- The agent accessed 356/2006 variables in one scenario, but criteria for variable selection, coverage guarantees, and risk of missing critical variables (e.g., constraints, alarms) are not defined.

- Evaluation artifacts and transparency:

- While the appendix reportedly contains full prompts/answers, standardized datasets, scripts, and open-source code for reproducing results are not provided; a public benchmark suite for process-simulation agents is absent.

- Industrial validation:

- No trials on real industrial models (proprietary complexity, noisy data, legacy constraints) or with practicing engineers under production conditions; acceptance, productivity impact, and error consequences remain unknown.

- Open research questions:

- How to formally fuse LLM planning with deterministic simulation and optimization to guarantee constraint satisfaction and correctness?

- What guardrail architectures (domain-specific validators, typed schemas, contracts) most effectively reduce hallucinations in engineering tasks?

- Can multi-agent critique meaningfully lower error rates without prohibitive latency/cost in process-simulation workflows?

- What standardized benchmarks and metrics should be used to compare agentic systems across simulators and process classes?
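Several of the gaps above (explicit safety constraints, constraint checking before tool execution, complete logs of parameter changes, fail-safe defaults) can be illustrated with a small pre-execution validator. This Python sketch assumes a hypothetical `BOUNDS` table keyed by hypothetical variable paths and an `apply` callback standing in for the paper's `var_set_multiple` tool. It is one possible guardrail pattern, not something the paper implements.

```python
import json
import time

# Hypothetical bounds keyed by hypothetical variable paths; real limits would come
# from the design basis (pressure ratings, purity specs), not from this table.
BOUNDS = {
    "C1.RefluxRatio": (0.5, 10.0),
    "C1.Pressure_kPa": (50.0, 500.0),
}


class GuardrailError(ValueError):
    """Raised when a proposed parameter change violates a bound."""


def check(values):
    """Return a list of violations; paths without bounds are rejected (fail-safe default)."""
    problems = []
    for path, value in values.items():
        if path not in BOUNDS:
            problems.append(f"{path}: no bounds defined")
            continue
        lo, hi = BOUNDS[path]
        if not lo <= value <= hi:
            problems.append(f"{path}={value} outside [{lo}, {hi}]")
    return problems


def guarded_set(values, apply, audit):
    """Validate a change, log the decision as a JSON line, and only then call `apply`."""
    problems = check(values)
    audit.append(json.dumps({
        "ts": time.time(),
        "tool": "var_set_multiple",
        "args": values,
        "accepted": not problems,
        "problems": problems,
    }))
    if problems:
        raise GuardrailError("; ".join(problems))
    return apply(values)
```

Rejecting unknown paths, rather than allowing them, makes the validator fail safe: a variable must be given bounds before the agent may change it. Logging rejected proposals as well as accepted ones gives reviewers a record of what the agent tried, not only what it did.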

Practical Applications

Immediate Applications

The paper’s MCP-enabled LLM agent for AVEVA Process Simulation (APS) is already capable of connecting to APS, inspecting flowsheets, setting/reading variables, and guiding construction steps. Based on these demonstrated capabilities and the curated toolset, the following use cases can be deployed now with human oversight.

- LLM copilot for process analysis and reporting

- Sector: chemicals, petrochemicals, pharma, energy

- Tools/products/workflows: Claude Desktop MCP client + FastMCP server + APS Python API; use models_list, connectors_list, model_all_vars/params, var_get_multiple, sim_status to auto-generate concise design review summaries (equipment topology, key operating conditions, KPIs, convergence status) and improvement options

- Assumptions/dependencies: APS 2025 license and scripting access; curated tool descriptors; human-in-the-loop review for interpretations; secure access to proprietary models

- Guided flowsheet construction (stepwise mode) for novices and cross-functional teams

- Sector: industry engineering teams; academia (lab courses)

- Tools/products/workflows: “step-by-step” prompt templates; model_add, models_connect, fluid_create, fluid_to_source, var_set_multiple for incremental build with instructor/mentor oversight; suitable for distillation, simple separations, baseline unit ops

- Assumptions/dependencies: instructor/engineer supervision; validated thermodynamics selections; guardrails for parameter ranges

- Single-prompt baseline synthesis for early design exploration

- Sector: process design consultancies; R&D

- Tools/products/workflows: one-shot prompts to produce converged baseline flowsheets (e.g., water–methanol distillation) for later expert refinement; fast brainstorming of configurations and operating envelopes

- Assumptions/dependencies: may oversimplify or misplace operating targets; immediate expert validation required; convergence troubleshooting support

- Automated data extraction, KPI dashboards, and documentation

- Sector: industrial design and operations; quality and documentation teams

- Tools/products/workflows: var_get_multiple and model_all_vars/params to pull structured snapshots; generate standardized tables/plots for energy duty, product purities, reflux/reboil ratios; attach to design reviews and change logs

- Assumptions/dependencies: standardized variable naming conventions; version-controlled simulations; approval workflows

- Lightweight sensitivity checks and parameter sweeps (scripted via the LLM)

- Sector: process development and optimization groups

- Tools/products/workflows: use var_set_multiple in loops planned by the agent to explore ranges (e.g., feed stage, reflux ratio) and report impacts on purity and energy

- Assumptions/dependencies: limited scope (small sweeps); manual guardrails on parameter bounds; compute/time for repeated runs; potential extension to dedicated sweep tools

- Educational tutoring and concept translation in process simulation

- Sector: higher education, corporate training

- Tools/products/workflows: interactive, natural-language explanations of thermodynamics, VLE, distillation trade-offs; live demonstrations of APS workflows using example files (e.g., water–methanol)

- Assumptions/dependencies: classroom APS licensing; curated prompt sets; instructor oversight to correct optimistic/ambiguous claims

- Brainstorming support for improvement options

- Sector: engineering teams; continuous improvement initiatives

- Tools/products/workflows: structured lists of configuration/operating changes (e.g., feed conditioning, reflux ratio tuning, heat integration), annotated with expected trade-offs

- Assumptions/dependencies: proposals are hypotheses; require validation via simulation runs and plant constraints; watch for potentially misleading suggestions

- Flowsheet inventory and knowledge capture for model governance

- Sector: model management, knowledge engineering

- Tools/products/workflows: automated extraction of unit inventories, connections, thermodynamics choices; produce BOM-like artifacts and audit trails of tool calls (JSON-RPC logs from MCP)

- Assumptions/dependencies: access to internal models; data governance policies; secure logging
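The lightweight sensitivity-check workflow can be sketched as a one-at-a-time sweep plus a constrained selection step. In this Python sketch, `simulate` is a placeholder for whatever sequence of tool calls sets a variable, solves the model, and reads results back (in the paper's toolset, roughly `var_set_multiple`, `sim_status`, and `var_get_multiple`). The KPI names and `toy_simulate` are made up, with no physical meaning, and exist only so the example runs.

```python
from typing import Callable, Dict, List, Optional

Row = Dict[str, float]


def sweep(param: str, values: List[float], simulate: Callable[[Row], dict]) -> List[Row]:
    """One-at-a-time sweep: set one parameter, solve, and keep only converged points."""
    rows = []
    for v in values:
        out = simulate({param: v})
        if out.get("converged"):
            kpis = {k: x for k, x in out.items() if k != "converged"}
            rows.append({param: v, **kpis})
    return rows


def best_feasible(rows: List[Row], objective: str,
                  constraint: Callable[[Row], bool]) -> Optional[Row]:
    """Minimise `objective` over the rows that satisfy `constraint`; None if none do."""
    feasible = [r for r in rows if constraint(r)]
    return min(feasible, key=lambda r: r[objective]) if feasible else None


def toy_simulate(inputs: Row) -> dict:
    """Made-up stand-in with no physical meaning: purity and duty both rise with R."""
    r = inputs["C1.RefluxRatio"]
    return {"converged": r >= 1.0, "x_MeOH": 1 - 0.1 / r, "Q_reb_kW": 100 * (1 + r)}
```

For example, `best_feasible(sweep("C1.RefluxRatio", [0.8, 1.0, 2.0, 4.0], toy_simulate), "Q_reb_kW", lambda r: r["x_MeOH"] >= 0.94)` drops the non-converged 0.8 point and returns the row at a reflux ratio of 2.0, the lowest-duty point that meets the purity target. Non-converged points are discarded rather than reported, because values read from an unconverged model are not meaningful.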

Long-Term Applications

With further research and tooling (e.g., adding optimization routines, robustness checks, and multi-agent orchestration), the framework can scale into the following advanced applications.

- Autonomous multi-step optimization and design-space exploration

- Sector: process development, advanced optimization groups

- Tools/products/workflows: integrate MAS orchestration (e.g., LangGraph, AutoGen) and optimization/sensitivity tools into the MCP server; closed-loop tuning of column parameters under constraints (purity, energy, throughput)

- Assumptions/dependencies: robust convergence strategies; domain-specific objective and constraint libraries; formal guardrails and fail-safe policies; extensive benchmarking

- Cross-simulator MCP ecosystems (vendor-neutral toolsets)

- Sector: software, chemicals, energy, pharma

- Tools/products/workflows: replicate the MCP server abstraction for Aspen Plus/HYSYS, DWSIM, and other platforms to standardize natural-language interfaces across tools

- Assumptions/dependencies: API access and vendor cooperation; harmonized tool schemas; maintenance with evolving versions

- Digital twin assistance and operational “what-if” analysis

- Sector: plant operations, advanced control, energy management

- Tools/products/workflows: connect APS models to plant historians; run scenario analyses (feed disturbances, setpoint changes) and generate operator guidance in natural language

- Assumptions/dependencies: validated high-fidelity models aligned with plant data; cybersecurity; regulatory approval for advisory use; operator training; human-in-the-loop decision-making

- Integrated techno-economic analysis (TEA), LCA, and ESG reporting pipelines

- Sector: finance and sustainability within engineering organizations; compliance teams

- Tools/products/workflows: map simulation outputs to economic/emissions metrics; produce standardized sustainability and cost reports; support early screening of process alternatives

- Assumptions/dependencies: verified correlations between simulation KPIs and economic/LCA models; consistent data provenance; auditability

- AI-assisted safety and operability pre-analysis (HAZOP support)

- Sector: process safety and risk management

- Tools/products/workflows: pre-HAZOP scanning of operating envelopes and potential failure modes based on flowsheet structure and parameter dependencies; draft guidewords and scenarios

- Assumptions/dependencies: certified methodologies; conservative risk posture; expert-led final analyses; traceability of reasoning

- Standardized curricula and competency frameworks for conversational simulation

- Sector: academia and workforce development

- Tools/products/workflows: course modules centered on LLM-enabled simulators; open benchmark flowsheets; assessment rubrics for human-in-the-loop use

- Assumptions/dependencies: pedagogical research; institutional buy-in; equitable access to tools

- MCP tool marketplace and organizational prompt libraries

- Sector: software and enterprise engineering enablement

- Tools/products/workflows: reusable tool plugins (e.g., convergence troubleshooting, model sanitizers), prompt packs for common workflows (distillation setup, data audit, report generation)

- Assumptions/dependencies: governance of shared assets; versioning and compatibility testing; security vetting

- Coupling with laboratory automation and computational chemistry agents

- Sector: R&D (pharma, specialty chemicals)

- Tools/products/workflows: bridge process simulations with autonomous experiment planning (e.g., ChemCrow/Coscientist-like agents) to close the loop from lab kinetics to flowsheet design

- Assumptions/dependencies: integrated data standards; experiment–simulation model calibration; safety and IP controls

Notes on Feasibility and Risk

- The framework preserves deterministic computation via APS while delegating planning and orchestration to the LLM agent; expert oversight remains necessary, especially given risks of oversimplification or optimistic interpretations.

- Dependencies include stable simulator APIs, robust MCP tooling, secure data access, and high-quality LLM tool-use behavior.

- Scaling to safety-critical or operational contexts requires formal validation protocols, audit logging, and governance aligned with industry and regulatory standards.

Glossary

- Agent2Agent: A high-level protocol for coordinating orchestration between agent systems. "Agent2Agent~\cite{a2a_google_2024} can help coordinate orchestration between these systems"

- APS scripting interface: The Python-based API of APS enabling programmatic access to simulation functionalities. "via the APS scripting interface, a comprehensive Python-based API"

- AVEVA Process Simulation (APS): A commercial chemical process simulator for modeling and analyzing processes. "an LLM agent is integrated with AVEVA Process Simulation (APS) via Model Context Protocol (MCP)"

- AutoGen: An orchestration framework for cyclic, stateful agent workflows. "Frameworks such as LangGraph~\cite{langgraph} and AutoGen~\cite{autogen} provide infrastructure for cyclic, stateful workflows"

- Azeotrope: A mixture whose vapor and liquid phases have the same composition at certain conditions, preventing further separation by simple distillation. "While the system does not form an azeotrope"

- Binary separation: Separation of a two-component mixture, typically by methods like distillation. "models the binary separation of methanol and water via distillation"

- Bottoms product: The liquid product drawn from the bottom of a distillation column. "Use hot bottoms product to preheat feed"

- Bubble point: The temperature at which a liquid mixture begins to boil at a given pressure. "Preheat feed closer to bubble point or partial vaporization"

- Chain-of-thought reasoning: A prompting technique that elicits step-by-step reasoning from LLMs. "chain-of-thought reasoning~\cite{wei_chain--thought_nodate}"

- Cognition: An agentic framework demonstrating specialized autonomous engineering through planning and interaction. "Cognition~\cite{cognition} demonstrates specialized autonomous engineering"

- Condenser: A unit that condenses vapor at the top of a distillation column. "equipped with both a reboiler and a condenser"

- Convergence status: An indicator of whether a simulation is properly specified and solved. "Get input, specification, and convergence status of APS simulation."

- Deterministic, physics-based solver algorithms: Numerical engines that compute results based on physical laws without stochasticity. "deterministic, physics-based solver algorithms provided by APS."

- Distillate: The overhead product collected from the top of a distillation column. "sink modules for collecting the bottom and distillate products."

- Distillation column: Equipment that separates mixture components based on volatility differences. "a distillation column equipped with both a reboiler and a condenser"

- Double-Effect Configuration: An energy-saving distillation setup using two columns at different pressures for heat coupling. "Double-Effect Configuration"

- Equimolar: Having equal molar amounts of each component. "an equimolar mixture of water and methanol"

- FastMCP: A Python framework for building lightweight MCP servers. "Our implementation uses FastMCP, a modern Python framework for building lightweight MCP servers"

- Feed stage location: The tray at which the feed enters a distillation column. "the feed stage location"

- Feedforward control: A control strategy that compensates for disturbances based on their measurements before they affect the system. "Add feedforward control for feed disturbances"

- Flowsheet: A diagrammatic representation of process units and streams in a simulation. "Simulation flowsheet of APS example \"C1 - Water Methanol Separation\"."

- Flowsheet synthesis: The construction of a complete process flowsheet from specifications. "autonomous flowsheet synthesis"

- Heat integration: The recovery and reuse of heat within a process to reduce energy consumption. "Benefit: Better heat integration and product flexibility"

- Hydrodealkylation: A petrochemical reaction that removes alkyl groups from aromatics using hydrogen, often optimized in process design. "outperforms traditional methods on hydrodealkylation optimization."

- Instruction tuning: Fine-tuning LLMs to better follow instructions and tasks. "instruction tuning~\cite{zhang_instruction_2025}"

- JSON-RPC messaging: A lightweight remote procedure call protocol that uses JSON for structured communication. "handling communication via standardized JSON-RPC messaging."

- LangGraph: A framework enabling cyclic, stateful agent workflows. "Frameworks such as LangGraph~\cite{langgraph} and AutoGen~\cite{autogen} provide infrastructure for cyclic, stateful workflows"

- Model Context Protocol (MCP): A standard for connecting an agent’s cognitive core to external tools and data. "via Model Context Protocol (MCP)"

- Multi-Agent Systems (MASs): Architectures where multiple specialized agents cooperate on complex tasks. "Multi-Agent Systems (MASs), which distribute objectives across specialized entities"

- NRTL: The Non-Random Two-Liquid model for non-ideal liquid-phase activity coefficients. "such as NRTL, to capture phase equilibrium correctly"

- Phase equilibrium: The state where multiple phases coexist with consistent compositions at given conditions. "to capture phase equilibrium correctly"

- Raoult's law: For an ideal liquid mixture, each component's partial vapor pressure equals its liquid mole fraction times its pure-component vapor pressure. "positive deviations from Raoult's law"

- Reactive distillation: A unit operation combining chemical reaction and distillation in one column. "Reactive Distillation"

- Reboiler: A heat exchanger at the bottom of a distillation column that provides boil-up. "equipped with both a reboiler and a condenser"

- Reboiler duty: The heat input required by the reboiler. "Minimize reboiler duty while maintaining product quality"

- Relative volatility: A measure of the ease of separation by distillation between two components. "the relative volatility varies significantly with composition"

- Reinforcement learning from human feedback: A training approach where models learn behaviors aligned with human preferences via feedback signals. "reinforcement learning from human feedback~\cite{bai_training_2022}"

- Retrosynthesis: A planning strategy in chemistry where target molecules are decomposed into simpler precursors. "retrosynthesis planning"

- Reflux ratio: The ratio of liquid returned to the column relative to distillate withdrawn. "Reflux Ratio Adjustment"

- Structured packing: Engineered internal column packing that enhances mass transfer with low pressure drop. "Install high-efficiency trays (structured packing)"

- Subcooled liquid: A liquid at a temperature below its saturation temperature at the given pressure. "subcooled liquid, VF = -0.33"

- Thermodynamic property models: Mathematical models used to estimate thermodynamic properties and phase behavior. "manually select thermodynamic property models and operating parameters"

- Tool orchestration: The capability of agents to select and sequence external tools to accomplish tasks. "tool orchestration capabilities"

- Tray efficiency: A metric indicating how effectively a tray (or stage) achieves the intended separation compared to an ideal stage. "Standard tray efficiency of 100\% assumed throughout the column"

- Vapour-liquid behaviour: The characteristics of mixtures involving vapor and liquid phases, including non-idealities. "non-ideal vapour-liquid behaviour"
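Several of these entries (Raoult's law, NRTL, relative volatility, azeotrope) connect through the standard vapor-liquid equilibrium relations. For component $i$ with liquid mole fraction $x_i$, vapor mole fraction $y_i$, system pressure $P$, pure-component vapor pressure $P_i^{\text{sat}}(T)$, and liquid-phase activity coefficient $\gamma_i$ (for example from NRTL):

$$
\text{Raoult's law (ideal):}\quad y_i P = x_i P_i^{\text{sat}}(T)
$$

$$
\text{Modified Raoult's law (non-ideal liquid):}\quad y_i P = x_i \gamma_i P_i^{\text{sat}}(T), \qquad \gamma_i > 1 \text{ for positive deviations}
$$

$$
\alpha_{12} = \frac{y_1 / x_1}{y_2 / x_2} = \frac{\gamma_1 P_1^{\text{sat}}}{\gamma_2 P_2^{\text{sat}}}
$$

Because $P$ cancels in $\alpha_{12}$, the relative volatility depends on the activity coefficients; when the $\gamma_i$ vary with composition, as NRTL predicts for water-methanol, so does $\alpha_{12}$. An azeotrope corresponds to $\alpha_{12} = 1$, where the vapor and liquid compositions coincide and simple distillation can no longer enrich either phase.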