- The paper introduces EviNAM, which unifies interpretable per-feature decomposition with evidential uncertainty estimation in a single model framework.

- It parametrizes distribution outputs per feature using monotonic nonlinearities, enabling closed-form estimates for predictions and both types of uncertainty.

- Experimental results on OpenML datasets show competitive performance and enhanced interpretability compared to ensemble and Bayesian methods.

EviNAM: Intelligibility and Uncertainty via Evidential Neural Additive Models

Introduction and Motivation

The paper presents EviNAM, a model architecture that unifies the intelligibility of Neural Additive Models (NAMs) with the direct, single-pass estimation of both aleatoric and epistemic uncertainty via evidential learning principles. Interpretable modeling approaches such as Generalized Additive Models (GAMs) and their neural extension, NAMs, permit per-feature decomposition of predictions, a notable advantage for human oversight and debugging. However, standard NAMs lack reliable uncertainty quantification and remain susceptible to overconfident predictions in out-of-distribution regimes.

Bayesian Neural Networks (BNNs), ensemble methods, and Deep Evidential Regression (DER) partially address uncertainty estimation, yet they are either computationally expensive or do not fit the additive, interpretable paradigm of NAMs. Prior attempts to combine additivity with uncertainty estimation, such as BNAM, also add substantial computational cost because they rely on sampling. EviNAM overcomes these limitations by giving every feature its own contribution to every parameter of the target distribution and by applying the required nonlinearities at the feature level rather than after aggregation, which preserves additivity and interpretability.

Methodological Contributions

EviNAM is constructed by integrating the evidential parameterization of outputs as in DER into the NAM framework. For regression tasks, each feature-specific neural network predicts all parameters of the Normal Inverse Gamma (NIG) distribution, enabling closed-form estimates of the predictive mean, aleatoric uncertainty, and epistemic uncertainty per sample. The model enforces the non-negativity and other constraints on distributional parameters at the feature contribution level rather than after aggregation, using monotonic nonlinearities (e.g., softplus), which maintains strict additivity over features for each parameter.
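The closed-form estimates mentioned above can be illustrated with a minimal sketch. It assumes the standard DER formulas for an NIG with parameters (γ, υ, α, β): prediction γ, aleatoric uncertainty β/(α−1), and epistemic uncertainty β/(υ(α−1)). The function name `nig_moments` and the example values are hypothetical and not taken from the paper.

```python
import numpy as np

def nig_moments(gamma, nu, alpha, beta):
    """Closed-form summaries of a Normal Inverse Gamma (NIG) output.

    Standard DER formulas (assumed here, not quoted from the paper):
      prediction  E[mu]      = gamma
      aleatoric   E[sigma^2] = beta / (alpha - 1)
      epistemic   Var[mu]    = beta / (nu * (alpha - 1))
    Valid only for nu > 0, alpha > 1, beta > 0.
    """
    gamma, nu, alpha, beta = (np.asarray(v, dtype=float)
                              for v in (gamma, nu, alpha, beta))
    if np.any(nu <= 0) or np.any(alpha <= 1) or np.any(beta <= 0):
        raise ValueError("need nu > 0, alpha > 1, beta > 0")
    aleatoric = beta / (alpha - 1.0)
    epistemic = aleatoric / nu  # same as beta / (nu * (alpha - 1))
    return gamma, aleatoric, epistemic

# Illustrative values only.
pred, alea, epi = nig_moments(gamma=2.0, nu=4.0, alpha=3.0, beta=1.0)
```

All three quantities come from a single forward pass, which is why no sampling or ensembling is needed at inference time.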

This approach is operationalized as follows: for a sample xi, each feature j and distributional parameter k (e.g., mean, υ, α, β) has a dedicated network whose output is transformed via a monotonic function before summation. The overall parameter for the distribution is then the sum of the transformed feature-level contributions and a global bias, ensuring that each feature's influence on not just the expected outcome but also the distributional parameters—and thus the uncertainties—remains explicit and decomposable.
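The aggregation described above can be sketched in NumPy. This is an illustration under stated assumptions, not the authors' implementation: the per-feature networks are random one-hidden-layer stand-ins, softplus is used for υ, α, and β, the mean is left unconstrained, and a fixed bias of 1 on α is assumed so that α > 1. The names `make_feature_net` and `evinam_forward` are hypothetical.

```python
import numpy as np

def softplus(z):
    # Numerically stable softplus: log(1 + exp(z)), positive for finite z.
    return np.logaddexp(0.0, z)

def make_feature_net(rng, hidden=8, n_params=4):
    """Hypothetical stand-in for one feature network: scalar in, 4 raw outputs."""
    w1 = rng.normal(size=(1, hidden))
    b1 = rng.normal(size=hidden)
    w2 = rng.normal(size=(hidden, n_params))
    return lambda x: np.tanh(x[:, None] @ w1 + b1) @ w2  # shape (n, 4)

def evinam_forward(X, feature_nets, bias_gamma=0.0):
    """Constrain each feature's outputs first, then sum over features.

    Returns totals of shape (n, 4), ordered (gamma, nu, alpha, beta),
    and the per-feature contributions of shape (n, d, 4).
    """
    n, d = X.shape
    contrib = np.empty((n, d, 4))
    for j, net in enumerate(feature_nets):
        raw = net(X[:, j])
        contrib[:, j, 0] = raw[:, 0]              # gamma: unconstrained
        contrib[:, j, 1:] = softplus(raw[:, 1:])  # nu, alpha, beta: positive
    totals = contrib.sum(axis=1)
    totals[:, 0] += bias_gamma
    totals[:, 2] += 1.0  # assumed global bias that keeps alpha > 1
    return totals, contrib

rng = np.random.default_rng(0)
X = rng.normal(size=(5, 3))
nets = [make_feature_net(rng) for _ in range(X.shape[1])]
totals, contrib = evinam_forward(X, nets)
```

Because the nonlinearity is applied before the sum, `contrib[:, j, k]` is exactly feature j's share of parameter k. Applying softplus after summation would satisfy the same constraints but would no longer split the result into per-feature terms.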

For classification, the paper applies the same additive, evidential strategy to the Dirichlet parameterization used in deep evidential classification (DEC). The nonlinearity required for validity (e.g., αc≥1) is again applied at the feature level, which yields class probabilities that are additive over features, a property that traditional softmax-based NAMs do not guarantee.
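A minimal sketch of the classification case follows. It assumes the usual evidential-classification conventions: per-feature evidence obtained with softplus, αc equal to 1 plus the summed evidence, expected probabilities αc/S with S the sum of all αc, and K/S as a vacuity-style uncertainty for K classes. The function name, the input layout, and the way per-feature shares are read off are illustrative assumptions.

```python
import numpy as np

def softplus(z):
    # Numerically stable softplus: log(1 + exp(z)).
    return np.logaddexp(0.0, z)

def dirichlet_from_features(raw):
    """raw: (n, d, K) unconstrained per-feature, per-class outputs (hypothetical)."""
    evidence = softplus(raw)                     # nonnegative per-feature evidence
    alpha = 1.0 + evidence.sum(axis=1)           # (n, K), every alpha_c >= 1
    strength = alpha.sum(axis=1, keepdims=True)  # Dirichlet strength S, shape (n, 1)
    probs = alpha / strength                     # expected class probabilities
    vacuity = raw.shape[2] / strength[:, 0]      # K / S, lies in (0, 1]
    # Feature j's share of class c's probability; the constant 1 / S is the bias share.
    feat_share = evidence / strength[:, None, :]
    return alpha, probs, vacuity, feat_share

rng = np.random.default_rng(0)
raw = rng.normal(size=(4, 3, 5))  # 4 samples, 3 features, 5 classes
alpha, probs, vacuity, feat_share = dirichlet_from_features(raw)
```

For a given sample, each class probability equals the sum of the feature shares plus the bias share 1/S. A softmax over summed logits has no comparable per-feature split.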

Experimental Results

The forwarding-nonlinearity strategy was evaluated directly on the OpenML 353 regression suite. By average rank over NLL, CRPS, and MAE, it performed on par with earlier strategies that apply the nonlinearity after aggregation, with the added benefit of strict interpretability.

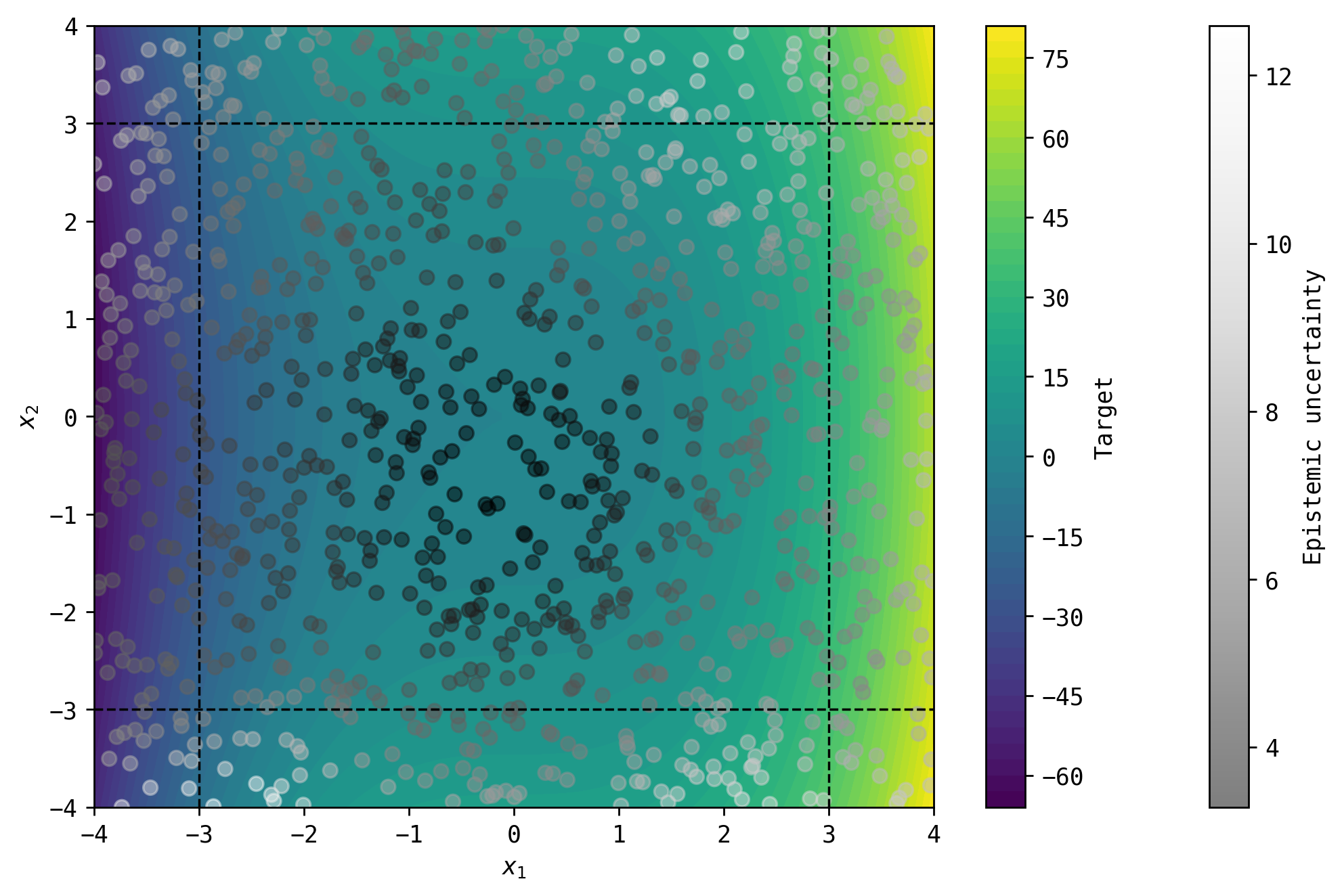

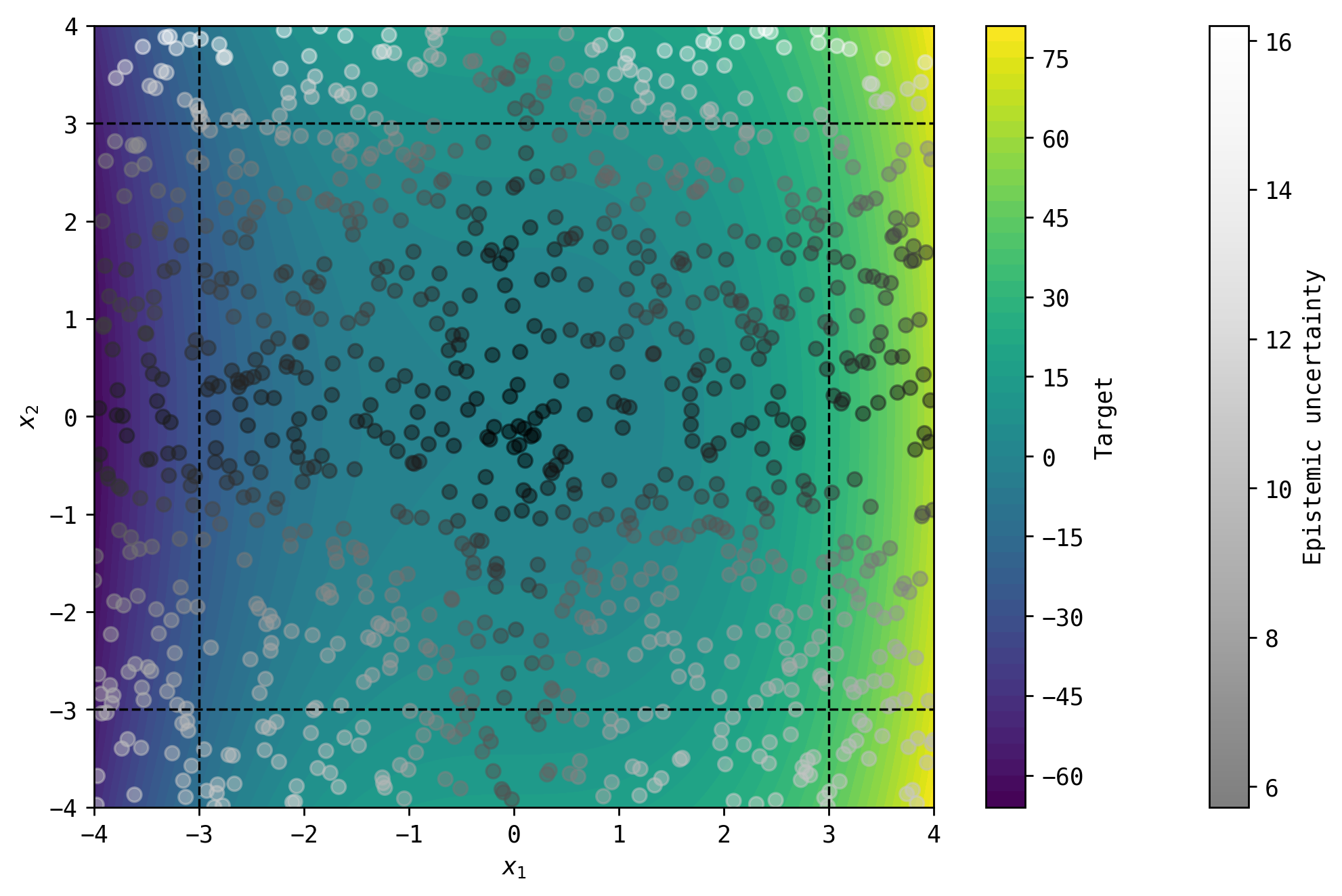

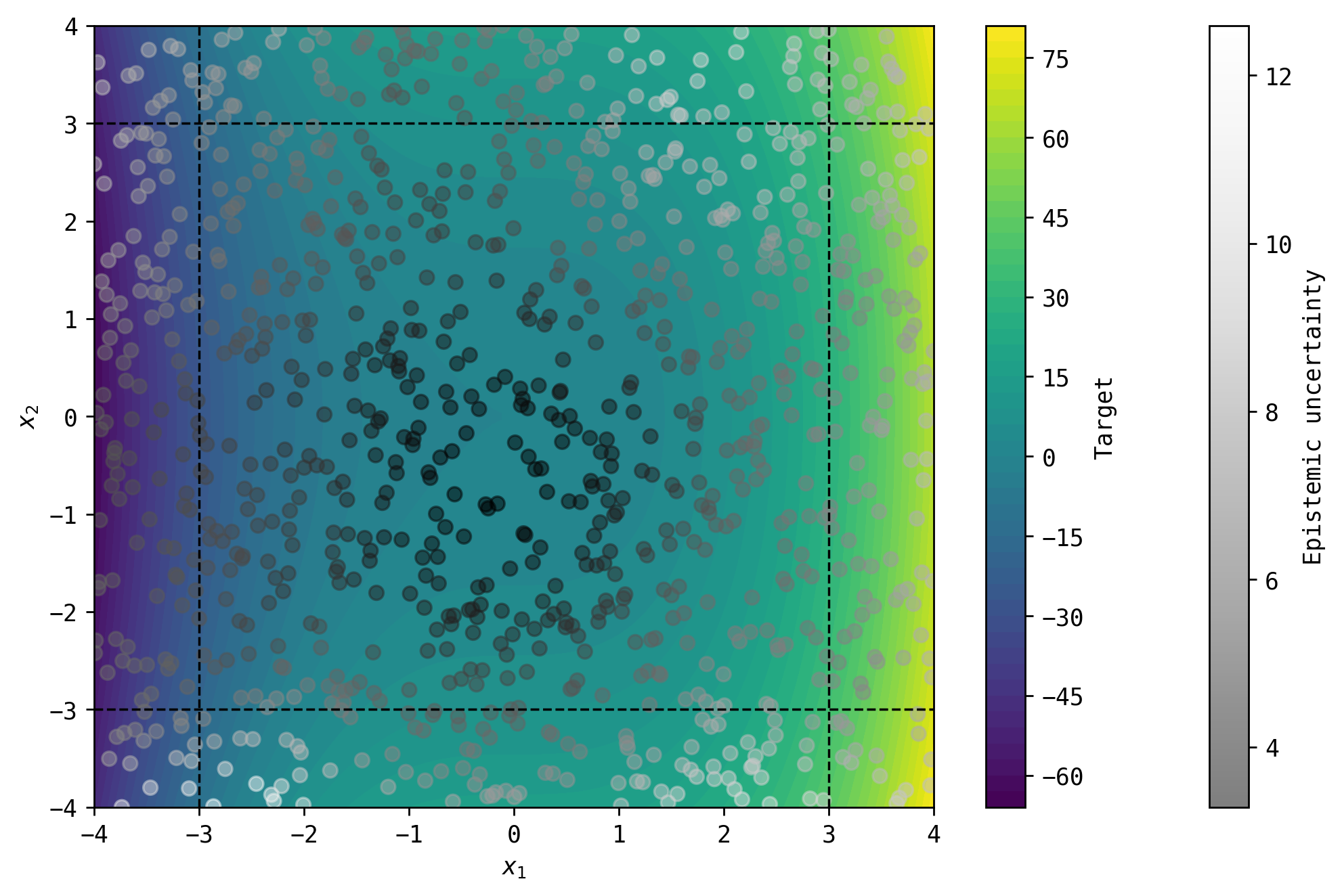

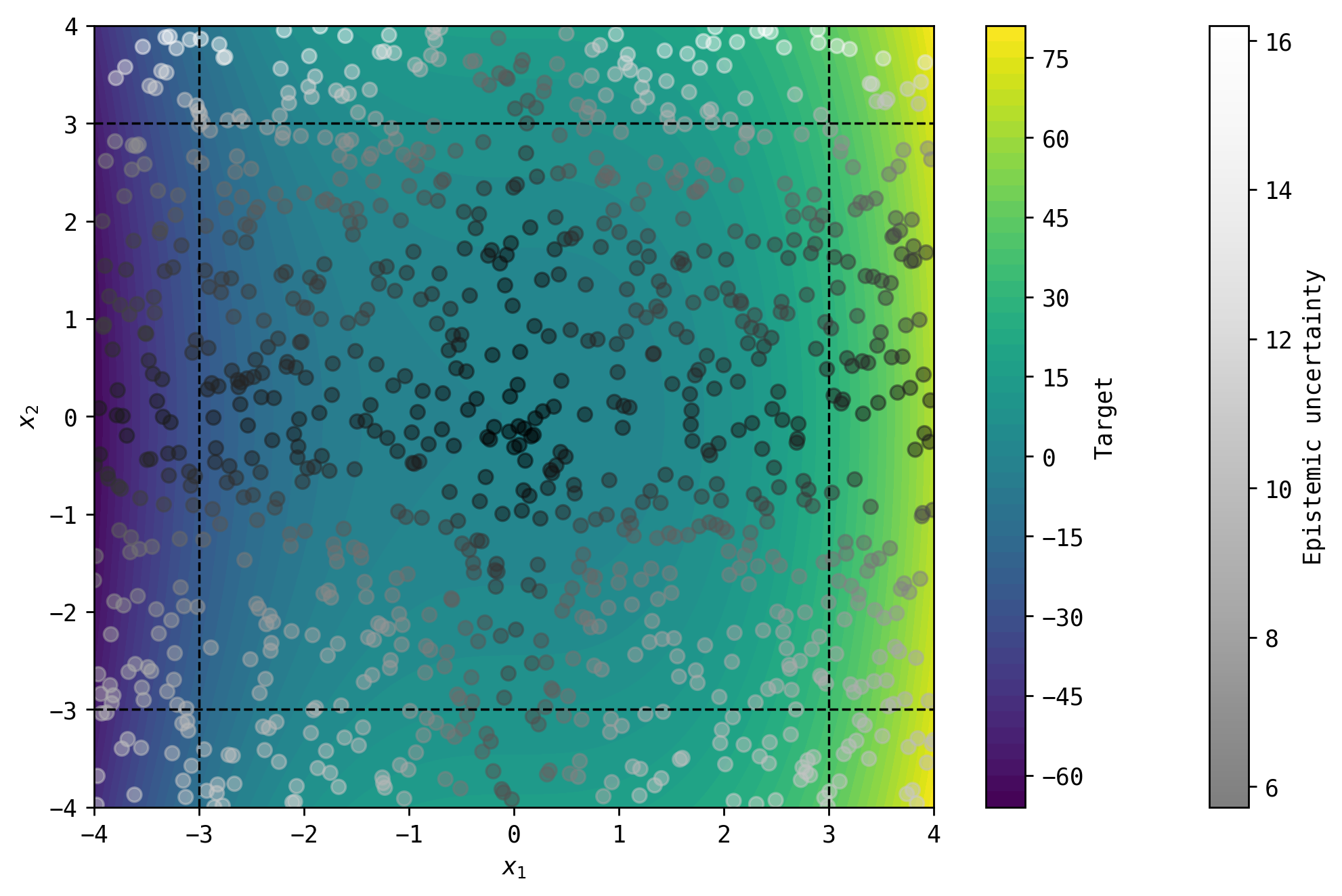

EviNAM matches the performance of state-of-the-art deterministic and uncertainty-aware NAM derivatives on both synthetic and real-world datasets, while being the only one among them to provide single-pass estimates of both epistemic and aleatoric uncertainty together with a feature-level decomposition.

Figure 1: 1D and 2D synthetic data experiments demonstrate that EviNAM produces epistemic uncertainty estimates in and out of domain that closely mirror state-of-the-art non-additive DER baselines, visualized with ground truth, predictions, and uncertainty bands.

For regression on 24 OpenML datasets, EviNAM outperformed standard NAM and EnsNAM (uncertainty via ensembles) in terms of NLL and CRPS metrics, and was competitive with NAMLSS (which does not cover epistemic uncertainty), only marginally trailing in some metrics due to the greater modeling generality of NIG. EviNAM achieved the best MAE on several datasets and demonstrated no significant loss in predictive quality while providing richer outputs.
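For readers unfamiliar with how NLL is computed for an NIG output, here is a hedged sketch. It assumes the NLL is evaluated under the Student-t predictive distribution implied by the NIG, as in DER; the summary does not state the exact evaluation protocol, and the baselines would use their own predictive densities. Function names and example values are hypothetical.

```python
import math

def nig_nll(y, gamma, nu, alpha, beta):
    """Negative log-likelihood of y under the Student-t marginal of an NIG."""
    omega = 2.0 * beta * (1.0 + nu)
    return (0.5 * math.log(math.pi / nu)
            - alpha * math.log(omega)
            + (alpha + 0.5) * math.log(nu * (y - gamma) ** 2 + omega)
            + math.lgamma(alpha) - math.lgamma(alpha + 0.5))

def student_t_nll(y, loc, scale2, df):
    """Reference: negative log-density of a location-scale Student-t."""
    z2 = (y - loc) ** 2 / (df * scale2)
    return (math.lgamma(df / 2.0) - math.lgamma((df + 1.0) / 2.0)
            + 0.5 * math.log(df * math.pi * scale2)
            + 0.5 * (df + 1.0) * math.log1p(z2))

# Illustrative values only.
gamma, nu, alpha, beta, y = 2.0, 4.0, 3.0, 1.0, 2.5
nll_a = nig_nll(y, gamma, nu, alpha, beta)
# The same density written as a Student-t with 2*alpha degrees of freedom,
# location gamma, and squared scale beta * (1 + nu) / (nu * alpha).
nll_b = student_t_nll(y, gamma, beta * (1.0 + nu) / (nu * alpha), 2.0 * alpha)
```

The two functions are algebraically equivalent; the second serves only as a cross-check of the first.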

In classification (OpenML 99 suite), EviNAM remained competitive, sometimes achieving the best accuracy, Brier score or calibration. While the accuracy sometimes trailed deterministic baselines, EviNAM is the only method among interpretable additive models to provide additivity-preserving, per-feature class probabilities and both uncertainty types in a single pass. It achieved substantial speed-ups relative to ensemble-based methods and BNNs, requiring less training and inference compute.

Interpretability and Qualitative Analysis

On real-world datasets (e.g., California housing), the model provides explicit, decomposable feature effects not only on the output but also on the estimated uncertainties. Visualizations reveal that, for instance, the median income feature's contribution to both prediction mean and uncertainty is high in data-scarce and high-value regimes, empowering human domain experts to pinpoint model vulnerabilities and regions where decisions may warrant caution or deferral.

Practical and Theoretical Implications

Practically, EviNAM addresses several key needs:

- Model Intelligibility: Human-interpretable, per-feature decomposition for all predictive statistics.

- Single-pass Uncertainty: Integrated estimation of aleatoric and epistemic uncertainty at inference time without ensembling or parameter sampling.

- Computational Efficiency: Efficient compared to BNNs and ensemble NAMs, facilitating deployment in compute-constrained scenarios.

Theoretically, the forwarding-nonlinearity principle generalizes to any additive model with distributional outputs (including those with higher-order feature interactions), holding implications for interpretable and modular composition of deep models. The method's flexibility with respect to activation functions further broadens its applicability.

Limitations and Future Directions

EviNAM inherits the known difficulty of evidential approaches in calibrating epistemic uncertainty, as discussed in the cited literature. Because the method builds on the NAM class, it cannot represent high-order feature interactions unless it is extended with pairwise interaction modules. Future research should consider such generalizations, along with further empirical study of uncertainty interpretability in more complex, structured domains.

The framework could be extended to other generalized additive models, survival analysis, and settings with structured outputs. Experimentally, its use in active learning and human-in-the-loop pipelines is a promising avenue, given explicit OOD identification.

Conclusion

EviNAM advances the state of interpretable uncertainty quantification by reconciling feature-level additivity with evidential single-pass uncertainty estimation. It offers a compelling, computationally efficient tool for interpretable regression and classification—situating itself as a practical approach for domains requiring transparent, trustworthy, and accountable AI decision support (2601.08556).