- The paper introduces SALEM, a protocol that uses syndrome information to drastically reduce the sampling overhead in logical error mitigation.

- It details both fine-grained and coarse-grained implementations that adapt error mitigation based on syndrome-conditioned estimators and decoder metrics.

- Numerical simulations show that SALEM extends circuit volume by up to 200× over standard methods, making it a promising approach for near-term quantum computing.

Syndrome-Aware Logical Error Mitigation: Fundamental Advances in Quantum Error Management

Introduction and Motivation

Quantum error correction (QEC) is critical for the practical realization of quantum computing, but resource constraints—particularly the number of available physical qubits—ensure that logical errors persist and fundamentally limit achievable quantum circuit depth and output fidelity. Recently, logical error mitigation (LEM) was introduced as a post-QEC strategy, wherein standard quantum error mitigation (EM) techniques are applied to minimize logical-level errors, albeit with considerable run-time and sampling overheads. The present work introduces syndrome-aware logical error mitigation (SALEM), an approach for LEM that explicitly incorporates the syndrome information generated during QEC to drastically reduce sampling overhead and thus expand the feasible circuit volume and accuracy within fixed physical and temporal resources (2512.23810).

Background and Prior Work

Fault-tolerant quantum computation relies on encoding logical qubits into physical states such that prevalent errors can be detected and corrected by syndrome measurements. While QEC reduces logical error rates, it does not suppress them below the thresholds necessary for all high-fidelity applications, especially as industry-relevant quantum algorithms will involve circuit volumes of $O(10^6)$ logical gates. Existing LEM protocols, such as ExtLEM, treat logical gates as noisy and mitigate their errors using EM without leveraging syndrome values. This induces an exponential overhead in sample complexity (scaling with the logical error rate and circuit size), which severely restricts scalability. The standard approach of syndrome post-selection (EC+PS) is even less scalable due to inefficient rejection and residual bias in accepted shots.

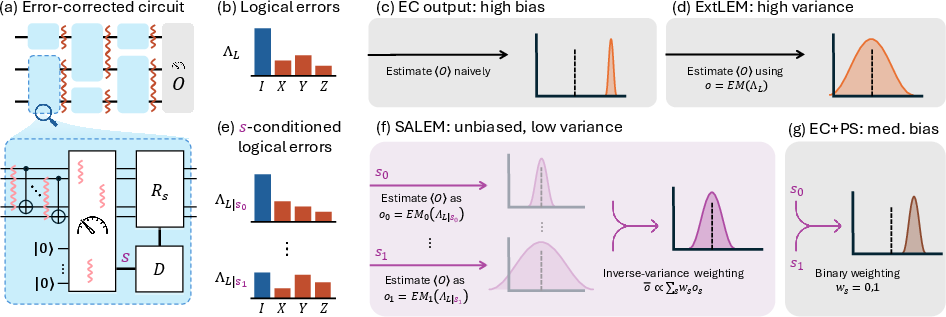

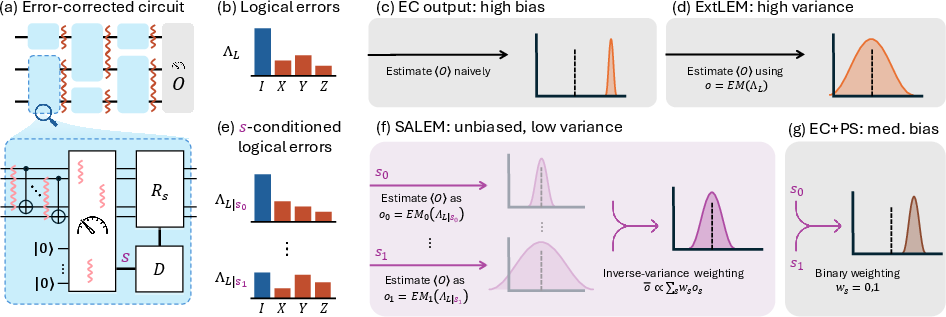

SALEM postulates that not all detected syndromes are equally informative with respect to the logical error channel: the distribution of residual logical errors after QEC is highly non-uniform and strongly conditioned on observed syndrome patterns. SALEM leverages this by adaptively applying different EM corrections for each syndrome (or equi-informative syndrome subset), effectively turning logical error mitigation into a syndrome-conditional estimation problem.

The procedure can be instantiated at fine or coarse grain:

- Fine-Grained SALEM (FG-SALEM): For each syndrome or joint syndrome string observed across the circuit, a bespoke EM protocol EMs is applied, yielding an unbiased estimator for the observable of interest. These syndrome-conditioned estimators are then averaged with weights inversely proportional to their variance ("inverse variance weighting").

Figure 1: Conceptual overview of SALEM, comparing classical EC, ExtLEM, EC+PS, and the proposed scheme, highlighting the efficient syndrome-conditional estimation pipeline.

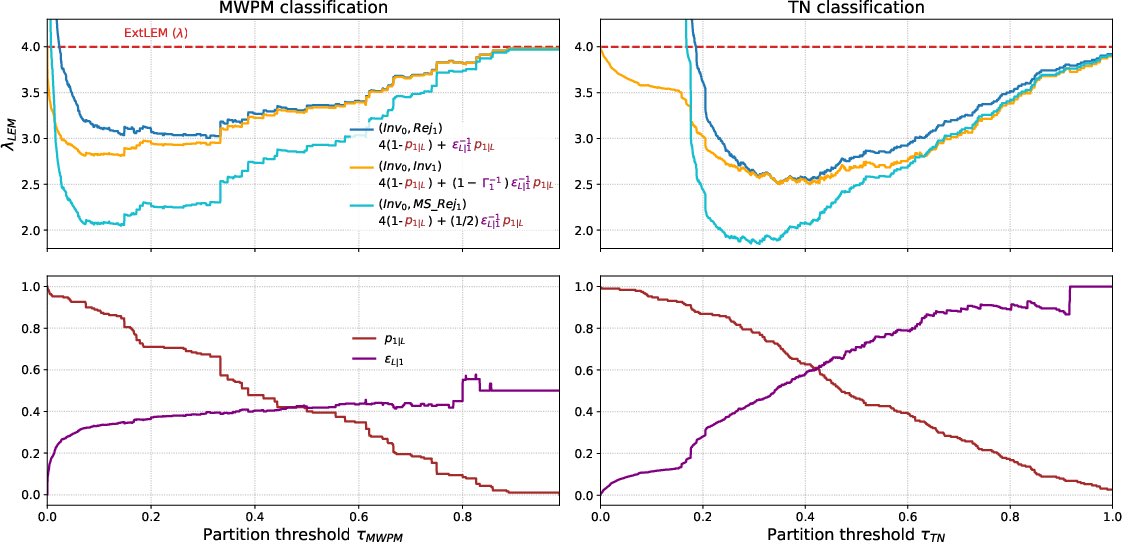

- Coarse-Grained SALEM (CG-SALEM): Syndromes are partitioned into a small number of classes based on efficiently computable metrics (e.g., decoder confidence). Separate EM protocols, or rejection, are applied to each class. This strategy trades off some of the optimality of FG-SALEM for massively improved practical feasibility on large codes.
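The inverse variance weighting used to combine syndrome-conditioned estimators can be sketched as follows. This is a minimal illustration, not the paper's implementation: the function name `inverse_variance_combine` and the per-syndrome estimates and variances are hypothetical, and the estimators are assumed to be independent and unbiased.

```python
def inverse_variance_combine(estimates, variances):
    """Combine independent unbiased estimates with weights proportional to 1/variance.

    Returns the combined estimate and its variance, 1 / sum(1 / var_s).
    """
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    combined = sum(w * e for w, e in zip(weights, estimates)) / total
    combined_variance = 1.0 / total
    return combined, combined_variance


# Hypothetical per-syndrome estimates of <Z> and their variances (illustrative only).
estimates = [0.90, 0.88, 0.70]
variances = [0.01, 0.02, 0.50]

value, variance = inverse_variance_combine(estimates, variances)
print(f"combined estimate: {value:.4f}, variance: {variance:.5f}")
```

The combined variance is never larger than the smallest individual variance, so a noisy estimator from a "bad" syndrome contributes little weight and does not degrade the result.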

Under both schemes, the critical improvement is the reduction of effective sample overhead from a geometric mean over all syndromes (as in ExtLEM) to a harmonic mean, with the gain determined by the syndrome-dependent non-uniformity of the logical error channel. Mathematically, this exploits a highly skewed distribution induced by the minimal-weight fault structure of QEC codes; rare "bad" syndromes dominate the logical error probability but can be efficiently flagged and treated separately.
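To give a feel for why moving from a geometric to a harmonic mean helps under a skewed distribution, the sketch below compares the two weighted means for a hypothetical two-class overhead profile. The overhead values and probabilities are invented for illustration, and how the paper defines the per-syndrome overheads and their weights is not reproduced here; the only fact relied on is that a weighted harmonic mean never exceeds the weighted geometric mean of the same positive values.

```python
import math


def weighted_geometric_mean(values, probs):
    """exp(sum_s p_s * ln(x_s)) for positive values x_s and probabilities p_s."""
    return math.exp(sum(p * math.log(v) for v, p in zip(values, probs)))


def weighted_harmonic_mean(values, probs):
    """1 / sum_s (p_s / x_s) for positive values x_s and probabilities p_s."""
    return 1.0 / sum(p / v for v, p in zip(values, probs))


# Hypothetical profile: common "good" syndromes with small overhead,
# rare "bad" syndromes with very large overhead.
overheads = [1.1, 1000.0]
probs = [0.9, 0.1]

geo = weighted_geometric_mean(overheads, probs)
har = weighted_harmonic_mean(overheads, probs)
print(f"geometric mean: {geo:.3f}, harmonic mean: {har:.3f}")
```

The harmonic mean is dominated by the small, common overheads, while the geometric mean is pulled up by the rare large one, which is the qualitative effect the skewed syndrome distribution makes available.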

Analysis of Sample Complexity and Runtime Overhead

Quantitative analysis demonstrates that SALEM reduces exponential blowup rates in shot overhead relative to standard LEM. The improvement is especially pronounced for codes and circuits where the syndrome-conditioned logical error rate varies dramatically between syndrome classes.
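The link between a blowup rate and reachable circuit volume can be made concrete with a small sketch. It assumes, purely for illustration, that the shot overhead grows as `exp(rate * volume)`; the function name `max_volume`, the shot budget, and the two rates are hypothetical and are not taken from the paper.

```python
import math


def max_volume(shot_budget, blowup_rate):
    """Largest circuit volume V with exp(blowup_rate * V) <= shot_budget.

    Assumes a purely exponential overhead model (an illustrative assumption).
    """
    return math.log(shot_budget) / blowup_rate


shot_budget = 1e6          # hypothetical number of affordable shots
rate_baseline = 4e-4       # hypothetical blowup rate of a baseline protocol
rate_improved = 1e-4       # hypothetical lower blowup rate

v_base = max_volume(shot_budget, rate_baseline)
v_impr = max_volume(shot_budget, rate_improved)
print(f"baseline volume: {v_base:.0f}, improved volume: {v_impr:.0f}")
print(f"volume extension factor: {v_impr / v_base:.1f}")
```

Under this model the volume extension at a fixed shot budget equals the ratio of the two blowup rates, independent of the budget itself.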

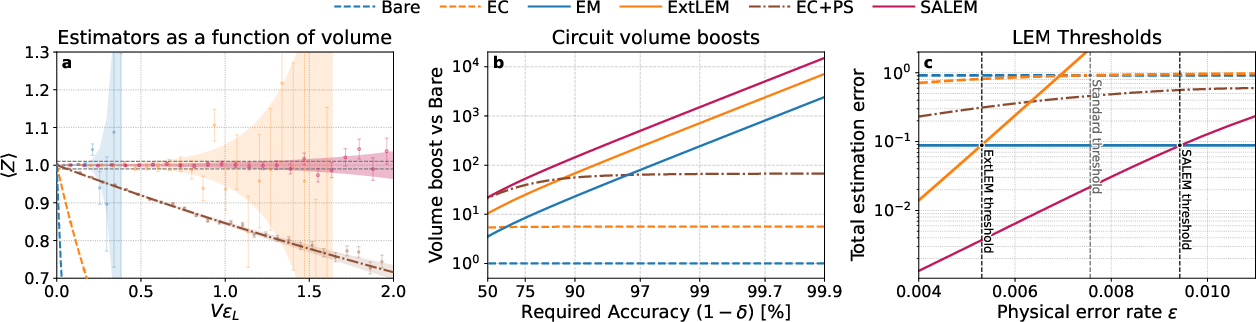

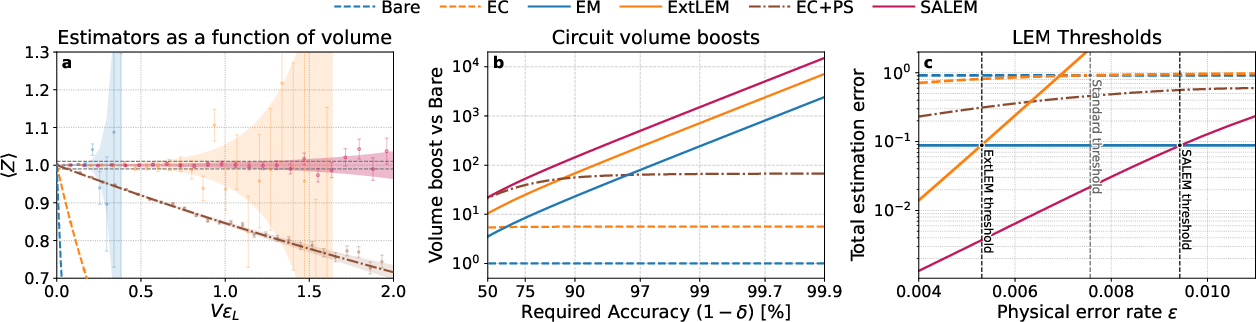

Figure 2: Performance of estimators for logical observable ⟨Z⟩ under various protocols, showing orders-of-magnitude circuit volume extension by SALEM relative to EC, EC+PS, and ExtLEM.

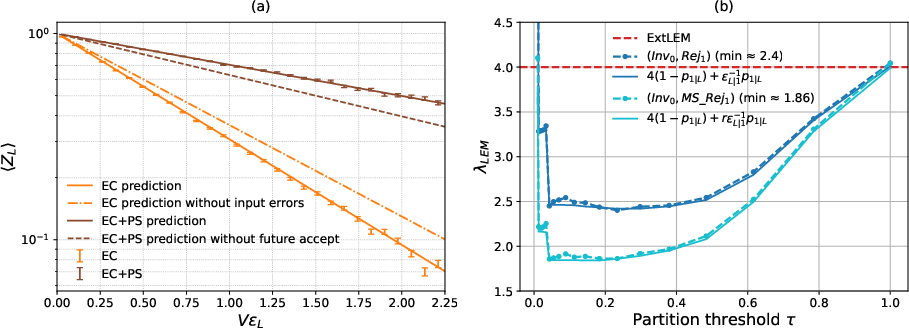

Numerical simulations for distance-3 surface codes and the Steane code show, for instance, that for a logical error rate $\epsilon_L \approx 10^{-4}$ and physical error rate $\epsilon = 10^{-3}$, SALEM increases the maximum reliable circuit volume at a fixed target accuracy (e.g., 99%) by a factor of:

- 2× over ExtLEM,

- 20× over EC+PS,

- 200× over EC alone.

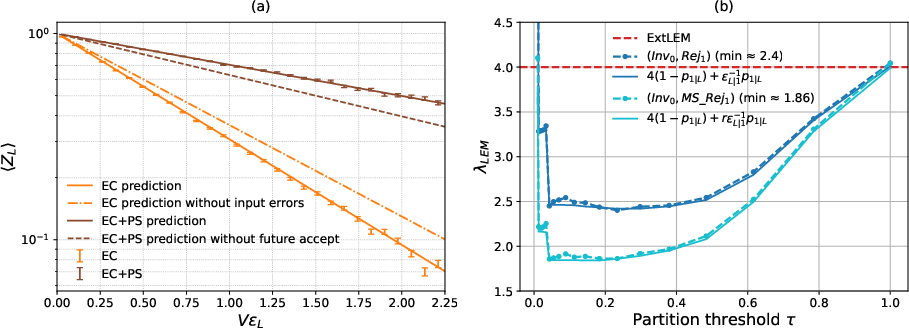

Furthermore, the theoretical analysis reveals that the physical error rate threshold below which SALEM outperforms even physical-level EM can lie above the standard fault-tolerance threshold, a result that contradicts prevailing assumptions about the practical uselessness of EC in this regime.

Interplay with Decoding and Implications for Implementation

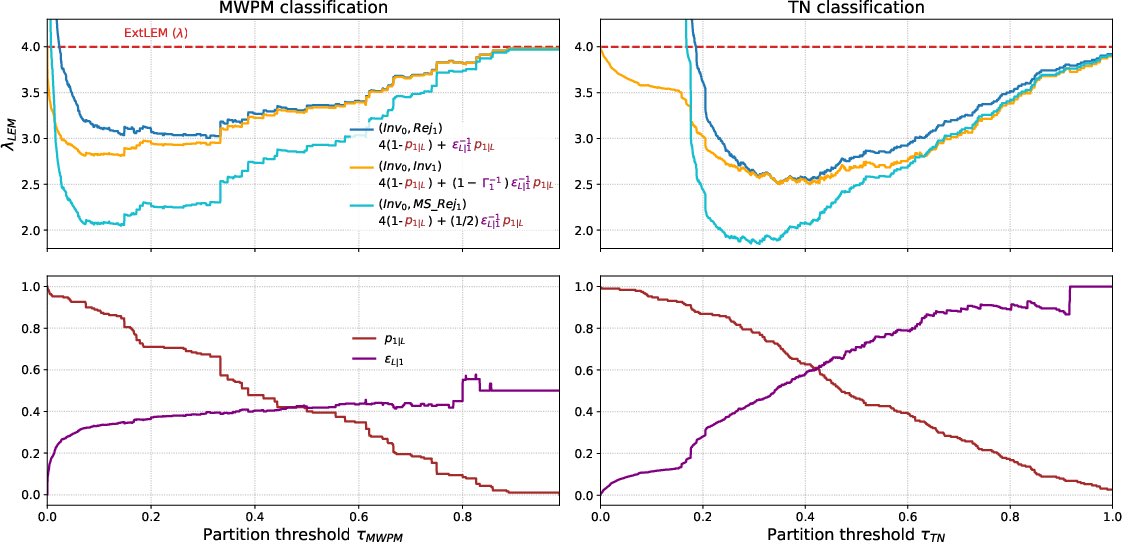

SALEM's effectiveness rests on the ability to perform accurate syndrome-conditioned logical characterization. While for small codes FG-SALEM is tractable, for large codes this step is equivalent in computational hardness to maximum-likelihood decoding (#P-hard). However, CG-SALEM mitigates this by using syndrome metrics derived from "soft-output" decoders (such as weight gaps, ML probabilities, or tensor network contraction outputs), which are available in real-time and can drive efficient online syndrome partitioning and adaptive runtime rejection.
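A coarse-grained binary partition driven by a decoder metric might look like the sketch below. Everything here is an assumption for illustration: the `Shot` record, its `confidence` field (standing in for whichever soft-output metric a decoder provides), the sample data, and the 0.9 threshold are hypothetical and do not correspond to any specific decoder API.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    outcome: int       # decoded logical measurement outcome, +1 or -1
    confidence: float  # hypothetical soft-output decoder metric in [0, 1]


def partition_shots(shots, threshold):
    """Split shots into a high-confidence class S0 and a low-confidence class S1."""
    s0 = [s for s in shots if s.confidence >= threshold]
    s1 = [s for s in shots if s.confidence < threshold]
    return s0, s1


def mean_outcome(shots):
    """Raw sample mean of the logical outcome within one class."""
    return sum(s.outcome for s in shots) / len(shots)


# Illustrative data only.
shots = [Shot(+1, 0.99), Shot(+1, 0.97), Shot(-1, 0.40), Shot(+1, 0.95), Shot(-1, 0.55)]
s0, s1 = partition_shots(shots, threshold=0.9)
acceptance_fraction = len(s0) / len(shots)
print(f"S0 size: {len(s0)}, S1 size: {len(s1)}, accepted: {acceptance_fraction:.2f}")
```

In a CG-SALEM-style workflow, each class would then receive its own EM protocol, or the low-confidence class would be rejected outright; the choice of threshold trades acceptance rate against residual logical error in the accepted class.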

Figure 3: Blowup rates $\lambda_{\mathrm{SALEM}}^{\{S_0, S_1\}}$ for binary (coarse-grained) SALEM under various rejection and inversion strategies, as a function of syndrome class metric and decoder type.

Further, mid-shot rejection schemes—whereby a circuit instance is terminated as soon as a "bad" syndrome arises—are analyzed and shown to provide significant reductions in quantum processing time overhead, particularly for practical aspect ratios and depths.
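The processing-time benefit of mid-shot rejection can be estimated with a toy model. The sketch assumes that each QEC round independently produces a "bad" syndrome with probability `q` and that a shot is abandoned right after the first such round; this independence assumption, the function name, and the numbers are illustrative and not taken from the paper.

```python
def expected_rounds_mid_shot(q, depth):
    """Expected number of rounds executed per shot when a shot stops at the first bad round.

    Round k runs only if rounds 1..k-1 were all good, which has probability (1 - q)**(k - 1),
    so the expectation is the geometric sum (1 - (1 - q)**depth) / q.
    """
    if q == 0.0:
        return float(depth)
    return (1.0 - (1.0 - q) ** depth) / q


q = 0.01      # hypothetical per-round probability of a "bad" syndrome
depth = 100   # hypothetical number of rounds per shot

mid_shot = expected_rounds_mid_shot(q, depth)
end_of_shot = float(depth)  # rejecting only at the end always runs every round
print(f"rounds per shot, end-of-shot rejection: {end_of_shot:.1f}")
print(f"rounds per shot, mid-shot rejection:    {mid_shot:.1f}")
```

Both strategies accept the same fraction of shots in this model, so the ratio of the two numbers is also the ratio of quantum processing time per accepted shot.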

Practical and Theoretical Implications

Practically, SALEM delivers a scalable, hybrid QEC/EM protocol applicable to near-term hardware with limited qubit counts and non-negligible physical error rates. It enables larger and more accurate quantum computations under fixed QPU resources.

Theoretically, this work reframes how syndrome information and error mitigation should be integrated, highlighting that even above threshold, EC remains useful when properly paired with syndrome-aware mitigation. Importantly, SALEM strictly outperforms syndrome-agnostic LEM in shot complexity for all convex functional relationships between error rate and shot overhead—an observation supported by analytic lower bounds.

Moreover, the framework generalizes to arbitrary QEC codes and decoders, and its effectiveness will improve further as code design and decoding strategies mature (e.g., via tensor network or neural decoding).

Future Directions

The adoption of SALEM introduces several directions for future work:

- Acceleration and scale-up of syndrome classifiers, especially soft-output decoders.

- Application to more recently proposed high-threshold or low-overhead QEC codes.

- Optimization and formal lower bounds for syndrome-aware versus syndrome-agnostic LEM, i.e., determining whether SALEM is, in some sense, optimal.

- Integration with QPU architectures and hardware-software workflow, including real-time syndrome processing.

Conclusion

Syndrome-aware logical error mitigation establishes a new paradigm for quantum error management, demonstrating by both analysis and simulation that leveraging syndrome information within EM dramatically improves the reachable regime of quantum circuit volume and fidelity. By tightly coupling EC and EM at the logical level, this work provides both a practical roadmap for near-term quantum circuit execution and a conceptual shift in how error mitigation should be designed and analyzed.

Figure 4: Decay curves for logical ⟨Z⟩ in a Steane code memory channel; precise agreement between simulation and SALEM-based analytic characterization, validating the protocol and its efficiency gains.