- The paper introduces a unified measure-based framework to bridge softmax and linear attention behaviors in the large-prompt regime.

- It establishes concentration bounds for outputs and gradients, demonstrating stable convergence in long-sequence training scenarios.

- The analysis shows that, for in-context linear regression in the large-prompt regime, training with softmax attention follows dynamics close to those of linear attention, so existing linear-attention analyses carry over.

Analysis of "Softmax as Linear Attention in the Large-Prompt Regime: a Measure-based Perspective" (2512.11784)

Introduction and Motivation

The transformer architecture, built around attention mechanisms, has been highly successful across many domains, particularly in processing long sequences. At its core is softmax attention, whose nonlinear dynamics make theoretical analysis difficult. To sidestep this difficulty, recent studies have analyzed linear attention, which has a simpler algebraic structure. However, softmax attention performs better empirically, especially in length generalization and in extracting task structure, so a framework is needed that carries the analytical insights from linear models over to softmax attention.

Contributions

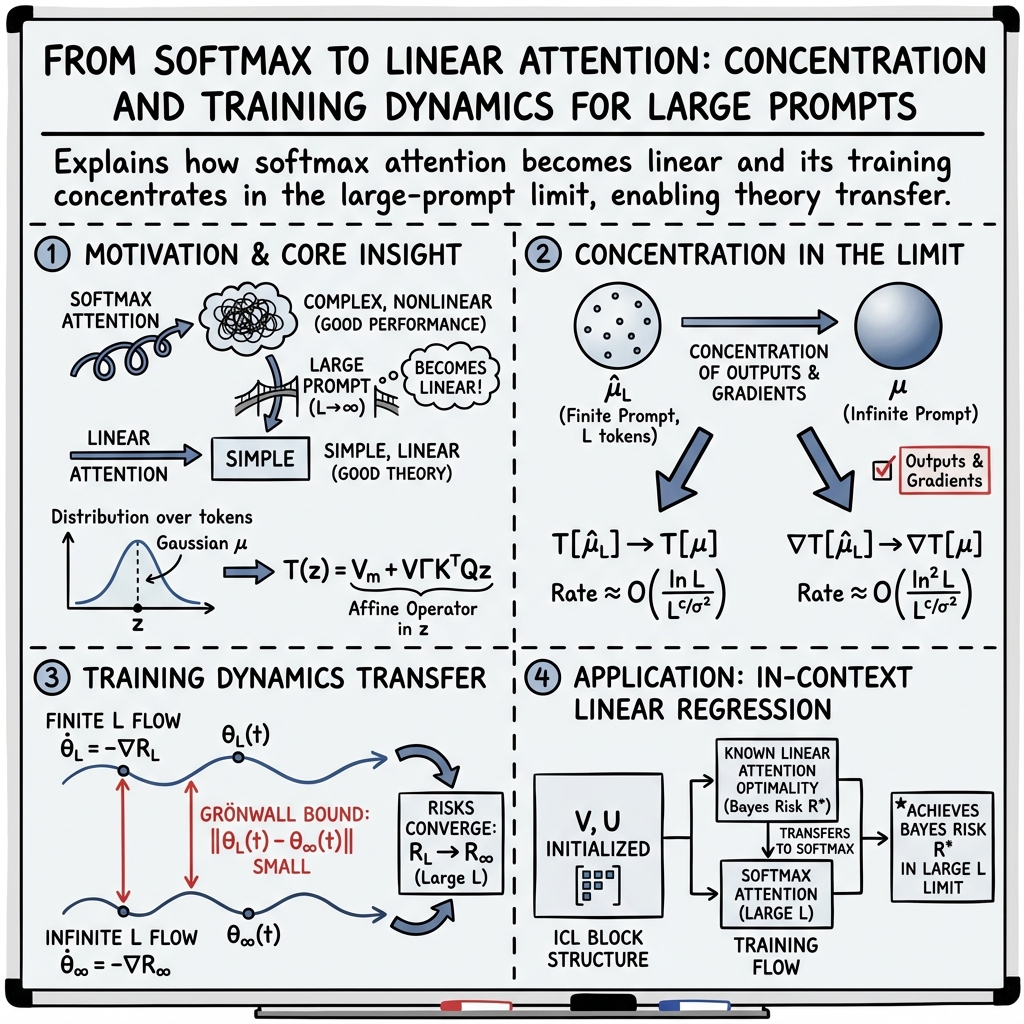

This paper introduces a unified, measure-based framework for analyzing softmax attention layers in both finite- and infinite-prompt settings. For i.i.d. Gaussian inputs, the authors show that softmax attention behaves like a linear operation in the limit of infinite prompt length. The framework also quantifies the rate at which finite-prompt attention converges to its infinite-prompt counterpart.
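To make the measure-based viewpoint concrete, here is a simplified worked form. It is an illustration under stated assumptions, not necessarily the paper's exact notation: each token $x$ serves as both key and value, there are no learned weight matrices, and the symbols $\mathrm{Attn}$, $\mu$, and $\mu_n$ are introduced here for illustration only.

$$
\mathrm{Attn}(q;\mu) \;=\; \frac{\int x\, e^{\langle q, x\rangle}\, d\mu(x)}{\int e^{\langle q, x\rangle}\, d\mu(x)},
\qquad
\mu_n \;=\; \frac{1}{n}\sum_{i=1}^{n} \delta_{x_i}.
$$

Taking $\mu = \mu_n$ recovers ordinary softmax attention over a prompt of $n$ tokens. Taking $\mu = \mathcal{N}(0, I_d)$ gives the infinite-prompt counterpart, and completing the square shows why it is linear in the query:

$$
e^{\langle q, x\rangle}\, e^{-\|x\|^2/2} \;=\; e^{\|q\|^2/2}\, e^{-\|x-q\|^2/2}
\quad\Longrightarrow\quad
\mathrm{Attn}\big(q;\mathcal{N}(0, I_d)\big) \;=\; q .
$$

In this simplified setting the exponential weights turn the standard Gaussian into a Gaussian centered at $q$, so the limiting output is the query itself.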

- Concentration Bounds: The paper establishes non-asymptotic concentration bounds for both the outputs and the gradients of softmax attention, and shows that these bounds remain stable during training with sub-Gaussian inputs.

- Stability Across Training: Using a unified measure representation, the paper demonstrates that the concentration bounds persist along the entire training trajectory in general in-context learning setups. Importantly, it formalizes how the risk of softmax attention with finite prompts approaches the risk attained with infinite prompts.

- In-Context Learning Analysis: Extending the analysis to in-context linear regression reveals that in the large-prompt regime, the dynamics of training with softmax attention parallel those of linear attention, allowing existing theoretical analyses to be effectively applied to softmax settings.
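The convergence of finite-prompt attention toward a linear limit can be checked numerically in a toy setting. The sketch below is a minimal illustration, not the paper's experiment: it assumes standard Gaussian tokens that act as both keys and values, with no learned weight matrices, in which case the infinite-prompt output equals the query itself. The function names `softmax_attention` and `gap_to_linear_limit` are hypothetical.

```python
import math
import random


def softmax_attention(query, tokens):
    """Softmax attention where each token is both key and value (toy assumption)."""
    scores = [sum(q * x for q, x in zip(query, tok)) for tok in tokens]
    top = max(scores)  # subtract the max score for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(query)
    return [
        sum(w * tok[j] for w, tok in zip(weights, tokens)) / total
        for j in range(dim)
    ]


def gap_to_linear_limit(query, n, seed=0):
    """Euclidean distance between the attention output on n i.i.d. N(0, I)
    tokens and the infinite-prompt limit, which is the query in this toy setup."""
    rng = random.Random(seed)
    dim = len(query)
    tokens = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n)]
    out = softmax_attention(query, tokens)
    return math.sqrt(sum((o - q) ** 2 for o, q in zip(out, query)))


query = [0.3, -0.4]
for n in (100, 1000, 20000):
    # The gap is random, but it should tend to shrink as the prompt grows.
    print(n, round(gap_to_linear_limit(query, n), 4))
```

The gap fluctuates with the random seed, so individual runs need not decrease monotonically; the concentration bounds described above are what control such fluctuations at a given prompt length.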

Implications and Future Directions

The measure-based representation of attention layers offers a way to study the convergence and stability of attention mechanisms quantitatively, especially when prompts are large but finite. Such results help extend current optimization analyses to account for the nonlinearity of softmax attention.

- Theoretical Advancements: This framework sets a precedent for exploring more complex architectures, including deeper attention networks with multiple layers, by leveraging insights obtained from single-layer analyses.

- Practical Applications: By showing that large-prompt softmax attention behaves approximately like a linear model, this research may inform the optimization of transformer architectures in resource-constrained environments with limited loss in performance.

- Broader Generalization: Future research could extend this framework beyond the Gaussian and sub-Gaussian inputs considered here to more diverse and challenging distributions. Refining the concentration bounds under different data regimes or under distributional shift would further improve the robustness of the analysis in real-world applications.

Conclusion

The paper narrows a gap in the understanding of softmax attention dynamics through a measure-based perspective. By quantifying the conditions and rates under which softmax attention emulates linear operations as prompt length increases, it provides theoretical tools, with practical relevance, for analyzing transformer-based architectures. The resulting framework places the empirical successes of softmax attention on a firmer theoretical foundation.