DeMapGS: Simultaneous Mesh Deformation and Surface Attribute Mapping via Gaussian Splatting

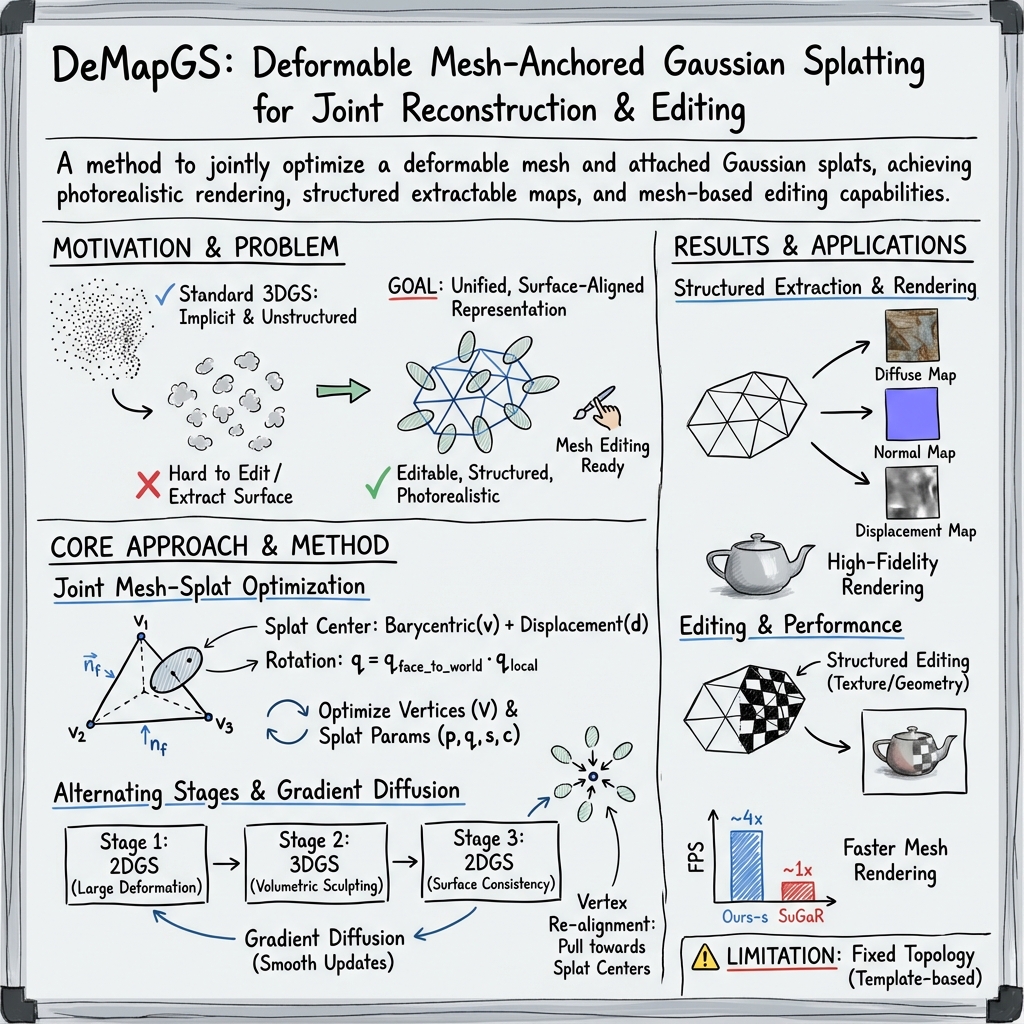

Abstract: We propose DeMapGS, a structured Gaussian Splatting framework that jointly optimizes deformable surfaces and surface-attached 2D Gaussian splats. By anchoring splats to a deformable template mesh, our method overcomes topological inconsistencies and enhances editing flexibility, addressing limitations of prior Gaussian Splatting methods that treat points independently. The unified representation in our method supports extraction of high-fidelity diffuse, normal, and displacement maps, enabling the reconstructed mesh to inherit the photorealistic rendering quality of Gaussian Splatting. To support robust optimization, we introduce a gradient diffusion strategy that propagates supervision across the surface, along with an alternating 2D/3D rendering scheme to handle concave regions. Experiments demonstrate that DeMapGS achieves state-of-the-art mesh reconstruction quality and enables downstream applications for Gaussian splats such as editing and cross-object manipulation through a shared parametric surface.

Explain it Like I'm 14

Overview

This paper introduces DeMapGS, a new way to build and edit detailed 3D models from many photos. Think of a 3D model as a wireframe made of tiny triangles (a mesh). DeMapGS sticks soft “paint dots” called Gaussian splats onto that wireframe, then bends and shapes the wireframe while also adjusting those dots. In the end, you get a clean, editable 3D mesh plus high-quality “surface maps” (color, fine bumps, and shading detail) that look as good as the original splats.

Questions and Goals

The paper aims to solve two main problems:

- How can we turn super-realistic splat-based 3D scenes into structured, editable meshes without losing quality?

- How can we bend and reshape a starting mesh to match the real object in photos, while keeping everything stable and consistent?

In simple terms: they want a 3D model that looks great, is easy to edit, and works well in standard tools (like Blender or game engines), even though it starts from photo-based “splat” techniques that usually don’t give you a nice mesh.

How They Did It (Methods)

To make this understandable, imagine you have:

- A mesh: a net of triangles that forms the shape of an object (like a statue or a turtle).

- Gaussian splats: soft, fuzzy dots that add color and detail to the surface. Some are treated like flat stickers (2D splats), others like puffy blobs (3D splats).

Key ideas explained

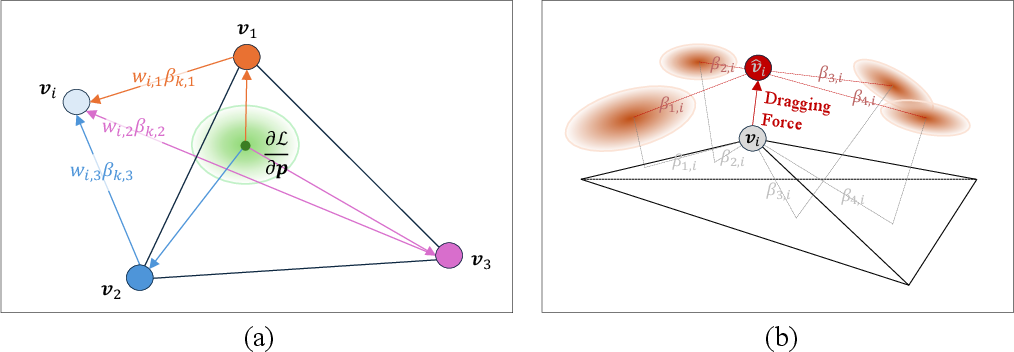

- Attaching splats to the mesh: Each splat is linked to one triangle on the mesh. Its exact spot is described by a simple recipe using the triangle’s three corners (barycentric coordinates, like saying “20% of corner A, 30% of B, 50% of C”), plus a small push outward or inward (displacement) and a twist (rotation). This keeps the splats stuck to the surface even as the mesh bends.
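As a rough illustration of the attachment recipe above, the following sketch computes a splat center from barycentric weights plus an offset along the face normal. The function name `splat_position` and the choice of the unit face normal as the displacement direction are assumptions made for this example (the per-splat rotation is left out), not the paper's code.

```python
import numpy as np

def splat_position(v0, v1, v2, bary, displacement):
    """Place a splat on a triangle from barycentric weights plus a normal offset."""
    v0, v1, v2 = (np.asarray(v, dtype=float) for v in (v0, v1, v2))
    w = np.asarray(bary, dtype=float)
    # Point on the triangle: weighted mix of the three corners.
    on_surface = w[0] * v0 + w[1] * v1 + w[2] * v2
    # Unit face normal from the cross product of two edges.
    n = np.cross(v1 - v0, v2 - v0)
    n = n / np.linalg.norm(n)
    return on_surface + displacement * n

# Triangle in the z = 0 plane; weights match the "20% A, 30% B, 50% C" example.
a = np.array([0.0, 0.0, 0.0])
b = np.array([1.0, 0.0, 0.0])
c = np.array([0.0, 1.0, 0.0])
bary = [0.2, 0.3, 0.5]
p_rest = splat_position(a, b, c, bary, displacement=0.1)

# Move the whole triangle; the splat follows because only the corners changed.
shift = np.array([0.0, 0.0, 2.0])
p_moved = splat_position(a + shift, b + shift, c + shift, bary, displacement=0.1)
```

Because the splat is stored relative to its triangle rather than in world space, moving the corners moves the splat with them, which is what keeps it attached as the mesh deforms.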

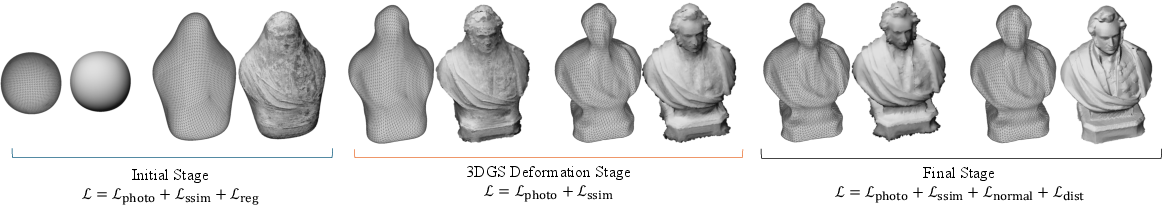

- Bending the mesh and adjusting splats together: The method moves the mesh’s points (vertices) and also updates the splats’ color, size, rotation, and position. It adds a global move (scale, rotate, shift) so the starting mesh doesn’t have to be perfect.
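The global move mentioned above can be pictured as a single similarity transform shared by every vertex. The sketch below is only an illustration: `apply_global_transform` and `rotation_about_z` are hypothetical helper names, and the rotation is passed as a 3×3 matrix for simplicity, whereas the glossary indicates the paper uses quaternions.

```python
import numpy as np

def apply_global_transform(vertices, scale, rotation, translation):
    """Apply one shared similarity transform (scale, rotate, shift) to all vertices."""
    V = np.asarray(vertices, dtype=float)      # shape (N, 3)
    R = np.asarray(rotation, dtype=float)      # shape (3, 3), assumed orthonormal
    t = np.asarray(translation, dtype=float)   # shape (3,)
    # Row-vector convention: V @ R.T rotates every vertex by R.
    return scale * (V @ R.T) + t

def rotation_about_z(angle_rad):
    """Rotation matrix for a counter-clockwise turn about the z axis."""
    c, s = np.cos(angle_rad), np.sin(angle_rad)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

template = np.array([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])
aligned = apply_global_transform(
    template,
    scale=2.0,
    rotation=rotation_about_z(np.pi / 2),
    translation=[0.0, 0.0, 1.0],
)
```

Optimizing these few global parameters alongside the per-vertex offsets lets a coarse misalignment be corrected in one step instead of vertex by vertex.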

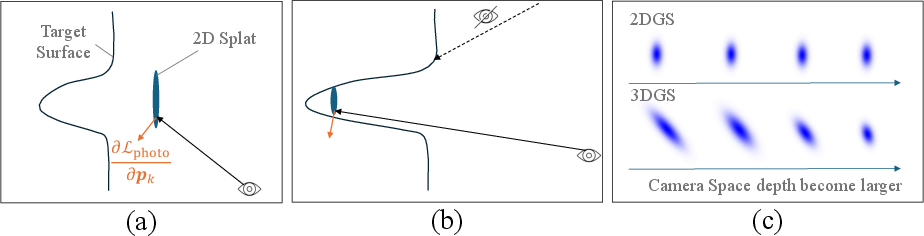

- Alternating between 2D and 3D splats while training:

  - 2D splats act like flat stickers on the surface, which are great for learning accurate surface details (like sharp folds and realistic shading).

  - 3D splats act like puffy blobs, which are better at pushing into tricky concave areas (like the inside of a cup), where flat stickers can get stuck.

  - The system switches between them in stages: start stable with 2D stickers, use 3D blobs to sculpt harder parts, finish with 2D stickers to refine the surface.

- Gradient diffusion (sharing feedback across the mesh): When the computer learns (from comparing rendered images to real photos), it gets “feedback” (gradients) about what to change. Instead of changing only one vertex where the feedback arrives, DeMapGS smoothly spreads that feedback to neighboring vertices, like smudging clay so you don’t get bumps and dents. This lets the mesh take large, stable steps toward the right shape.

- Keeping splats on the right triangle: During optimization, a splat can slide off the triangle it is attached to. The method handles this with a “walk-on-triangles” step that checks the barycentric weights: if a weight goes negative (meaning the splat crossed an edge), it clamps the weights and reassigns the splat to the correct neighboring triangle. It’s a fast way to keep splats correctly anchored without heavy extra calculations.
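A minimal sketch of the clamp-and-reassign idea follows. The data layout is an assumption made for illustration: `faces[f]` holds three vertex ids, and `neighbor_across[f][k]` is a hypothetical lookup giving the face that shares the edge opposite corner `k` (or `None` on a boundary). The sketch only snaps the splat onto the crossed edge and hands it to the neighbor; how the actual method treats any remaining motion is not specified here.

```python
def reassign_splat(face_id, bary, faces, neighbor_across):
    """Clamp a splat that left its triangle and hand it to the neighboring face."""
    # The most negative weight identifies the edge that was crossed.
    k = min(range(3), key=lambda i: bary[i])
    if bary[k] >= 0.0:
        return face_id, tuple(bary)          # still inside: nothing to do
    # Clamp onto the triangle: zero out negative weights and renormalize.
    clamped = [max(w, 0.0) for w in bary]
    total = sum(clamped)                     # > 0 when the weights sum to 1
    clamped = [w / total for w in clamped]
    new_face = neighbor_across[face_id][k]
    if new_face is None:
        return face_id, tuple(clamped)       # boundary edge: stay clamped
    # Carry weights over by vertex id; the neighbor's far corner gets 0.
    weight_of = dict(zip(faces[face_id], clamped))
    new_bary = tuple(weight_of.get(v, 0.0) for v in faces[new_face])
    return new_face, new_bary

# Two triangles sharing the edge between vertices 1 and 2.
faces = [(0, 1, 2), (2, 1, 3)]
neighbor_across = [[1, None, None], [None, None, 0]]

inside = reassign_splat(0, (0.2, 0.3, 0.5), faces, neighbor_across)
moved_face, moved_bary = reassign_splat(0, (-0.2, 0.7, 0.5), faces, neighbor_across)
```

The check costs only a few comparisons per splat and a table lookup, which matches the description of the step as cheap compared with a full closest-point search.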

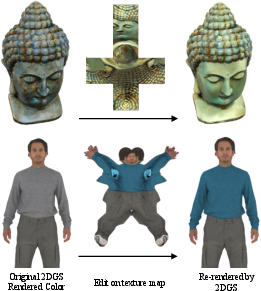

- Extracting surface maps (turning splats into textures):

  - Diffuse map: the basic color painted on the surface.

  - Normal map: fine shading detail (like grooves or wrinkles) that makes the surface look richer without changing the mesh’s shape.

  - Displacement map: small height changes (like bumps or ridges) that can be used with GPU tessellation to create extra geometric detail at render time.

  - They project splats onto each triangle and blend them to create these maps. Then they do a small refinement step to make colors consistent across all camera views.

- Works with standard tools: Because the output is a regular mesh plus textures, you can use it with normal 3D pipelines (mipmaps, tessellation) and apps like Blender or game engines.
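The gradient diffusion step described earlier in this list can be sketched on a toy mesh. The code applies an operator of the form (I + λL)^{-2} to the raw per-vertex gradient. The uniform graph Laplacian, the value of `lam`, and the dense solver are all assumptions made for this example; the summary does not say which Laplacian or solver the paper uses, and a dense solve is only practical for very small meshes.

```python
import numpy as np

def uniform_laplacian(num_vertices, edges):
    """Graph Laplacian L = D - A built from undirected mesh edges."""
    L = np.zeros((num_vertices, num_vertices))
    for i, j in edges:
        L[i, j] -= 1.0
        L[j, i] -= 1.0
        L[i, i] += 1.0
        L[j, j] += 1.0
    return L

def diffuse_gradient(grad, L, lam):
    """Return (I + lam * L)^{-2} @ grad by solving the same linear system twice."""
    A = np.eye(L.shape[0]) + lam * L
    return np.linalg.solve(A, np.linalg.solve(A, grad))

# Five vertices in a chain; only the middle vertex receives image feedback.
edges = [(0, 1), (1, 2), (2, 3), (3, 4)]
L = uniform_laplacian(5, edges)
raw = np.zeros((5, 3))
raw[2] = [0.0, 0.0, 1.0]
smooth = diffuse_gradient(raw, L, lam=1.0)

# z-component per vertex: largest in the middle, decaying toward both ends.
print(smooth[:, 2])
```

In this toy case the single spike of feedback becomes a smooth bump that still sums to the same total, so neighbors move together with the vertex that was hit instead of leaving it as an isolated dent.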

What They Found (Results)

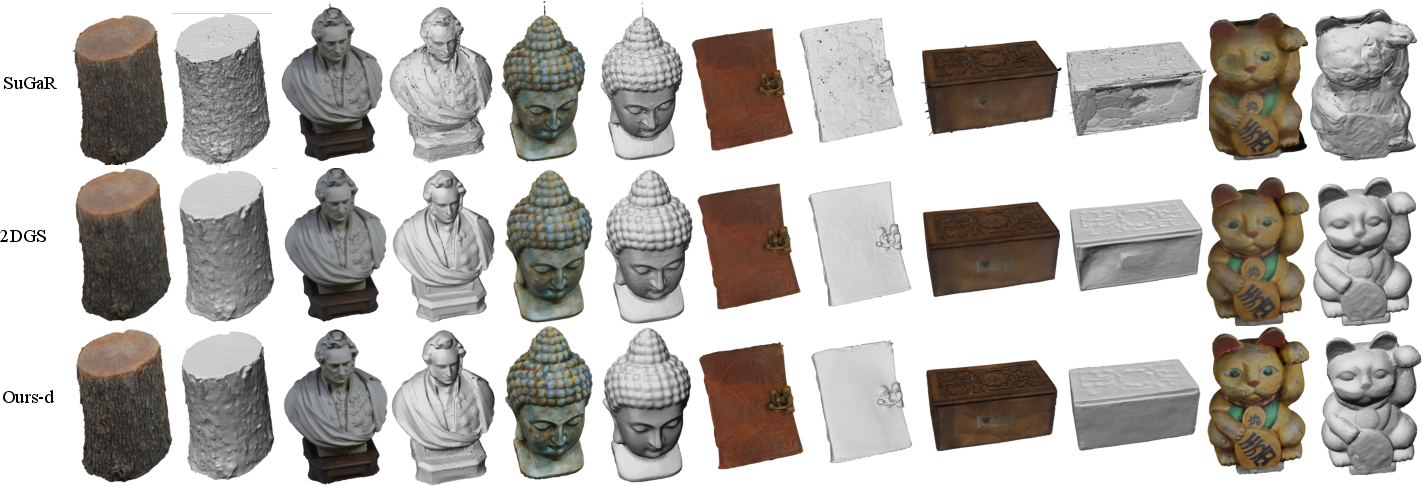

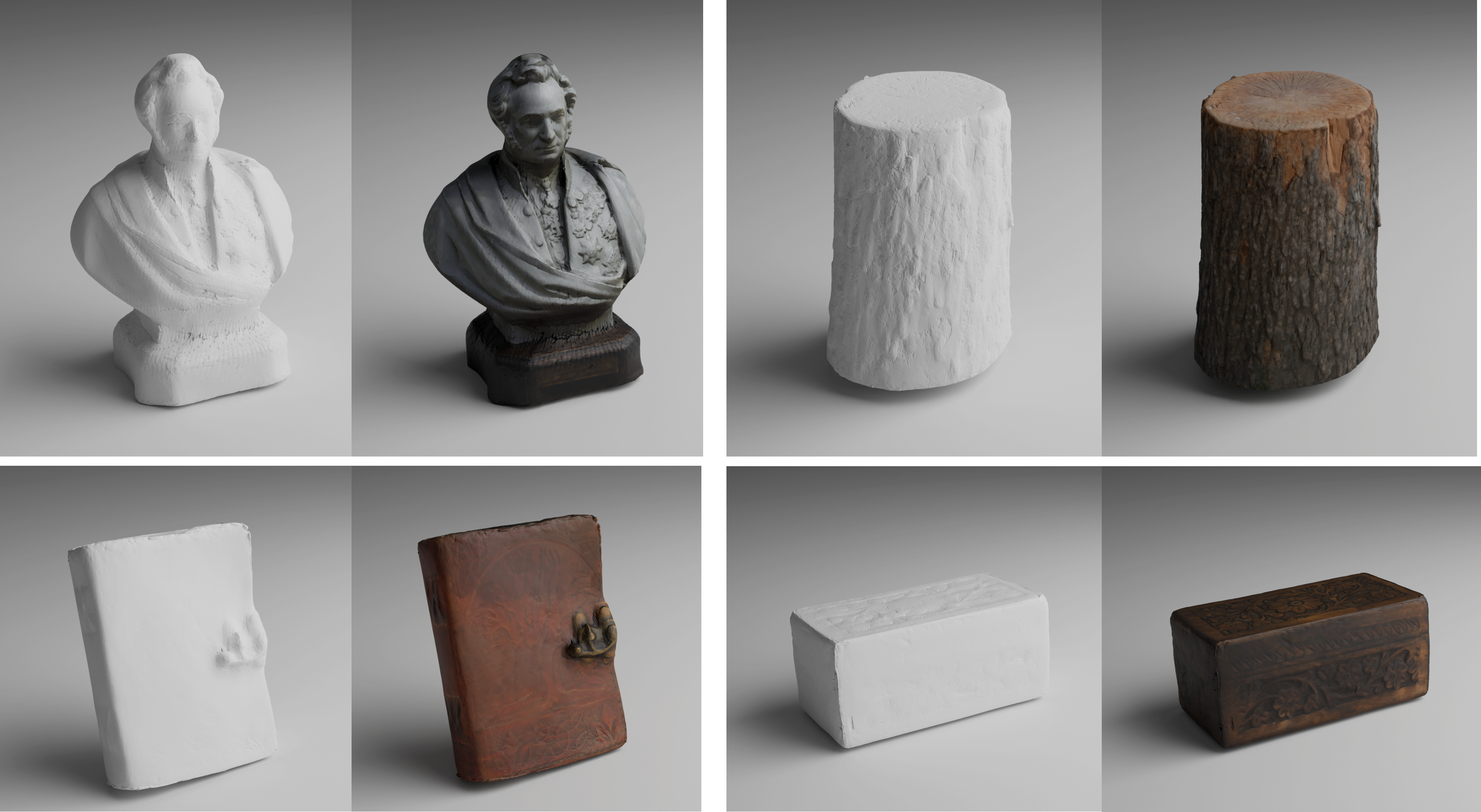

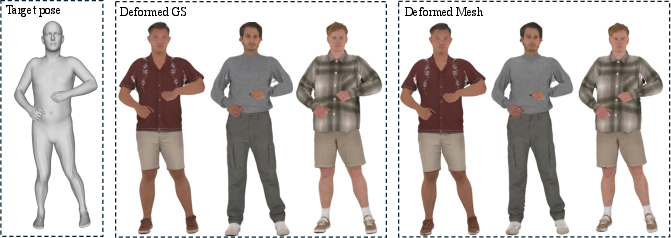

In tests on several 3D scenes and human avatars:

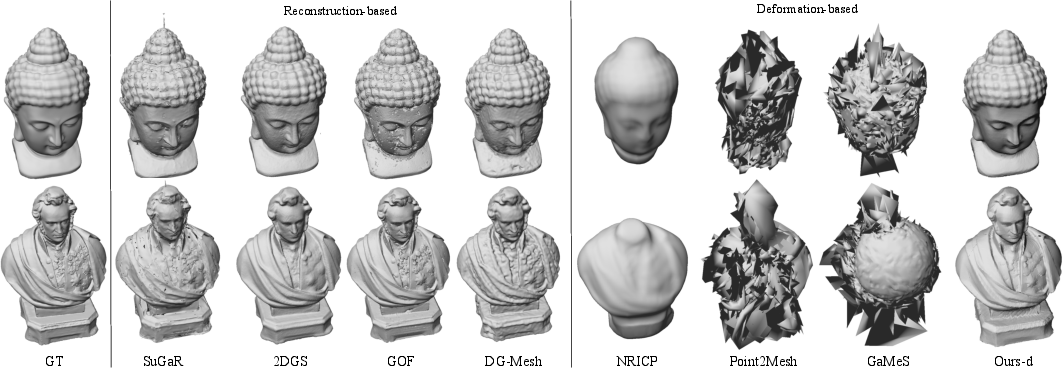

- Shape accuracy: DeMapGS reconstructed meshes that matched the real objects very closely, often as good as or better than other methods, especially compared to methods that only deform a mesh.

- Visual quality: The final renderings looked sharp and photorealistic. The combination of normal and displacement maps captured fine details like wrinkles and folds, even on relatively simple meshes.

- Speed: Rendering the resulting mesh with textures was fast, reaching roughly 3–4× the frame rate of dense-mesh baselines such as SuGaR.

- Robustness: The method handled hard cases like concave areas by smartly switching between 2D and 3D splats and by spreading feedback across the surface.

- Editing and reuse: Because everything is tied to a structured mesh, it supports editing (color or geometry), animation (posing avatars), and even mixing features across objects.

In short, DeMapGS made splat-quality visuals available in a clean, editable mesh format, while staying efficient.

Why It Matters (Implications)

This approach bridges the gap between two worlds:

- Splat-based methods give amazing visuals but are hard to edit and don’t produce a clean mesh.

- Mesh-based workflows are the standard in games, films, AR/VR, and 3D content creation, but they need good textures and geometry.

By combining them, DeMapGS lets artists and developers:

- Reconstruct high-quality meshes from photos that are easy to edit and animate.

- Export meshes and textures to common tools (like Blender) and engines.

- Add fine details through normal and displacement maps without making meshes super dense.

- Share and mix features across different objects because they use a common template.

Overall, DeMapGS makes realistic 3D models more practical to build, edit, and use, helping both research and real-world production.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper introduces a promising framework, but several aspects remain insufficiently explored or constrained. Below is a concrete list of gaps and questions to guide future research:

- Topology preservation: The method preserves the template’s topology by design, preventing recovery of topology changes (e.g., holes, disconnections, thin structures like hair/foliage, or merged/separated parts). How can the framework handle topology alterations while retaining mesh-semantic consistency?

- Dependence on template quality: Robustness to poor or mismatched templates (wrong scale, pose, semantics, or topology) is not quantified. What are the failure modes with severely misaligned templates, and how can they be mitigated (e.g., automatic template selection, coarse alignment, or topology adaptation)?

- Camera calibration assumption: The approach assumes known, fixed camera poses. Can the method jointly optimize cameras and geometry to handle pose uncertainty or SfM drift, and how does calibration error affect reconstruction quality?

- Concavities and visibility limitations: Alternating 2DGS/3DGS alleviates but does not eliminate failures in highly concave regions (e.g., the “cat” case). When and why does alternation fail, and can adaptive schedules or depth-aware priors close this gap?

- Discrete “walk-on-triangles” handling: The clamp-and-reassign strategy is non-differentiable and may induce drift or instability near edges and boundaries (and may fail on non-manifold meshes). Can a smooth barrier/constraint formulation or geodesic parameterization provide stable, differentiable face transitions?

- Displacement model restriction: Displacement is along the face normal only, which limits capture of tangential microstructure and can cause self-intersections for large offsets. Would vector displacement in tangent space, thickness/offset surfaces, or learned per-texel height fields improve fidelity without artifacts?

- UV parameterization quality: The method relies on the template UVs but does not analyze UV distortion, seam artifacts, or multi-chart blending during attribute extraction/refinement. Can atlas optimization, seam-aware blending, or stretch-regularized UVs improve map quality and cross-object consistency?

- Texture refinement fidelity: The post-hoc texel color fitting risks baking view-dependent effects, cast shadows, and lighting inconsistencies into “diffuse.” How to incorporate reflectance/illumination models, multi-view robust aggregation, or intrinsic decomposition to avoid overfitting?

- Material and lighting modeling: Spherical harmonics in GS encode view-dependence, but extracted maps are limited to diffuse/normal/displacement. How to recover PBR parameters (albedo, roughness, metalness) and separate illumination for relightable assets?

- Normal map accuracy: Normals are derived from splat rotations or from 2DGS depth maps; errors in depth or splat alignment propagate into normal maps. Are there better normal priors or signed distance function (SDF) regularizers to stabilize fine-scale normals?

- Gradient diffusion details and scalability: The update uses (I + λL)^{-2} but omits specifics (cotangent vs. uniform Laplacian, boundary treatment, solver details). What is the computational cost, scalability to large meshes, sensitivity to λ and mesh resolution, and can local/preconditioned solvers or multigrid acceleration improve stability/speed?

- Realignment schedule and theory: Vertex re-alignment every 50 iterations is heuristic. What is the effect of frequency/strength on convergence and bias (e.g., surface shrinkage), and can it be adaptively triggered (e.g., displacement/vertex misfit thresholds) with theoretical guarantees?

- Densification/splitting under surface attachment: How does adaptive splat growth interact with face attachments (e.g., when splits straddle faces), capacity control, and map extraction quality? Is there a principled way to regularize splat count and placement on surfaces?

- Attribute extraction coverage: The “3-hop” neighborhood assumption during per-face map rasterization lacks guarantees for large/elongated splats. Can a conservative, radius-aware selection with bounds be derived to ensure full coverage without excessive overhead?

- Evaluation protocol for displacement/tessellation: Geometry metrics are approximated via static pre-subdivision, which differs from runtime tessellation. How to design fair evaluation protocols that account for displacement mapping with adaptive tessellation?

- Scalability and runtime profile: Training time, memory footprint, and scalability to high-resolution images, large meshes, or multi-object scenes are not reported. What are the bottlenecks (rendering, diffusion, extraction) and how do they scale?

- Robustness to sparse/uneven views: Performance under fewer cameras, narrow baselines, or sparse view distributions is not evaluated. What priors or regularizers (silhouette constraints, symmetry, learned shape priors) help in such regimes?

- Handling backgrounds/occluders: The approach seems object-centric; the handling of background segmentation, partial occlusions, or multi-object scenes is not specified. How to extend to scene-level reconstruction while preserving per-object surface structure?

- Dynamic/temporal consistency: Although suited to deformation, the method is evaluated on static instances. How does it perform on time-varying sequences, and what temporal regularization is needed to avoid flicker/drift in maps and geometry?

- Cross-object semantics: Cross-object manipulation relies on a shared template, but semantic alignment across categories is not demonstrated. How to generalize to broader shape families with partial semantic overlap or variable topology?

- Quaternion/frame continuity: The face-local rotation parameterization can suffer from discontinuities when face normals flip or under extreme deformations. Can parallel transport/rotation smoothing across faces mitigate normal map seams?

- Failure characterization of alternation: The paper asserts 3DGS gradients provide full-directional cues, with a proof in the appendix, but lacks empirical analysis of when alternation converges or oscillates. Can a criterion guide switching and step sizes per region/view?

- Sensitivity to hyperparameters: The effects of stage lengths, loss weights (normal/distortion), diffusion λ, densification thresholds, and opacity resets are not systematically analyzed. Which settings are robust across datasets and which require per-scene tuning?

- Data-dependence on external normals: Results on avatars rely on Sapiens normals for supervision; performance without such privileged signals and robustness to noisy predicted normals remain unclear.

- Seamless integration with PBR pipelines: Although compatible in principle, the work does not extract or validate physically-based material maps nor test mipmapping/anisotropic filtering artifacts at UV seams under extreme LOD changes.

- Non-manifold/poor-quality meshes: The method presumes manifold, well-conditioned meshes. What happens with non-manifold edges, degenerate faces, or highly irregular triangulations during diffusion, reassignments, and extraction?

- Uncertainty/quality measures: No uncertainty estimates are provided for maps/geometry. Can confidence measures (e.g., per-texel variance from multi-view residuals) support map denoising, inpainting, or reliability-aware editing?

- Generalization to volumetric/participating media: Surface-anchored splats cannot capture volumetric effects (e.g., translucent materials, sub-surface details). How to hybridize with volumetric components without losing surface-structure benefits?

Glossary

- 2D Gaussian Splatting (2DGS): A surface-aligned splatting formulation where each splat is a thin 2D ellipse in 3D, enabling precise depth/normal supervision and surface-aware gradients. "We use 2DGS~\cite{huang20242d} and 3DGS~\cite{kerbl20233d} as model representations and differentiable renderers during the optimization."

- 3D Gaussian Splatting (3DGS): A real-time scene representation that places anisotropic Gaussians in 3D and optimizes their geometry and appearance for view synthesis. "3DGS~\cite{kerbl20233d} represents scenes by placing anisotropic Gaussians in 3D space and optimizing their spatial and appearance parameters for novel view synthesis, and it achieves real-time performance with high-quality results."

- Adaptive densification: A training heuristic that increases representational capacity by adding or splitting splats in regions needing detail. "we adopt adaptive densification, splitting, and opacity reset strategies as was done in~\cite{kerbl20233d}"

- Anisotropic Gaussian: A Gaussian with direction-dependent covariance (elliptical shape) used to model oriented surface elements. "placing anisotropic Gaussians in 3D space"

- Barycentric coordinates: Coordinates relative to a triangle’s vertices used to locate points (or splats) on a mesh face. "For an arbitrary texel on , specified by barycentric coordinates "

- Bi-Laplacian regularization: A higher-order smoothness prior that penalizes curvature variations to stabilize optimization under image-only supervision. "we add the bi-Laplacian regularization "

- Chamfer Distance (CD): A geometric error metric measuring average closest-point distances between two point sets. "we use Chamfer Distance (CD1, CD2) to evaluate local geometric fidelity"

- Concave region: A geometric area curving inward that can trap surface-aligned gradients, making optimization harder. "In concave regions, splats are only visible from frontal views, yielding weak gradients along the surface normal."

- Depth distortion loss: A 2DGS loss that encourages clustered ray–splat intersections in depth for geometric consistency. "and depth distortion loss from 2DGS."

- Differentiable rendering: Rendering that is compatible with gradient-based optimization, allowing reconstruction from images via backpropagation. "using the differentiable rendering and backpropagation framework."

- Displacement map: A texture that stores per-surface displacements along normals, enabling subpixel geometry via tessellation. "The optimized displacement values derive the displacement map ."

- Geodesic distance: The shortest path distance measured along a surface, used to weight diffusion on meshes. "which naturally decays with an increase in the geodesic distance at the mesh surface."

- Gaussian splat: An oriented Gaussian primitive used for rasterization-based scene or surface representation. "First, we attach a set of Gaussian splats uniformly to a template mesh that covers the target object."

- Gradient diffusion: A regularized update scheme that spreads local gradients across the mesh to enable stable, large-step deformations. "we introduce a gradient diffusion strategy that propagates supervision across the surface"

- Hamilton product: The quaternion multiplication operation used to compose rotations. "where denotes the Hamilton product between the two quaternions."

- Laplacian energy: A smoothness measure based on differences between vertices and their neighbors; minimizing it promotes regular surfaces. "introduced a regularization strategy that minimizes Laplacian energy by diffusing first-order gradients"

- Laplacian matrix: A mesh operator encoding vertex–neighbor relationships used for diffusion and regularization. "where is the identity matrix, is the Laplacian matrix of the mesh"

- LPIPS: A perceptual image similarity metric used to evaluate rendering quality. "PSNR and LPIPS~\cite{zhang2018perceptual} are computed from colored renderings of the reconstructed mesh"

- Marching cubes: A classical isosurface extraction algorithm used to convert volumetric fields into meshes. "require dense meshing using marching cubes or other postprocesses"

- Mipmapping: A multiresolution texture storage technique for efficient and antialiased rendering. "compatible with standard graphics pipelines such as mipmapping, GPU tessellation, and multi-resolution rendering"

- Neural Radiance Fields (NeRF): A volumetric neural representation for novel view synthesis and geometry extraction. "such as Neural Radiance Fields (NeRF)~\cite{mildenhall2021nerf} and 3D Gaussian Splatting (3DGS)~\cite{kerbl20233d}"

- Normal consistency loss: A loss aligning per-splat normals with normals estimated from depth or reference maps. "and incorporates the normal consistency loss "

- Opacity reset: A training step that reinitializes splat opacities to avoid degenerate solutions during densification. "adaptive densification, splitting, and opacity reset strategies"

- Orthographic projection: A projection model without perspective foreshortening used for per-face map extraction. "Each triangle face is treated as a local orthographic projection surface"

- Poisson surface reconstruction: A method for reconstructing watertight meshes from oriented point samples or normals. "or Poisson surface reconstruction~\cite{guedon2024sugar, zhao2024dygasr}"

- Quaternion: A 4D representation of 3D rotation used for splat and mesh orientation. "rotation (a quaternion in scalar-first format)"

- Ray-splat intersection: The computation of where a camera ray intersects a splat’s surface for accurate depth/normal rendering. "we can compute accurate ray-splat intersections, which enables precise rendering of per-pixel depth and normal maps."

- Signed distance functions (SDFs): Scalar fields giving the signed distance to a surface, facilitating smooth surface extraction. "incorporate signed distance functions to enable the extraction of smooth and continuous surfaces"

- Sinkhorn distance: An entropic-regularized optimal transport metric used to compare geometric distributions. "the L1 Sinkhorn distance (SD)~\cite{feydy2019interpolating}"

- Spherical harmonics (SH): A basis for representing view-dependent color/lighting in splatting-based rendering. "the commonly used spherical harmonics (SH) representation in GS encodes view-dependent color"

- SSIM loss: A perceptual similarity loss (Structural Similarity Index) used to align rendered and input images. "and the SSIM loss to align the rendered and input colors."

- Tessellation: GPU-driven subdivision of mesh triangles at render time to increase geometric detail. "the displacement map enables subpixel geometric detail via GPU tessellation"

- UV mapping: A parameterization that maps 3D surface points to 2D texture coordinates. "preserving topological consistency and enabling stable UV mappings"

- Volumetric alpha blending: Compositing along the view ray using transmittance/opacity to accumulate contributions. "and composites the results via volumetric alpha blending to produce the face’s attribute maps."

- Volumetric rendering: Integrating contributions along a ray through a volume or layered surfaces to produce color/depth. "we obtain the values of displacement, surface normal, and color of the texel through volumetric rendering."
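To make the last two glossary entries concrete, here is a small sketch of front-to-back alpha compositing along one ray. It takes `(value, alpha)` pairs directly; in a splatting renderer each alpha would come from a splat's opacity and its Gaussian falloff at the sample point, which is left out here. The function name `composite_front_to_back` is hypothetical.

```python
def composite_front_to_back(samples):
    """Blend (value, alpha) samples ordered nearest-first along one ray."""
    accumulated = 0.0
    transmittance = 1.0   # fraction of the ray not yet blocked by closer samples
    for value, alpha in samples:
        # Each sample contributes in proportion to what is still visible.
        accumulated += transmittance * alpha * value
        transmittance *= 1.0 - alpha
    return accumulated, transmittance

# Half-transparent bright sample, half-transparent dark sample, opaque bright sample.
value, remaining = composite_front_to_back([(1.0, 0.5), (0.0, 0.5), (1.0, 1.0)])
print(value, remaining)  # 0.75 0.0
```

The same accumulation works for any per-texel quantity, such as a color channel, a normal component, or a displacement value, which is why one blending rule can produce all three map types.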

Practical Applications

Immediate Applications

The following applications can be deployed now using the paper’s methods and outputs (deformable mesh anchoring for Gaussian splats, alternating 2D/3DGS optimization, gradient diffusion, and extraction of diffuse/normal/displacement maps). Each item notes relevant sectors, potential tools/workflows/products, and assumptions/dependencies that affect feasibility.

- High-fidelity asset reconstruction from multiview capture for games and VFX

- Sector: software (graphics), media/entertainment, AR/VR

- Use case: Convert 80–160 calibrated images of a real object into a production-ready mesh with UV diffuse, normal, and displacement maps; significantly faster rendering than pure GS while maintaining photorealism; reduces need for manual retopology and hand-authored detail.

- Tools/workflows/products: Capture (turntable or handheld), camera calibration (e.g., COLMAP), DeMapGS optimization, export to Blender/Unreal/Unity (glTF/USD/FBX), enable GPU tessellation and mipmapping; integrate in studio pipelines as a “Mesh-from-capture” stage.

- Assumptions/dependencies: Requires known camera intrinsics/extrinsics; an initial template mesh (generic or category-specific); GPU acceleration; scenes with strong specular/view-dependent effects may need extra reflectance modeling.

- Digital human/avatars with cross-object manipulation and animation

- Sector: software (graphics), media/entertainment, education

- Use case: Reconstruct a subject-specific avatar from multiview imagery aligned to SMPL-X; extract normal/displacement maps to recover fine details (wrinkles, seams) and support pose-driven animation; enable morphing/interpolation across individuals via shared canonical surface.

- Tools/workflows/products: SMPL-X initialization, DeMapGS training, optional normal supervision (e.g., Sapiens), export to game engines with skinning; in-editor sliders for geometry/appearance interpolation.

- Assumptions/dependencies: Shared template topology across subjects; pose/shape priors for initialization; consent and privacy-compliant capture pipelines for human subjects.

- AR commerce: rapid, photoreal digital twins of consumer products

- Sector: retail/e-commerce, AR/VR

- Use case: Produce lightweight, fast-rendering meshes with high-fidelity textures for real-time web/mobile AR viewers; displacement and normal maps preserve detail while keeping mesh counts low.

- Tools/workflows/products: Capture rig, DeMapGS, export to web formats (glTF + KTX2 textures), device-side tessellation or baked LODs for mobile GPUs.

- Assumptions/dependencies: Consistent lighting during capture; texture refinement step for color consistency; device GPU capability for tessellation or pre-subdivision fallback.

- Robotics: category-level object models for planning and manipulation

- Sector: robotics

- Use case: Build accurate meshes of household/object categories from images; shared template topology supports canonical alignment across instances for grasp planning, collision checking, and simulation.

- Tools/workflows/products: DeMapGS reconstruction, ROS integration, import into simulators (Gazebo, Isaac), collision mesh + render mesh separation; optionally tune displacement maps for contact-rich interactions.

- Assumptions/dependencies: Camera calibration and sufficient coverage of object views; concave regions can be harder—use alternating 2D/3DGS schedule; performance depends on GPU availability.

- Cultural heritage and archival digitization

- Sector: public sector, education, museums

- Use case: Digitize artifacts into stable, topology-consistent meshes with UV maps; faster rendering for interactive exhibits; supports downstream editing and restoration workflows.

- Tools/workflows/products: Capture rigs, DeMapGS, export to Blender/Sketchfab, web viewers using efficient rasterization and texture LODs.

- Assumptions/dependencies: Licensing and rights management; controlled lighting and multi-view coverage; archival metadata standards for camera parameters and processing steps.

- Artist-friendly editing of geometry and texture via UV maps and mesh anchors

- Sector: software (graphics), media/entertainment

- Use case: Non-destructive edits to color and surface shape by painting in UV space (Photoshop/Substance) and by vertex-level deformations; changes propagate to attached splats for consistent GS re-renders.

- Tools/workflows/products: UV painting tools, Blender/Unreal editors, DeMapGS attribute extraction and differentiable refinement loop for texels; texture-consistency optimization from views.

- Assumptions/dependencies: UV map resolution choices (bandwidth vs detail); view-dependent SH color in GS may require the provided refinement step to reduce minor inconsistencies.

- Faster, production-ready mesh rendering replacing heavy GS in real-time contexts

- Sector: software (graphics), AR/VR, mobile

- Use case: Use extracted maps with standard rasterization, mipmapping, and tessellation to achieve near-GS visual quality at 3–4× the frame rate of dense mesh baselines (e.g., SuGaR), enabling mobile/VR performance budgets.

- Tools/workflows/products: OpenGL/Vulkan/Metal tessellation; LODs; engine integration; export pipelines that standardize normals/displacements.

- Assumptions/dependencies: GPU tessellation support or pre-subdivision; consistent shading models; displacement bounds to avoid self-intersections.

- Academic reproducibility and dataset generation

- Sector: academia

- Use case: Generate aligned, high-quality meshes and maps for benchmarking reconstruction algorithms, texture extraction, and deformable modeling; study gradient diffusion effects and 2D/3DGS alternation.

- Tools/workflows/products: Open-source implementations of DeMapGS, 2DGS, 3DGS; standardized evaluation with CD/SD metrics; export assets to common formats.

- Assumptions/dependencies: Access to multiview datasets and camera calibration; compute/GPU for training; parameter tuning (diffusion strength, densification).

- Prosumer object scanning for makers and hobbyists

- Sector: daily life

- Use case: Capture small objects with a phone, reconstruct into meshes suitable for 3D printing, AR showcases, or modding (e.g., textures for game mods).

- Tools/workflows/products: Simple capture tutorial; DeMapGS packaged tool; export STL/OBJ + textures for consumer software.

- Assumptions/dependencies: Simplified UIs; auto-calibration pipelines; limited specular/transparent materials.

Long-Term Applications

The following applications need further research, scaling, or engineering beyond the current paper (e.g., dynamic scenes, richer material models, mobile/on-device training, broader domain adaptation).

- Real-time, on-device reconstruction and streaming for AR glasses and mobile

- Sector: AR/VR, mobile software

- Use case: Incrementally optimize deformable mesh + splats on-device from live video streams and stream production-ready assets to clients/cloud.

- Tools/workflows/products: Efficient incremental alternation between 2D/3DGS, low-power gradient diffusion, tiled GPU map rasterization; edge inference SDKs.

- Assumptions/dependencies: Reduced compute footprint; robust auto-calibration; thermal/power constraints; privacy-preserving streaming.

- Dynamic/4D reconstruction of deforming objects and scenes

- Sector: media/entertainment, robotics, education

- Use case: Extend mesh-anchored GS to handle temporal motion and topology-preserving deformations, enabling dynamic digital humans, cloth, and soft bodies with consistent UV maps.

- Tools/workflows/products: 4D capture rigs, temporal regularization, skeletal or physics-based constraints; export to simulation engines.

- Assumptions/dependencies: High-frame-rate multiview capture; motion priors; occlusion handling in concavities; real-time optimization stability.

- Physically based material recovery beyond diffuse/normal/displacement

- Sector: software (graphics), e-commerce, design

- Use case: Estimate SVBRDFs (albedo, roughness, specular, normal, height) from multiview imagery using the structured splat-on-mesh pipeline for accurate relighting and product visualization.

- Tools/workflows/products: Inverse rendering modules plugged into DeMapGS; view- and light-parameterized capture; integration with PBR workflows (Substance, USDShade).

- Assumptions/dependencies: Controlled illumination and reflectance priors; more complex loss functions; robust view-dependent color modeling replacing simple SH.

- Category-level canonical modeling for generative design and content creation

- Sector: media/entertainment, design software

- Use case: Learn canonical parametric surfaces per category, enabling interpolation, style transfer, and generative synthesis of geometry/appearance (e.g., “blend between two shoes” while preserving topology).

- Tools/workflows/products: Libraries of templates; UI sliders for cross-object morphing; training sets created with DeMapGS for generative model pretraining.

- Assumptions/dependencies: Large-scale datasets per category; consistent topology alignment; quality control across instances.

- Closed-loop shape adaptation for robot manipulation and simulation

- Sector: robotics

- Use case: Online update of deformable meshes during interaction (e.g., soft objects), using gradient diffusion to maintain regularity; integrate tactile/force feedback for better surface estimation.

- Tools/workflows/products: Sensor fusion modules; control policies aware of mesh updates; simulators with live mesh deformations.

- Assumptions/dependencies: Sensor integration; latency-tolerant optimization; robust attachment of splats under large deformations.

- Medical and biomedical surface modeling (experimental)

- Sector: healthcare

- Use case: Build patient-specific, topology-consistent anatomical surface meshes (skin or organ surfaces) from endoscopic or multi-view imaging; export UV maps for downstream analysis or simulation.

- Tools/workflows/products: Medical capture systems; compliance pipelines; domain-adapted priors and templates; integration with surgical planning tools.

- Assumptions/dependencies: Regulated data access and consent; specialized imaging constraints and calibration; clinical validation and robustness under specular moist tissues.

- Standards and policy for interoperable, efficient 3D digital twins

- Sector: policy/public sector, smart cities

- Use case: Encourage adoption of open formats (glTF/USD) with UV attributes and displacement/normal maps for efficient rendering; define metadata for capture parameters and optimization steps to ensure provenance and reproducibility.

- Tools/workflows/products: Reference specifications; certification workflows for cultural heritage and public assets; repositories for reproducible capture-to-mesh pipelines.

- Assumptions/dependencies: Multi-stakeholder consensus; balancing IP/licensing with openness; long-term archival requirements.

- SaaS and cloud platforms for “mesh-from-capture” at scale

- Sector: software (platforms), e-commerce, media

- Use case: Hosted services that accept image sets and return production meshes plus maps, with optional editing and quality checks; batch processing for catalogs and studios.

- Tools/workflows/products: Scalable GPU clusters; job schedulers; QC dashboards; API integrations into PIM/DAM systems.

- Assumptions/dependencies: Cost-effective GPU scaling; robust failure handling for hard cases (transparent/specular); data privacy and tenancy controls.
