Tradeoffs between quantum and classical resources in linear combination of unitaries

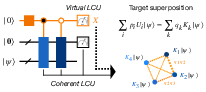

Abstract: The linear combination of unitaries (LCU) algorithm is a building block of many quantum algorithms. However, because LCU generally requires an ancillary system and complex controlled unitary operators, it is not regarded as a hardware-efficient routine. Recently, a randomized LCU implementation with many applications to early FTQC algorithms has been proposed; it computes the same expectation values as the original LCU algorithm using a shallower quantum circuit with a single ancilla qubit, at the cost of a quadratically larger sampling overhead. In this work, we propose a quantum algorithm intermediate between the original and randomized LCU that manages the tradeoff between sampling cost and circuit size. Our algorithm divides the set of unitary operators into several groups and then randomly samples LCU circuits from these groups to evaluate the target expectation value. Notably, by introducing a quantity called the reduction factor, which determines the sampling overhead across all grouping strategies, we analytically prove an underlying monotonicity: larger group sizes entail smaller sampling overhead. Our hybrid algorithm not only enables substantial reductions in circuit depth and ancilla-qubit usage while nearly maintaining the sampling overhead of LCU-based non-Hermitian dynamics simulators, but also achieves intermediate scaling between virtual and coherent quantum linear system solvers. It further provides a virtual ground-state preparation scheme that requires only a resettable single-ancilla qubit and asymptotically shows advantages over both virtual and coherent LCU methods. Finally, by viewing quantum error detection as an LCU process, our approach clarifies when conventional and virtual detection should be applied selectively, thereby balancing sampling and hardware overhead.

Explain it Like I'm 14

Overview

This paper studies a common building block in many quantum algorithms called the “linear combination of unitaries” (LCU). Think of LCU like mixing several simple quantum actions together to create one more complex action. The authors show a new way to balance two kinds of resources:

- Quantum hardware resources (like extra helper qubits and deeper circuits), and

- Classical resources (like how many times you need to repeat measurements, also called sampling).

Their method sits between two existing approaches—one that uses more quantum hardware but fewer samples, and one that uses almost no extra hardware but needs many more samples. They prove when and how their middle-ground approach saves effort on both sides.

What questions does the paper ask?

The paper asks:

- Can we reduce the “sampling overhead” (how many repetitions we need) without needing lots of extra quantum hardware?

- Is there a systematic way to trade circuit depth and helper qubits for fewer samples?

- Can this idea improve real tasks like simulating physics, solving systems of equations, finding ground states, or detecting errors?

How does the method work? (Simple explanation)

First, here’s what LCU does in everyday language:

- Imagine you have several buttons, each of which performs a specific quantum action (a unitary) on your state.

- You want a “weighted mix” of these actions, like a recipe that says “do 10% of action A, 30% of action B, and 60% of action C” all together.

- The LCU trick lets you simulate this “mix” using quantum circuits.
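The “mix” rests on the LCU lemma: a PREPARE unitary writes the normalized weights p_i = c_i/Σ_j c_j into an ancilla register as amplitudes √p_i, SELECT applies U_i when the ancilla is in |i⟩, and the block of PREPARE† · SELECT · PREPARE on ancilla |0…0⟩ equals Σ_i p_i U_i. The dense-matrix sketch below checks this for the 10%/30%/60% recipe, taking A, B, C to be the Pauli matrices X, Y, Z. The Householder PREPARE and the helper names `prepare_unitary` and `lcu_block` are illustrative choices, not the paper's construction.

```python
import numpy as np

def prepare_unitary(p):
    """Real orthogonal PREPARE whose first column is sqrt(p), built as a Householder reflection."""
    v = np.sqrt(np.asarray(p, dtype=float))
    e0 = np.zeros_like(v)
    e0[0] = 1.0
    w = e0 - v
    if np.allclose(w, 0.0):
        return np.eye(len(v))
    # Reflection swapping the unit vectors e0 and v, so PREPARE|0> = sum_i sqrt(p_i)|i>.
    return np.eye(len(v)) - 2.0 * np.outer(w, w) / (w @ w)

def lcu_block(coeffs, unitaries):
    """Block <0...0| PREPARE^T . SELECT . PREPARE |0...0> acting on the system register."""
    c = np.asarray(coeffs, dtype=float)
    m, d = len(c), unitaries[0].shape[0]
    dim_anc = 2 ** max(1, int(np.ceil(np.log2(m))))  # ancilla register padded to a power of two
    p = np.zeros(dim_anc)
    p[:m] = c / c.sum()
    padded = list(unitaries) + [np.eye(d)] * (dim_anc - m)  # padding terms carry zero weight
    prep = np.kron(prepare_unitary(p), np.eye(d))  # ancilla is the first tensor factor
    basis = np.eye(dim_anc)
    select = sum(np.kron(np.outer(basis[i], basis[i]), U) for i, U in enumerate(padded))
    W = prep.T @ select @ prep  # prep is real, so prep.T is its adjoint
    return W[:d, :d]

X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]])
Z = np.array([[1, 0], [0, -1]], dtype=complex)

block = lcu_block([0.1, 0.3, 0.6], [X, Y, Z])  # "10% A, 30% B, 60% C" with A, B, C = X, Y, Z
psi = np.array([1, 0], dtype=complex)
success = np.linalg.norm(block @ psi) ** 2     # post-selection probability for input |0>
```

For input |0⟩ the post-selection succeeds with probability ‖(0.1X + 0.3Y + 0.6Z)|0⟩‖² = 0.36 + 0.01 + 0.09 = 0.46; this is the success probability P that enters the sampling overheads of the two approaches.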

There are two classic ways to do LCU:

- Coherent LCU: You use extra helper qubits (called “ancillas”) and complex controlled operations to build the mix directly. This usually needs more hardware but fewer measurement repeats. Sampling overhead ~ 1/P, where P is the probability that the circuit succeeds (that is, that the post-selection step accepts the run).

- Virtual (randomized) LCU: You don’t build the mix. Instead, you pick random pairs of actions according to their weights and estimate the same result by averaging many runs (using a simple test called the Hadamard test). This needs much less hardware, but many more repeats. Sampling overhead ~ 1/P².
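The virtual route can be simulated shot by shot. Drawing a pair (i, j) with probability p_i p_j and running a Hadamard test that applies U_i or U_j controlled on an ancilla in |+⟩, then measuring X on the ancilla together with a Pauli observable O, gives a ±1 outcome whose mean is Re tr[O U_i ρ U_j†]. Averaged over pairs this is tr[O K ρ K†]/‖c‖₁², and with O = I it is the success probability P; their ratio is the normalized expectation value. The sketch below samples these outcomes classically from their exact means (a stand-in for hardware) and uses hypothetical helper names. Both estimates carry additive errors of order 1/√N, and dividing by P amplifies them by about 1/P, which is why accuracy ε needs on the order of 1/(ε²P²) shots.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]])
Z = np.array([[1, 0], [0, -1]], dtype=complex)

def hadamard_test_means(unitaries, rho, obs):
    """e[i, j] = Re tr[obs U_i rho U_j^dag], the mean of the +/-1 product of the ancilla-X
    and system-`obs` outcomes in a Hadamard test on the pair (U_i, U_j)."""
    m = len(unitaries)
    e = np.empty((m, m))
    for i, Ui in enumerate(unitaries):
        for j, Uj in enumerate(unitaries):
            e[i, j] = np.real(np.trace(obs @ Ui @ rho @ Uj.conj().T))
    return e

def virtual_lcu_estimate(coeffs, unitaries, rho, obs, shots, rng):
    """Randomized-LCU estimate of tr[obs K rho K^dag] / tr[K rho K^dag], together with P.

    Outcomes are drawn from their exact means, standing in for hardware shots; `obs` must
    have eigenvalues in {+1, -1} (a Pauli string or the identity) for the +/-1 model to hold.
    """
    p = np.asarray(coeffs, dtype=float)
    p = p / p.sum()
    m = len(p)

    def sampled_mean(o):
        e = hadamard_test_means(unitaries, rho, o)
        i = rng.choice(m, size=shots, p=p)  # (i, j) ~ p_i p_j, drawn independently
        j = rng.choice(m, size=shots, p=p)
        outcomes = np.where(rng.random(shots) < (1 + e[i, j]) / 2, 1.0, -1.0)
        return outcomes.mean()

    numerator = sampled_mean(obs)               # estimates tr[obs K rho K^dag] / ||c||_1^2
    P_hat = sampled_mean(np.eye(rho.shape[0]))  # estimates P = tr[K rho K^dag] / ||c||_1^2
    return numerator / P_hat, P_hat

rng = np.random.default_rng(7)
rho = np.array([[1, 0], [0, 0]], dtype=complex)  # input state |0><0|
estimate, P_hat = virtual_lcu_estimate([0.1, 0.3, 0.6], [X, Y, Z], rho, Z, 200_000, rng)
# exact values for comparison: <Z> = 0.26 / 0.46 (about 0.565) and P = 0.46
```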

The new idea (hybrid LCU):

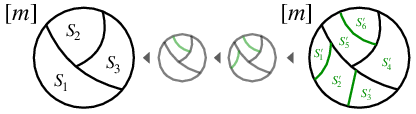

- Group the actions into a few subsets.

- Inside each group, build a smaller “coherent mix” (so you only need a few helper qubits and shorter circuits).

- Across groups, use random sampling (like in the virtual method).

- This gives you a “middle” approach: some extra hardware, but fewer repeats than the fully virtual method.
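Grouping is a rewriting of the same operator: with normalized weights p_i, set q_k = Σ_{i∈S_k} p_i and K_k = Σ_{i∈S_k} (p_i/q_k) U_i, so that Σ_i p_i U_i = Σ_k q_k K_k. Each K_k is again a convex combination of unitaries (norm at most 1) and gets a small coherent LCU of its own, while the outer mixture over k is sampled as pairs (k, k′) ∼ q_k q_k′. The sketch below, with hypothetical helper names, checks the identity on a four-term Pauli example.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]])
Z = np.array([[1, 0], [0, -1]], dtype=complex)

def group_operators(coeffs, unitaries, groups):
    """Rewrite sum_i p_i U_i as sum_k q_k K_k for a partition `groups` of the term indices.

    q_k = sum_{i in S_k} p_i is the group weight used for classical (virtual) sampling;
    K_k = sum_{i in S_k} (p_i / q_k) U_i is the smaller mix implemented coherently.
    """
    p = np.asarray(coeffs, dtype=float) / np.sum(coeffs)
    q, K = [], []
    for S in groups:
        qk = p[S].sum()
        q.append(qk)
        K.append(sum(p[i] / qk * unitaries[i] for i in S))
    return np.array(q), K

def sample_group_pair(q, rng):
    """Draw (k, k') ~ q_k q_k' for one virtual (Hadamard-test) shot across groups."""
    return tuple(rng.choice(len(q), size=2, p=q))

coeffs = [0.4, 0.3, 0.2, 0.1]
units = [I2, X, Y, Z]
q, K = group_operators(coeffs, units, [[0, 1], [2, 3]])  # two groups of two terms each
full = sum(c * U for c, U in zip(coeffs, units))          # weights already sum to 1
```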

A key concept they introduce is the “reduction factor” R:

- R tells you how many repeats you need compared to the fully virtual method.

- R is always between 1 (fully virtual) and P (fully coherent).

- Bigger groups lower R (better sampling), but need more helper qubits and deeper circuits.

- Smaller groups raise R (worse sampling), but need less hardware.
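The summary does not spell out R's formula. As an assumption, the sketch below uses one natural candidate: R = Σ_k q_k tr[K_k ρ K_k†], the weighted average success probability of the group-level block-encodings. It reproduces the limits stated above (R = 1 for singleton groups, R = P for a single group), and because X ↦ tr[XρX†] is convex, merging groups can only lower it. The paper's exact definition may differ; `reduction_factor` and `success_prob` are hypothetical helpers.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]])
Z = np.array([[1, 0], [0, -1]], dtype=complex)

def success_prob(K, rho):
    """tr[K rho K^dag]: post-selection probability of a block-encoding of K on input rho."""
    return float(np.real(np.trace(K @ rho @ K.conj().T)))

def reduction_factor(coeffs, unitaries, groups, rho):
    """Candidate R = sum_k q_k tr[K_k rho K_k^dag] (hypothetical definition, see lead-in)."""
    p = np.asarray(coeffs, dtype=float) / np.sum(coeffs)
    R = 0.0
    for S in groups:
        qk = p[S].sum()
        Kk = sum(p[i] / qk * unitaries[i] for i in S)  # group-level mix, ||K_k|| <= 1
        R += qk * success_prob(Kk, rho)
    return R

coeffs, units = [0.1, 0.3, 0.6], [X, Y, Z]
rho = np.array([[1, 0], [0, 0]], dtype=complex)  # input |0><0|
P = success_prob(sum(c * U for c, U in zip(coeffs, units)), rho)  # about 0.46
for groups in ([[0], [1], [2]], [[0, 1], [2]], [[0], [1, 2]], [[0, 1, 2]]):
    print(groups, round(reduction_factor(coeffs, units, groups, rho), 4))
# [[0], [1], [2]] 1.0   (fully virtual)
# [[0, 1], [2]] 0.85
# [[0], [1, 2]] 0.6
# [[0, 1, 2]] 0.46      (fully coherent: R = P)
```

Which intermediate grouping wins depends on ρ and on how the U_i interfere, not only on group size.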

Analogy: If you pre-mix ingredients in small bowls before serving, you spend a bit more prep time (quantum hardware), but you save time when serving many people (fewer repeats). If you don’t pre-mix at all, you need lots of serving runs to average out good results.

Main results and why they matter

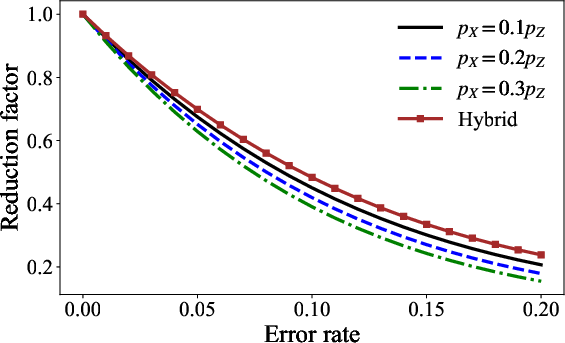

- Reduction factor R: The authors prove that R decreases as you make groups larger. In short: more coherent work inside groups means fewer samples needed later.

- Sampling overhead bridges smoothly: With grouping, the sampling cost for ratios (like expectation values divided by success probability) scales between 1/P² (virtual) and 1/P (coherent). You can choose where you land by how you group.

- Resource tradeoff is explicit: They show exactly how many helper qubits and gates you need based on the largest group size, and how that affects sampling costs through R.

- Multi-round extension: If your algorithm uses several LCU steps in sequence, the idea extends naturally; the overall sampling cost multiplies the R factors from each step.
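The bridging and multi-round statements can be turned into a back-of-the-envelope planner. This sketch assumes (i) total shots scale as R/P² times a Hoeffding factor, which matches both endpoints above (R = 1 gives 1/P², R = P gives 1/P), (ii) the ancilla count is one Hadamard-test qubit plus a ⌈log₂ max_k |S_k|⌉-qubit PREPARE register, and (iii) R factors multiply across rounds. All three are simplifications for illustration; the helper names are hypothetical, and the cost of estimating P and R themselves is ignored.

```python
import math

def ancilla_count(groups):
    """Assumed count: a PREPARE register for the largest group plus one Hadamard-test qubit."""
    largest = max(len(S) for S in groups)
    return math.ceil(math.log2(largest)) + 1

def shot_estimate(P, R, obs_norm=1.0, eps=1e-2, delta=0.05):
    """Hoeffding-style total shots ~ 2 ||O||^2 R ln(2/delta) / (eps^2 P^2).

    Post-selecting the group-level flags keeps a fraction R of shots; kept outcomes lie in
    [-||O||, ||O||] with mean P<O>/R, so eps-accuracy in <O> needs 2||O||^2 ln(2/delta)
    (R / (eps P))^2 kept shots, i.e. the count above in total.
    """
    return math.ceil(2 * obs_norm**2 * R * math.log(2 / delta) / (eps**2 * P**2))

def multi_round_R(Rs):
    """Reduction factors of successive LCU rounds multiply."""
    return math.prod(Rs)

P = 0.01
virtual = shot_estimate(P, R=1.0)   # singleton groups
hybrid = shot_estimate(P, R=0.1)    # some intermediate grouping
coherent = shot_estimate(P, R=P)    # one group
```

For P = 0.01, moving from R = 1 to R = P shrinks the estimate by a factor of 100, the quadratic-to-linear gap described above, while the ancilla count grows from 1 to ⌈log₂ m⌉ + 1.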

Applications:

- Non-Hermitian dynamics (simulating systems with gain/loss): By smart grouping, they report up to a 32× reduction in circuit depth and 4 fewer ancilla qubits, while keeping the sampling overhead essentially the same.

- Quantum linear system solving: Their method achieves “intermediate scaling” between virtual and coherent solvers in how the cost depends on the condition number κ (how hard the system is to solve) and the target accuracy ε.

- Ground-state preparation (finding lowest-energy states): They propose a new scheme that uses only one resettable ancilla qubit and a controlled time-evolution gate, showing asymptotic advantages over both purely virtual and fully coherent approaches in certain regimes.

- Quantum error detection: By viewing error detection as an LCU process, they show a practical rule of thumb: use standard (coherent) detection for frequent errors, and virtual detection for rare errors. This balances hardware cost with sampling cost.
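To see error detection as an LCU, recall that for commuting stabilizer generators S_1, …, S_r the code-space projector is Π = ∏_j (I + S_j)/2 = 2^(-r) Σ_{g∈G} g, a uniform-weight combination of Pauli-product unitaries. The sketch checks this for the three-qubit bit-flip code (an illustrative choice, not taken from the paper) and computes the acceptance probability tr[ΠρΠ] for a state with a 10% bit-flip error on the first qubit. `stabilizer_lcu` and `kron_all` are hypothetical helpers.

```python
import itertools
from functools import reduce

import numpy as np

I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.diag([1.0, -1.0])

def kron_all(ops):
    """Tensor product of a list of single-qubit operators."""
    return reduce(np.kron, ops)

def stabilizer_lcu(generators):
    """Return the 2^r stabilizer-group elements and the projector 2^-r * sum_g g.

    For commuting generators S_j this equals prod_j (I + S_j)/2, the code-space projector:
    an LCU with uniform weights over Pauli-product unitaries.
    """
    dim = generators[0].shape[0]
    elements = []
    for bits in itertools.product([0, 1], repeat=len(generators)):
        g = np.eye(dim)
        for b, S in zip(bits, generators):
            if b:
                g = g @ S
        elements.append(g)
    return elements, sum(elements) / len(elements)

# Three-qubit bit-flip code with stabilizers Z1Z2 and Z2Z3.
gens = [kron_all([Z, Z, I2]), kron_all([I2, Z, Z])]
elements, Pi = stabilizer_lcu(gens)

# |000> (index 0) with probability 0.9, X-flipped |100> (index 4) with probability 0.1.
rho = np.zeros((8, 8))
rho[0, 0], rho[4, 4] = 0.9, 0.1
P_pass = np.trace(Pi @ rho @ Pi)  # probability that detection flags no error
```

Each factor (I + S_j)/2 is itself a two-term LCU, which suggests (as an assumption about how a selective policy could be realized) implementing some factors coherently and sampling the rest virtually.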

Why is this important?

- Flexible design: The method gives algorithm designers a “dial” to tune between hardware usage and sampling overhead, rather than being stuck with only two extremes.

- Early fault-tolerant devices: For near-future quantum computers that can do some error correction but still have hardware limits, this hybrid approach helps you get better results without overloading the machine.

- Clear, provable guarantees: The paper provides clean mathematical bounds showing how performance changes as you adjust group sizes, and it ties these results directly to real algorithms.

Implications and impact

- Practical quantum advantage: Many useful quantum algorithms depend on LCU. This work makes them more practical by reducing either circuit demands or measurement demands, depending on your constraints.

- A guide for algorithm engineering: The reduction factor R and its monotonic behavior provide a clear blueprint for how to group operations in different tasks.

- Better error handling: Viewing error detection through the LCU lens helps decide when to spend hardware effort and when to lean on sampling, improving reliability at lower cost.

- Long-term: As quantum hardware improves, you can smoothly shift toward larger groups (more coherent mixing) and benefit from fewer samples, yet still keep the option to stay lightweight if needed.

In short, the paper offers a smart middle path for building LCU-based quantum algorithms: do a little more on the quantum side to save a lot on the classical side, with precise control over the tradeoff and solid theory behind it.

Knowledge gaps, limitations, and open questions

Below is a concise list of concrete gaps and unresolved questions that emerge from the paper, intended to guide future research.

- Optimal grouping strategy under resource constraints:

- No constructive algorithm is provided to choose the partition {S_k} that minimizes the reduction factor R while respecting hardware constraints (e.g., ancilla budget, depth, connectivity).

- Open: formulate and solve the optimization problem “minimize R[{S_k}; ρ] subject to ancilla and gate budgets,” including worst-case (over ρ) and average-case variants, and develop efficient heuristics or provably near-optimal algorithms.

- Dependence of R on the (unknown) input state ρ:

- R[{S_k}; ρ] depends on ρ, but grouping must often be chosen without precise knowledge of ρ (or ρ varies across rounds).

- Open: design state-agnostic or robust grouping strategies (e.g., worst-case bounds over a known family of states, adaptive grouping with online updates, or grouping that minimizes a Lipschitz/VC-bounded surrogate of R).

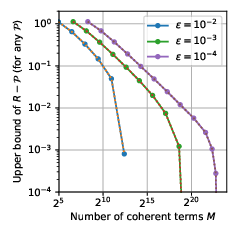

- Adaptive estimation and finite-sample procedures:

- Theorems assume knowledge of P = tr[Λ̃(ρ)] and R, or appeal to asymptotic estimation; finite-sample, noise-robust procedures with confidence bounds are not specified.

- Open: develop adaptive sampling schemes to jointly estimate R and P with certified confidence intervals, and analyze their end-to-end sample complexity (including the ratio estimator’s error amplification).

- Noise and fault-tolerant overhead modeling:

- The analysis assumes exact block-encodings and error-free PREPARE/SELECT; there is no robustness analysis for gate synthesis errors, control errors, SPAM, or approximate block-encodings.

- Open: quantify bias and variance inflation under realistic FTQC noise models; derive error-propagation bounds for Theorems 1–2; incorporate logical error rates, syndrome extraction, and mid-circuit reset costs into the resource model.

- Controlled-U_i and SELECT costs omitted from gate counts:

- Resource formulas exclude the cost of implementing controlled-U_i and multi-controlled SELECT, which may dominate in practice.

- Open: give explicit, architecture-aware gate counts (Clifford+T, Toffoli depth, T-count/T-depth, QROM/QROAM costs), including data loading and control overheads, and characterize how these scale with m and max_k |S_k|.

- Efficient block-encodings of K_k:

- The paper notes that finding more efficient block-encodings for K_k is an important direction, but gives no constructions beyond standard LCU.

- Open: design structure-exploiting block-encodings (e.g., using sparsity, commutativity, tensor products, oracles, QROM-based techniques) that reduce ancilla, T-count, or depth for group-level operators.

- Integration with amplitude amplification and amplitude estimation:

- The framework does not incorporate amplitude amplification (to boost P) or quantum amplitude estimation (to cut 1/ε² to 1/ε), nor does it analyze their tradeoffs with grouping.

- Open: characterize when amplitude amplification is beneficial under grouping constraints; analyze joint use of hybrid grouping with QAE/QSVT-style estimators; determine overall optimal asymptotic and practical regimes.

- Multi-round composition and grouping schedules:

- For r rounds, the multiplicative R factor can blow up; there is no study of optimal grouping schedules across rounds or joint optimization across the entire pipeline.

- Open: design round-dependent partitions that control ∏_μ R_μ while meeting cumulative hardware budgets; analyze benefits of adaptive re-grouping as ρ evolves; derive upper/lower bounds against coherent and virtual baselines.

- Negative/quasi-probability coefficients:

- The analysis assumes non-negative coefficients (phases absorbed into U_i), but many LCU/quasi-probability expansions include signed weights that cannot be phase-absorbed.

- Open: extend the theory to quasi-probability decompositions with negativity, define the analogue of R (including L1 “negativity” overheads), and establish sample complexity bounds and monotonicity results.

- General CP maps with multiple Kraus operators:

- The main theory targets a single-operator CP map Λ(·) = K(·)K†; real-world channels often have multiple Kraus operators.

- Open: generalize Theorems 1–2 to multi-Kraus maps and analyze how grouping across operators (and within each operator’s LCU) affects R, P, and resource tradeoffs.

- Observable structure and measurement overhead:

- Results bound complexity using ||O||, but practical algorithms require decomposing O into Pauli sums or compatible bases; overhead and variance reduction from structure (commutativity, shadow tomography, control variates) are not explored.

- Open: co-design grouping and measurement schemes (e.g., grouping aligned with commuting subsets of Ui and measurement bases for O) to reduce variance and circuit depth.

- Concrete thresholds for error-detection application:

- The paper argues to use conventional detection for frequent errors and virtual detection for rare ones, but lacks a quantitative decision rule tied to R, P, code parameters, and noise rates.

- Open: derive explicit thresholds and policies (e.g., based on syndrome detection probabilities, stabilizer weights, and hardware error rates) with provable performance guarantees under realistic noise models and specific codes.

- Linear combination of Hamiltonian simulation (LCHS) details:

- The LCHS application is sketched; rigorous error budgeting (truncation and quadrature errors vs. sampling error vs. grouping-induced variance), and systematic tuning of parameters (K1, K2, quadrature nodes M, group sizes) are not provided.

- Open: develop an end-to-end parameter optimization framework, with provable bounds and practical heuristics, and validate across diverse A (including non-normal and ill-conditioned cases).

- Time-dependent and inhomogeneous differential equations:

- Extensions to A(t) or du/dt = −A(t)u + b(t) are noted but not developed.

- Open: generalize the LCHS-based hybrid method to time-dependent and inhomogeneous settings, including error analysis for time-ordering, discretization, and grouping.

- Ground state preparation and QLSS claims:

- The abstract claims asymptotic/intermediate scaling improvements for ground-state preparation and QLSS, but the paper (as presented) lacks full end-to-end complexity derivations with explicit κ- and ε-dependence, constants, and fault-tolerant costs.

- Open: deliver comprehensive resource analyses (ancillas, depth, T-count, samples) vs. κ and ε, comparing hybrid grouping with purely virtual/coherent and with state-of-the-art QSVT/variable-time/oblivious-amplification approaches.

- Exploiting structure in U_i:

- The grouping design ignores algebraic structure (e.g., commuting families, low-rank updates, tensor-product structure, locality) that could enable cheaper K_k or better R.

- Open: develop structure-aware grouping tailored to specific applications (e.g., local Hamiltonians, stabilizer groups, sparse or block-diagonal U_i), and quantify gains.

- Practical sampling and classical overhead:

- The method requires sampling (k, k′) ∼ q_k q_k′ and managing varying PREPARE circuits; classical overheads (sampling, table lookups, QROM, schedule management) are not analyzed.

- Open: provide classical runtime/memory analyses and implement sampling/QROM pipelines that scale to large m and heterogeneous group sizes; quantify the real-time control burden.

- Architectural and connectivity constraints:

- Depth/ancilla estimates do not consider physical layout, limited connectivity, or crosstalk; cost of implementing 3-body ABS interactions is not mapped to hardware-native gates.

- Open: compile the hybrid circuits to concrete architectures (superconducting, ion traps, photonics) and evaluate layout-aware depth/latency; explore decomposition strategies that reduce long-range control.

- Finite-precision effects in coefficients:

- Effects of finite precision in p_i, q_k, and K_k synthesis (e.g., from quadrature in LCHS or approximate weights) on estimator bias and success probability P are not quantified.

- Open: derive stability bounds and precision requirements to keep bias below target ε and ensure reliable confidence guarantees.

- Benchmarks and experimental validation:

- Apart from a brief numerical claim for LCHS, no comprehensive benchmarks are presented across problem sizes, Hamiltonian classes, or hardware noise levels.

- Open: produce systematic numerical and (where possible) experimental studies comparing virtual, hybrid, and coherent LCU across m, P, R, and device constraints, including ablation studies of grouping strategies.

- Lower bounds and optimality of the hybrid tradeoff:

- While R is shown to be monotone with group size, there is no lower bound showing that the proposed grouping-based hybrid is optimal among all hybrid methods under given resource constraints.

- Open: establish complexity lower bounds (information-theoretic or query-complexity) for any hybrid scheme interpolating between P⁻² and P⁻¹ sampling overheads; investigate whether alternative hybrids (beyond partitioning) can outperform the proposed method.

- Extensions beyond qubits and to broader channels:

- Applicability to qudit systems and continuous-variable platforms, and to more general non-unitary primitives (e.g., non-isometric maps) is not addressed.

- Open: adapt the hybrid LCU framework and theorems to qudits/CV, and to broader classes of linear maps, including CPTP maps realized via dilations with multiple ancillas.
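For the first two gaps above, exhaustive search gives a reference baseline on tiny instances: enumerate every partition {S_k} (Bell-number many, so only a handful of terms), discard those whose largest group exceeds a size cap set by the ancilla budget, and keep the one with the smallest R for a given ρ. The R used here is a hypothetical candidate, Σ_k q_k tr[K_k ρ K_k†], not necessarily the paper's definition, and `best_grouping` is an illustrative name. The explicit `rho` argument makes the state dependence of the optimum visible.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]])
Z = np.array([[1, 0], [0, -1]], dtype=complex)

def set_partitions(items):
    """Yield every partition of `items` into non-empty blocks (Bell-number many)."""
    if not items:
        yield []
        return
    first, rest = items[0], items[1:]
    for part in set_partitions(rest):
        for k in range(len(part)):  # add `first` to an existing block
            yield part[:k] + [[first] + part[k]] + part[k + 1:]
        yield [[first]] + part      # or give it a block of its own

def reduction_factor(p, unitaries, groups, rho):
    """Hypothetical candidate R = sum_k q_k tr[K_k rho K_k^dag] for normalized weights p."""
    R = 0.0
    for S in groups:
        qk = p[S].sum()
        Kk = sum(p[i] / qk * unitaries[i] for i in S)
        R += qk * np.real(np.trace(Kk @ rho @ Kk.conj().T))
    return R

def best_grouping(coeffs, unitaries, rho, max_group_size):
    """Exhaustive search for the partition with the smallest R under a group-size cap."""
    p = np.asarray(coeffs, dtype=float) / np.sum(coeffs)
    feasible = (g for g in set_partitions(list(range(len(p))))
                if max(len(S) for S in g) <= max_group_size)
    return min(((reduction_factor(p, unitaries, g, rho), g) for g in feasible),
               key=lambda pair: pair[0])

rho = np.array([[1, 0], [0, 0]], dtype=complex)
R2, groups2 = best_grouping([0.1, 0.3, 0.6], [X, Y, Z], rho, max_group_size=2)
# a cap of two terms per group (one PREPARE qubit) selects [[0], [1, 2]] with R = 0.6 here
```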

Practical Applications

Immediate Applications

The following items can be deployed with existing quantum software stacks and early fault-tolerant or high-quality error-mitigated hardware, relying on controlled unitaries, single-ancilla resets, and classical post-processing.

- Hybrid LCU compiler/optimizer for early FTQC workloads

- Sector: software; industry and academia

- Use case: A compiler/middleware pass that automatically partitions LCU terms into groups to minimize sampling overhead (via the reduction factor R) while respecting hardware constraints on ancilla count and circuit depth.

- Tools/products/workflows: “LCU Grouping Optimizer” that

- 1) analyzes the LCU decomposition K = ∑ c_i U_i,

- 2) estimates q_k, R for candidate partitions,

- 3) selects groups to balance hardware (ancilla, depth) and sampling cost,

- 4) emits circuits implementing L_k and randomized pairing (k, k′) with the X-on-B measurement workflow.

- Assumptions/dependencies: Availability of block-encodings or controlled-U_i; ability to estimate or bound success probability P and R; hardware support for fast qubit reset and accurate single-qubit measurements.

- Depth/ancilla reduction for non-Hermitian dynamics (LCHS)

- Sector: healthcare, materials/energy; academia and industry

- Use case: Apply hybrid LCU grouping to truncated and discretized linear combination of time evolutions in non-Hermitian dynamics simulators (e.g., chemical reaction kinetics, transport in materials) to cut circuit depth and ancilla usage while keeping sampling overhead near that of the original LCU.

- Tools/products/workflows: “LCHS Hybrid Executor” that partitions high-weight terms coherently and tail terms virtually; integrates trapezoidal rule discretization and k-domain partitioning; provides an R-aware job plan.

- Assumptions/dependencies: Time-evolution oracles for H + kL are implementable; truncation/discretization errors are managed; probability mass localization enables beneficial fragmentation as suggested by the bounds on R.

- Intermediate-scaling quantum linear system solver

- Sector: finance (risk, portfolio optimization), logistics, engineering; academia and industry

- Use case: Use grouping to achieve sampling and ancilla scaling intermediate between virtual and fully coherent LCU variants, improving runtime for target condition numbers κ and accuracies ε.

- Tools/products/workflows: “Hybrid QLS Solver” that chooses group sizes based on κ, ε, and hardware limits; provides resource forecasts for ancilla and shots using R and P.

- Assumptions/dependencies: Block-encodings of A and controlled reflections are available; κ, ε regimes permit practical gain; error mitigation or early FT is sufficient to run multi-round LCU maps.

- Single-ancilla virtual ground-state preparation (VGSP) with hybrid advantages

- Sector: materials/energy, pharma; academia and industry

- Use case: Implement the proposed ground-state preparation scheme that repeats controlled time-evolution with one resettable ancilla, leveraging hybrid grouping to asymptotically inherit benefits of both virtual and coherent LCU (improved sampling at low ancilla cost).

- Tools/products/workflows: “Single-Ancilla VGSP” library that integrates controlled-U(t) scheduling, reset-and-measure workflows, and adaptive grouping based on observed P.

- Assumptions/dependencies: Controlled time evolution is realizable at requisite precisions; ancilla reset latency is low; spectral gap and filter choices yield practical convergence.

- Selective quantum error detection (QED) policy: conventional vs virtual

- Sector: software, quantum hardware services; policy within organizations; academia and industry

- Use case: Treat QED as an LCU process (projector expanded in stabilizers) to decide when to deploy conventional detection (hardware-heavy, better sampling) versus virtual detection (constant depth, worse sampling) based on error rates (frequent vs rare).

- Tools/products/workflows: “VQED Selector” that monitors syndrome statistics, estimates R and the marginal sampling cost of virtual detection for low-rate errors, and switches modes per stabilizer.

- Assumptions/dependencies: Stabilizer measurements or controlled stabilizers available; accurate online error-rate estimation; overhead models capturing ancilla and depth constraints.

- Resource estimation and benchmarking suite for R and P

- Sector: software; academia and industry

- Use case: Provide standardized reporting and forecasting of reduction factor R, success probability P, and resulting shot counts for hybrid LCU workflows across applications (chemistry, materials, PDEs, ML).

- Tools/products/workflows: “R–P Estimator” integrated in SDKs (Qiskit, Cirq, tket) and cloud portals to inform job planning, budget, and scheduling.

- Assumptions/dependencies: Access to term weights p_i and operator norms; Hoeffding-style bounds apply to targeted observables; empirical calibration of R/P on small instances generalized to larger runs.

- Adaptive grouping during execution

- Sector: software; academia and industry

- Use case: Start with a coarse partition; adapt group boundaries online using observed shot statistics to approach lower R at fixed ancilla limits.

- Tools/products/workflows: “Adaptive Hybrid LCU Runtime” employing Bayesian or bandit strategies to refine groups S_k during job progress.

- Assumptions/dependencies: Streaming telemetry of outcome distributions; stability of hardware noise characteristics; lightweight recompilation or conditional branching.
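The “LCHS Hybrid Executor” item above relies on discretizing an integral of time evolutions into LCU weights. A minimal sketch, assuming the original LCHS identity for a time-independent A = L + iH with L ⪰ 0, namely e^{-At} = ∫ dk η(k) exp(-i(H + kL)t) with η(k) = 1/(π(1 + k²)): truncate to |k| ≤ k_max, apply the trapezoidal rule with step h, and compare against a reference exponential. The values of k_max and h, the random test matrix, and the helper names are illustrative, and dense diagonalization stands in for the time-evolution oracles.

```python
import numpy as np

def expm_taylor(M, terms=30, squarings=10):
    """Reference matrix exponential via scaling-and-squaring of a truncated Taylor series."""
    Xs = M / 2**squarings
    E = np.eye(M.shape[0], dtype=complex)
    term = np.eye(M.shape[0], dtype=complex)
    for n in range(1, terms):
        term = term @ Xs / n
        E = E + term
    for _ in range(squarings):
        E = E @ E
    return E

def lchs_terms(A, t, k_max=200.0, h=0.1):
    """Trapezoidal LCU for e^{-At} ~ sum_j c_j exp(-i (H + k_j L) t), truncated to |k| <= k_max.

    L = (A + A^dag)/2 must be positive semidefinite and H = (A - A^dag)/(2i); the weights
    discretize eta(k) = 1 / (pi (1 + k^2)). Returns nodes k_j, weights c_j, unitaries U_j.
    """
    L = (A + A.conj().T) / 2
    H = (A - A.conj().T) / 2j
    k = np.linspace(-k_max, k_max, int(round(2 * k_max / h)) + 1)
    step = k[1] - k[0]
    c = step / (np.pi * (1 + k**2))
    c[[0, -1]] /= 2                                  # trapezoidal end-point weights
    w, V = np.linalg.eigh(k[:, None, None] * L + H)  # every H + k L is Hermitian
    U = (V * np.exp(-1j * w * t)[:, None, :]) @ V.conj().transpose(0, 2, 1)
    return k, c, U

rng = np.random.default_rng(0)
d, t = 3, 1.0
B = rng.normal(size=(d, d)) + 1j * rng.normal(size=(d, d))
G = rng.normal(size=(d, d)) + 1j * rng.normal(size=(d, d))
A = B @ B.conj().T / d + 1j * (G + G.conj().T) / 2  # L = B B^dag / d is PSD by construction
k, c, U = lchs_terms(A, t)
approx = np.tensordot(c, U, axes=1)                  # sum_j c_j U_j
error = np.linalg.norm(approx - expm_taylor(-A * t), 2)
```

Because η has heavy 1/k² tails, the truncation error of this original form decays only like 2/(π k_max), while most of the weight sits near k = 0 (about (2/π)·arctan 10 ≈ 0.94 for |k| ≤ 10). That concentration is what makes coherent treatment of the central terms and virtual treatment of the tails plausible.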

Long-Term Applications

These items require further research, scaling, hardware maturation, and integration into quantum operating systems and cloud platforms.

- Cross-layer co-design: hardware features for block-encodings and fast ancilla reset tailored to hybrid LCU

- Sector: quantum hardware; industry and academia

- Use case: Architect qubit topologies, control stacks, and microcode that natively support efficient L_k block-encodings, rapid mid-circuit resets, and low-latency X-on-B measurement—all tuned to reduce R and depth.

- Tools/products/workflows: Hardware–software co-optimization pipelines; “LCU-native” control firmware; standardized APIs for block-encoding primitives.

- Assumptions/dependencies: Stable FTQC-era devices with repeatable reset and control; availability of controlled-U_i libraries; error rates compatible with multi-round hybrid maps.

- Quantum OS scheduling policies that optimize R vs ancilla/depth system-wide

- Sector: cloud quantum services; policy and governance within providers

- Use case: Global schedulers decide grouping strategies per job to maximize throughput under ancilla scarcity and depth limits, applying the monotonicity of R to meet SLAs.

- Tools/products/workflows: “R-aware Quantum Scheduler” integrating resource models into admission control, pricing, and queue management.

- Assumptions/dependencies: Mature telemetry, resource accounting, and standardized reporting of R, P, m, and group sizes; user acceptance of adaptive compilation.

- Large-scale PDE/differential equation solvers with hybrid LCU for scientific computing

- Sector: energy, climate modeling, engineering; academia and industry

- Use case: Deploy hybrid LCU in extensive non-Hermitian and time-dependent PDE solvers, enabling tractable sampling costs while controlling ancilla growth, to impact grid optimization, materials discovery, and climate simulations.

- Tools/products/workflows: End-to-end “Hybrid Quantum PDE Solver” with discretization, oracle construction, partition planning, error bounding, and verification steps.

- Assumptions/dependencies: Efficient oracles for complex Hamiltonians; robust error correction; validated convergence analyses beyond the early FTQC scale.

- Hybrid LCU for quantum machine learning and spectroscopy tasks

- Sector: AI/ML, pharma, materials; academia and industry

- Use case: Integrate grouping-based LCU into quantum kernel methods, spectral estimators, and feature maps that rely on linear combinations of unitaries, trading circuit depth for improved shot efficiency.

- Tools/products/workflows: ML-oriented “LCU Feature Composer” that performs grouping-aware kernel construction and training-time shot allocation based on R estimates.

- Assumptions/dependencies: Well-characterized U_i families; stability of training under stochastic measurement noise; alignment with application-specific accuracy needs.

- Automated partition discovery using optimization and learning

- Sector: software; academia and industry

- Use case: Employ combinatorial optimization and reinforcement learning to discover partitions S_k that minimize end-to-end runtime subject to hardware budgets, leveraging inequalities for R (e.g., harmonic mean bounds).

- Tools/products/workflows: “Partition RL” engine integrated in compilers; explainable policies for audit and compliance.

- Assumptions/dependencies: Reliable proxies for runtime and error; generalization across instance classes; cost of re-compilation amortized over large jobs.

- Standards and policy for resource-aware quantum algorithm reporting

- Sector: policy; standards bodies; industry consortia

- Use case: Establish guidelines requiring disclosure of R, P, ancilla counts, depth, and sampling overheads for fair benchmarking and procurement, aligned with the paper’s monotonicity insights.

- Tools/products/workflows: Reporting templates; certification processes; procurement scorecards emphasizing hybrid LCU efficiency.

- Assumptions/dependencies: Community consensus; measurable, reproducible metrics; transparent SDK support.

- End-user impacts in healthcare and materials via improved ground-state preparation

- Sector: healthcare, materials/energy

- Use case: With scalable FTQC, hybrid VGSP pipelines translate into faster, more accurate ground-state property estimation (binding energies, reaction pathways), accelerating drug and material design.

- Tools/products/workflows: Domain-specific workflows coupling hybrid VGSP with classical post-processing and validation.

- Assumptions/dependencies: High-fidelity controlled time evolutions; problem instances where hybrid grouping materially lowers total cost; verified advantage over classical baselines.

Notes on assumptions and dependencies common across applications:

- Decomposition K = ∑ c_i U_i must be obtainable efficiently; phases can be absorbed into U_i, but finding optimal decompositions can be hard and may rely on problem structure.

- Success probability P and reduction factor R drive sampling complexity; practical deployments may estimate them online rather than require exact values.

- Grouping benefits increase when the probability mass p_i is localized, allowing fragmentation of low-weight tails with minimal impact on R.

- The performance proofs rely on bounded observable norms and classical concentration (e.g., Hoeffding), adequate in typical Hadamard-test-based workflows.

- Block-encoding and controlled-U primitives must be available or approximated; improved block-encodings can further reduce gate counts beyond the baseline LCU construction.

- Multi-round applications multiply R across rounds; error rates, reset latencies, and measurement fidelities must be controlled for advantages to manifest.

Glossary

- Ancilla qubit: A helper qubit used to facilitate quantum operations without carrying problem data itself. "a shallower quantum circuit with a single ancilla qubit"

- Block-encoding: A technique that embeds a (suitably normalized) non-unitary matrix as a block of a larger unitary, so that the matrix acts on the system when the ancilla register is projected onto a fixed state such as |0…0⟩. "any (error-free) block-encoding of ."

- Coherent LCU: The fully quantum implementation of linear combination of unitaries that prepares superpositions using ancillas and controlled operations. "the full coherent LCU "

- Completely positive map (CP map): A linear map that preserves positivity even when extended by the identity to a larger system; trace-non-increasing CP maps describe physically realizable (possibly post-selected) operations. "a completely positive map (CP map) "

- Condition number (κ): A measure of the sensitivity of a linear system’s solution to input perturbations; crucial for algorithmic complexity. "with respect to the condition number "

- Controlled unitary: A unitary operation applied conditionally based on the state of one or more control qubits. "complex controlled unitary operators"

- Fault-tolerant quantum computing (FTQC): Quantum computing with error-correction ensuring reliable execution despite noise. "early FTQC algorithms"

- Ground-state preparation: Procedures to prepare the lowest-energy eigenstate of a Hamiltonian. "virtual ground-state preparation scheme that requires only a resettable single-ancilla qubit"

- Hadamard test: A circuit technique using an ancilla and interference to estimate real or imaginary parts of expectation values. "Monte-Carlo sampling combined with the Hadamard test"

- Hamiltonian simulation: Quantum methods to approximate the time evolution governed by a Hamiltonian operator. "coherent techniques for quantum speedups such as Hamiltonian simulation"

- LCU lemma: A result guaranteeing that PREPARE–SELECT constructions implement linear combinations of unitaries upon post-selection. "which is known as the LCU lemma"

- Linear combination of Hamiltonian simulation (LCHS): An approach that represents propagators as integrals of time evolutions, enabling simulation via LCU. "linear combination of Hamiltonian simulation (LCHS) for non-Hermitian dynamics simulation"

- Linear combination of unitaries (LCU): A framework for implementing non-unitary operations by probabilistically combining unitary circuits. "The linear combination of unitaries (LCU) algorithm is a building block of many quantum algorithms."

- Mixed unitary channel: A quantum channel that is a convex mixture of unitary operations. "there exists a mixed unitary channel "

- Non-Hermitian dynamics: Evolution governed by non-Hermitian generators, relevant for open systems and dissipative processes. "non-Hermitian dynamics simulators"

- Pauli X: A single-qubit quantum gate and observable that flips |0⟩ and |1⟩ and is used in interference-based measurements. "by measuring Pauli X in the top register"

- Partial trace: An operation tracing out subsystems to obtain reduced states or marginal quantities. "where denotes the partial trace over the ancilla system"

- Post-selection: Conditioning on specific measurement outcomes to implement desired (often non-unitary) transformations. "via an appropriate post-selection"

- PREPARE operation: The LCU step that encodes probability amplitudes over an ancilla register. "The PREPARE operation is used to encode the coefficient "

- Projection probability: The probability of successfully projecting onto the desired ancilla subspace in LCU procedures. "the projection probability "

- Quantum error detection: Techniques to detect (and potentially discard) states with errors without necessarily correcting them. "quantum error detection for error suppression"

- Quantum linear system solver: Algorithms for preparing states proportional to solutions of linear systems using quantum primitives. "quantum linear system solver"

- Reduction factor: A quantity determining the sampling overhead in hybrid LCU by capturing the effect of grouping. "by introducing a quantity called the reduction factor"

- SELECT operation: The LCU step that applies the appropriate unitary conditioned on the ancilla register. "The SELECT operation acts on the whole system "

- Stabilizer unitary operator: An element of a stabilizer group (a product of Pauli operators) used to define and project onto quantum error-correcting code spaces. "a linear combination of stabilizer unitary operators"

- Success probability: The probability that an LCU circuit yields the desired post-selected outcome (often the ancilla |0…0⟩ state). "The success probability for implementing $\tilde{\Lambda}_{p,\mathcal{U}}$ is given by"

- Virtual LCU: A stochastic, measurement-based approach that estimates expectations without coherently preparing the target state. "the virtual LCU method"

- Virtual quantum error detection (VQED): A constant-depth, sampling-based error detection approach trading circuit complexity for increased sampling. "virtual quantum error detection (VQED)"
