- The paper introduces a novel pipeline combining REACT and SemaLens, which leverages LLMs and VLMs to transform ambiguous requirements into precise, testable specifications.

- The paper describes automated error detection and test case generation intended to reduce manual burden and improve certification readiness for safety-critical systems.

- The paper’s framework improves semantic traceability and reliability through real-time monitoring and semantic coverage analysis, fostering greater trust in AI systems.

Leveraging Foundation Models for Assurance in AI-Enabled Safety-Critical Systems

Motivation and Problem Statement

Safety-critical systems integrating AI components, particularly DNNs in domains such as aerospace and autonomous vehicles, present formidable challenges for assurance. The primary obstacles stem from the opacity of neural architectures, the semantic gap between natural-language requirements and their low-level representations, and longstanding bottlenecks in Requirements Engineering (RE): ambiguity, imprecision, inconsistency, and scalability limitations. These complications are exacerbated in heterogeneous systems blending conventional and learning-enabled components. Capturing operational uncertainty, confidence bounds, and emergent behaviors in specifications further stretches the limits of traditional approaches.

Framework Overview

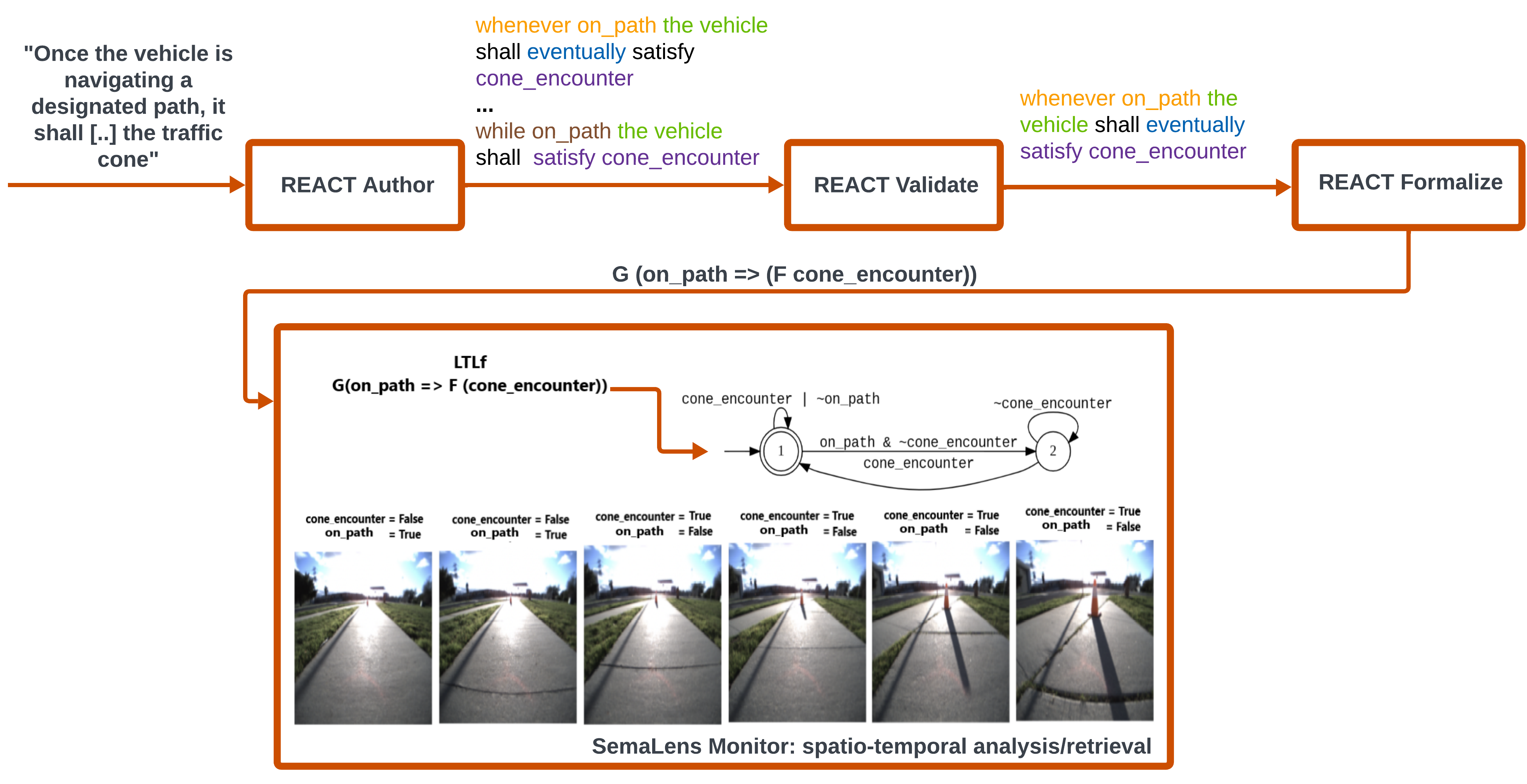

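The paper proposes an integrated pipeline, described as 'fighting AI with AI', that uses foundation models (LLMs and VLMs) for systematic assurance across the life cycle. It introduces two complementary components: REACT, which covers requirements authoring, formalization, analysis, and test generation, and SemaLens, which covers VLM-based monitoring, testing, and debugging of perception components.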

Detailed System and Workflow

The system’s core workflow proceeds from English requirements to runtime monitoring. REACT covers the requirements side:

- Authoring and Validation: REACT Author ingests unrestricted text and produces multiple candidate translations in Restricted English, a grammar-constrained representation that supports unambiguous interpretation. (Restricted English is spelled out here to avoid confusion with Requirements Engineering, which is also abbreviated RE.) Human-in-the-loop validation is supported by trace-based scenario differentiation: example traces that distinguish the candidates let domain experts select the semantically correct formulation.

- Formalization: Selected Restricted English requirements are automatically transformed into formal specifications (e.g., LTLf, linear temporal logic over finite traces). The architecture accommodates extensions for probabilistic properties and uncertainty quantification, which are crucial for learning-enabled components.

- Automated Analysis: REACT Analyze enables automated detection of inconsistencies, conflicts, and ambiguities, minimizing expensive downstream corrections.

- Test Case Generation: Starting from the formalized requirements, REACT synthesizes coverage-driven test suites with explicit traceability to the originating requirements, facilitating compliance with standards like DO-178C and emerging AI assurance frameworks.
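To make the formalization and analysis steps concrete, the following is a minimal sketch of how an LTLf requirement could be evaluated over a finite trace and how a conflict between requirements could be detected by bounded search. It is not REACT's implementation: the tuple encoding of formulas, the function names `holds` and `find_witness`, the proposition names, and the brute-force search bound are all assumptions made for illustration.

```python
from itertools import product
from typing import List, Optional, Sequence, Set, Union

# Hypothetical encoding: an atom is a string, a compound formula is a tuple
# such as ("always", ("implies", "obstacle", ("eventually", "brake"))).
Formula = Union[str, tuple]
Trace = Sequence[Set[str]]  # each step is the set of propositions that hold


def holds(f: Formula, trace: Trace, i: int = 0) -> bool:
    """Evaluate formula f at position i of a finite, non-empty trace."""
    if isinstance(f, str):
        return f in trace[i]
    op = f[0]
    if op == "not":
        return not holds(f[1], trace, i)
    if op == "and":
        return holds(f[1], trace, i) and holds(f[2], trace, i)
    if op == "or":
        return holds(f[1], trace, i) or holds(f[2], trace, i)
    if op == "implies":
        return (not holds(f[1], trace, i)) or holds(f[2], trace, i)
    if op == "next":  # strong next: false at the last position
        return i + 1 < len(trace) and holds(f[1], trace, i + 1)
    if op == "always":
        return all(holds(f[1], trace, j) for j in range(i, len(trace)))
    if op == "eventually":
        return any(holds(f[1], trace, j) for j in range(i, len(trace)))
    if op == "until":
        for j in range(i, len(trace)):
            if holds(f[2], trace, j):
                return True
            if not holds(f[1], trace, j):
                return False
        return False
    raise ValueError(f"unknown operator: {op!r}")


def atoms(f: Formula) -> Set[str]:
    """Collect the proposition names used in a formula."""
    if isinstance(f, str):
        return {f}
    return set().union(*(atoms(g) for g in f[1:]))


def find_witness(formulas: List[Formula], max_len: int = 3) -> Optional[List[Set[str]]]:
    """Return a trace of length <= max_len satisfying all formulas, or None.

    None only means "no witness within the bound"; it is not a proof of
    unsatisfiability for longer traces.
    """
    props = sorted(set().union(*(atoms(f) for f in formulas)))
    states = [
        {p for p, bit in zip(props, bits) if bit}
        for bits in product([False, True], repeat=len(props))
    ]
    for n in range(1, max_len + 1):
        for trace in product(states, repeat=n):
            if all(holds(f, trace) for f in formulas):
                return list(trace)
    return None


# "Whenever an obstacle is detected, the brake is eventually applied."
req_brake = ("always", ("implies", "obstacle", ("eventually", "brake")))
req_no_brake = ("always", ("not", "brake"))
req_obstacle = ("eventually", "obstacle")

ok_trace = [set(), {"obstacle"}, set(), {"brake"}]
bad_trace = [{"obstacle"}, set(), set()]

print(holds(req_brake, ok_trace))    # True
print(holds(req_brake, bad_trace))   # False
print(find_witness([req_brake, req_no_brake, req_obstacle]))  # None
```

The last call returns `None` because the three requirements cannot hold together on any trace up to the bound, which is the kind of conflict an automated analysis step would surface early. A production tool would use an LTLf satisfiability procedure rather than enumeration, which grows exponentially with the number of propositions and the trace length.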

SemaLens integrates VLM-powered modules:

- Semantic Monitoring: VLMs (e.g., CLIP) extract concepts and spatial relationships from image/video sequences. Temporal logics specify properties evaluated through concept similarity scores, enabling both offline risk identification (e.g., accident scenario mining) and online conformance monitoring.

- Test Input Generation: Text-conditional diffusion models generate diverse, semantically-rich test images and videos aligned to requirements’ preconditions, enhancing robustness evaluation beyond conventional simulation domains.

- Coverage Metrics: Semantic coverage metrics assess feature coverage across datasets, identifying gaps without manual annotation. Embedding space alignment between DNNs and VLMs extends both black-box and white-box testing.

- Debugging and Explanation: Cross-model alignment permits concept-based reasoning about perception system decisions. Semantic heatmaps and feature localization support explainable debugging and runtime detection of adversarial inputs, both of which matter for operational assurance.
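The monitoring and coverage ideas above can be sketched as follows, assuming that frame and concept embeddings have already been produced by a VLM such as CLIP. Nothing here is SemaLens's actual API: the toy three-dimensional vectors, the function names, the concept names, and the 0.8 threshold are hypothetical stand-ins, and a real threshold would need calibration against the chosen model's similarity scores.

```python
import math
from typing import Dict, List, Sequence, Set


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def label_frames(
    frames: List[Sequence[float]],
    concept_embs: Dict[str, Sequence[float]],
    threshold: float = 0.8,  # illustrative value, not a recommended setting
) -> List[Set[str]]:
    """Turn per-frame similarity scores into sets of detected concepts."""
    return [
        {name for name, emb in concept_embs.items() if cosine(frame, emb) >= threshold}
        for frame in frames
    ]


def violations_always_not(labels: List[Set[str]], concept: str) -> List[int]:
    """Frame indices that violate the property 'always not <concept>'."""
    return [i for i, present in enumerate(labels) if concept in present]


def semantic_coverage(labels: List[Set[str]], concepts: List[str]) -> float:
    """Fraction of the listed concepts detected in at least one frame."""
    seen = set().union(*labels) if labels else set()
    return len(seen & set(concepts)) / len(concepts)


# Toy embeddings standing in for VLM text embeddings of each concept.
concept_embs = {
    "pedestrian": [1.0, 0.0, 0.0],
    "stop_sign": [0.0, 1.0, 0.0],
    "fog": [0.0, 0.0, 1.0],
}
# Toy embeddings standing in for VLM image embeddings of three frames.
frames = [
    [0.9, 0.1, 0.0],
    [0.1, 0.95, 0.05],
    [0.7, 0.7, 0.0],
]

labels = label_frames(frames, concept_embs)
print(labels)
print(violations_always_not(labels, "pedestrian"))
print(semantic_coverage(labels, list(concept_embs)))
```

In this toy data the third frame is ambiguous between two concepts and falls below the threshold for both, and "fog" never appears, so the coverage function reports a gap that would prompt collecting or generating foggy test inputs. Richer temporal properties could be checked over the same per-frame label sets with a finite-trace temporal logic evaluator.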

Empirical Results and Claims

Although the paper is a conceptual proposal, it makes several strong claims:

- Traceability and Early Error Detection: The workflow is designed to catch semantic ambiguities and consistency errors at the requirements stage, reducing costly downstream remediation.

- Scalability: Using LLMs and VLMs makes it possible to process large, complex requirement sets and heterogeneous data modalities, addressing the scalability bottlenecks endemic to traditional Requirements Engineering.

- Robustness and Reliability: SemaLens-powered monitoring detects deviations in real time, contributing to safer AI operation in critical contexts.

- Testing Coverage: The framework supports comprehensive requirements-based testing of perception models, with guarantees on coverage across semantic features and operational domains.

- Manual Effort Reduction: Automated formalization, coverage analysis, and test generation fundamentally lower the burden on domain experts.

Implications and Future Directions

The integration of foundation models for assurance signals a paradigm shift in safety-critical AI system engineering:

- Certification Standards Evolution: The combination of REACT and SemaLens targets certification needs that DO-178C and similar standards do not address, aligning with the AI assurance guidance under development by the SAE G-34 committee.

- Explainability and Trust: Semantic analysis and concept-based debugging expand explainability mechanisms for opaque DNNs, enhancing trust and facilitating regulatory acceptance.

- Semantic Robustness Evaluation: Generation and analysis of out-of-distribution and adversarial scenarios extend the robustness frontier, crucial for autonomous systems operating in unpredictable environments.

- Lifecycle Integration: The end-to-end approach points to self-adaptive assurance pipelines, with AI systems continuously monitored and validated against evolving requirements.

Potential future developments include more sophisticated semantic mapping algorithms, deeper integration with text-conditional generative models for scenario synthesis, and federated model evaluation frameworks that interoperate with operational field data. The use of foundation models for assurance will likely extend into cross-domain applications, further blurring the line between AI development and verification.

Conclusion

This paper delineates a comprehensive framework for assurance in AI-enabled safety-critical systems by leveraging the reasoning capabilities of foundation models. The integration of REACT and SemaLens establishes an automated, scalable, and rigorous pipeline from requirements authoring to implementation monitoring, directly addressing challenges in semantic traceability, ambiguity resolution, and test coverage. The practical and theoretical implications include accelerated certification processes, enhanced explainability, and a new frontier in AI lifecycle assurance. The work contributes foundational concepts and methods that are poised to influence future standards and tools for high-assurance AI engineering.