- The paper introduces a real-time 3D recoloring system that maps 2D mask selections to precise 3D edits using Gaussian Splatting.

- It combines interactive-rate depth estimation, statistical outlier removal, and TensorRT optimization to keep both the 2D-to-3D unprojection and the update of the Gaussians' color harmonics efficient.

- Benchmark evaluations demonstrate millisecond-level responsiveness and competitive GPU memory usage (~10 GB) compared to diffusion-based approaches.

Real-time 3D Scene Editing via ReCoGS: Precision Recoloring with Gaussian Splatting

Introduction and Motivation

The ReCoGS ("ReColoring for Gaussian Splatting scenes") framework introduces an interactive, user-driven approach for pixel-precise recoloring of 3D Gaussian Splatting (3DGS) representations. Gaussian Splatting methods have demonstrated higher efficiency and reconstruction fidelity in novel view synthesis than established neural scene representations such as NeRF. However, 3DGS is optimized purely photometrically, without explicit geometric constraints, so direct scene editing remains difficult. This is especially true when edits must be view-consistent, fine-grained, and interactive. ReCoGS addresses this gap with a pipeline built around real-time performance, granular mask-based selection, and direct background optimization, and it is designed to run on consumer-grade hardware.

Methodology and Pipeline

ReCoGS frames editing as an interactive mapping from a 2D user selection to the corresponding 3D scene region. This enables high-fidelity recoloring without full scene re-training or heavy pre-processing.

Interactive Editing Pipeline

The user interface allows free exploration of the scene. From any view, users can select arbitrary pixel regions with a brush tool. The resulting 2D mask is unprojected to 3D using depth from a pre-trained stereo depth estimator (PCVNet) together with the camera intrinsics and extrinsics. This produces a point cloud of the targeted scene region.
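The unprojection step can be sketched as a standard pinhole back-projection. This is a minimal illustration, not ReCoGS code. It makes the following assumptions:

- a per-pixel z-depth map, as would be derived from PCVNet's disparity;
- intrinsics `fx, fy, cx, cy`;
- world-to-camera extrinsics `R, t`;
- integer pixel coordinates taken as pixel centers.

The function name `unproject_mask` is hypothetical.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def unproject_mask(
    mask: Sequence[Sequence[bool]],
    depth: Sequence[Sequence[float]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
    R: Sequence[Sequence[float]],  # world-to-camera rotation, 3x3 row-major (assumed convention)
    t: Sequence[float],            # world-to-camera translation (assumed convention)
) -> List[Vec3]:
    """Lift every selected pixel (u, v) with z-depth z to a world-space point."""
    points: List[Vec3] = []
    for v, row in enumerate(mask):
        for u, selected in enumerate(row):
            z = depth[v][u]
            if not selected or z <= 0.0:
                continue  # skip unselected pixels and pixels without valid depth
            # Pinhole back-projection into camera coordinates.
            p_cam = ((u - cx) * z / fx, (v - cy) * z / fy, z)
            # Invert x_cam = R @ x_world + t, i.e. x_world = R^T @ (x_cam - t).
            d = [p_cam[i] - t[i] for i in range(3)]
            px, py, pz = (sum(R[j][i] * d[j] for j in range(3)) for i in range(3))
            points.append((px, py, pz))
    return points
```

Each selected pixel with valid depth yields exactly one 3D point, so the point cloud density follows the brush resolution in the chosen view.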

Figure 1: ReCoGS editing pipeline with per-pixel selection, 2D-to-3D unprojection, and background optimization workflow.

The unprojected point cloud undergoes outlier rejection and is rendered in real time with depth testing against the scene's rough depth. The resulting 3D mask is then reprojected into all training views, and the pixels it covers are tinted according to the user's color filter. The scene parameters (specifically the spherical harmonic coefficients of the affected Gaussians) are then optimized in the background, using the edited images as supervision.
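Reprojecting the 3D mask into a training view, with a depth test and tinting, might look as follows. This sketch makes several assumptions, none of which come from the paper:

- intrinsics `fx, fy, cx, cy` and world-to-camera extrinsics `R, t`;
- a per-view rendered depth map `scene_depth` standing in for the scene's rough depth;
- a tolerance `eps`;
- a simple linear blend toward a user color as the "filter".

The function names are hypothetical. Each point is written to a single pixel, so a practical version would splat points with a footprint to avoid holes.

```python
import math
from typing import List, Sequence, Tuple

Color = Tuple[float, float, float]


def reproject_mask(
    points: Sequence[Tuple[float, float, float]],
    width: int,
    height: int,
    fx: float,
    fy: float,
    cx: float,
    cy: float,
    R: Sequence[Sequence[float]],
    t: Sequence[float],
    scene_depth: Sequence[Sequence[float]],
    eps: float = 0.05,
) -> List[List[bool]]:
    """Project 3D selection points into one view, keeping only unoccluded hits."""
    mask = [[False] * width for _ in range(height)]
    for p in points:
        # World to camera: x_cam = R @ x_world + t.
        xc = [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]
        z = xc[2]
        if z <= 0.0:
            continue  # point lies behind the camera
        # Nearest pixel, treating integer coordinates as pixel centers.
        u = math.floor(fx * xc[0] / z + cx + 0.5)
        v = math.floor(fy * xc[1] / z + cy + 0.5)
        if not (0 <= u < width and 0 <= v < height):
            continue
        # Depth test against the rough scene depth; occluded points are dropped.
        if z <= scene_depth[v][u] + eps:
            mask[v][u] = True
    return mask


def tint(
    image: Sequence[Sequence[Color]],
    mask: Sequence[Sequence[bool]],
    color: Color,
    strength: float = 0.5,
) -> List[List[Color]]:
    """Blend masked pixels linearly toward `color`; unmasked pixels are unchanged."""
    return [
        [
            tuple((1.0 - strength) * c + strength * k for c, k in zip(px, color)) if m else px
            for px, m in zip(row_px, row_m)
        ]
        for row_px, row_m in zip(image, mask)
    ]
```

The depth test is what keeps a selection made on a foreground object from bleeding onto surfaces hidden behind it in other views.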

Depth Map Estimation and Filtering

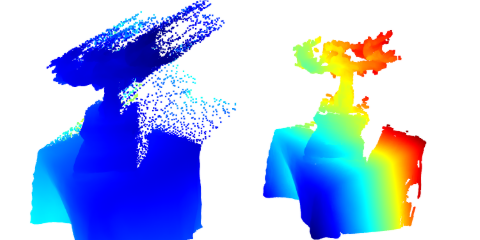

Accurate depth is essential for transferring a selection precisely from 2D to 3D. Several depth estimation models were considered, and PCVNet was ultimately adopted for its computational performance and acceptable qualitative output. Disparity maps estimated from horizontal and vertical stereo pairs are fused. Disparity is then converted to depth using the focal length and the relative camera shift (the stereo baseline).
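For a rectified stereo pair, depth and disparity are related by Z = f·B/d, where:

- f is the focal length in pixels;
- B is the baseline (the relative camera shift);
- d is the disparity.

The text states that horizontal and vertical estimates are fused but does not say how. The averaging rule below is therefore a hypothetical choice, as are the function names.

```python
from typing import List, Optional, Sequence


def disparity_to_depth(d: float, focal: float, baseline: float) -> Optional[float]:
    """Z = f * B / d for a rectified pair; non-positive disparity has no valid depth."""
    if d <= 0.0:
        return None
    return focal * baseline / d


def fuse_depth(
    disp_h: Sequence[Sequence[float]],
    disp_v: Sequence[Sequence[float]],
    fx: float,
    fy: float,
    baseline_h: float,
    baseline_v: float,
) -> List[List[float]]:
    """Hypothetical fusion rule: average the two depths where both are valid,
    otherwise use whichever one is valid; 0.0 marks pixels with no depth."""
    fused: List[List[float]] = []
    for row_h, row_v in zip(disp_h, disp_v):
        out_row: List[float] = []
        for dh, dv in zip(row_h, row_v):
            # Horizontal pairs use the x focal length, vertical pairs the y focal length.
            candidates = (
                disparity_to_depth(dh, fx, baseline_h),
                disparity_to_depth(dv, fy, baseline_v),
            )
            valid = [z for z in candidates if z is not None]
            out_row.append(sum(valid) / len(valid) if valid else 0.0)
        fused.append(out_row)
    return fused
```

Because Z is inversely proportional to d, a fixed disparity error produces a depth error that grows roughly with Z², so distant surfaces are the least reliable part of the selection.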

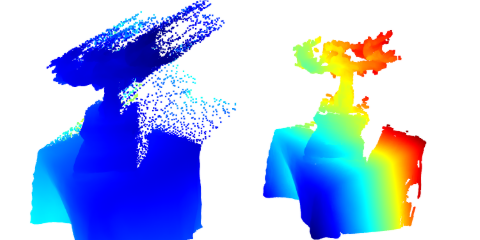

After unprojection, statistical outlier removal further refines the point cloud and prevents erroneous points from propagating into the selection.
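Statistical outlier removal is commonly implemented with a k-nearest-neighbour statistic, as in Open3D's `remove_statistical_outlier`. Whether ReCoGS uses exactly this variant is an assumption. The sketch uses a brute-force O(n²) neighbour search for clarity, where a KD-tree would be used in practice. `statistical_outlier_removal`, `k` and `std_ratio` are illustrative names.

```python
import math
from statistics import mean, pstdev
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def statistical_outlier_removal(
    points: Sequence[Point], k: int = 8, std_ratio: float = 2.0
) -> List[Point]:
    """Drop points whose mean distance to their k nearest neighbours exceeds
    the global mean of that statistic by more than std_ratio standard deviations."""
    if len(points) <= k:
        return list(points)  # too few points to estimate neighbourhood statistics
    mean_knn: List[float] = []
    for i, p in enumerate(points):
        # Brute-force neighbour search over all other points.
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_knn.append(sum(dists[:k]) / k)
    threshold = mean(mean_knn) + std_ratio * pstdev(mean_knn)
    return [p for p, d in zip(points, mean_knn) if d <= threshold]
```

Isolated points produced by wrong depth at object boundaries have large neighbour distances and are discarded. Dense, correctly unprojected regions are kept.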

Figure 2: Statistical outlier removal significantly improves mask fidelity after unprojection to 3D.

Optimizations such as reduced-precision inference (TensorRT with FP16) deliver substantial inference speedups and model size reductions, which keeps editing interactive.
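The sketch below illustrates why FP16 inference adds noise. It rounds a disparity value to IEEE 754 half precision, using Python's `struct` format character `'e'`, and measures the resulting depth error. It only models rounding of the final output. Error accumulated in FP16 activations inside the network is not captured, so treat it as a lower bound rather than a model of TensorRT's behavior. The function names and the focal length and baseline values in the test are hypothetical.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a float to the nearest IEEE 754 half-precision value and back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def fp16_depth_error(disparity: float, focal: float, baseline: float) -> float:
    """Absolute depth error caused only by storing the disparity in FP16."""
    z_ref = focal * baseline / disparity
    z_half = focal * baseline / to_fp16(disparity)
    return abs(z_half - z_ref)
```

Half precision carries 11 significant bits, so rounding alone introduces a relative error of at most about 2^-11 (roughly 0.05%). Because depth is inversely proportional to disparity, the same relative error carries over to depth.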

Figure 3: PCVNet depth predictions from the PyTorch model (top) and the TensorRT-FP16 model (bottom). Point cloud quality remains usable despite increased noise at lower precision.

Differentiable Optimization and Implementation

Rather than relying on an autograd framework, ReCoGS hand-codes the loss and gradient computations, using domain-specific kernels for efficient forward and backward passes. Only the color harmonics are updated. No new geometry is introduced, and the splat structure is left untouched.
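The hand-coded backward pass for color-only optimization can be illustrated on a single pixel and channel. This sketch assumes the standard 3DGS conventions: front-to-back alpha compositing and degree-0 spherical harmonics with color `SH_C0 * k + 0.5`. It also simplifies in several ways:

- it uses a plain L2 loss instead of the SSIM-based loss;
- it omits the clamp on negative colors;
- it ignores higher SH degrees.

All function names are hypothetical. Because opacities stay fixed, the gradient touches only the SH coefficients, matching the constraint that geometry is not modified.

```python
from typing import List, Sequence, Tuple

SH_C0 = 0.28209479177387814  # degree-0 real SH basis constant, 1 / (2 * sqrt(pi))


def blend_weights(alphas: Sequence[float]) -> List[float]:
    """Front-to-back compositing weights w_i = alpha_i * prod_{j<i} (1 - alpha_j)."""
    weights: List[float] = []
    transmittance = 1.0
    for a in alphas:
        weights.append(a * transmittance)
        transmittance *= 1.0 - a
    return weights


def render_pixel(sh_dc: Sequence[float], alphas: Sequence[float]) -> float:
    """One channel of one pixel: sum_i w_i * (SH_C0 * k_i + 0.5), clamping omitted."""
    return sum(w * (SH_C0 * k + 0.5) for w, k in zip(blend_weights(alphas), sh_dc))


def loss_and_grad(
    sh_dc: Sequence[float], alphas: Sequence[float], target: float
) -> Tuple[float, List[float]]:
    """L = 0.5 * (C - target)^2 and its hand-derived gradient
    dL/dk_i = (C - target) * w_i * SH_C0; opacities are treated as constants."""
    weights = blend_weights(alphas)
    residual = sum(w * (SH_C0 * k + 0.5) for w, k in zip(weights, sh_dc)) - target
    grad = [residual * w * SH_C0 for w in weights]
    return 0.5 * residual * residual, grad


def sgd_step(sh_dc: Sequence[float], grad: Sequence[float], lr: float) -> List[float]:
    """Plain gradient descent on the SH coefficients only."""
    return [k - lr * g for k, g in zip(sh_dc, grad)]
```

The rendered color is linear in each k_i, so the analytic gradient is exact and cheap. It reduces to one residual times a fixed blending weight, with no computation graph to record.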

Rasterization produces images in HWC (height, width, channel) layout for efficient GPU memory use, and these are converted to CHW layout for the SSIM-based loss. Modular threading and separate CUDA streams further increase hardware throughput.
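The HWC-to-CHW conversion is pure index arithmetic on a flat buffer. A plausible reason for using two layouts is that HWC keeps a pixel's channels contiguous for the rasterizer, while CHW keeps each channel plane contiguous for the per-channel Gaussian windows used by SSIM. This reason is an assumption and is not stated in the paper. `hwc_to_chw` is a hypothetical helper.

```python
from typing import List, Sequence


def hwc_to_chw(buf: Sequence[float], height: int, width: int, channels: int) -> List[float]:
    """Reorder a flat HWC image into a flat CHW image.

    HWC stores element (y, x, c) at (y * width + x) * channels + c;
    CHW stores the same element at (c * height + y) * width + x.
    """
    if len(buf) != height * width * channels:
        raise ValueError("buffer size does not match height * width * channels")
    out = [0.0] * len(buf)
    for y in range(height):
        for x in range(width):
            for c in range(channels):
                out[(c * height + y) * width + x] = buf[(y * width + x) * channels + c]
    return out
```

For example, a 1x2 RGB image `[r0, g0, b0, r1, g1, b1]` becomes `[r0, r1, g0, g1, b0, b1]`.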

Comparative Evaluation and Results

ReCoGS differs from prior work by offering true pixel-level, mask-based selection. It avoids the constraints and inefficiency of 2D diffusion-model-based approaches and coarse segmentation pipelines. Methods such as ICE-G, PaintSplat, and various diffusion-assisted editors either require costly re-training, lack publicly available code, or limit selection granularity. ReCoGS, by contrast, achieves millisecond-level responsiveness and genuine interactivity.

Benchmarks show that recoloring edits can be propagated on large scenes (e.g., the bicycle, kitchen, and stump scenes from MipNeRF360). Total runtime depends on scene complexity and on how frequently the edited region appears across views. GPU memory consumption remains tractable (~10 GB for the largest scenes). This compares favorably with diffusion-based approaches, which exceed 20 GB and have multi-minute latency. The optimizer keeps refining in the background, so quality continues to improve over time.

Limitations and Prospects

Despite its real-time capability, the approach inherits the inaccuracies of the depth estimator and the limitations of the chosen mask rendering mechanism. Occasional holes or leaks in the selection arise from prediction errors or from the rough Gaussian depth test. Because only the color harmonics are optimized, edits are constrained when a Gaussian's support extends beyond the intended target region. Possible mitigations include segmenting Gaussians or dynamic densification. Custom stereo matching models tailored to Gaussian Splatting setups could further improve unprojection precision and efficiency.

Practical and Theoretical Implications

ReCoGS shows that interactive, pixel-level editing of 3DGS scenes is tractable without the overhead of full scene retraining or reliance on latent diffusion models. The same principle may extend to broader classes of explicit, photometrically aligned radiance field methods. The pipeline's fidelity in mapping user intent from 2D to 3D, combined with hardware-conscious optimization, sets a precedent for plug-and-play 3D editing tools. Because only the harmonics are optimized, color changes are decoupled from geometry, and edits propagate consistently across novel views.

Augmenting the method for general texture transfer, object manipulation, or structural refinement would require more intricate segmentation and geometry-aware optimization, which is an attractive direction for future research.

Conclusion

ReCoGS demonstrates a robust, interactive pipeline for region-specific recoloring of Gaussian Splatting scenes, built on precise 2D-to-3D selection, efficient depth estimation, and optimized background parameter updates. Its practical runtime, granular control, and modest resource requirements make it a viable framework for real-time 3D scene editing and a foundation for richer editing operations in explicit radiance field representations.