Dataset Safety in Autonomous Driving: Requirements, Risks, and Assurance

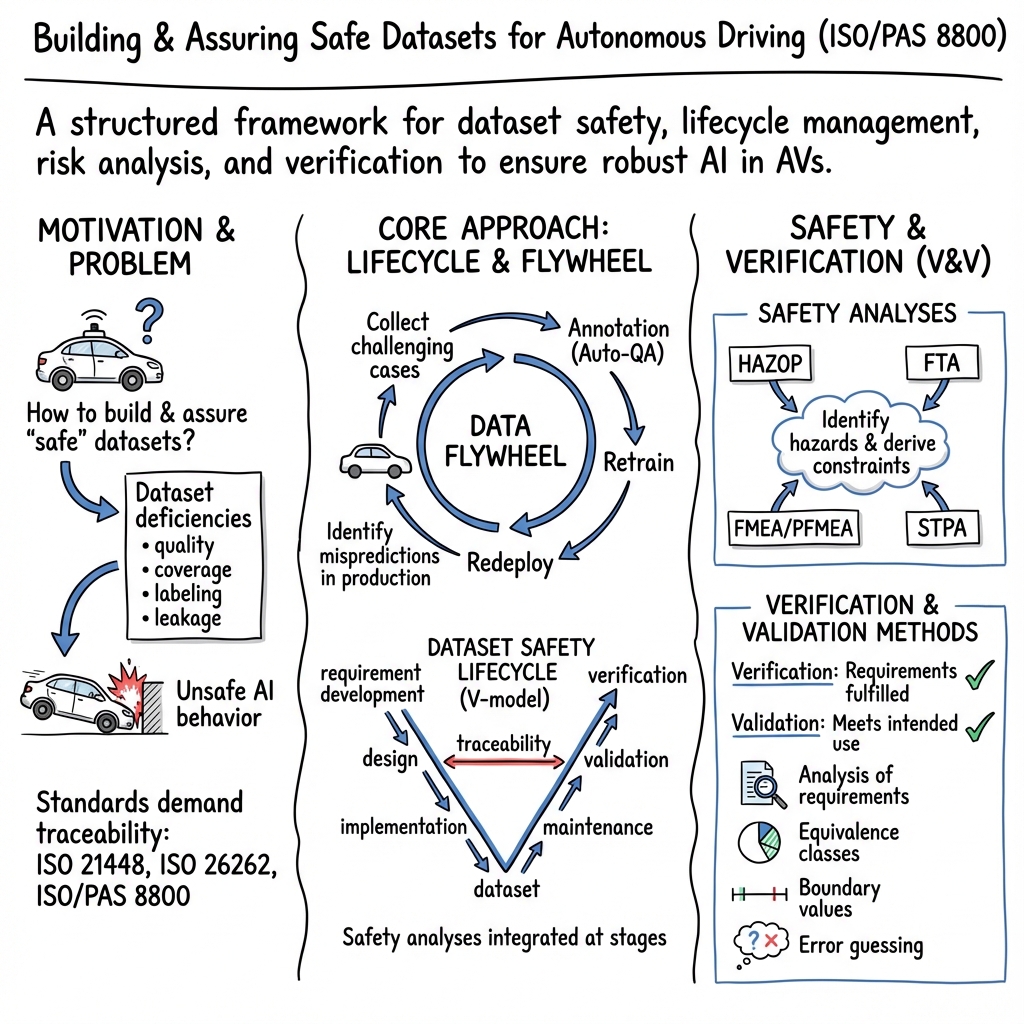

Abstract: Dataset integrity is fundamental to the safety and reliability of AI systems, especially in autonomous driving. This paper presents a structured framework for developing safe datasets aligned with ISO/PAS 8800 guidelines. Using AI-based perception systems as the primary use case, it introduces the AI Data Flywheel and the dataset lifecycle, covering data collection, annotation, curation, and maintenance. The framework incorporates rigorous safety analyses to identify hazards and mitigate risks caused by dataset insufficiencies. It also defines processes for establishing dataset safety requirements and proposes verification and validation strategies to ensure compliance with safety standards. In addition to outlining best practices, the paper reviews recent research and emerging trends in dataset safety and autonomous vehicle development, providing insights into current challenges and future directions. By integrating these perspectives, the paper aims to advance robust, safety-assured AI systems for autonomous driving applications.

Knowledge gaps, limitations, and open questions

The paper outlines a comprehensive framework but leaves several aspects under-specified or unvalidated. Future work could focus on the following concrete gaps:

- Define quantitative acceptance criteria and measurement protocols for ISO/PAS 8800 dataset safety properties (accuracy, completeness, correctness, independence, integrity, representativeness, temporality, traceability, verifiability), including thresholds, sampling strategies, and audit procedures.

- Operationalize the mapping from the operational design domain (ODD) to the dataset with a standardized scenario taxonomy and coverage metrics (e.g., how to measure “complete coverage” of ODD slices, rare events, and plausible perturbations), and provide tools for automated ODD gap analysis.

- Establish rigorous, multimodal methods for detecting train/evaluation leakage that go beyond perceptual hashing (pHash) and geographic splitting (e.g., temporal route re-identification across sensors, cross-modality correlations, environmental cues), with benchmark datasets and measurable leakage risk scores.

- Quantify the safety impact of dataset compression across modalities (camera, LiDAR, radar) on long-tail performance, sensor calibration, small object detection, and uncertainty calibration; define per-scenario compression safety tests and error-bounded settings that preserve safety-critical features.

- Provide empirical validation of the proposed automated annotation quality check pipeline (SAM + OpenCLIP) across tasks (2D/3D detection, semantic/instance segmentation, tracking), modalities (camera/LiDAR/radar), and conditions (night, adverse weather), including false positive/negative rates and human-in-the-loop efficiency gains.

- Specify annotation quality metrics and processes (inter-annotator agreement, label noise estimation, ground-truth uncertainty modeling, adjudication workflows), and quantify how label errors propagate to model safety risks.

- Detail policies for active data selection with vision-language models (VLMs), covering selection criteria, bias assessment, multilingual performance, and failure modes, and evaluate the risk that VLM-driven selection reinforces dataset biases or underrepresents critical scenarios.

- Formalize the “data flywheel” safety gating (criteria to accept/reject relabeled samples, guardrails against feedback loops that amplify spurious correlations, rollback policies), and demonstrate closed-loop safety evaluation on real deployments.

- Provide concrete algorithms and results for “optimized dataset collection” (cost models, multi-project trade-offs, route planning under coverage constraints, diminishing returns analysis), including ablation studies and economic benchmarks.

- Address dataset safety explicitly for end-to-end (E2E) planning systems and for autonomous driving based on vision-language or vision-language-action (VLM/VLA) models: what data and labels are required when perception is implicit, how to ensure planning safety without explicit detections, how to represent language/trajectory alignment, and which planning-focused dataset acceptance tests to define.

- Develop standardized metadata schemas and label taxonomies (classes, attributes, occlusion, relevance, lane association) with cross-dataset interoperability, unit conventions, time synchronization, and calibration protocols to support traceability and cross-benchmarking.

- Integrate distribution shift monitoring into the lifecycle with actionable triggers (which statistical distances or performance deltas mandate maintenance, thresholds per ODD slice, alerting policies, and response playbooks), and validate drift detection sensitivity/specificity.

- Provide applied case studies demonstrating HAZOP, FTA, FMEA/PFMEA, and STPA on real datasets, including templates, severity/occurrence/detection scoring, tool support, and quantification of risk reduction from mitigations.

- Expand verification and validation (V&V) beyond general statements to include scenario-based test design, coverage metrics, independence checks, adversarial robustness tests, and rare-event stress testing; the paper's V&V section is incomplete and needs a full methodology and supporting evidence.

- Map the framework to related standards (ISO 26262, ISO 21448/SOTIF, ISO/TR 4804, UNECE regulations) and define end-to-end compliance artifacts (e.g., dataset safety case structure, audit trails, evidence requirements).

- Incorporate data security and integrity assurance (poisoning/backdoor detection, provenance tracking, cryptographic signing/watermarking, supply chain risk management for autolabelers and third-party data) with measurable safeguards and incident response.

- Detail privacy-preserving dataset operations (PII detection, anonymization/pseudonymization efficacy, privacy risk assessments per modality—including in-cabin sensors—and compliance workflows for GDPR/CCPA), and quantify utility–privacy trade-offs.

- Address multimodal sensor selection and placement optimization with data-driven evaluation (blind-spot analysis, redundancy benefits, cost–performance trade-offs, effect on dataset coverage and label quality), including optimization formulations and results.

- Provide methods to quantify and improve long-tail/edge-case coverage (rare condition mining, targeted synthetic generation, active learning/uncertainty sampling), and report how interventions improve safety-relevant metrics.

- Establish causal links between dataset quality and end-to-end safety outcomes (define mediators like perception reliability, planning policy robustness, ODD boundary adherence), with controlled studies or quasi-experimental evidence.

- Describe governance, roles, and change control for datasets (versioning, lineage, traceability, impact analysis of modifications, sign-off criteria), and publish templates/checklists to support reproducible safety assurance.

- Include reproducible artifacts (open tools, benchmarks, scripts for leakage detection, drift monitoring, annotation QC) and public case studies to validate the framework across different organizations and datasets.

- Clarify maintenance schedules and lifecycle triggers (how often to refresh, which deployment telemetry signals drive updates, how to prioritize additions, rollback mechanisms) and evaluate maintenance effectiveness quantitatively.

- Investigate cross-market/region generalization (cultural and regulatory differences affecting annotations, signage, behavior), and quantify transferability; propose strategies for adaptive datasets that remain compliant and representative across jurisdictions.

- Define standardized dataset safety dashboards and composite indices (combining coverage, quality, independence, drift, and V&V results) to support ongoing monitoring, stakeholder communication, and regulatory audits.
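The first gap above asks for quantitative acceptance criteria tied to sampling strategies and audit procedures. As one illustration (a standard zero-failure binomial sampling plan, not a method taken from the paper or from ISO/PAS 8800), an audit sample size can be linked to an error-rate threshold: if n randomly drawn labels contain no defects, the claim "defect rate <= p" holds at confidence c whenever (1 - p)^n <= 1 - c. The helper name `zero_failure_sample_size` and the 1% / 95% criterion are hypothetical, and the sketch assumes independent random sampling from a large dataset.

```python
import math


def zero_failure_sample_size(max_defect_rate: float, confidence: float) -> int:
    """Hypothetical helper: smallest audit sample size n such that finding
    zero defective labels supports "defect rate <= max_defect_rate" at the
    given confidence.

    Binomial model (assumed i.i.d. sampling): P(0 defects | rate p) = (1 - p)**n.
    Requiring (1 - p)**n <= 1 - c gives n >= ln(1 - c) / ln(1 - p).
    """
    if not 0.0 < max_defect_rate < 1.0:
        raise ValueError("max_defect_rate must be in (0, 1)")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be in (0, 1)")
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - max_defect_rate))


# Hypothetical acceptance criterion: at most 1% mislabeled objects, 95% confidence.
print(zero_failure_sample_size(0.01, 0.95))  # 299
```

For p = 0.01 and c = 0.95 this yields 299 samples, close to the familiar "rule of three" approximation n ≈ 3/p. A single observed defect breaks the zero-failure claim, so a complete audit procedure would also need a plan for handling non-zero defect counts.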
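For the annotation-quality gap, inter-annotator agreement on categorical labels is often summarized with Cohen's kappa, kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is the agreement expected by chance from each annotator's label frequencies. The minimal sketch below computes it for two annotators who labeled the same objects in the same order; the function name and the class labels in the example are illustrative assumptions, and the paper does not prescribe this particular metric.

```python
from collections import Counter
from collections.abc import Hashable, Sequence


def cohens_kappa(labels_a: Sequence[Hashable], labels_b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or len(labels_a) == 0:
        raise ValueError("need two equally long, non-empty label sequences")
    n = len(labels_a)
    # p_o: fraction of items on which the two annotators agree.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # p_e: chance agreement from each annotator's marginal class frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if p_e == 1.0:
        # Both annotators used one identical class for every item; kappa is
        # undefined in this case, and this sketch returns 1.0 by convention.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical class labels for six objects, one list per annotator.
annotator_1 = ["car", "car", "pedestrian", "pedestrian", "car", "cyclist"]
annotator_2 = ["car", "pedestrian", "pedestrian", "pedestrian", "car", "car"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))  # 0.429
```

Kappa corrects the raw agreement (here 4 of 6 items, about 0.67) for agreement expected by chance. For boxes, masks, or 3D cuboids, agreement would first have to be defined over matched objects (for example, via an IoU threshold), which this sketch does not cover.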
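The distribution-shift gap calls for statistical distances and per-ODD-slice thresholds that trigger dataset maintenance. A minimal sketch, assuming categorical metadata (for example, weather tags) is counted per ODD slice for both the training reference and deployment telemetry, uses the population stability index, PSI = sum over categories of (a_i - e_i) * ln(a_i / e_i), where e_i is the reference share and a_i the deployment share. The 0.25 threshold is a common industry rule of thumb, not a value from the paper or ISO/PAS 8800; the slice names and the `drift_triggers` helper are hypothetical.

```python
import math
from collections.abc import Mapping


def population_stability_index(
    reference: Mapping[str, int],
    current: Mapping[str, int],
    eps: float = 1e-6,
) -> float:
    """PSI between two categorical count distributions.

    A category missing on one side gets a small floor share (eps) so that
    the log term stays finite.
    """
    ref_total = sum(reference.values())
    cur_total = sum(current.values())
    if ref_total == 0 or cur_total == 0:
        raise ValueError("both distributions need at least one observation")
    psi = 0.0
    for category in set(reference) | set(current):
        e = max(reference.get(category, 0) / ref_total, eps)  # reference share
        a = max(current.get(category, 0) / cur_total, eps)    # deployment share
        psi += (a - e) * math.log(a / e)
    return psi


def drift_triggers(
    reference_by_slice: Mapping[str, Mapping[str, int]],
    current_by_slice: Mapping[str, Mapping[str, int]],
    threshold: float = 0.25,  # assumed rule-of-thumb value; tune per slice
) -> dict[str, float]:
    """Hypothetical trigger: return {slice: psi} for ODD slices above threshold."""
    flagged: dict[str, float] = {}
    for slice_name, ref_counts in reference_by_slice.items():
        cur_counts = current_by_slice.get(slice_name)
        if cur_counts is None or sum(cur_counts.values()) == 0:
            # No deployment telemetry for this slice; a real policy might
            # raise a separate coverage alert instead of skipping it.
            continue
        psi = population_stability_index(ref_counts, cur_counts)
        if psi > threshold:
            flagged[slice_name] = psi
    return flagged


# Hypothetical weather-tag counts per ODD slice.
reference = {
    "urban_night": {"clear": 800, "rain": 150, "fog": 50},
    "highway_day": {"clear": 900, "rain": 100},
}
current = {
    "urban_night": {"clear": 400, "rain": 400, "fog": 200},
    "highway_day": {"clear": 880, "rain": 120},
}
print(drift_triggers(reference, current))
```

With these made-up counts, only `urban_night` is flagged (PSI of about 0.73), while `highway_day` stays near 0.004. PSI is only one choice of distance; the gap itself leaves open which distances, thresholds, and response playbooks a lifecycle process should adopt.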
