- The paper introduces an error-guided densification approach for 3D Gaussian Splatting that uses image-space photometric error and pixel-cone-aligned primitive sizing.

- It uses a lightweight iNGP-based initialization and error-targeted pixel sampling to place and scale new primitives, reducing redundancy.

- Empirical results show up to a 0.6 dB PSNR improvement and 20% faster rendering, with superior reconstructions using fewer primitives under budget constraints.

ConeGS: Error-Guided Densification Using Pixel Cones for Improved Reconstruction with Fewer Primitives

Introduction and Motivation

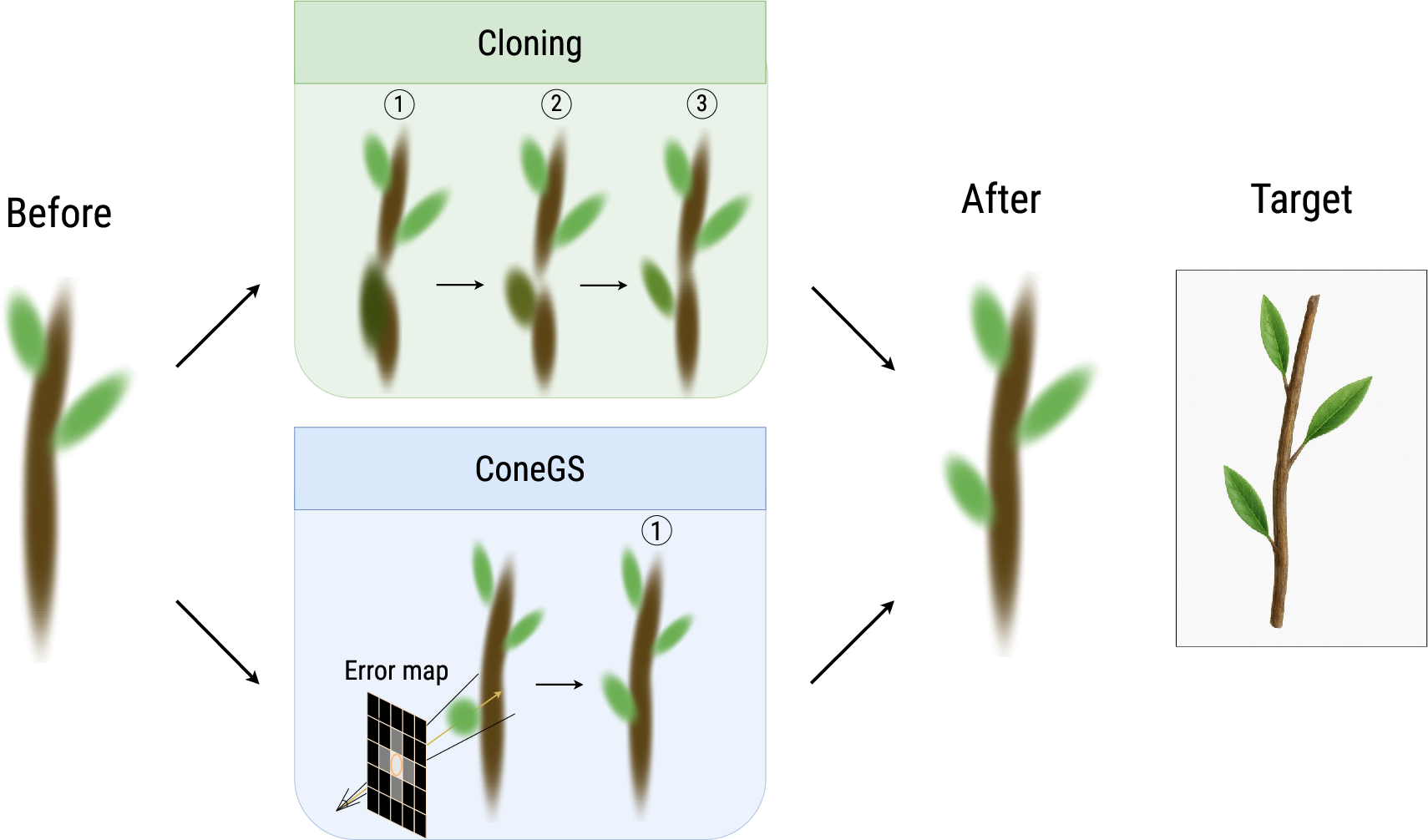

3D Gaussian Splatting (3DGS) offers a compelling paradigm for fast neural scene representation and novel view synthesis, but its conventional cloning-based densification leads to poor global primitive distributions and excessive redundancy, especially under constrained primitive budgets. ConeGS addresses these limitations with error-guided densification in image space and explicit pixel-cone-aligned sizing of new primitives, yielding improved reconstructions with fewer primitives. Because new primitives are not cloned from existing ones, growth is decoupled from the current geometry, allowing targeted exploration of underrepresented and high-frequency regions.

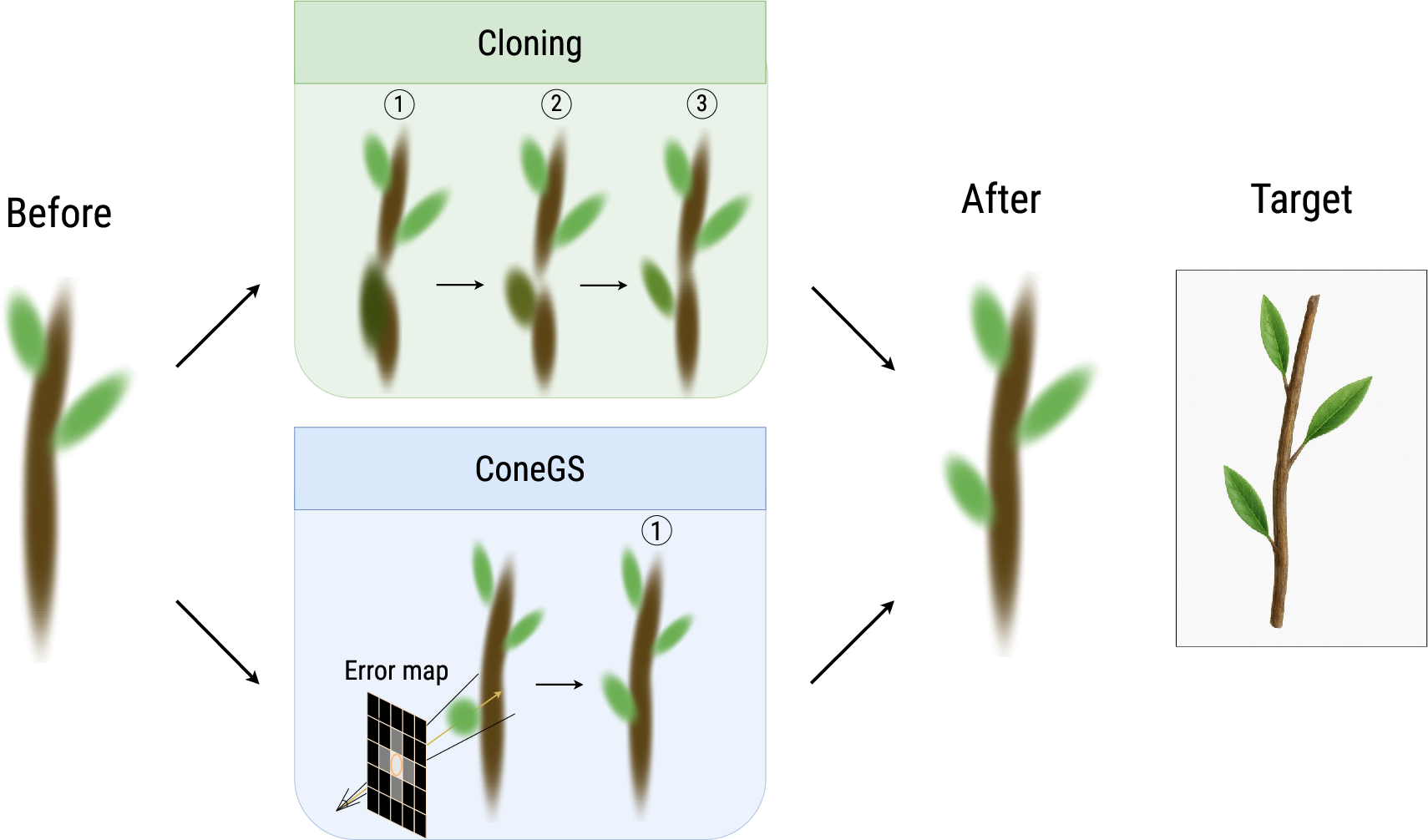

Figure 1: Densification comparison between cloning-based methods and ConeGS: error-guided cone-sized primitives offer faster and more precise scene integration.

Methodology

Overview

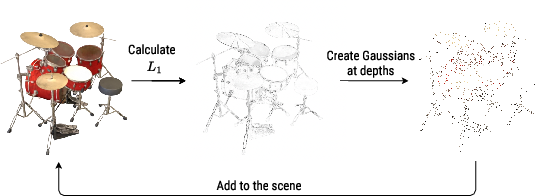

ConeGS introduces a densification regime built around image-space photometric error and proxy geometry from an auxiliary Instant Neural Graphics Primitives (iNGP) depth field. During optimization, high-error pixels are sampled, back-projected via the iNGP-predicted depth for precise 3D primitive placement, and the new Gaussians are sized according to the projected diameter of the corresponding pixel's viewing cone.

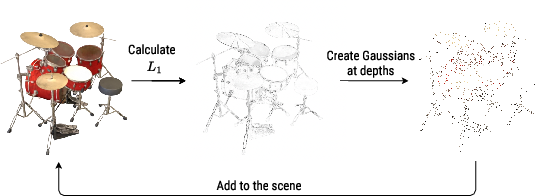

Figure 2: Error-guided densification: pixels with large L1 error are sampled, and new Gaussians are placed at iNGP-predicted depths along their rays, sized to match the pixel footprint.

Initialization

Instead of relying on Structure-from-Motion (SfM) points or a random point cloud, ConeGS trains a shallow iNGP briefly on the input images and uses its accurate per-pixel depth predictions to initialize the Gaussians. Each pixel in a subset of the training images is mapped to a 3D point along its camera ray at the iNGP median depth, giving broader, less biased geometric coverage that does not depend on the quality or density of an SfM reconstruction. The initial Gaussian scales use average k-NN distances, as in standard 3DGS, but subsequent densification uses a fundamentally different scaling criterion.
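The initialization step can be sketched as follows. This is a minimal NumPy illustration under stated assumptions, not the authors' code: the ray-marching samples and weights are toy stand-ins for iNGP output, "median depth" is taken to be the ray distance where accumulated rendering weight first reaches 0.5, the camera is an OpenCV-style pinhole, and the helper names (`median_depth`, `backproject`, `knn_mean_distance`) are hypothetical. The k-NN scale is similar in spirit to the standard 3DGS heuristic but averages plain distances with a brute-force search.

```python
import numpy as np

def median_depth(t_vals, weights):
    """Ray distance at which accumulated volume-rendering weight first reaches 0.5.

    t_vals, weights: (..., S) sample distances and rendering weights per ray.
    If a ray's weights never reach 0.5, this returns its first sample.
    """
    cdf = np.cumsum(weights, axis=-1)
    idx = np.argmax(cdf >= 0.5, axis=-1)                 # first sample at/after the median
    return np.take_along_axis(t_vals, idx[..., None], axis=-1)[..., 0]

def backproject(K, c2w, us, vs, dist):
    """Place one point per pixel at distance `dist` along its unit-length ray.

    Assumes a pinhole camera (intrinsics K) and an OpenCV-style 4x4
    camera-to-world matrix c2w (+z forward); pixel centres sit at +0.5.
    """
    pix = np.stack([us + 0.5, vs + 0.5, np.ones(len(us))], axis=-1)  # homogeneous pixels
    dirs = pix @ np.linalg.inv(K).T @ c2w[:3, :3].T                 # world-space directions
    dirs /= np.linalg.norm(dirs, axis=-1, keepdims=True)
    return c2w[:3, 3] + dist[:, None] * dirs

def knn_mean_distance(points, k=3):
    """Mean distance to the k nearest neighbours (brute force; needs more than k points)."""
    dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)                       # exclude each point itself
    return np.sort(dist, axis=1)[:, :k].mean(axis=1)

# Toy usage with stand-in values (not real iNGP output).
K = np.array([[100.0, 0.0, 32.0], [0.0, 100.0, 32.0], [0.0, 0.0, 1.0]])
c2w = np.eye(4)
us, vs = np.array([0, 10, 31, 63]), np.array([0, 20, 31, 63])
t_vals = np.tile(np.linspace(1.0, 4.0, 4), (4, 1))      # 4 samples per ray: 1, 2, 3, 4
weights = np.array([[0.1, 0.3, 0.4, 0.2]] * 4)
dist = median_depth(t_vals, weights)                     # -> 3.0 for every ray
points = backproject(K, c2w, us, vs, dist)
log_scales = np.log(knn_mean_distance(points))          # 3DGS optimizes log-scales
```

A real pipeline would replace the brute-force neighbour search with a spatial data structure, since the pairwise distance matrix grows quadratically with the number of points.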

Error-Guided Densification

During optimization, the current rendered image is compared with the ground truth using a per-pixel L1 loss. Pixels are sampled multinomially with probability proportional to their normalized error, concentrating densification where limited capacity degrades reconstruction the most. For each sampled pixel, a new Gaussian is placed at the iNGP-inferred median depth along the corresponding camera ray. Accumulation and pruning are performed at fixed iteration intervals to control the growth rate and prevent unnecessary proliferation of primitives.
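The sampling and placement step can be sketched in NumPy as below. This is an illustrative sketch, not the paper's implementation: it assumes sampling without replacement (so no two new Gaussians share a pixel in one round), a uniform fallback when the render is perfect, a proxy depth map storing distance along each ray, and a pinhole camera. The function names and the toy inputs are hypothetical.

```python
import numpy as np

def sample_error_pixels(rendered, gt, n_samples, rng):
    """Sample distinct pixels with probability proportional to per-pixel L1 error.

    rendered, gt: (H, W, 3) float images. Returns integer (vs, us) coordinates.
    """
    err = np.abs(rendered - gt).mean(axis=-1)           # per-pixel L1 error, (H, W)
    total = err.sum()
    if total > 0:
        probs = err.ravel() / total                      # normalised error distribution
    else:
        probs = np.full(err.size, 1.0 / err.size)        # perfect render: fall back to uniform
    n = min(n_samples, np.count_nonzero(probs))          # cannot draw more distinct pixels than exist
    flat = rng.choice(probs.size, size=n, replace=False, p=probs)
    return np.unravel_index(flat, err.shape)

def spawn_positions(vs, us, depth_map, K, c2w):
    """Back-project sampled pixels to 3D using a proxy depth map (distance along the ray)."""
    pix = np.stack([us + 0.5, vs + 0.5, np.ones(len(us))], axis=-1)
    dirs = pix @ np.linalg.inv(K).T @ c2w[:3, :3].T
    dirs /= np.linalg.norm(dirs, axis=-1, keepdims=True)
    return c2w[:3, 3] + depth_map[vs, us][:, None] * dirs

# Toy usage: only one pixel is wrong, so it is the only one that can be sampled.
rng = np.random.default_rng(0)
rendered = np.zeros((4, 4, 3))
gt = np.zeros((4, 4, 3))
gt[1, 2] = 1.0
vs, us = sample_error_pixels(rendered, gt, n_samples=5, rng=rng)  # -> (array([1]), array([2]))
depth_map = np.full((4, 4), 2.0)                                   # stand-in for iNGP depth
K = np.array([[4.0, 0.0, 2.0], [0.0, 4.0, 2.0], [0.0, 0.0, 1.0]])
new_xyz = spawn_positions(vs, us, depth_map, K, np.eye(4))
```

Sampling proportionally to error, rather than thresholding it, still lets moderately wrong regions receive some new primitives while spending most of the budget on the worst pixels.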

Pixel-Cone-Aligned Scaling

For each densified primitive, ConeGS sets the scale from the radius of the pixel's viewing cone at the selected depth, adopting the cone parameterization Mip-NeRF uses for anti-aliasing, so that the primitive's image-space projection closely matches the footprint of the originating pixel. This data-driven isotropic scaling avoids both the excessive overlap that slows convergence and the under-coverage that leads to unconstrained outliers.
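A minimal sketch of cone-aligned sizing is shown below. It assumes the Mip-NeRF convention of scaling a pixel's width by 2/sqrt(12) to obtain the cone radius, takes camera-space depth z (for an off-axis pixel, distance along the ray is larger than z), and assumes each axis of the new isotropic Gaussian is set directly to that radius; whether ConeGS uses exactly this constant or multiple is an assumption, and the helper names are hypothetical. The last function checks the key property: the projected footprint is the same fraction of a pixel at every depth.

```python
import numpy as np

# Mip-NeRF converts a pixel's width into a cone radius with this factor.
MIPNERF_RADIUS_FACTOR = 2.0 / np.sqrt(12.0)

def cone_radius(z_depth, focal_px):
    """Radius of a pixel's viewing cone at camera-space depth z.

    One pixel spans 1/focal_px world units at z = 1, so its footprint
    grows linearly with depth.
    """
    return MIPNERF_RADIUS_FACTOR * np.asarray(z_depth, dtype=float) / focal_px

def cone_log_scales(z_depth, focal_px):
    """Isotropic log-scales for new Gaussians (assumption: per-axis scale = cone radius)."""
    r = cone_radius(z_depth, focal_px)
    return np.repeat(np.log(r)[..., None], 3, axis=-1)

def projected_radius_px(radius, z_depth, focal_px):
    """Pinhole projection of a world-space radius back into pixels."""
    return focal_px * radius / z_depth

depths = np.array([0.5, 2.0, 10.0])
focal = 500.0
radii = cone_radius(depths, focal)
log_s = cone_log_scales(depths, focal)
footprint = projected_radius_px(radii, depths, focal)  # same for every depth, about 0.577 px
```

Because the radius grows linearly with depth while projection divides by depth, distant and nearby spawns both cover roughly one pixel on screen, which is the property the k-NN heuristic does not guarantee.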

Opacity Penalty, Pruning, and Budgeting

Low-opacity Gaussians are pruned periodically, with a pre-activation L1 penalty encouraging aggressive sparsification without destabilizing training. The total primitive count is regulated by either a strict upper bound (fixed-budget optimization) or a growth-rate-based controller proportional to scene complexity. Importantly, the pipeline remains compatible with further 3DGS enhancements, such as codebook quantization or alternative volumetric rendering schemes.
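The pruning and budgeting logic can be sketched generically as below. This is not the paper's controller: the pruning threshold of 0.005 is the default used in the reference 3DGS training code, the "keep the most opaque" rule for enforcing a hard cap and the multiplicative growth schedule in `densify_quota` are hypothetical stand-ins, and the pre-activation opacity penalty itself is not modeled.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def prune_and_cap(opacity_logits, min_opacity=0.005, budget=None):
    """Indices of Gaussians to keep after opacity pruning and an optional hard cap.

    opacity_logits are pre-activation parameters; activated opacity = sigmoid(logit).
    """
    opacity = sigmoid(np.asarray(opacity_logits, dtype=float))
    keep = np.flatnonzero(opacity >= min_opacity)        # 1) drop near-transparent Gaussians
    if budget is not None and keep.size > budget:
        order = np.argsort(opacity[keep])[::-1]          # 2) most opaque first
        keep = np.sort(keep[order[:budget]])
    return keep

def densify_quota(n_current, growth_rate, budget=None):
    """Hypothetical schedule: grow by `growth_rate` per round, never past `budget`."""
    target = int(np.ceil(n_current * (1.0 + growth_rate)))
    if budget is not None:
        target = min(target, budget)
    return max(target - n_current, 0)

logits = np.array([-10.0, 0.0, 2.0, 5.0, -1.0])
kept = prune_and_cap(logits, budget=2)                  # -> array([2, 3])
```

In a fixed-budget run, `densify_quota` returns zero once the cap is reached, so new error-guided samples can only enter after pruning frees capacity.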

Empirical Evaluation and Analysis

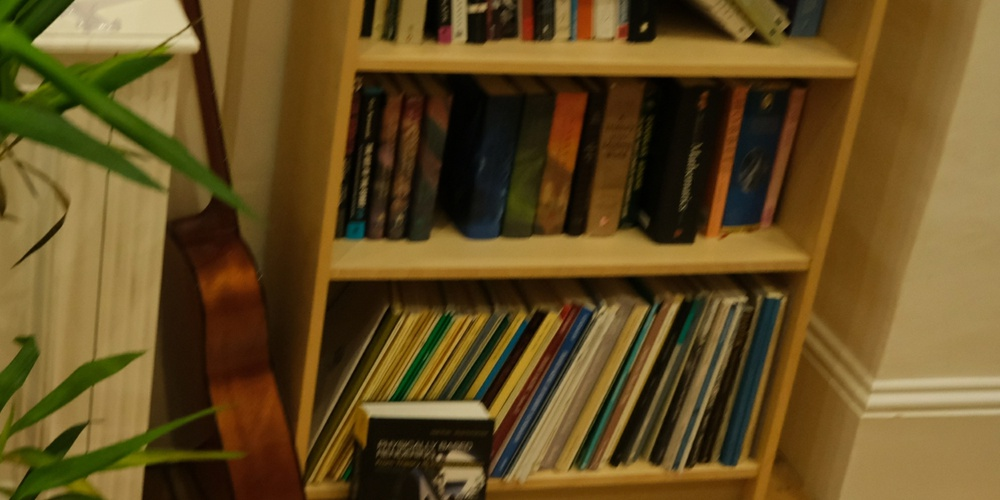

ConeGS is benchmarked on Mip-NeRF360, OMMO, and additional public datasets, with reconstructions evaluated for PSNR, SSIM, LPIPS, and FPS. Across Gaussian budgets, ConeGS is consistently superior or competitive, and it reaches a given quality level with markedly fewer primitives.

Notably, under constrained budgets (e.g., 100k or 500k Gaussians), ConeGS delivers up to a 0.6 dB PSNR gain and 20% higher rendering speed compared with cloning-based and MCMC-based densification, while also outperforming non-densification strategies such as EDGS. At higher budgets the gains diminish, supporting the hypothesis that precise error-guided placement is most valuable when primitive capacity is at a premium.
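To put these figures in perspective, PSNR is logarithmic in mean squared error, so a fixed dB gain corresponds to a fixed relative reduction in MSE regardless of the baseline. The arithmetic below uses only the standard PSNR definition and the two headline numbers; it is a back-of-the-envelope conversion, not an additional result.

```latex
\mathrm{PSNR} = 10\log_{10}\frac{\mathrm{MAX}^2}{\mathrm{MSE}}
\;\Longrightarrow\;
\Delta\mathrm{PSNR} = 10\log_{10}\frac{\mathrm{MSE}_{\text{base}}}{\mathrm{MSE}_{\text{ConeGS}}}

\Delta\mathrm{PSNR} = 0.6\,\text{dB}
\;\Longrightarrow\;
\frac{\mathrm{MSE}_{\text{ConeGS}}}{\mathrm{MSE}_{\text{base}}} = 10^{-0.06} \approx 0.871

\frac{\mathrm{FPS}_{\text{ConeGS}}}{\mathrm{FPS}_{\text{base}}} = 1.2
\;\Longrightarrow\;
\frac{t_{\text{frame, ConeGS}}}{t_{\text{frame, base}}} = \frac{1}{1.2} \approx 0.833
```

A 0.6 dB gain therefore means roughly 13% lower mean squared error, and 20% higher FPS means roughly 17% less time per frame, assuming both are measured against the same baseline at a matched budget.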

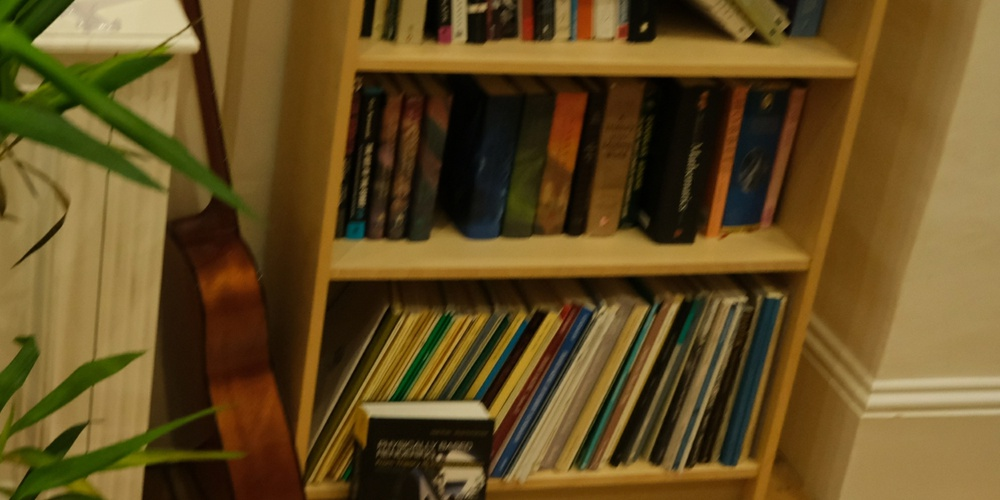

Figure 3: Qualitative comparison on diverse scenes: ConeGS demonstrates sharper details and fewer artifacts under matched primitive constraints compared to EDGS and MCMC baselines.

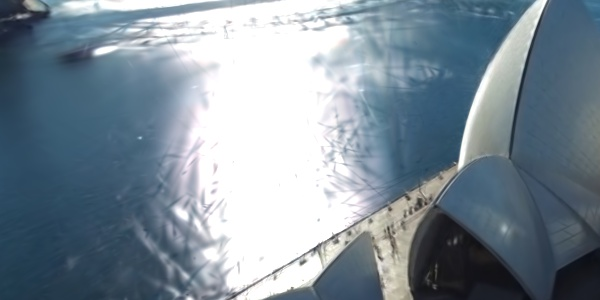

Qualitative inspection shows visibly improved coverage of fine and isolated geometric structures, with less ghosting and fewer background floaters. Against state-of-the-art methods that rely on strong initialization, ConeGS achieves equivalent or superior fidelity with substantially fewer primitives and lower computational cost.

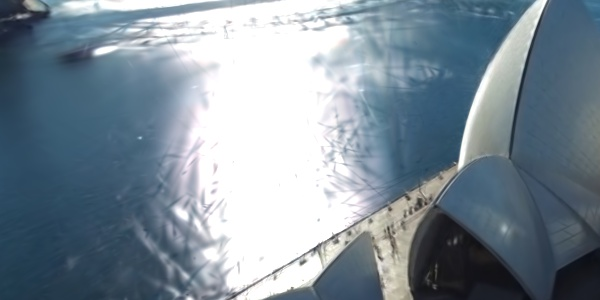

Figure 4: Comparison on challenging scenes with Mini-Splatting2: ConeGS preserves detail while using fewer, better-placed primitives.

Figure 5: Evaluation with shrunk Gaussians: ConeGS maintains structure and appearance under scale compression, outperforming MCMC on high-frequency and boundary regions.

Ablations and Analysis of Design Choices

Extensive ablation studies reveal that:

- The use of iNGP initialization alone provides minimal gain unless combined with error-guided densification.

- k-NN or 3DGS-depth-based scaling for new primitives is inferior to pixel-cone-aligned scale assignment.

- Uniform image-space sampling for densification (as opposed to error-guided) leads to lower-fidelity reconstructions, particularly impairing high-frequency detail.

- The pre-activation opacity penalty significantly outperforms post-activation or periodic resets, yielding more stable pruning and consistent primitive growth.

- Even with aggressive pruning and fixed budgets as low as 100k, ConeGS avoids the spatial bias and degeneracy associated with cloning or splitting-based densification.

Figure 6: Initialization methods visualized: iNGP-based initialization ensures broader scene coverage and reduced bias compared with random or SfM-based seeds.

Theoretical Perspective and Broader Implications

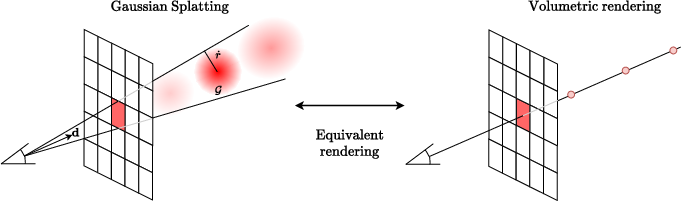

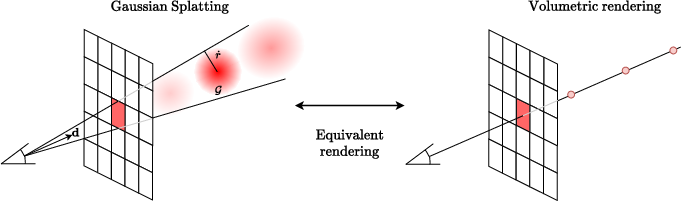

The ConeGS approach makes explicit the connection between NeRF-style volumetric rendering and 3DGS rasterization: error-guided, per-pixel placement regularized by a radiance field converges toward the equivalent implicit model, especially when initialization and densification operate on cone-projected footprints.

Figure 7: Schematic illustration of the equivalence between NeRF ray marching optimization and 3DGS rasterization with pixel-cone-aligned primitive spawning.

By aligning the densification policy with photometric objectives, ConeGS separates scene expressiveness from geometric priors, paving the way for dynamic, adaptive, and weakly supervised scene reconstruction in scenarios where initial geometry is noisy, sparse, or absent. It also points toward learned densification controllers, automatic budget tuning, and adoption in streaming or online SLAM systems, where real-time adaptive fidelity and sparsity are critical.

Limitations and Future Directions

ConeGS exhibits clear advantages on conventional datasets with reliable camera poses and dense viewpoints. However, its dependency on proxy depth estimation limits transferability to scenes with inaccurate geometry priors, strong occlusion, or significant out-of-distribution sparsity. Moreover, at extremely high primitive budgets, the benefits of error-driven placement are naturally attenuated, with rendering speed emerging as the primary differentiator. These results indicate a need for future work on more robust proxy estimation and explicit uncertainty modeling in densification, as well as hierarchical multi-scale budget controllers that can adapt rendering complexity in real time.

Conclusion

ConeGS demonstrates a methodologically robust and practically efficient solution to the limitations of cloning-based 3DGS densification. Through rigorous error-guided, cone-aligned placement, it achieves competitive or superior reconstruction quality with reduced primitive counts and higher rendering efficiency, especially in budget-limited settings. The explicit decoupling of densification from prior geometry enables more stable, interpretable, and adaptable scene optimization, suggesting applicability in both offline high-fidelity modeling and real-time adaptive systems for graphics and vision.