- The paper introduces a synthetic-data-driven Mask R-CNN approach to automatically segment CME envelopes in coronagraph images.

- The model achieves a median IoU of 0.98 on synthetic data and a median IoU of 0.77 against expert-drawn masks on 115 real coronagraph images, and it transfers to instruments outside the training set.

- The method offers scalable, automated CME detection with implications for improved space weather monitoring and potential extension to 3D models.

Automatic Detection of Coronal Mass Ejections Using Synthetically-Trained Mask R-CNN

Introduction and Context

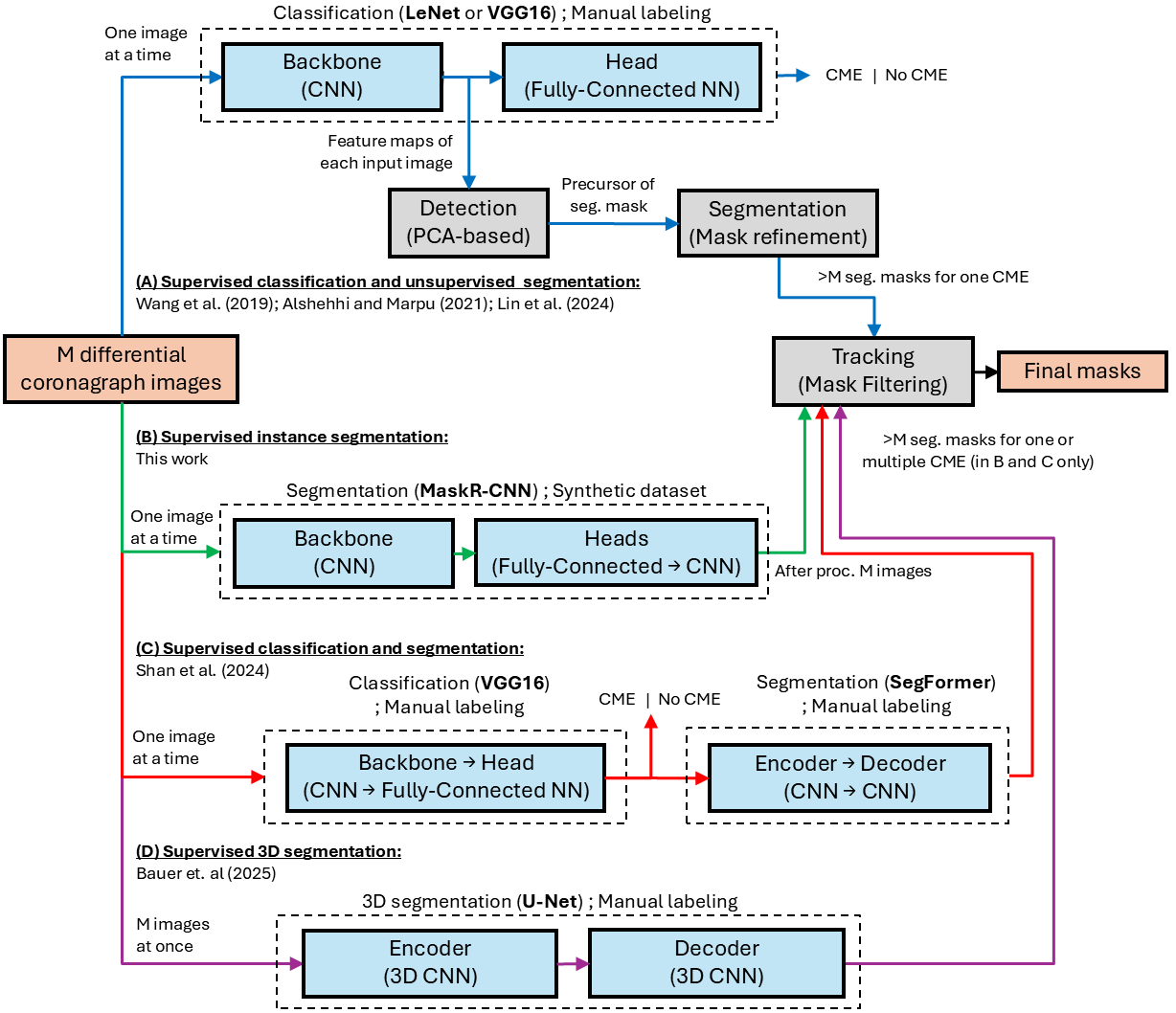

Coronal Mass Ejections (CMEs) are fundamental drivers of heliospheric variability and geoeffective space weather phenomena, characterized by the rapid expulsion of plasma and magnetic field from the solar corona into interplanetary space. Accurate identification of CME morphology and kinematics in coronagraph data is essential for both scientific understanding and practical forecasting. Manual catalogs, though able to incorporate expert judgment, are limited by subjectivity and inefficiency, while prior automated and ML-based approaches face significant shortcomings in generalizability and segmentation fidelity.

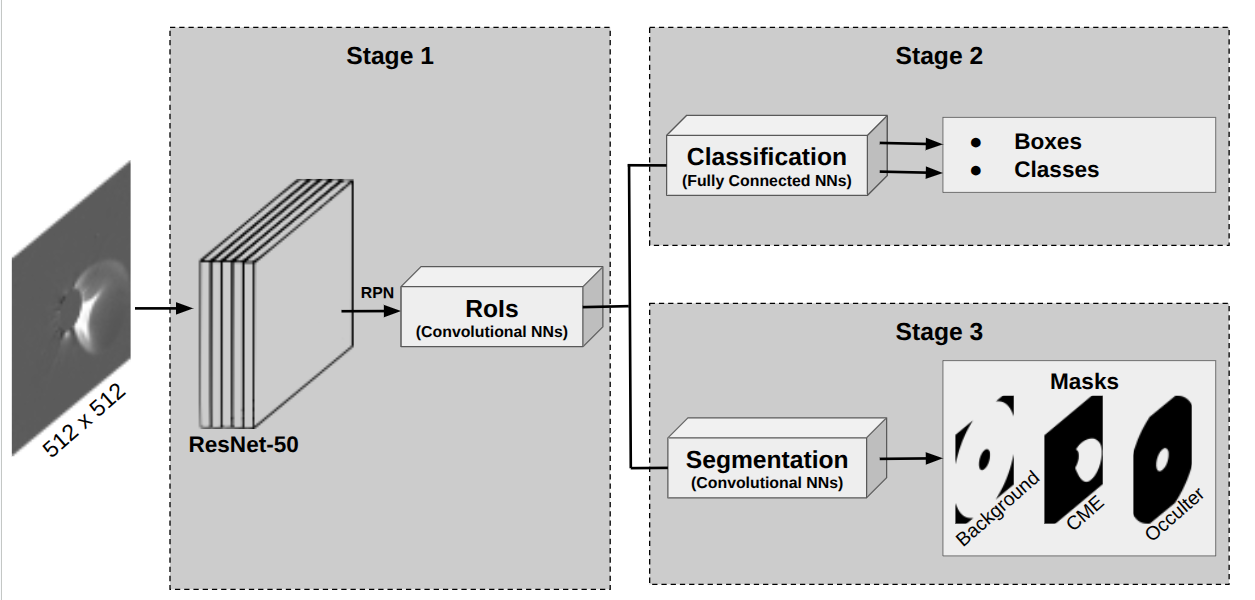

This paper addresses the problem by applying Mask R-CNN, a supervised deep convolutional instance segmentation model, to CME shell detection in single-differenced coronagraph images. The main novelty is that the network is trained on a synthetic dataset generated with the empirical Graduated Cylindrical Shell (GCS) geometric CME model, rather than on scarce and subjective human annotations.

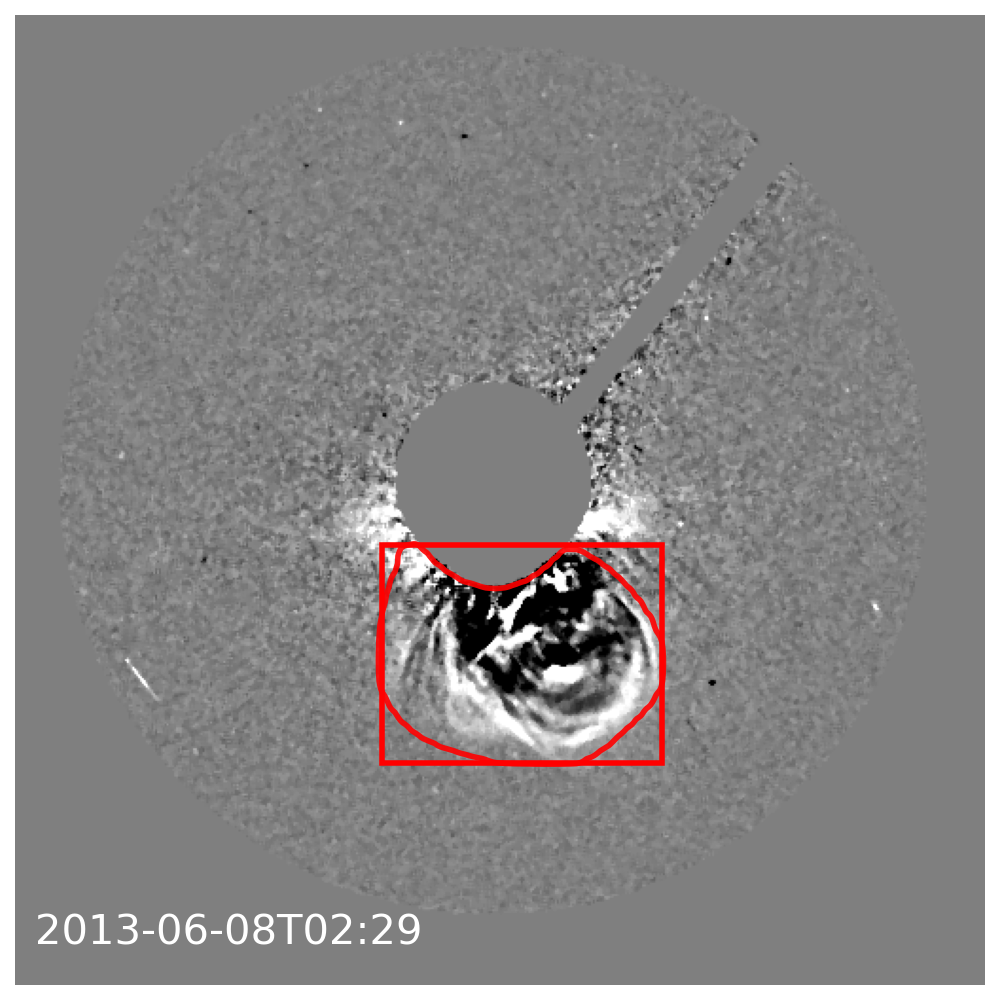

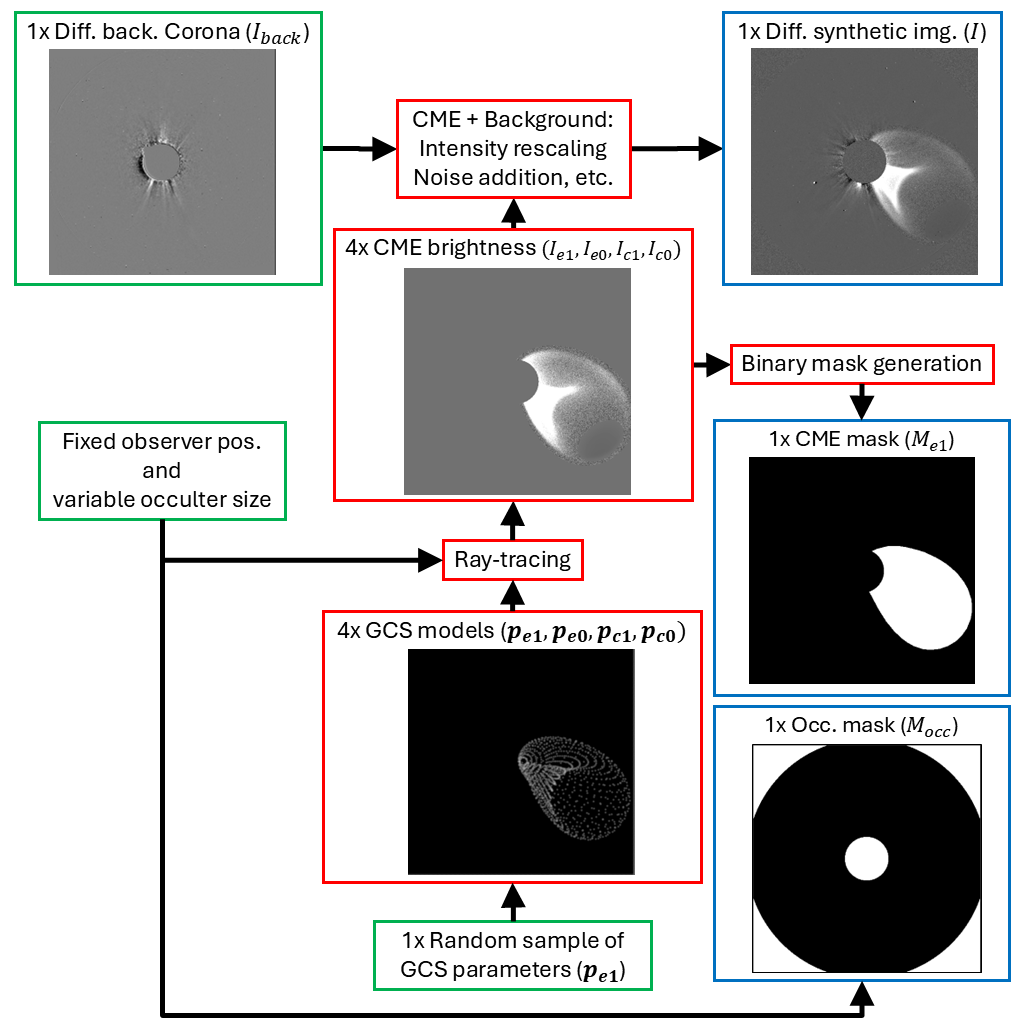

Figure 1: Overview of ML-based CME segmentation approaches; this work utilizes instance segmentation (B, green arrows) with synthetically generated data.

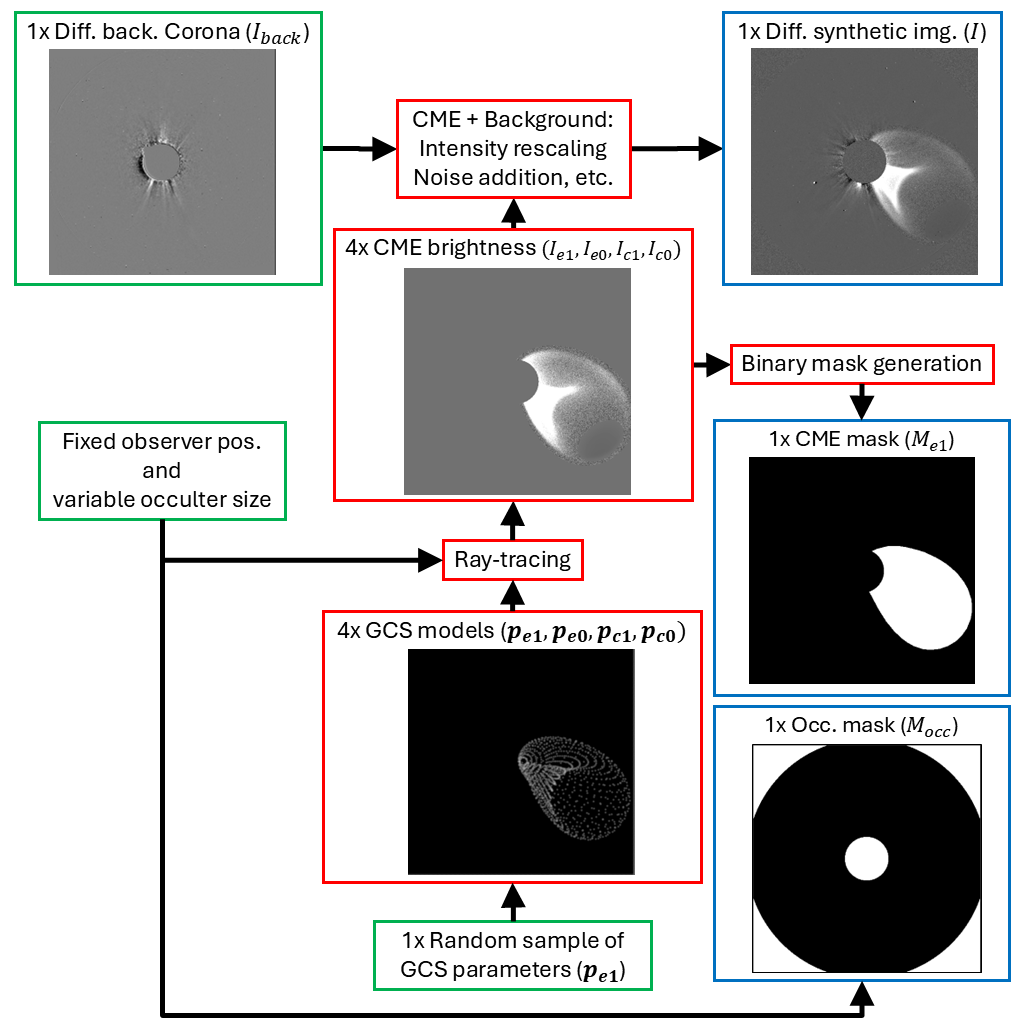

Synthetic Dataset Generation

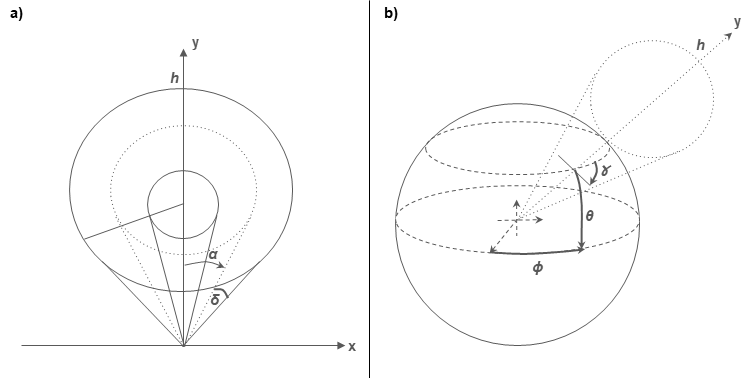

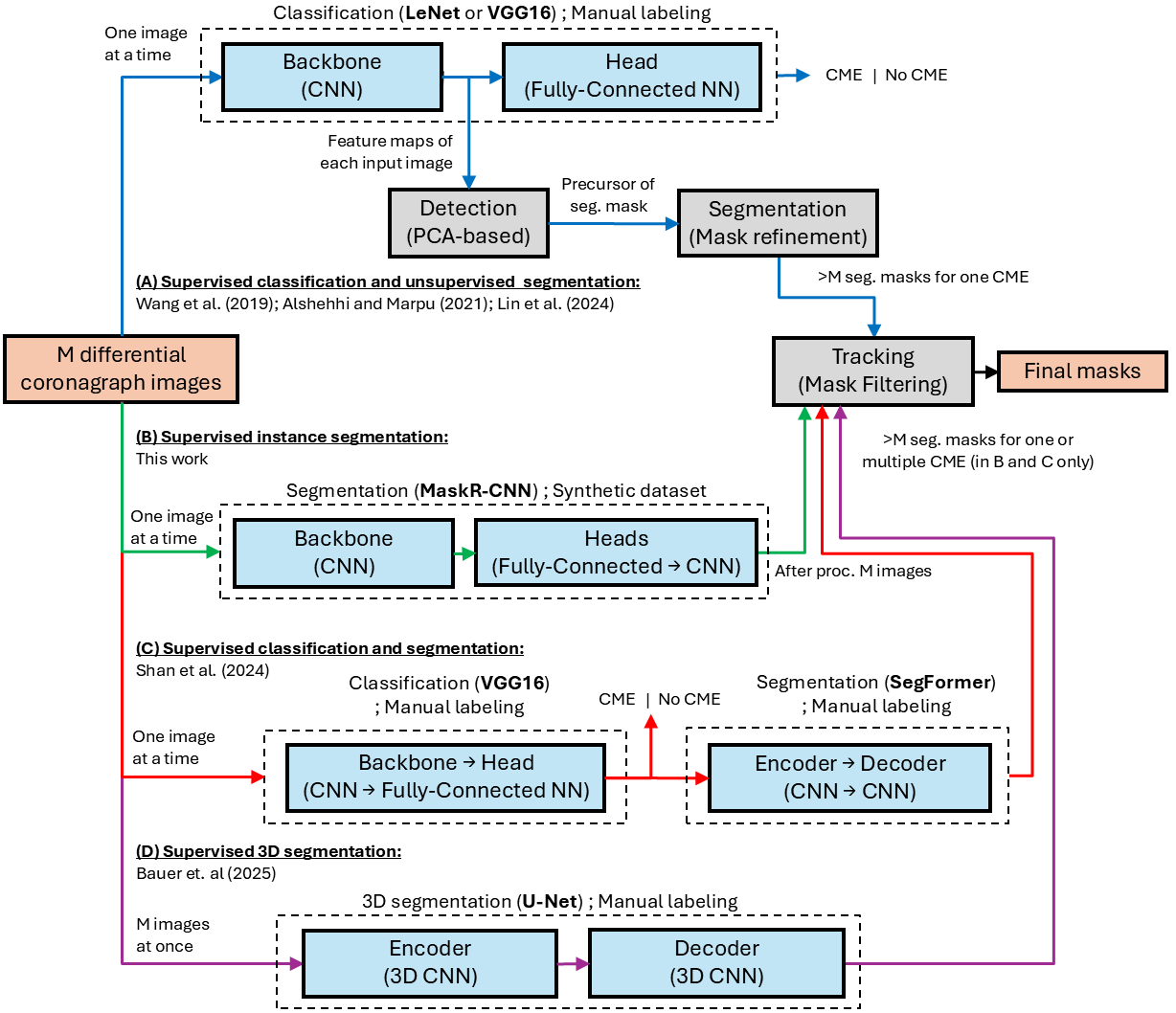

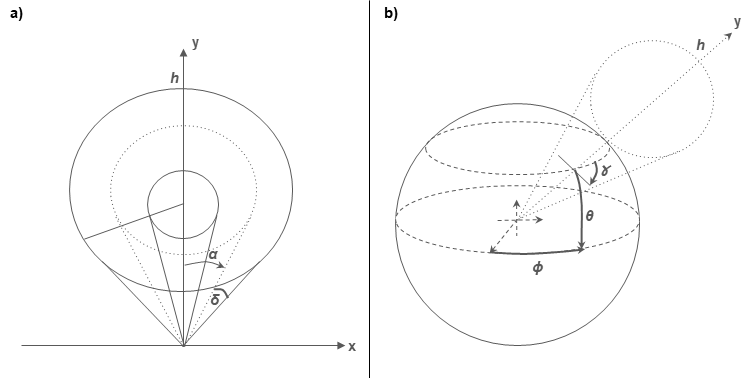

A critical contribution is the construction of a large, diverse synthetic training dataset that provides pixel-level ground truth for CME segmentation, overcoming the chronic scarcity of labeled solar data. Each synthetic image is built by superposing a ray-traced GCS model CME onto a real coronagraph background that has been manually verified to be CME-free. The GCS parameters are varied broadly: source region longitude (ϕ), latitude (θ), tilt (γ), apex height (h), aspect ratio (κ), and leg separation (α) (see Figure 2).

Figure 2: GCS geometric model and its six defining parameters for CME morphology.

Additive Poisson noise, variability in the occulter, and random scaling further augment realism. Segmentation masks for both the CME envelope and the occulter are computed directly from the model geometry. The final corpus exceeds 10⁵ image-mask pairs, enabling comprehensive supervised training.

Figure 3: Empirical synthetic dataset pipeline, illustrating the combination of real backgrounds with ray-traced model CMEs and derived masks.
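The compositing step described above can be illustrated with a minimal NumPy sketch. It is not the authors' pipeline: the function name `make_synthetic_pair`, the circular occulter, the scaling range, and the noise level are all assumptions chosen for illustration, and the ray-traced GCS brightness map is simply passed in as an array rather than computed.

```python
import numpy as np

def make_synthetic_pair(background, cme_brightness, occulter_radius_px, rng,
                        scale_range=(0.5, 1.5), noise_level=5.0):
    """Superpose a model CME on a CME-free background and derive its masks.

    All parameter names and default values are hypothetical; the source only
    states that real backgrounds, ray-traced GCS CMEs, random scaling, and
    Poisson noise are combined.
    """
    h, w = background.shape
    yy, xx = np.mgrid[0:h, 0:w]
    # Assumption: a circular occulter centered on the image.
    r = np.hypot(xx - (w - 1) / 2.0, yy - (h - 1) / 2.0)
    occulter_mask = r <= occulter_radius_px

    # Masks come from the model geometry: the CME mask is wherever the
    # model contributes brightness outside the occulter.
    cme_mask = (cme_brightness > 0) & ~occulter_mask

    # Random brightness scaling of the model CME.
    scale = rng.uniform(*scale_range)
    # Assumption: zero-mean additive noise drawn from a Poisson distribution.
    noise = rng.poisson(noise_level, size=background.shape) - noise_level

    image = background + scale * cme_brightness + noise
    image[occulter_mask] = 0.0  # blank the occulted region
    return image, {"cme": cme_mask, "occulter": occulter_mask}
```

In a real pipeline, `cme_brightness` would come from ray tracing the GCS shell for a sampled parameter set (ϕ, θ, γ, h, κ, α), and the occulter shape would vary per instrument.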

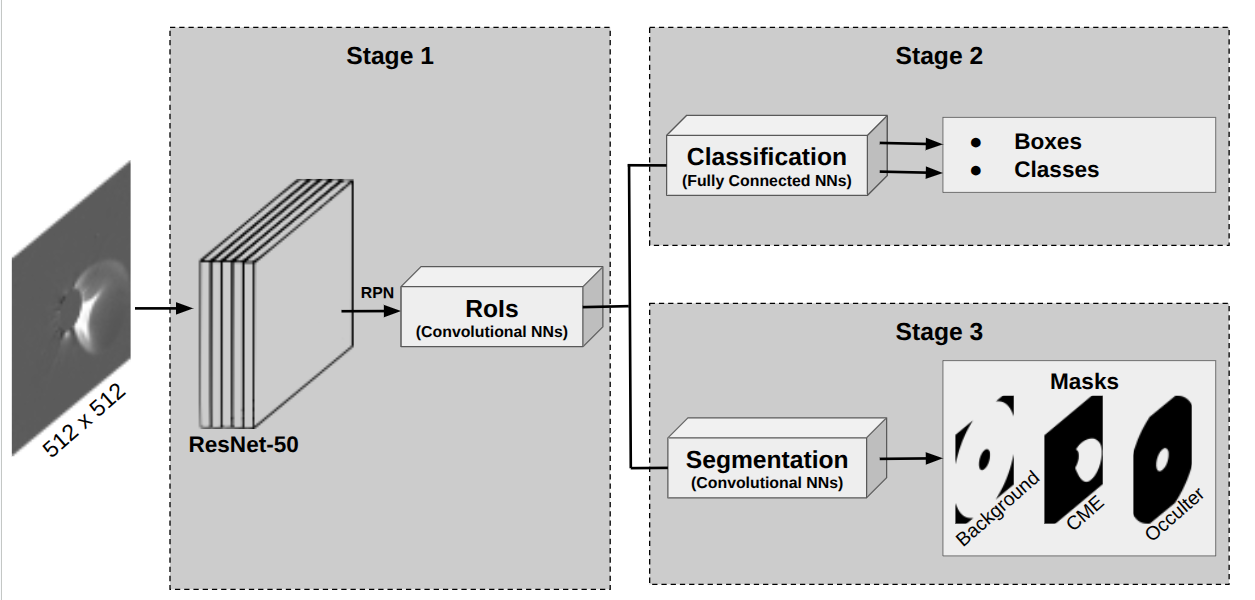

Mask R-CNN Architecture and Training

The instance segmentation framework is Mask R-CNN with a 50-layer ResNet backbone, initialized from COCO-pretrained weights and fine-tuned for this solar imaging task. For each detected bounding-box region, the model predicts a class label (CME, Occulter, or Background) and a segmentation mask. No temporal sequences are used as input, so the model must discriminate on morphology alone.

Figure 4: Schematic of the Mask R-CNN architecture adapted for CME instance segmentation.

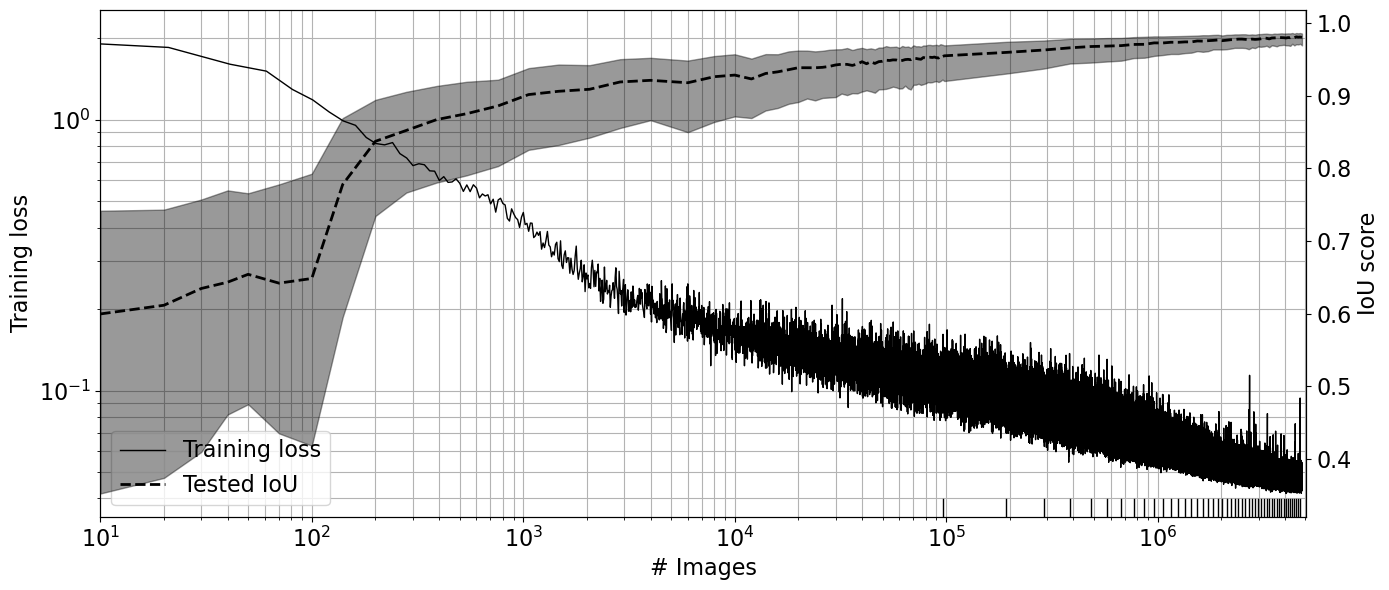

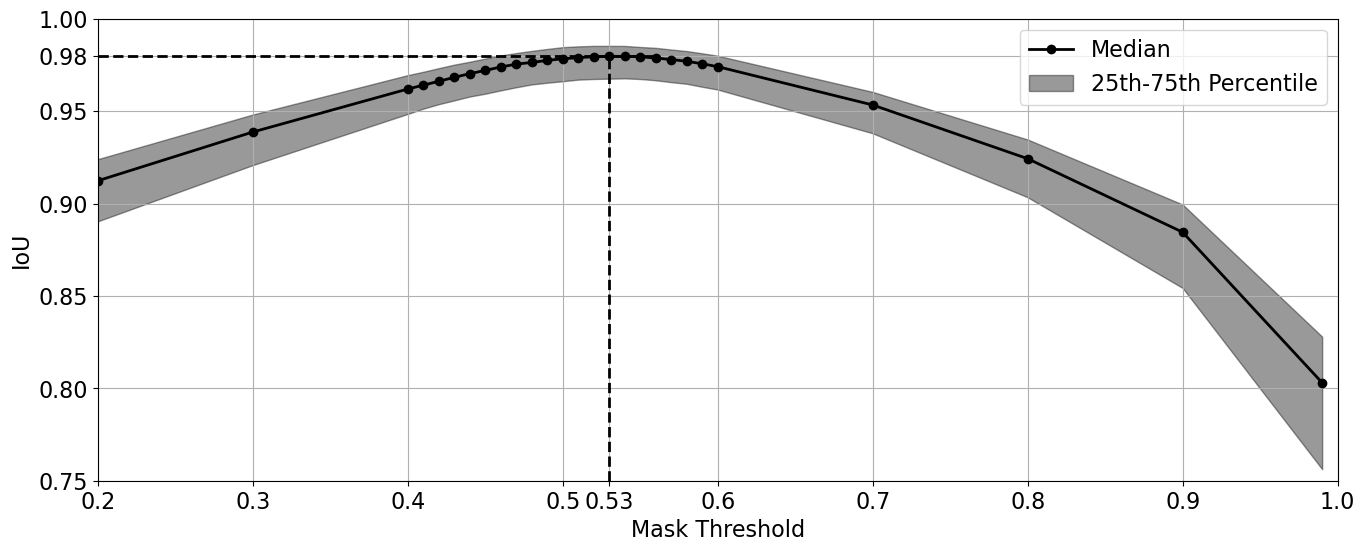

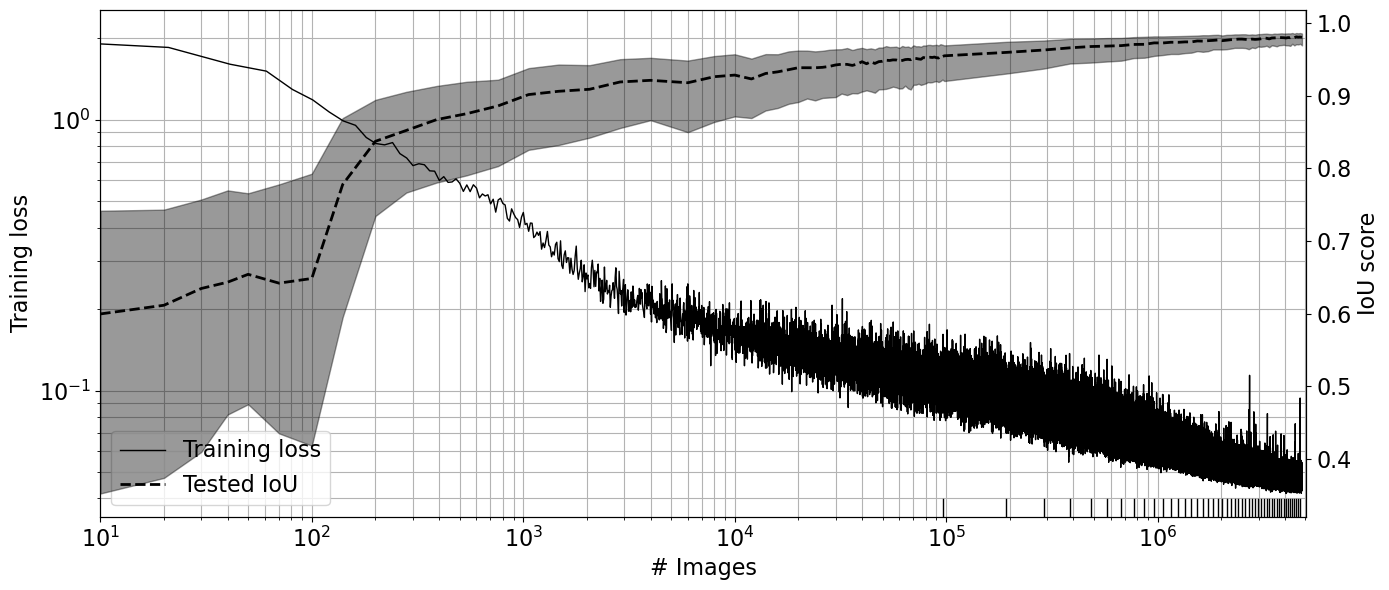

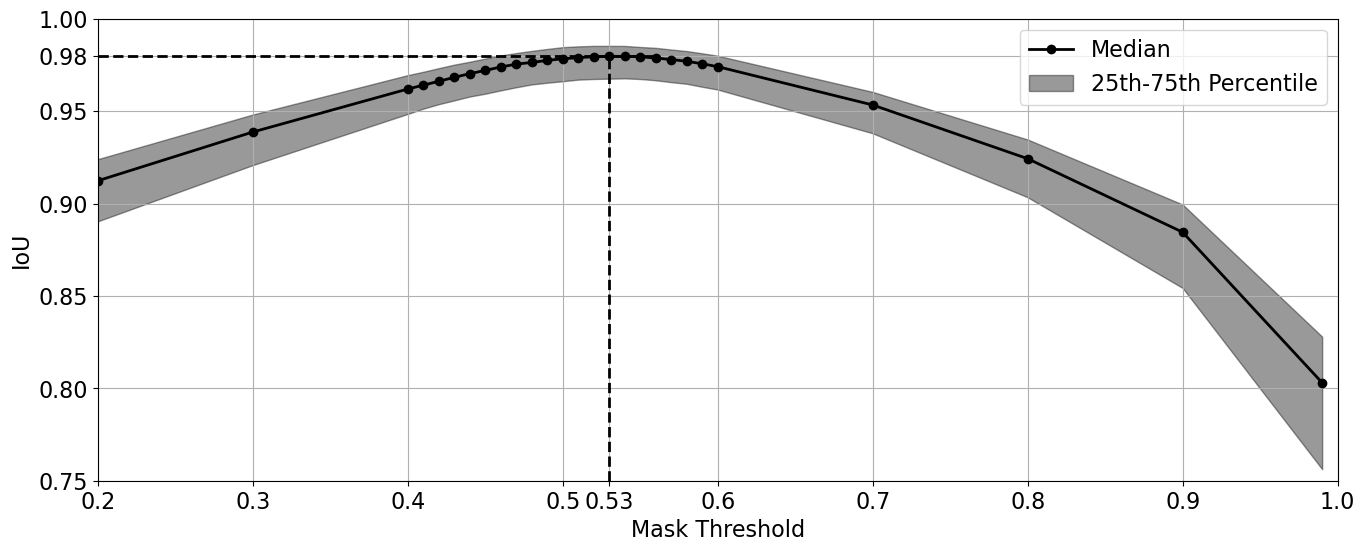

Training is performed on 1.13×10⁵ synthetic images over 50 epochs. During training, the mean validation Intersection over Union (IoU) rises rapidly and plateaus near 0.98 on the synthetic validation set. The mask threshold is tuned for this task (optimum at 0.53), a value described as lower than common benchmarks in computer vision, in order to accommodate the diffuse CME shell morphology.

Figure 5: Training progress showing decreasing loss and rising validation mean IoU, with narrow quartile bands, indicating strong generalization on the synthetic data.

Figure 6: Sensitivity of validation IoU to mask threshold; the optimal threshold maximizes overall overlap with ground truth masks.
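A threshold sweep of the kind summarized in Figure 6 can be sketched as follows. This is a minimal illustration under assumptions: the helper names `iou` and `best_threshold` are hypothetical, and the network's soft mask outputs are represented as plain arrays of per-pixel scores in [0, 1].

```python
import numpy as np

def iou(a, b):
    """Intersection over Union of two boolean masks."""
    a = np.asarray(a, dtype=bool)
    b = np.asarray(b, dtype=bool)
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 1.0  # convention: two empty masks agree perfectly
    return np.logical_and(a, b).sum() / union

def best_threshold(prob_masks, true_masks, thresholds):
    """Return the threshold that maximizes mean IoU, and that mean IoU.

    prob_masks: iterable of float arrays (soft masks in [0, 1])
    true_masks: iterable of boolean ground-truth masks
    """
    mean_ious = [
        np.mean([iou(p >= t, g) for p, g in zip(prob_masks, true_masks)])
        for t in thresholds
    ]
    i = int(np.argmax(mean_ious))
    return float(thresholds[i]), float(mean_ious[i])
```

For example, `best_threshold(probs, truths, np.linspace(0.1, 0.9, 81))` scans thresholds in steps of 0.01 over a validation set; the grid itself is an arbitrary choice.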

Evaluation on Synthetic and Real Observations

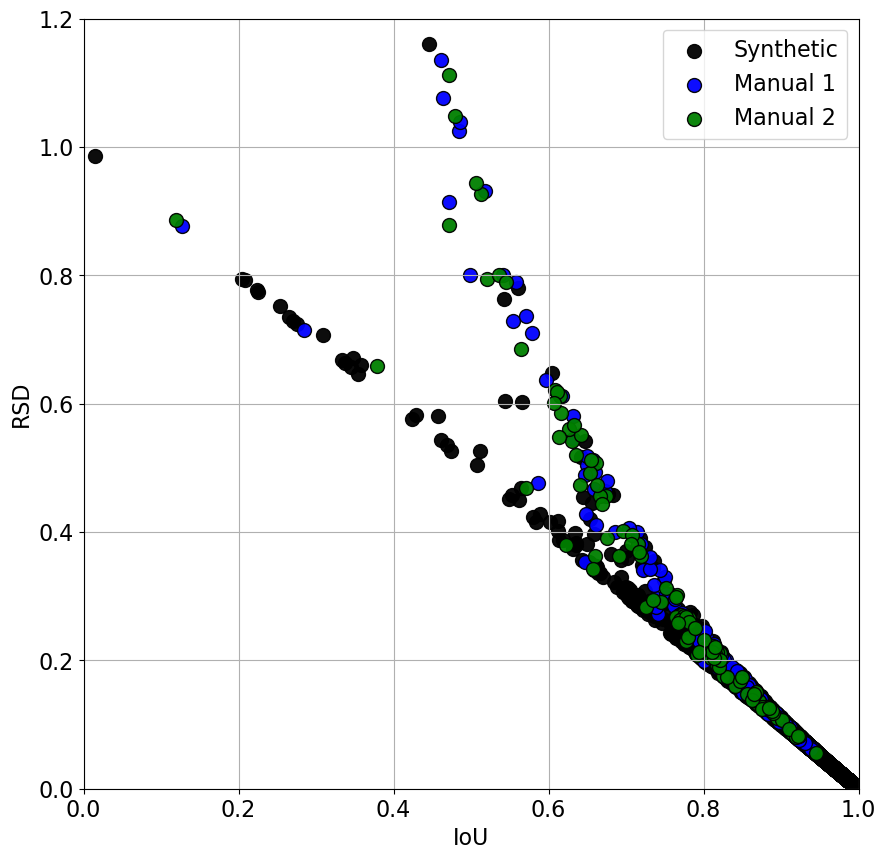

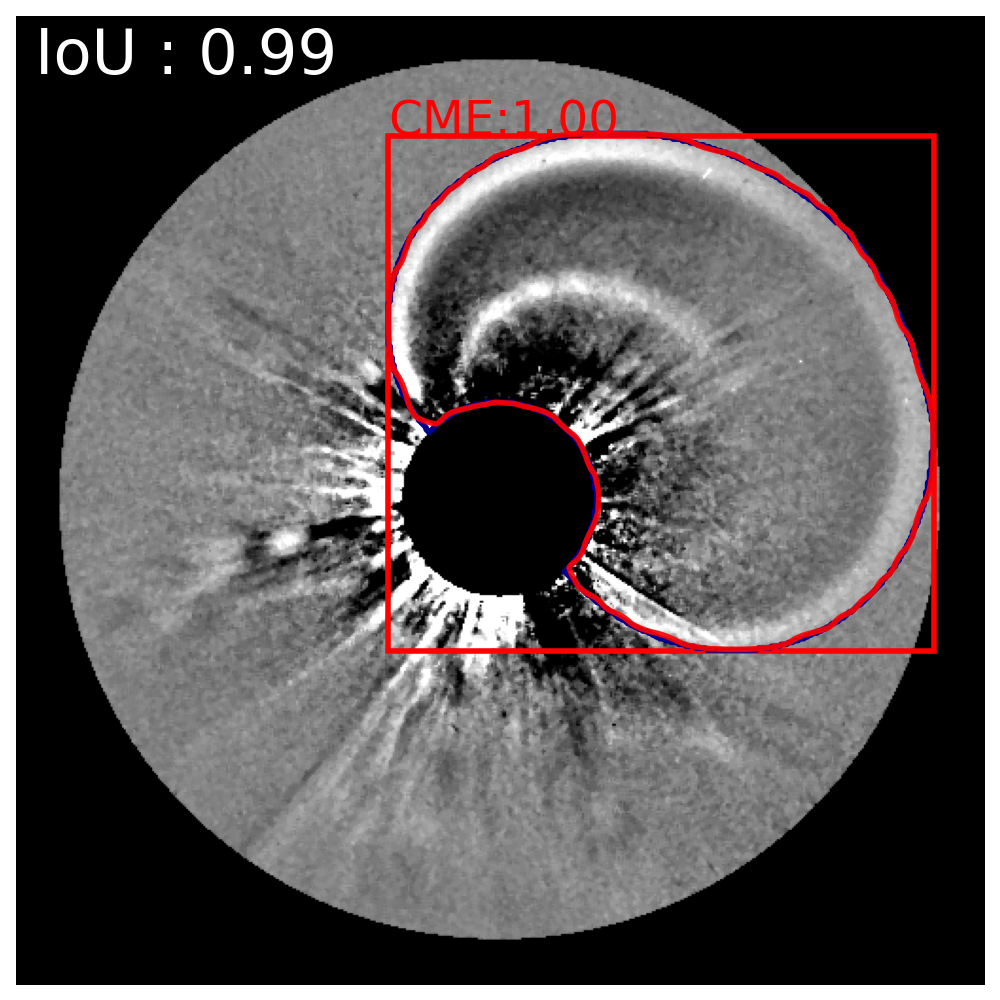

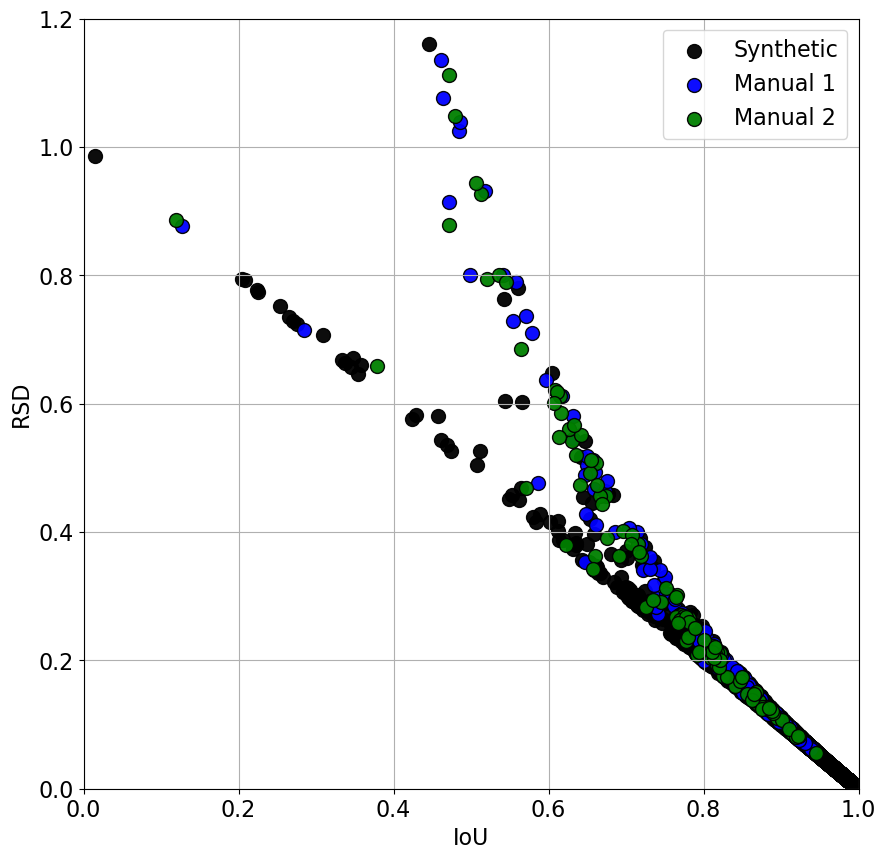

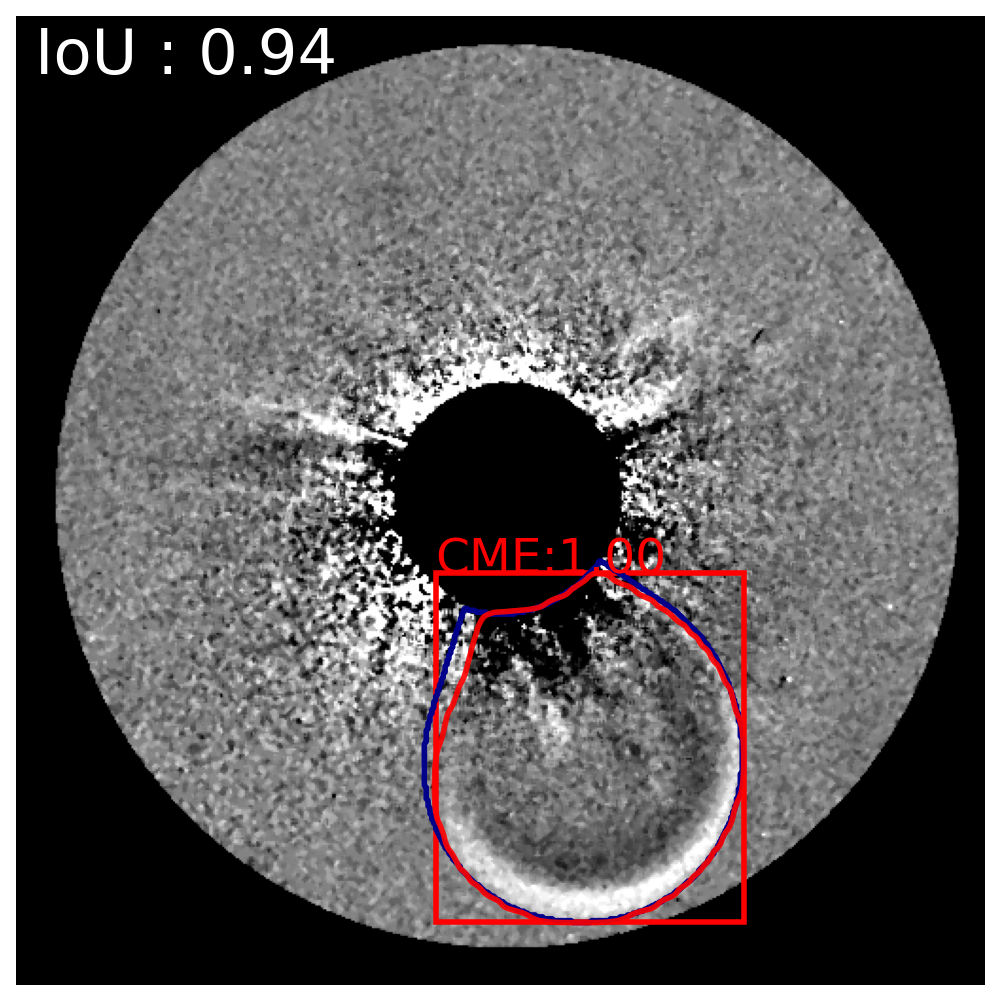

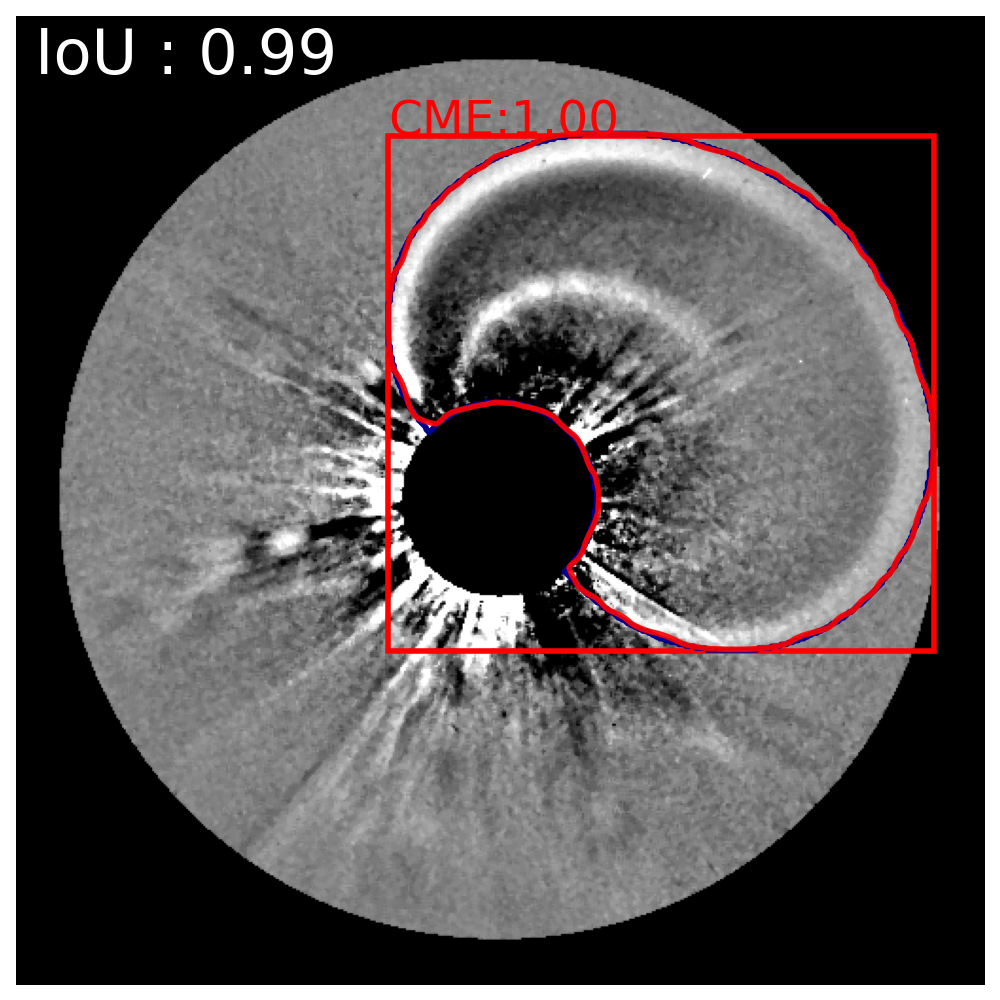

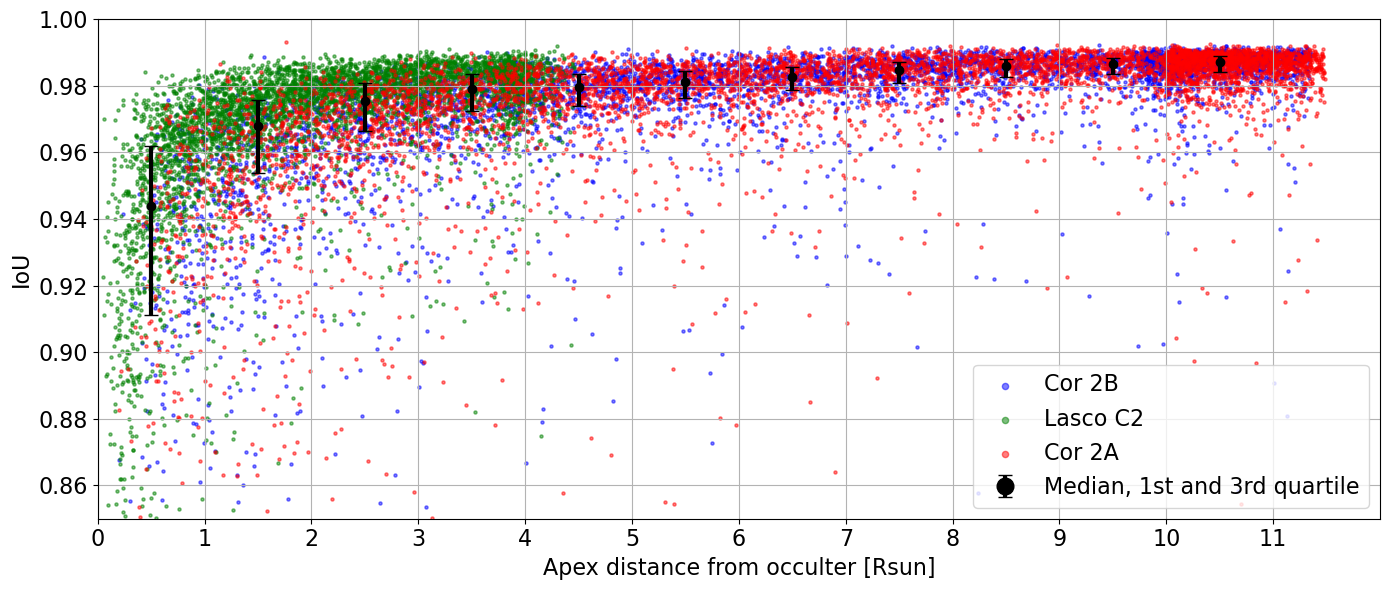

On synthetic validation data, where the true mask boundaries are known exactly, the model achieves a median IoU of 0.98 (RSD ≈ 2%), with 88% of events exceeding IoU = 0.95. The relation between IoU and Relative Symmetric Difference (RSD) is nearly linear in the well-performing regime, confirming reliable area recovery.

Figure 7: Relationship between RSD and IoU calculated for synthetic and manual-segmented validation images.
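The two metrics can be computed from a pair of binary masks as in the sketch below. The summary does not give the paper's exact RSD definition, so the normalization here is an assumption: the area of the symmetric difference divided by the area of the reference mask. (If the symmetric difference were instead normalized by the union, RSD would equal 1 − IoU exactly.)

```python
import numpy as np

def iou_and_rsd(pred, ref):
    """Return (IoU, RSD) for a predicted and a reference boolean mask.

    Assumed definition: RSD = |pred XOR ref| / |ref|.
    """
    pred = np.asarray(pred, dtype=bool)
    ref = np.asarray(ref, dtype=bool)
    ref_area = ref.sum()
    if ref_area == 0:
        raise ValueError("reference mask is empty")
    inter = np.logical_and(pred, ref).sum()
    union = np.logical_or(pred, ref).sum()
    sym_diff = union - inter  # pixels in exactly one of the two masks
    return inter / union, sym_diff / ref_area
```

Under this definition a near-perfect prediction has a union almost equal to the reference area, so RSD ≈ 1 − IoU, which is consistent with the reported pairing of IoU = 0.98 and RSD ≈ 2%.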

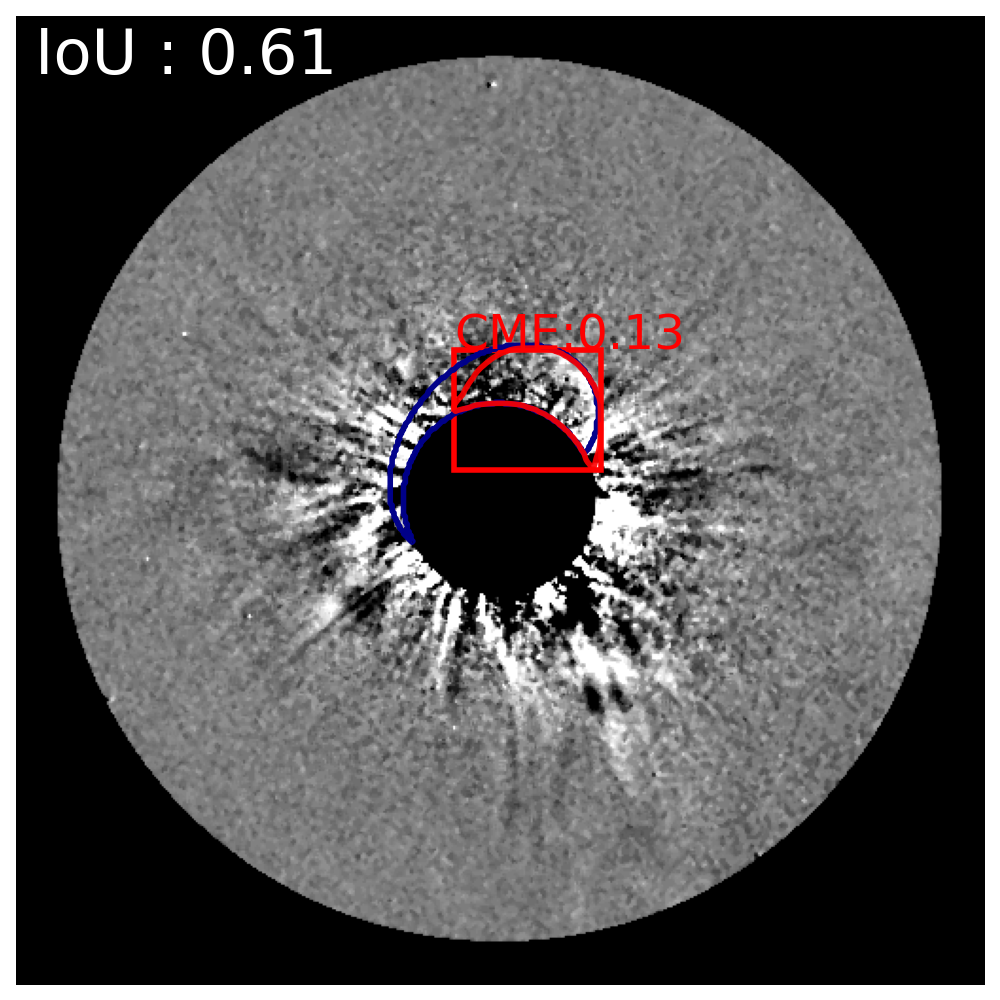

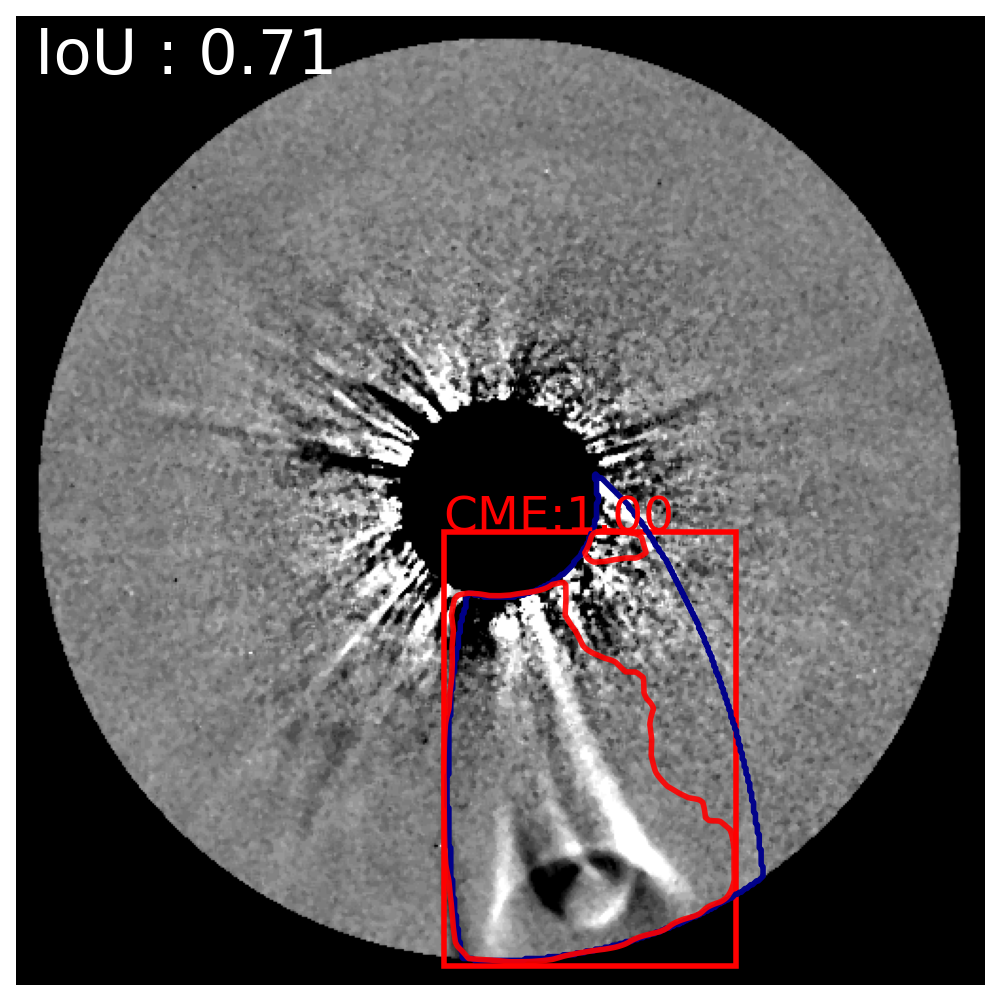

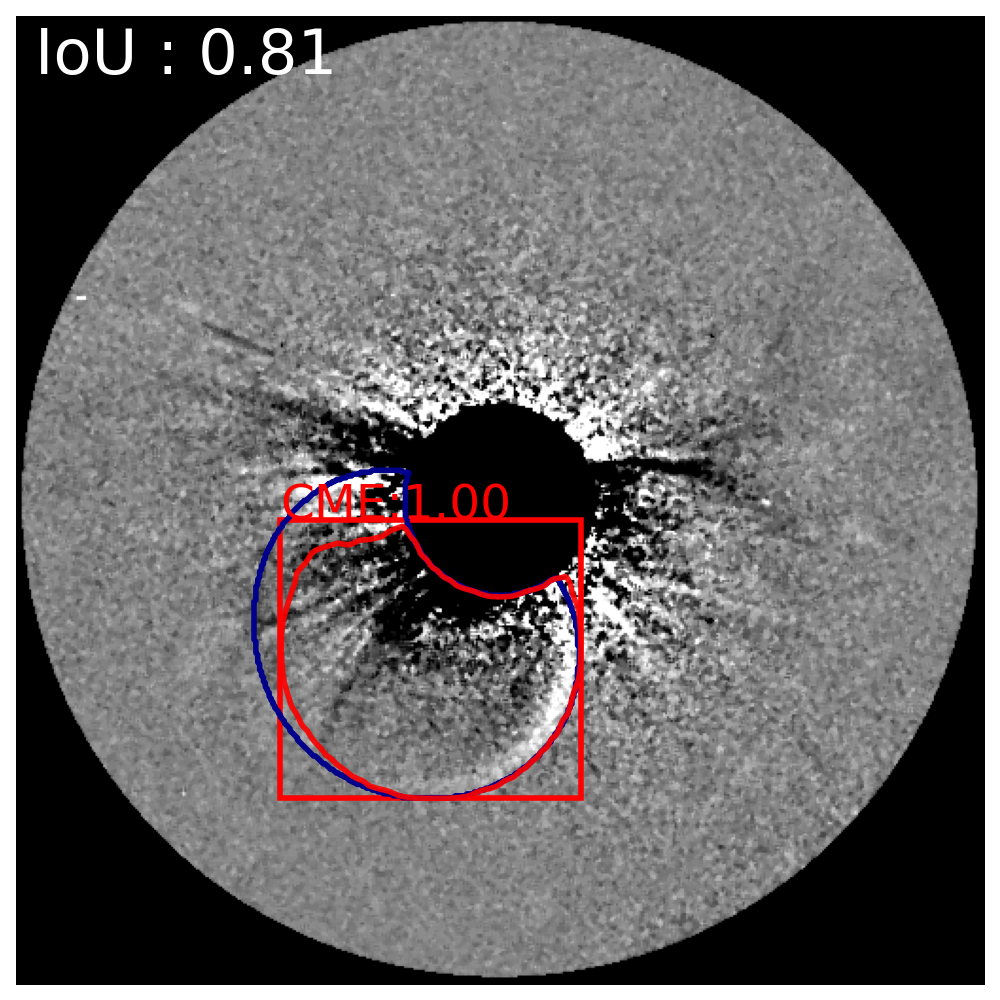

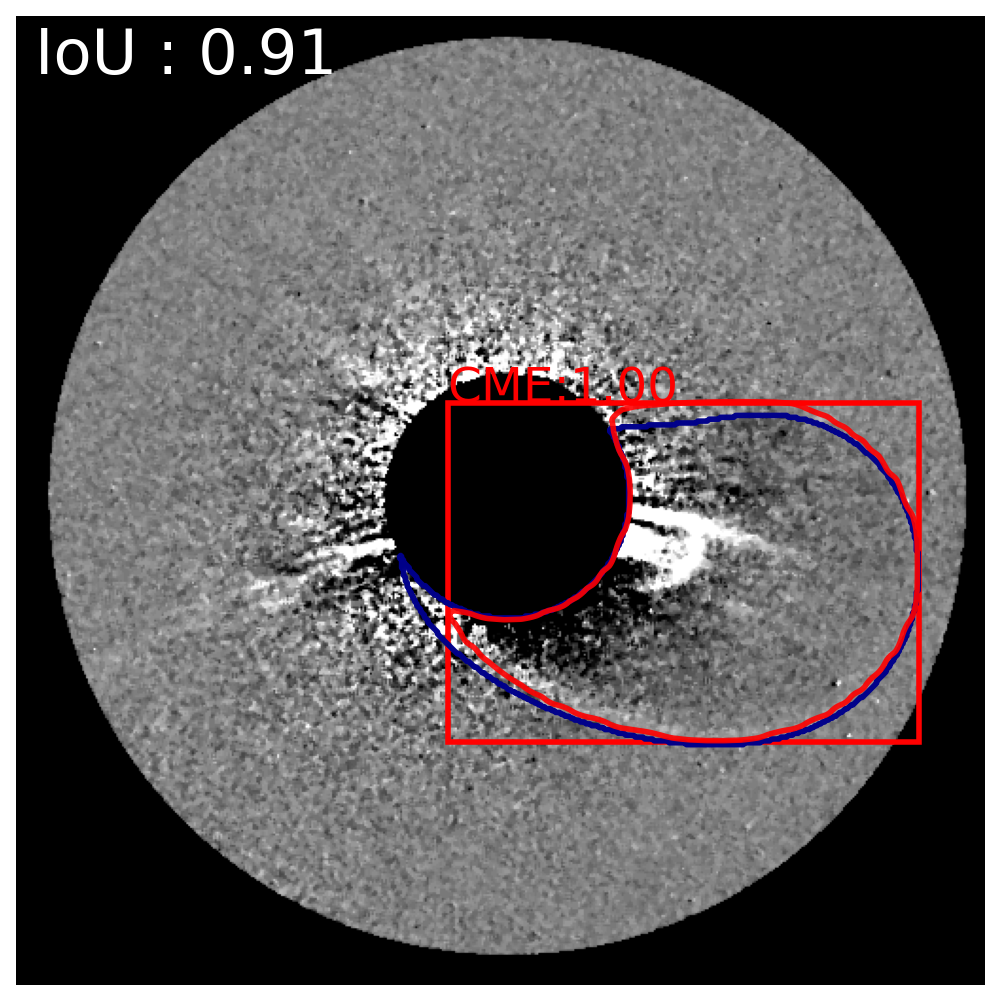

Figure 8: Representative synthetic data segmentations across a range of IoU scores, demonstrating the discrepancy spectrum.

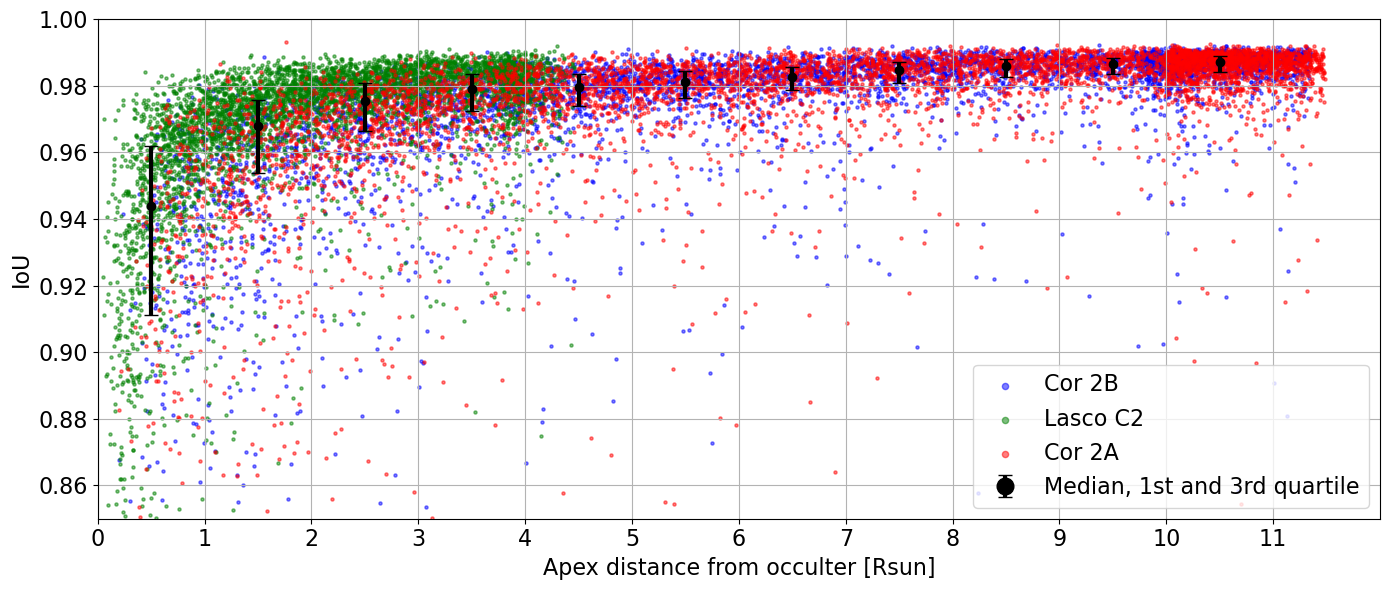

Analysis of segmentation fidelity as a function of projected CME apex shows minor degradation at lower heights, attributed primarily to increased corona background complexity near the occulter and not to instrument-specific artifacts.

Figure 9: IoU versus CME apex for different coronagraphs, revealing loss in accuracy only for events close to the occulter.

Generalization to Observational Data

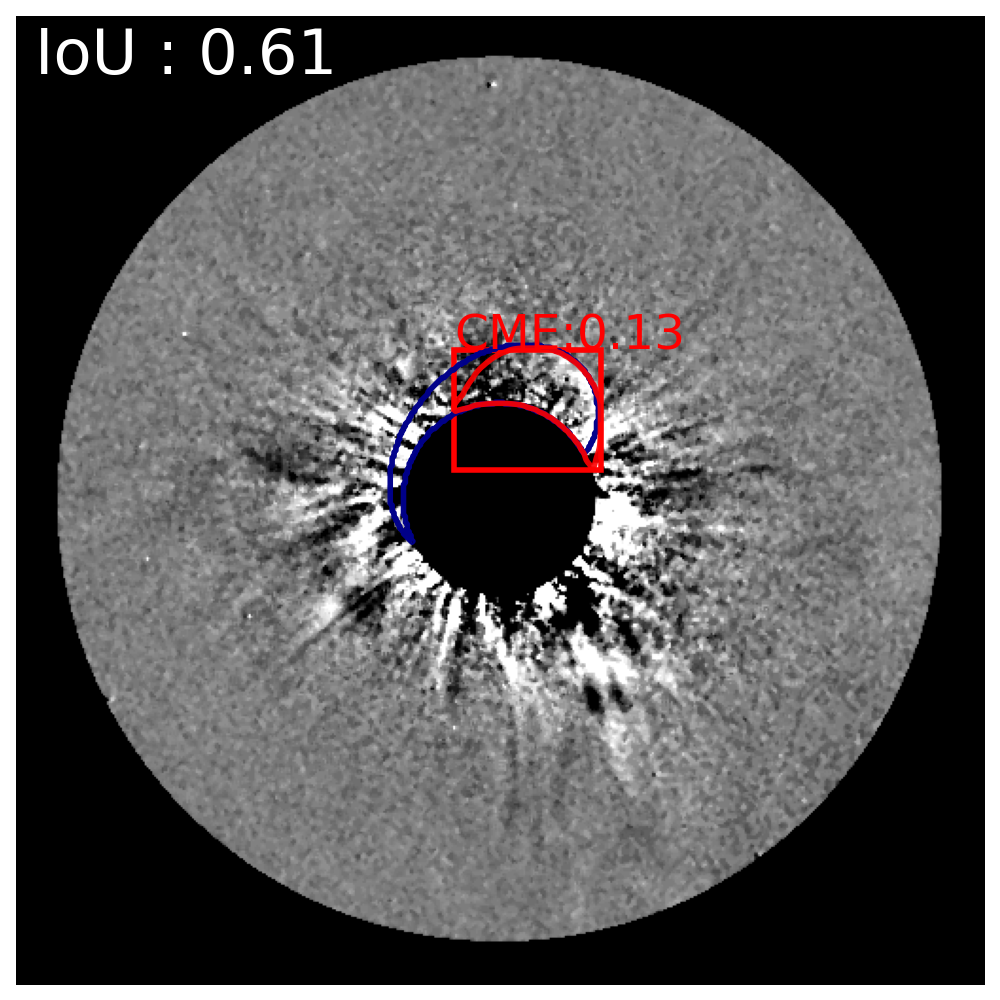

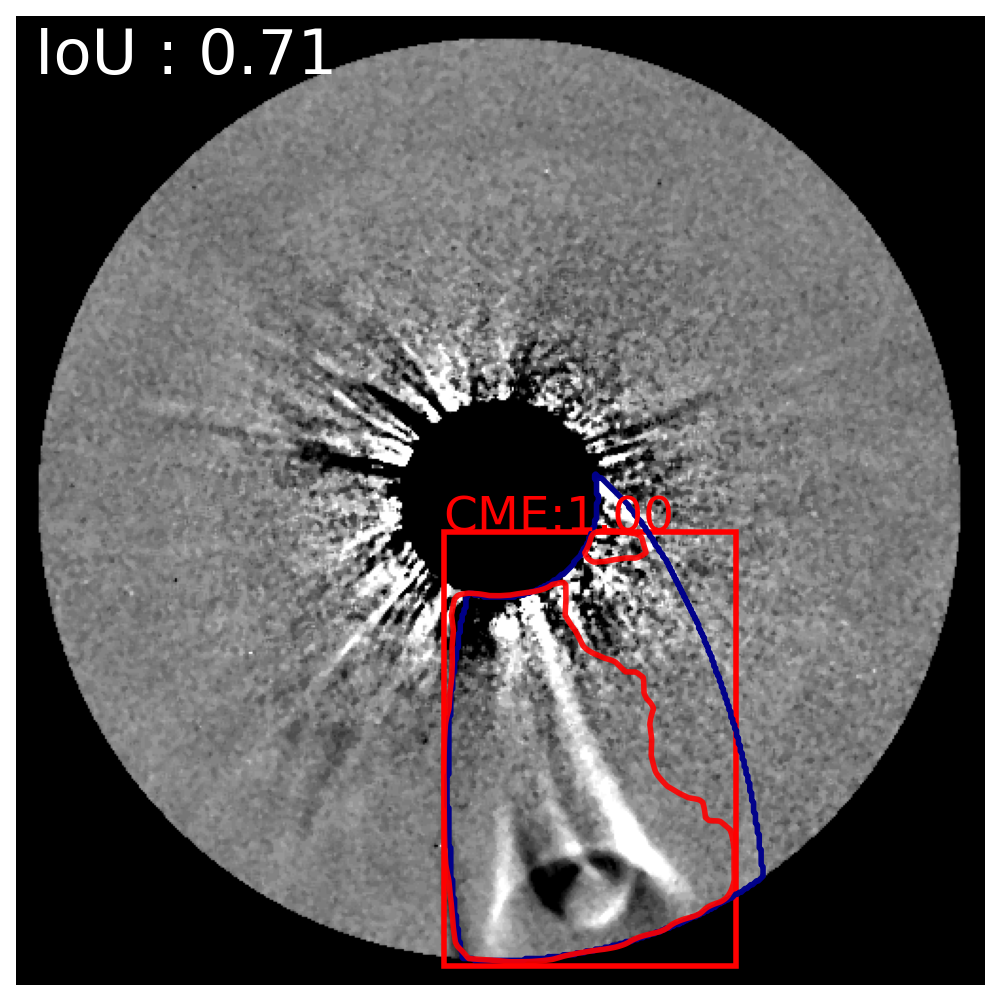

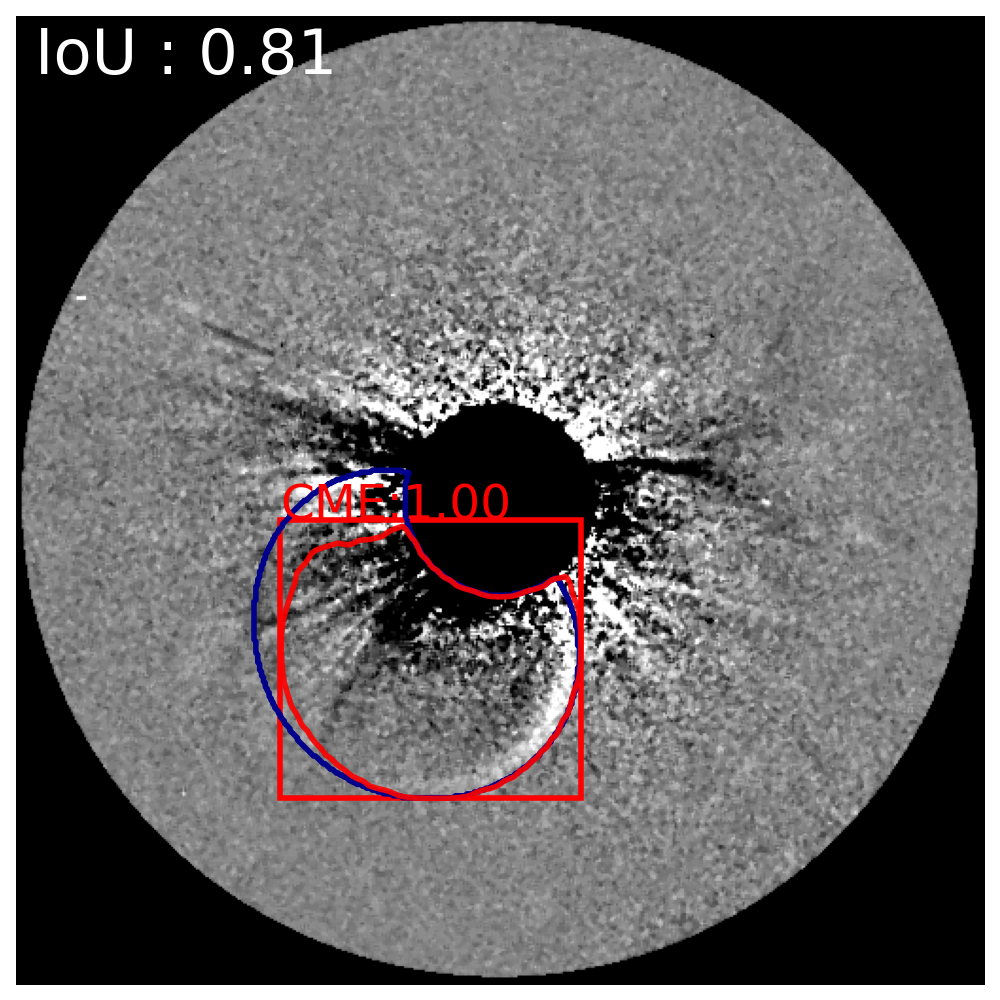

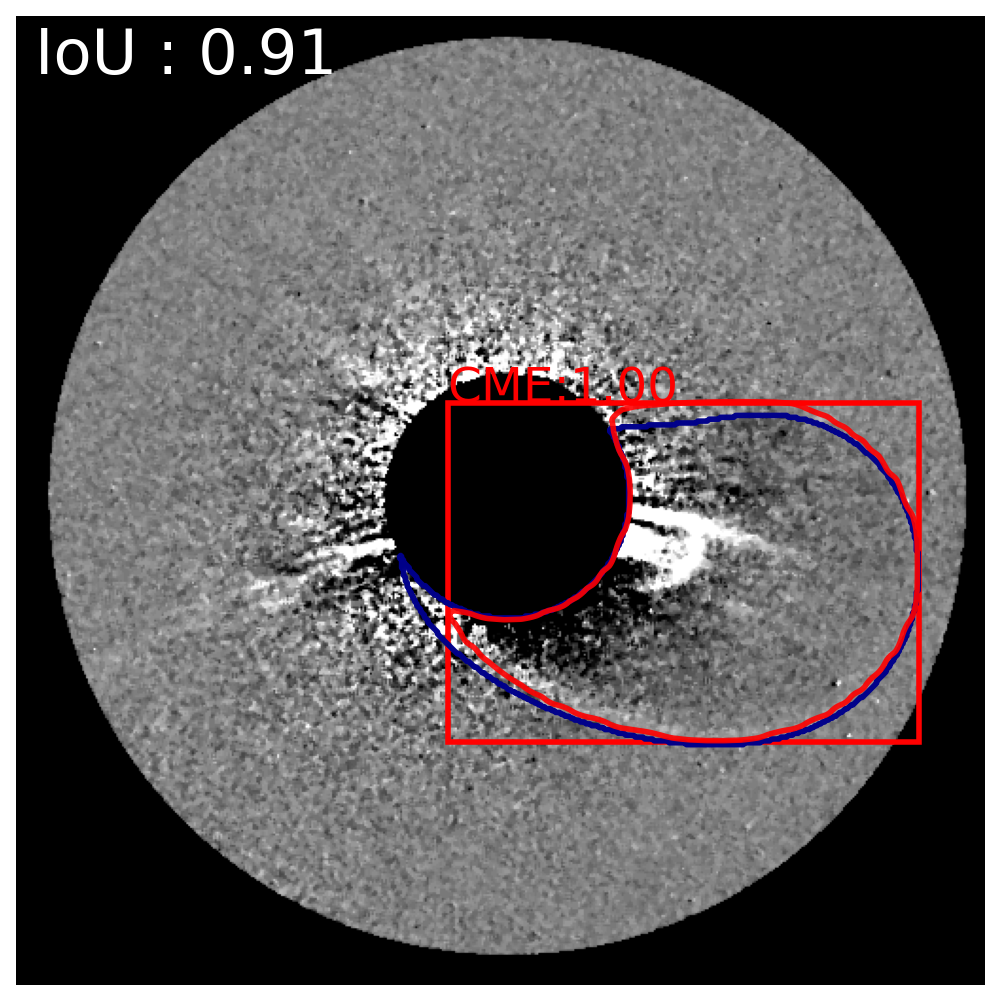

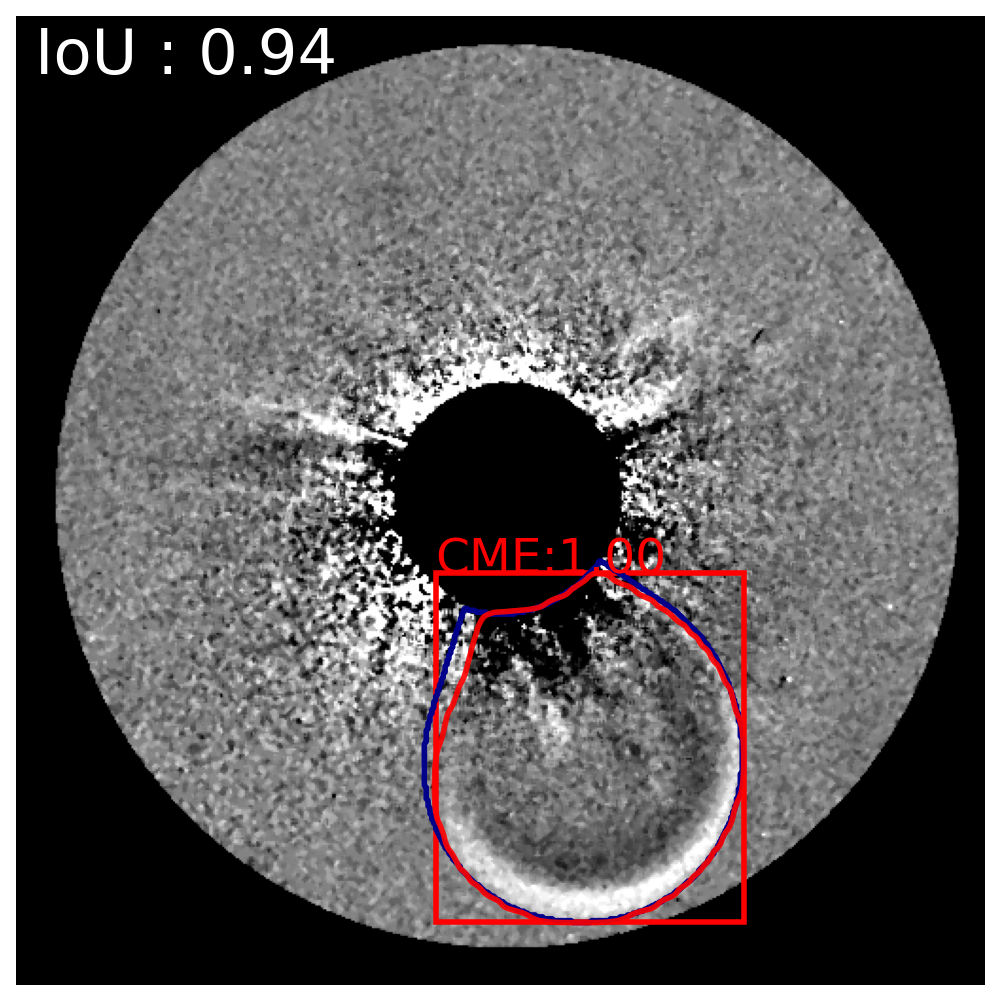

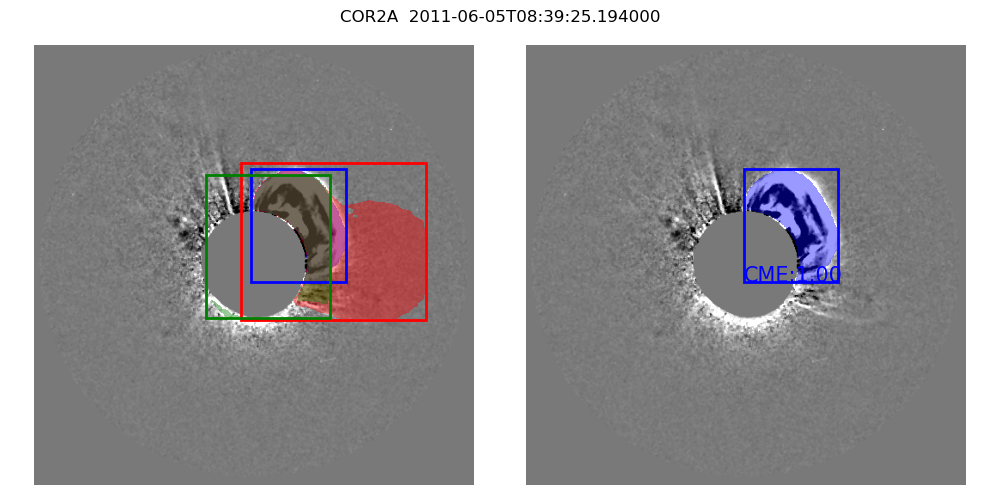

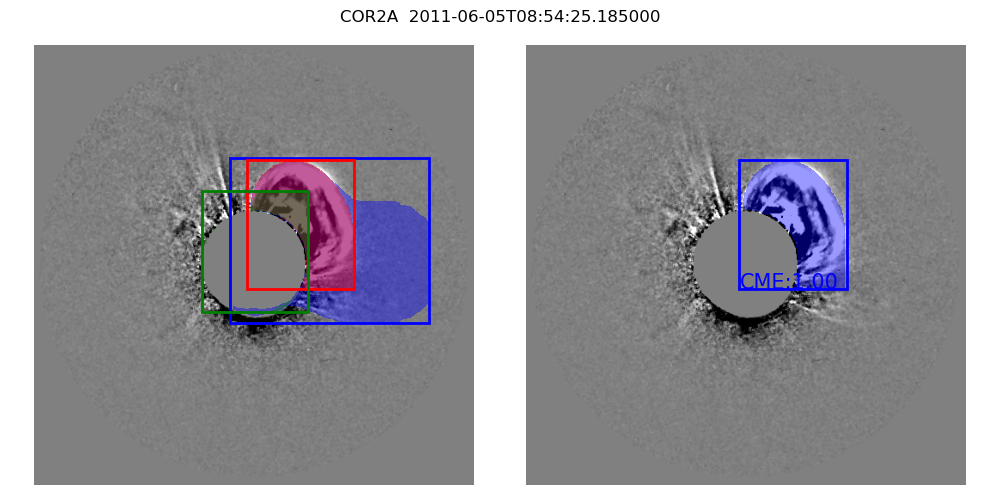

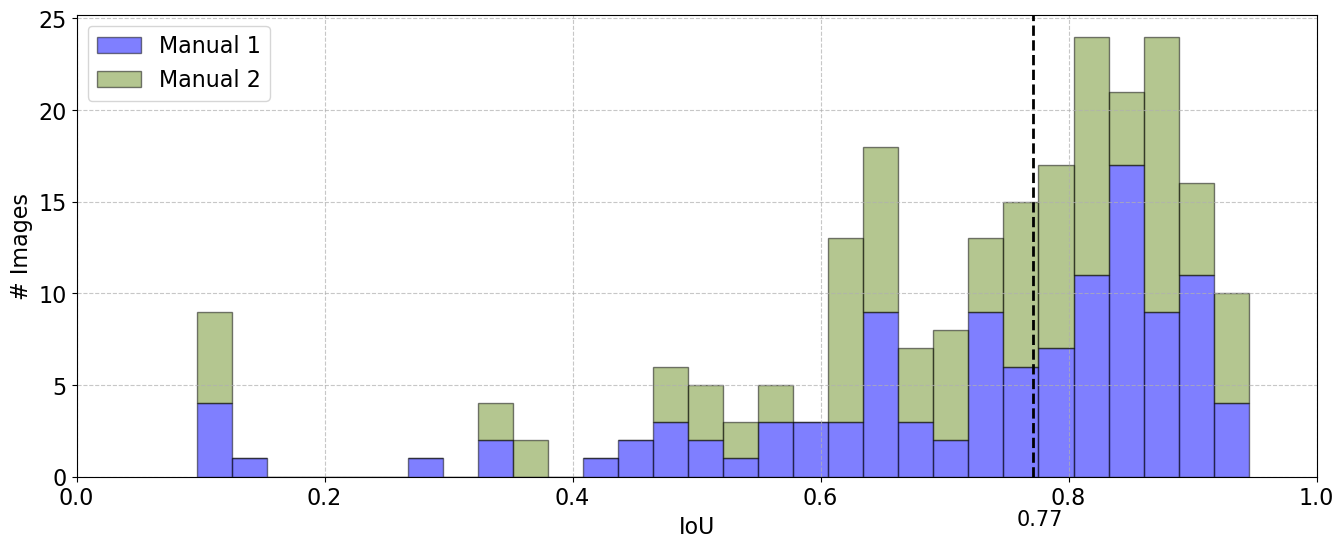

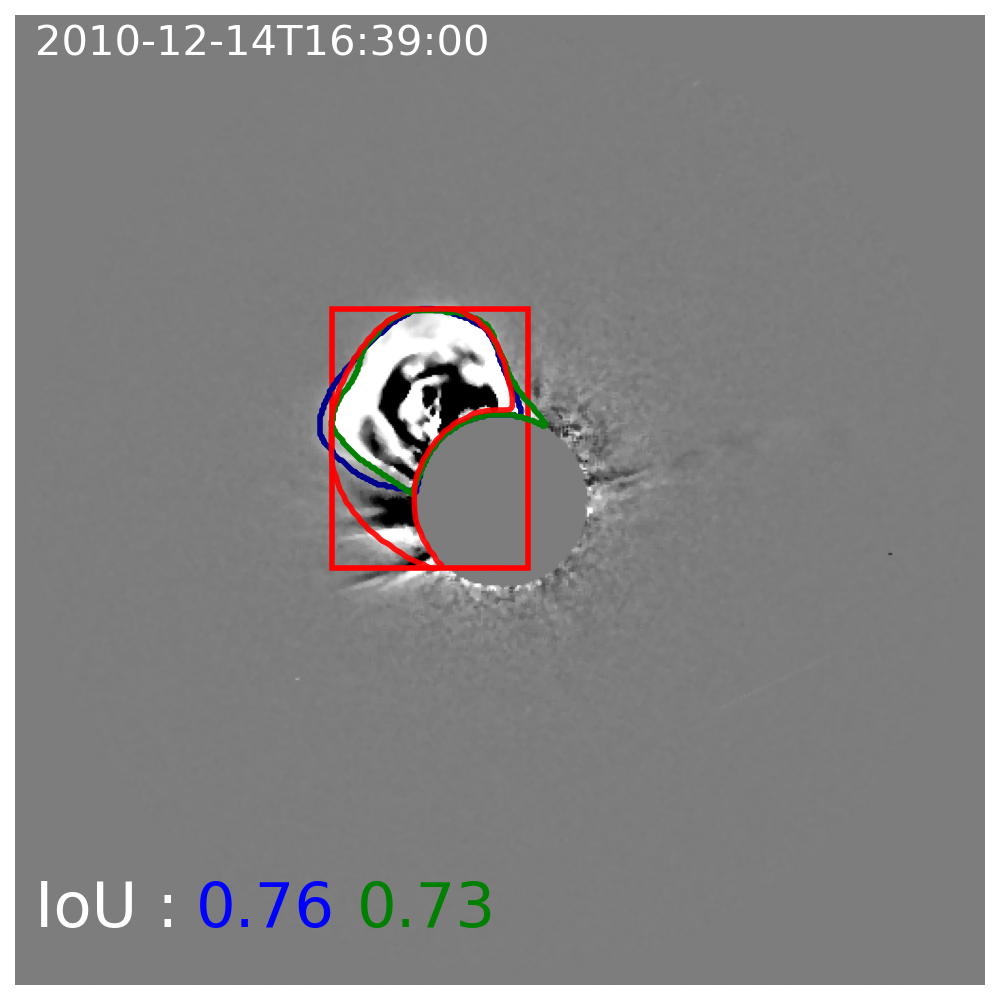

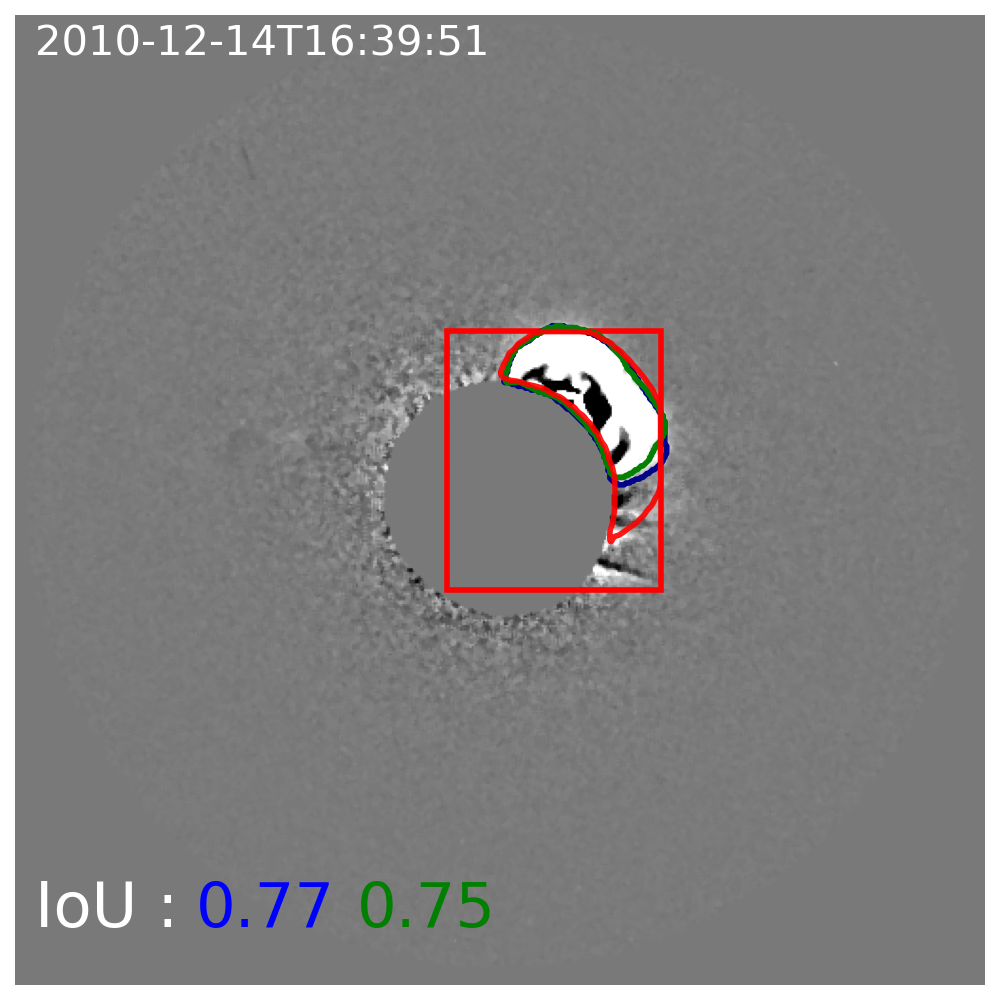

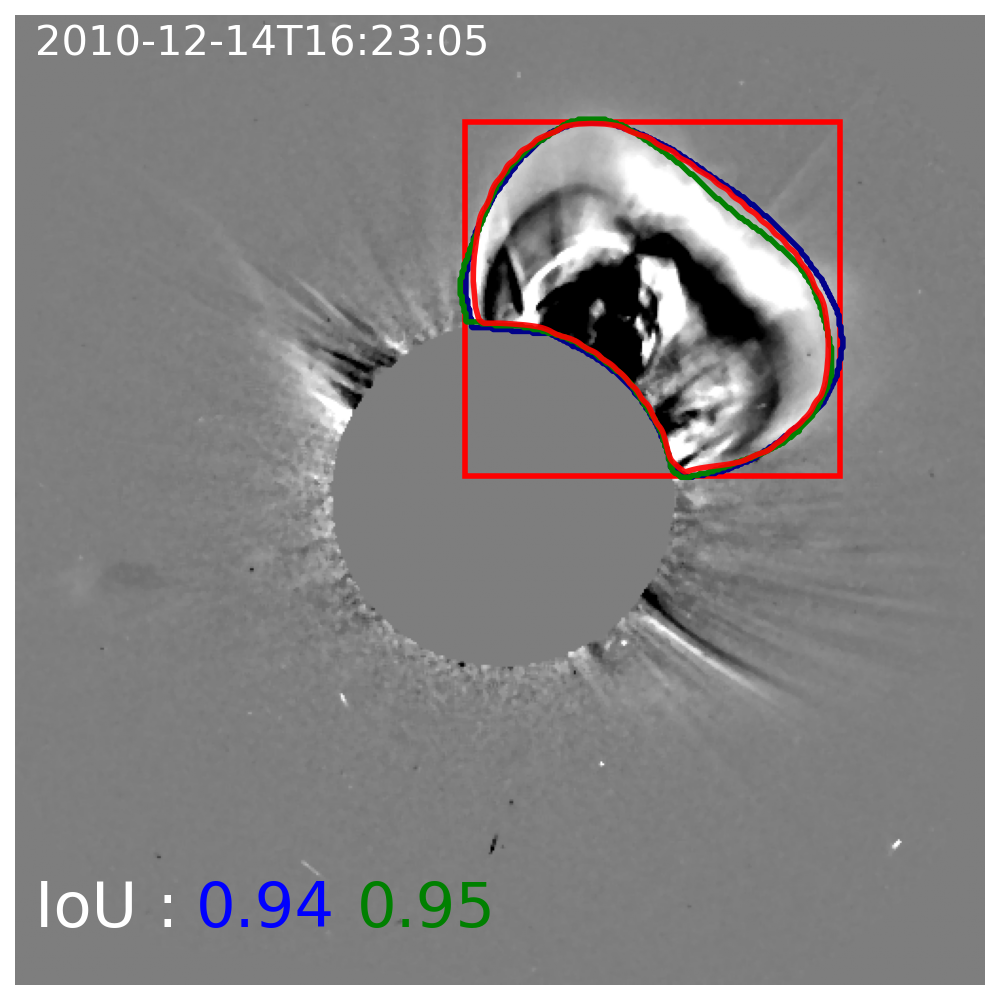

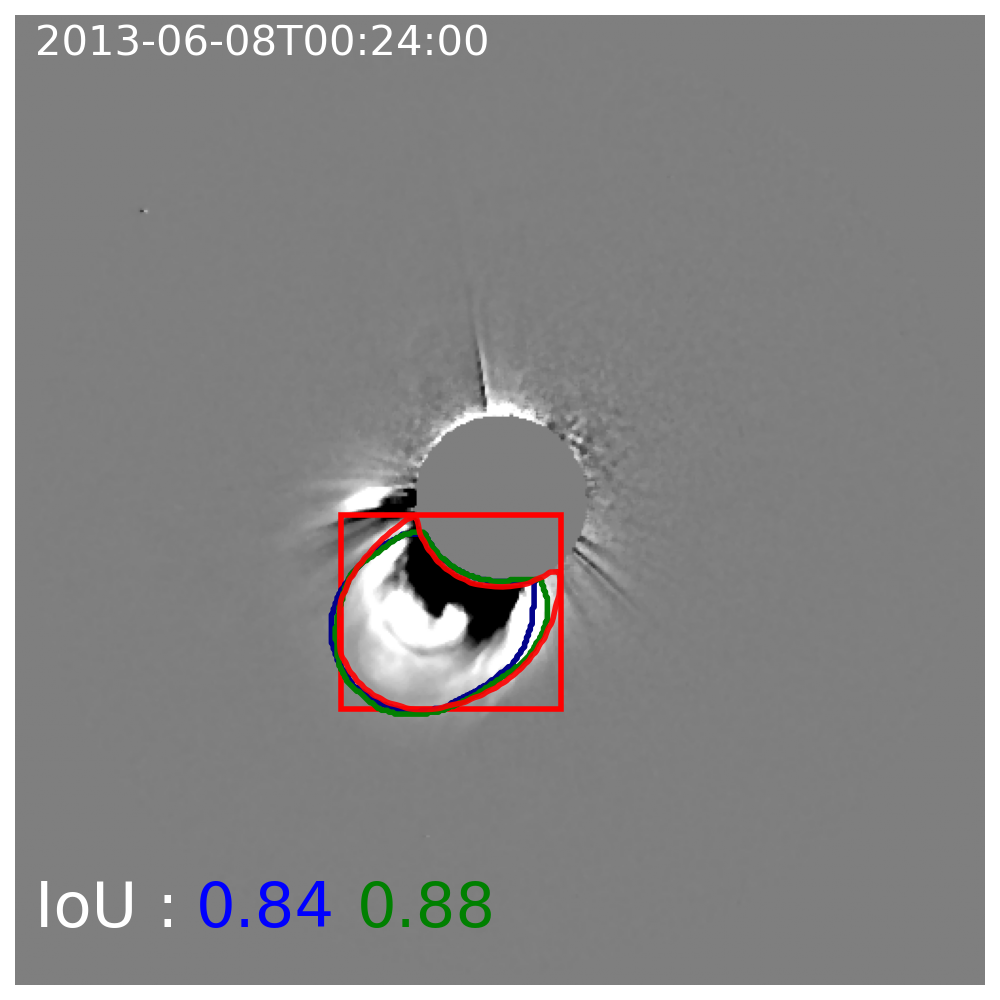

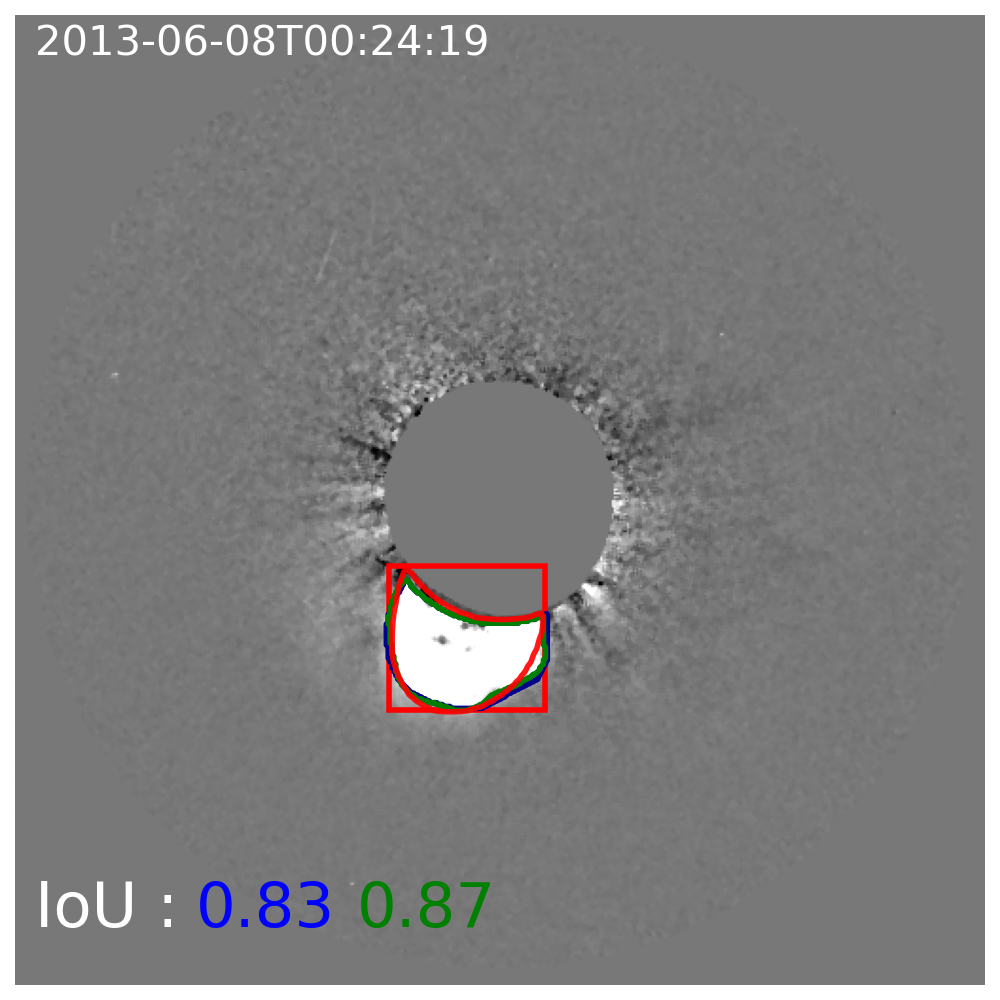

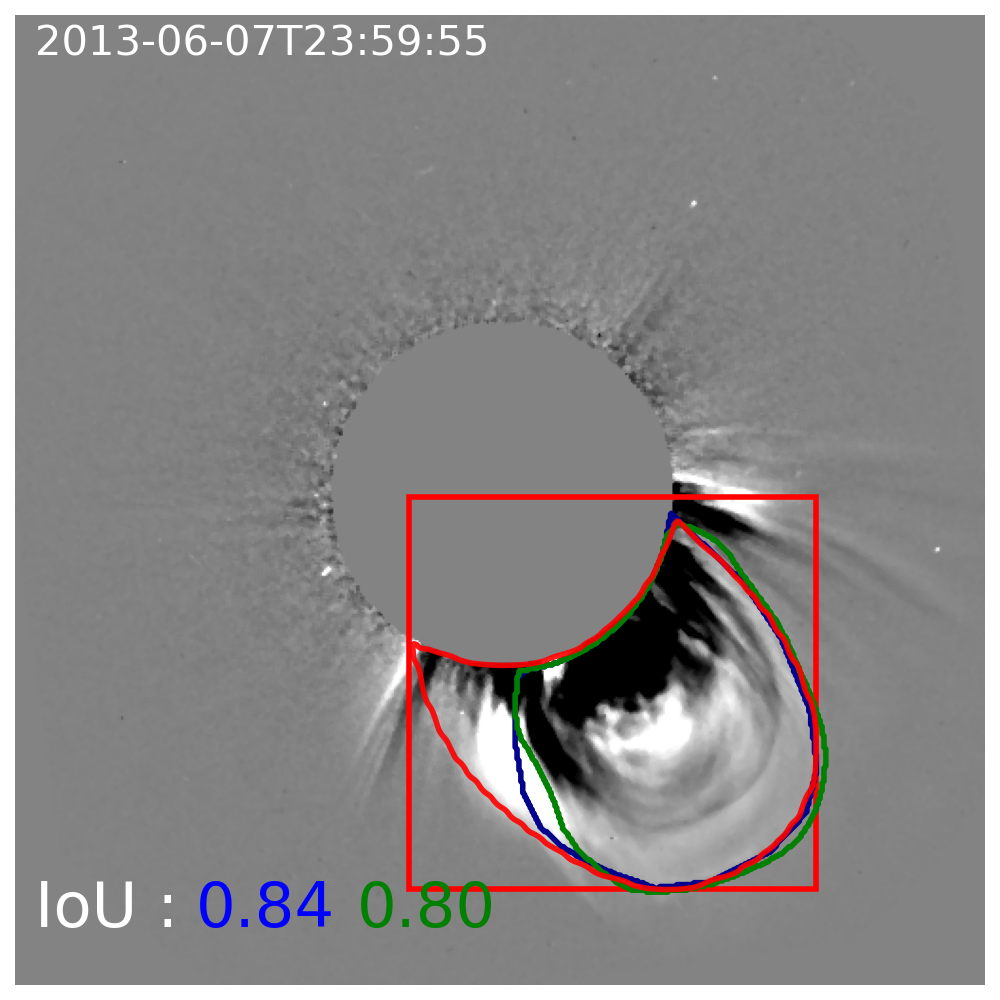

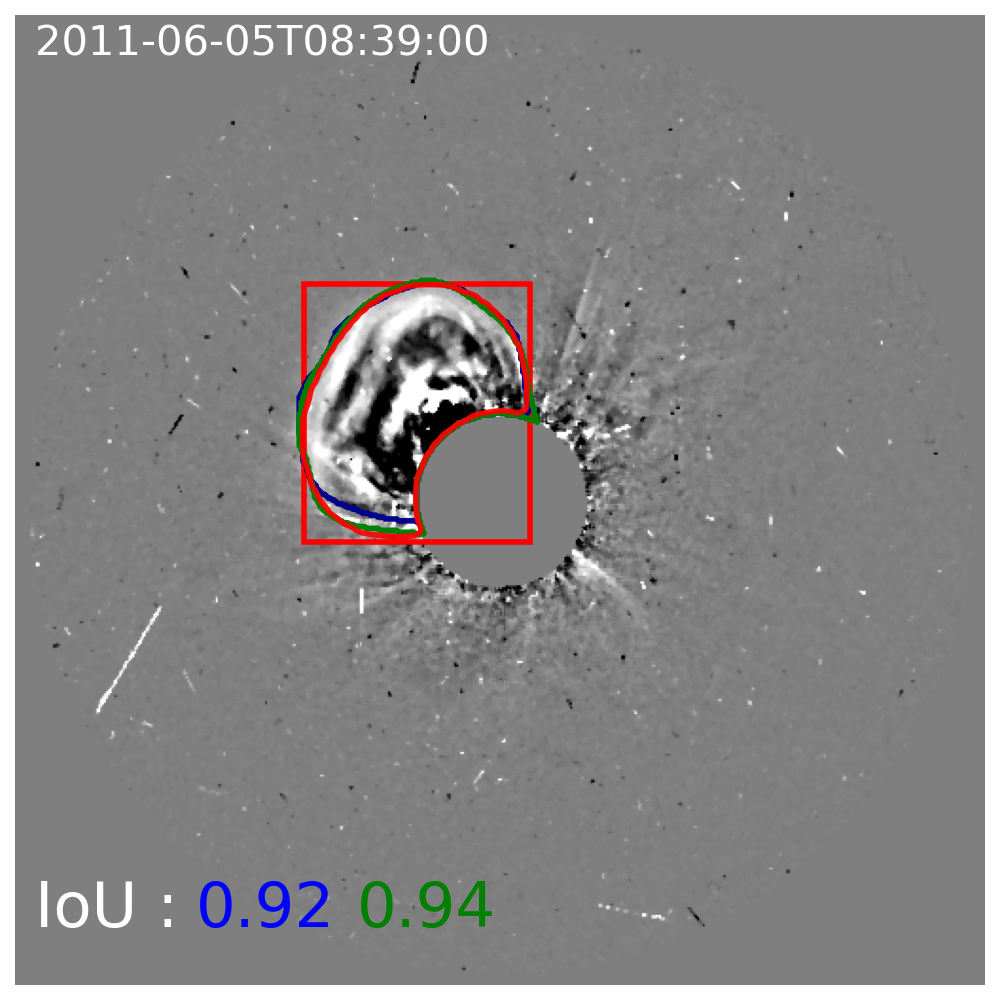

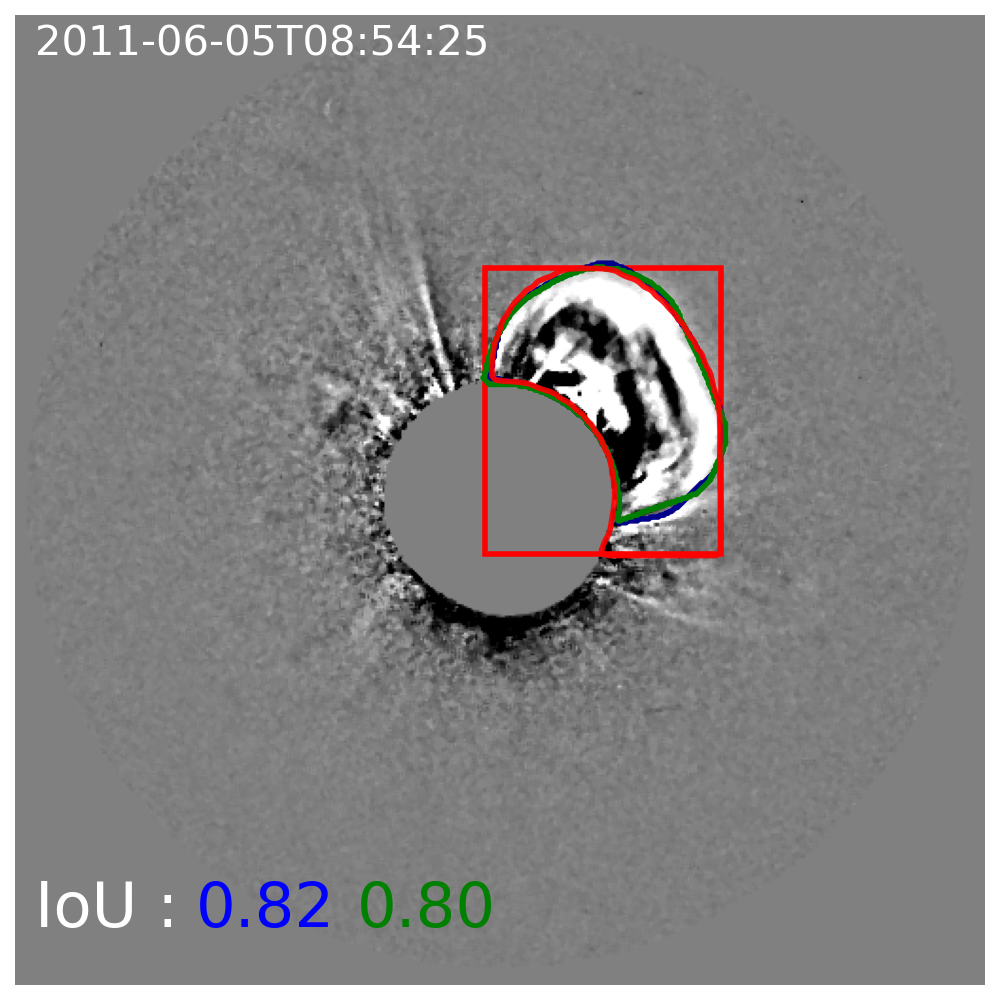

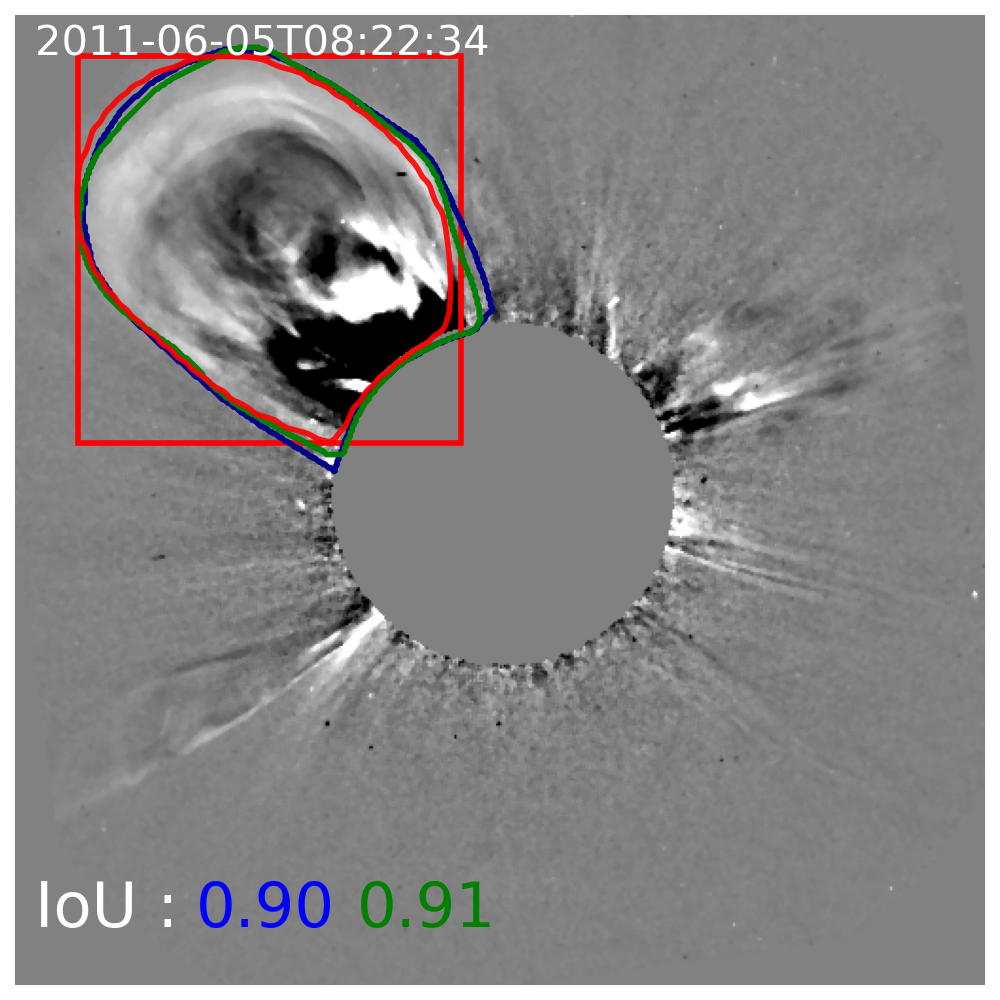

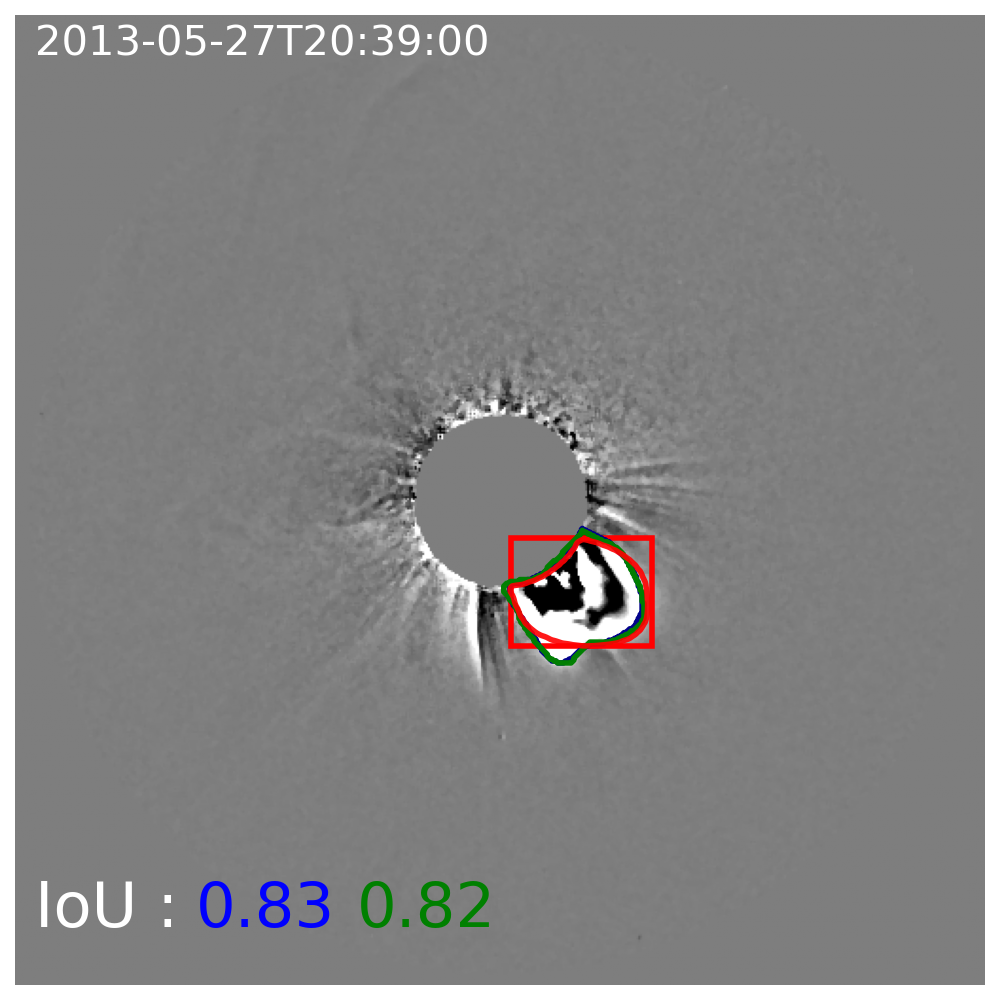

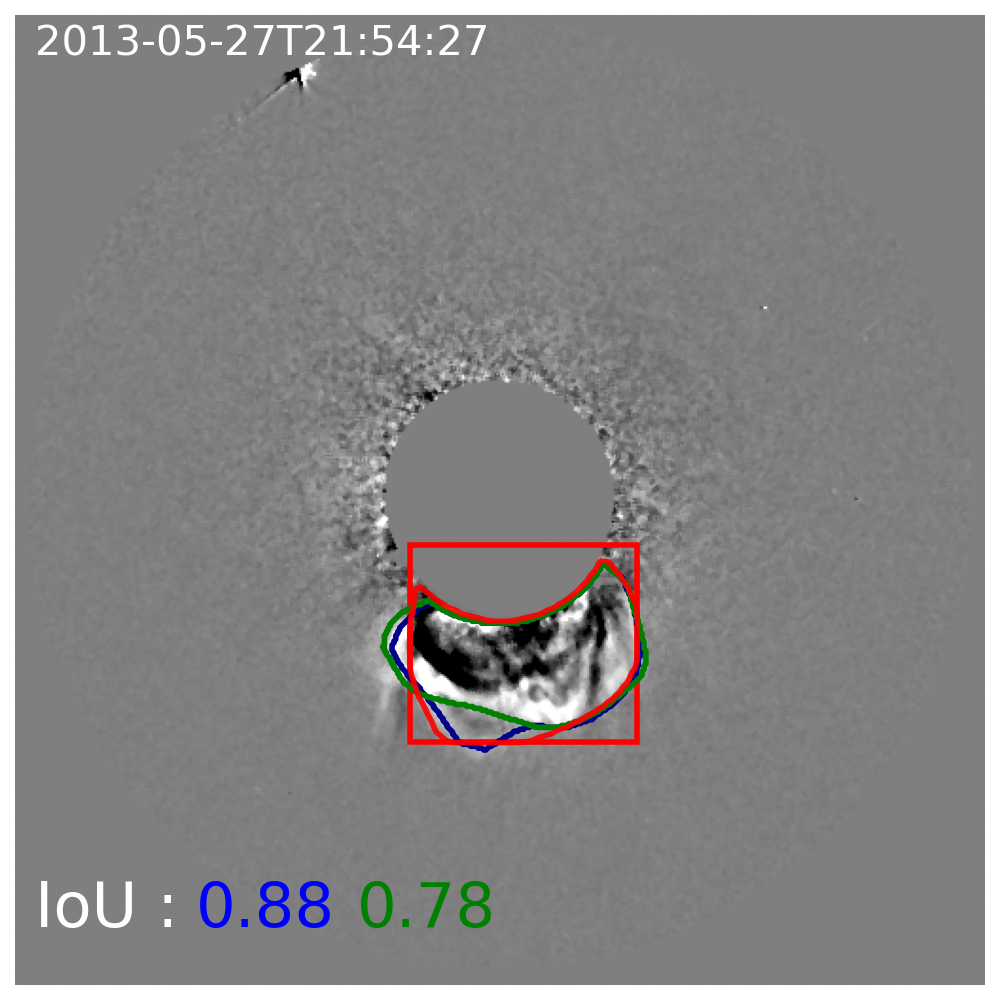

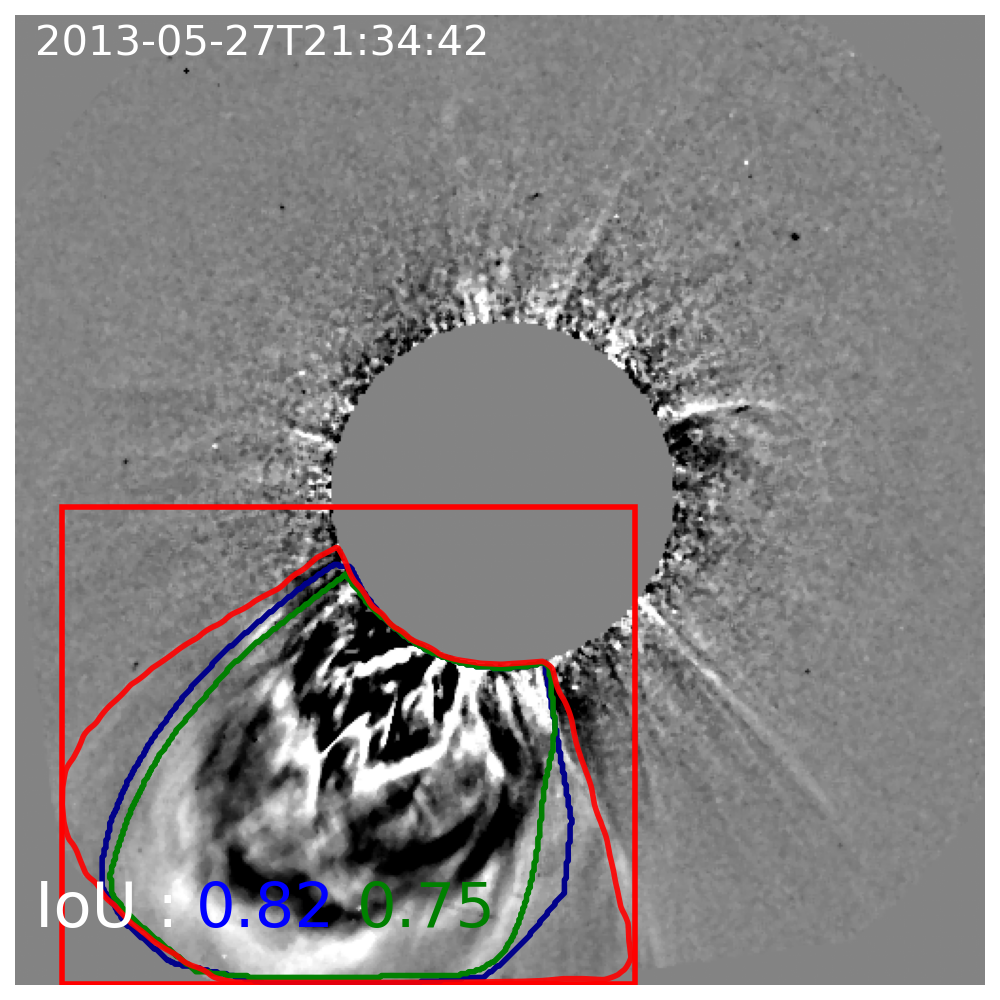

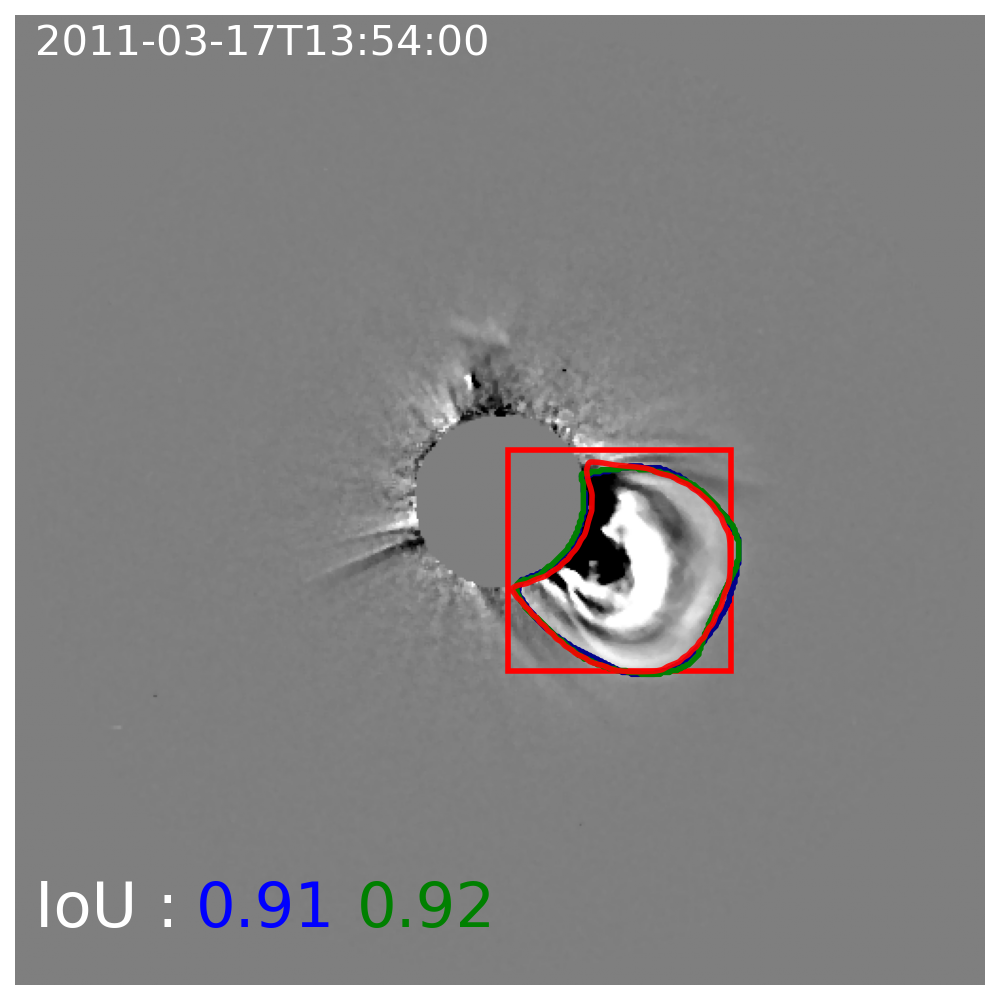

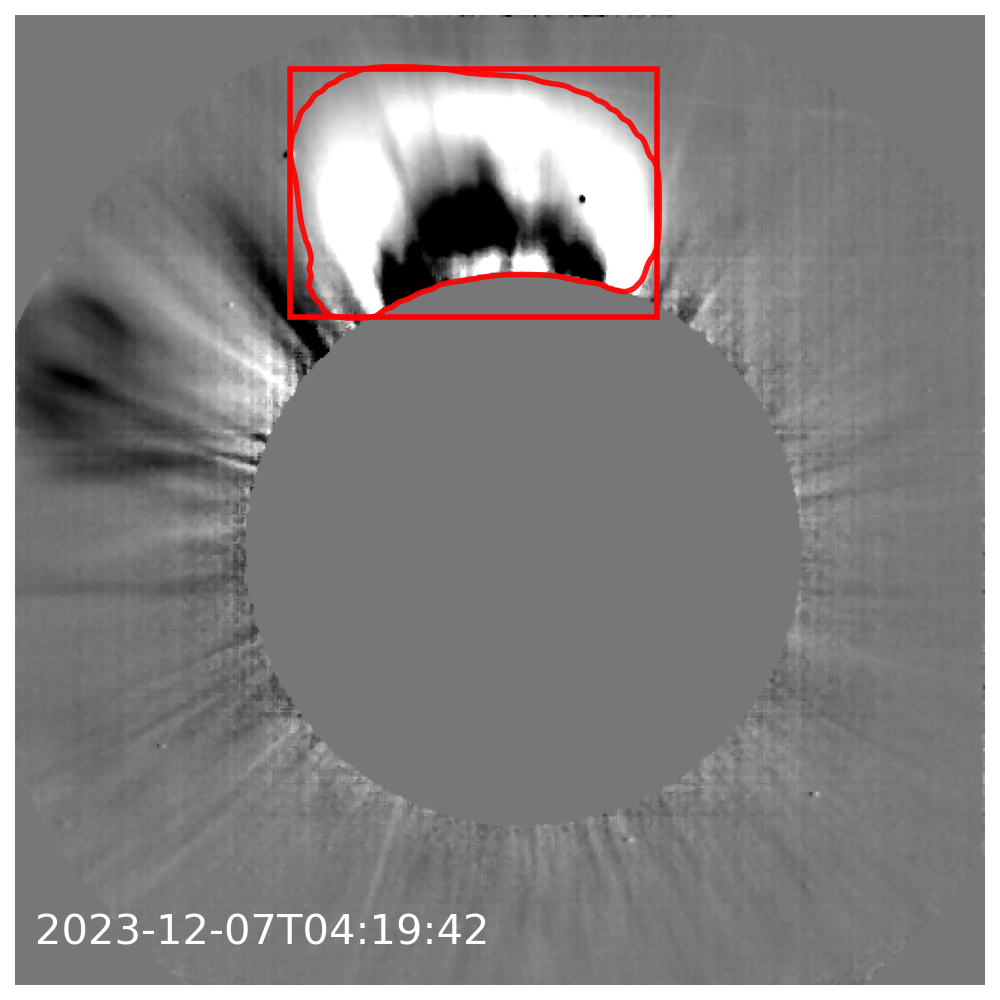

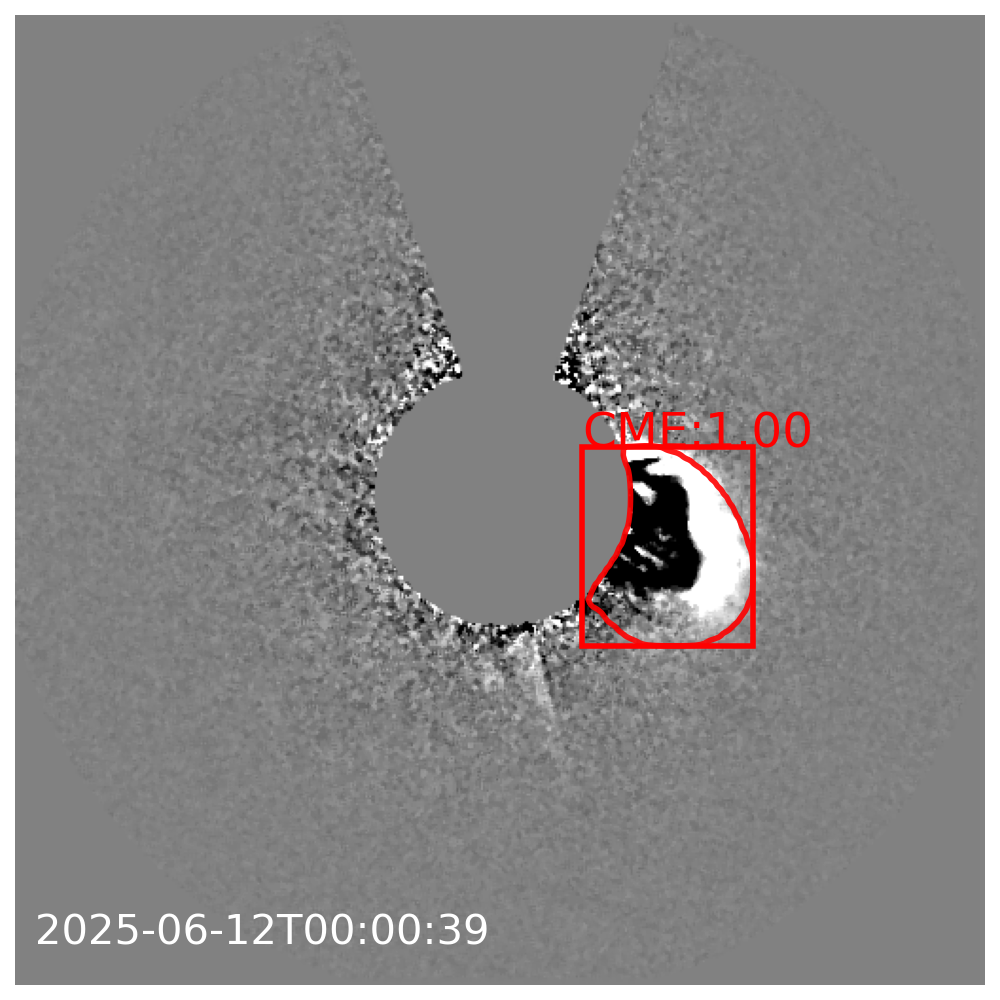

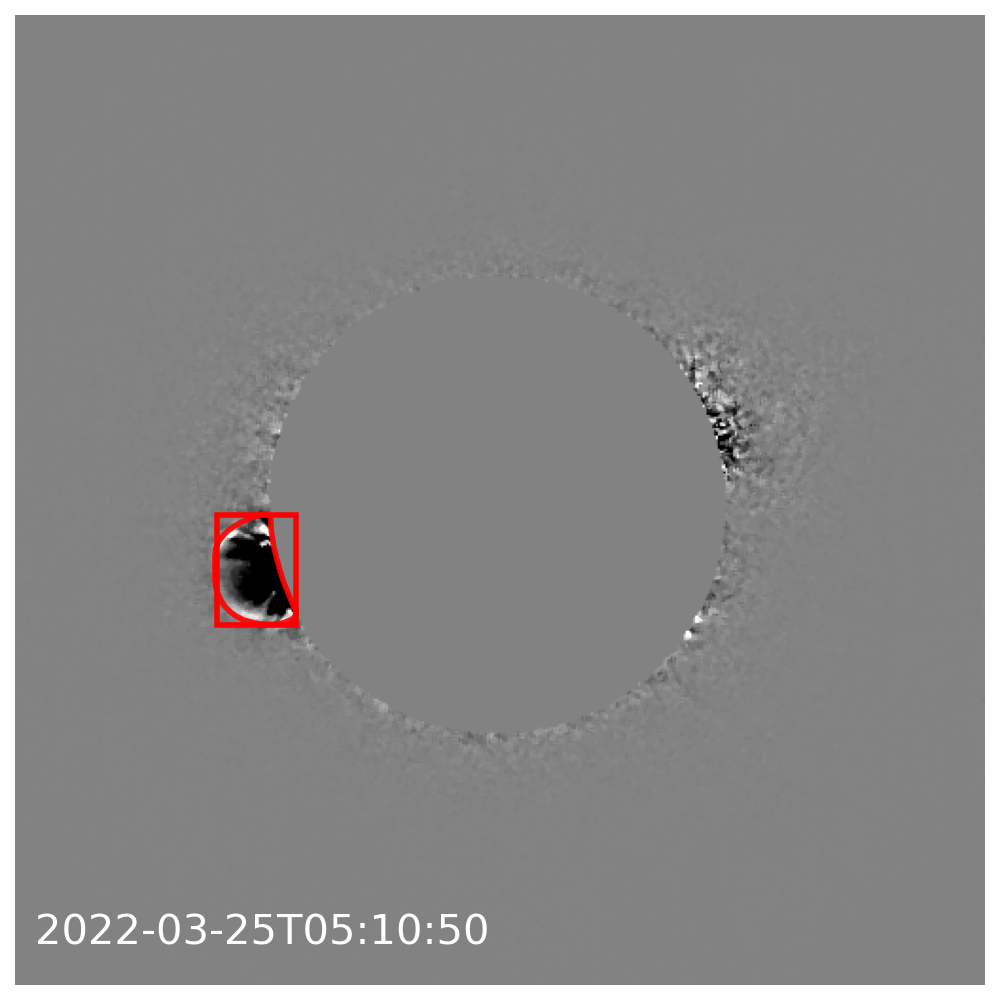

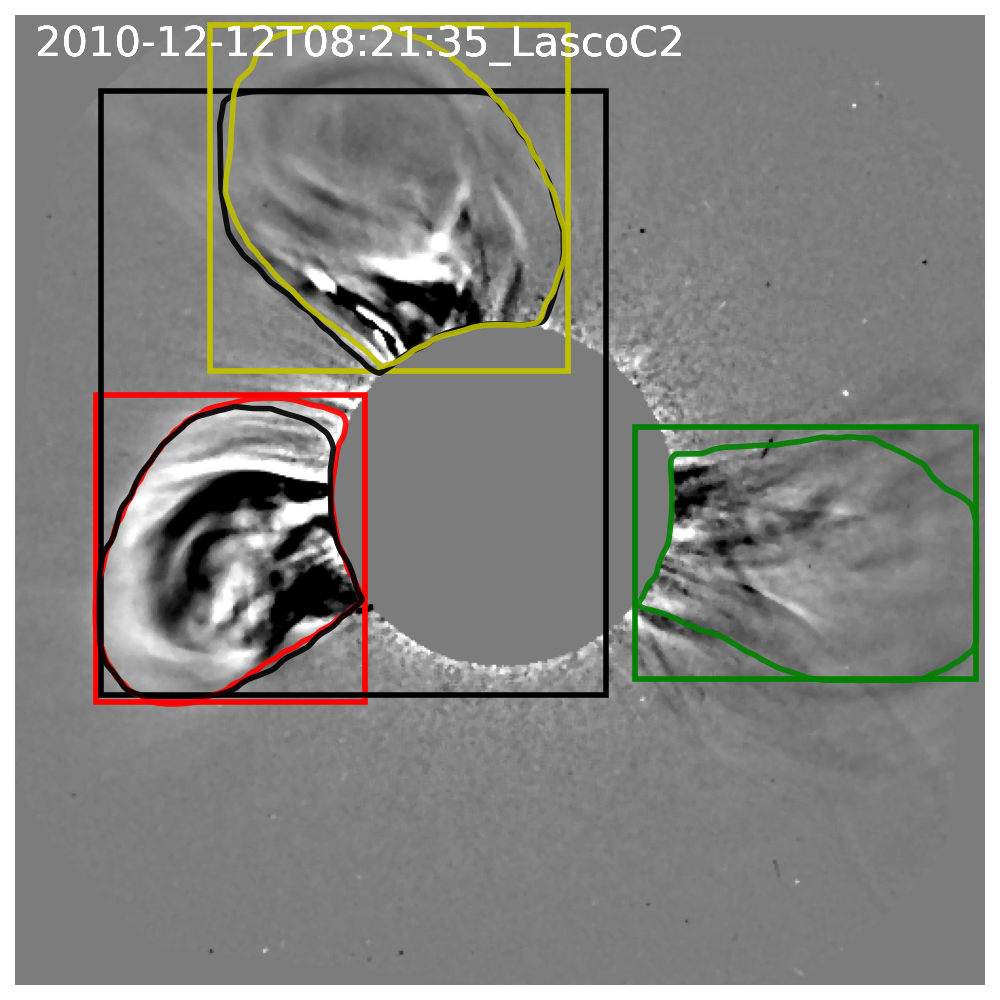

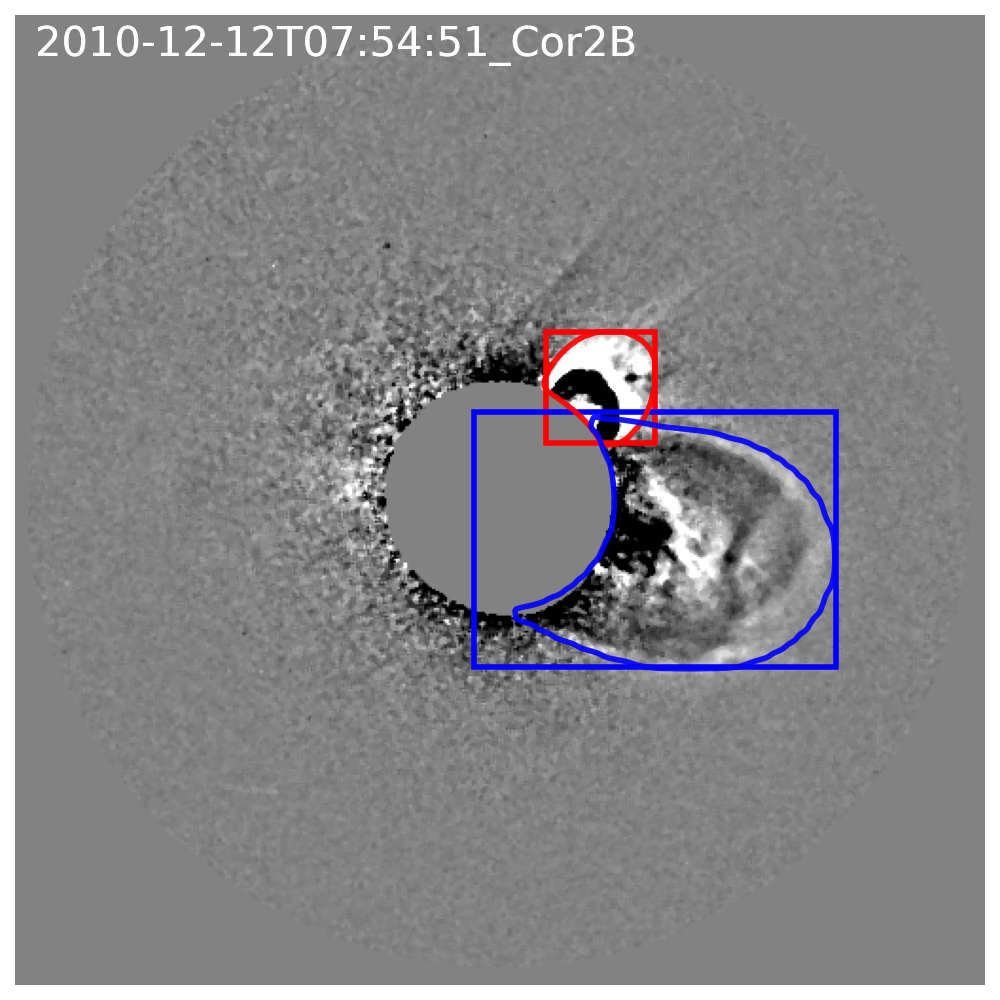

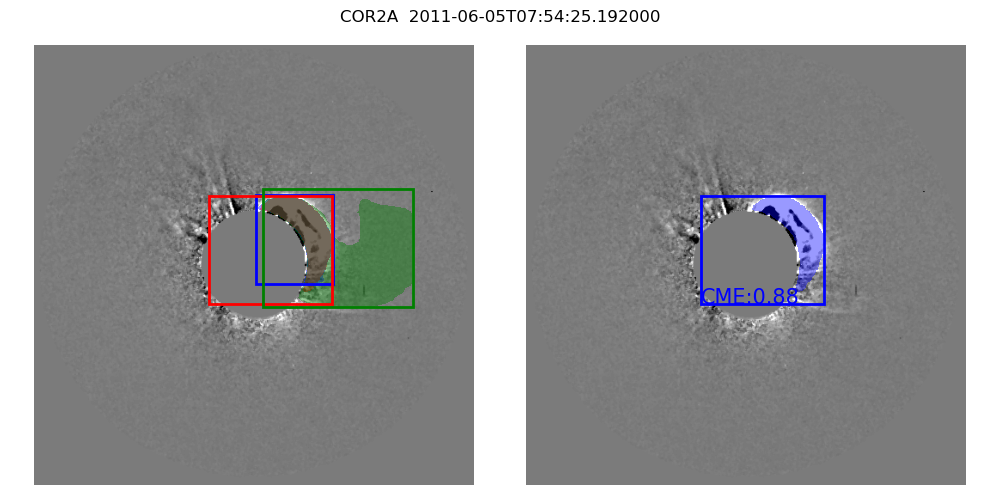

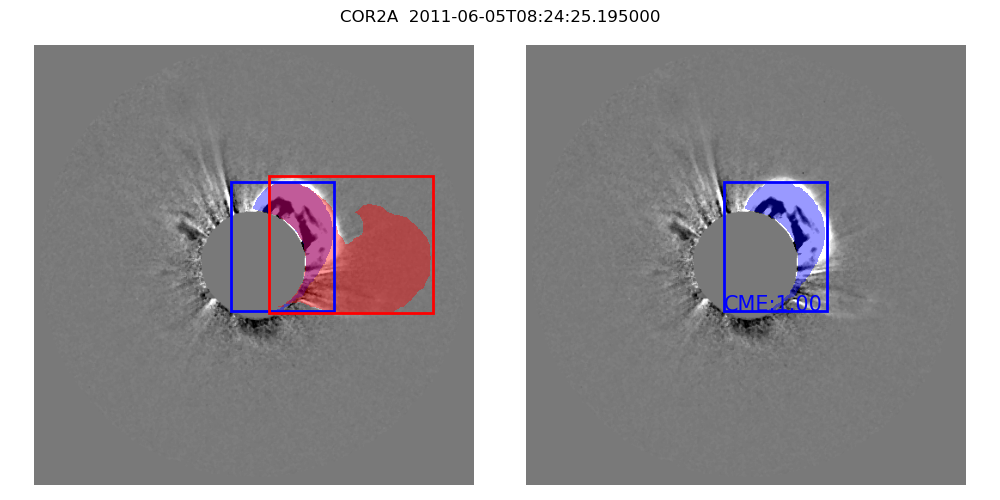

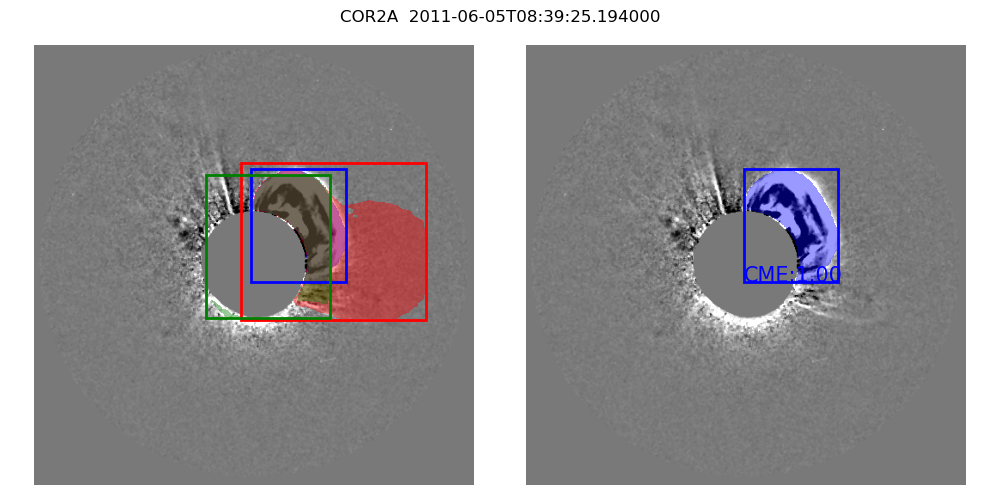

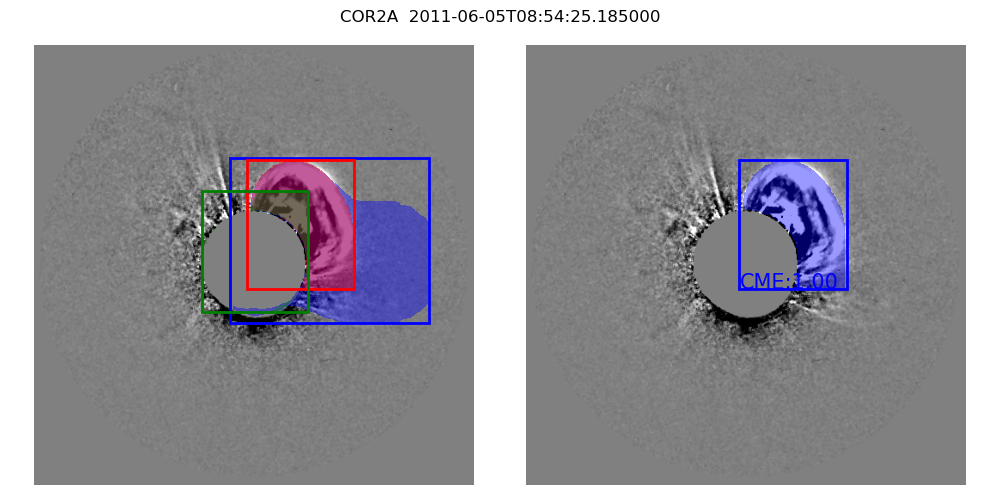

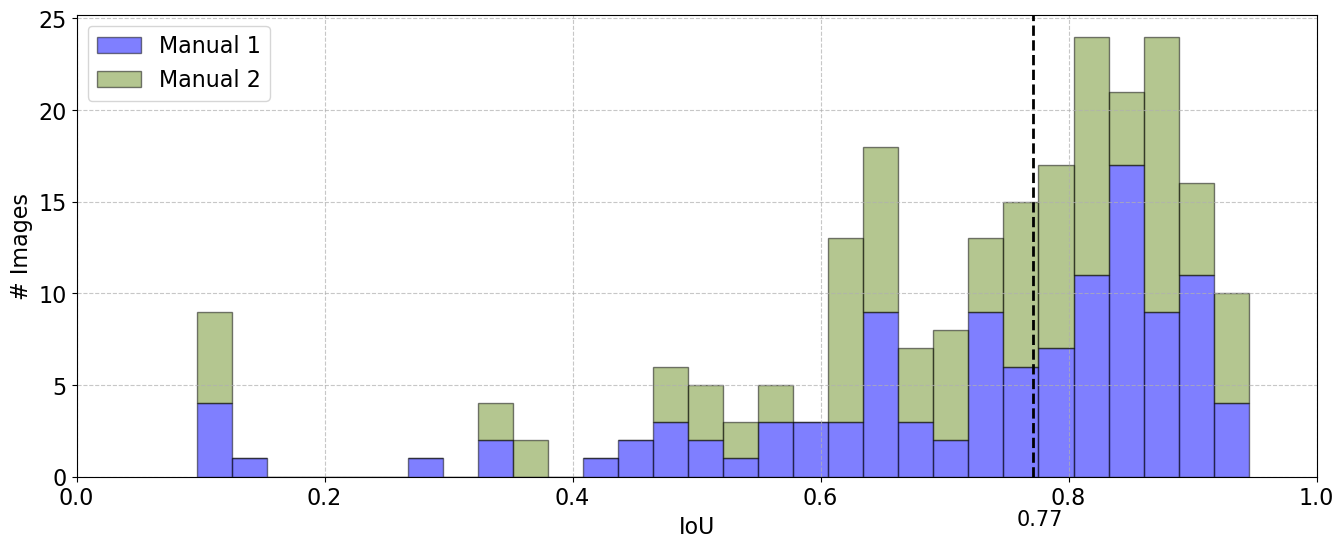

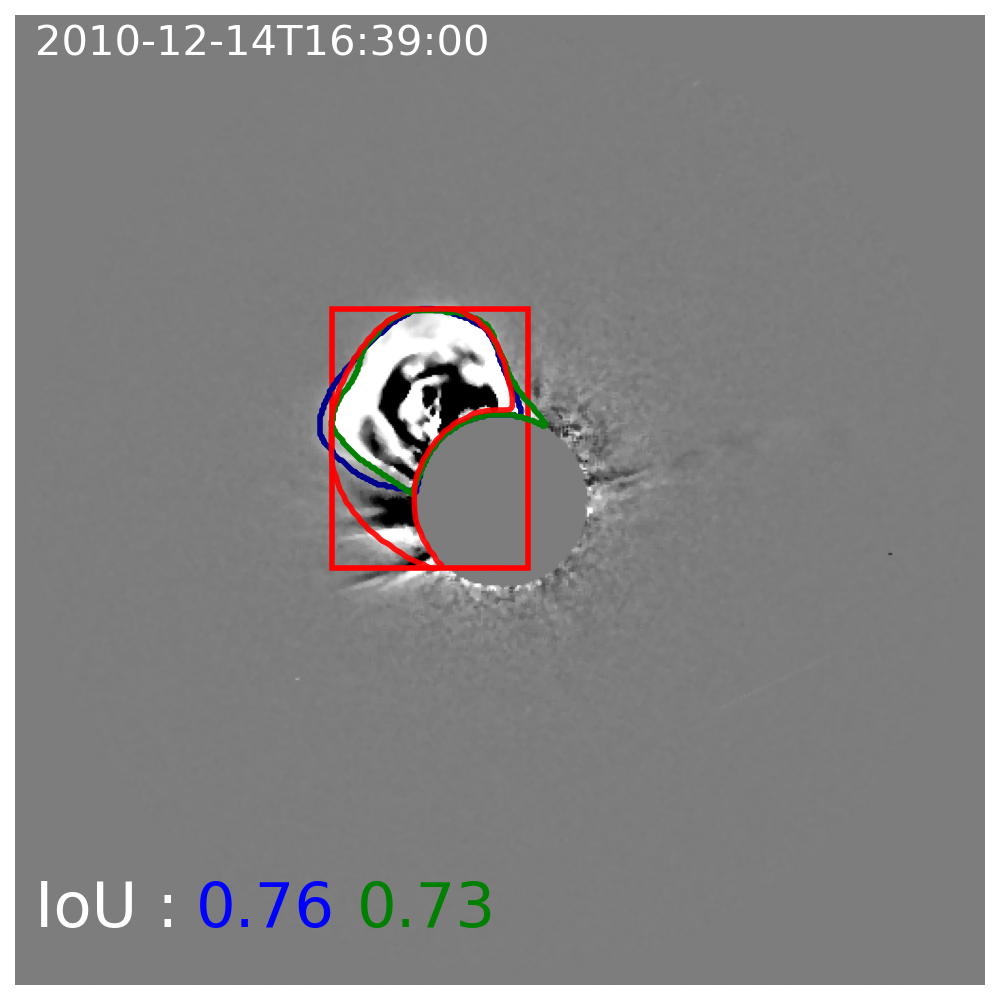

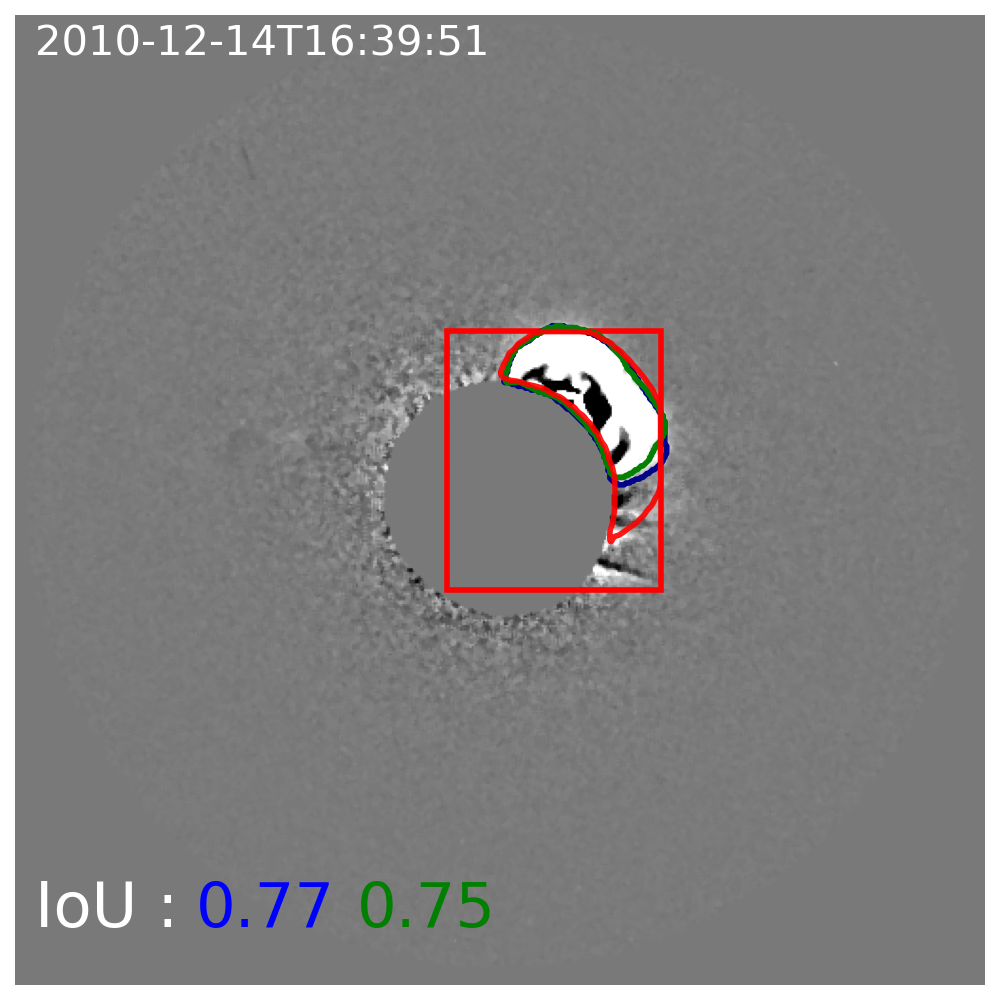

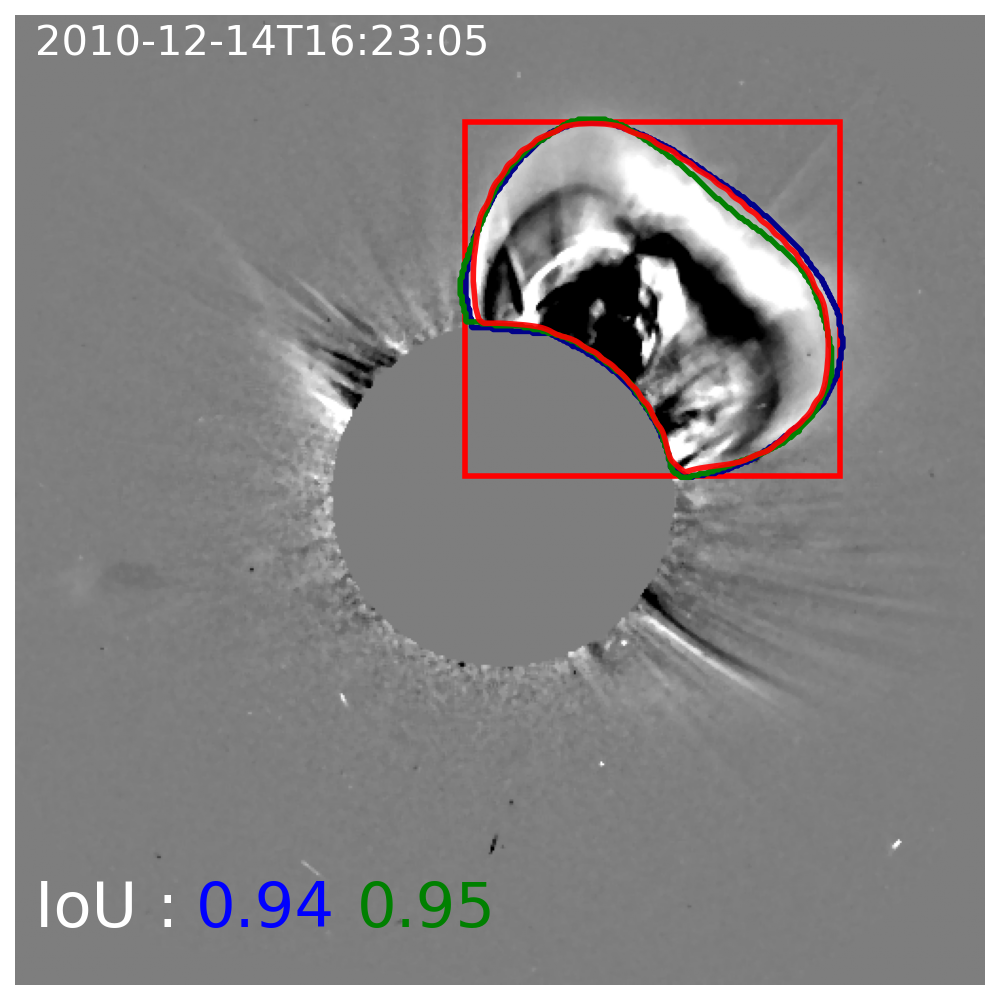

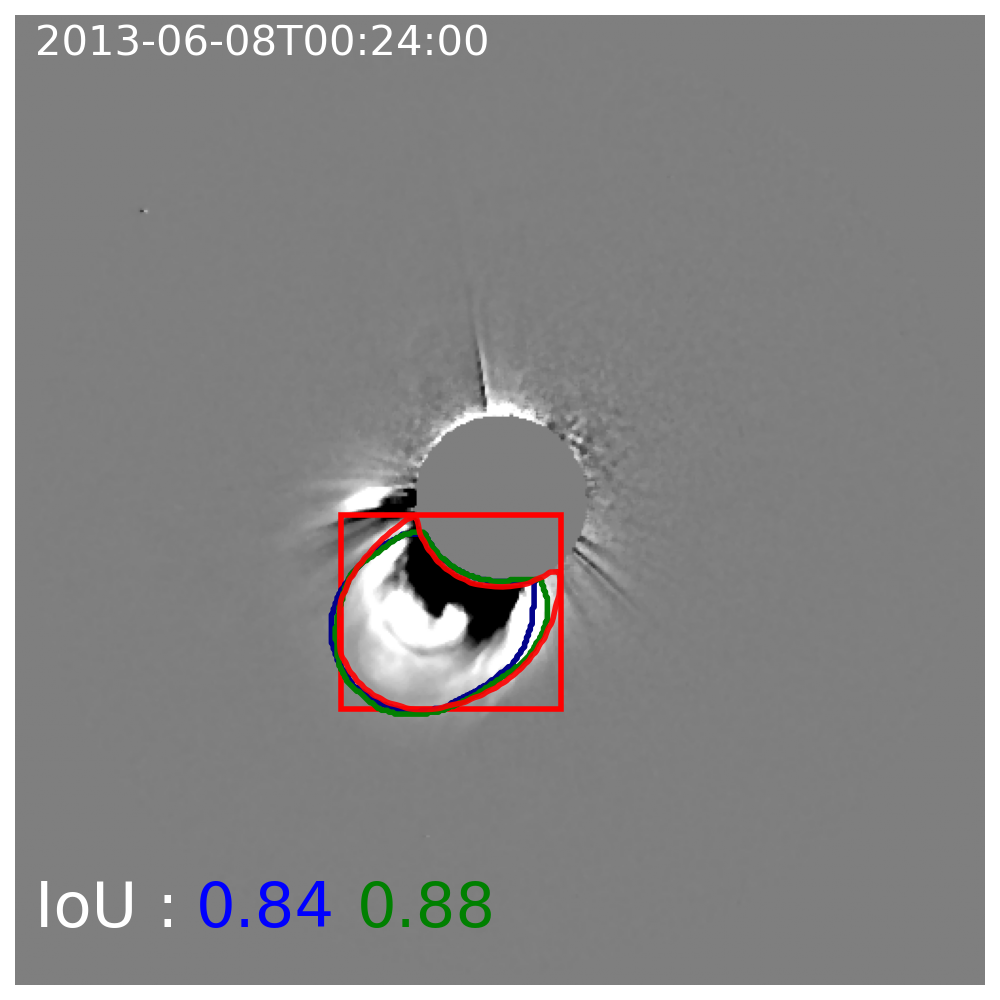

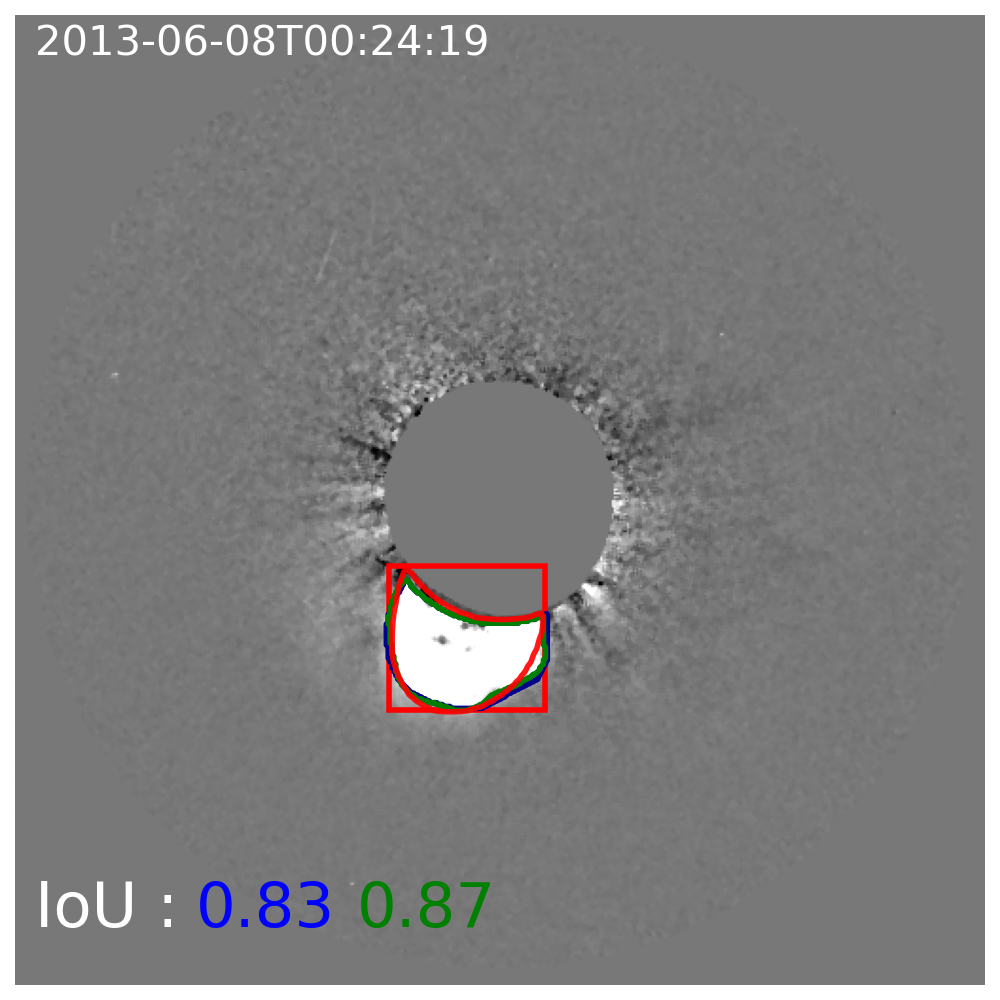

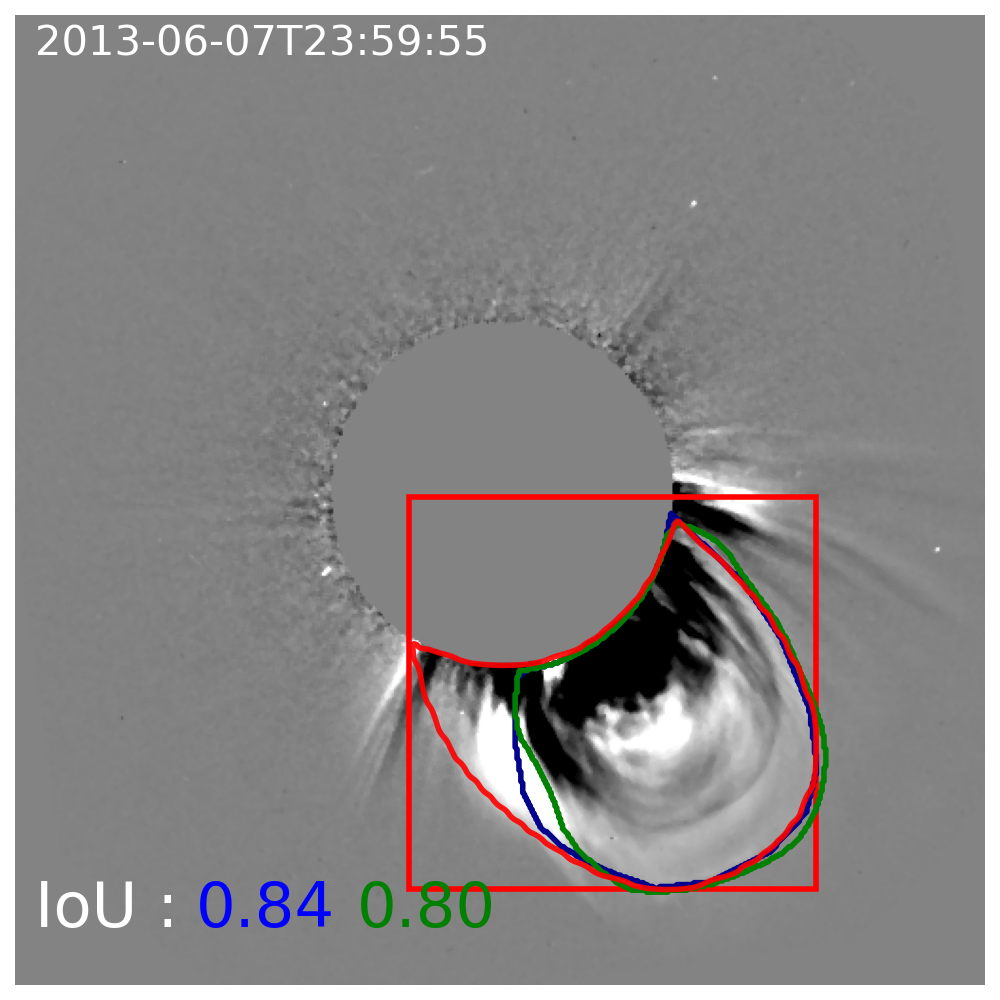

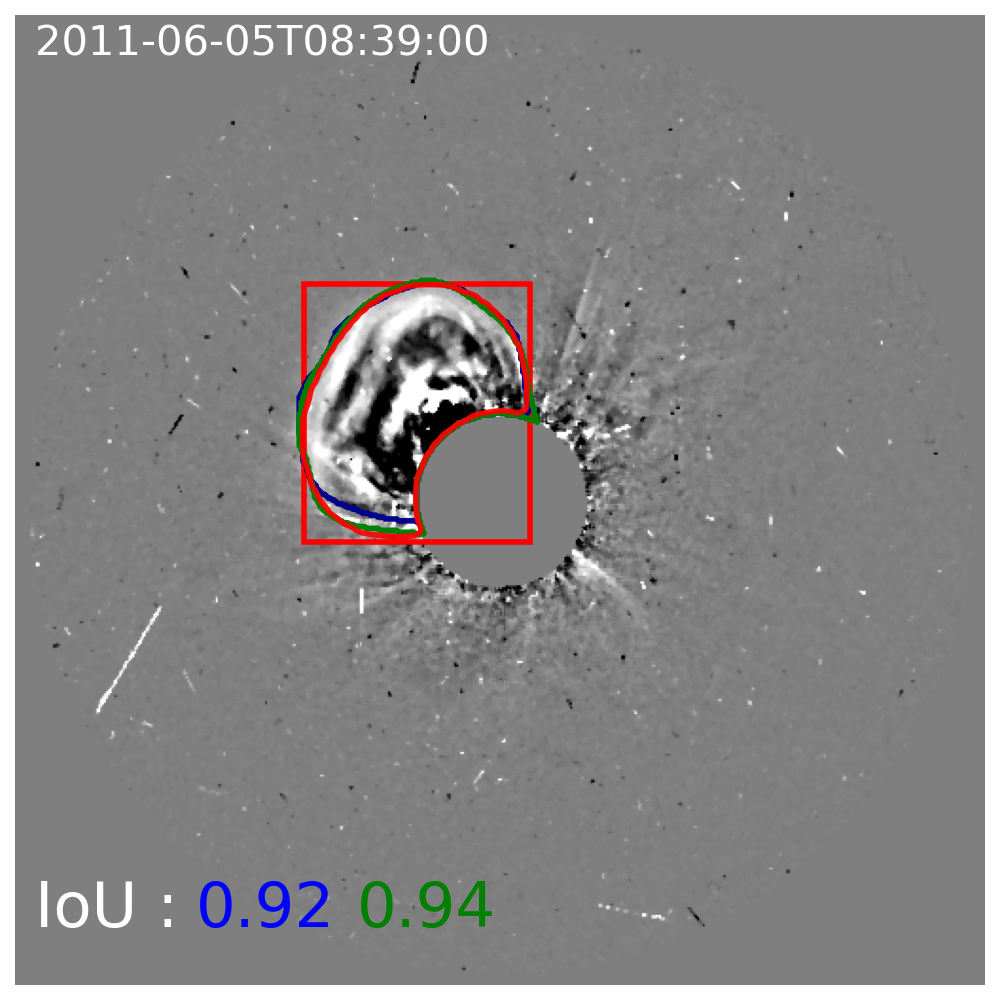

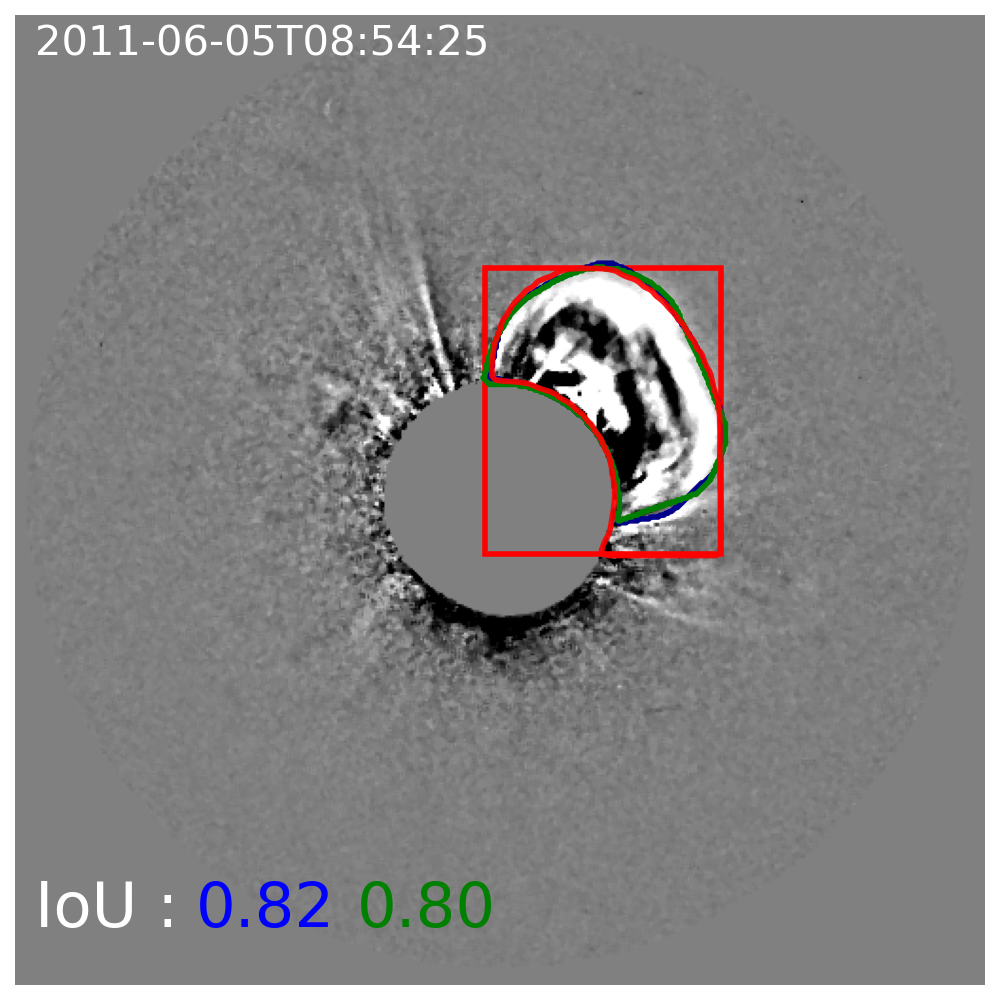

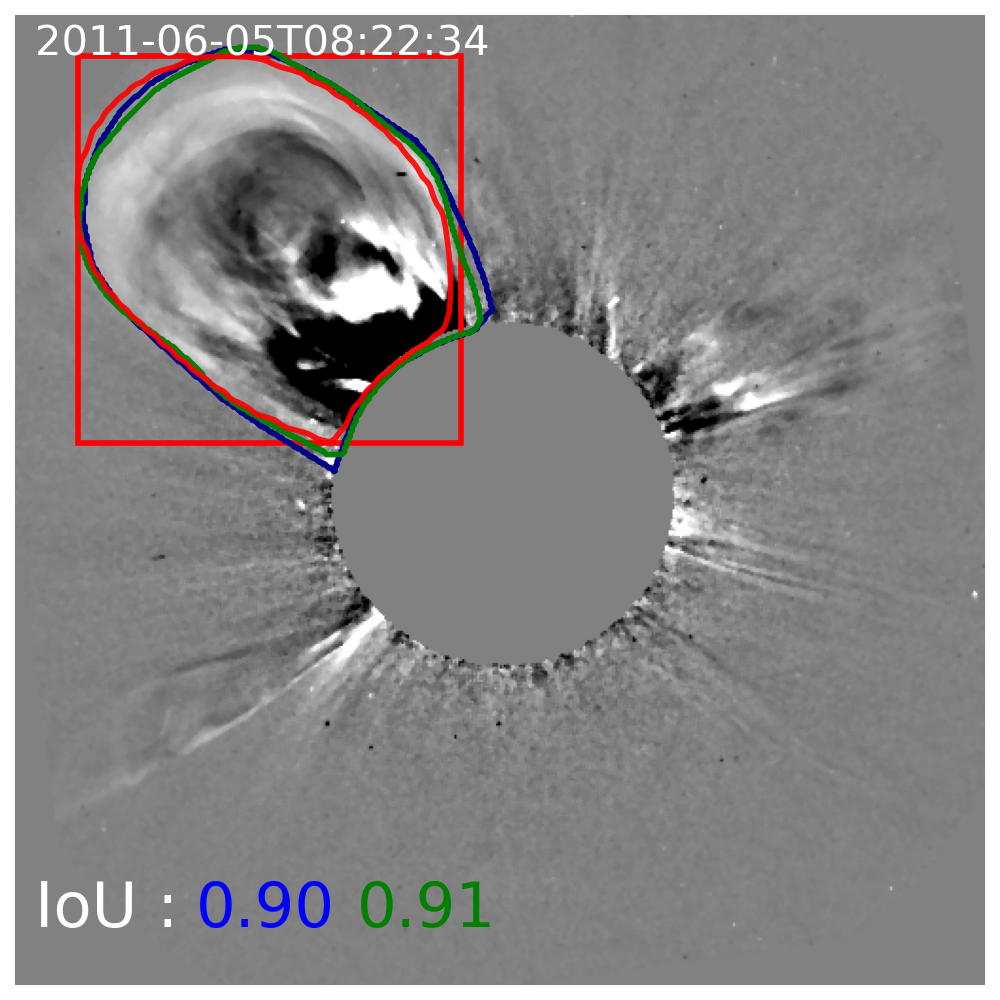

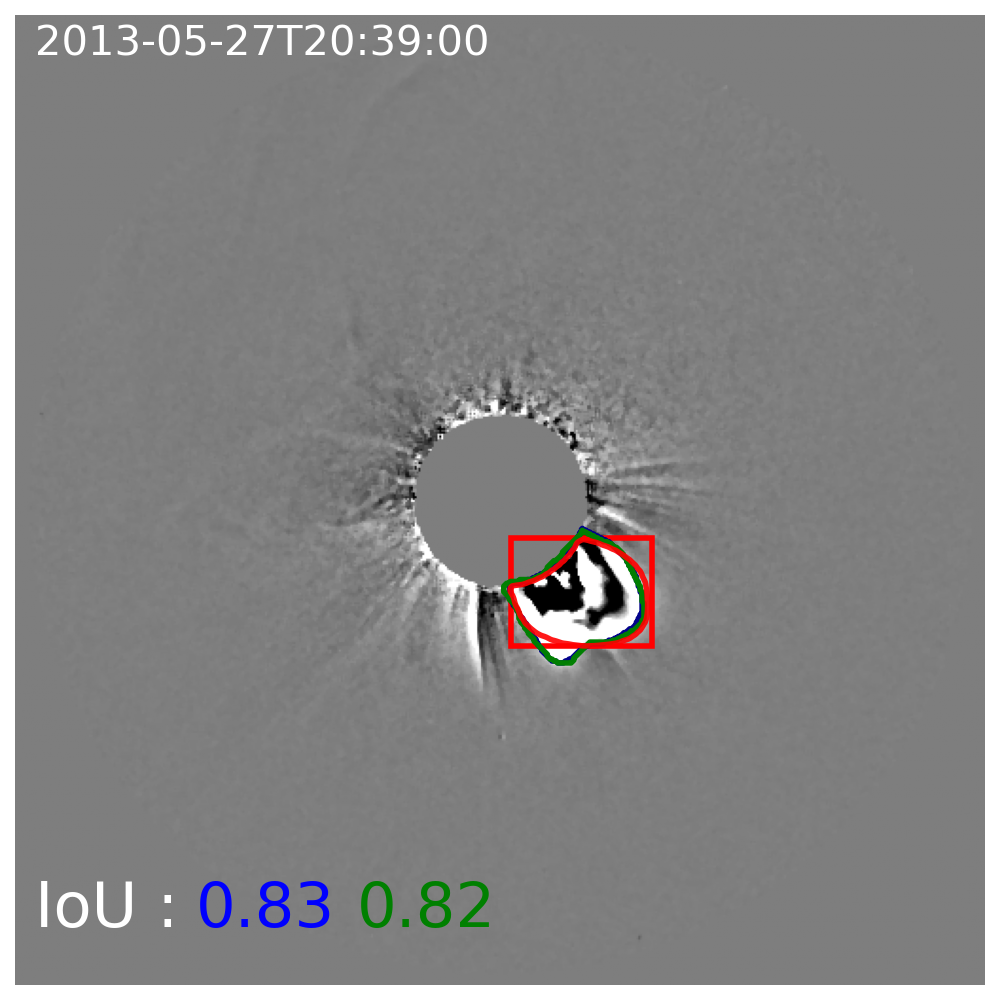

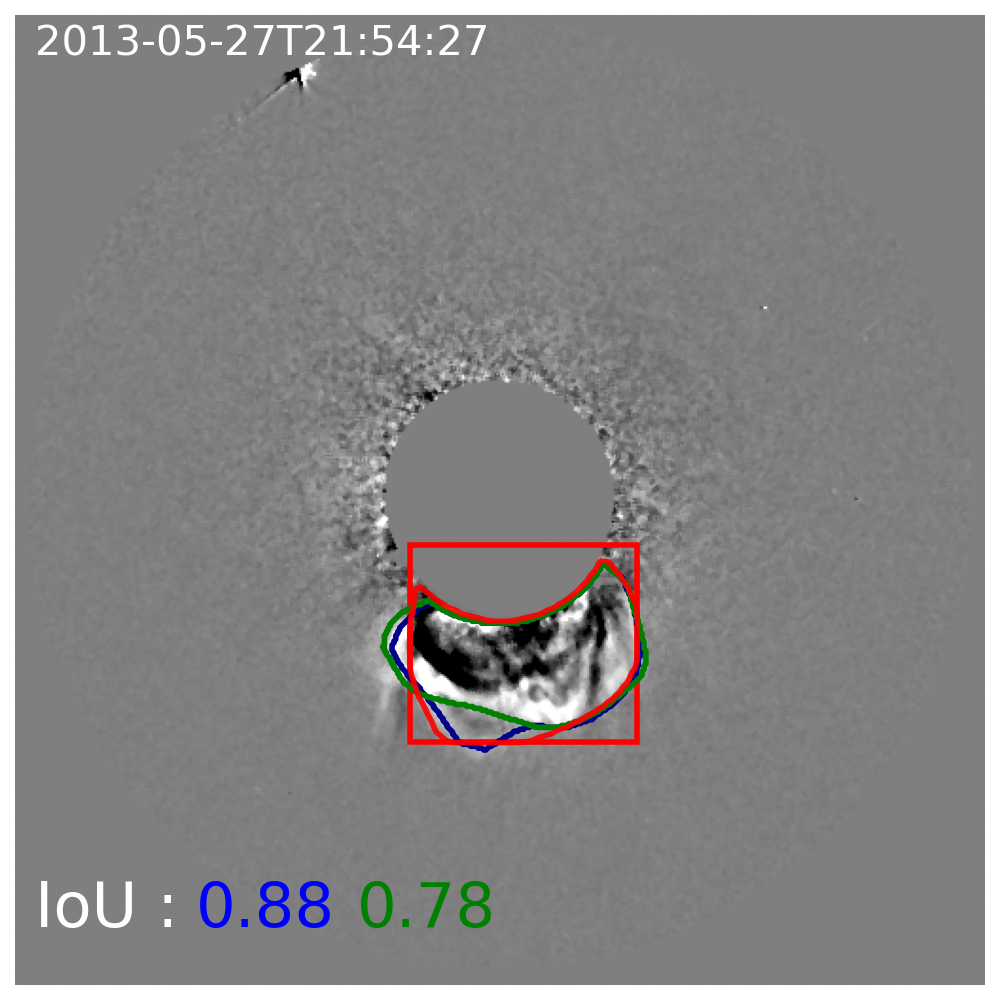

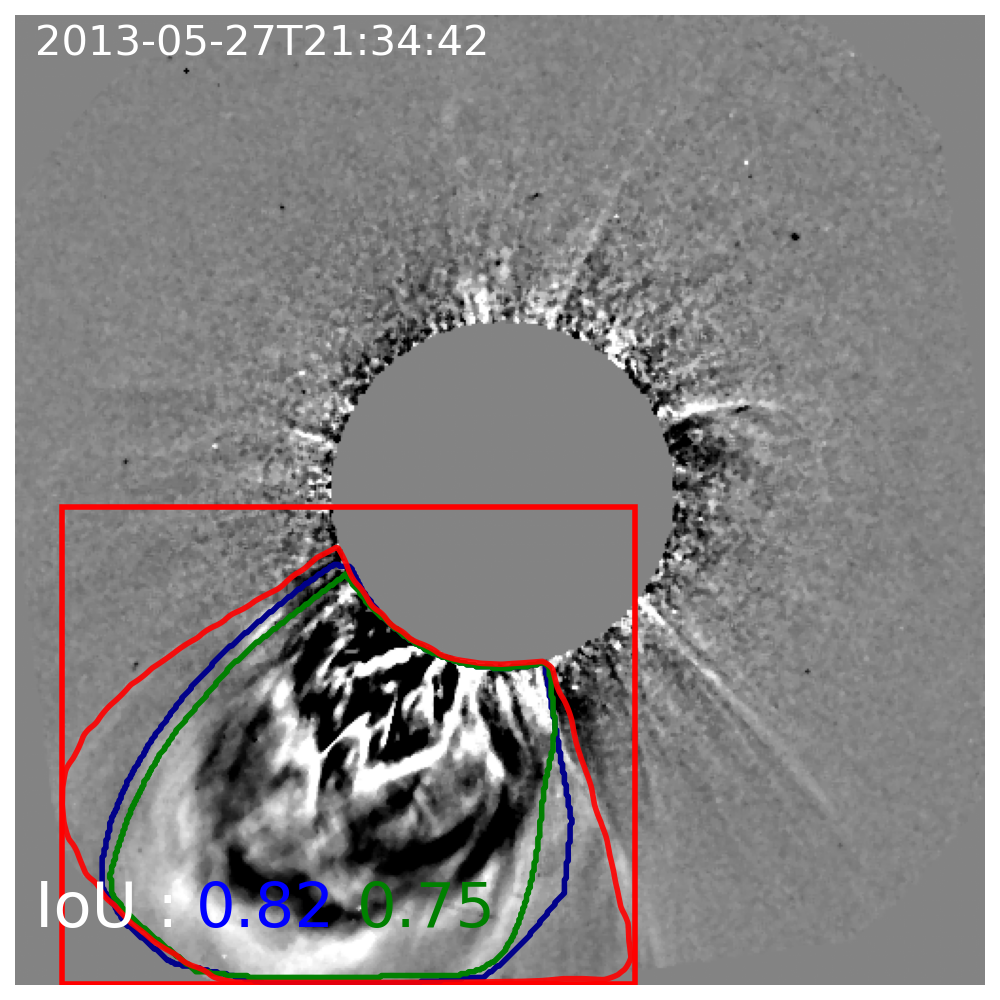

The model is evaluated on a validation benchmark of 115 real coronagraph images, with human masks drawn independently by two expert operators. The overall median IoU of the Mask R-CNN masks relative to the manual traces is 0.77, while operator-to-operator agreement is IoU = 0.90, highlighting the persistent subjectivity of even "ground truth" human annotations.

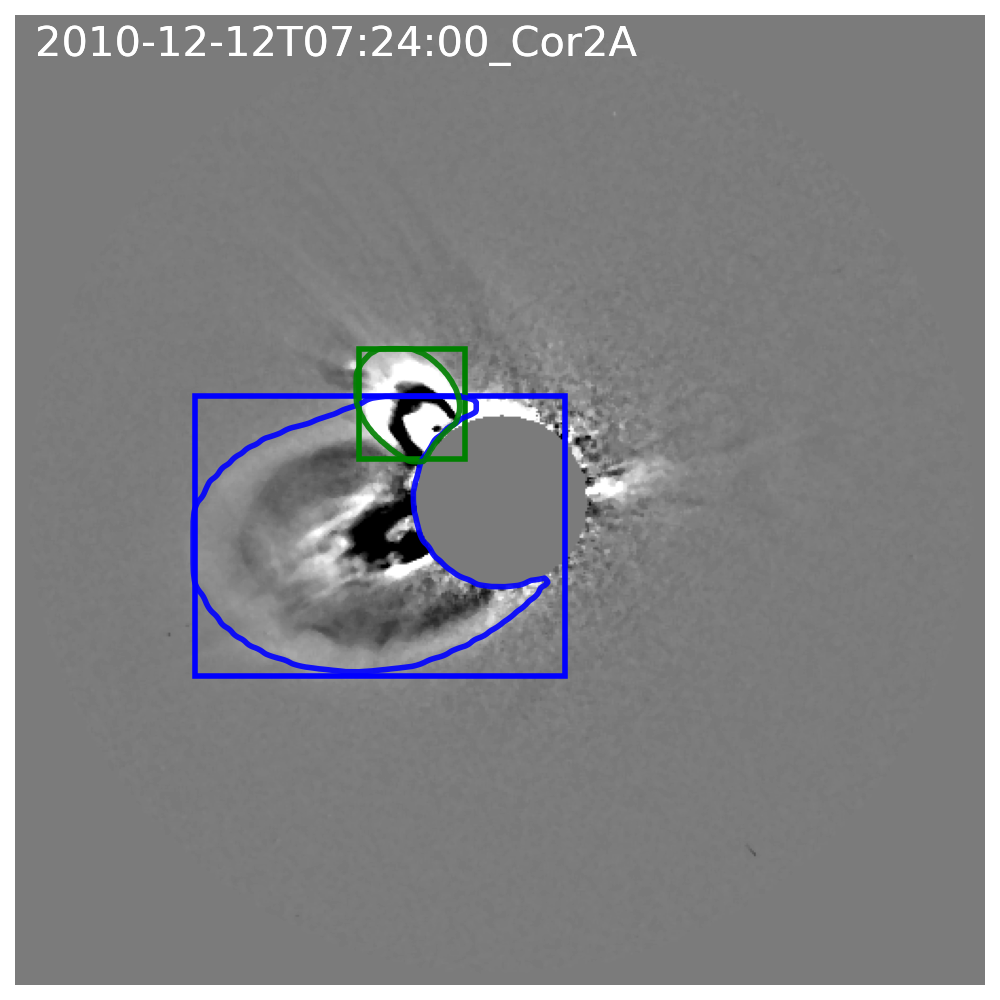

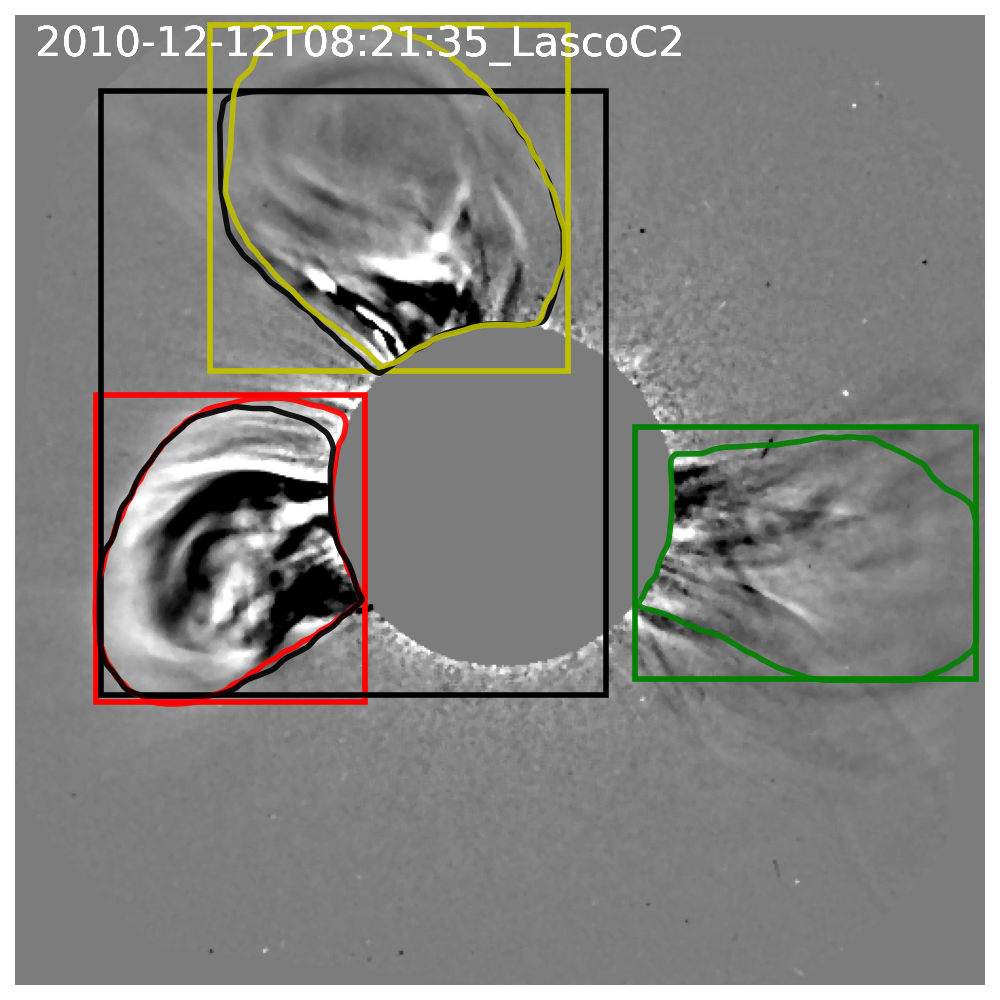

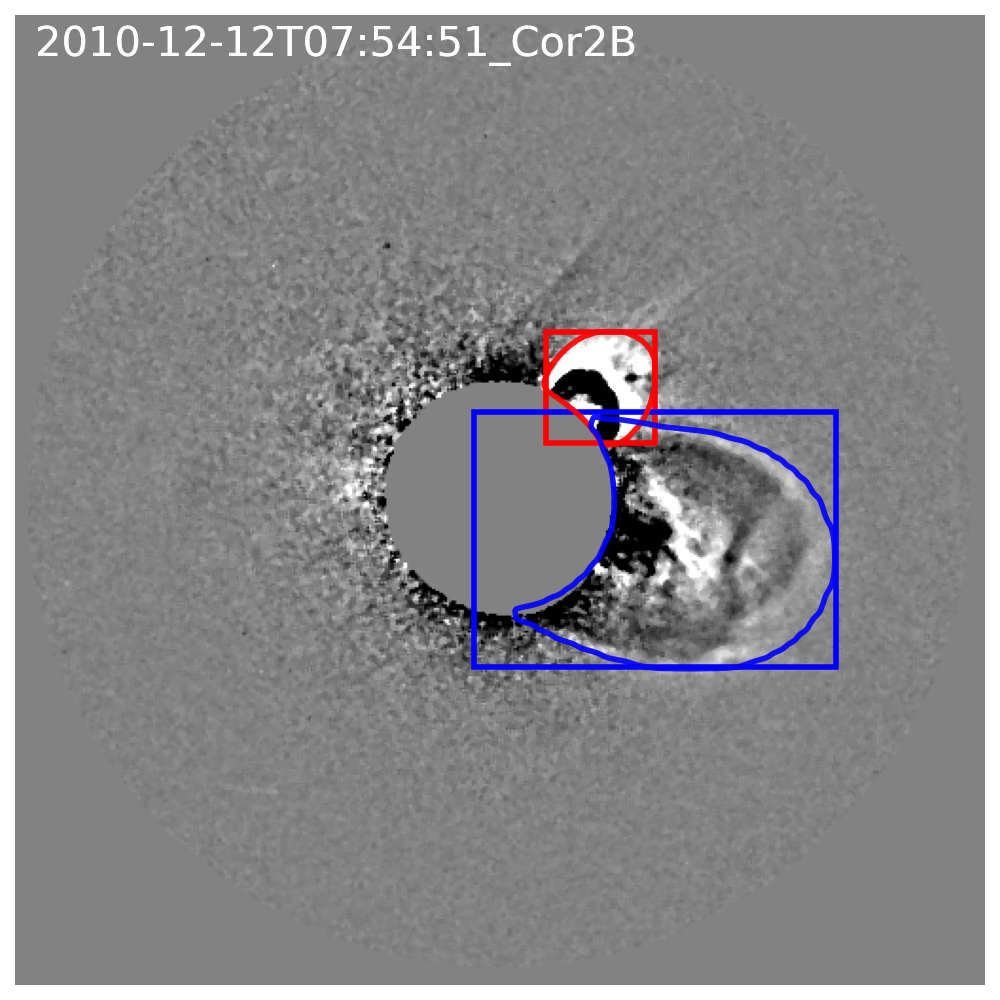

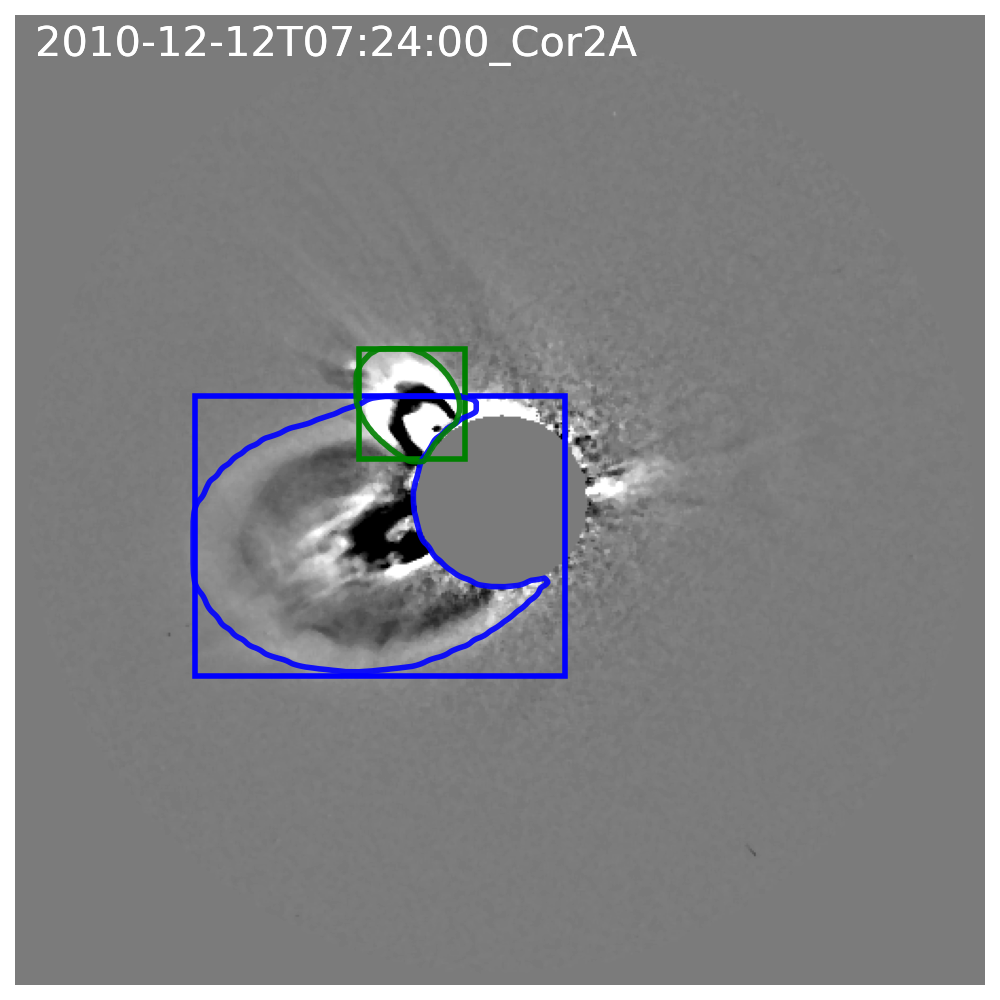

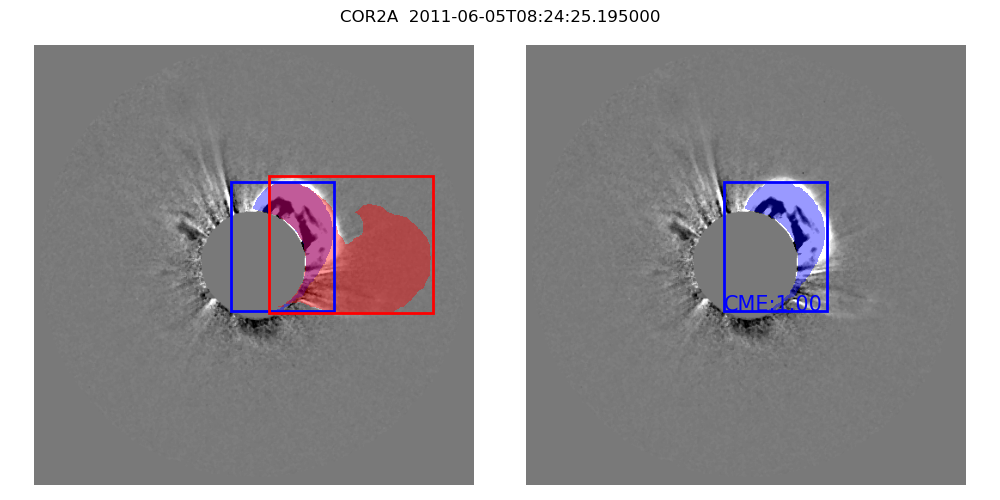

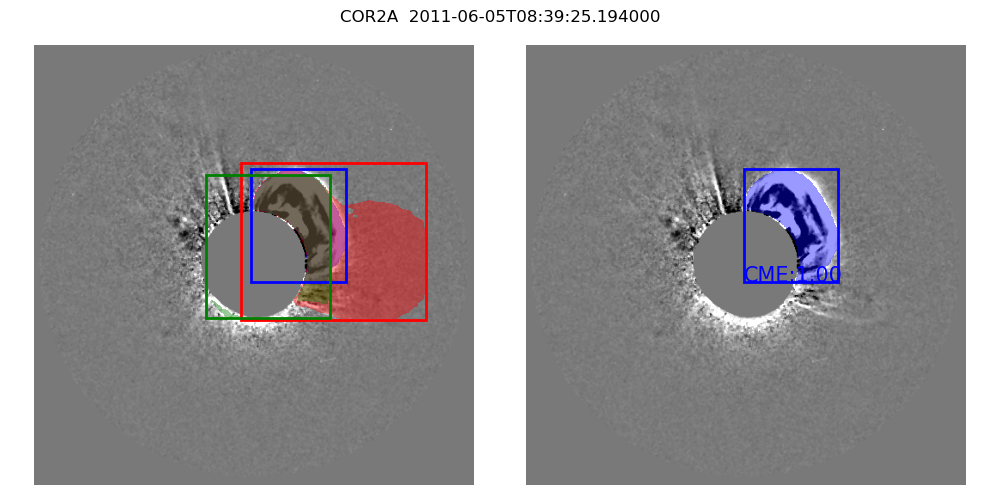

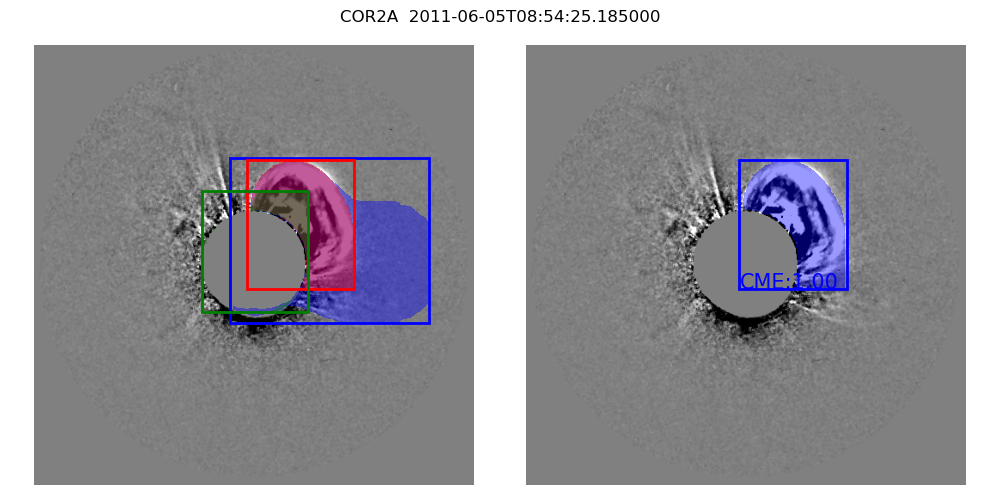

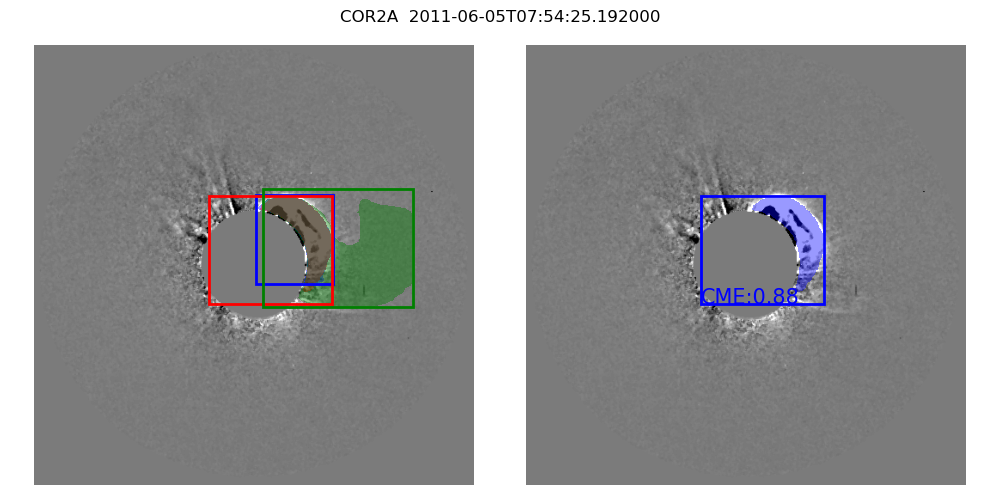

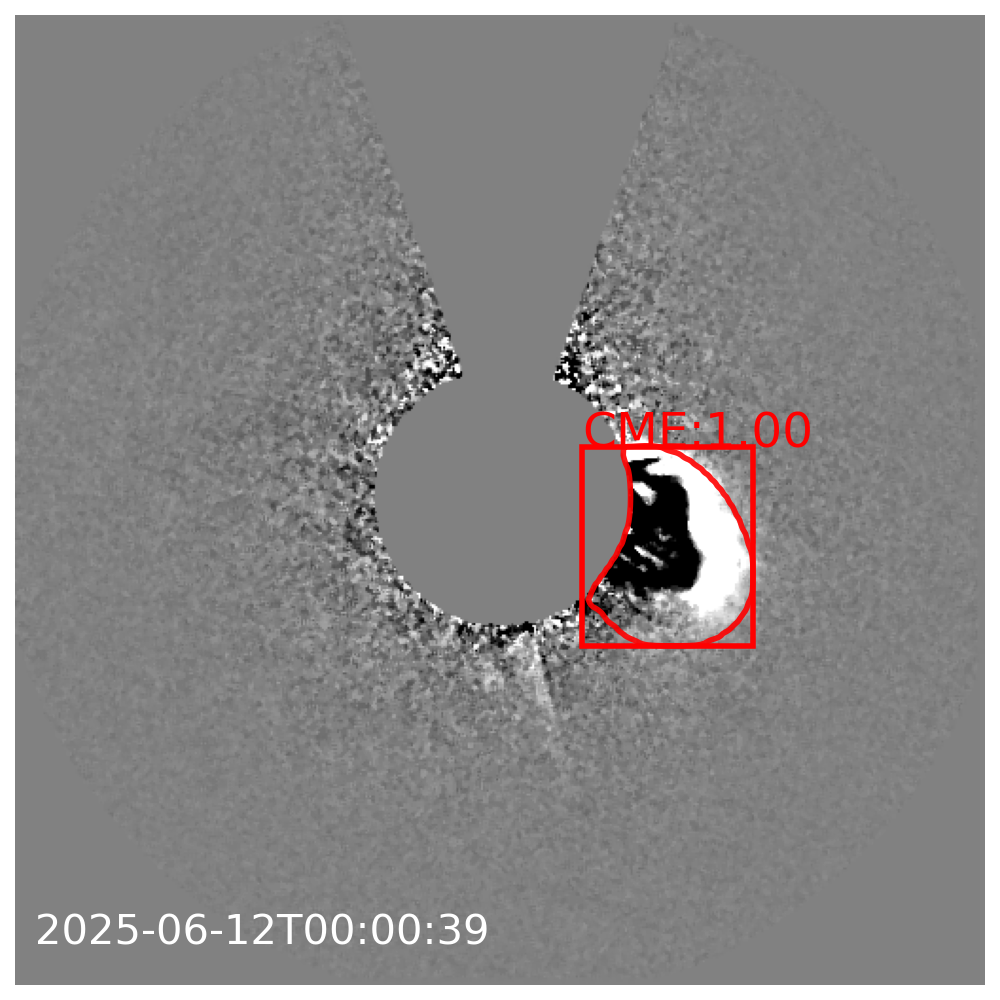

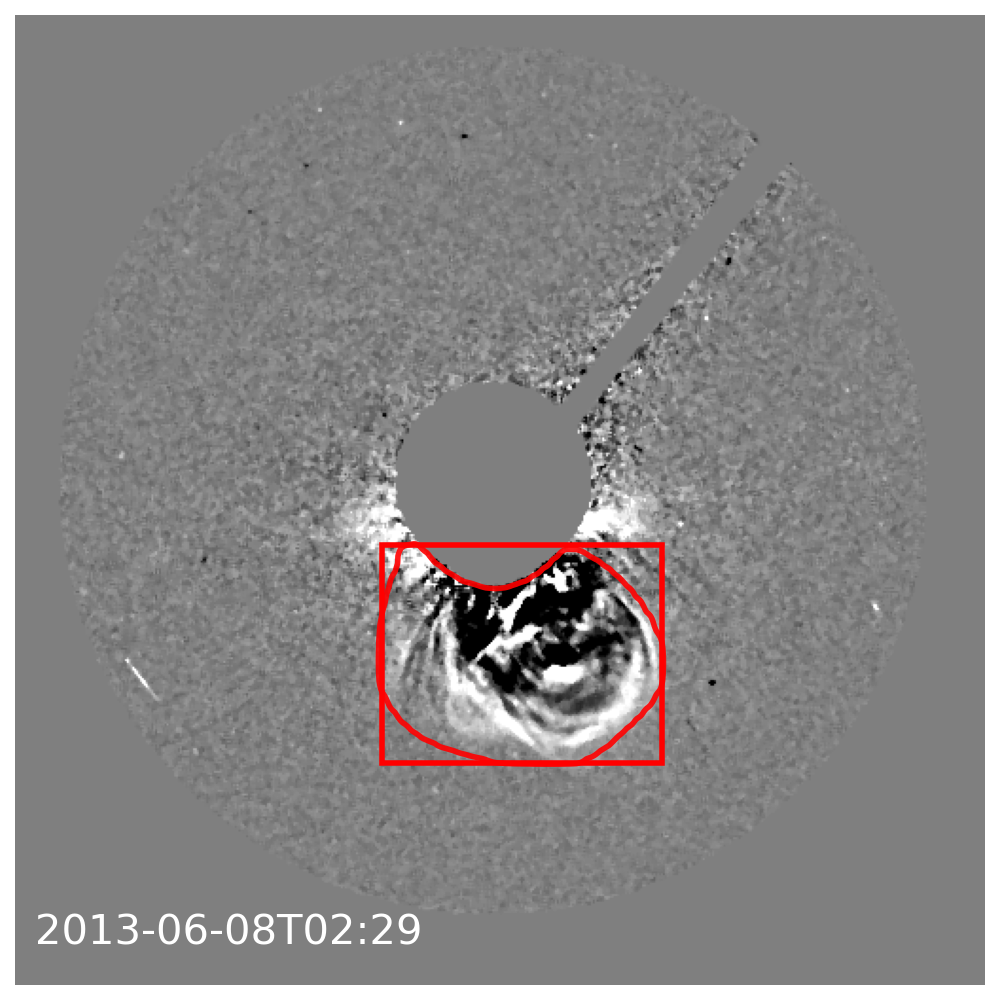

Instance segmentation enables concurrent identification of overlapping CMEs and robust rejection of confounding radially moving background features, a failure mode for classical image processing and prior ML-segmentation pipelines.

Figure 10: Instance segmentation in real multi-event images; distinct CMEs are separated and labeled with minimal overlap.

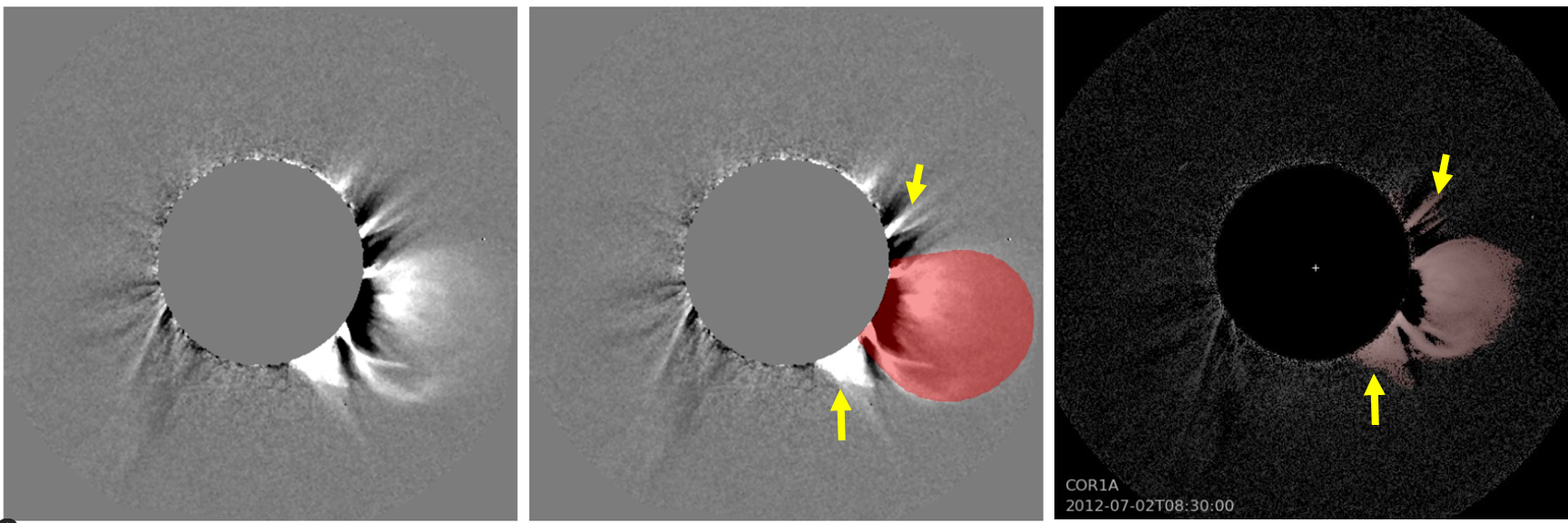

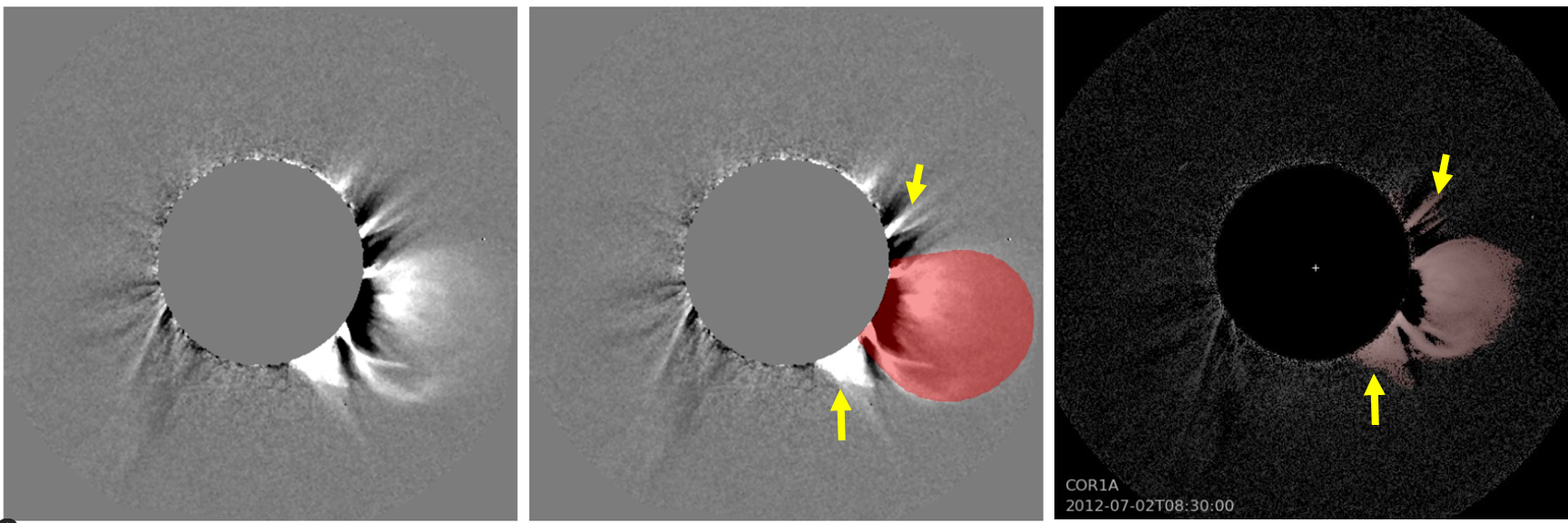

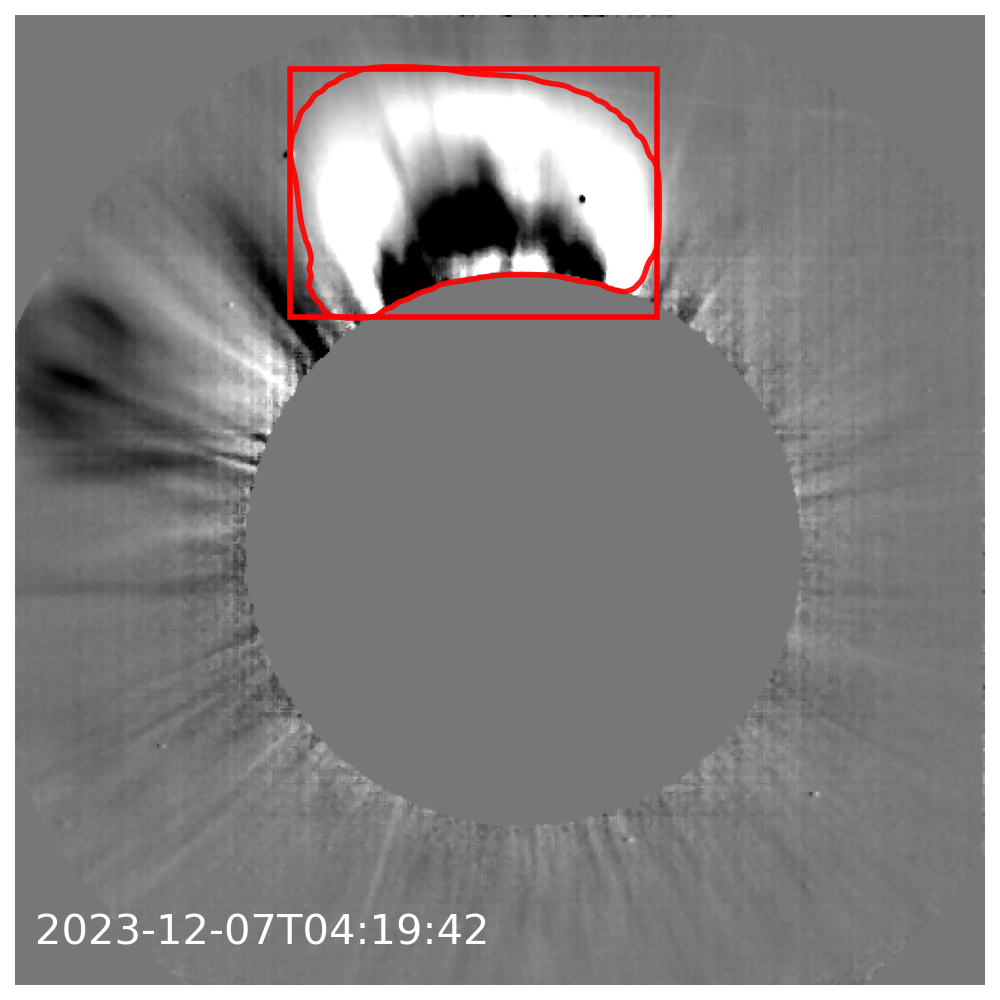

Comparison with recent ML segmentation catalogs demonstrates that the synthetic-model-driven approach yields smoother, topologically connected masks, which, while less detailed for highly irregular CMEs, are more consistent for identifying the global CME envelope without overattributing dispersed features.

Figure 11: Side-by-side segmentation for a complex event, showing that the synthetic-model approach yields coherent envelopes and less background contamination compared to direct annotation-based methods.

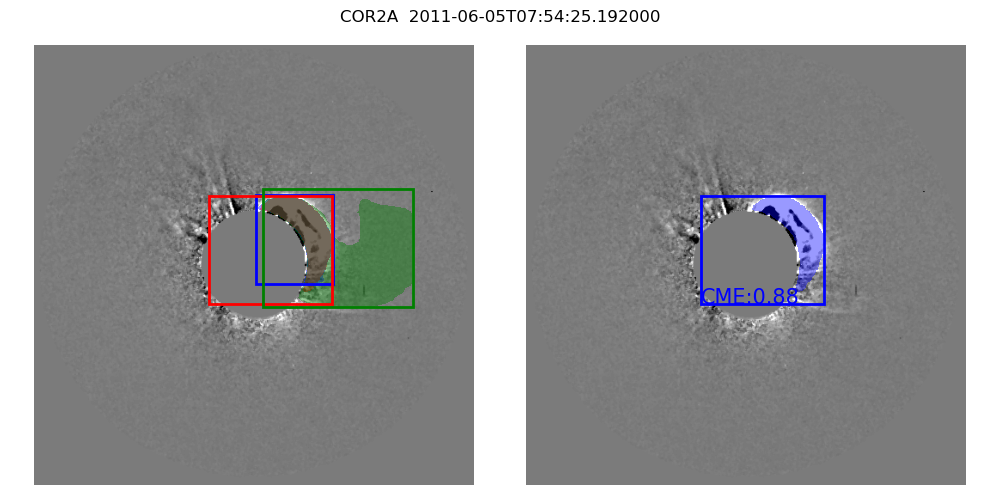

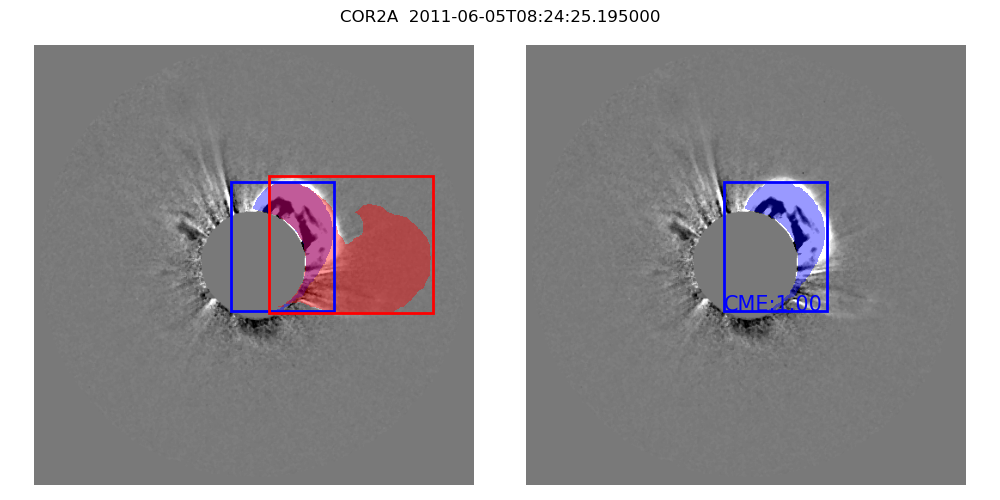

To minimize false positives within time series, a tracking/selection procedure uses temporal consistency of mask morphology (apex, central position angle, angular width, IoU continuity) as a filter, without altering the inferred mask shape.

Figure 12: Temporal tracking applied to mask instances ensures mask consistency for a CME time series.
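One way such a temporal-consistency filter could work is sketched below. The selection rule, the function name `select_track`, and every tolerance value are hypothetical; the source only states that apex, central position angle, angular width, and IoU continuity are used, and that the masks themselves are left unchanged.

```python
import numpy as np

def angle_diff_deg(a, b):
    """Smallest absolute difference between two angles in degrees."""
    return abs((a - b + 180.0) % 360.0 - 180.0)

def mask_iou(a, b):
    union = np.logical_or(a, b).sum()
    return np.logical_and(a, b).sum() / union if union else 1.0

def select_track(frames, max_cpa_jump=20.0, max_aw_jump=30.0, min_iou=0.3):
    """Pick at most one candidate per frame that is consistent with the last pick.

    frames: time-ordered list; each item is a list of candidate dicts with
            keys "cpa" (deg), "aw" (deg), "apex" (any length unit), "mask".
    Returns a list with the chosen candidate (or None) for each frame.
    Candidates are only selected or rejected; their masks are never modified.
    """
    track, prev = [], None
    for candidates in frames:
        if prev is None:
            # Assumption: seed the track with the largest mask.
            chosen = max(candidates, key=lambda c: c["mask"].sum(), default=None)
        else:
            consistent = [
                c for c in candidates
                if angle_diff_deg(c["cpa"], prev["cpa"]) <= max_cpa_jump
                and abs(c["aw"] - prev["aw"]) <= max_aw_jump
                and c["apex"] >= prev["apex"]  # a CME front should not retreat
                and mask_iou(c["mask"], prev["mask"]) >= min_iou
            ]
            chosen = max(consistent,
                         key=lambda c: mask_iou(c["mask"], prev["mask"]),
                         default=None)
        track.append(chosen)
        if chosen is not None:
            prev = chosen
    return track
```

Frames with no consistent candidate yield `None`, which lets a downstream catalog treat them as gaps rather than accept a likely false positive.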

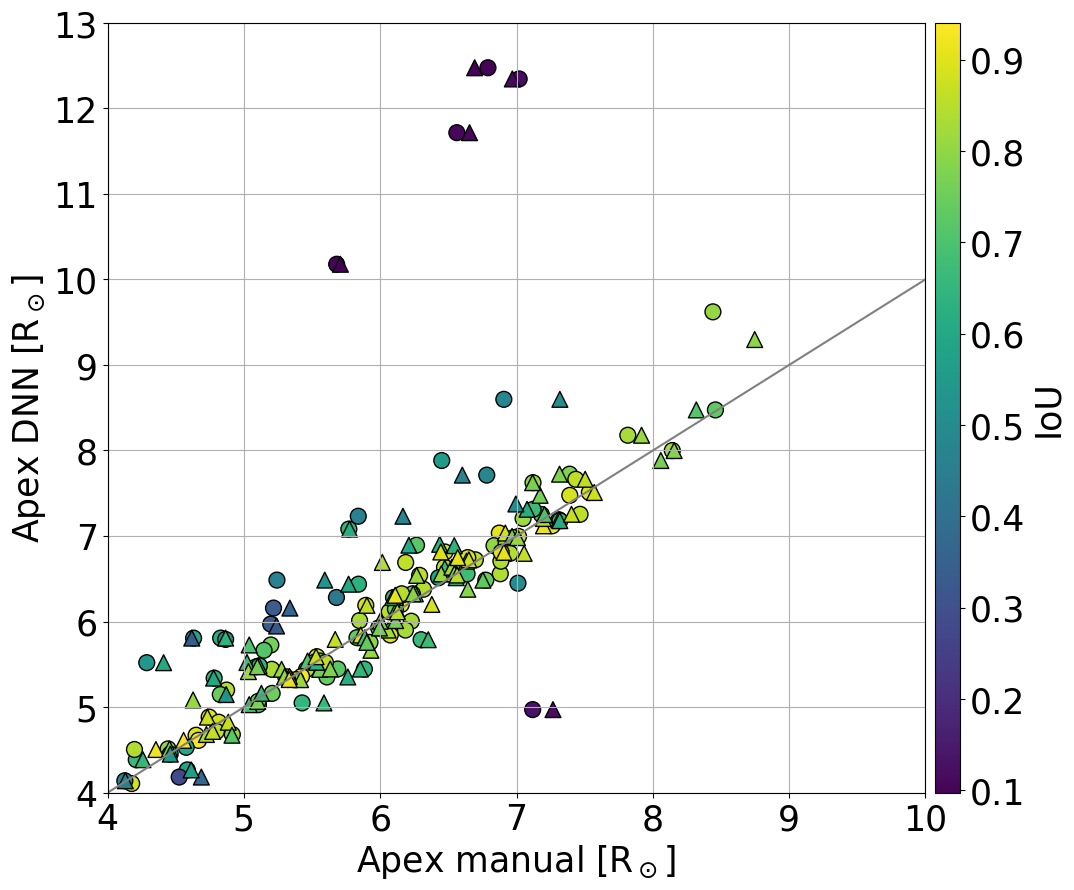

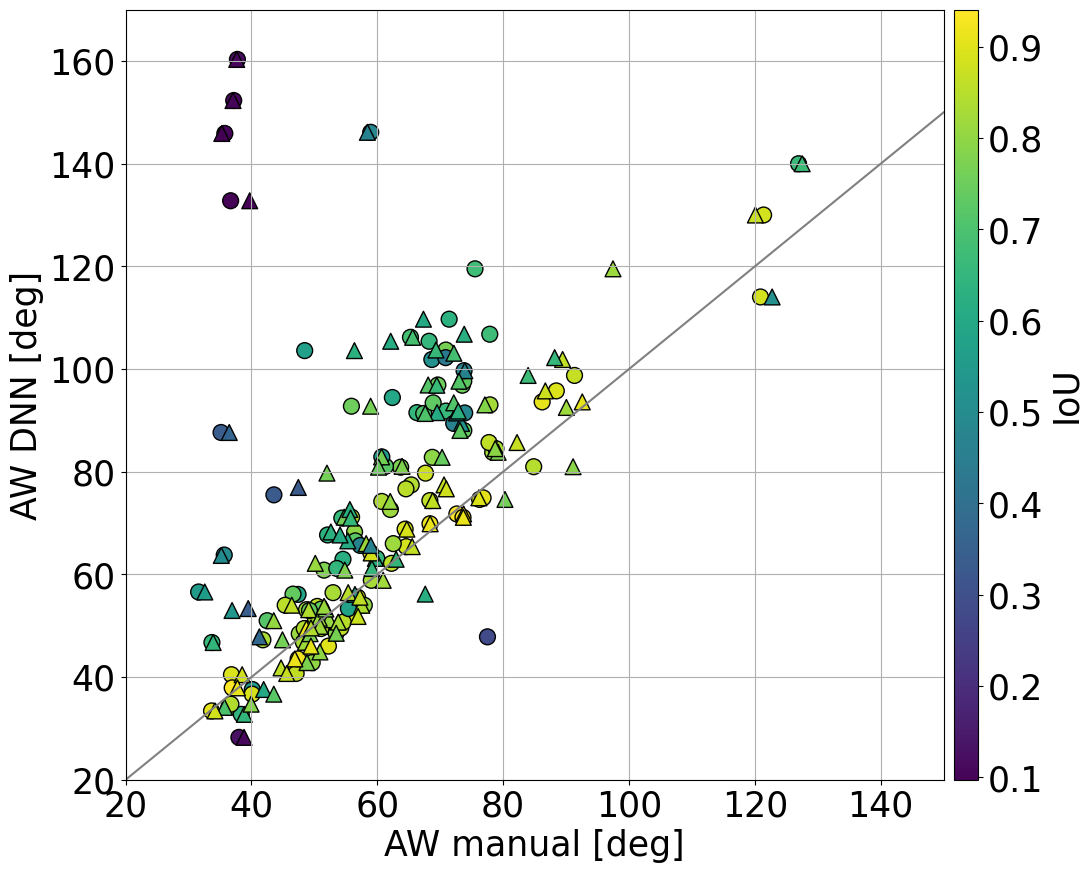

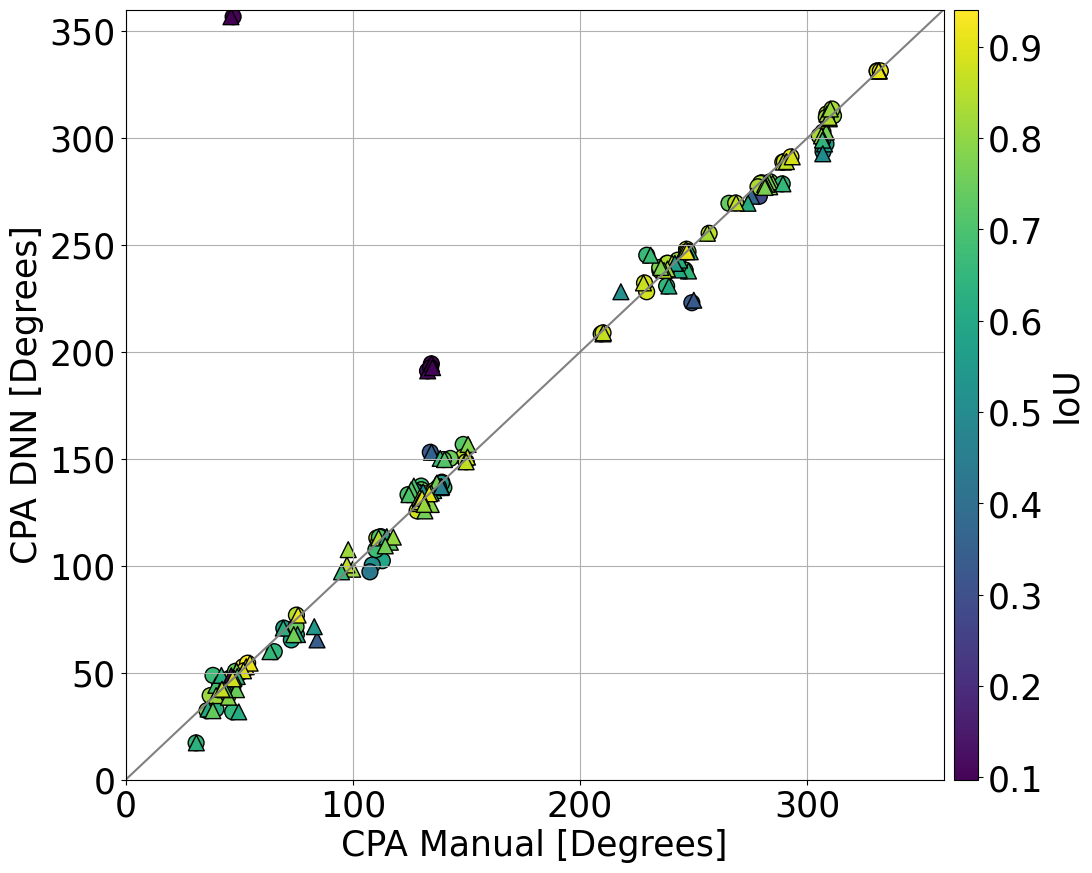

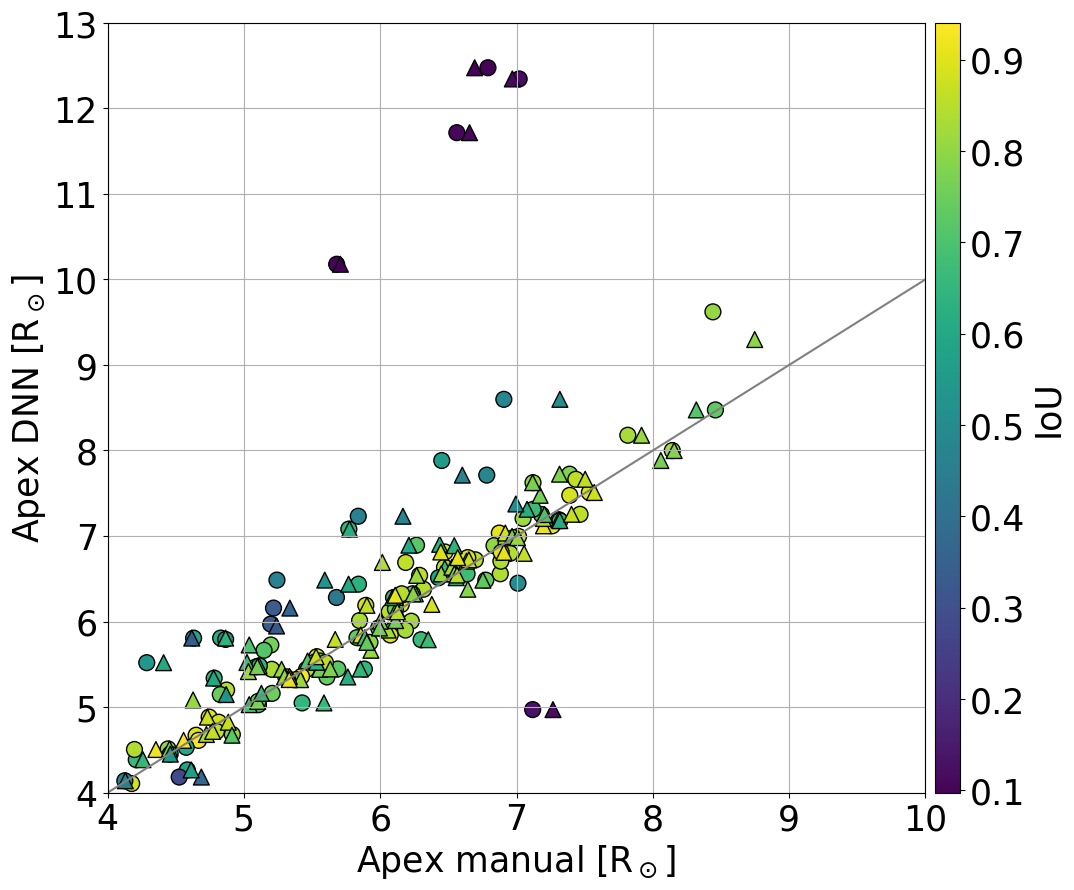

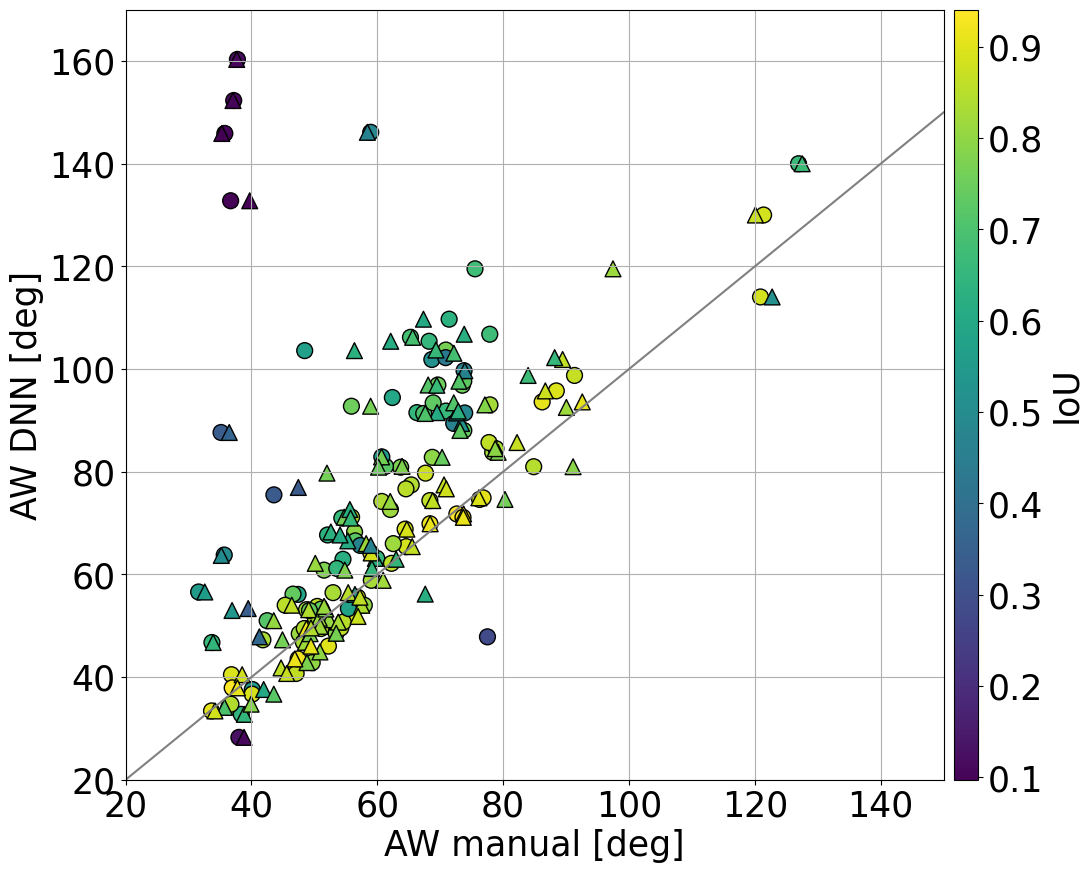

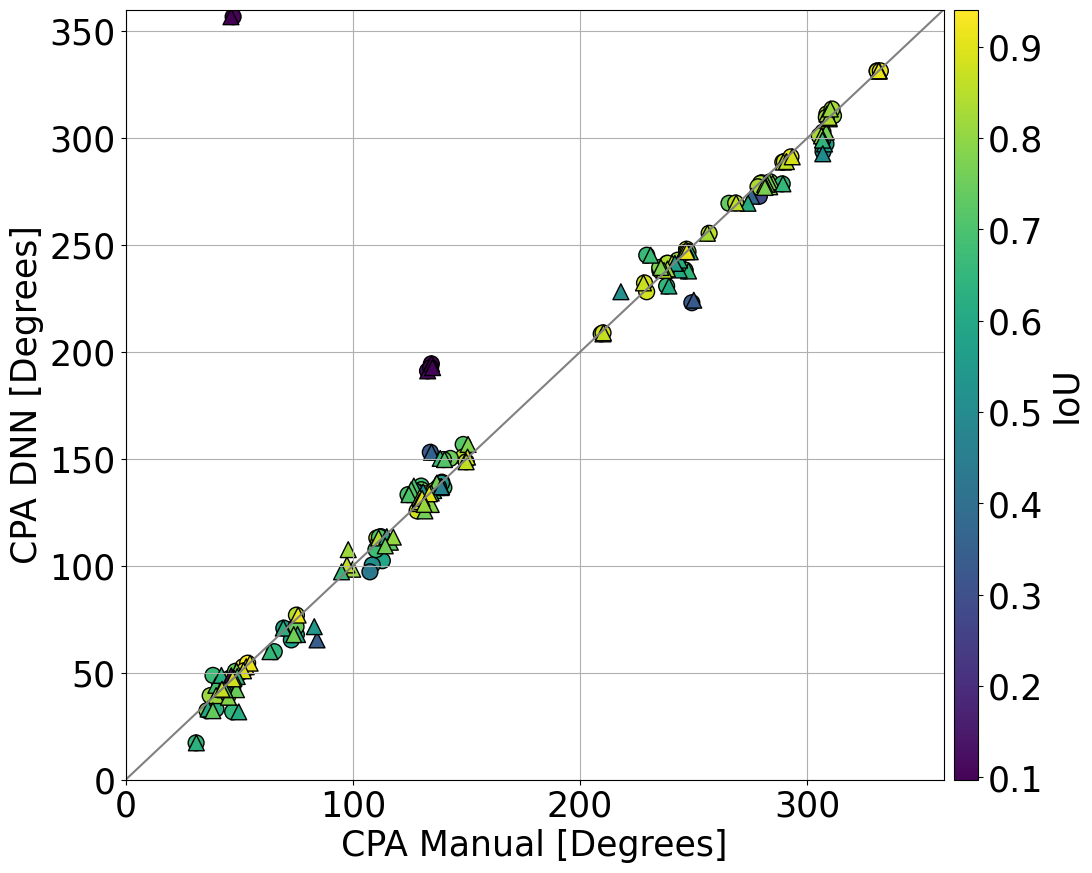

The mask area and shape properties maintain high correlation with manual estimates for apex and central position angle (r>0.9), with somewhat reduced agreement in angular width due to differences in faint envelope inclusion.

Figure 13: Histogram of manual-to-automatic mask IoU for the real validation set.

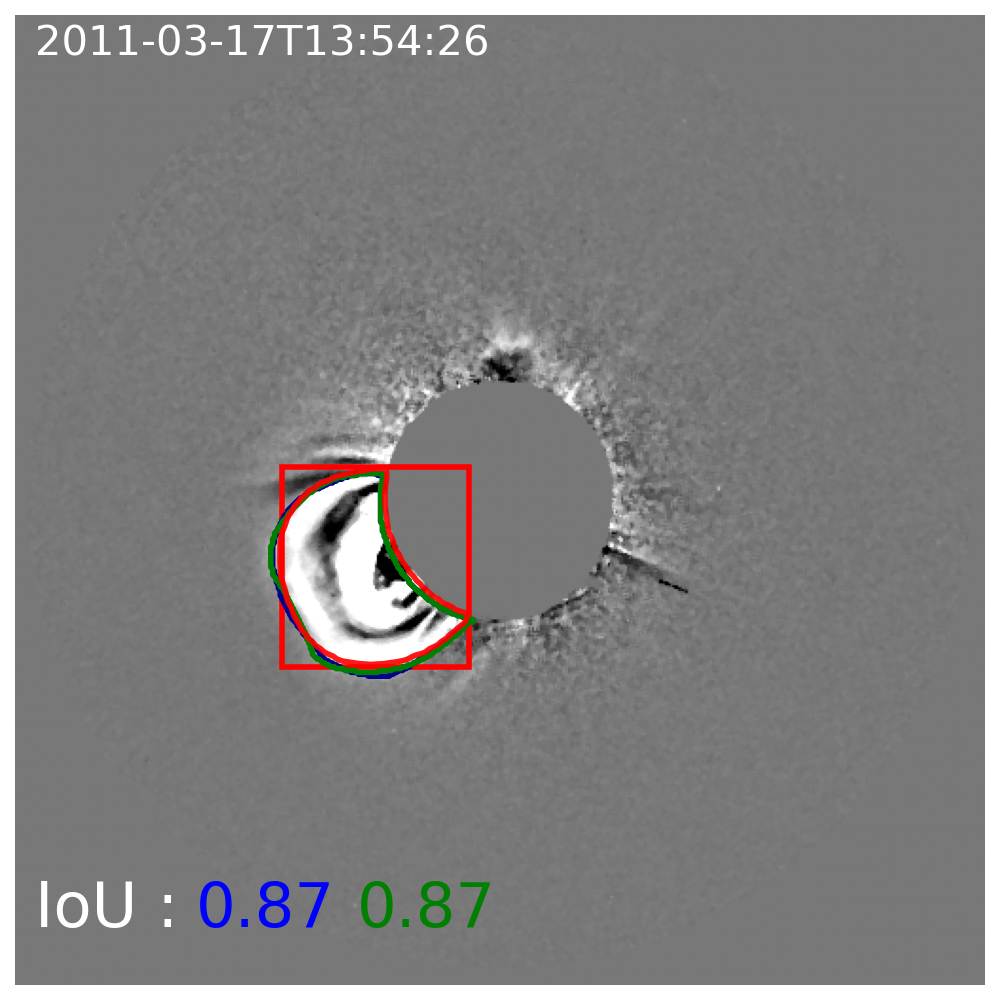

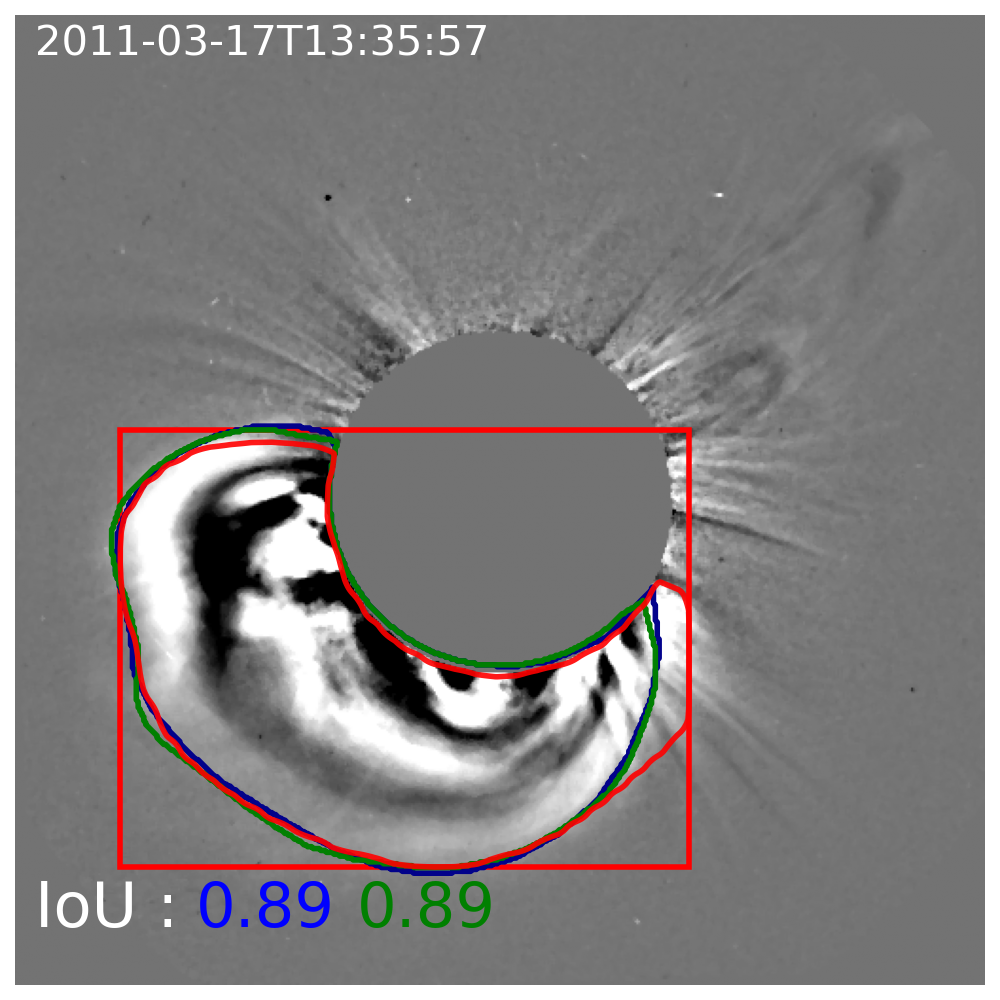

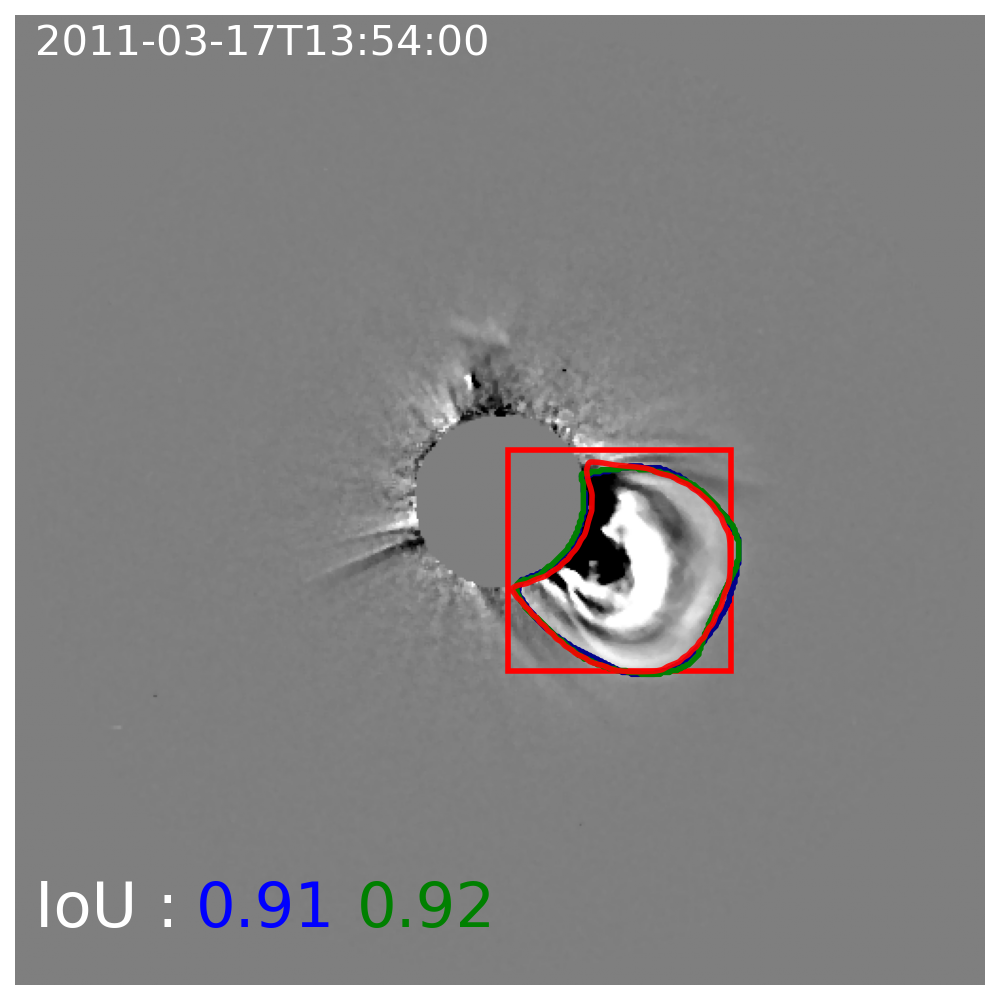

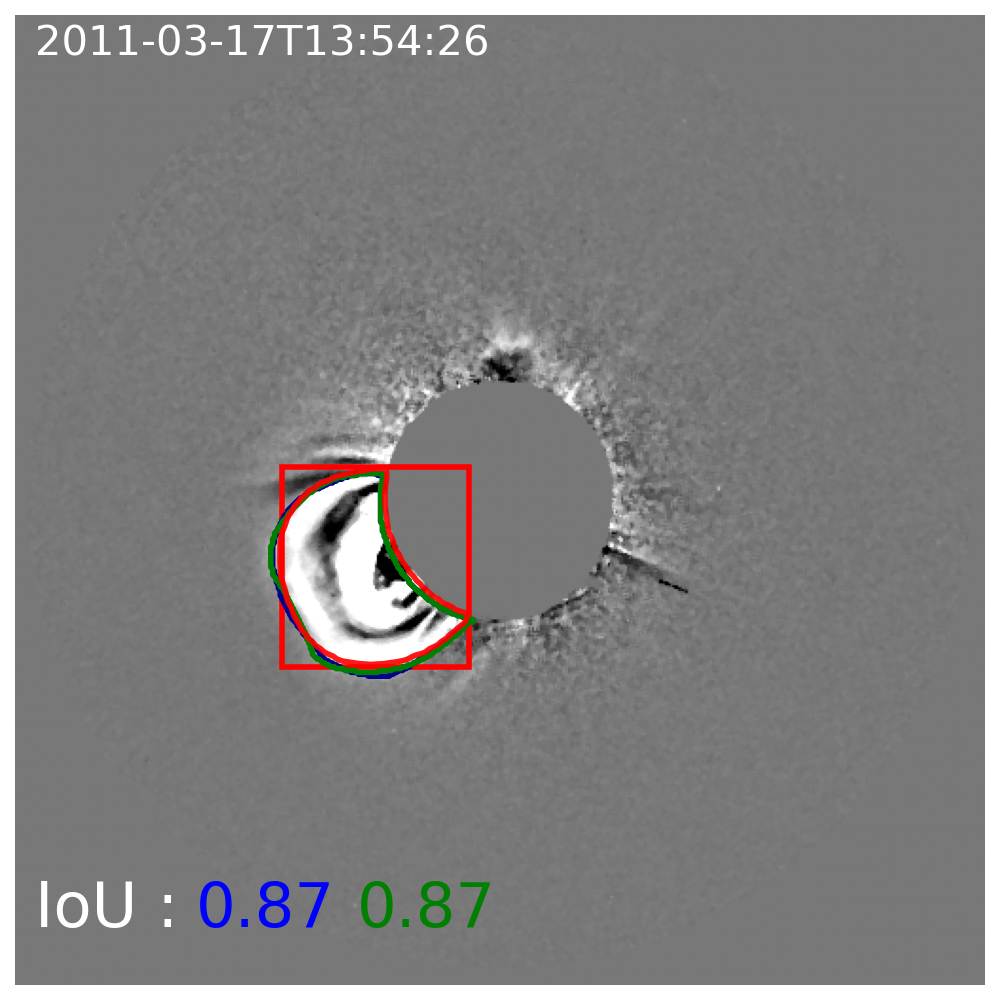

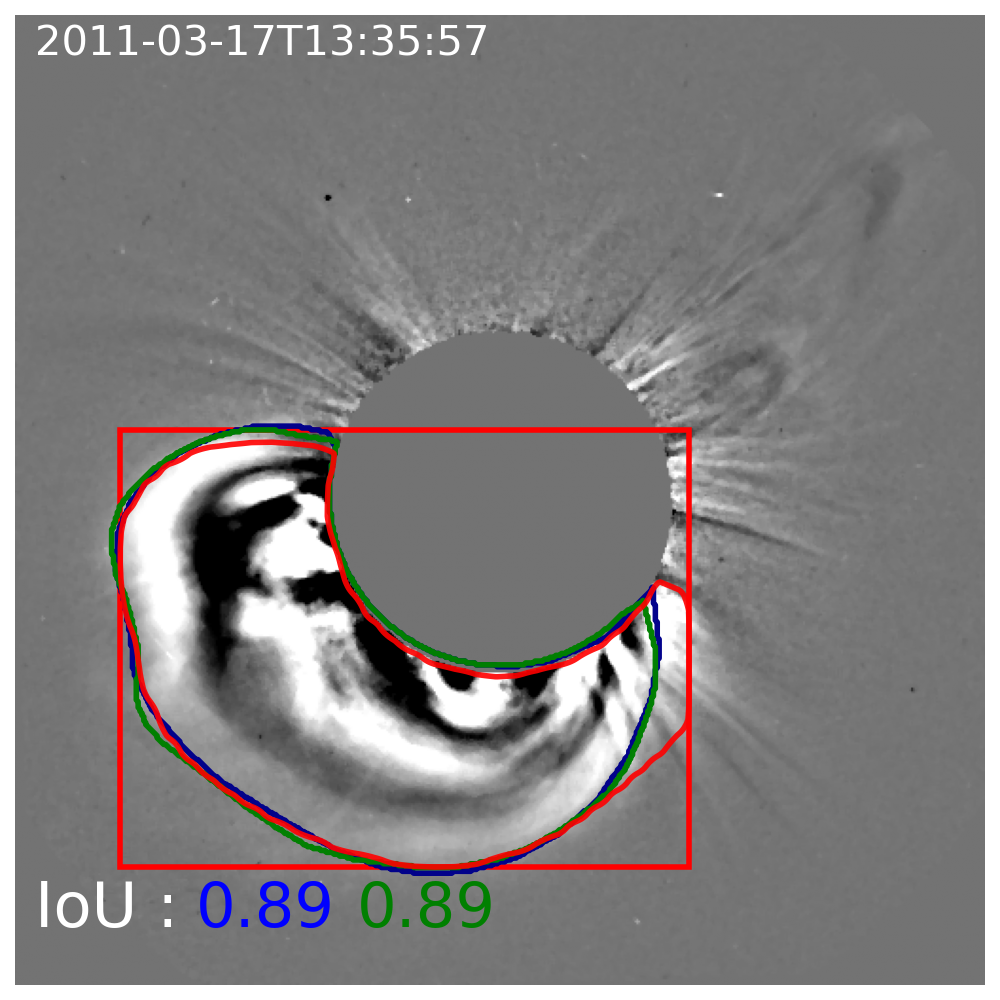

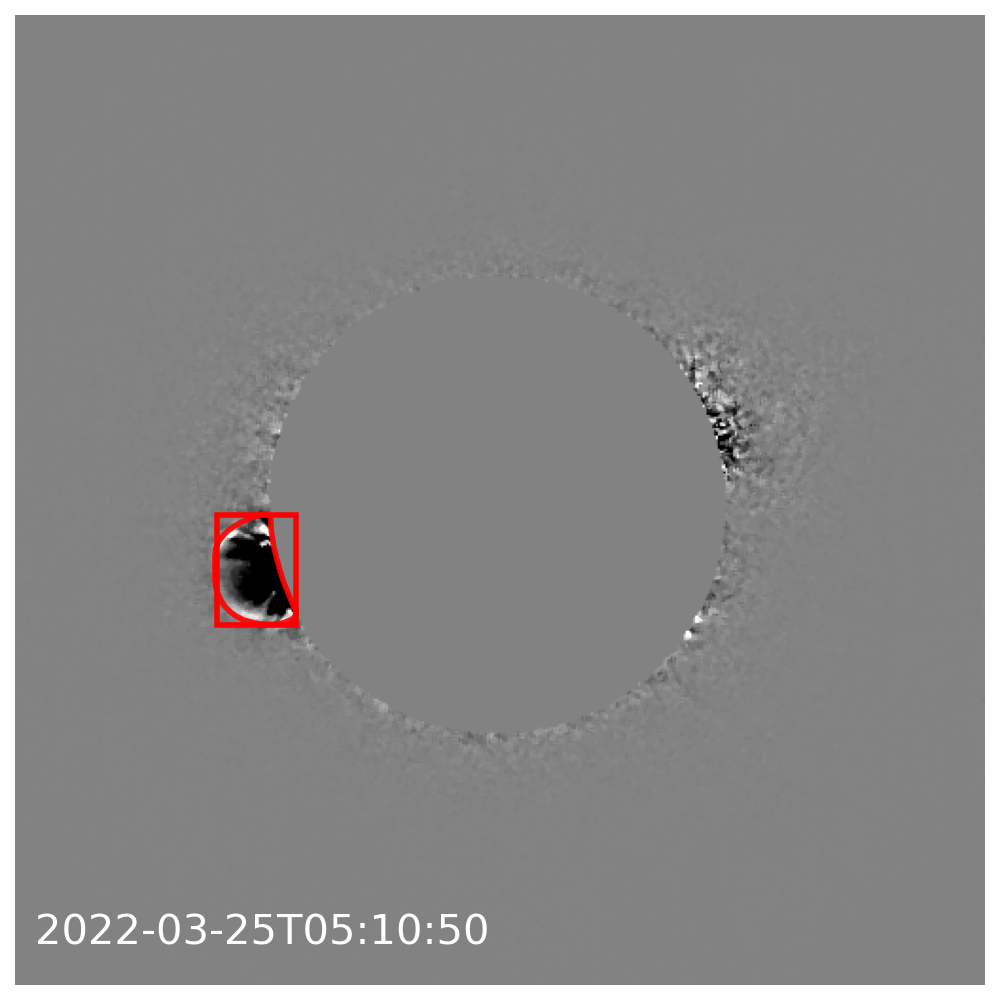

Figure 14: Typical successful segmentation cases displaying strong agreement (IoU ≥0.75) with both manual annotators.

Figure 15 presents scatter plots of apex, angular width (AW), and central position angle (CPA), color-coded by IoU.

Figure 15: Morphological property comparison between Mask R-CNN and manual masks; high correlation in key shape descriptors.
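The three shape descriptors compared in Figure 15 can be derived from a binary mask roughly as follows. This is a sketch under stated assumptions, not the paper's procedure: solar north is taken to be up in the image, position angle is measured counterclockwise from north, the apex is the largest distance from the Sun center in pixels, and the function name `mask_properties` is hypothetical.

```python
import numpy as np

def mask_properties(mask, center_xy):
    """Return (apex, cpa_deg, aw_deg) of a boolean mask.

    apex: maximum distance of any mask pixel from the Sun center, in pixels
    cpa:  central position angle, degrees counterclockwise from north (up)
    aw:   angular width, degrees
    """
    ys, xs = np.nonzero(mask)
    if ys.size == 0:
        raise ValueError("mask is empty")
    cx, cy = center_xy
    dx = xs - cx
    dy = cy - ys  # image rows grow downward, so flip to make north positive
    apex = float(np.hypot(dx, dy).max())

    # Position angle of every mask pixel, sorted in [0, 360).
    pa = np.sort(np.degrees(np.arctan2(-dx, dy)) % 360.0)
    # The occupied arc is the complement of the largest empty angular gap,
    # which handles masks that straddle the 0/360 degree boundary.
    gaps = np.diff(np.append(pa, pa[0] + 360.0))
    i = int(np.argmax(gaps))
    aw = 360.0 - float(gaps[i])
    start = pa[(i + 1) % pa.size]
    cpa = float((start + aw / 2.0) % 360.0)
    return apex, cpa, aw
```

Converting the apex to solar radii would require the instrument plate scale, which is outside the scope of this sketch.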

Transfer to Out-of-Distribution Instruments

The model, though trained only on images from LASCO C2 and COR2-A/B, generalizes well to coronagraph data from other spacecraft with distinct FOV and imaging properties (Metis, CCOR-1/GOES-19, LASCO C3, FSI 17.4nm), requiring only minimal input preprocessing to handle variable occulter morphology.

Figure 16: Successful CME segmentation on images from instruments not included in the synthetic training pipeline.

Implications, Limitations, and Future Directions

The synthetic-data-driven instance segmentation paradigm for CMEs has significant practical advantages:

- Independence from labor-intensive and subjective manual segmentation.

- Control over training set size and coverage.

- Production of morphologically consistent output masks, aiding catalog-level studies and statistical analyses of CME properties.

However, CME masks produced by the model reflect the geometric constraints of the GCS prior: smooth, connected shells that may inadequately describe highly structured or fragmented events. This constraint helps bulk identification but limits fine-scale envelope recovery. Incorporating temporal or multi-viewpoint information (e.g., via 3D U-Nets or similar architectures) is suggested as a future enhancement that could improve handling of background dynamics and of faint or complex CMEs.

The approach is amenable to extension with more realistic CME and coronal models, such as MHD-based synthetic data or physics-informed boundary conditions, which would further bridge the realism gap between synthetic and observational data. Integration with tracking/association algorithms for event cataloging across multiple time steps and viewpoints is a key area for future development.

The model’s observed cross-instrument generalization also supports deployment in operational settings, including onboard applications where telemetry constraints or real-time event prioritization are paramount.

Conclusion

This work demonstrates that instance segmentation networks, when trained on a comprehensive, morphologically controlled synthetic dataset, achieve state-of-the-art performance in CME envelope detection and mask delineation, overcoming the limitations of both manual annotation and prior ML/image-processing pipelines. The resultant system provides robust, scalable, and generalizable CME segmentation, and lays the groundwork for physically-informed, fully automated space weather monitoring systems.

Future research should focus on advancing physical realism in synthetic training data, optimizing architectures for spatio-temporal correlation, and expanding transferability to new instruments and data modalities.