- The paper introduces a joint optimization framework that decomposes DNNs into dynamic submodels for efficient caching and request routing in mobile edge computing.

- It proposes the CoCaR algorithm, which combines an LP-based problem transformation with random rounding to obtain a provable approximation guarantee and higher inference precision.

- The CoCaR-OL variant adapts caching in real time, achieving a 46% precision improvement and demonstrating better scalability under fluctuating user demand.

Joint Optimization of DNN Model Caching and Request Routing in Mobile Edge Computing

The paper "Joint Optimization of DNN Model Caching and Request Routing in Mobile Edge Computing" (2511.03159) studies how to cache dynamic DNN submodels at edge servers and route user requests to them so as to improve users' quality of experience (QoE) in mobile edge computing (MEC). Because a dynamic DNN can be cached at submodel granularity, it enables fine-grained caching, which is crucial for resource-limited edge servers. The following sections summarize the problem formulation, the proposed algorithms, and the evaluation.

Dynamic DNN Caching and Routing Problem

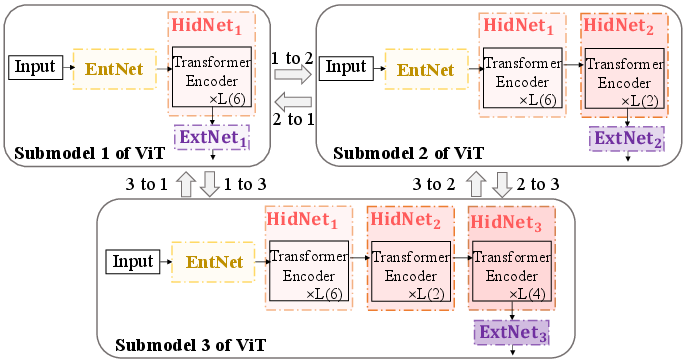

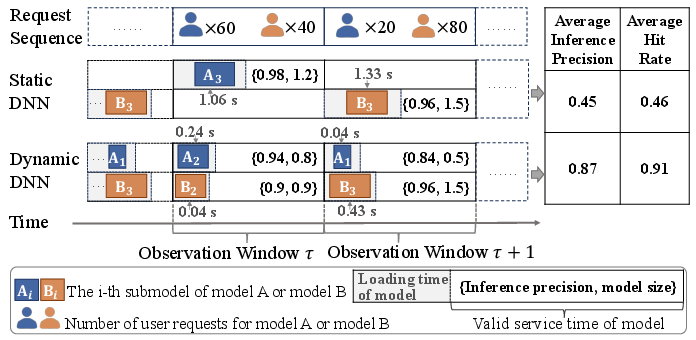

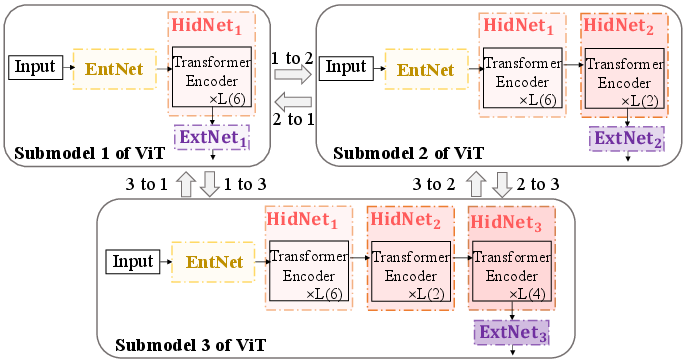

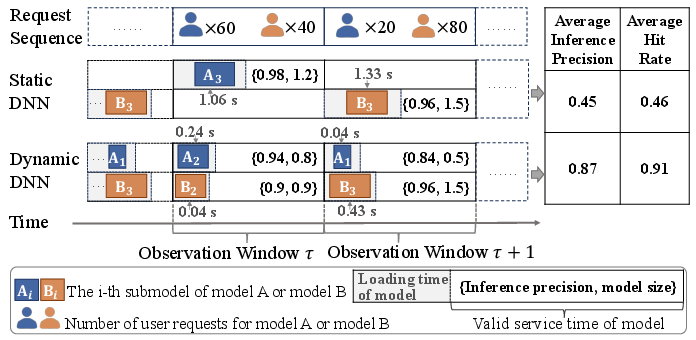

In MEC, caching DNNs at base stations (BSs) reduces inference latency for real-time applications. However, edge servers have limited storage and computing resources, which calls for finer-grained caching. The paper therefore decomposes each complete DNN into interrelated dynamic submodels. Each BS caches a single submodel per DNN type, so the caching problem must be formulated with server resource constraints and model loading time in mind.

The study formulates the JDCR problem, which aims to maximize the inference precision of user requests. Its decision variables are the submodel caching decisions $x_{n,h}$ and the request routing decisions $y_{n,u}$, and the formulation includes latency constraints. The result is a nonlinear integer program, and solving it in practice requires combining analytical problem transformations with heuristic methods.

Figure 1: Submodel division and switching in ViT. When switching from submodel 1 to submodel 2 of ViT, we only need to remove ExtNet1 and connect HidNet2 and ExtNet2.
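The switching rule in Figure 1 can be sketched as a set difference between the blocks of two submodels. This assumes a nested layout in which submodel $k$ consists of HidNet1 through HidNet$k$ followed by ExtNet$k$; that layout is consistent with the caption but is an assumption, and the function names are hypothetical.

```python
def blocks(k):
    """Blocks of submodel k under the assumed nested layout:
    HidNet1..HidNetk followed by ExtNetk."""
    return [f"HidNet{i}" for i in range(1, k + 1)] + [f"ExtNet{k}"]


def switch_plan(current, target):
    """Return (blocks_to_remove, blocks_to_load) when replacing the cached
    submodel `current` with `target`; shared blocks stay in memory."""
    old, new = set(blocks(current)), set(blocks(target))
    remove = sorted(old - new)
    load = [b for b in blocks(target) if b not in old]  # preserve network order
    return remove, load
```

For example, `switch_plan(1, 2)` returns `(["ExtNet1"], ["HidNet2", "ExtNet2"])`, matching the caption. Only the blocks in the second list have to be fetched, which is why switching between submodels can take less loading time than loading a full model from scratch.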

CoCaR Algorithm

CoCaR addresses the complexity of the JDCR problem by combining linear programming (LP) with random rounding. Its main components are:

- Problem Transformation: Variable substitutions and relaxation turn the nonlinear problem into a linear program that standard LP solvers can handle.

- Random Rounding: After solving the LP, CoCaR applies random rounding to convert the fractional solution into a feasible integer solution that satisfies the original caching and routing constraints.

- Theoretical Guarantees: The paper proves an approximation ratio for the algorithm, indicating near-optimal performance under practical parameter settings.
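The rounding step can be illustrated with a standard independent randomized rounding scheme, assuming the fractional caching values have already been obtained from the LP relaxation (the LP solve itself is omitted). For each BS and DNN type, submodel $h$ is cached with probability equal to its fractional value $x_{n,h}$, and nothing is cached with the leftover probability, so the one-submodel-per-type rule always holds and each submodel is cached with exactly its LP probability. This is a sketch of a generic technique, not necessarily the paper's scheme: it does not repair memory-capacity violations, and `round_caching` is a hypothetical name.

```python
import random


def round_caching(x_frac, rng):
    """Independent randomized rounding per (BS, DNN type).

    x_frac[n] maps each DNN type to a list of fractional LP values over its
    submodels at BS n, with sum <= 1. Submodel h is chosen with probability
    x_frac[n][dnn][h]; with the leftover probability nothing is cached.
    Memory capacity is NOT re-checked here (a simplification of this sketch).
    """
    caching = []
    for per_type in x_frac:
        choice = {}
        for dnn, probs in per_type.items():
            if sum(probs) > 1 + 1e-9:
                raise ValueError(f"fractional values for {dnn} sum to more than 1")
            r, acc, picked = rng.random(), 0.0, None
            for h, p in enumerate(probs):
                acc += p
                if r < acc:  # r falls in submodel h's probability interval
                    picked = h
                    break
            choice[dnn] = picked
        caching.append(choice)
    return caching
```

For example, `round_caching([{"vit": [0.2, 0.5, 0.0]}], random.Random(0))` caches ViT submodel 0 about 20% of the time, submodel 1 about 50% of the time, and no ViT submodel otherwise. Because each choice matches its LP value in expectation, any objective term that is linear in the caching variables keeps its LP value in expectation, which is the usual starting point for approximation-ratio arguments.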

Figure 2: Examples of static DNN and dynamic DNN schemes.

CoCaR-OL for Online Scenarios

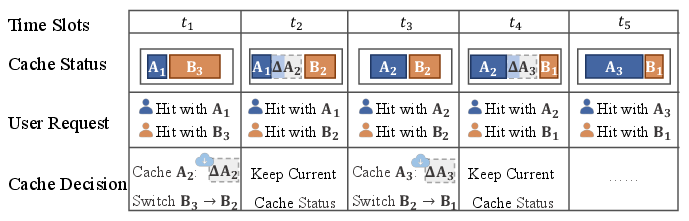

In real-world applications, user demand fluctuates over time, so the paper also develops CoCaR-OL, an online variant that makes decisions as requests arrive.

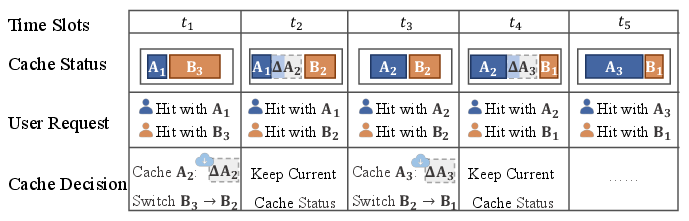

- Online Model Adaptation: CoCaR-OL uses historical request data, demand prediction, and dynamic submodel downloading to adjust caching decisions efficiently.

- Heuristic Decisions: The algorithm estimates the expected future gain of each caching change and follows a gain-oriented policy, switching submodels when doing so improves QoE under real-time constraints and the predicted demand.
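A gain-oriented switching rule of this kind can be sketched as follows. The demand predictor (an exponential moving average of per-slot request counts), the gain expression, and all names and numbers are assumptions for illustration, not CoCaR-OL's actual definitions. The sketch assumes that requests arriving while a new submodel downloads are still served by the current one, so a switch only pays off for the part of the planning horizon after the download completes.

```python
from dataclasses import dataclass


@dataclass
class Submodel:
    precision: float  # inference precision (hypothetical values)
    load_mb: float    # MB to download when switching to it from the cached submodel


def ema_forecast(history, alpha=0.5):
    """Exponential moving average of past per-slot request counts (assumed predictor)."""
    estimate = history[0]
    for count in history[1:]:
        estimate = alpha * count + (1 - alpha) * estimate
    return estimate


def expected_gain(rate, horizon_s, download_s, prec_new, prec_cur):
    """Expected extra precision from switching: only requests arriving after the
    download finishes are served by the new submodel instead of the current one."""
    useful_s = max(0.0, horizon_s - download_s)
    return rate * useful_s * (prec_new - prec_cur)


def choose_switch(current, submodels, history, slot_s, horizon_s, bandwidth_mb_s):
    """Gain-oriented rule: switch to the submodel with the largest positive expected
    gain over the horizon, or keep `current` if no switch pays off."""
    rate = ema_forecast(history) / slot_s  # predicted requests per second
    prec_cur = submodels[current].precision
    best, best_gain = current, 0.0
    for name, sm in submodels.items():
        if name == current:
            continue
        download_s = sm.load_mb / bandwidth_mb_s
        gain = expected_gain(rate, horizon_s, download_s, sm.precision, prec_cur)
        if gain > best_gain:
            best, best_gain = name, gain
    return best, best_gain
```

With hypothetical submodels `s1` (precision 0.70, currently cached), `s2` (0.78, 40 MB to load), and `s3` (0.82, 120 MB), a forecast of 10 requests per second, and 20 MB/s of bandwidth, a 10 s horizon favors the cheaper upgrade `s2`, a 60 s horizon favors `s3`, and a 1 s horizon keeps `s1` because neither download would finish in time. The design choice this illustrates is that the value of a switch depends on both predicted demand and download time, not on precision alone.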

Figure 3: Illustration of model caching in an online scenario. Downloads and cache adjustments are coordinated in real time to adapt to varying request patterns.

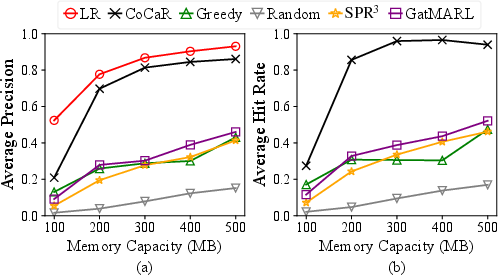

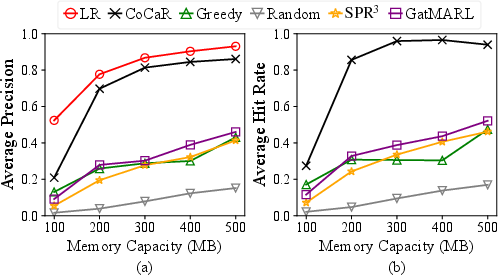

Comprehensive simulations underscore the efficacy of CoCaR and CoCaR-OL:

- Comparison Against Baselines: CoCaR outperforms conventional caching algorithms and improves inference precision by 46% over state-of-the-art methods. The paper attributes this gain to its fine-grained resource utilization and its adaptability to dynamic scenarios.

- Evaluation Metrics: The simulations report average inference precision and average hit rate; the results indicate that exploiting dynamic model structures improves both service quality and resource efficiency.
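The two metrics can be computed from a per-request log as sketched below. The exact definitions used in the paper are not given here, so this sketch makes two labeled assumptions: a request counts as a hit when some edge BS serves it with a cached submodel, and a missed request contributes a configurable `miss_precision` (0 by default) to the average. The function name `summarize` is hypothetical.

```python
from typing import Optional, Sequence


def summarize(served: Sequence[Optional[float]], miss_precision: float = 0.0):
    """Compute (average inference precision, hit rate) from a request log.

    served[i] is the precision of the cached submodel that answered request i,
    or None if no edge BS could serve it (counted as a miss). Misses contribute
    `miss_precision` to the average, an assumption of this sketch.
    """
    if not served:
        raise ValueError("no requests to summarize")
    hits = [p for p in served if p is not None]
    total = sum(hits) + miss_precision * (len(served) - len(hits))
    return total / len(served), len(hits) / len(served)
```

For instance, a log of `[0.8, None, 0.6, 0.7]` yields an average precision of about 0.525 and a hit rate of 0.75 under the default miss handling.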

Figure 4: Impact of different BS memory capacities: (a) Average inference precision; (b) Average hit rate.

Conclusion

The paper integrates dynamic DNNs into edge model caching and, through the CoCaR and CoCaR-OL algorithms, improves inference precision and adaptability in MEC. Future work will explore further optimization of BS resource allocation and distributed decision-making frameworks to improve the efficiency and scalability of such systems in complex, real-world environments.