- The paper introduces the first standardized public benchmark for automotive ML systems, covering tasks such as 2D/3D object detection and semantic segmentation.

- It defines closed and open submission categories so that performance comparisons are reproducible and fair.

- The evaluation leverages diverse datasets and models to address challenges in real-time processing, safety, and power efficiency in automotive contexts.

MLPerf Automotive: A Comprehensive Benchmarking Suite for Automotive ML Systems

Introduction

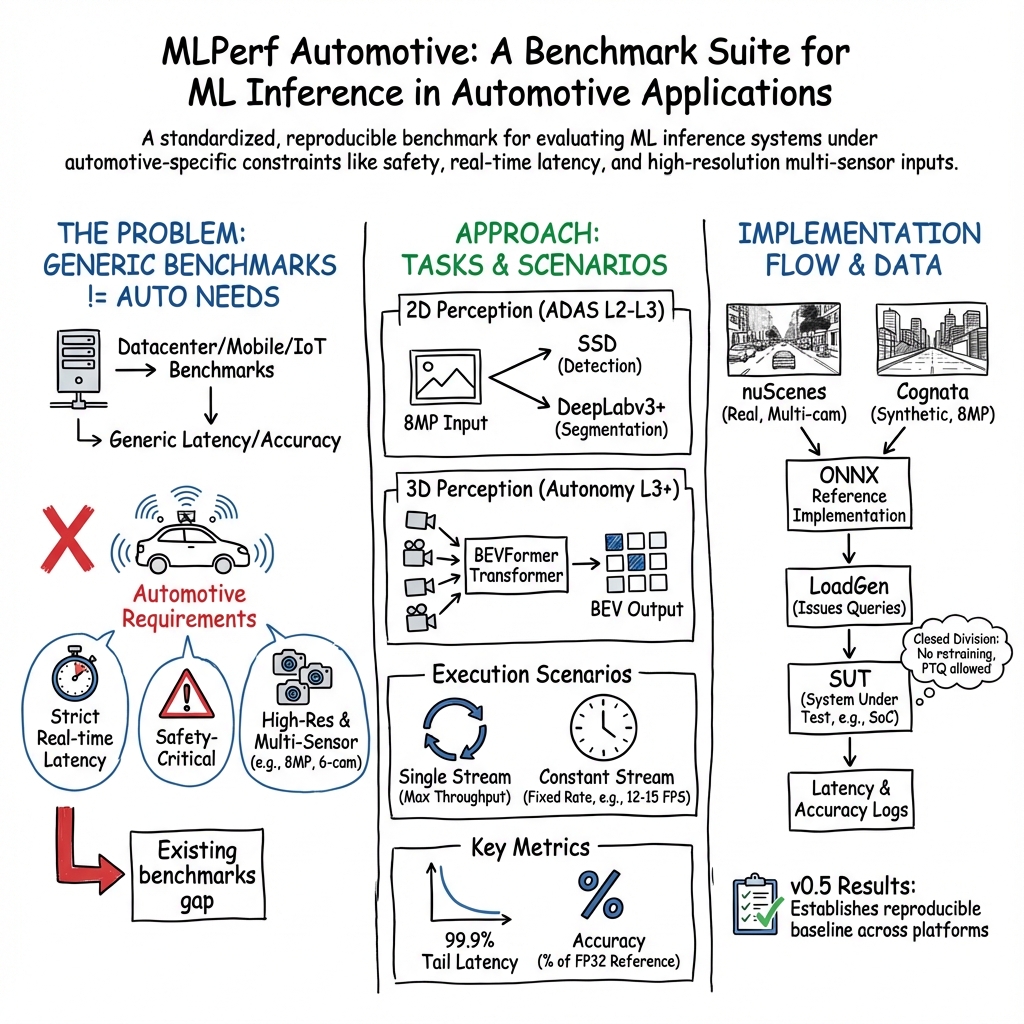

The paper "MLPerf Automotive" (2510.27065) introduces the first standardized public benchmark specifically designed for evaluating ML systems used in automotive applications. Developed collaboratively by MLCommons and the Autonomous Vehicle Computing Consortium (AVCC), MLPerf Automotive addresses the unique requirements of automotive ML systems, which differ significantly from those in other domains due to constraints such as safety, real-time processing, and power efficiency.

Benchmark Design and Methodology

MLPerf Automotive defines a framework for measuring the performance of ML systems in automotive environments. Unlike existing benchmarks that target datacenter, mobile, or IoT applications, it is tailored to automotive workloads, which are heavily perception-centric and must meet strict real-time constraints. The suite covers 2D object detection, 2D semantic segmentation, and 3D object detection, reflecting typical Advanced Driver Assistance Systems (ADAS) workloads.

The benchmark adheres to standardized protocols to ensure consistent and reproducible results. It offers two submission categories: closed and open. The closed category facilitates fair comparisons by enforcing strict guidelines, including prohibitions on retraining and caching. Conversely, the open category allows for greater flexibility, enabling model retraining and alternative implementations.
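As an illustration of how the two categories differ, the closed-category prohibitions can be written as a simple rule check. This is a minimal sketch, not the benchmark's actual tooling: the `Submission` record, its field names, and `rule_violations` are hypothetical names chosen for this example. The source does not say whether caching is permitted in the open category, so the sketch only enforces the two closed-category prohibitions it names.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Submission:
    """Hypothetical record describing one benchmark submission."""
    category: str        # "closed" or "open"
    retrained: bool      # True if the model weights were retrained
    uses_caching: bool   # True if results or intermediate outputs are cached


def rule_violations(sub: Submission) -> List[str]:
    """Return the rule violations for a submission (empty list if none)."""
    if sub.category not in ("closed", "open"):
        return [f"unknown category: {sub.category!r}"]

    violations = []
    if sub.category == "closed":
        # The closed category prohibits retraining and caching.
        if sub.retrained:
            violations.append("retraining is prohibited in the closed category")
        if sub.uses_caching:
            violations.append("caching is prohibited in the closed category")
    # The open category permits retraining and alternative implementations,
    # so nothing is flagged here (an assumption where the source is silent).
    return violations
```

For example, `rule_violations(Submission("open", retrained=True, uses_caching=False))` returns an empty list, while the same flags under `"closed"` yield one violation.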

Unique Challenges of Automotive Workloads

Automotive ML applications face distinct challenges compared to other domains. The paper highlights the need to handle diverse and complex sensor data, meet stringent latency requirements, and maintain high safety standards. Automotive Systems-on-Chip (SoCs) must balance compute performance against power consumption across the varying levels of autonomy defined by SAE International.
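One common way to express a stringent latency requirement is to bound a tail percentile of per-query latency rather than the mean, since occasional slow frames matter in a real-time system. The sketch below assumes that framing; the 99.9th-percentile default, the deadline value, and the function names are illustrative assumptions, not figures or rules taken from the paper.

```python
import math


def tail_latency_ms(latencies_ms, percentile=99.9):
    """Nearest-rank percentile of per-query latencies, in milliseconds.

    The default percentile is an assumption made for this example.
    """
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    if not 0 < percentile <= 100:
        raise ValueError("percentile must be in (0, 100]")
    ordered = sorted(latencies_ms)
    # 1-based nearest rank; round() guards against floating-point noise
    # such as 999.0000000000001 being pushed up to the next rank.
    rank = math.ceil(round(percentile * len(ordered) / 100, 9))
    return ordered[rank - 1]


def meets_deadline(latencies_ms, deadline_ms, percentile=99.9):
    """True if the chosen tail percentile is within the deadline."""
    return tail_latency_ms(latencies_ms, percentile) <= deadline_ms
```

With ten samples of 1 to 10 ms, `tail_latency_ms(samples, percentile=90)` selects the ninth-smallest sample, so a 9 ms deadline passes and an 8 ms deadline fails.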

The benchmark's design accounts for these factors by selecting tasks and datasets pertinent to automotive systems. The initial benchmark iteration leverages models such as SSD, DeepLabv3+, and BEVFormer, chosen for their relevance to the different levels of driving autonomy and their representation of contemporary ML challenges in the automotive sector.

Dataset Selection and Challenges

Selecting appropriate datasets posed a significant challenge because of licensing constraints and the need for data that accurately reflects real-world driving scenarios. The MLPerf Automotive benchmark uses the nuScenes dataset for BEVFormer and a synthetic dataset from Cognata for SSD and DeepLabv3+. Together, these datasets are intended to cover a range of driving conditions so that models are evaluated under diverse scenarios.

The paper underscores the importance of dataset diversity, encompassing various geographic locations, weather conditions, and sensor modalities, to ensure comprehensive performance assessments.

Submissions and Results

The first round of submissions attracted multiple participants, and the results show both the strengths of current automotive ML systems and where they can improve. Submissions spanned both the open and closed categories and used optimization techniques such as reduced-precision formats (for example, INT8 and FP16) to improve performance.
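To make the reduced-precision idea concrete, the following is a minimal sketch of symmetric per-tensor INT8 quantization, a generic technique. The source names INT8 and FP16 as formats used in submissions but does not describe how submitters quantized their models, so this is not a description of any submission; `quantize_int8` and `dequantize` are hypothetical helper names.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to the range [-127, 127].

    Returns the quantized integers and the scale needed to recover floats.
    """
    max_abs = max((abs(v) for v in values), default=0.0)
    # Map the largest magnitude to 127; fall back to 1.0 for all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from quantized integers."""
    return [q * scale for q in quantized]
```

Each value is stored in 8 bits instead of 32, and the round trip through `dequantize` differs from the original by at most half a quantization step (`scale / 2`). That is the accuracy-for-throughput trade-off that reduced-precision formats make.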

Future Developments and Conclusion

The paper concludes by outlining plans for future iterations of the benchmark. These include expanding the scope to incorporate end-to-end autonomous driving models, introducing power measurement capabilities, and refining the submission categories to better differentiate between development and production-ready systems.

In summary, MLPerf Automotive standardizes performance evaluation for automotive ML systems. By addressing the challenges and requirements specific to this domain, it gives developers a common basis for building more efficient and effective automotive ML solutions. The benchmark is expected to keep evolving as the automotive industry progresses toward higher levels of autonomy and ML integration.