- The paper introduces GeT-USE, a framework that learns generalized tool usage through simulated embodiment extensions to enhance bimanual manipulation.

- It leverages a dual-phase process: first, it builds tools in simulation via reinforcement learning, then distills vision-based policies for real-world deployment.

- Experimental results show a 30-60% improvement over baselines, emphasizing the importance of 6-DOF control and robust tool selection in challenging tasks.

Introduction and Motivation

The paper introduces GeT-USE, a framework for learning generalized tool usage in bimanual mobile manipulation tasks by leveraging simulated embodiment extensions. The central premise is that robots can acquire versatile manipulation capabilities by first exploring and learning to extend their own embodiment in simulation, and then transferring the learned geometric and control strategies to real-world visuomotor policies. This approach addresses the limitations of prior work, which typically assumes the presence of a single, ideal tool and does not generalize to scenarios where the optimal tool is absent or multiple objects are available.

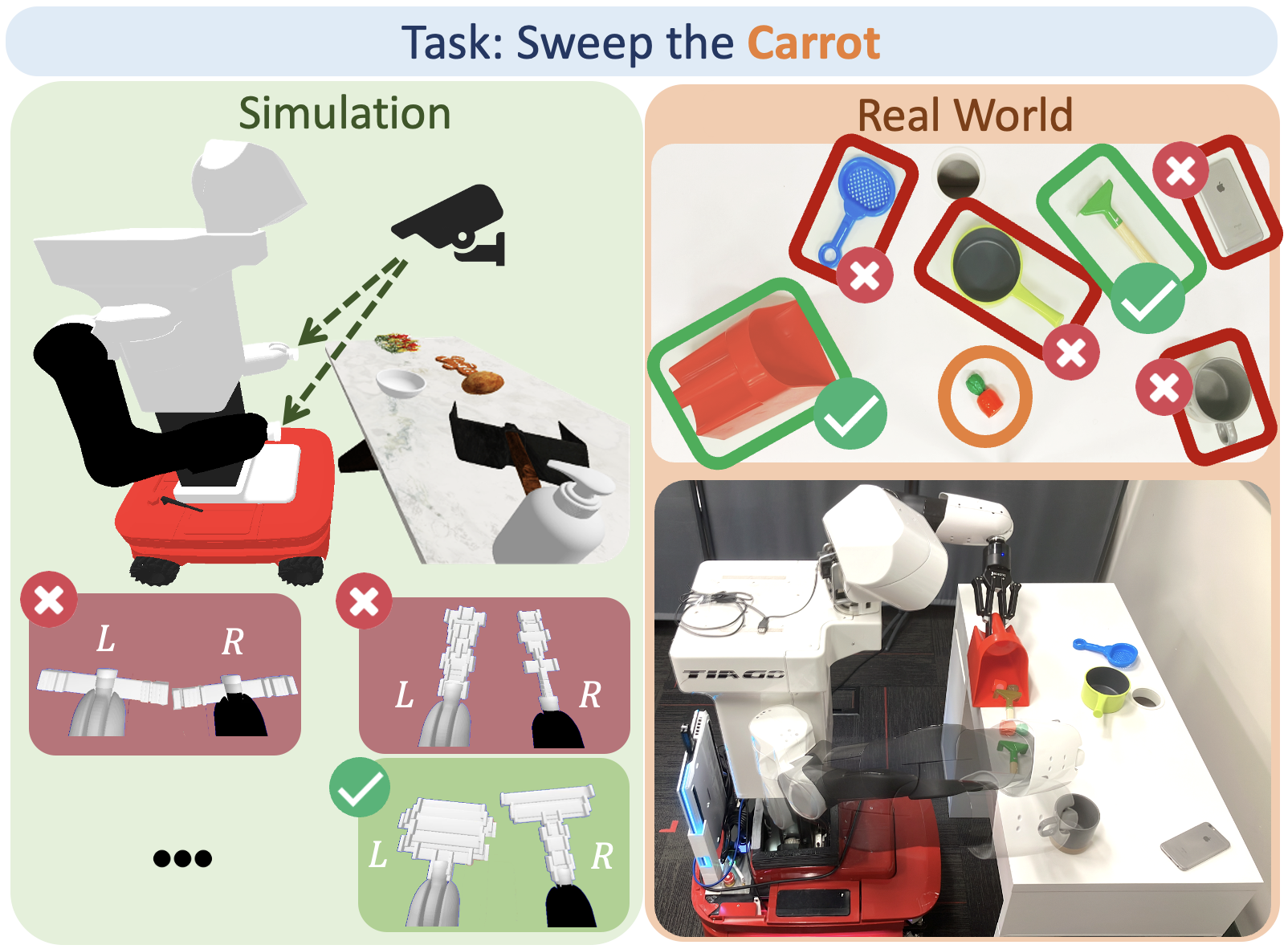

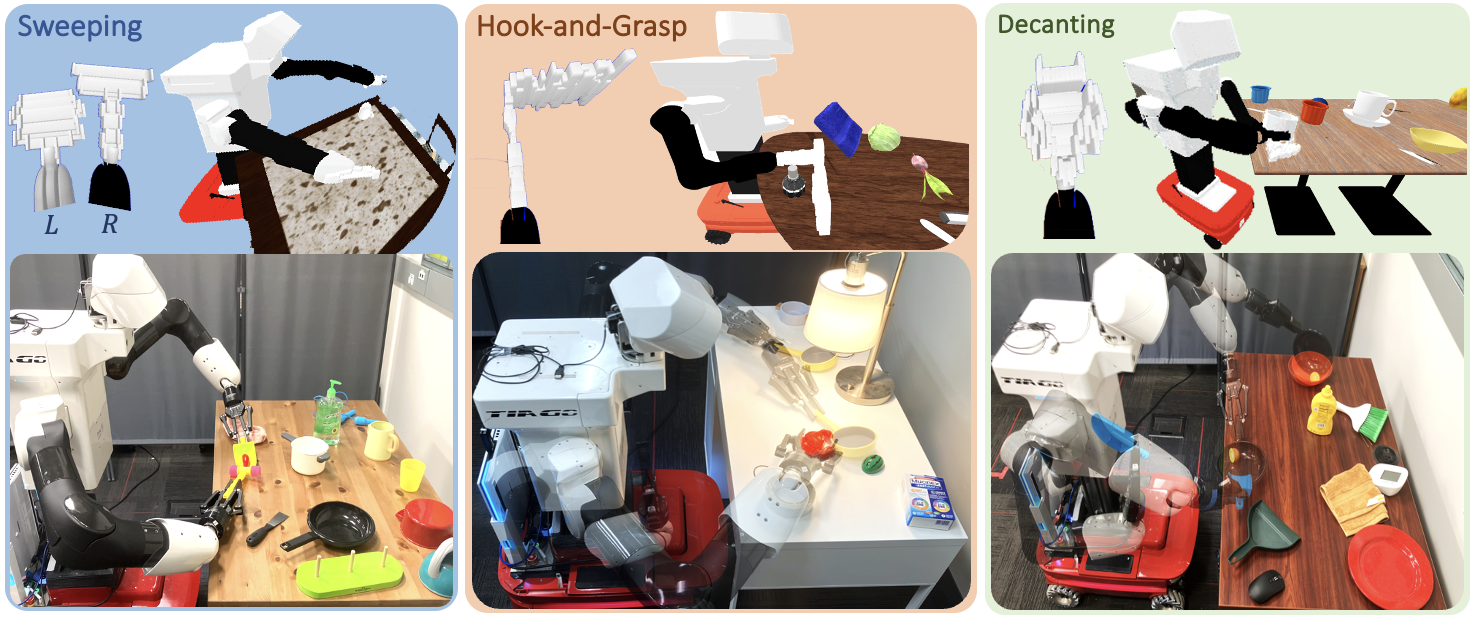

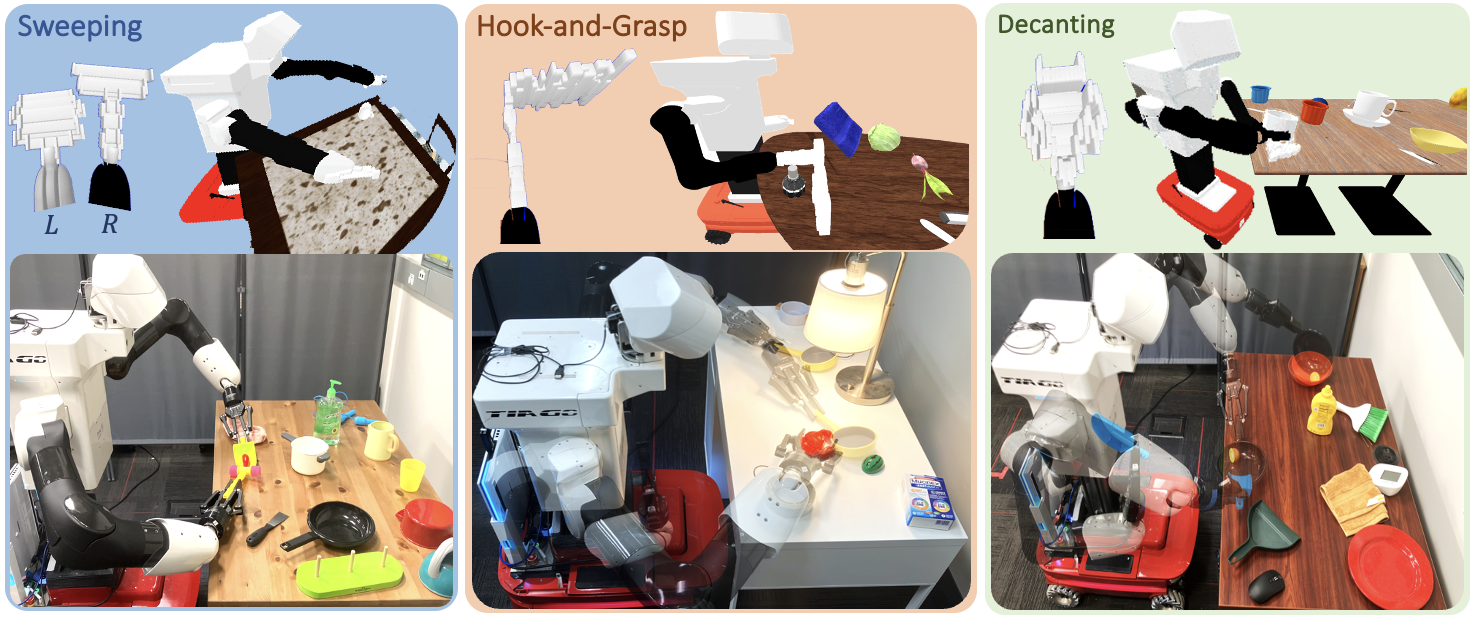

Figure 1: GeT-USE enables a TIAGo robot to solve bimanual mobile manipulation tasks by learning in simulation to build tools and transferring the strategy to real-world vision-based modules.

Framework Overview

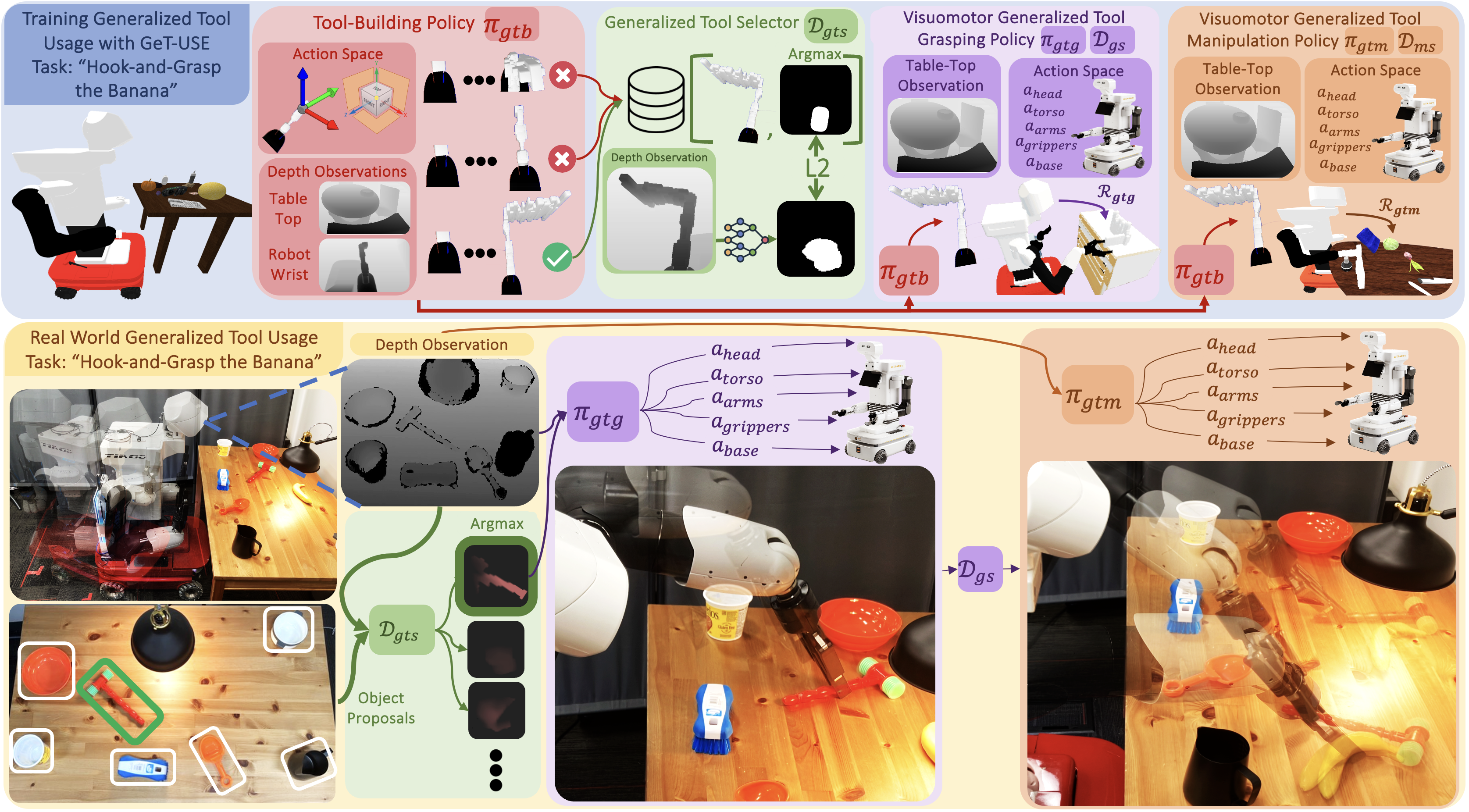

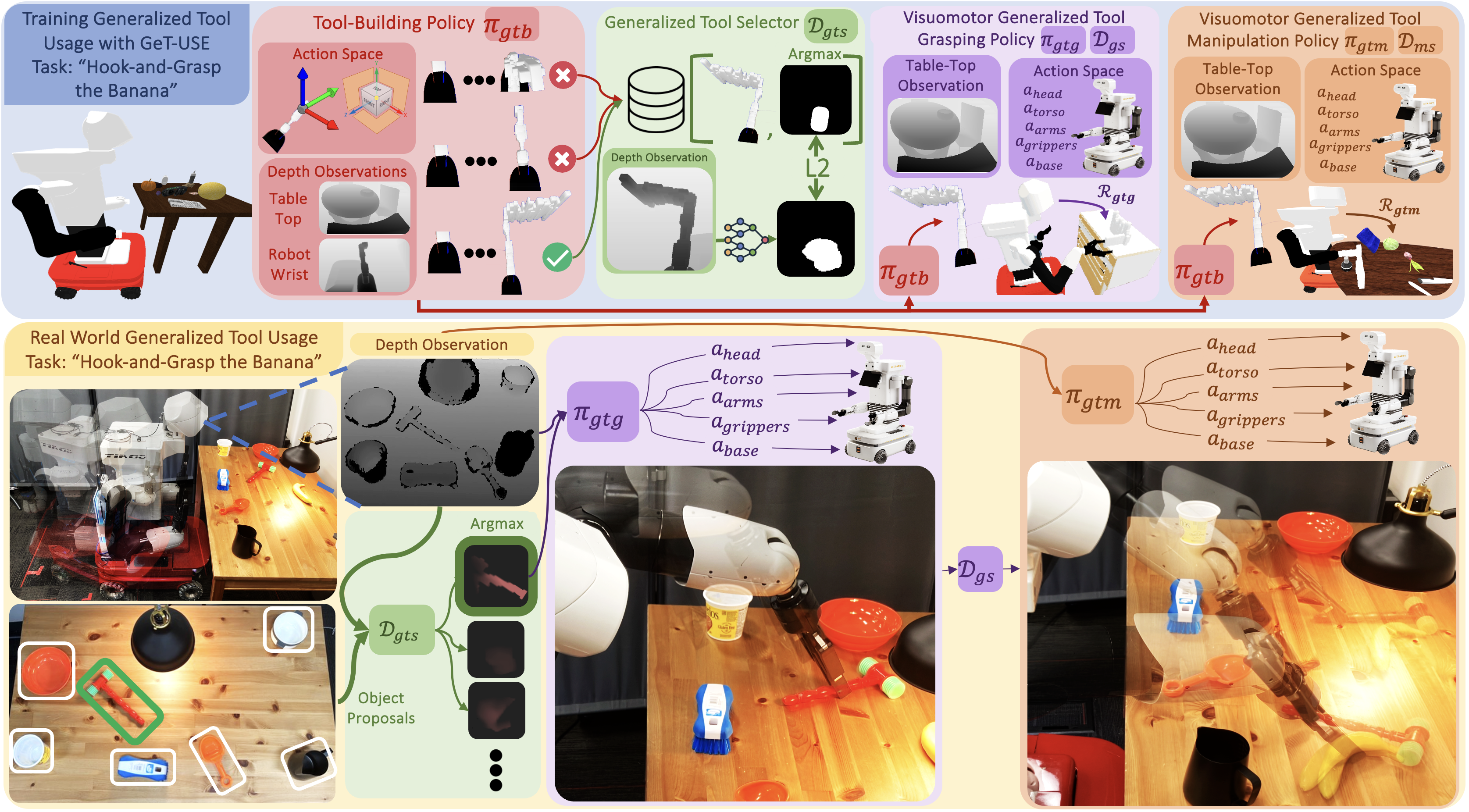

GeT-USE operates in two main phases:

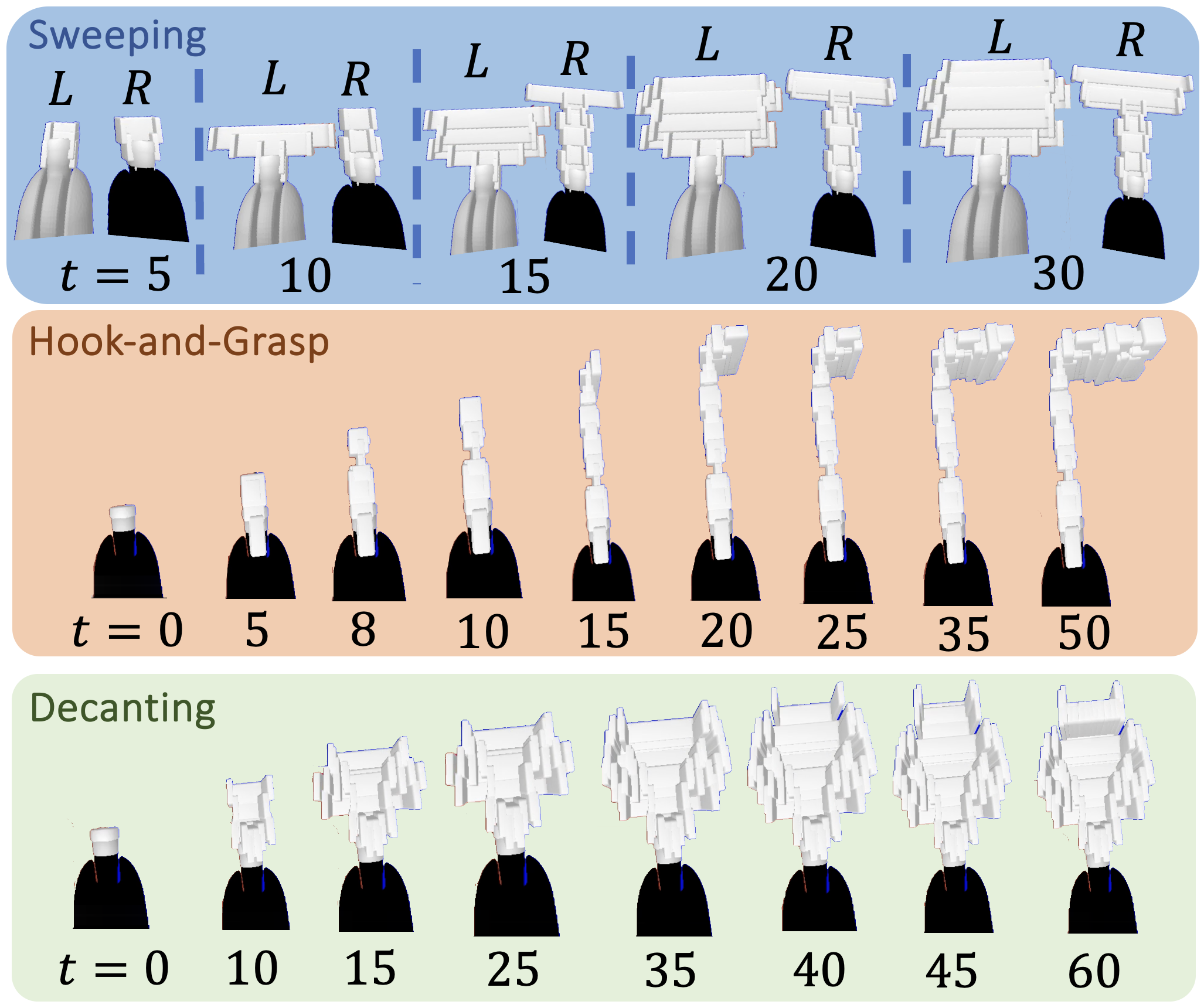

- Simulated Embodiment Extension: The robot incrementally builds tools by appending small blocks to its wrists in simulation, guided by a reinforcement learning policy. The policy receives depth images and outputs the position and size of new blocks, terminating when a suitable tool is constructed. Success is determined by executing a predefined manipulation strategy using privileged information.

- Vision-Based Module Training and Sim2Real Transfer: The geometric and morphological properties of successful simulated tools are distilled into three vision-based modules:

  - Generalized Tool Selector: Trained to rank real-world objects by their suitability for the task using depth images.

  - Visuomotor Tool Grasping Policy: Learns to grasp selected objects using depth images and proprioception.

  - Visuomotor Tool Manipulation Policy: Controls the robot to perform the task with the grasped tool.

Figure 2: The GeT-USE framework: training in simulation (top) and deployment in the real world (bottom), with modules for tool selection, grasping, and manipulation.

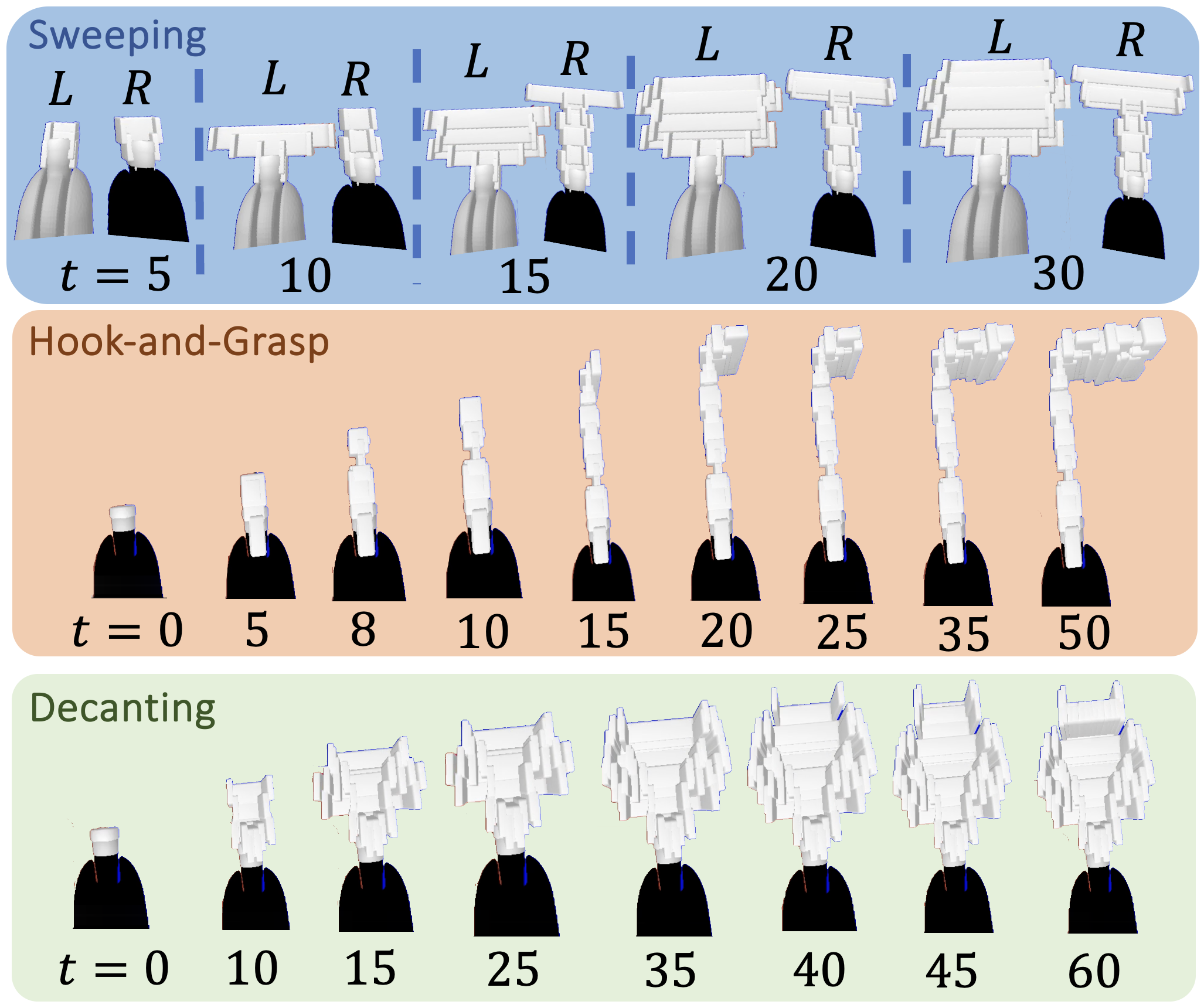

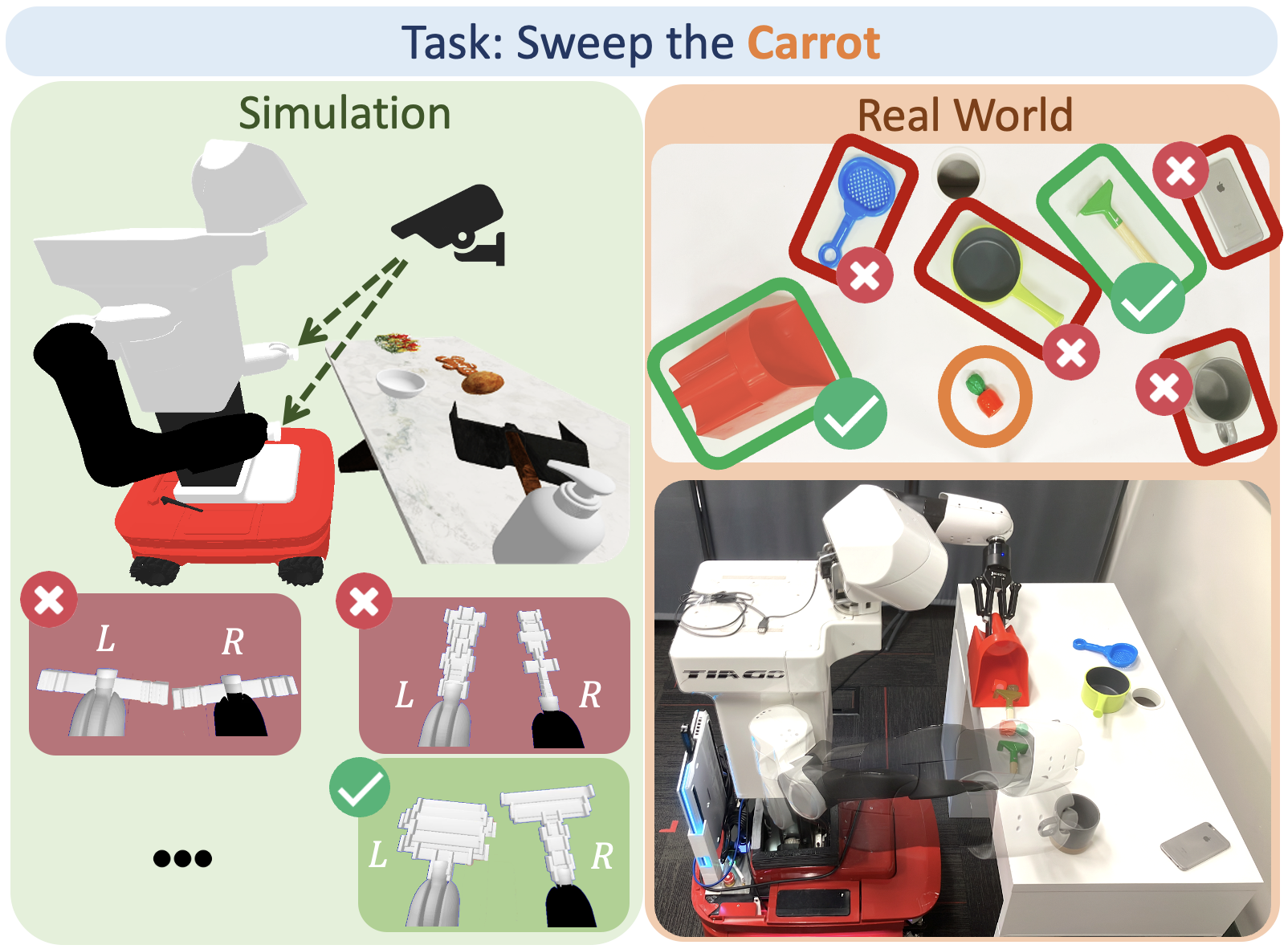

The tool-building policy, πgtb, is trained via RL to explore the space of possible tool geometries by appending blocks to the robot's wrists. The action space includes both the relative position and size of each block. The policy is rewarded for constructing tools that enable successful task completion, as determined by downstream manipulation strategies. This process generates a diverse set of both optimal and suboptimal tool geometries, which are critical for training robust selection and manipulation modules.
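The incremental construction loop described above can be sketched as follows. This is only an illustration under stated assumptions, not the paper's implementation: the names `Block`, `ToolBuildingEpisode`, `random_policy`, and `long_enough` are hypothetical, a random sampler stands in for the learned policy (which in the paper conditions on depth images), the numeric ranges are arbitrary, and the binary reward is an assumed simplification of "rewarded for successful task completion".

```python
import random
from dataclasses import dataclass, field


@dataclass
class Block:
    offset: tuple  # (dx, dy, dz) relative placement; units are an assumption (metres)
    size: tuple    # (sx, sy, sz) edge lengths; units are an assumption (metres)


@dataclass
class ToolBuildingEpisode:
    max_blocks: int = 8  # hypothetical cap so every episode terminates
    blocks: list = field(default_factory=list)

    def step(self, offset, size, terminate):
        """Append one block unless the policy asks to stop; return the done flag."""
        if not terminate:
            self.blocks.append(Block(offset, size))
        return terminate or len(self.blocks) >= self.max_blocks


def random_policy(rng):
    """Stand-in for the learned policy: samples (position, size, terminate)."""
    offset = tuple(rng.uniform(-0.05, 0.05) for _ in range(3))
    size = tuple(rng.uniform(0.01, 0.05) for _ in range(3))
    terminate = rng.random() < 0.2
    return offset, size, terminate


def long_enough(blocks, min_reach=0.10):
    """Hypothetical success check: summed block length along x reaches a threshold."""
    return sum(b.size[0] for b in blocks) >= min_reach


def rollout(task_succeeds, rng, max_blocks=8):
    """Build one tool block by block, then score it with a binary task reward."""
    episode = ToolBuildingEpisode(max_blocks=max_blocks)
    done = False
    while not done:
        done = episode.step(*random_policy(rng))
    reward = 1.0 if task_succeeds(episode.blocks) else 0.0
    return episode.blocks, reward


blocks, reward = rollout(long_enough, random.Random(0))
```

Keeping both rewarded and unrewarded rollouts mirrors the point made above: the failed geometries are as useful as the successful ones when training the downstream selector.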

Figure 3: Example rollouts of GeT-USE's tool-building policy for Sweeping, Hook, and Decanting tasks, showing incremental construction of complex tools.

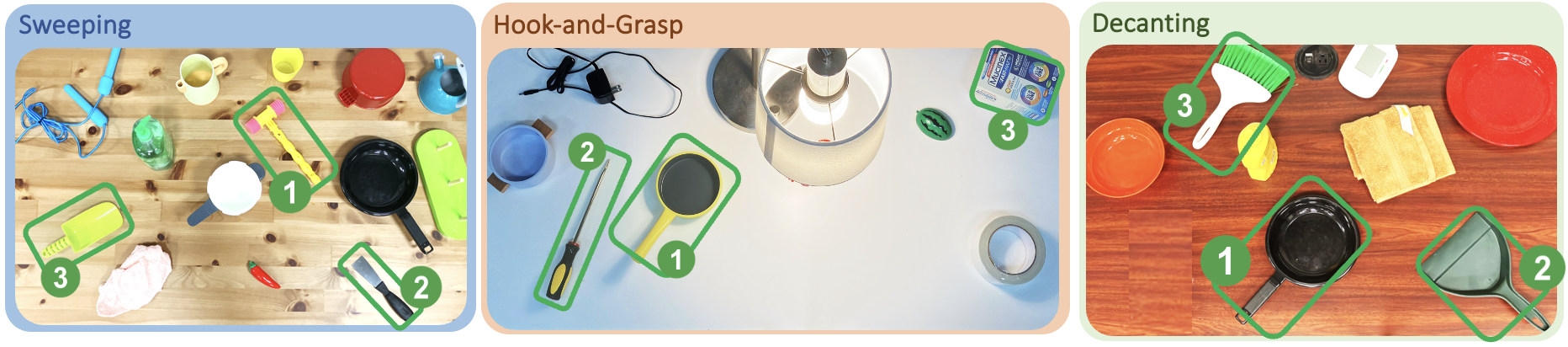

The tool selector module, Dgts, is trained using depth images of simulated tools labeled with success or failure. Successful tools are annotated with binary masks indicating the graspable region. The module outputs a likelihood map for each candidate object, enabling the robot to "make the best of what it has" even when the ideal tool is absent.

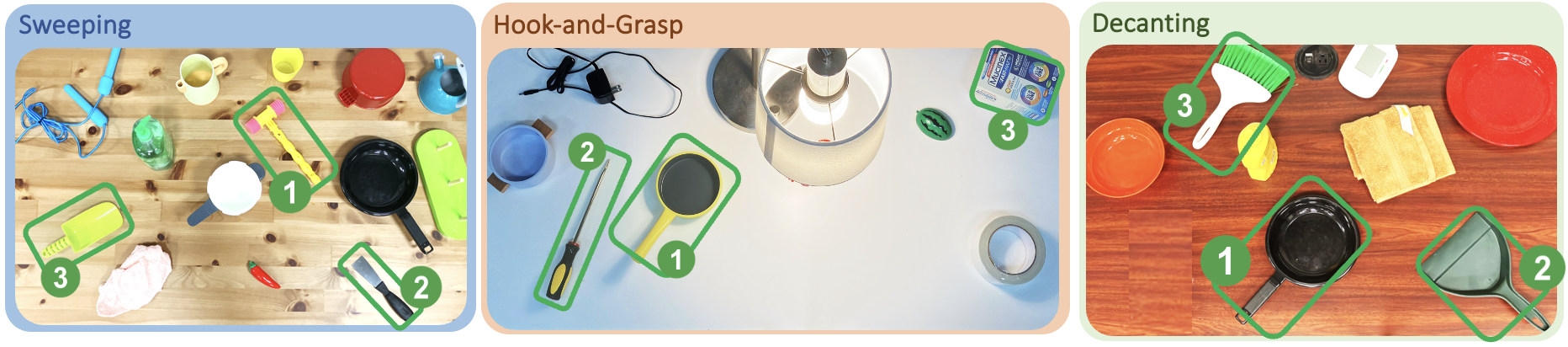

Figure 4: GeT-USE's tool selector ranks objects for Sweeping, Hook, and Decanting tasks, preferring those with suitable geometric features.
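One way to turn per-object likelihood maps into a ranking is sketched below. The source does not say how a map is reduced to a single score, so taking the peak value is an assumption, as are the function names and the toy 2x2 maps (which are made-up inputs, not results from the paper).

```python
def score_candidate(likelihood_map):
    """Collapse a 2D likelihood map into one scalar (assumed here: the peak value)."""
    return max(max(row) for row in likelihood_map)


def rank_candidates(likelihood_maps):
    """Return candidate names sorted from most to least suitable, plus the scores."""
    scores = {name: score_candidate(m) for name, m in likelihood_maps.items()}
    ranking = sorted(scores, key=scores.get, reverse=True)
    return ranking, scores


def best_grasp_pixel(likelihood_map):
    """Return the (row, col) of the highest-likelihood pixel in the map."""
    cells = (
        (value, r, c)
        for r, row in enumerate(likelihood_map)
        for c, value in enumerate(row)
    )
    _, r, c = max(cells, key=lambda cell: cell[0])
    return r, c


# Made-up likelihood maps for three hypothetical candidate objects.
maps = {
    "spatula": [[0.1, 0.7], [0.2, 0.9]],
    "ball": [[0.05, 0.1], [0.0, 0.2]],
    "ruler": [[0.3, 0.6], [0.4, 0.5]],
}
ranking, scores = rank_candidates(maps)  # ["spatula", "ruler", "ball"]
grasp_rc = best_grasp_pixel(maps[ranking[0]])  # (1, 1)
```

Because the ranking is relative, the top-ranked object is returned even when every score is low, which is the "make the best of what it has" behavior described above.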

Visuomotor Grasping and Manipulation Policies

The grasping policy, πgtg, and manipulation policy, πgtm, are trained in simulation using depth images and proprioceptive data. The grasping policy learns to pick up objects in a manner consistent with their intended use as tools, while the manipulation policy controls all 22 DOFs of the TIAGo robot to execute the task. Both policies are supported by success detectors trained to autonomously identify successful execution from visual input.

Real-World Deployment and Evaluation

At test time, the robot uses an object detector to generate candidate patches, applies the tool selector to choose the best object, and then executes the grasping and manipulation policies. The system is evaluated on three tasks—Sweeping, Hook, and Decanting—using a diverse set of real-world objects, including both useful and adversarial items.
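The test-time sequence above (detect, select, grasp, manipulate) can be sketched as a small control-flow function. All names here are hypothetical, and the four stages are passed in as plain callables standing in for the object detector, tool selector, and the two visuomotor policies; the boolean return values stand in for the verdicts of the success detectors mentioned earlier.

```python
from typing import Callable, Sequence


def run_episode(
    detect: Callable[[], Sequence[str]],
    select: Callable[[Sequence[str]], str],
    grasp: Callable[[str], bool],
    manipulate: Callable[[str], bool],
) -> dict:
    """Run one deployment episode and report the last stage reached."""
    candidates = detect()
    if not candidates:
        # Nothing was detected, so there is nothing to select or grasp.
        return {"stage": "detect", "success": False, "tool": None}

    tool = select(candidates)
    if not grasp(tool):
        # Assumed behavior: stop the episode when the grasp is judged a failure.
        return {"stage": "grasp", "success": False, "tool": tool}

    succeeded = manipulate(tool)
    return {"stage": "manipulate", "success": succeeded, "tool": tool}


# Stub stages for illustration only; a real system would call learned modules.
result = run_episode(
    detect=lambda: ["brush", "cup"],
    select=lambda candidates: candidates[0],
    grasp=lambda tool: True,
    manipulate=lambda tool: True,
)
```

Reporting the stage at which an episode ended makes it straightforward to attribute failures to selection, grasping, or manipulation when tallying results.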

Figure 5: Simulated and real-world versions of Sweeping, Hook, and Decanting tasks, demonstrating GeT-USE's sim2real generalization.

Figure 6: All real-world objects used in experiments, illustrating the diversity and challenge of generalized tool usage.

Experimental Results

GeT-USE achieves 30-60% higher success rates than state-of-the-art baselines (TOG-Net variants) across all tasks. Notably, TOG-Net fails completely when restricted to top-down grasping and manipulation or when it lacks a tool selector. Full 6-DOF control and the ability to select the most suitable object are therefore critical for success. Ablation studies confirm that both components are essential: removing either the tool selector or full 6-DOF control results in a 50-60% drop in performance.

Failure Analysis

Failures in Sweeping and Hook are primarily attributed to sim-to-real dynamics gaps, such as objects sliding under the tool or being pushed out of reach. Decanting failures are due to hardware limitations causing vibration during pouring. These limitations highlight the need for improved simulation fidelity and more robust real-world controllers.

Implications and Future Directions

GeT-USE demonstrates that simulated embodiment extension is an effective strategy for learning generalized tool usage, enabling robots to adapt to novel objects and tasks without requiring extensive real-world data collection. The approach is scalable and leverages depth-based vision for robust sim2real transfer. However, the framework currently assumes accurate simulation of rigid-body dynamics and is limited to parallel-jaw grippers. Extending GeT-USE to deformable objects, articulated tools, and multi-fingered hands represents a promising direction for future research.

Conclusion

GeT-USE provides a principled framework for learning and deploying generalized tool usage in bimanual mobile manipulation. By combining simulated embodiment extension with vision-based policy distillation, it achieves superior performance and generalization compared to prior methods. The results underscore the importance of geometric reasoning, robust selection mechanisms, and full DOF control in enabling versatile robotic manipulation. Future work should address sim-to-real gaps, richer object dynamics, and more dexterous manipulation capabilities.