- The paper presents Personalized Collaborative Learning (PCL), a framework that dynamically adjusts collaboration intensity based on agent affinity to reduce sample complexity.

- It employs an affinity measure and importance correction techniques to balance decentralized collaboration with individual learning needs.

- Empirical results show that PCL achieves faster convergence and lower MSE compared to FedAvg and independent learning, especially in heterogeneous environments.

Personalized Collaborative Learning with Affinity-Based Variance Reduction

Introduction

The paper introduces a novel approach, Personalized Collaborative Learning (PCL), designed to address the inherent trade-offs in multi-agent learning environments characterized by significant agent heterogeneity. PCL leverages distributed collaboration without compromising the personalization required by diverse agents. This framework enables agents to collaboratively learn personalized solutions while adapting to varying levels of agent heterogeneity. Crucially, PCL achieves sample complexity reductions compared to independent learning, interpolating between linear speedup in homogeneous settings and the baseline of independent learning in highly heterogeneous regimes.

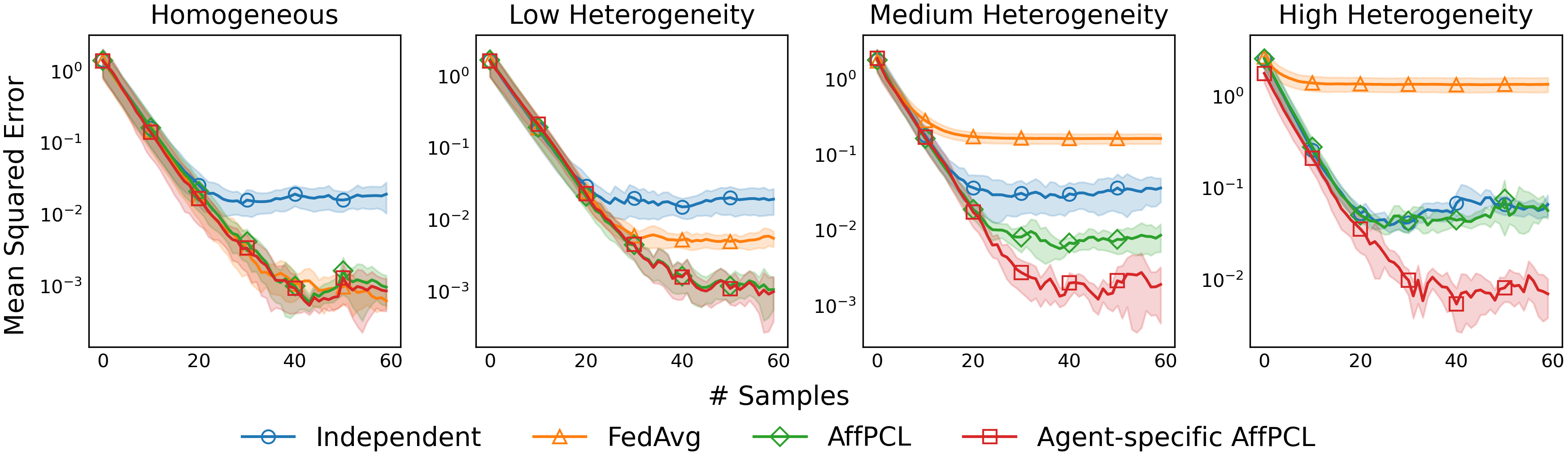

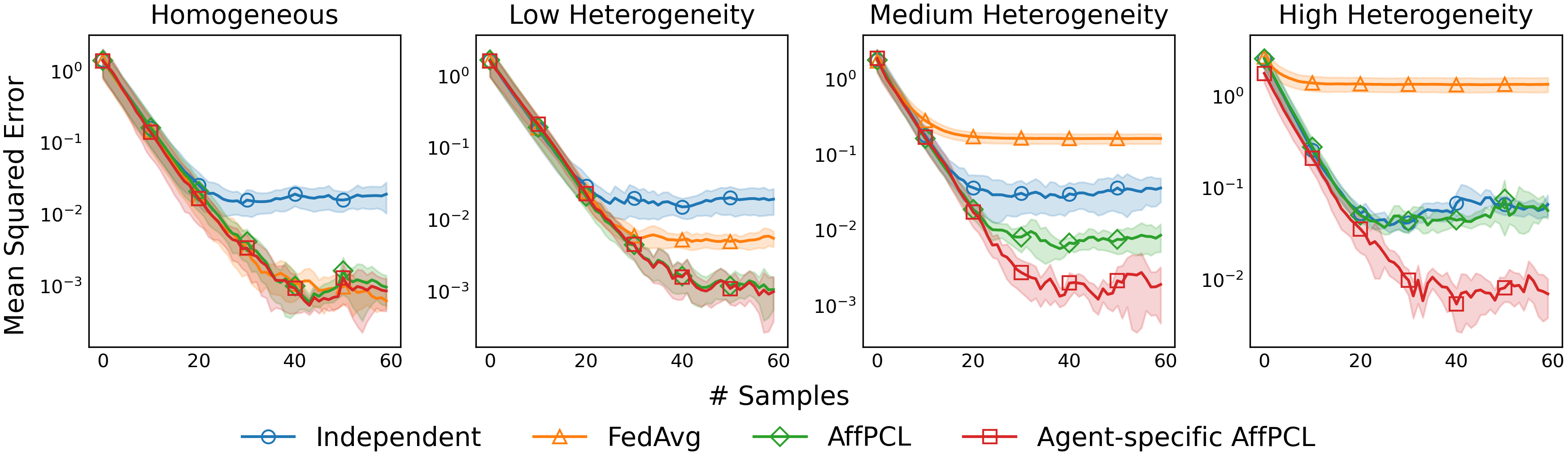

Figure 1: PCL matches FedAvg in the homogeneous setting and independent learning in the high heterogeneity regime.

Framework and Methodology

PCL is grounded in mechanisms for bias and importance correction to handle both environment and objective heterogeneity. It reduces sample complexity by a factor of max{n^{-1}, δ} relative to independent learning, where n denotes the number of agents and δ ∈ [0,1] quantifies heterogeneity. This adaptation does not require prior knowledge of system heterogeneity, allowing agents to potentially gain linear speedup even when collaborating with highly dissimilar agents.
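To make the interpolation concrete, the following minimal sketch evaluates the reduction factor for a few heterogeneity levels, assuming the factor is read as max(1/n, δ). The function name `complexity_factor` and the chosen values of n and δ are illustrative assumptions, not taken from the paper.

```python
def complexity_factor(n_agents: int, delta: float) -> float:
    """Illustrative reduction factor max(1/n, delta) relative to independent learning.

    Assumption: delta = 0 means fully homogeneous agents and delta = 1 means
    maximally heterogeneous agents, as described in the text.
    """
    if n_agents < 1:
        raise ValueError("n_agents must be at least 1")
    if not 0.0 <= delta <= 1.0:
        raise ValueError("delta must lie in [0, 1]")
    return max(1.0 / n_agents, delta)


if __name__ == "__main__":
    n = 10  # hypothetical number of agents
    for delta in (0.0, 0.05, 0.5, 1.0):
        factor = complexity_factor(n, delta)
        print(f"n={n}, delta={delta}: factor={factor}")
```

With δ = 0 the factor is 1/n, which corresponds to the linear speedup of the homogeneous case; with δ = 1 the factor is 1, which corresponds to no gain over independent learning. Under this reading, any δ below 1/n leaves the factor at 1/n.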

The PCL framework introduces the Affinity-based Personalized Collaborative Learning (AffPCL) method, which employs distributed learning models with bias correction, utilizing the shared experiences of agents to ensure that the learning bias does not impede individual agent objectives. Importance correction further adjusts the central learning direction to maintain efficiency in the decentralized context.

Theoretical Insights

PCL introduces an affinity measure δ to quantify agent similarity and uses this measure to dynamically adjust collaboration intensity. This measure captures both objective similarities (via model parameters) and environmental similarities (via distributional differences). The method's theoretical backbone is a robust variance reduction technique that exploits these affinities to adjust learning rates dynamically, offering a seamless transition between federated and independent learning paradigms.
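As a rough illustration of how a single score in [0,1] could combine an objective gap and an environment gap, the sketch below takes the larger of a scaled parameter distance and a total variation distance between discrete distributions. This is a hypothetical construction for intuition only: the paper's actual definition of δ is not given in this summary, and the names `objective_gap`, `total_variation`, `toy_delta`, and the `radius` parameter are all assumptions.

```python
import math


def total_variation(p, q):
    """Total variation distance between two discrete distributions on the same support."""
    if len(p) != len(q):
        raise ValueError("distributions must share the same support size")
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


def objective_gap(theta_i, theta_j, radius=1.0):
    """Euclidean distance between parameter vectors, scaled by an assumed
    diameter of 2 * radius and clipped to [0, 1]."""
    return min(1.0, math.dist(theta_i, theta_j) / (2.0 * radius))


def toy_delta(theta_i, theta_j, p_i, p_j, radius=1.0):
    """Hypothetical heterogeneity score in [0, 1]: 0 for identical agents,
    1 when either the objectives or the environments are maximally different."""
    return max(objective_gap(theta_i, theta_j, radius), total_variation(p_i, p_j))


if __name__ == "__main__":
    # Two agents with different parameters and mildly different environments.
    print(toy_delta([0.0, 0.0], [0.6, 0.8], [0.5, 0.5], [0.75, 0.25]))
```

Taking the maximum is one design choice among several; it makes the score conservative, so that agents are treated as dissimilar if they differ strongly in either objective or environment.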

The theoretical contribution demonstrates that PCL can interpolate between these extremes, achieving linear federated speedup when agents are similar and defaulting to independent learning strategies when differences are stark.

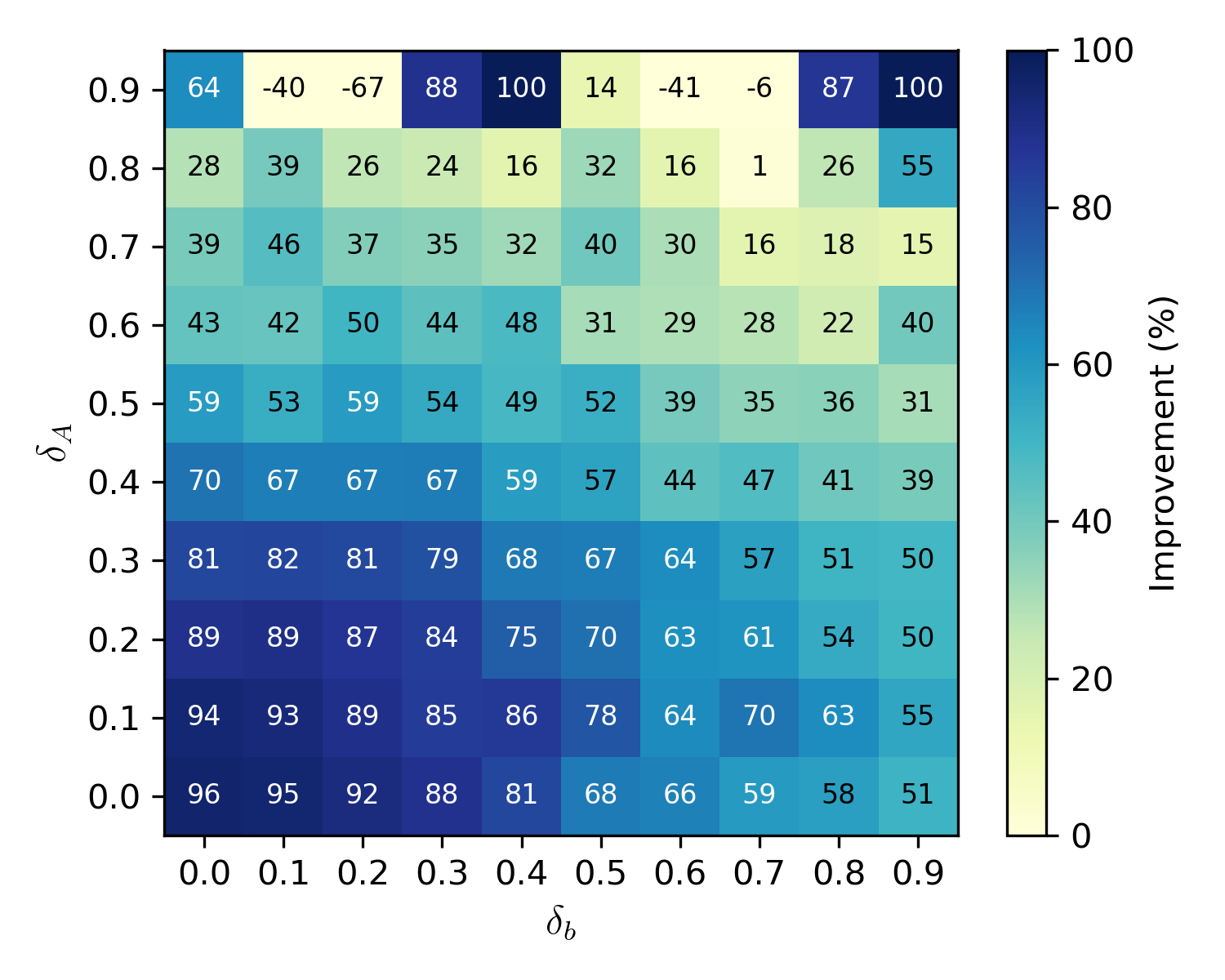

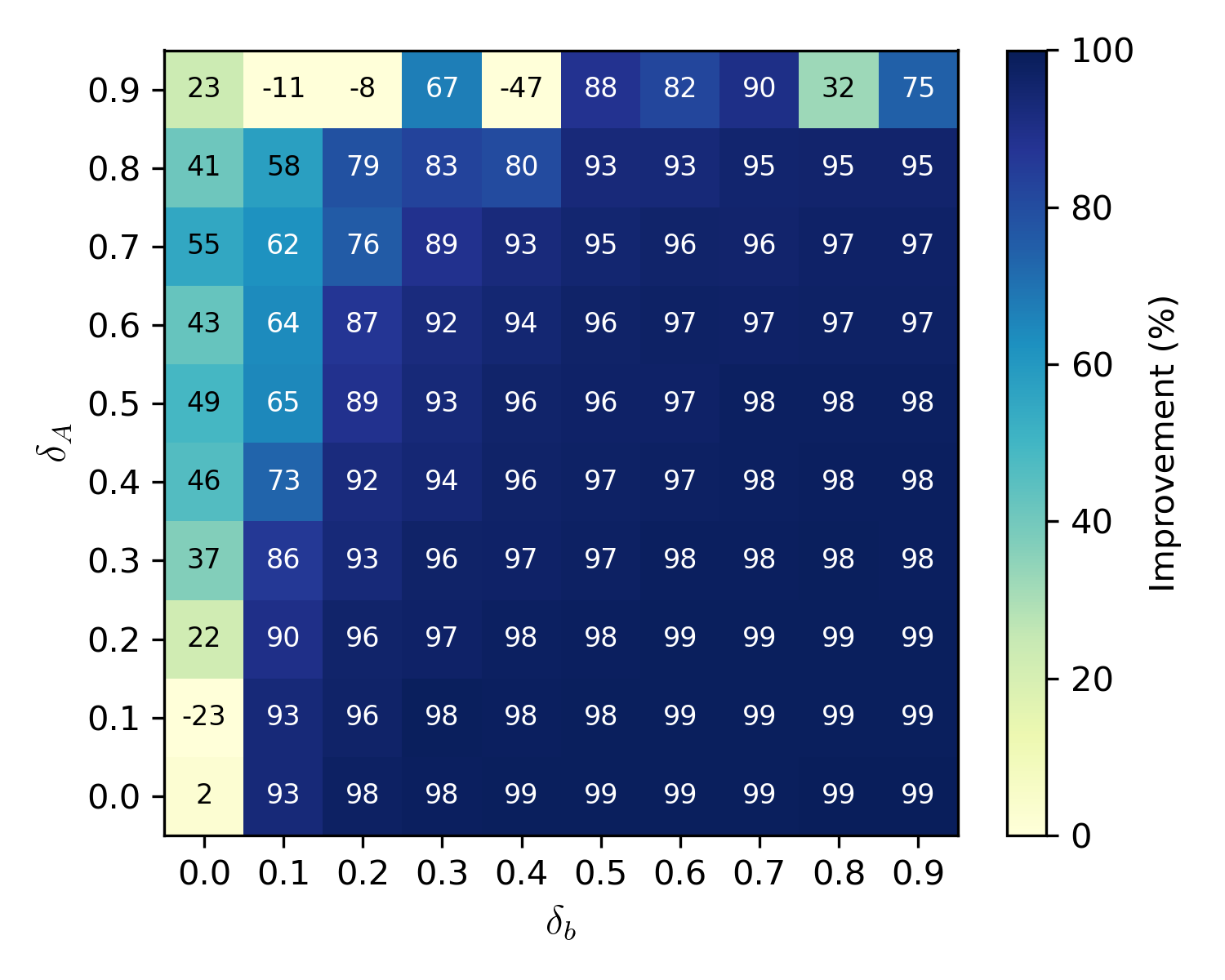

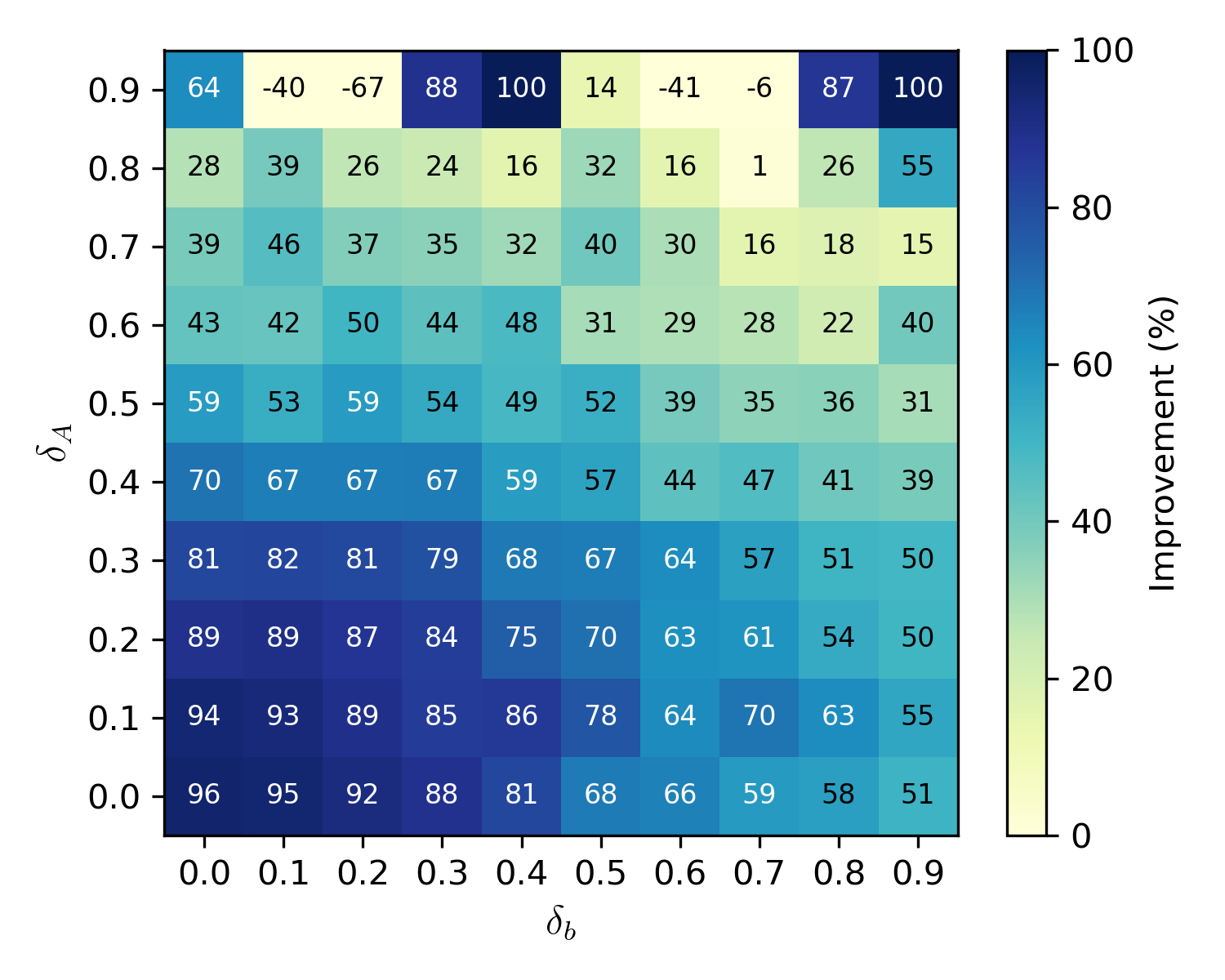

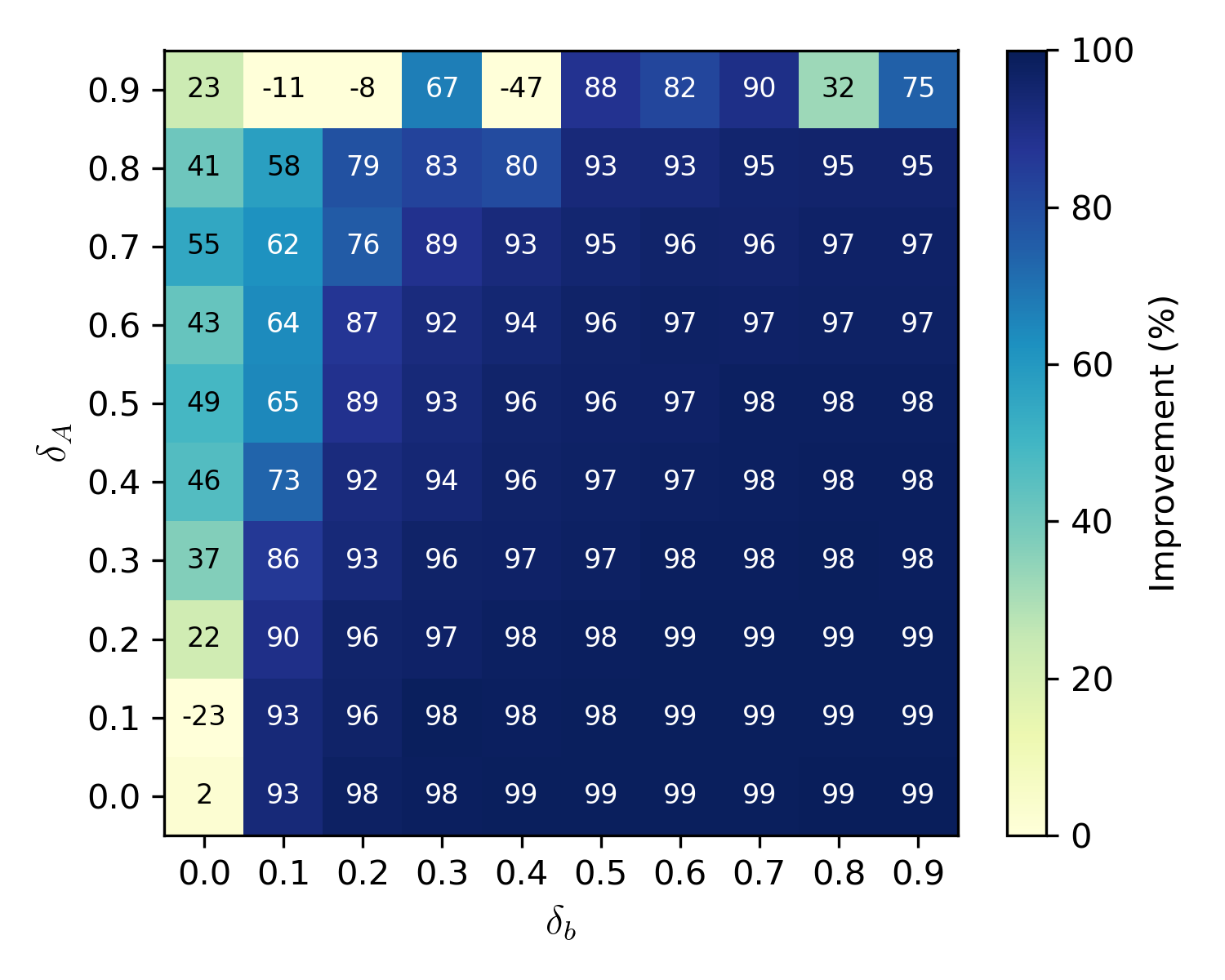

Figure 2: Over independent learning.

Empirical Results

Simulation results illustrate that PCL outperforms traditional federated learning (FedAvg) and independent learning in various heterogeneity settings, as highlighted in Figure 1. Notably, PCL achieves faster convergence with lower mean squared error (MSE) than the baseline methods across the range of agent heterogeneity levels. In highly heterogeneous environments, PCL's performance closely aligns with independent learning, which is consistent with its adaptive design.

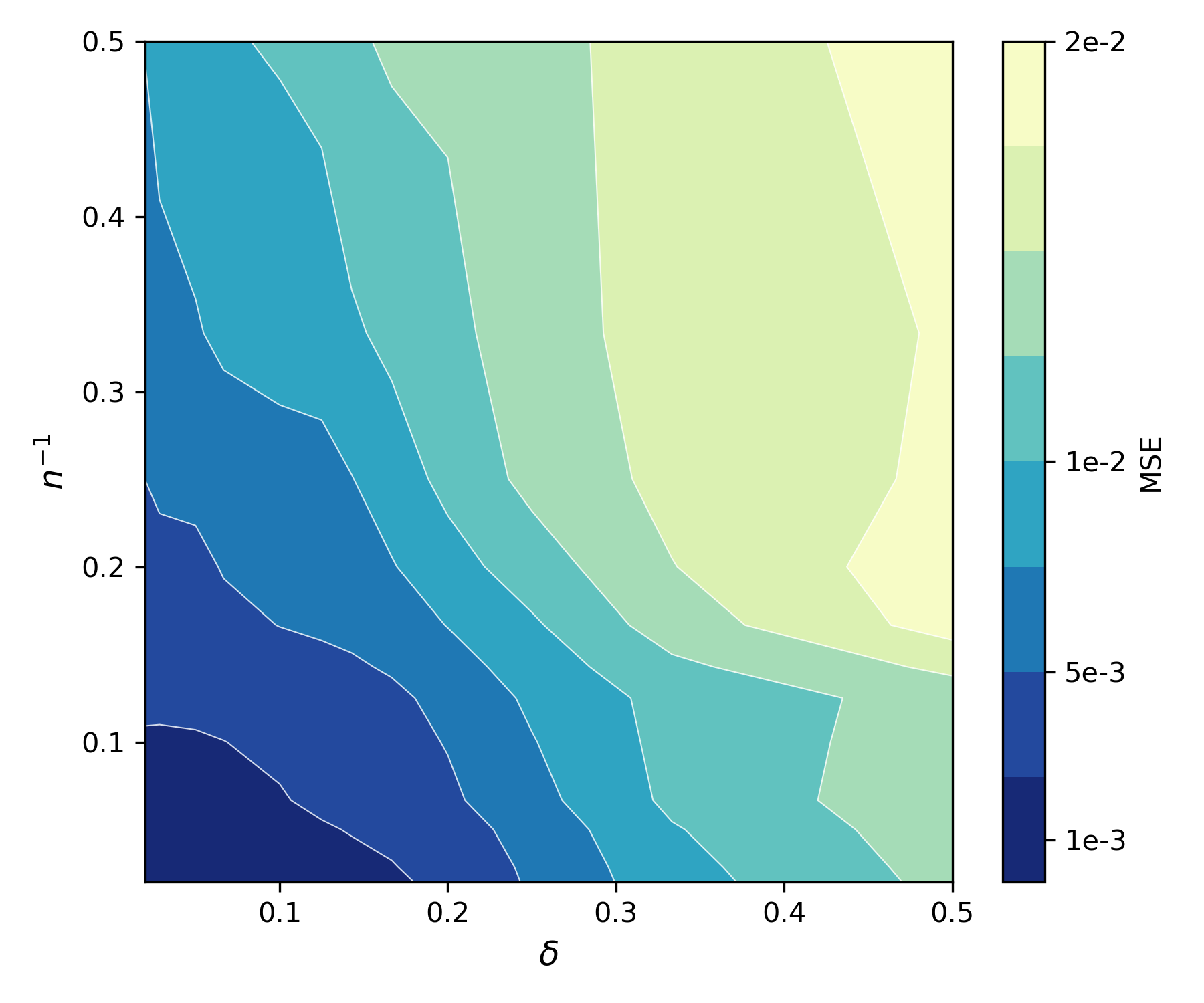

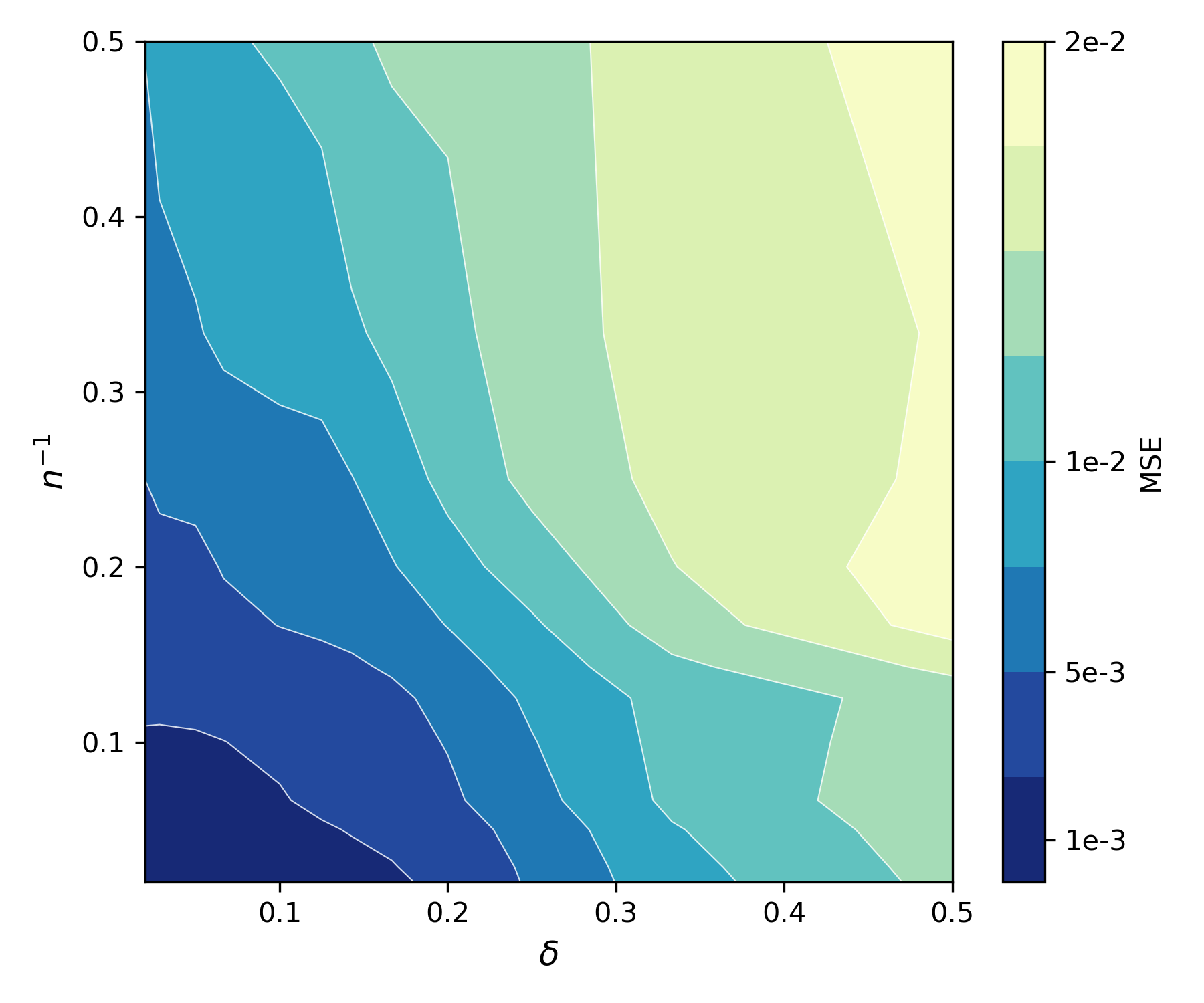

Figure 3: Iso-performance contours of PCL.

Figure 3 shows iso-performance contours, indicating that PCL achieves balanced performance by distributing the learning workload according to agent affinities. This highlights the potential of PCL in dynamic agent networks where communication costs and agent capabilities vary significantly.

Conclusions and Implications

PCL offers a flexible, adaptive learning framework capable of harmonizing collaborative and personalized decision-making in multi-agent systems. Its ability to seamlessly transition between collaborative and independent operations based on real-time affinity measurements makes it suitable for applications requiring a high degree of personalization, such as customized recommendation systems, personalized medicine, and adaptive user interfaces in AI-driven applications.

Future research directions include investigating more complex communication structures, enhancing privacy and scalability, and extending the PCL framework to broader stochastic optimization problems. These advancements can further solidify PCL's role in advancing autonomous, intelligent multi-agent systems capable of efficiently leveraging collaboration without sacrificing individualized agent performance.