- The paper introduces KVComm, a framework that leverages attention scores and Gaussian priors to selectively share key-value pairs for efficient LLM communication.

- It reduces computational overhead and addresses hidden state bias by focusing on intermediate layer representations, ensuring effective data transmission.

- Experimental results show that KVComm outperforms baseline methods, achieving competitive performance with significantly lower data transmission requirements.

KVComm: Enabling Efficient LLM Communication through Selective KV Sharing

Introduction

The paper "KVComm: Enabling Efficient LLM Communication through Selective KV Sharing" (2510.03346) addresses the inefficiency of inter-LLM communication in multi-agent systems. Existing methods rely either on natural language, which incurs high inference costs and loses information, or on hidden states, which suffer from information concentration bias and inefficient data sharing. KVComm instead shares a selected subset of key-value (KV) pairs, balancing efficiency and effectiveness: it communicates rich semantic information without operating directly on hidden states.

Motivation

KVComm is motivated by the shortcomings of existing communication protocols: natural language communication is inefficient, and hidden state information is concentrated in a biased way. KV pairs provide a representative form of activation information across layers, and models can use the encoded information efficiently through their attention mechanisms.

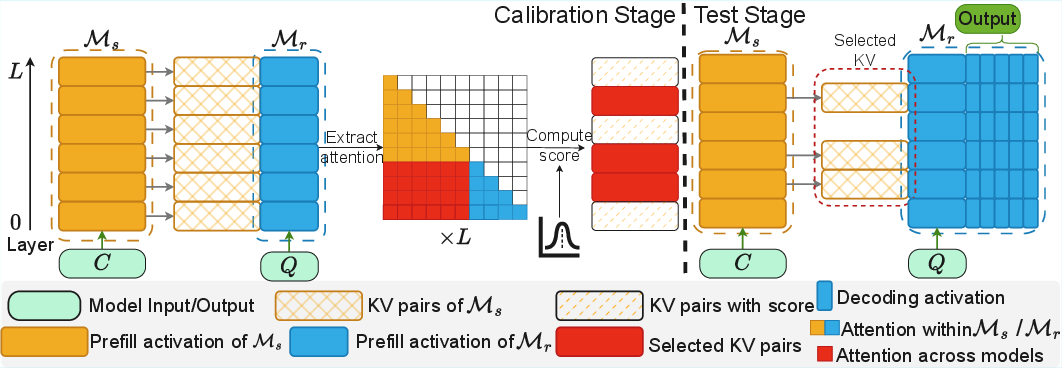

KVComm Framework

KVComm adopts a selection strategy based on attention importance scores and Gaussian priors to identify the most informative KV pairs for communication.

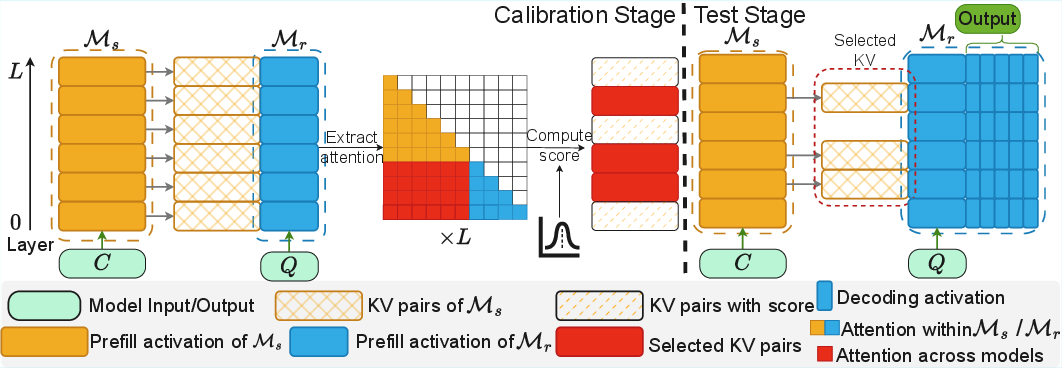

Figure 1: KVComm framework for efficient LLM communication through selective KV sharing.

In scenarios where LLMs collaboratively solve tasks, a sender model M_s processes a context C and generates information I_C to be transmitted. A receiver model M_r combines I_C with a query Q to produce the final output. The communication protocol should transmit as little data as possible without sacrificing effectiveness.
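The setup above can be illustrated with a toy sketch in which the model that processes the context transmits KV pairs for a few layers, and the model that answers the query attends over them alongside its own KV pairs. This is a minimal sketch, not the paper's implementation: it uses a single attention head, and the names `attend`, `receiver_attention`, and `payload`, as well as the choice to prepend the shared KV pairs, are assumptions made for illustration.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Single-head scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    logits = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(logits)
    dim = len(values[0])
    out = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return out, weights

def receiver_attention(query, own_kv, shared_kv=None):
    """Attend over the receiver's own KV pairs, with the sender's KV pairs
    prepended when this layer was shared (prepending is an assumption)."""
    keys, values = own_kv
    if shared_kv is not None:
        keys = shared_kv[0] + keys
        values = shared_kv[1] + values
    return attend(query, keys, values)

# Hypothetical sender cache: layer index -> (keys, values) for the context.
sender_cache = {
    0: ([[1.0, 0.0]], [[1.0, 1.0]]),
    1: ([[0.0, 1.0]], [[2.0, 2.0]]),
}
selected_layers = [1]  # only these layers are transmitted
payload = {layer: sender_cache[layer] for layer in selected_layers}

own_kv = ([[0.5, 0.5]], [[0.0, 0.0]])  # receiver's KV pairs for the query
query = [0.0, 1.0]
for layer in (0, 1):
    out, weights = receiver_attention(query, own_kv, payload.get(layer))
    print(layer, len(weights))
```

At layers that were not transmitted, the receiver simply attends over its own KV pairs, so the payload size grows only with the number of selected layers.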

Limitations of Previous Approaches

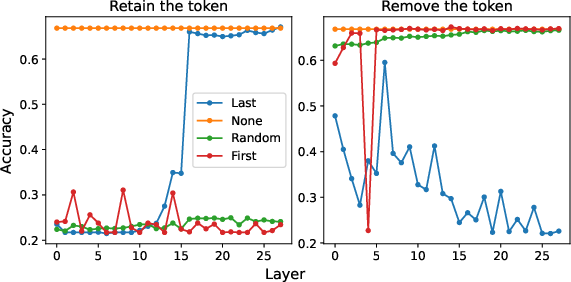

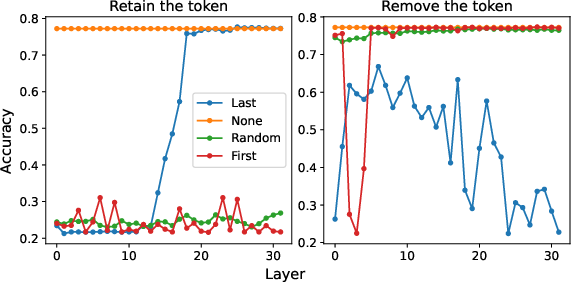

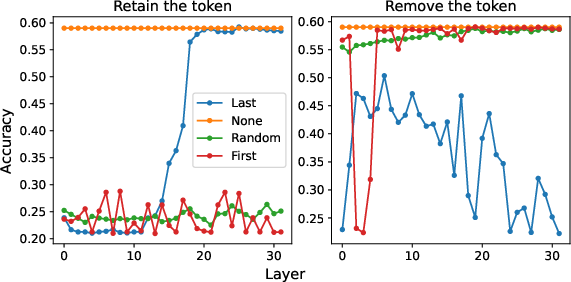

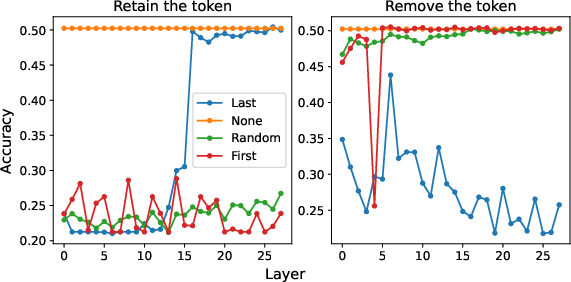

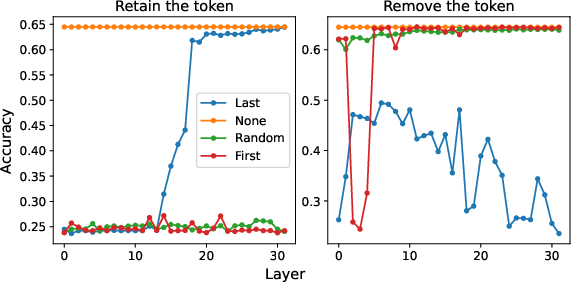

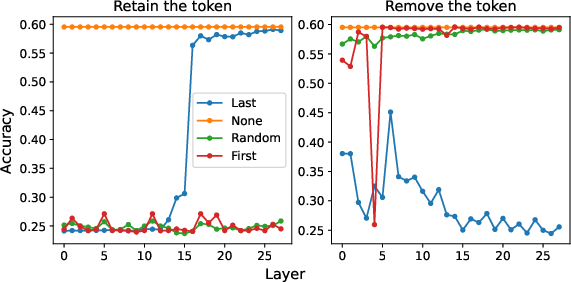

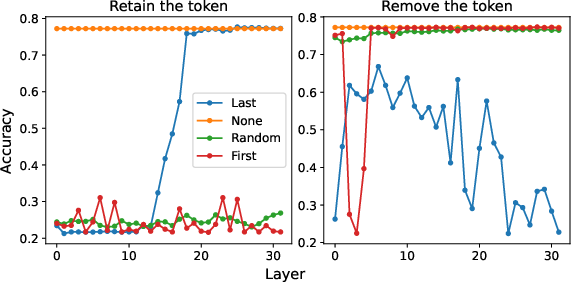

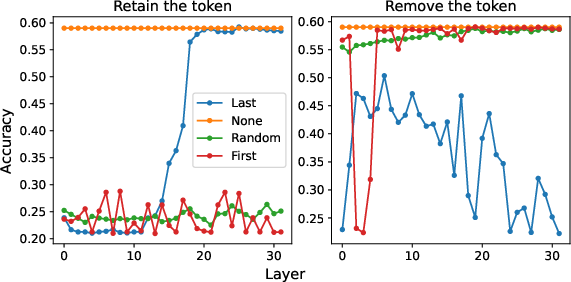

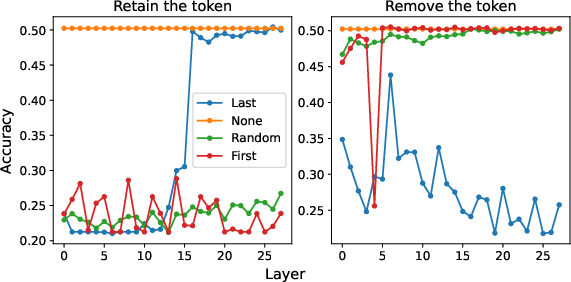

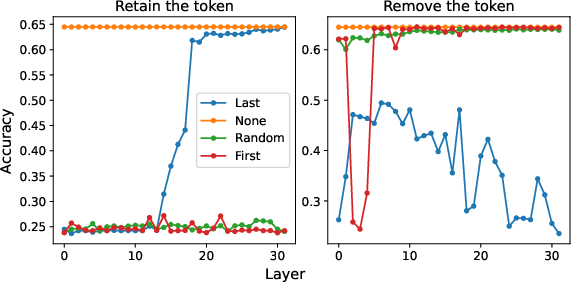

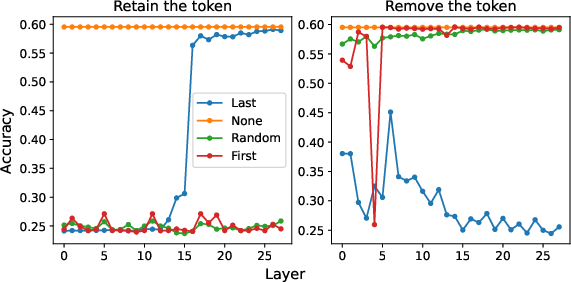

Hidden states have been proposed as a communication medium before, but they suffer from information concentration bias: most of the information sits in the last token's hidden state, particularly in later layers. This bias causes significant information loss when hidden states are used for communication. Methods that instead rely on all tokens' hidden states face a trade-off between computational inefficiency and performance degradation.

Figure 2: Compared to other token positions, the last token's hidden state is the most critical, especially in later layers.

Efficient Communication through Selective KV Sharing

KVComm avoids these problems by sharing only selected KV pairs, chosen using attention scores and the semantic richness of intermediate layers. This selection keeps communication efficient while retaining essential context information.

KV Selection Strategies

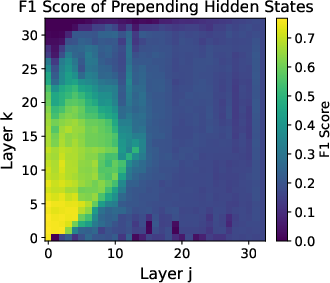

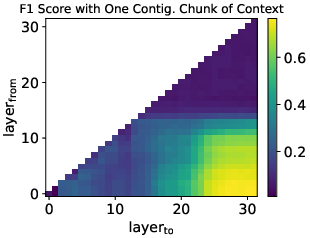

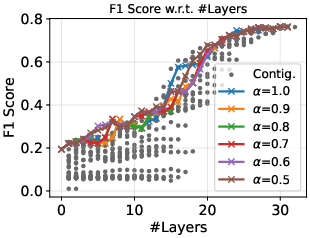

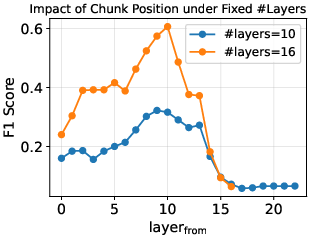

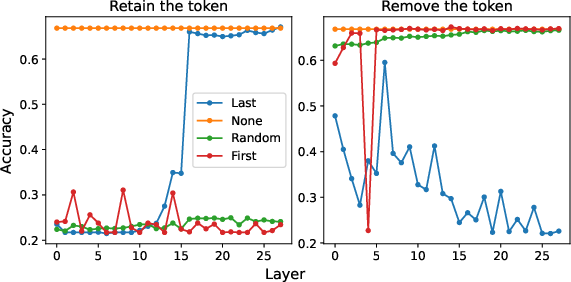

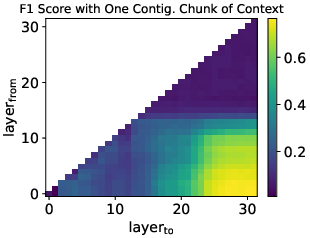

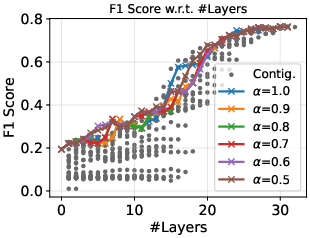

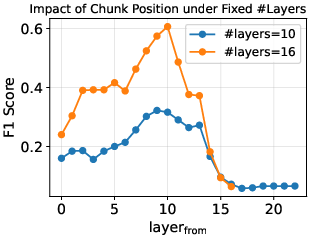

The selection rests on two hypotheses: that knowledge is embedded mainly in intermediate layers, and that the attention distribution is a useful proxy for communication value.

Figure 3: Effective communication with limited hyperparameters.

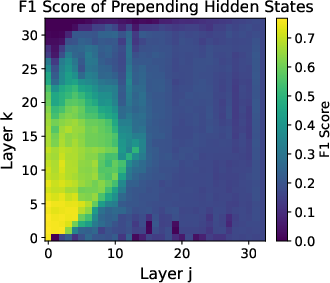

The attention importance score of a layer averages the attention weights placed on context tokens. The selection is then refined with a Gaussian prior, defined by a center and a spread, which favors intermediate layers.
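One way to combine these two signals is sketched below. This is a minimal sketch under stated assumptions rather than the paper's exact formulation: the linear mixing weight `alpha`, the top-`k` cutoff, the unnormalized Gaussian, and the function names are all hypothetical, and the attention weights are assumed to be already averaged over heads.

```python
import math

def gaussian_prior(num_layers, center, spread):
    """Unnormalized Gaussian weight per layer index, peaking at `center`."""
    return [math.exp(-((layer - center) ** 2) / (2 * spread ** 2))
            for layer in range(num_layers)]

def attention_importance(attn, num_context):
    """attn[l][q][k] is the attention weight from query token q to key
    position k at layer l. Returns, per layer, the mean attention mass
    that query tokens place on the first `num_context` key positions."""
    scores = []
    for layer in attn:
        mass = [sum(row[:num_context]) for row in layer]
        scores.append(sum(mass) / len(mass))
    return scores

def select_layers(attn_scores, prior, alpha, k):
    """Mix attention importance with the prior (assumed linear mix) and
    return the indices of the k highest-scoring layers, in layer order."""
    combined = [alpha * a + (1 - alpha) * p
                for a, p in zip(attn_scores, prior)]
    ranked = sorted(range(len(combined)),
                    key=lambda layer: combined[layer], reverse=True)
    return sorted(ranked[:k]), combined

# Hypothetical per-layer attention importance scores for a 6-layer model.
attn_scores = [0.1, 0.2, 0.6, 0.5, 0.2, 0.1]
prior = gaussian_prior(num_layers=6, center=2.5, spread=1.0)
selected, combined = select_layers(attn_scores, prior, alpha=0.5, k=2)
print(selected)  # [2, 3]
```

With a small `spread`, the prior dominates and the selection stays near the center layer; with `alpha` close to 1, the selection follows the attention scores alone.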

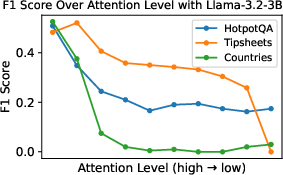

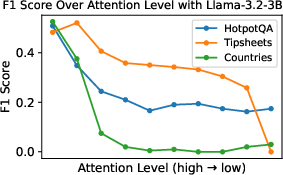

Figure 4: Better communication performance with higher attention level.

Experimental Results

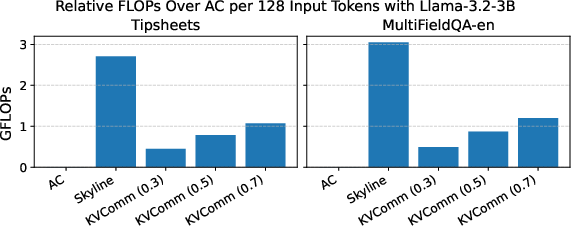

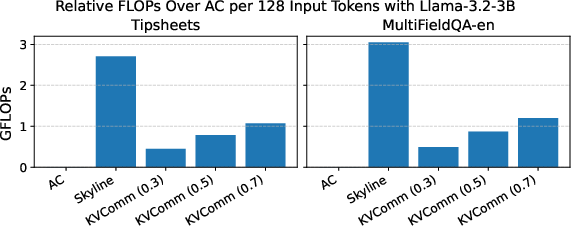

KVComm significantly reduces computational cost compared to baselines while maintaining or improving performance. It consistently compares favorably with methods such as Skyline and NLD, requiring less data to be transmitted.

Figure 5: Llama-3.2-3B on MMLU Social Science

The experiments show that KVComm achieves performance comparable to direct input merging methods with drastically reduced communication overhead. These results demonstrate KVComm's practical utility in scalable multi-agent systems.
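To give a rough sense of where the savings come from, the sketch below estimates the KV payload when only some layers are shared. It assumes that whole layers are selected and that every layer's KV cache has the same shape. The model dimensions in the example are hypothetical placeholders, not figures reported in the paper.

```python
def kv_cache_bytes(num_layers, num_tokens, num_kv_heads, head_dim,
                   bytes_per_value=2):
    """Size of keys plus values for `num_layers` layers.
    The leading factor 2 counts one key tensor and one value tensor."""
    return (2 * num_layers * num_tokens * num_kv_heads
            * head_dim * bytes_per_value)

def transmission_fraction(num_selected, num_layers):
    """Share of the full KV cache sent when only some layers are shared."""
    return num_selected / num_layers

# Hypothetical configuration: 28 layers, 1000 context tokens,
# 8 KV heads of dimension 128, 16-bit values.
full = kv_cache_bytes(28, 1000, 8, 128)
shared = kv_cache_bytes(8, 1000, 8, 128)  # sharing 8 of 28 layers
print(full, shared, transmission_fraction(8, 28))
```

Under these assumptions the payload scales linearly with the number of selected layers, so sharing 8 of 28 layers sends 8/28 of the full cache.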

Conclusion

KVComm offers an efficient approach to LLM communication through selective KV sharing, addressing both hidden state bias and the inefficiency of natural language. By focusing on intermediate layers and using attention scores, it lays a foundation for future inter-LLM communication strategies that balance computational demands with rich semantic data sharing.

The broader implications of KVComm lie in its potential to enhance collaborative problem-solving capabilities in AI-driven multi-agent environments, paving the way for more scalable and efficient systems.

This approach opens avenues for further research in optimizing communication protocols by blending KVComm's principles with existing methods to address varying complexities in real-world applications.