- The paper introduces a novel automated approach for segmenting 3D objects into parts using a point-based prompt system and streamlined network design.

- It employs PointTransformerV3 for robust multi-scale feature extraction and uses an IoU predictor to optimize segmentation accuracy across datasets.

- P3-SAM achieves state-of-the-art performance on diverse benchmarks, emphasizing efficiency and versatility in fully automated 3D segmentation.

Detailed Analysis of P3-SAM for 3D Part Segmentation

The paper "P3-SAM: Native 3D Part Segmentation" (2509.06784) presents an approach for automatically segmenting 3D objects into their constituent parts. The work targets limitations of existing methods and aims for accurate, fully automated 3D part segmentation by combining point-based prompts with a newly proposed network architecture. This essay analyzes the methodology, results, and implications of the research.

Methodology

The proposed P3-SAM model is structured around three core components: a feature extractor, multiple segmentation heads, and an IoU predictor. The model's design is inspired by the Segment Anything Model (SAM), but it has been specifically tailored for 3D segmentation tasks, omitting complex decoders and instead employing a streamlined approach.

Network Architecture

Figure 1: The Network Architecture of P3-SAM, showing the process from feature extraction to multi-mask segmentation.

Feature Extraction and Segmentation: The feature extractor employs PointTransformerV3 to obtain point-wise, multi-scale features from the input point cloud. The segmentation module is a two-stage, multi-mask segmentor: for a given point prompt it predicts several candidate masks at different scales, and an IoU predictor scores each candidate so that their quality can be compared.
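To make the role of the IoU predictor concrete, the following is a minimal sketch of how a single mask could be chosen among several candidates for one prompt. It assumes, as in SAM-style models, that the candidate with the highest predicted IoU is kept; the summary above does not state the exact selection rule, and the function name `select_best_mask` and its inputs are hypothetical stand-ins for the network's outputs.

```python
from typing import List, Sequence, Tuple


def select_best_mask(
    masks: Sequence[Sequence[bool]],
    predicted_ious: Sequence[float],
) -> Tuple[int, List[bool]]:
    """Return (index, mask) of the candidate with the highest predicted IoU.

    masks: one boolean per-point mask for each segmentation head (hypothetical
        stand-in for the multi-mask segmentor's output).
    predicted_ious: one quality score per candidate (hypothetical stand-in
        for the IoU predictor's output).
    Assumption: highest predicted IoU wins; this is not confirmed by the text.
    """
    if len(masks) == 0 or len(masks) != len(predicted_ious):
        raise ValueError("need one predicted IoU per candidate mask")
    best = max(range(len(masks)), key=lambda i: predicted_ious[i])
    return best, list(masks[best])


# Three candidate masks over a 4-point cloud, from fine to coarse.
candidates = [
    [True, False, False, False],
    [True, True, False, False],
    [True, True, True, True],
]
index, mask = select_best_mask(candidates, [0.35, 0.90, 0.60])
```

In this toy input the second candidate is selected because its score is the largest. A real system would take both the masks and the scores from the trained network rather than from hand-written lists.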

Training Strategy: Training of the P3-SAM model uses a vast dataset comprising 3.7 million 3D models with part-level annotations. The dataset emphasizes diverse and complex models, reinforcing the network's generalization capabilities.

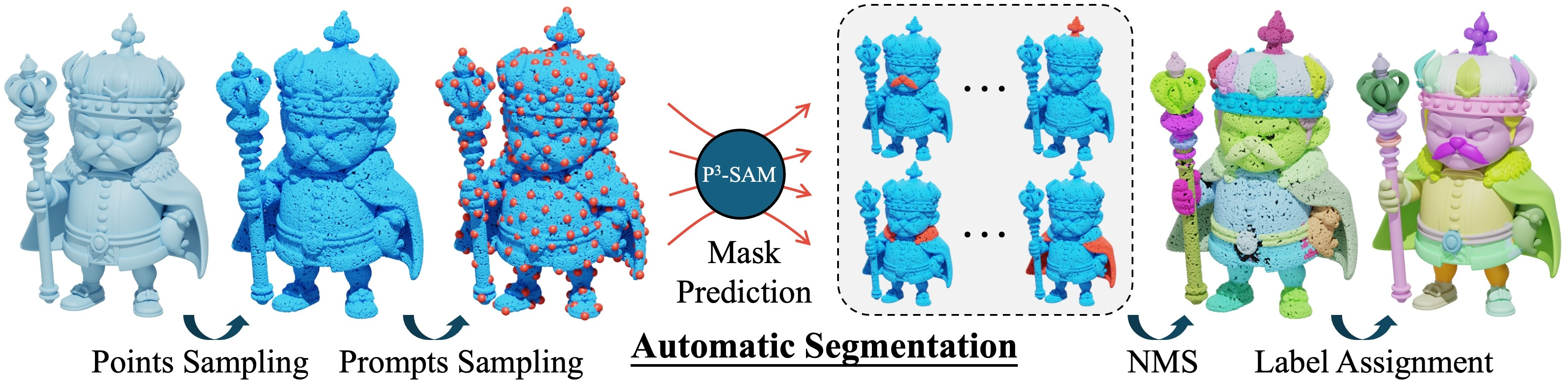

Automatic Segmentation

Critical to this research is the development of an automatic segmentation pipeline.

Figure 2: Automatic Segmentation Pipeline illustrating the use of FPS, NMS, and mesh reconstruction for part segmentation.

This process involves sampling prompt points, predicting masks for each prompt point, and merging the resulting masks with Non-Maximum Suppression (NMS) so that overlapping predictions collapse into a coherent set of parts. Because the pipeline is fully automated, it removes the manual intervention that competing methods require, which improves efficiency in large-scale applications.
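The two generic building blocks named in this pipeline, farthest point sampling (FPS, mentioned in the Figure 2 caption) and mask-level NMS, can be sketched in plain Python as follows. This is an illustrative sketch under stated assumptions, not the paper's implementation: masks are represented as sets of point indices, the scores stand in for predicted IoU values, and the threshold of 0.5 and all function names are hypothetical.

```python
import math
from typing import List, Sequence, Set

Point = Sequence[float]


def farthest_point_sampling(points: Sequence[Point], num_samples: int,
                            start_index: int = 0) -> List[int]:
    """Greedily pick indices so each new point is farthest from those chosen."""
    n = len(points)
    if n == 0 or num_samples <= 0:
        return []
    num_samples = min(num_samples, n)
    selected = [start_index]
    # Distance from every point to its nearest already-selected point.
    min_dist = [math.dist(p, points[start_index]) for p in points]
    while len(selected) < num_samples:
        nxt = max(range(n), key=lambda i: min_dist[i])
        selected.append(nxt)
        for i, p in enumerate(points):
            d = math.dist(p, points[nxt])
            if d < min_dist[i]:
                min_dist[i] = d
    return selected


def mask_iou(a: Set[int], b: Set[int]) -> float:
    """Intersection over union of two masks given as sets of point indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def mask_nms(masks: Sequence[Set[int]], scores: Sequence[float],
             iou_threshold: float = 0.5) -> List[int]:
    """Keep masks in descending score order, dropping near-duplicates.

    iou_threshold is an assumed value chosen for illustration only.
    """
    order = sorted(range(len(masks)), key=lambda i: scores[i], reverse=True)
    kept: List[int] = []
    for i in order:
        if all(mask_iou(masks[i], masks[j]) <= iou_threshold for j in kept):
            kept.append(i)
    return kept


cloud = [(0, 0, 0), (1, 0, 0), (10, 0, 0), (10, 1, 0)]
prompts = farthest_point_sampling(cloud, 2)
kept = mask_nms([{0, 1, 2}, {0, 1, 2, 3}, {7, 8}], [0.9, 0.8, 0.7])
```

In the toy example, the second mask overlaps the first with an IoU of 0.75 and is suppressed, while the disjoint third mask survives. The mesh reconstruction step mentioned in the figure caption is not covered by this sketch.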

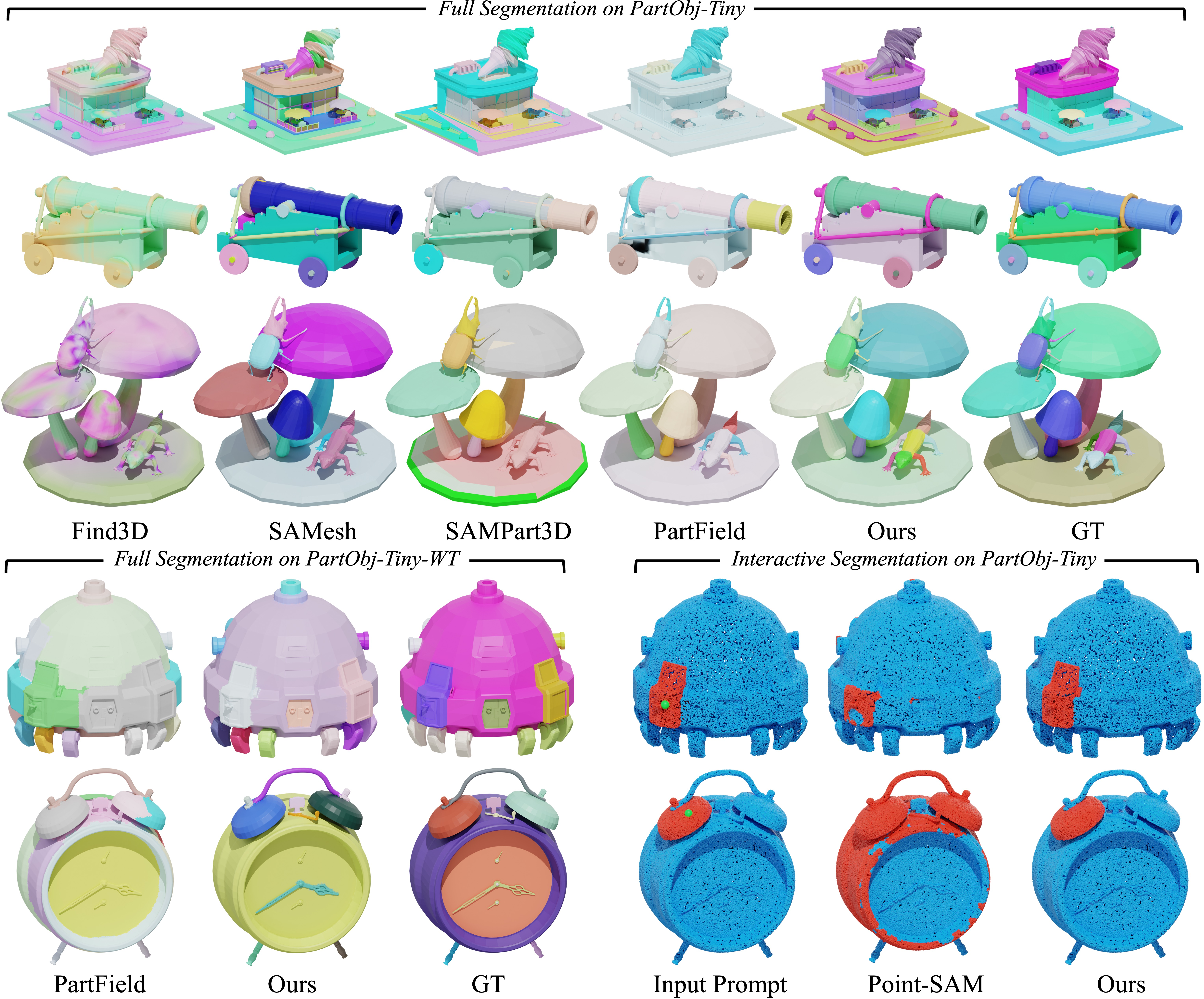

Experimental Results

The paper reports experiments on multiple datasets in which P3-SAM outperforms the methods it is compared against.

Benchmarking

Figure 3: Comparison of P3-SAM with other methods across different tasks.

P3-SAM achieves state-of-the-art performance on datasets such as PartObj-Tiny, PartObj-Tiny-WT, and PartNetE, excelling in both segmentation accuracy and robustness. Notably, the model performs equally well on non-watertight and watertight datasets, highlighting its versatility.

Applications

Figure 4: The three applications of P3-SAM: Multi-Prompt Segmentation, Hierarchical Segmentation, and Part Generation.

P3-SAM also supports applications beyond automatic segmentation: multi-prompt segmentation, which gives finer-grained control over the segmented parts; hierarchical part segmentation, which exposes object structure at several levels of detail; and part generation.

Theoretical Implications and Future Research

A native 3D part segmentation model is a meaningful step for the field. Because P3-SAM operates directly on 3D data rather than relying on 2D projections, it closes a gap in 3D model analysis and opens the way to further work on automatic processing and interactive applications.

Conclusion

P3-SAM marks a substantial advance in 3D part segmentation, addressing prevalent challenges with a comprehensive and efficient solution. By combining a large training dataset with a new network architecture, the model sets new benchmarks in segmentation accuracy and robustness. Future work might integrate spatial volume understanding to further enrich 3D segmentation capabilities. The implications of this research extend to practical domains such as 3D modeling, virtual reality, and robotics, where better part segmentation can drive innovation and efficiency.