- The paper reviews how generative AI models, including VAEs and GANs, learn the distribution of known crystal structures and propose new candidates directly, reducing reliance on costly energy evaluations in crystal structure prediction (CSP).

- It evaluates various architectures and data representations, emphasizing their impact on model robustness, sample diversity, and computational efficiency.

- The paper highlights practical applications and future research directions such as defect analysis and property-driven design in materials discovery.

Generative AI for Crystal Structures: A Review

Introduction

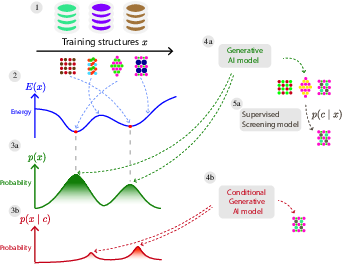

The paper "Generative AI for Crystal Structures: A Review" surveys the current state of generative modeling for inorganic crystalline materials. Its focus is the computational bottleneck of crystal structure prediction (CSP). Classical approaches search for low-energy structures by evaluating the energy of many trial configurations, often with expensive first-principles calculations. Generative methods instead learn the distribution of known structures from existing databases and propose candidate crystals directly, which reduces the number of costly energy evaluations required.

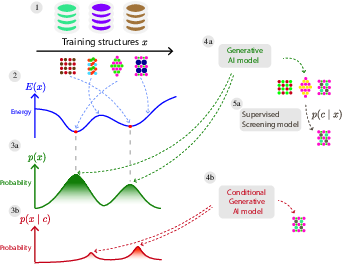

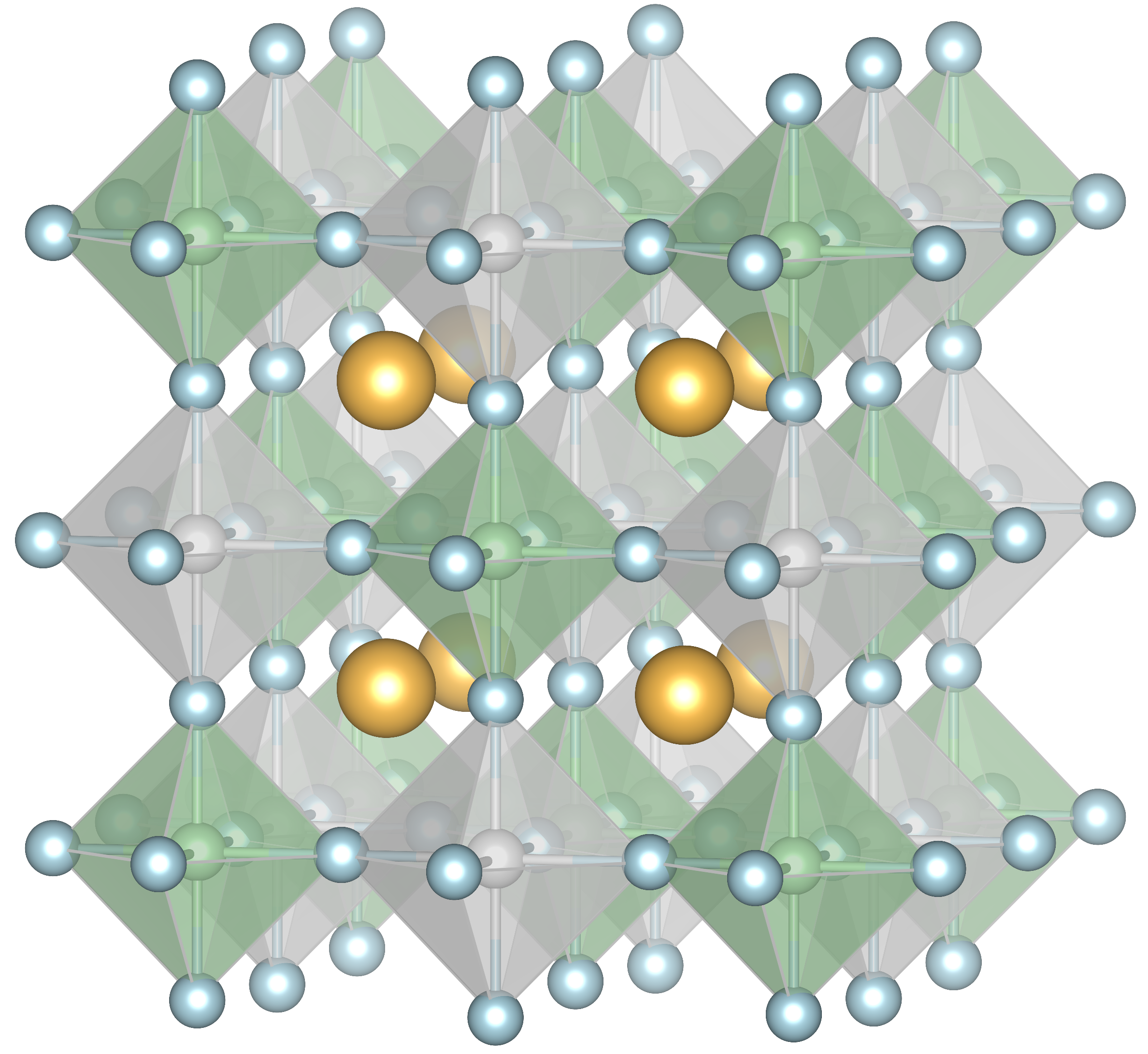

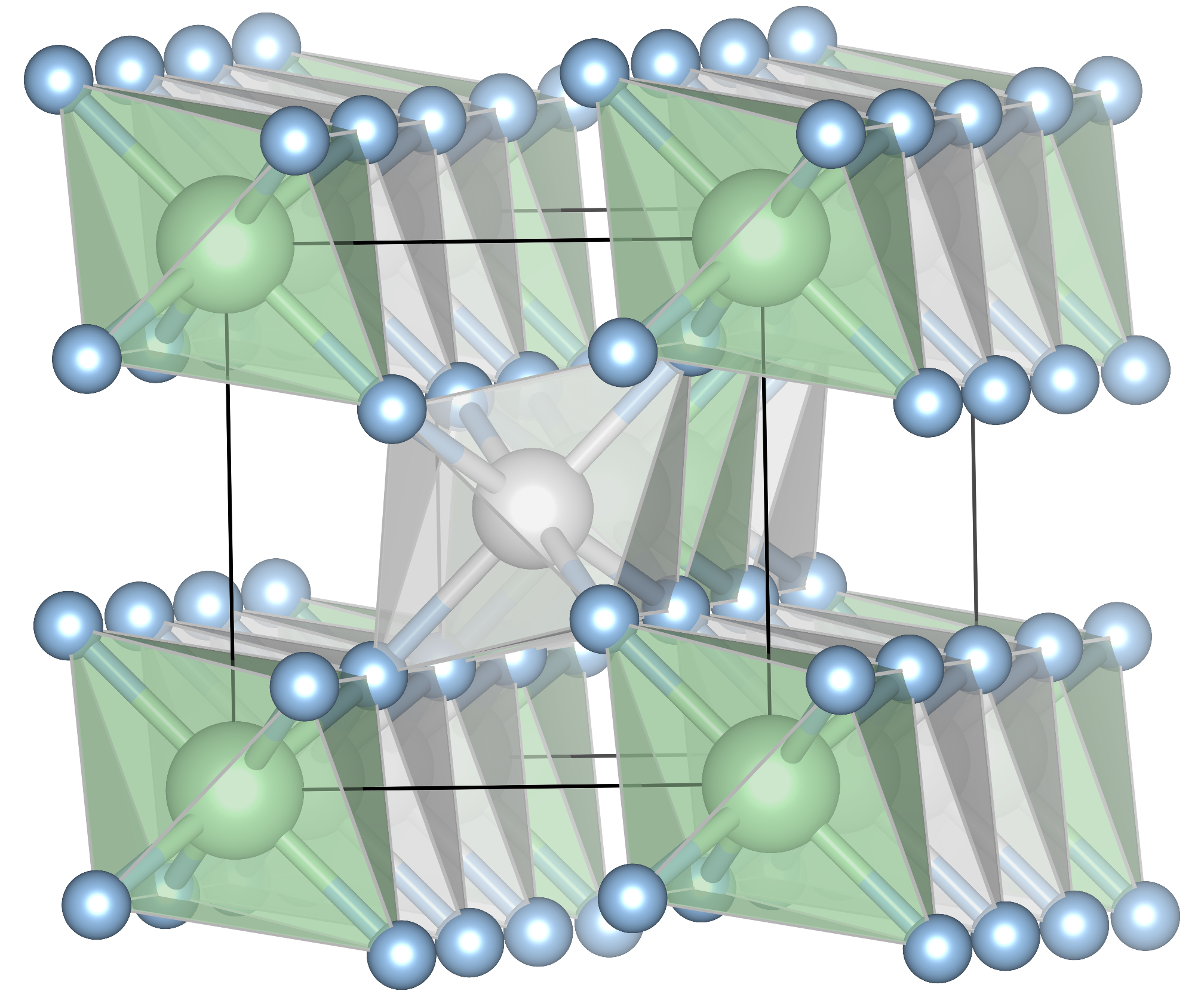

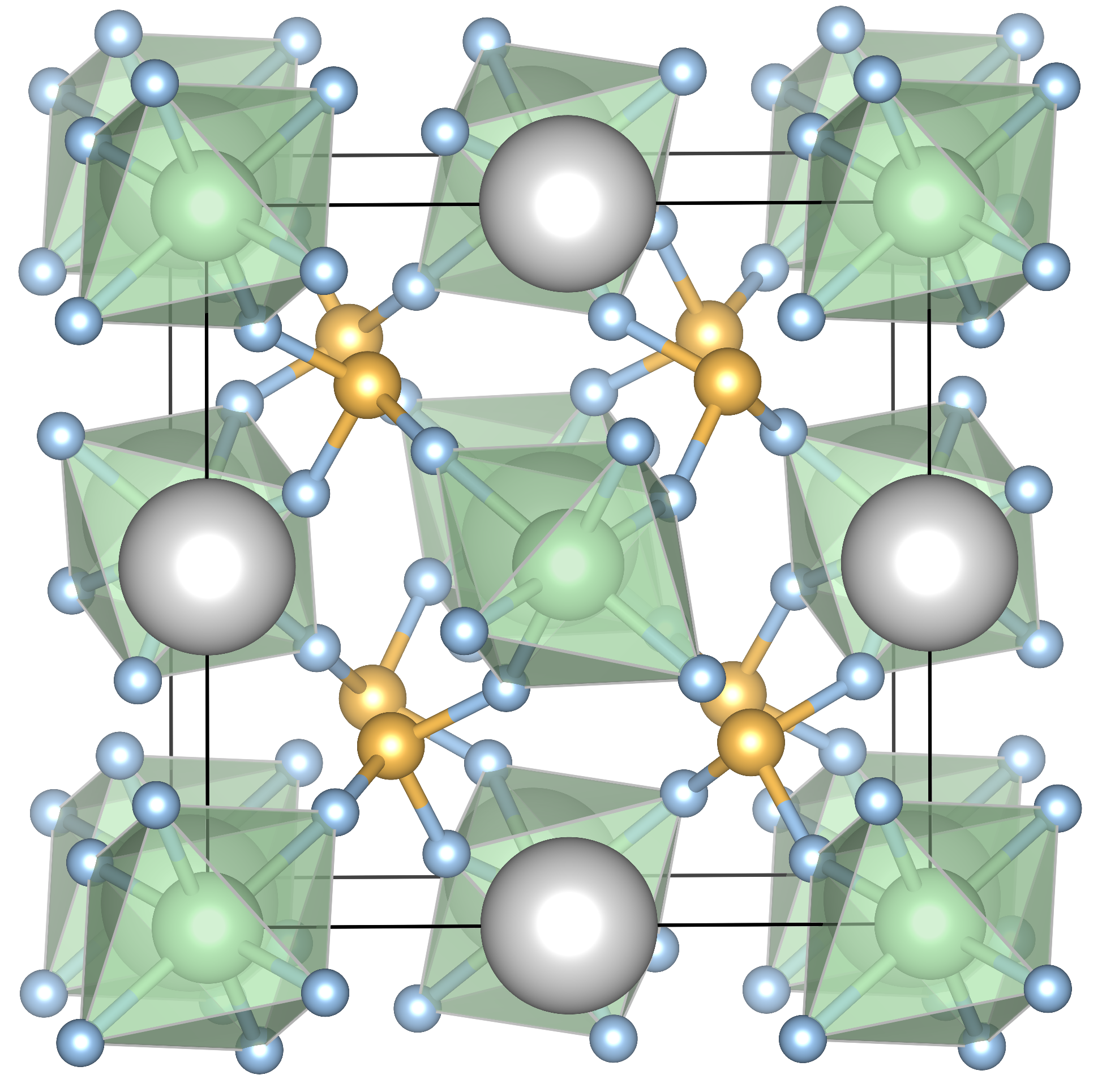

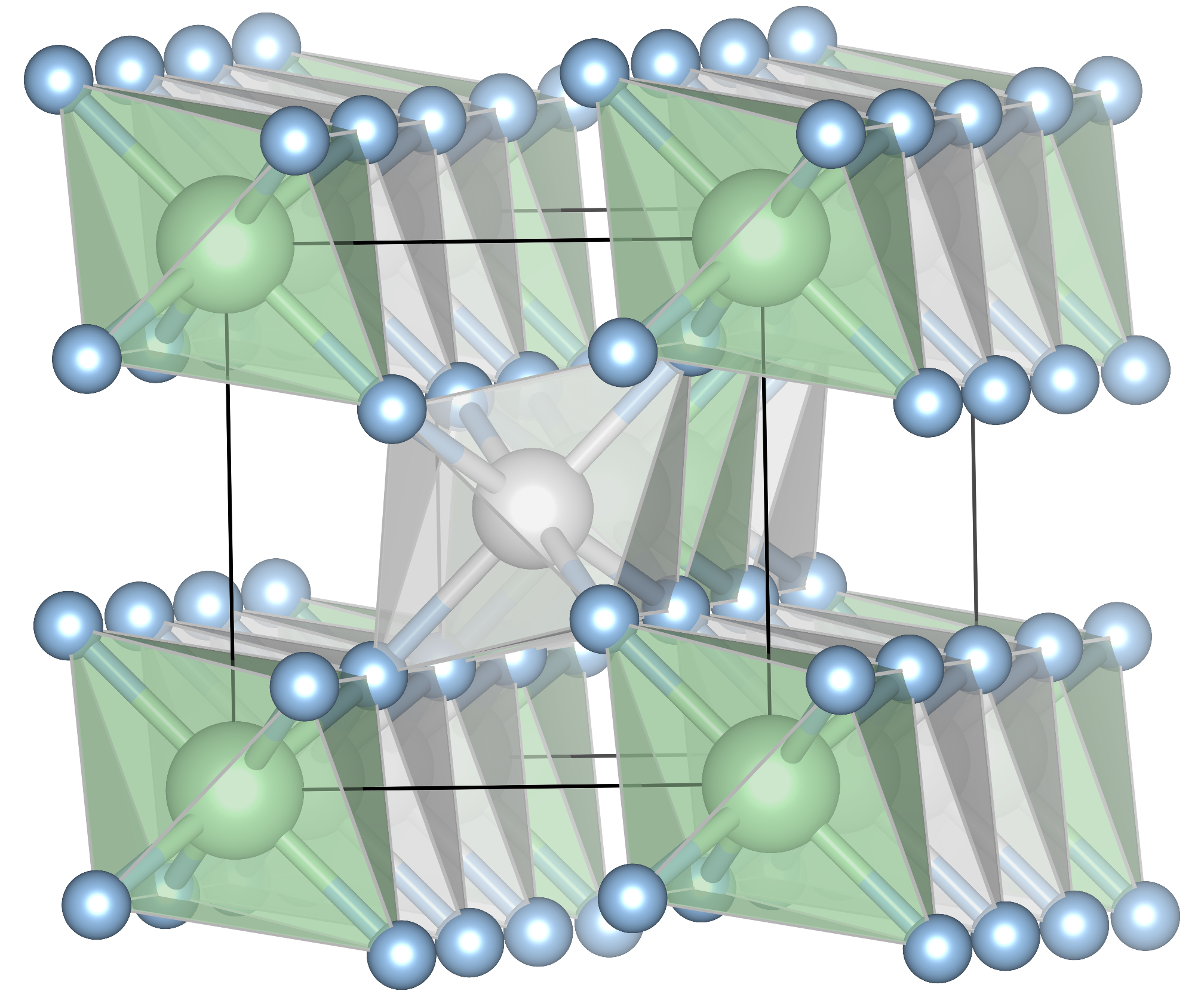

Figure 1: Schematic overview of generative AI for crystal structure generation. (1) Crystal structure training data is collected from various databases.

Generative Model Architectures

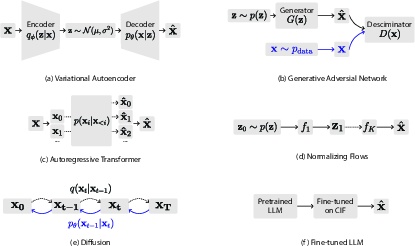

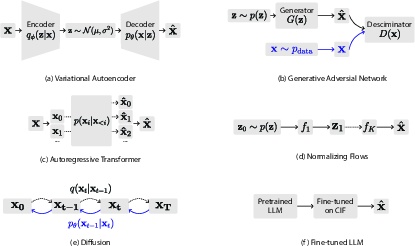

The paper groups the generative architectures used for crystal structures into several families, including Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), transformers, normalizing flows, diffusion models, and fine-tuned large language models (LLMs).

Variational Autoencoders (VAEs)

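A VAE encodes each input as a probability distribution over a lower-dimensional latent space (typically a Gaussian with a learned mean and variance) and decodes latent samples back into data. Training balances reconstruction accuracy against a KL-divergence penalty that pulls the encoded distributions toward a simple prior, which keeps the latent space smooth and continuous. New candidates can then be generated by sampling from the prior, or by interpolating between known structures in latent space, and decoding the result.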
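To make the VAE objective concrete, here is a minimal NumPy sketch of the reparameterization trick and the negative evidence lower bound (ELBO) for a Gaussian encoder and a standard-normal prior. It is a generic illustration, not the architecture of any model covered in the review: the untrained linear "encoder" and "decoder", the 6-dimensional descriptors, and the names `reparameterize`, `kl_to_standard_normal`, and `negative_elbo` are all hypothetical stand-ins.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I) (the reparameterization trick)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """Closed-form KL( N(mu, diag(sigma^2)) || N(0, I) ), summed over latent dimensions."""
    return -0.5 * np.sum(1.0 + log_var - mu**2 - np.exp(log_var), axis=-1)

def negative_elbo(x, x_recon, mu, log_var, beta=1.0):
    """Per-sample loss: squared-error reconstruction term plus beta-weighted KL term."""
    recon = np.sum((x - x_recon) ** 2, axis=-1)
    return recon + beta * kl_to_standard_normal(mu, log_var)

# Toy usage with untrained linear maps standing in for real encoder/decoder networks.
rng = np.random.default_rng(0)
x = rng.standard_normal((4, 6))                 # 4 hypothetical 6-dim structure descriptors
W_enc = 0.1 * rng.standard_normal((6, 2))       # hypothetical encoder weights (2-dim latent)
W_dec = 0.1 * rng.standard_normal((2, 6))       # hypothetical decoder weights
mu, log_var = x @ W_enc, np.full((4, 2), -1.0)  # encoder outputs: mean and log-variance
z = reparameterize(mu, log_var, rng)
loss = negative_elbo(x, z @ W_dec, mu, log_var).mean()

# Generation: sample from the prior N(0, I) and decode.
x_new = rng.standard_normal((3, 2)) @ W_dec
```

The KL term is what keeps the latent space smooth: it penalizes encodings that drift far from the prior, so decoded draws from N(0, I) land in regions the decoder has learned to handle.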

Generative Adversarial Networks (GANs)

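A GAN trains two networks against each other: a generator that maps random noise to candidate samples, and a discriminator that learns to distinguish generated samples from real training data. GANs can produce realistic samples, but training is prone to mode collapse, in which the generator covers only a few modes of the data distribution and sample diversity drops. GANs also model the distribution only implicitly: they can draw samples but provide no explicit density or likelihood.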
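The adversarial setup can be summarized by its two loss functions. The sketch below uses the standard binary cross-entropy formulation with the common non-saturating generator loss; the discriminator outputs are hypothetical numbers rather than outputs of real networks, and the helper names are illustrative.

```python
import numpy as np

def bce(p, target, eps=1e-12):
    """Binary cross-entropy between predicted probabilities p and 0/1 targets, batch-averaged."""
    p = np.clip(p, eps, 1.0 - eps)  # avoid log(0)
    return -np.mean(target * np.log(p) + (1.0 - target) * np.log(1.0 - p))

def discriminator_loss(d_real, d_fake):
    """D is pushed to output 1 on real samples and 0 on generated samples."""
    return bce(d_real, np.ones_like(d_real)) + bce(d_fake, np.zeros_like(d_fake))

def generator_loss(d_fake):
    """Non-saturating generator loss: G is pushed to make D output 1 on its samples."""
    return bce(d_fake, np.ones_like(d_fake))

# D's probability outputs on a batch of real and generated samples (hypothetical values).
d_real = np.array([0.9, 0.8, 0.95])
d_fake = np.array([0.1, 0.3, 0.2])
loss_d = discriminator_loss(d_real, d_fake)
loss_g = generator_loss(d_fake)
```

The generator never receives a likelihood, only the discriminator's verdict on its samples, which is why GANs model the distribution implicitly. At the theoretical equilibrium the discriminator outputs 0.5 everywhere, giving a discriminator loss of 2 ln 2.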

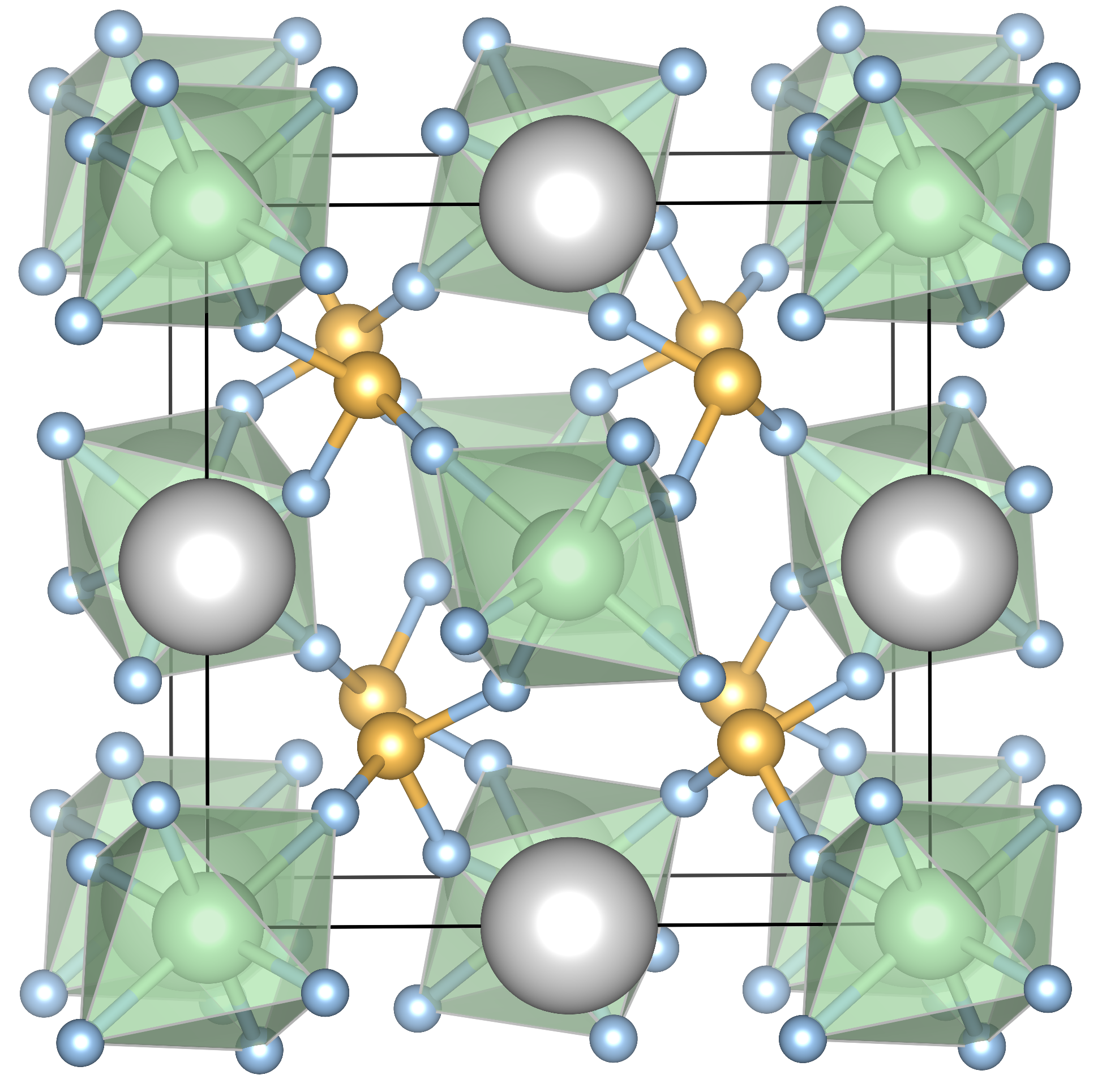

Figure 2: Schematic overviews of the main generative model architectures, including VAEs, GANs, and diffusion models.

Data Representation and Challenges

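The choice of data representation strongly affects model performance. Common representations include point clouds (lattice vectors plus atomic species and fractional coordinates), graphs (atoms as nodes, neighbor relations as edges), voxel grids (atomic densities sampled on a 3D grid), and reciprocal-space representations. Each choice trades off how naturally periodicity and symmetry are handled against how easily structures can be generated and decoded back into crystals.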
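As an illustration of how a point-cloud representation can be turned into a graph, the sketch below connects every pair of atoms closer than a cutoff, including neighbors across periodic boundaries. The function name, the use of Å, and the choice to search only the 26 adjacent cell images are assumptions of this sketch; that image search is complete only when fractional coordinates lie in [0, 1) and the cutoff is smaller than every perpendicular width of the cell.

```python
import itertools
import numpy as np

def periodic_neighbor_graph(lattice, frac_coords, cutoff):
    """Return directed edges (i, j, image, distance) for atom pairs closer than cutoff.

    Assumes lattice vectors as rows (Å), fractional coordinates in [0, 1), and a
    cutoff smaller than every perpendicular cell width, so adjacent images suffice.
    """
    lattice = np.asarray(lattice, dtype=float)
    cart = np.asarray(frac_coords, dtype=float) @ lattice  # fractional -> Cartesian
    edges = []
    for image in itertools.product((-1, 0, 1), repeat=3):
        shift = np.array(image, dtype=float) @ lattice
        for i in range(len(cart)):
            for j in range(len(cart)):
                if i == j and image == (0, 0, 0):
                    continue  # skip an atom's zero distance to itself in the home cell
                d = np.linalg.norm(cart[j] + shift - cart[i])
                if d < cutoff:
                    edges.append((i, j, image, d))
    return edges

# Body-centered cubic cell, a = 3.0 Å, atoms at the corner and the body center.
edges = periodic_neighbor_graph(3.0 * np.eye(3), [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]], cutoff=2.9)
```

In this example each atom picks up its 8 nearest neighbors across cell boundaries, giving 16 directed edges of length about 2.60 Å; a purely in-cell distance check would miss most of them.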

Evaluation Metrics and Benchmarks

Generative models are evaluated with a range of metrics covering validity, coverage, uniqueness, novelty, stability, and synthesizability. Despite progress, there is no standardized benchmark shared across models, which makes direct performance comparisons difficult.
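Several of these metrics reduce to simple distance and set computations. The sketch below shows one possible implementation of a structural-validity check (no two atoms closer than a minimum distance, assumed here to be 0.5 Å) together with uniqueness and novelty fractions. Published benchmarks differ in their exact definitions and typically compare structures with a structure matcher; the string "fingerprints" used here (reduced formulas) are a crude, hypothetical stand-in for that, and novelty is computed over the unique samples only.

```python
import itertools
import numpy as np

def is_structurally_valid(lattice, frac_coords, min_dist=0.5):
    """True if no two atoms (including periodic images) are closer than min_dist (Å).

    Assumes lattice vectors as rows, fractional coordinates in [0, 1), and cell
    widths larger than min_dist, so checking the 26 adjacent images is enough.
    """
    lattice = np.asarray(lattice, dtype=float)
    cart = np.asarray(frac_coords, dtype=float) @ lattice
    for image in itertools.product((-1, 0, 1), repeat=3):
        shift = np.array(image, dtype=float) @ lattice
        # dist[i, j] = |cart[j] + shift - cart[i]|
        dist = np.linalg.norm(cart[None, :, :] + shift - cart[:, None, :], axis=-1)
        if image == (0, 0, 0):
            np.fill_diagonal(dist, np.inf)  # ignore each atom's distance to itself
        if dist.min() < min_dist:
            return False
    return True

def uniqueness(fingerprints):
    """Fraction of generated samples that are distinct from one another."""
    return len(set(fingerprints)) / len(fingerprints)

def novelty(fingerprints, training_fingerprints):
    """Fraction of distinct generated samples that do not appear in the training set."""
    unique = set(fingerprints)
    return len(unique - set(training_fingerprints)) / len(unique)

# Hypothetical fingerprints for generated and training structures.
generated = ["NaCl", "NaCl", "LiFePO4", "MgO"]
training = ["NaCl", "MgO"]
print(uniqueness(generated), novelty(generated, training))  # 0.75 and 1/3
```

The periodic check matters: two atoms near opposite faces of a cell can look far apart in Cartesian coordinates while actually overlapping across the boundary.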

Practical Applications

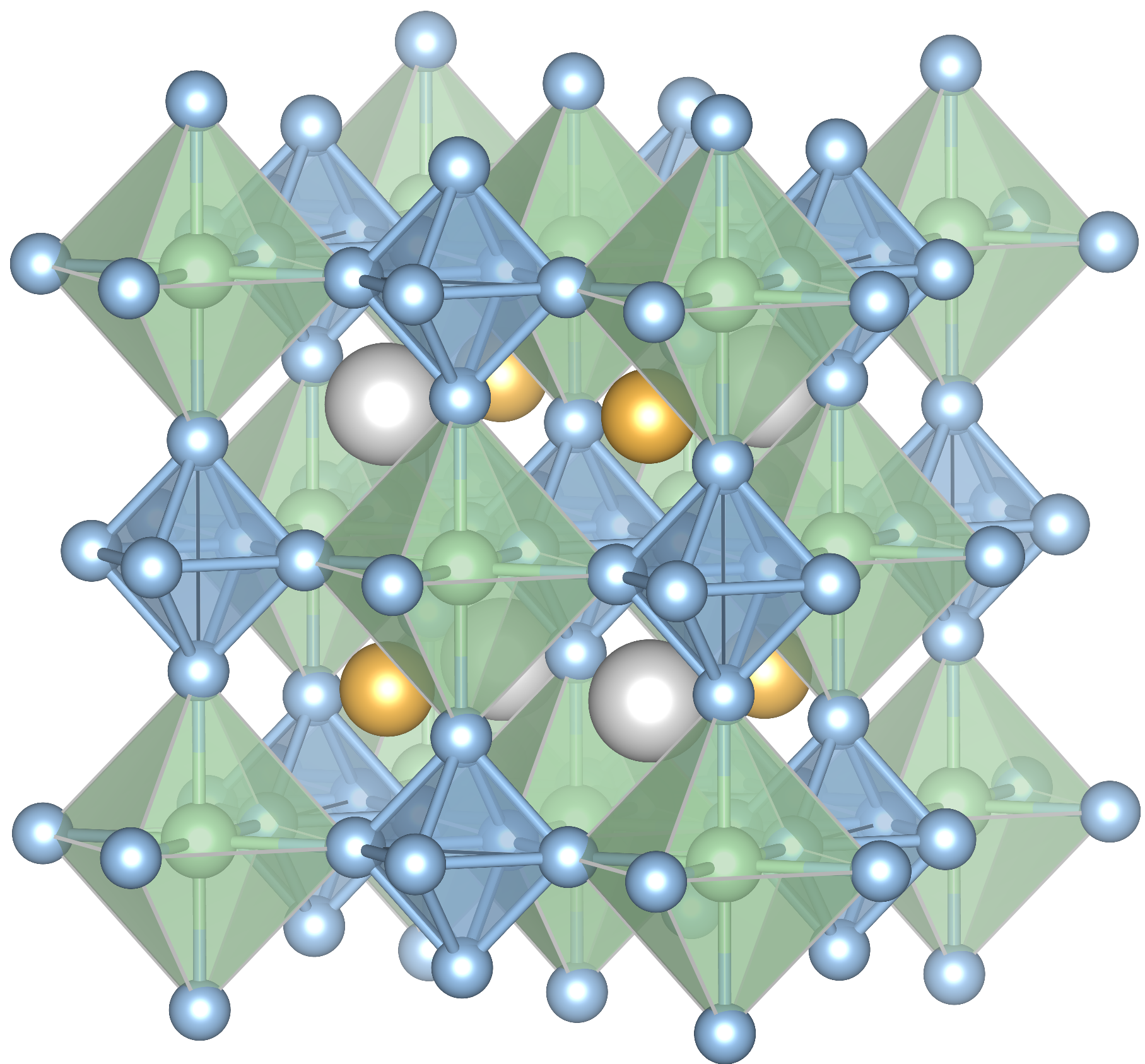

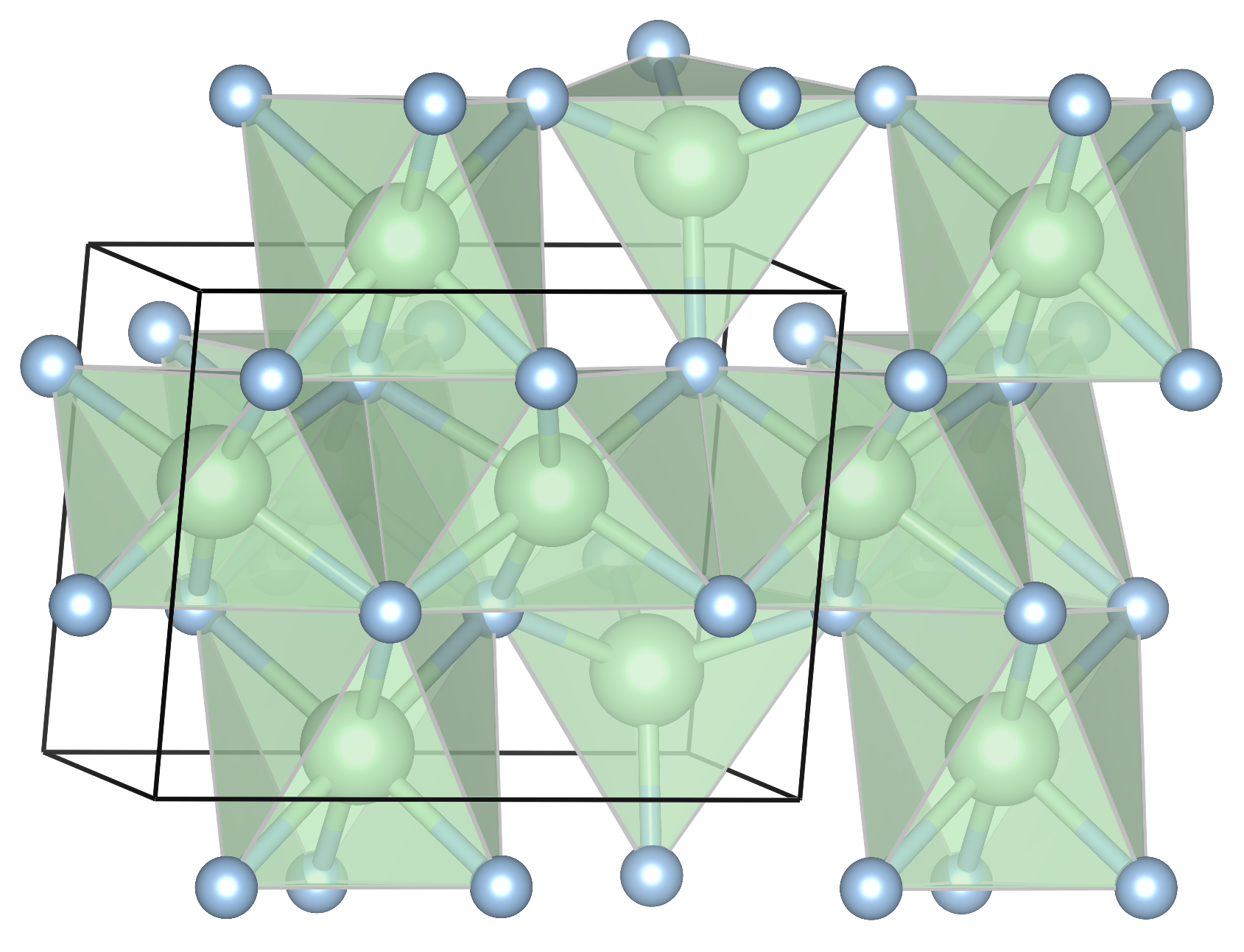

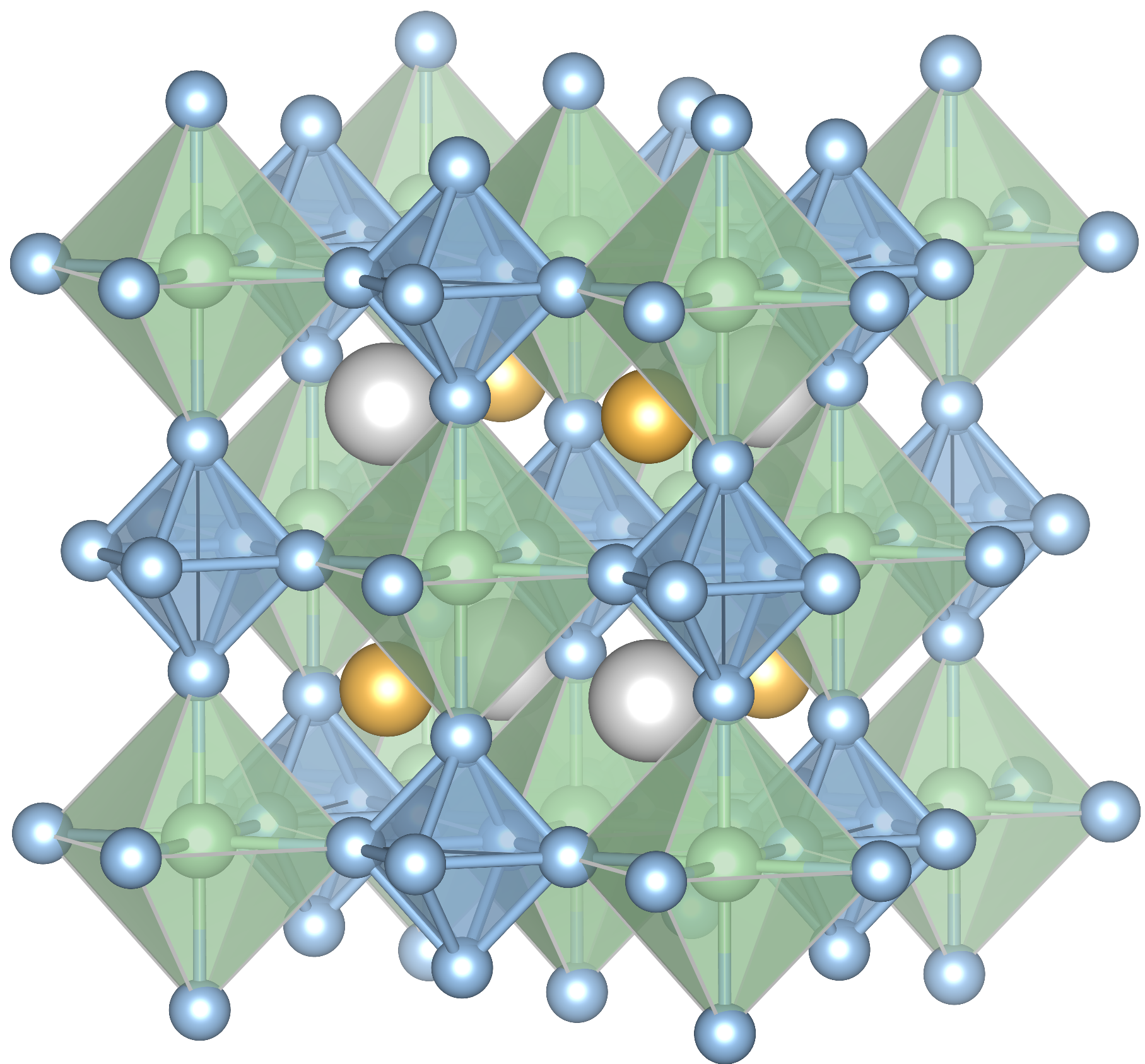

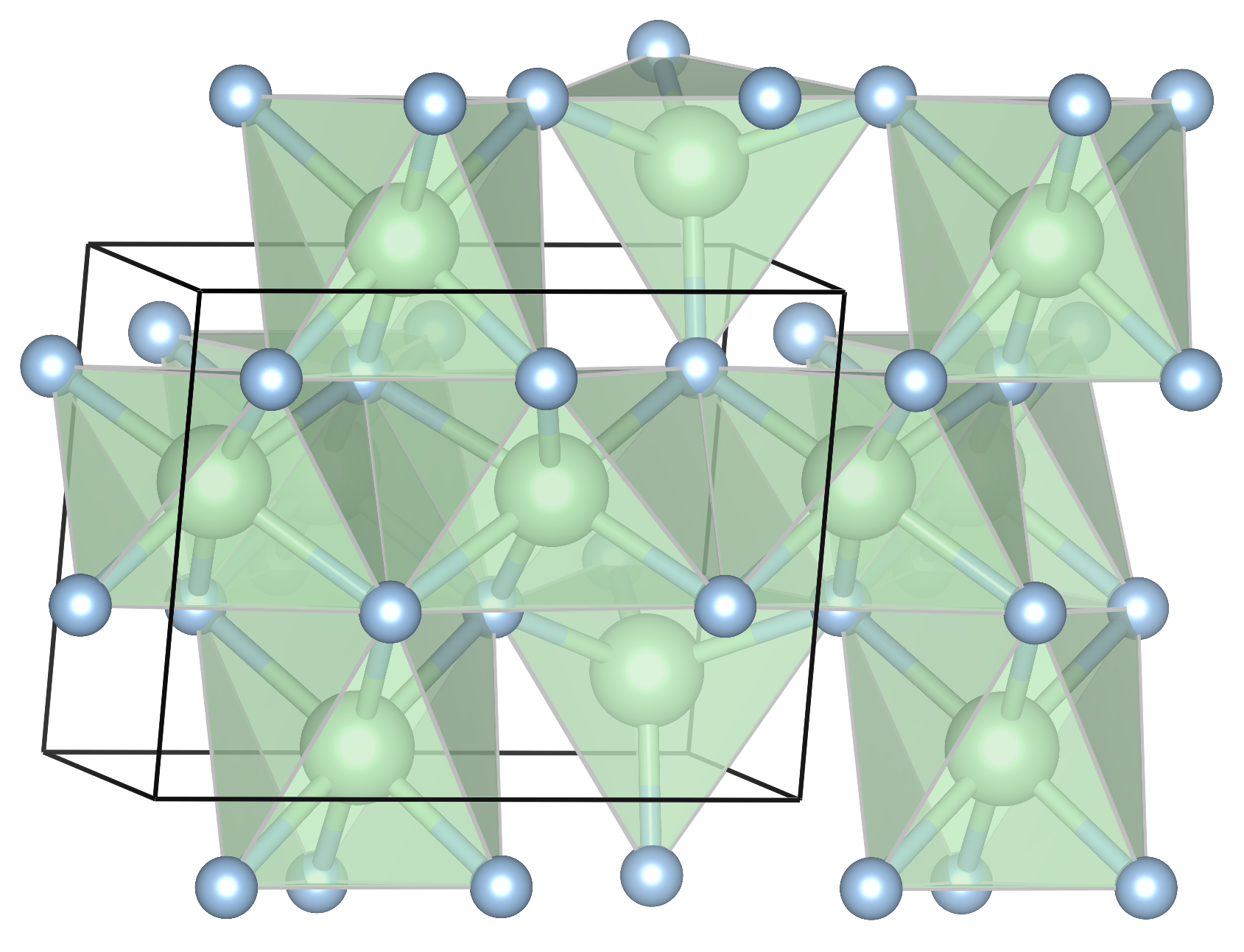

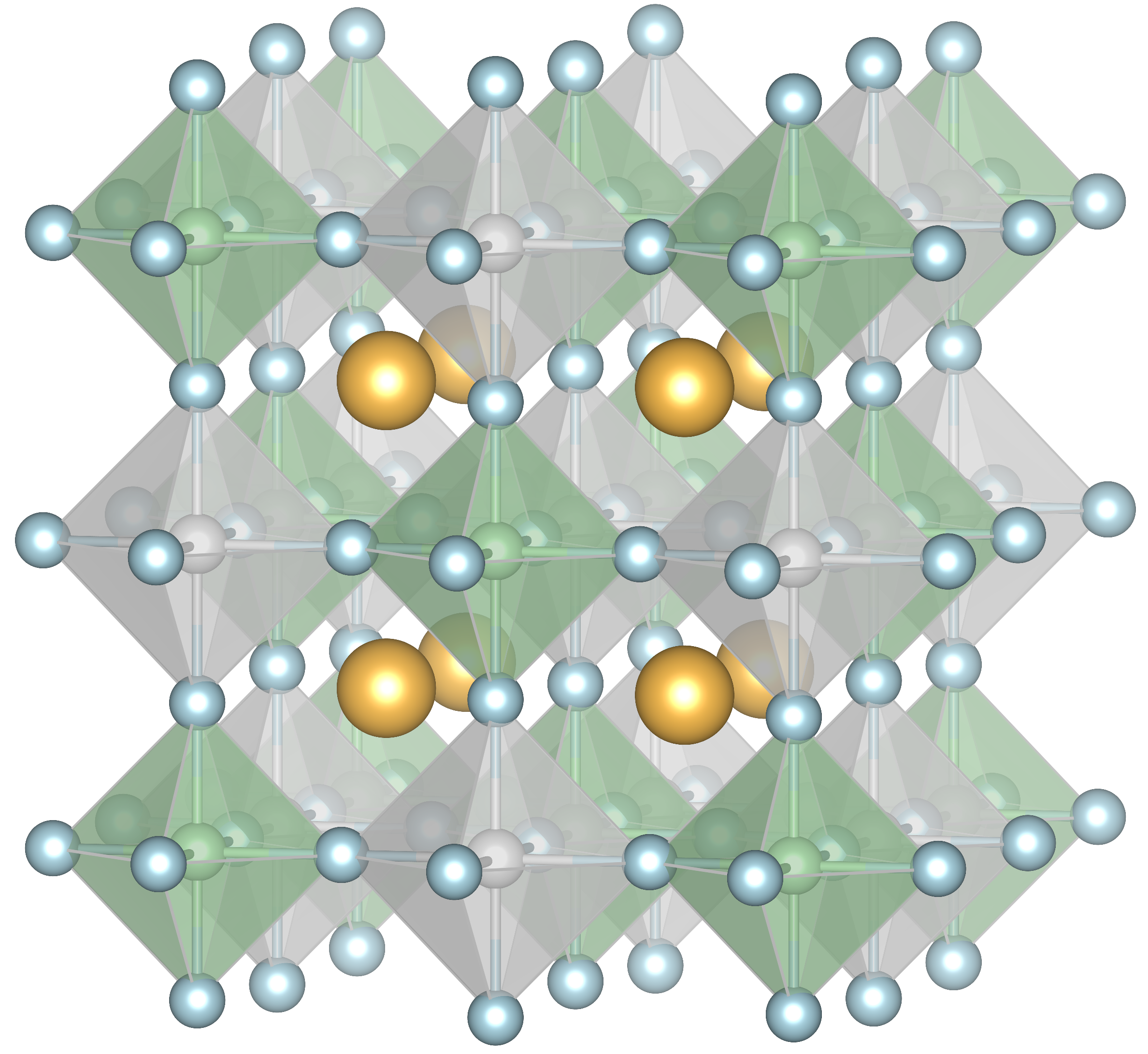

Generative models have shown promise for large-scale screening of crystal structures and for property-targeted generation. CubicGAN, for example, explored 10 million candidate structures, of which 506 were found to be mechanically stable. MatterGen demonstrated the generation of Li-ion battery materials that satisfy stability criteria.

Figure 3: Examples of structures generated by CrystaLLM models, illustrating the diversity and fidelity achievable with generative AI.
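Taking the CubicGAN numbers quoted above at face value, the yield of that screen works out to:

$$
\frac{506}{10^{7}} \approx 5.1 \times 10^{-5} \approx 0.005\%, \qquad \frac{10^{7}}{506} \approx 1.98 \times 10^{4}
$$

That is roughly one mechanically stable structure per 20,000 candidates, which shows why fast filtering of generated candidates matters at this scale.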

Future Directions

The paper points to several directions for future research: extending representations to capture defects and disorder, and supporting multi-objective optimization. Tighter integration with universal machine-learned interatomic potentials (uMLIPs) and deeper use of reinforcement learning could make the exploration of complex materials spaces more efficient.
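One standard way to handle several objectives when ranking generated candidates is to keep only the Pareto-optimal ones, that is, those that no other candidate beats on every objective at once. The sketch below is a generic Pareto filter, not a method from the review; the two objectives (energy above the convex hull and distance of the band gap from a target value) and the candidate values are hypothetical.

```python
import numpy as np

def pareto_front(objectives):
    """Indices of non-dominated rows, assuming every objective is to be minimized.

    Row a dominates row b if a <= b in every objective and a < b in at least one.
    """
    obj = np.asarray(objectives, dtype=float)
    front = []
    for i in range(len(obj)):
        # Rows that are no worse everywhere and strictly better somewhere dominate row i.
        dominates_i = np.all(obj <= obj[i], axis=1) & np.any(obj < obj[i], axis=1)
        if not dominates_i.any():
            front.append(i)
    return front

# Hypothetical candidates: (energy above hull in eV/atom, |band gap - target| in eV).
candidates = [
    [0.00, 0.80],
    [0.02, 0.10],
    [0.05, 0.05],
    [0.03, 0.30],  # dominated by candidate 1
    [0.10, 0.90],  # dominated by candidate 0
]
front = pareto_front(candidates)
```

The filter does not pick a single winner; it returns the set of trade-offs (here candidates 0, 1, and 2), leaving the final choice to a weighting or to downstream validation.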

Conclusion

Generative models for crystal structures are evolving rapidly and opening new pathways in materials discovery. As methodology, data curation, and benchmarking improve, these models are likely to become central to accelerating affordable, scalable materials discovery and to bridging computational predictions with experimental realization.