- The paper introduces a novel EKF-based hybrid calibration approach for TI-ADC systems, modeling offset, gain, and timing errors as a sequential state estimation problem.

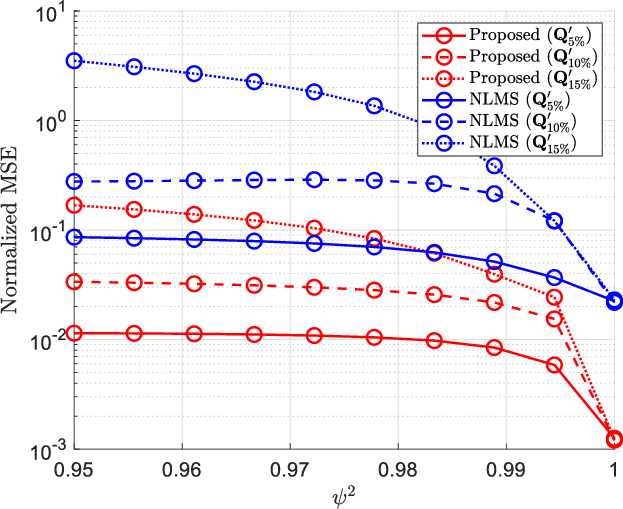

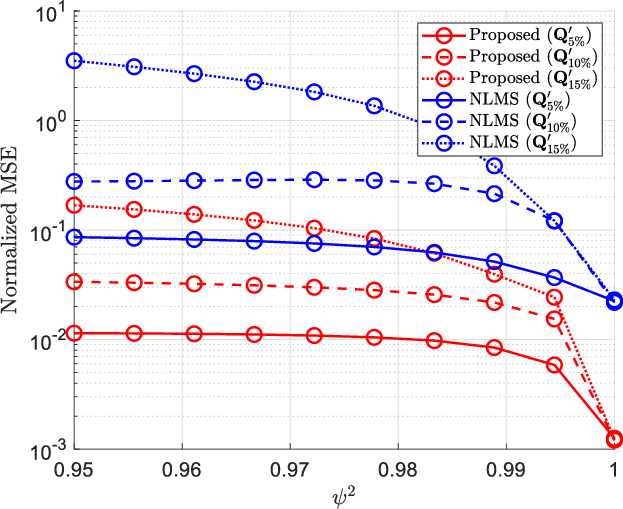

- It employs a two-stage compensation algorithm that combines direct normalization with fractional delay filters to mitigate static and dynamic mismatches, reporting a nearly 10× NMSE improvement over traditional methods.

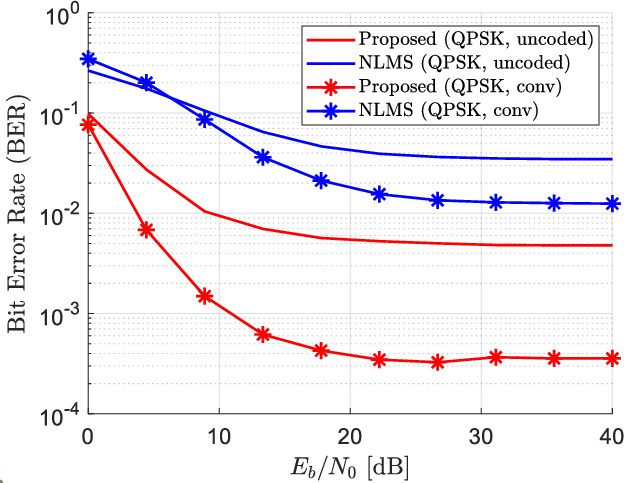

- Simulation results demonstrate robust performance improvements in error metrics and BER, confirming the method’s efficacy in high-speed, realistic ADC applications.

Introduction

Time-interleaved analog-to-digital converters (TI-ADCs) scale sample rates by deploying M parallel sub-ADCs, each clocked at a fraction of the overall rate. However, offset, gain, and timing mismatches between the sub-ADCs are a principal bottleneck limiting achievable resolution, integration density, and effective SNR. Conventional calibration strategies either interrupt signal acquisition (foreground) or require extensive averaging and added complexity (background). Hybrid calibration schemes, which interleave known references into normal operation, attempt to reconcile estimation precision with real-time applicability. This paper reframes the TI-ADC mismatch problem as a sequential state estimation task and introduces an extended Kalman filter (EKF)-based hybrid calibration method (arXiv:2503.10022).

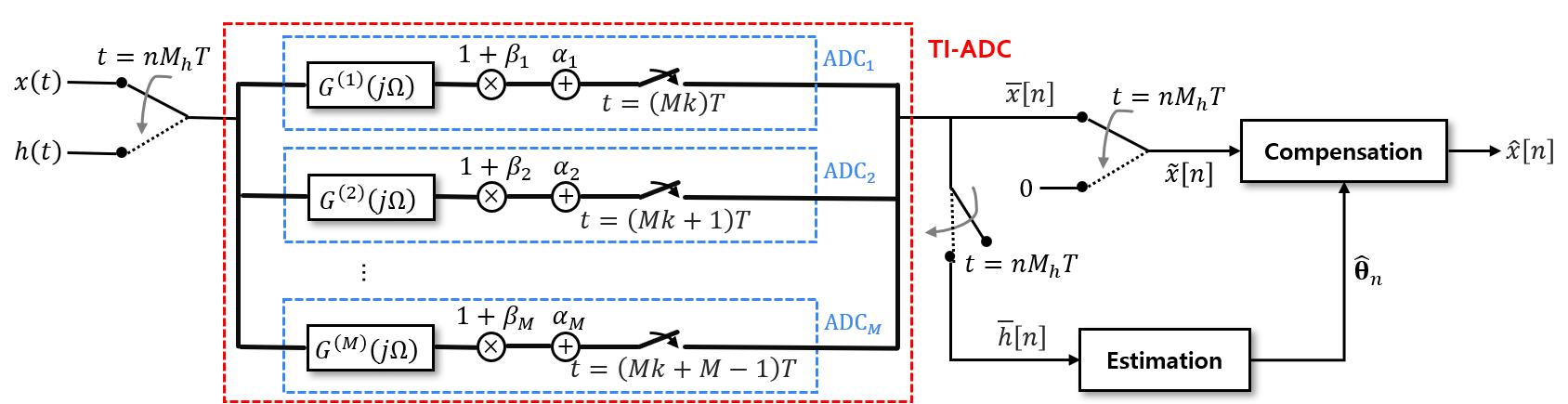

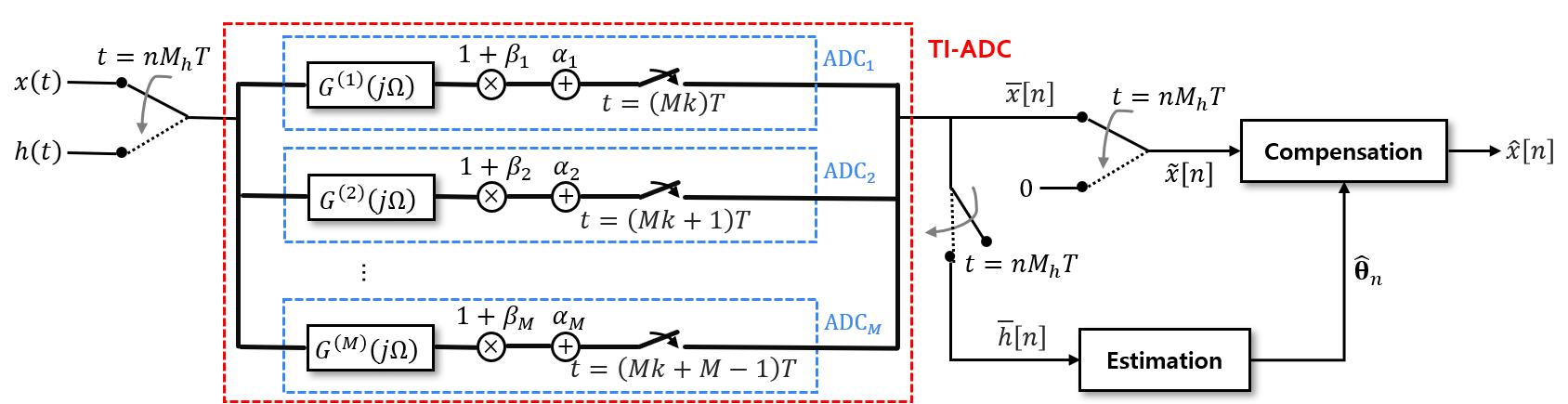

Figure 1: The hybrid calibration structure with mismatch estimation and compensation phases for time-interleaved ADCs.

The paper models all three mismatch types in TI-ADC operation: offset ($\alpha^{(m)}$), gain ($\beta^{(m)}$), and timing ($\phi^{(m)}$) for each sub-ADC $m$. Critically, it treats these mismatches as time-varying and models their evolution as a first-order autoregressive process within a discrete-time nonlinear state-space model. The observation channel uses periodically injected reference samples $h(t)$, which make the mismatches statistically identifiable but also create the "missing sample" issue on the main signal path, since each reference slot displaces an input sample.

This viewpoint enables the application of the EKF for real-time, recursive estimation, explicitly incorporating process and measurement noise into the calibration framework. The observation model is nonlinear, with the reference output affected by all three mismatch categories.
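The state-space view can be made concrete with a small simulation. The sketch below is illustrative only: the summary does not give the exact equations, so the observation form `y = alpha + (1 + beta) * h(t + phi) + v`, the sinusoidal reference, the AR(1) coefficient `a`, and all numeric values are assumptions chosen for readability, not the paper's settings.

```python
import math
import random

def ar1_step(theta, a, sigma, rng):
    """One AR(1) step per component: theta_t = a * theta_{t-1} + w_t, w_t ~ N(0, sigma^2)."""
    return [a * x + rng.gauss(0.0, sigma) for x in theta]

def reference(t, freq=0.05):
    """Hypothetical known reference h(t): a unit-amplitude sinusoid."""
    return math.sin(2.0 * math.pi * freq * t)

def observe(theta, t, noise_std, rng):
    """Assumed nonlinear observation: y = alpha + (1 + beta) * h(t + phi) + v."""
    alpha, beta, phi = theta
    return alpha + (1.0 + beta) * reference(t + phi) + rng.gauss(0.0, noise_std)

rng = random.Random(0)
theta = [0.02, 0.05, 0.03]   # assumed initial [offset, gain deviation, timing skew]
trajectory, observations = [], []
for t in range(200):
    theta = ar1_step(theta, a=0.999, sigma=1e-4, rng=rng)
    trajectory.append(theta)
    observations.append(observe(theta, t, noise_std=2e-3, rng=rng))
```

The timing skew enters through the argument of `h`, which is what makes the observation nonlinear in the state and motivates an EKF rather than a linear Kalman filter.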

EKF-Based Hybrid Mismatch Estimation

The algorithm utilizes the following structure per sub-ADC:

- State vector: $\theta_t^{(m)} = [\alpha_t^{(m)}, \beta_t^{(m)}, \phi_t^{(m)}]^\top$

- Process equation: First-order AR with known or estimated dynamics and noise covariance.

- Observation model: Output of known reference under current mismatch parameters with additive observation noise.

At each time step, the EKF alternates between:

- Prediction (propagation of state mean and covariance via process model and Jacobian)

- Update (innovation-based correction using nonlinear observation Jacobian)
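The two alternating steps can be sketched for a single sub-ADC with a scalar reference observation. This is a minimal illustration, not the paper's implementation: the observation model `y = alpha + (1 + beta) * h(t + phi) + v`, the sinusoidal reference `h`, the isotropic process noise, and every numeric value are assumptions.

```python
import math
import random

FREQ = 0.05  # hypothetical reference frequency in cycles per sample

def h(t):
    return math.sin(2.0 * math.pi * FREQ * t)

def dh(t):
    """Time derivative of the assumed reference, needed for the Jacobian."""
    return 2.0 * math.pi * FREQ * math.cos(2.0 * math.pi * FREQ * t)

def observe(theta, t):
    alpha, beta, phi = theta
    return alpha + (1.0 + beta) * h(t + phi)

def ekf_predict(theta, P, a, q):
    """Propagate mean and covariance through theta_t = a * theta_{t-1} + w, Cov(w) = q * I."""
    theta_pred = [a * x for x in theta]
    P_pred = [[a * a * P[i][j] + (q if i == j else 0.0) for j in range(3)]
              for i in range(3)]
    return theta_pred, P_pred

def ekf_update(theta, P, y, t, r):
    """Correct the prediction with one scalar observation of noise variance r."""
    alpha, beta, phi = theta
    # Jacobian of the assumed observation model with respect to [alpha, beta, phi].
    H = [1.0, h(t + phi), (1.0 + beta) * dh(t + phi)]
    PHt = [sum(P[i][j] * H[j] for j in range(3)) for i in range(3)]
    S = sum(H[i] * PHt[i] for i in range(3)) + r      # innovation variance
    K = [p / S for p in PHt]                          # Kalman gain
    innovation = y - observe(theta, t)
    theta_new = [theta[i] + K[i] * innovation for i in range(3)]
    # (I - K H) P, using the symmetry of P so that H P equals PHt transposed.
    P_new = [[P[i][j] - K[i] * PHt[j] for j in range(3)] for i in range(3)]
    return theta_new, P_new

# Track a slowly drifting mismatch vector from noisy reference observations.
rng = random.Random(1)
a, sigma_w, sigma_v = 0.999, 1e-4, 2e-3
theta_true = [0.02, 0.05, 0.03]
theta_hat = [0.0, 0.0, 0.0]
P = [[0.01 if i == j else 0.0 for j in range(3)] for i in range(3)]
for t in range(400):
    theta_true = [a * x + rng.gauss(0.0, sigma_w) for x in theta_true]
    y = observe(theta_true, t) + rng.gauss(0.0, sigma_v)
    theta_hat, P = ekf_predict(theta_hat, P, a, sigma_w ** 2)
    theta_hat, P = ekf_update(theta_hat, P, y, t, sigma_v ** 2)
errors = [abs(e - x) for e, x in zip(theta_hat, theta_true)]
```

Because the observation is scalar, the innovation covariance `S` is a single number and no matrix inversion is needed. With a vector observation, `S` would become a matrix and the gain would require a linear solve.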

Unlike previous hybrid calibration approaches, the EKF-based method assimilates data in a way that is optimal to first order (under local linearization of the observation model), addresses both static and dynamic mismatch behavior, and propagates estimation uncertainty explicitly for robust tracking.

Compensation and Reconstruction

Given real-time estimates $\hat{\alpha}^{(m)}$, $\hat{\beta}^{(m)}$, $\hat{\phi}^{(m)}$, the method proposes a two-stage compensation algorithm:

- Offset and gain compensation: Direct normalization using the latest estimates for each sample and sub-ADC.

- Timing and missing sample compensation: Fractional delay filters correct the timing errors and a high-pass filter-based approach recovers the missing samples; the resulting composite (but structured) filtering operation is inverted efficiently with a Gauss-Seidel iteration.
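The two stages can be illustrated on a simplified single-channel case. The sketch below assumes the mismatch model `y = alpha + (1 + beta) * x(t + phi)`, treats the estimates as exact, and uses a Hann-windowed sinc as the fractional delay filter. These choices, along with the helper names, are assumptions for illustration; the sketch does not reproduce the paper's multichannel reconstruction or its missing-sample recovery.

```python
import math

def compensate_offset_gain(y, alpha_hat, beta_hat):
    """Stage 1: undo the assumed model y = alpha + (1 + beta) * x by direct normalization."""
    return [(v - alpha_hat) / (1.0 + beta_hat) for v in y]

def fractional_delay_taps(delay, half_len=16):
    """Hann-windowed sinc FIR approximating a delay of `delay` samples (|delay| < 1)."""
    taps = []
    for k in range(-half_len, half_len + 1):
        x = k - delay
        sinc = 1.0 if x == 0.0 else math.sin(math.pi * x) / (math.pi * x)
        window = 0.5 * (1.0 + math.cos(math.pi * x / (half_len + 1)))
        taps.append(sinc * window)
    return taps

def apply_fir_centered(signal, taps):
    """Zero-padded convolution with a filter centred on its middle tap."""
    half = len(taps) // 2
    out = []
    for n in range(len(signal)):
        acc = 0.0
        for i, c in enumerate(taps):
            j = n - (i - half)
            if 0 <= j < len(signal):
                acc += c * signal[j]
        out.append(acc)
    return out

# A tone sampled with offset, gain, and timing errors (values are illustrative).
alpha, beta, phi = 0.02, 0.05, 0.03
N = 200
clean = [math.sin(2.0 * math.pi * 0.05 * n) for n in range(N)]
raw = [alpha + (1.0 + beta) * math.sin(2.0 * math.pi * 0.05 * (n + phi)) for n in range(N)]

stage1 = compensate_offset_gain(raw, alpha, beta)                 # now equals x(n + phi)
stage2 = apply_fir_centered(stage1, fractional_delay_taps(phi))   # delay by phi -> x(n)

interior = range(40, N - 40)   # skip samples affected by zero padding at the edges
err_before = max(abs(raw[n] - clean[n]) for n in interior)
err_after = max(abs(stage2[n] - clean[n]) for n in interior)
```

Samples taken `phi` too late are realigned by delaying the sequence by `phi`, which is why the filter is built with a positive delay equal to the timing estimate.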

This pipeline systematically addresses timing mismatch and sample loss, two critical sources of distortion in the signal of interest (SOI), at practical computational complexity. Importantly, mismatch estimation is decoupled from the filter-optimization bottlenecks of earlier methods: the approach requires neither case-specific FIR filter design nor iterative variable digital filter (VDF) optimization as in [Tsui & Chan, 2013].
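For readers unfamiliar with the Gauss-Seidel iteration used in the second stage, the following generic sketch solves a small linear system `A x = b` by sweeping through the unknowns and reusing each updated value immediately. The matrix here is a made-up, diagonally dominant example and does not represent the paper's structured filtering operator.

```python
def gauss_seidel(A, b, iterations=50, tol=1e-12):
    """Solve A x = b iteratively; converges for strictly diagonally dominant A."""
    n = len(b)
    x = [0.0] * n
    for _ in range(iterations):
        max_change = 0.0
        for i in range(n):
            # Use the newest available values of the other unknowns.
            s = sum(A[i][j] * x[j] for j in range(n) if j != i)
            new = (b[i] - s) / A[i][i]
            max_change = max(max_change, abs(new - x[i]))
            x[i] = new
        if max_change < tol:
            break
    return x

# Hypothetical near-identity banded system.
A = [[1.0, 0.2, 0.0],
     [0.2, 1.0, 0.2],
     [0.0, 0.2, 1.0]]
b = [1.0, 2.0, 3.0]
x = gauss_seidel(A, b)
residual = max(abs(sum(A[i][j] * x[j] for j in range(3)) - b[i]) for i in range(3))
```

Each sweep costs one pass over the nonzero entries of `A`, so a banded or otherwise structured operator keeps the per-iteration cost low without forming an explicit inverse.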

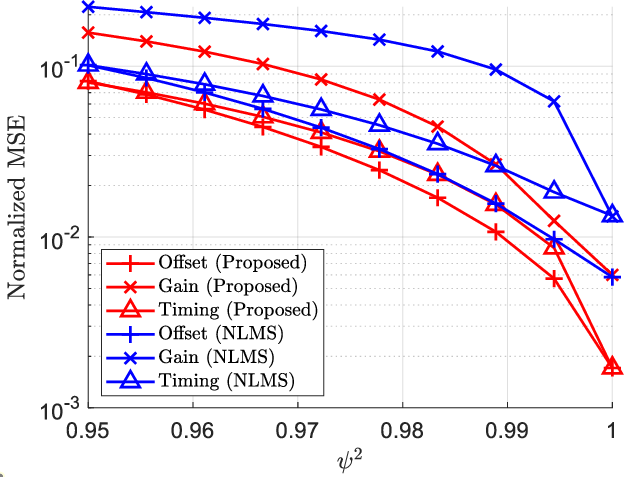

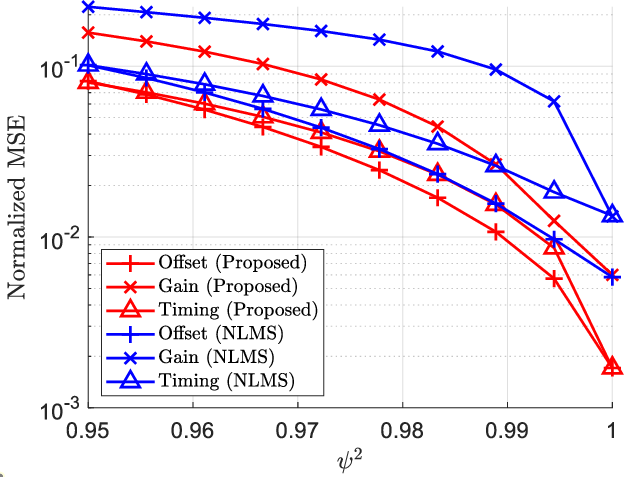

The proposed method is benchmarked against the established hybrid VDF+NLMS (normalized least-mean-squares) calibration approach. Simulation parameters include four sub-ADCs, a moderate reference injection interval (Mh), physically realistic time-varying mismatch evolution, and a 50 dB SNR for the reference observations. The time-variability model, AR(1) with controlled ψ, enables systematic analysis of tracking capabilities.

Figure 2: The normalized MSE of (a) the estimated mismatch errors for $Q' = Q'_{5\%}$ and (b) the reconstructed signal for various $Q'$, both with respect to $\psi^2$.
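The normalized MSE used as the error metric can be computed as below. The summary does not state the exact normalization, so this sketch assumes the common definition (squared error energy divided by reference energy); the function names are illustrative.

```python
import math

def nmse(estimate, reference):
    """Assumed definition: sum |estimate - reference|^2 / sum |reference|^2."""
    num = sum((e - r) ** 2 for e, r in zip(estimate, reference))
    den = sum(r ** 2 for r in reference)
    return num / den

def to_db(ratio):
    """Express a power ratio in decibels."""
    return 10.0 * math.log10(ratio)

example = nmse([1.1, 2.0], [1.0, 2.0])   # 0.01 / 5 = 0.002
example_db = to_db(example)
```

On this scale a 10× reduction in NMSE corresponds to a 10 dB improvement, since `to_db(10.0)` is 10.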

Theoretical and Practical Implications

This work demonstrates that reframing hybrid TI-ADC calibration as sequential Bayesian estimation yields tangible benefits:

- Theoretically, it establishes a framework where the tradeoff between prior knowledge, process dynamics, and measurement noise is made explicit. This enables rigorous analysis of inherent limits and motivates future investigation into more sophisticated state/process models (e.g., with nonlinearity, unknown statistics, or partially observed control).

- Practically, it enables computationally efficient, online calibration applicable to high-speed, high-resolution ADCs in emerging communication, instrumentation, and software-defined radio systems. The modularity also facilitates future extensions to multirate, asynchronous, or RF-impairment-limited architectures.

Conclusions

This paper provides a principled algorithmic advance for calibration of TI-ADC systems. By recasting mismatch identification as a tracking problem and leveraging the EKF, it achieves strong performance across static and time-varying regimes, with reduced complexity and without reliance on filter optimization heuristics. The results are substantiated through detailed error and BER analysis under realistic models. Further research may focus on adaptive or model-free extensions, joint calibration-channel estimation for communication systems, or hardware prototype validation.