- The paper introduces the Model Human Learner, a computational framework that simulates human learning to optimize instructional design choices.

- The study employs the Trestle model from the Apprentice Learner Architecture to simulate learning trajectories and test tutoring strategies.

- Results from fraction arithmetic and box and arrow tutors reveal that interleaved practice and constrained problem designs enhance long-term learning outcomes.

Model Human Learners: Computational Models to Guide Instructional Design

Introduction to Model Human Learners

The Model Human Learner is introduced as a counterpart to the Model Human Processor of Card et al. (1986). It is described as a unified computational model that incorporates cognitive and learning theories to predict human learning within instructional systems. The model is proposed as a tool that helps instructional designers make informed decisions that improve pedagogical effectiveness, addressing the overwhelming array of design choices they face.

Addressing Instructional Design Complexity

Instructional design is combinatorial: designers must navigate a very large space of configurations to find effective interventions. Traditional A/B testing, while useful, is costly and slow, and it often yields results with insufficient granularity. Computational models of learning are proposed as a way to simulate A/B experiments, reducing the need for extensive human testing and enabling rapid evaluation of candidate instructional strategies before real-world implementation.
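To make the idea of a simulated A/B experiment concrete, the following is a minimal sketch. It assumes a hypothetical `ToyLearner` whose error rate shrinks by a fixed factor with each practice opportunity; this stand-in, its parameters, and the condition names are illustrative assumptions and are not the paper's model or data.

```python
import random


class ToyLearner:
    """Hypothetical simulated learner (an assumption, not the paper's model)."""

    def __init__(self, rng, initial_error=0.9, error_decay=0.8):
        self.rng = rng
        self.initial_error = initial_error  # error rate before any practice
        self.error_decay = error_decay      # fraction of error kept per practice
        self.practice_counts = {}

    def p_correct(self, skill):
        n = self.practice_counts.get(skill, 0)
        return 1.0 - self.initial_error * self.error_decay ** n

    def practice(self, skill):
        """Attempt one tutor problem, then count it as a learning opportunity."""
        correct = self.rng.random() < self.p_correct(skill)
        self.practice_counts[skill] = self.practice_counts.get(skill, 0) + 1
        return correct

    def test(self, skill):
        """Attempt one posttest problem with no feedback and no learning."""
        return self.rng.random() < self.p_correct(skill)


def simulate_ab(conditions, posttest, n_agents=200, seed=0):
    """Train fresh agents on each condition and return mean posttest accuracy."""
    rng = random.Random(seed)
    results = {}
    for name, practice_sequence in conditions.items():
        n_correct = 0
        for _ in range(n_agents):
            agent = ToyLearner(rng)
            for skill in practice_sequence:
                agent.practice(skill)
            n_correct += sum(int(agent.test(skill)) for skill in posttest)
        results[name] = n_correct / (n_agents * len(posttest))
    return results


# Two illustrative conditions that differ only in the amount of practice.
conditions = {
    "few_practice": ["add", "multiply"] * 3,
    "more_practice": ["add", "multiply"] * 8,
}
results = simulate_ab(conditions, posttest=["add", "multiply"] * 4)
for name, accuracy in results.items():
    print(f"{name}: posttest accuracy = {accuracy:.2f}")
```

The harness only needs agents that expose `practice` and `test`, so a richer learner model could in principle be swapped in without changing the experiment loop.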

Mechanistic Approach of the Model

The study uses the Trestle model from the Apprentice Learner Architecture, which implements learning mechanisms derived from prior models such as ACM, CASCADE, STEPS, and SimStudent. This mechanistic approach simulates how knowledge is updated and how performance changes over time, using AI and machine learning techniques. The Trestle model acquires skills through practice, demonstrations, and feedback, generalizing procedural knowledge from examples into reusable skills.

Study 1: Fraction Arithmetic Tutor

Experiment Overview

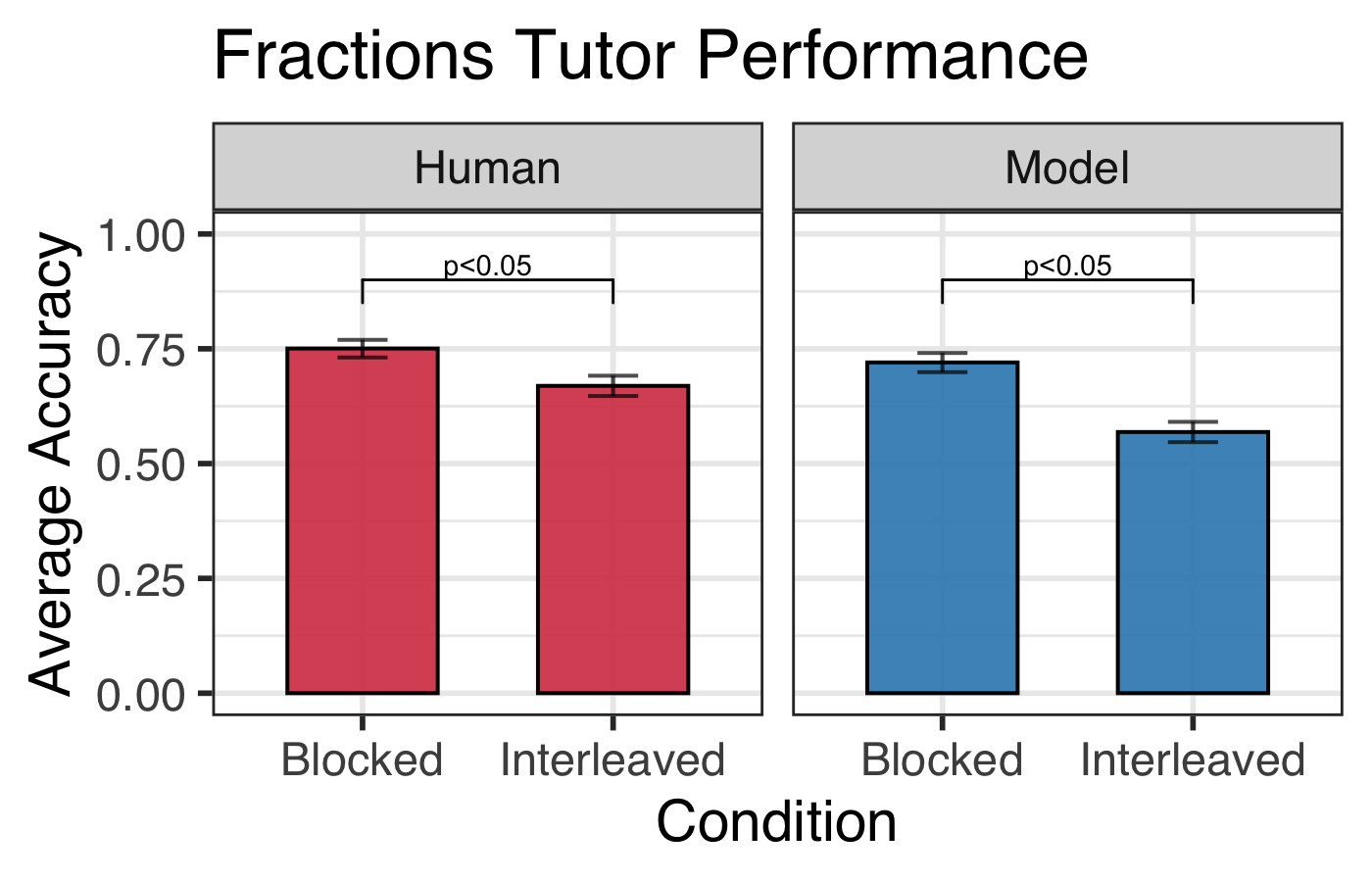

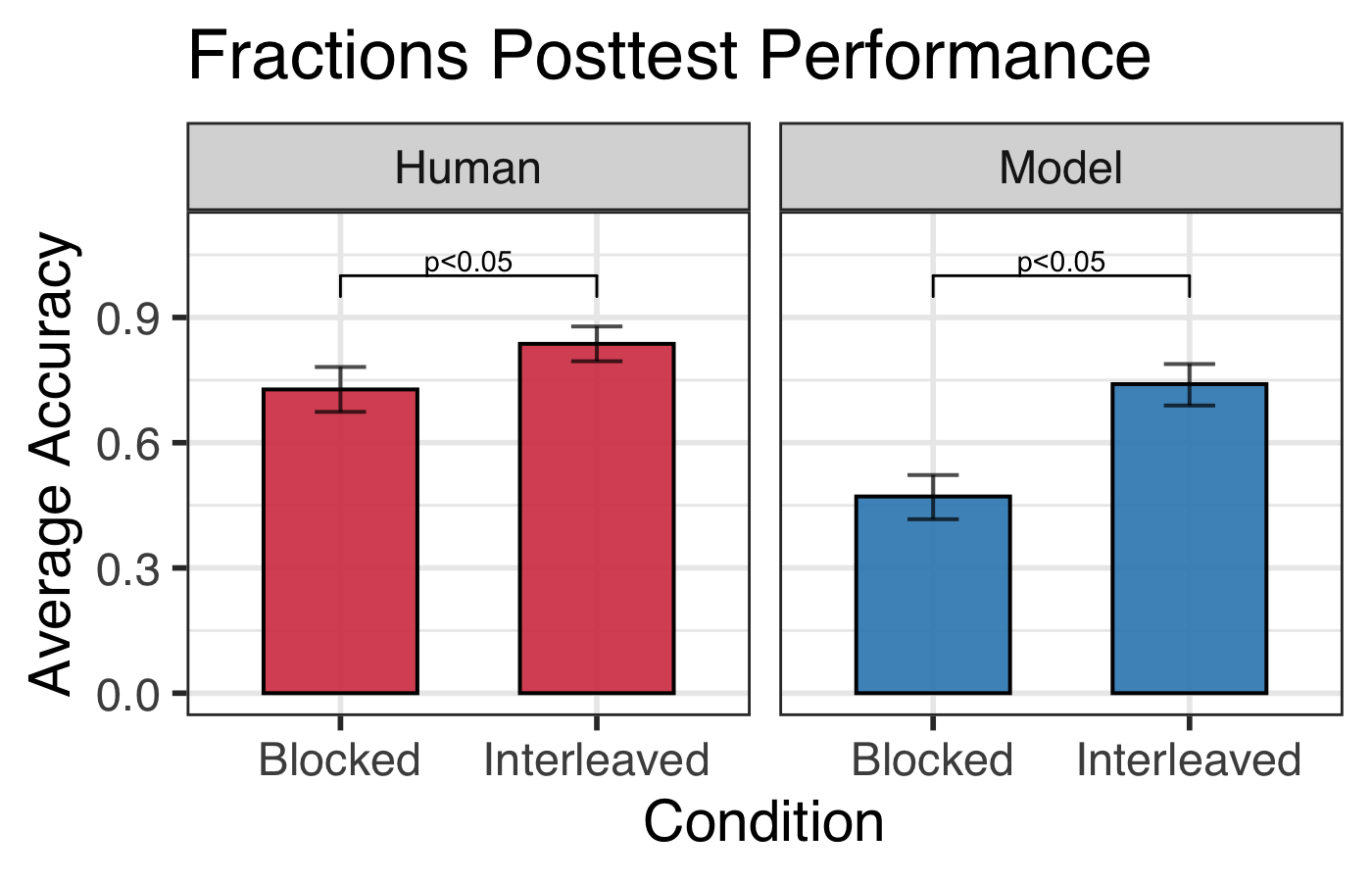

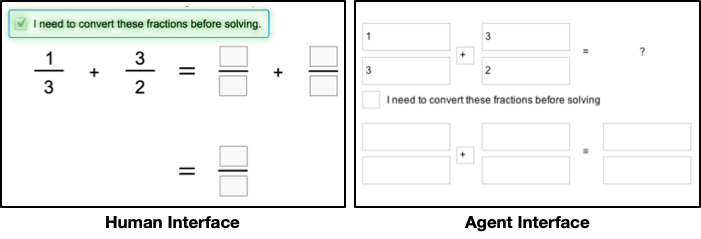

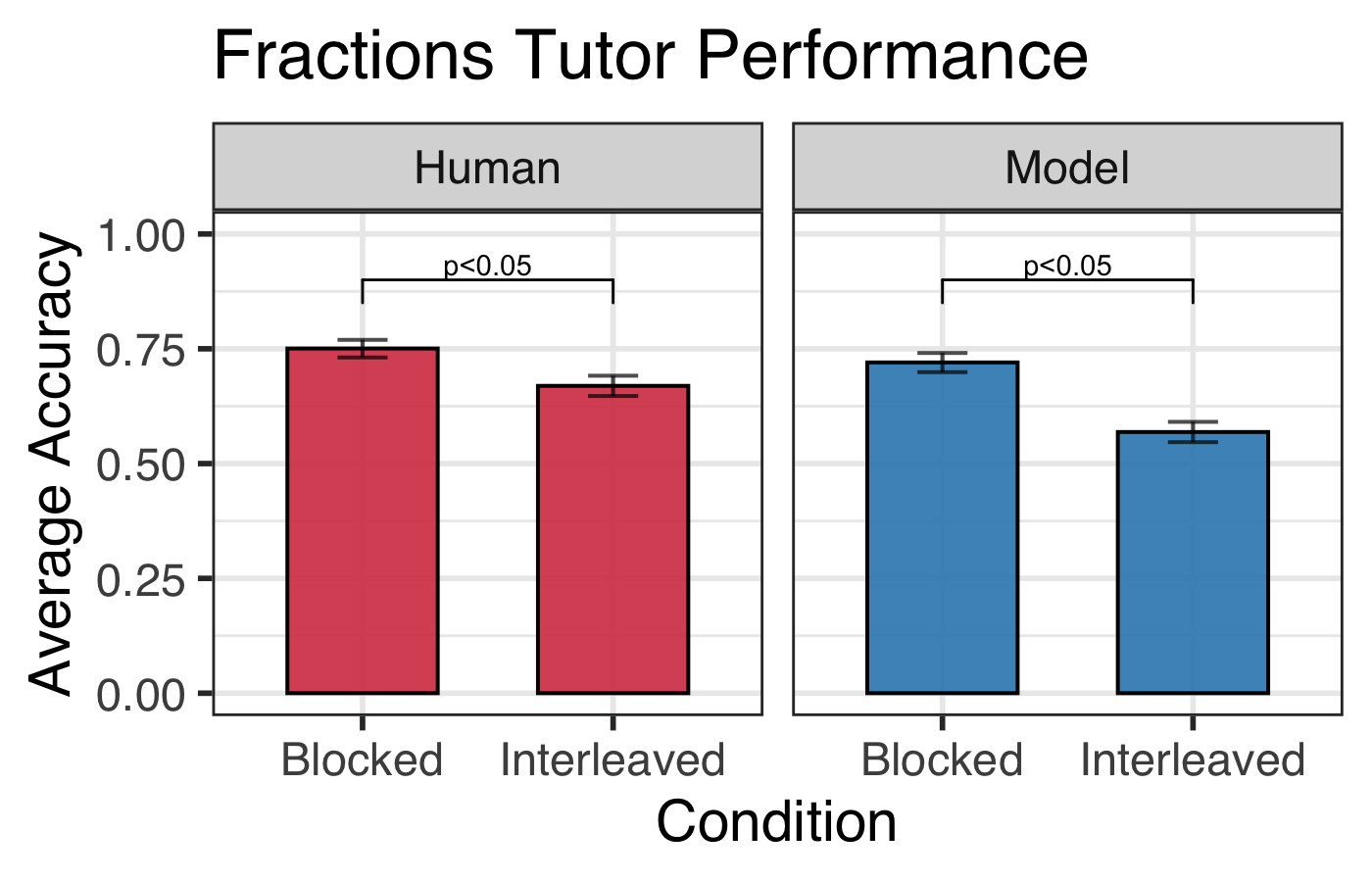

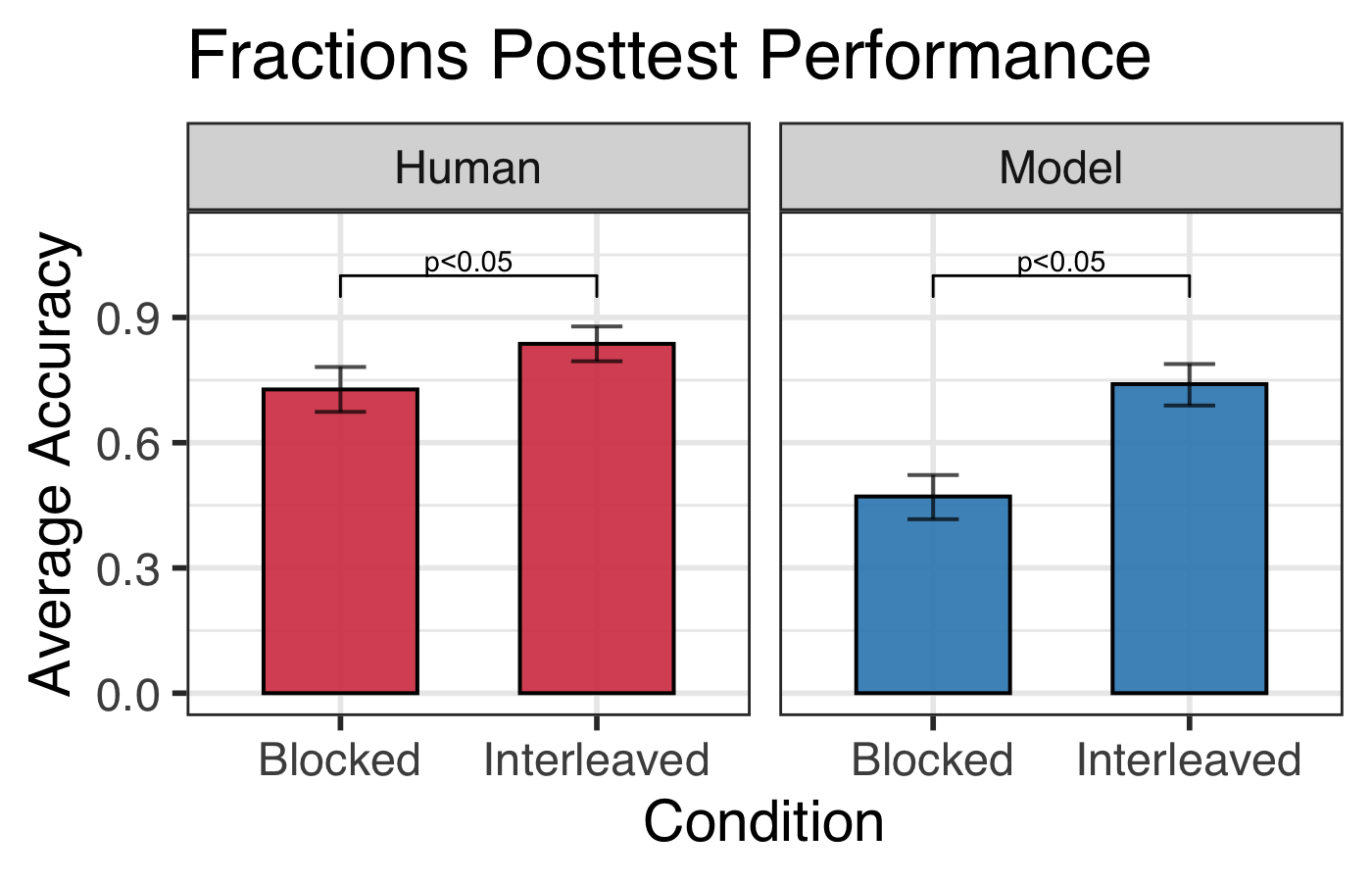

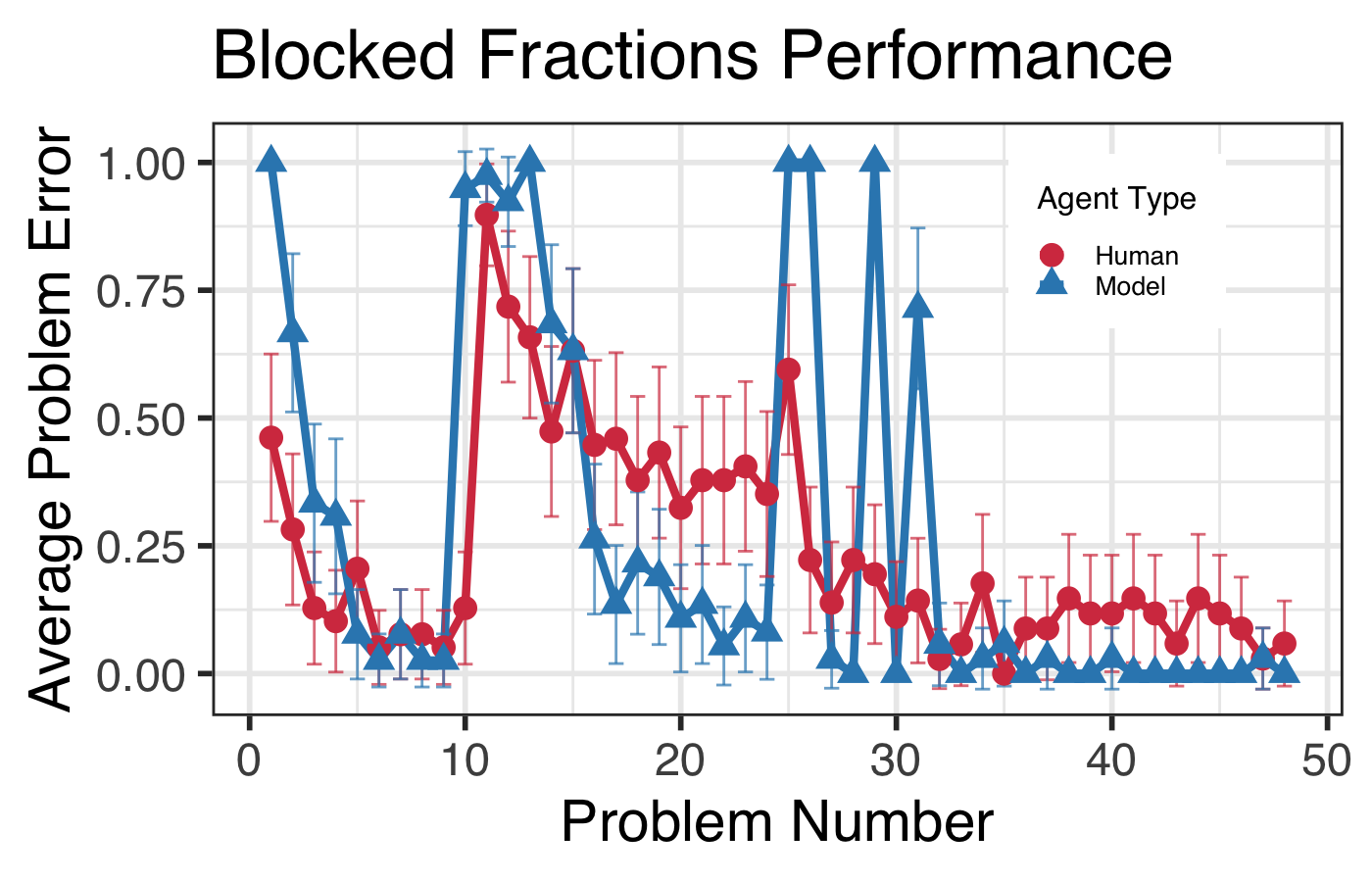

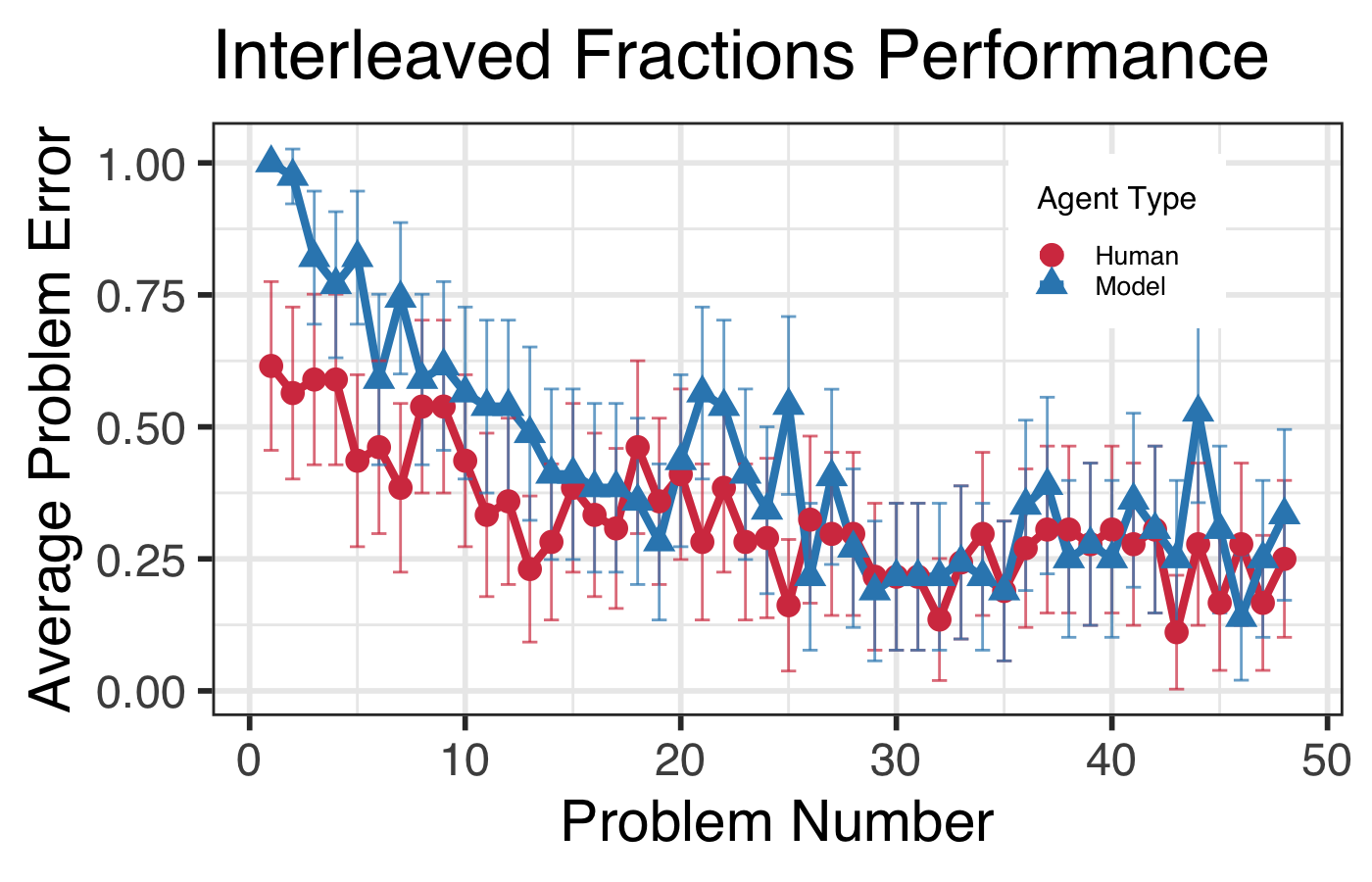

The first study examines the Model Human Learner's predictive capabilities in a fraction arithmetic learning task. Human data came from the Fraction Addition and Multiplication dataset, which compares blocked and interleaved problem sequencing. The contrast between training and posttest performance highlights the model's ability to predict long-term learning outcomes even when errors are prevalent early in training.
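The difference between blocked and interleaved sequencing can be sketched as two orderings of the same problem pool. The problem types, counts, and the use of a seeded random shuffle for interleaving are assumptions made for illustration; the source does not specify how the tutor ordered its items.

```python
import random


def blocked_sequence(problems_by_type):
    """Present every problem of one type before moving to the next type."""
    return [problem for problems in problems_by_type.values() for problem in problems]


def interleaved_sequence(problems_by_type, seed=0):
    """Present the same problems in shuffled order so that types are mixed."""
    sequence = blocked_sequence(problems_by_type)
    random.Random(seed).shuffle(sequence)
    return sequence


def count_type_switches(sequence):
    """Count how often consecutive problems differ in type."""
    return sum(1 for prev, cur in zip(sequence, sequence[1:]) if prev[0] != cur[0])


# Hypothetical pool: each problem is a (type, index) pair.
problems_by_type = {
    "addition": [("addition", i) for i in range(1, 5)],
    "multiplication": [("multiplication", i) for i in range(1, 5)],
}
blocked = blocked_sequence(problems_by_type)
interleaved = interleaved_sequence(problems_by_type, seed=1)
print("blocked switches:", count_type_switches(blocked))
print("interleaved switches:", count_type_switches(interleaved))
```

Both orderings contain exactly the same problems, so any difference in simulated outcomes can be attributed to sequencing alone.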

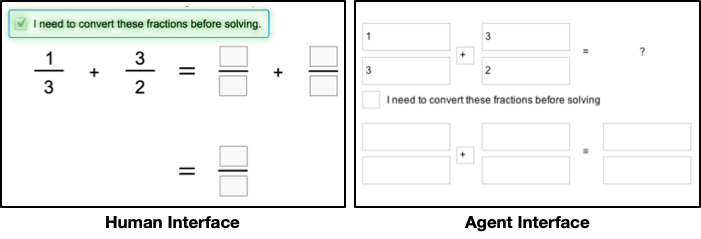

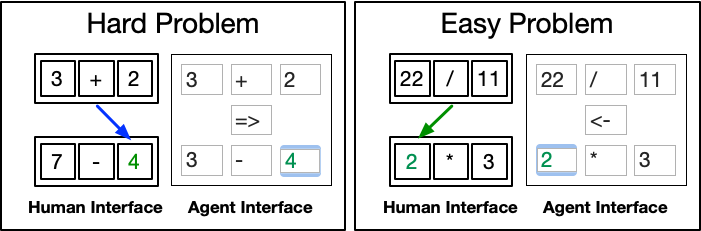

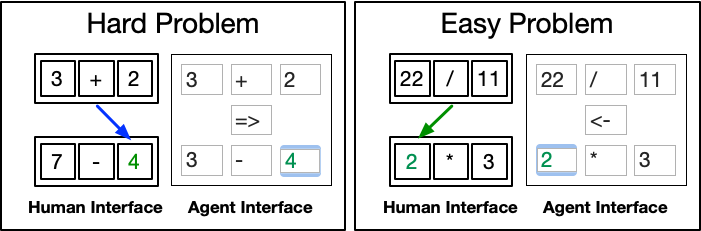

Figure 1: Fraction arithmetic tutor interfaces.

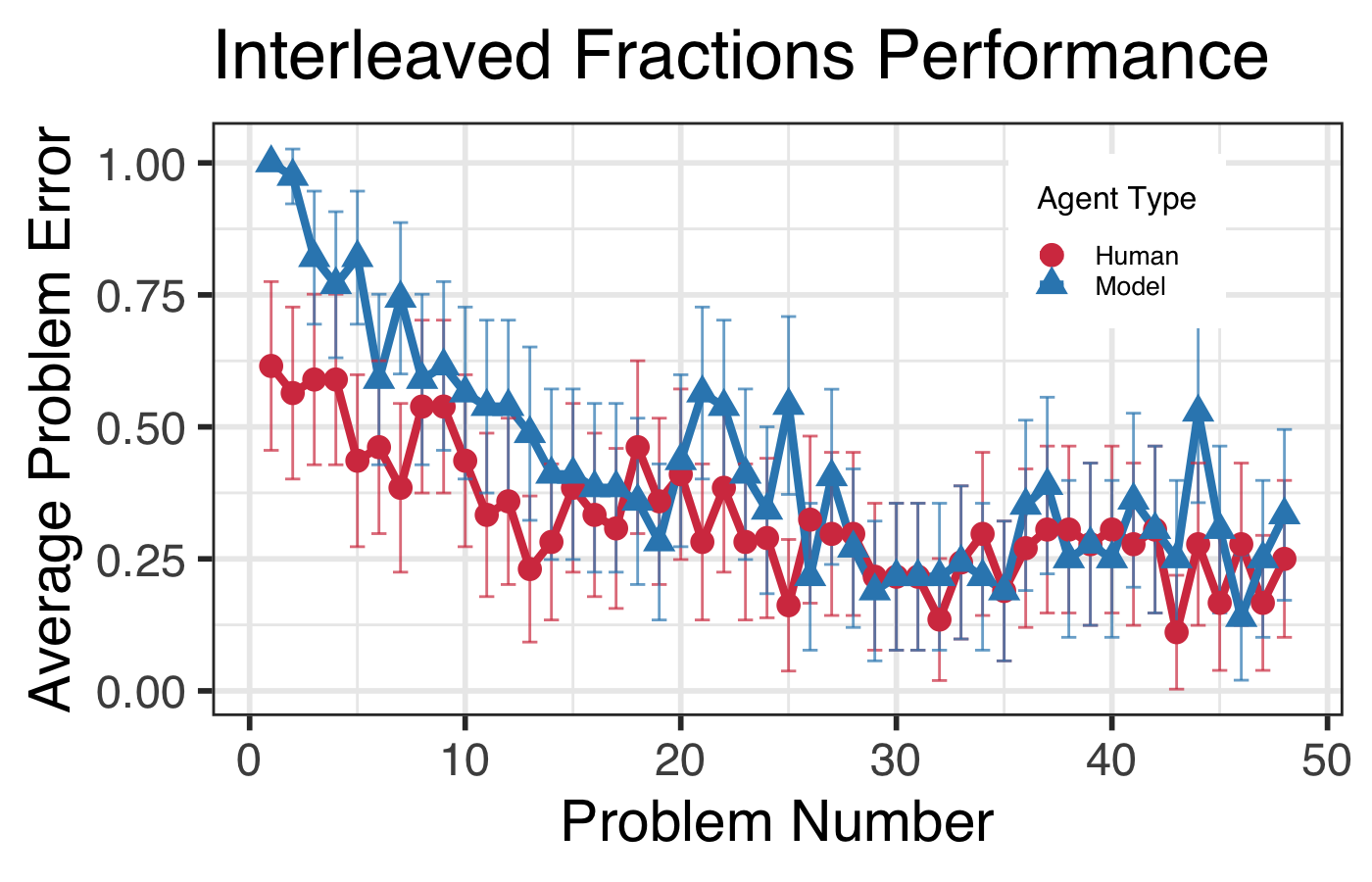

Results and Learning Curves

Both humans and simulated agents in the interleaved condition showed higher posttest performance than those in the blocked condition, despite making errors during training. This finding indicates that the Model Human Learner captures the qualitative trends of human learning trajectories and offers insight into the cognitive advantages of interleaved practice.

Figure 2: Fraction arithmetic accuracy on tutor and posttest problems with 95% confidence intervals (CIs).

Figure 3: Fraction arithmetic learning curves with 95% CIs. In the blocked condition, the tutor switches problem types at problems 11 and 25.
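For readers who want to reproduce error bars like those in the figures, here is a minimal sketch of a 95% confidence interval for an accuracy value. It assumes the normal (Wald) approximation for a proportion; the source does not state which interval method was used, and the counts below are made up for illustration.

```python
import math


def accuracy_ci(n_correct, n_total, z=1.96):
    """Normal-approximation confidence interval for a proportion.

    z=1.96 corresponds to a two-sided 95% interval. The bounds are clipped
    to [0, 1] because the approximation can overshoot near the extremes.
    """
    if n_total <= 0:
        raise ValueError("n_total must be positive")
    p = n_correct / n_total
    half_width = z * math.sqrt(p * (1.0 - p) / n_total)
    return max(0.0, p - half_width), min(1.0, p + half_width)


# Illustrative counts only: 80 correct responses out of 100 attempts.
low, high = accuracy_ci(80, 100)
print(f"accuracy = {80 / 100:.2f}, 95% CI = [{low:.2f}, {high:.2f}]")
# prints: accuracy = 0.80, 95% CI = [0.72, 0.88]
```

With small samples or accuracies near 0 or 1, an interval such as the Wilson score interval is generally a safer choice than this approximation.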

Study 2: Box and Arrows Tutor

Experiment Overview

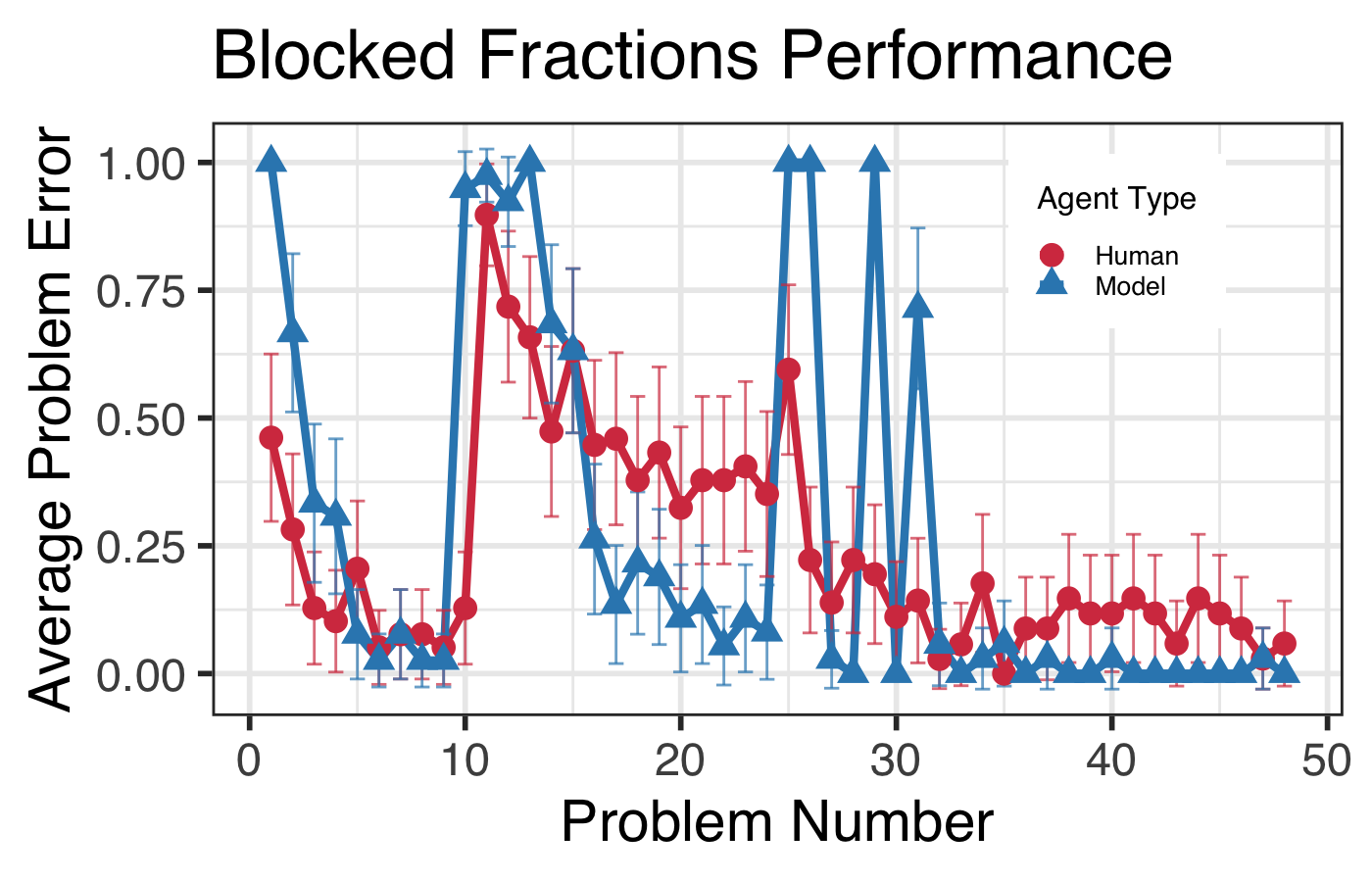

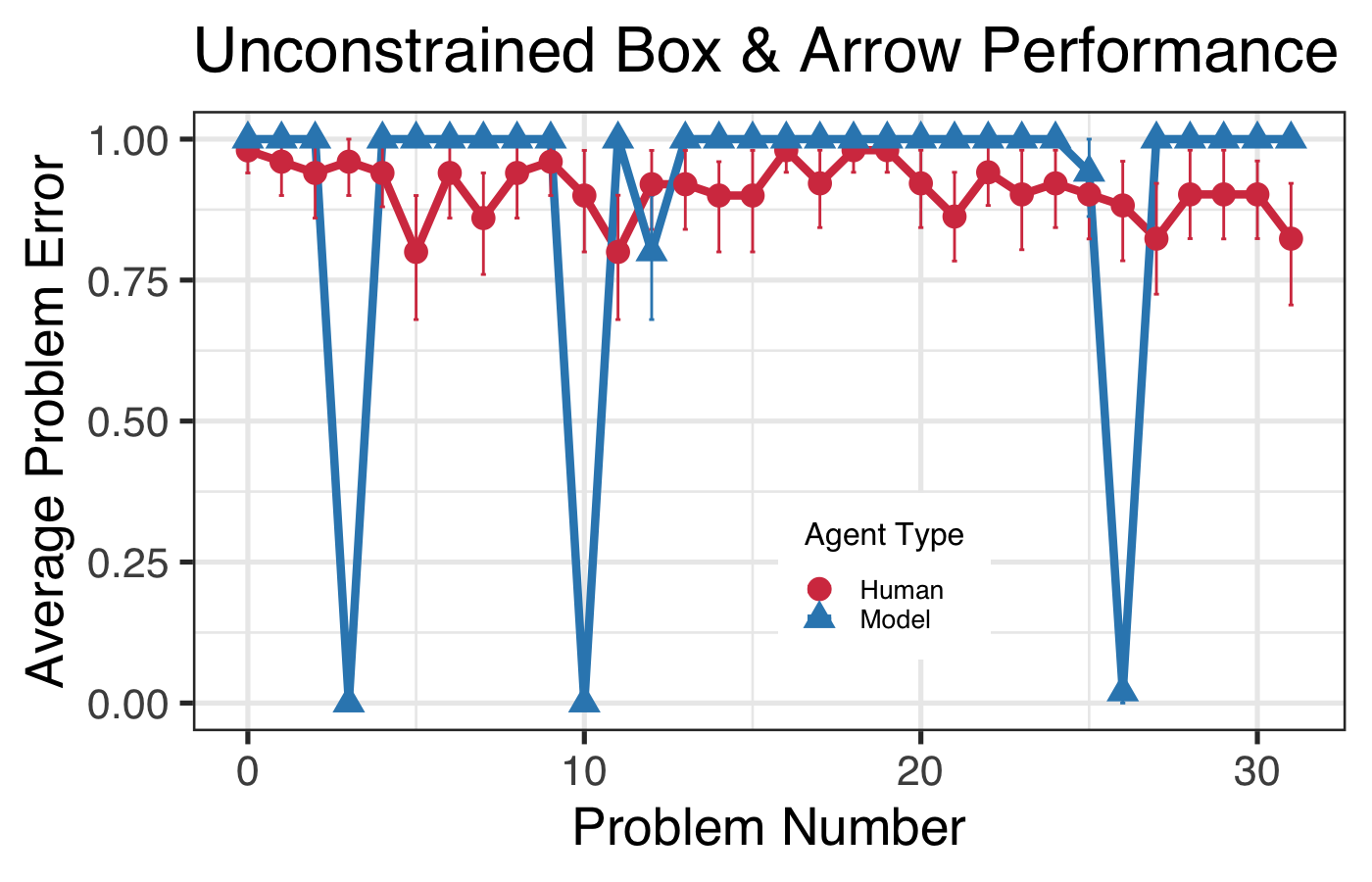

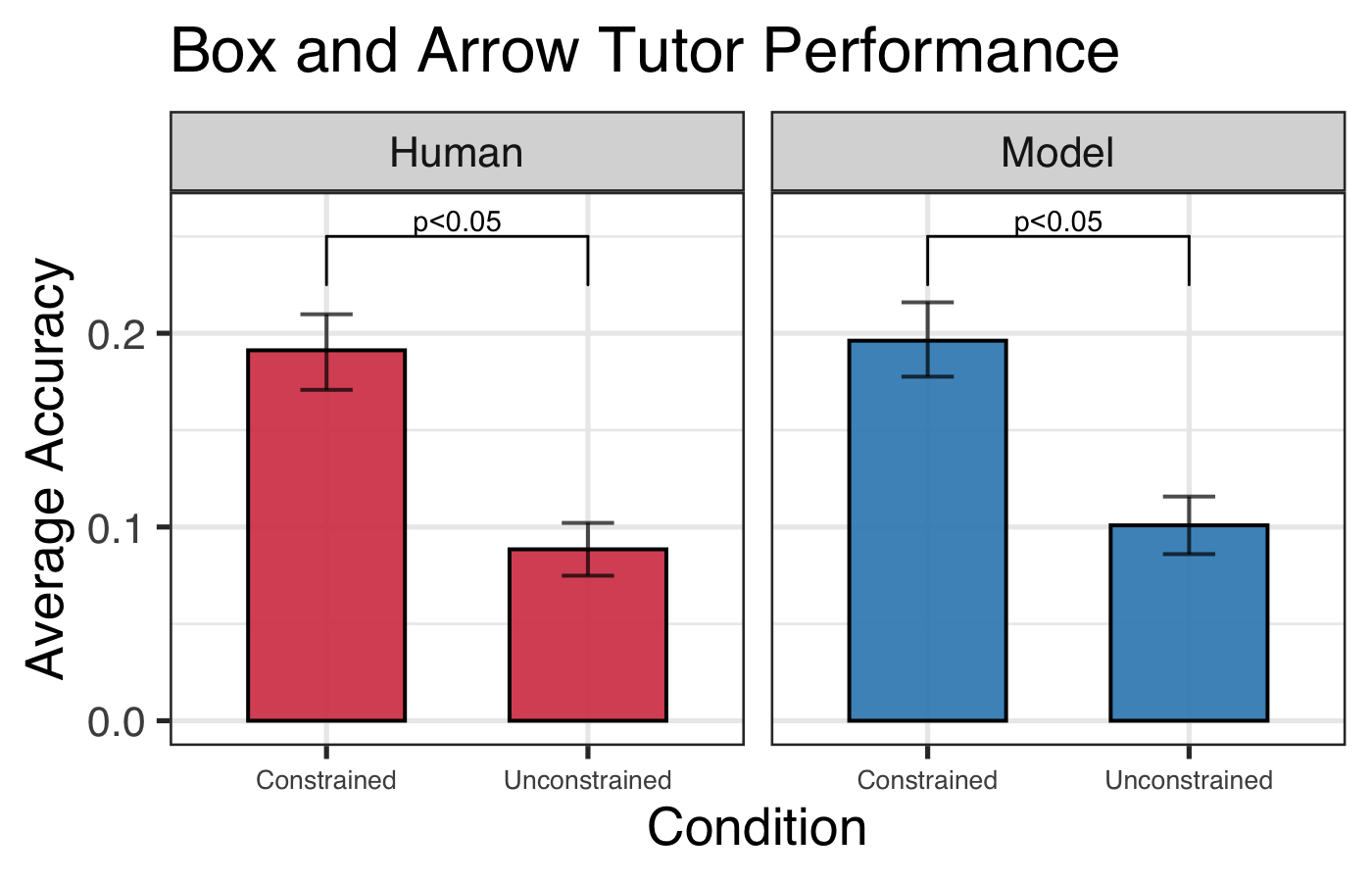

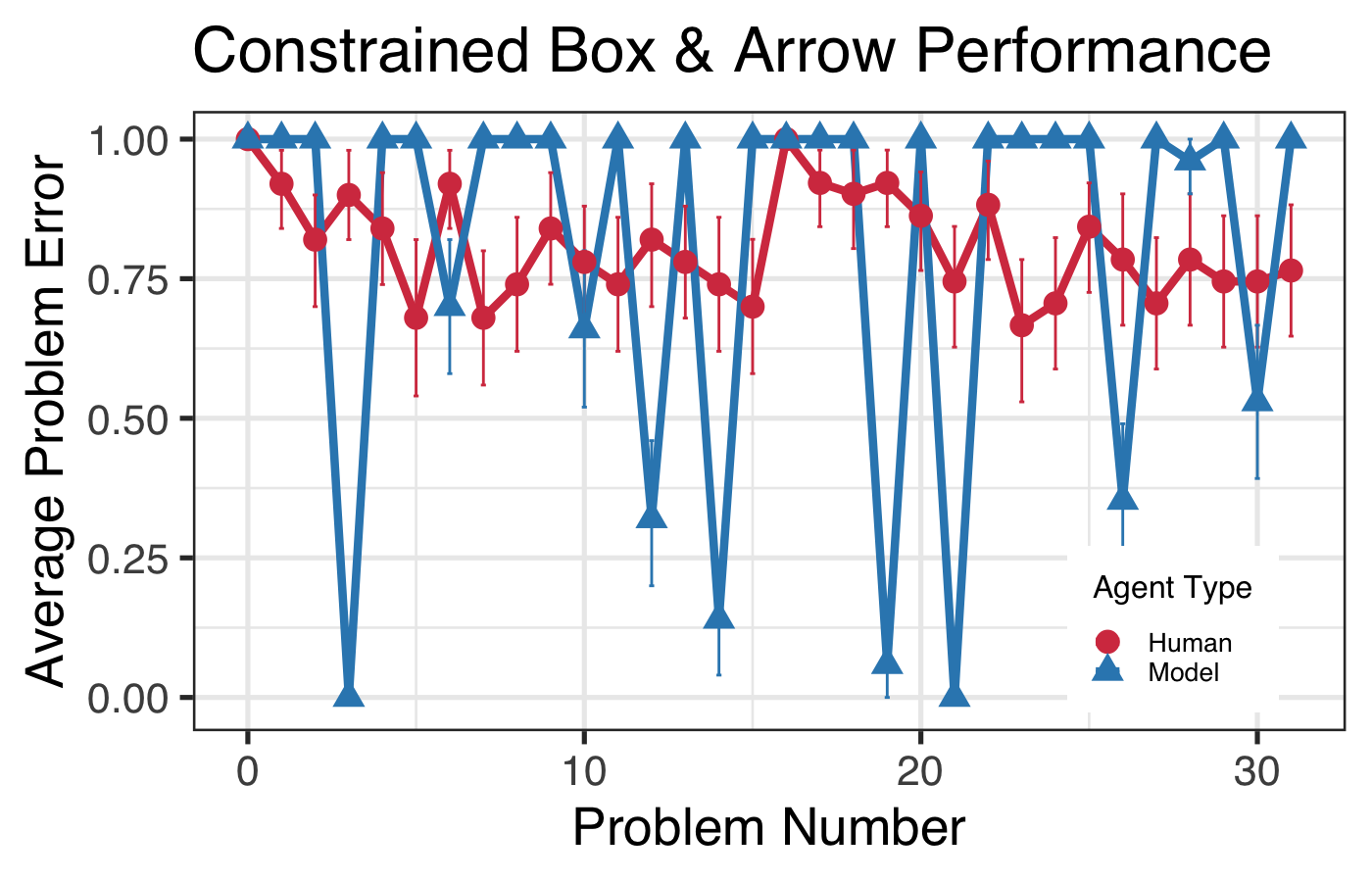

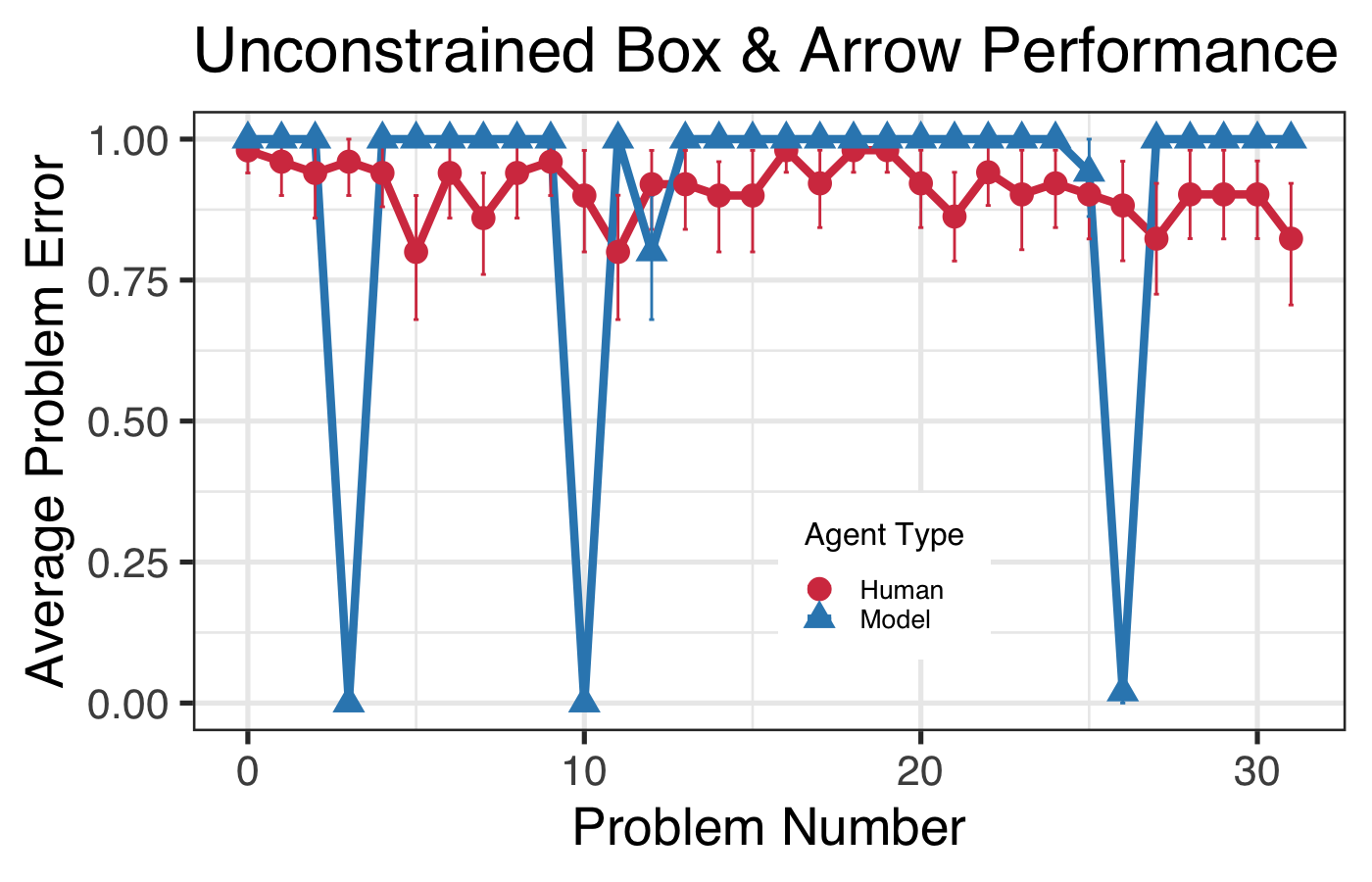

The second validation study uses human data from the Box and Arrow Tutor dataset to examine how constrained versus unconstrained items affect rule discovery. Because the Model Human Learner directly instantiates learning theories and hypotheses, it can be used to test explanations for why problem constraint affects learning.

Figure 4: Box and arrows tutor interfaces.

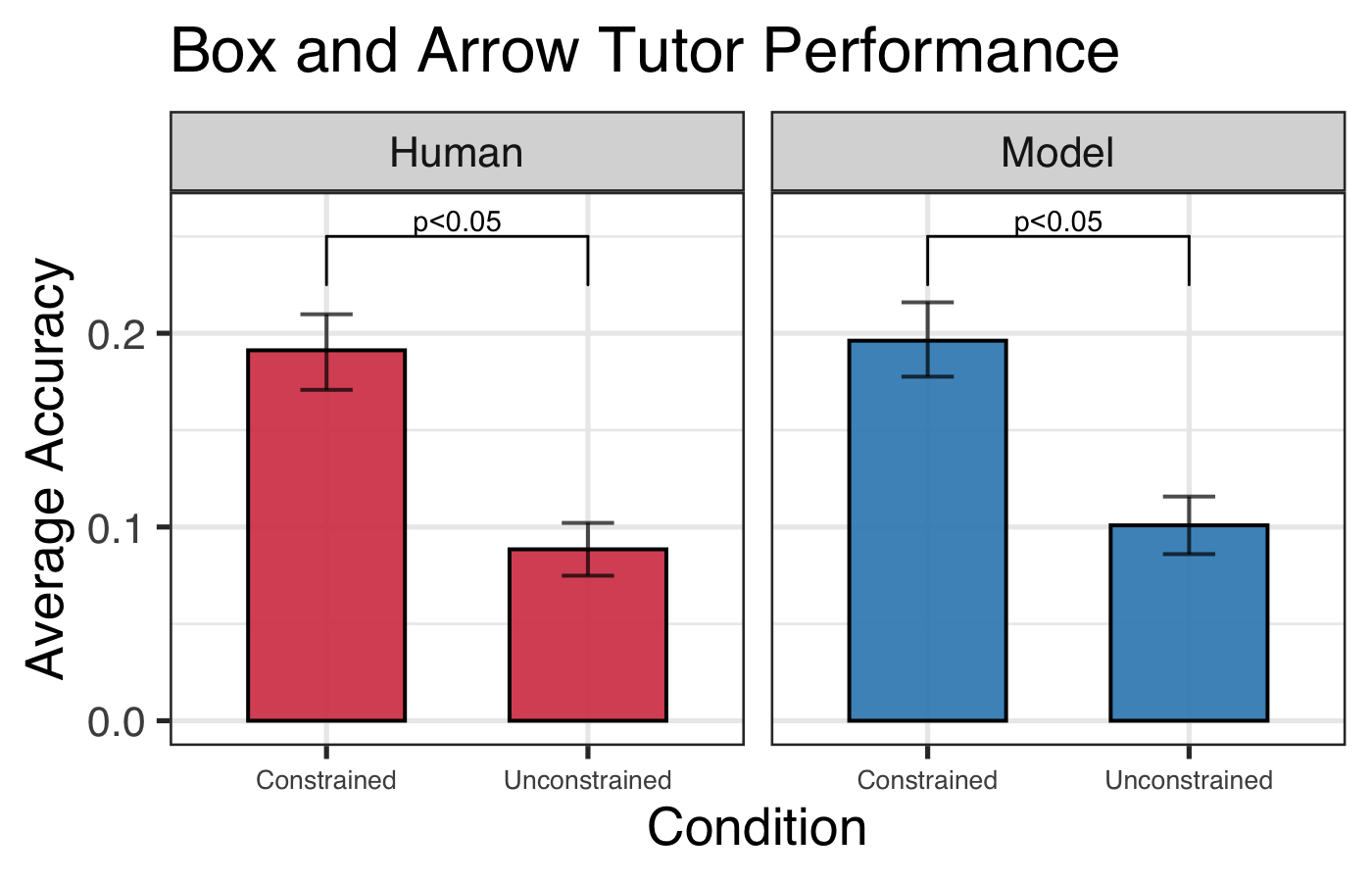

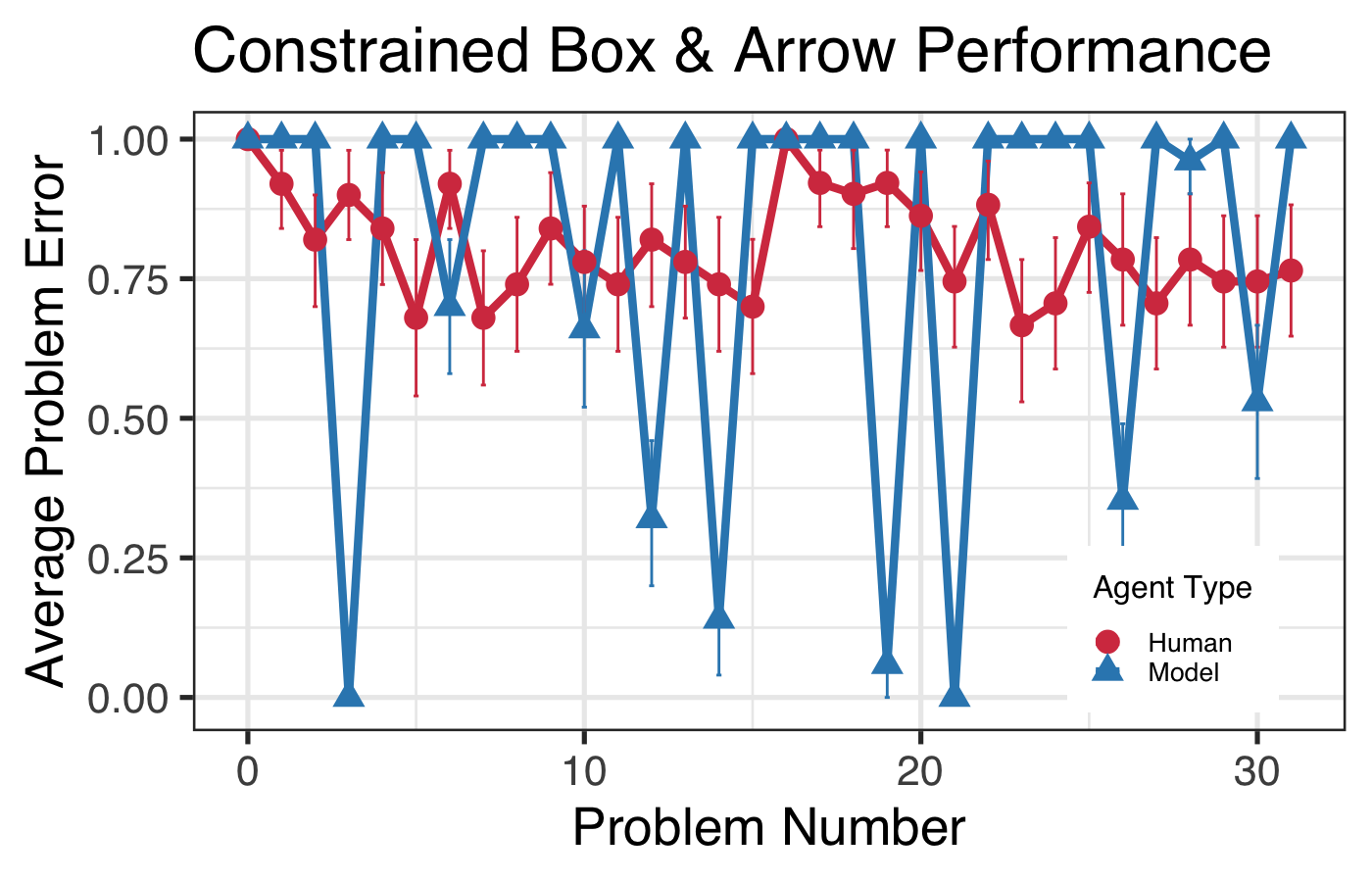

Predictive Insights and Implications

The simulated results aligned with human performance patterns and suggest that the advantage of constrained problems derives from reduced procedural ambiguity rather than from easier computation with whole numbers. This insight challenges prior hypotheses and points to instructional designs that minimize procedural alternatives to enhance learning.
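One way to picture procedural ambiguity is to count how many candidate procedures are consistent with a single worked example. The sketch below is a toy illustration under stated assumptions: the candidate operations and the example numbers are hypothetical and are not the actual box and arrows items.

```python
import operator

# Hypothetical candidate operations a learner might consider.
CANDIDATES = {
    "add": operator.add,
    "subtract": operator.sub,
    "multiply": operator.mul,
}


def consistent_procedures(inputs, target):
    """Return candidate operations that map the two inputs, in either order, to the target."""
    a, b = inputs
    return sorted(
        name
        for name, fn in CANDIDATES.items()
        if fn(a, b) == target or fn(b, a) == target
    )


# An example consistent with two procedures leaves the learner guessing.
print(consistent_procedures((2, 2), 4))  # ['add', 'multiply']
# An example consistent with one procedure identifies the rule directly.
print(consistent_procedures((2, 3), 6))  # ['multiply']
```

In this toy setting, an item that leaves only one consistent procedure plays the role of a constrained problem: the learner has fewer alternatives to rule out before settling on the correct rule.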

Figure 5: Overall box and arrow accuracy with 95% CIs.

Figure 6: Box and arrow learning curves with 95% CIs.

Conclusion

The presented studies demonstrate the validity of computational models of learning as predictive tools for instructional design, supporting the Model Human Learner concept across diverse tasks and interventions. These models offer theoretical insights into effective instructional strategies and generate predictions consistent with observed human learning effects. Future research should expand this concept across a broader array of tasks and interventions to further refine the Model Human Learner's applicability in enhancing educational design decisions.