Quantum Machine Learning: A Hands-on Tutorial for Machine Learning Practitioners and Researchers

Abstract: This tutorial intends to introduce readers with a background in AI to quantum machine learning (QML) -- a rapidly evolving field that seeks to leverage the power of quantum computers to reshape the landscape of machine learning. For self-consistency, this tutorial covers foundational principles, representative QML algorithms, their potential applications, and critical aspects such as trainability, generalization, and computational complexity. In addition, practical code demonstrations are provided in https://qml-tutorial.github.io/ to illustrate real-world implementations and facilitate hands-on learning. Together, these elements offer readers a comprehensive overview of the latest advancements in QML. By bridging the gap between classical machine learning and quantum computing, this tutorial serves as a valuable resource for those looking to engage with QML and explore the forefront of AI in the quantum era.

Summary

- The paper introduces a comprehensive hands-on tutorial that bridges classical machine learning with quantum computing using practical code workflows.

- It rigorously details quantum foundations, QML paradigms, and architectures such as quantum kernels, neural networks, and transformers.

- It evaluates hardware trade-offs and data encoding challenges, offering actionable guidance for both NISQ and fault-tolerant quantum computing regimes.

Quantum Machine Learning: A Comprehensive Tutorial for Practitioners and Researchers

Introduction and Motivation

"Quantum Machine Learning: A Hands-on Tutorial for Machine Learning Practitioners and Researchers" (2502.01146) provides an in-depth and systematic overview of quantum machine learning (QML), aiming to bridge communities in classical machine learning and quantum computing. The tutorial emphasizes not only the theoretical foundations but also real-world implementations, including practical code workflows and detailed discussions on algorithms, architectures, and resource trade-offs.

The motivation stems from computational bottlenecks in classical machine learning, driven both by the physical limitations of Moore’s law and by the exponentially increasing demands of deep learning models. Quantum computing offers a fundamentally different paradigm, using principles such as superposition and entanglement to represent and process information in ways that can be intractable to simulate classically. QML therefore emerges as a promising area, with the potential for algorithmic advantages over classical frameworks in sample complexity, runtime, and representational power.

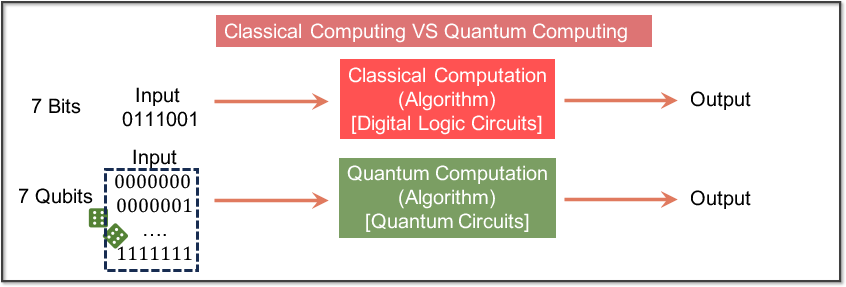

Figure 1: Schematic comparison between classical and quantum computation, highlighting the mapping from classical bits and circuits to quantum qubits, circuits, and measurement as the interface to classical outputs.

Quantum Computing Foundations for Machine Learning

The tutorial begins by rigorously delineating the foundational concepts of quantum computation—the distinctions between classical bits and quantum bits (qubits), logic circuits versus quantum circuits, and the role of quantum measurement. Importantly, it frames computational complexity distinctions in terms of P versus BQP, contextualizing historical results such as Shor's algorithm and the limitations introduced by noisy intermediate-scale quantum (NISQ) hardware.

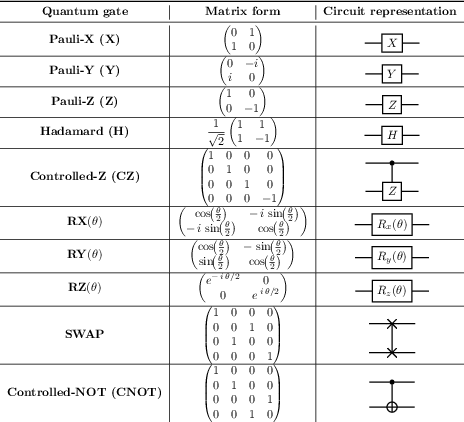

The text reviews basic circuit primitives in mathematical detail: the unitary evolution of qubits, the tensor product structure of multi-qubit systems, and universal gate sets. The isometry extension theorem and Kraus representations are introduced for the general treatment of quantum channels, connecting idealized unitary evolution with practical noisy operations.

Figure 2: Canonical quantum gates forming the basis for universal circuit construction, with both algebraic and graphical representations.
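The gate and tensor-product formalism described above can be made concrete with a few lines of linear algebra. The following is a minimal sketch in plain NumPy rather than code from the tutorial; the helper `is_unitary` and the variable names are illustrative assumptions.

```python
import numpy as np

# Standard single-qubit gates as 2x2 unitary matrices.
I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)

# CNOT with qubit 0 as control and qubit 1 as target
# (basis order |00>, |01>, |10>, |11>).
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=complex)


def is_unitary(U, tol=1e-12):
    """Check U^dagger U = I, the defining property of a quantum gate."""
    return np.allclose(U.conj().T @ U, np.eye(U.shape[0]), atol=tol)


# Multi-qubit states and operators are built with the tensor (Kronecker) product.
ket0 = np.array([1, 0], dtype=complex)
state = np.kron(ket0, ket0)        # |00>
state = np.kron(H, I2) @ state     # Hadamard on qubit 0 only
bell_state = CNOT @ state          # entangles the two qubits

# Squared amplitudes give the computational-basis measurement probabilities.
bell_probs = np.abs(bell_state) ** 2
```

Applying `H` to the first qubit and then `CNOT` prepares the Bell state (|00> + |11>)/sqrt(2), so `bell_probs` places equal weight on the outcomes 00 and 11 and none on 01 or 10.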

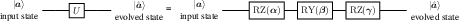

Figure 3: Decomposition of a single-qubit state evolution via sequential applications of quantum gates.

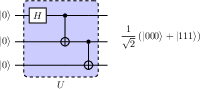

Figure 4: Full decomposition of a generic N=3 multi-qubit circuit as a composition of elementary gates for state preparation.

The tutorial also covers quantum measurement theory, including projective measurements and positive operator-valued measures (POVMs), as well as the distinction between empirical sample complexity and information-theoretic complexity.
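To illustrate how projective measurement turns a quantum state into classical statistics, the sketch below simulates finite-shot estimation of a Pauli-Z expectation value. It is a NumPy illustration under simple assumptions (a single noiseless qubit, computational-basis measurement); the function names are hypothetical and not taken from the tutorial.

```python
import numpy as np


def born_probabilities(state):
    """Probabilities of computational-basis outcomes for a normalized state vector."""
    return np.abs(state) ** 2


def estimate_z_expectation(state, shots, rng):
    """Estimate <Z> on one qubit from `shots` simulated projective measurements."""
    probs = born_probabilities(state)
    outcomes = rng.choice([0, 1], size=shots, p=probs)
    eigenvalues = 1 - 2 * outcomes  # outcome 0 -> +1, outcome 1 -> -1
    return eigenvalues.mean()


theta = np.pi / 3
state = np.array([np.cos(theta / 2), np.sin(theta / 2)], dtype=complex)
exact = np.cos(theta)  # <Z> = cos^2(theta/2) - sin^2(theta/2)

rng = np.random.default_rng(0)
for shots in (100, 10_000):
    estimate = estimate_z_expectation(state, shots, rng)
    print(f"shots={shots}: estimate={estimate:.3f}, exact={exact:.3f}")
```

Because each shot yields a single ±1 outcome, the statistical error of the estimate shrinks roughly as one over the square root of the number of shots, which is one reason measurement cost matters for QML workflows.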

Quantum Machine Learning Paradigms and Task Taxonomy

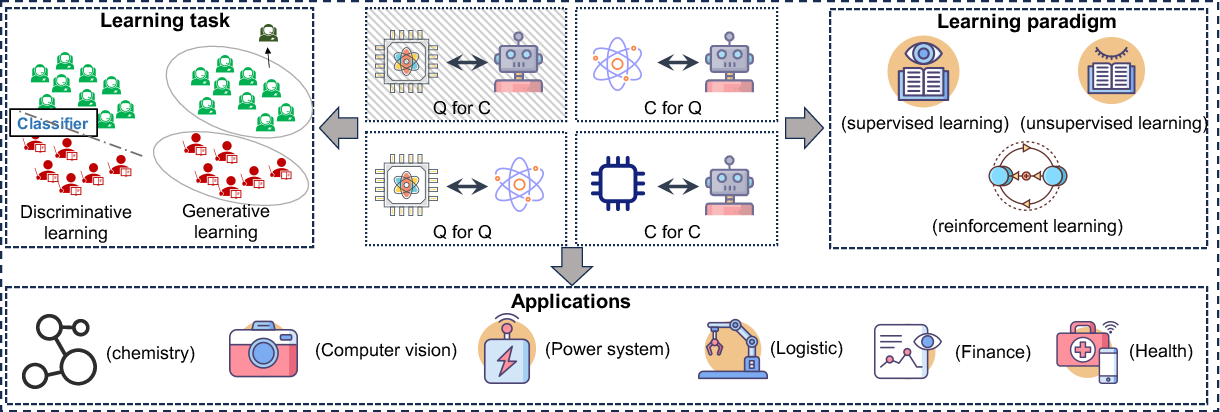

The paper classifies QML research along the axes of hardware (classical or quantum processor) and data (classical or quantum data), distinguishing four sectors: the traditional classical (C for C), classical learning for quantum data (C for Q), quantum learning for classical data (Q for C), and quantum learning for quantum data (Q for Q). The majority of the manuscript focuses on Q for C, the scenario most immediately relevant to classical AI researchers aiming to leverage quantum computation for enhanced machine learning.

Figure 5: Overview of research avenues in QML, partitioned by classical/quantum data and learning, and further subdivided into learning paradigms and applications.

Quantum Hardware Landscape and Architectural Trade-offs

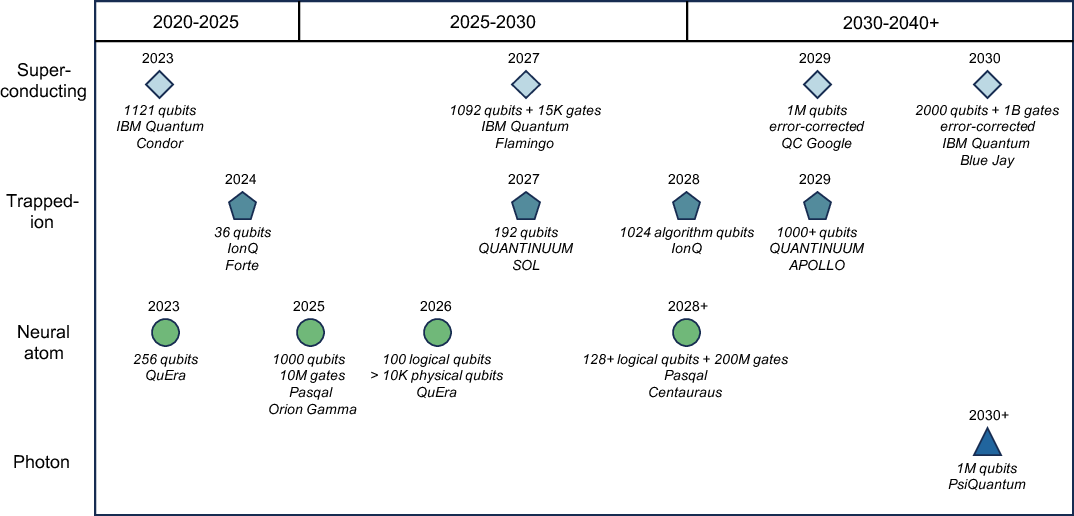

A detailed review of quantum hardware architectures is provided, contrasting superconducting, trapped ion, and Rydberg atom systems. The discussion highlights respective strengths and limitations: e.g., superconducting qubits for scalability, ion traps for connectivity, Rydberg atom arrays for flexible couplings. The critical role of coherence time, gate fidelity, and connectivity constraints is discussed relative to large-scale QML algorithm deployment, contextualized by the path from NISQ architectures to the longer-term goal of fault-tolerant quantum computing (FTQC).

Figure 6: Roadmaps of architectural advances and scaling metrics from key industrial quantum computing efforts, tracking qubit count, quantum volume, and system class.

Quantum Model Architectures: Kernels, Neural Networks, and Transformers

Central to the tutorial are detailed methodological expositions for quantum kernels and quantum neural networks (QNNs), both under NISQ and FTQC scenarios.

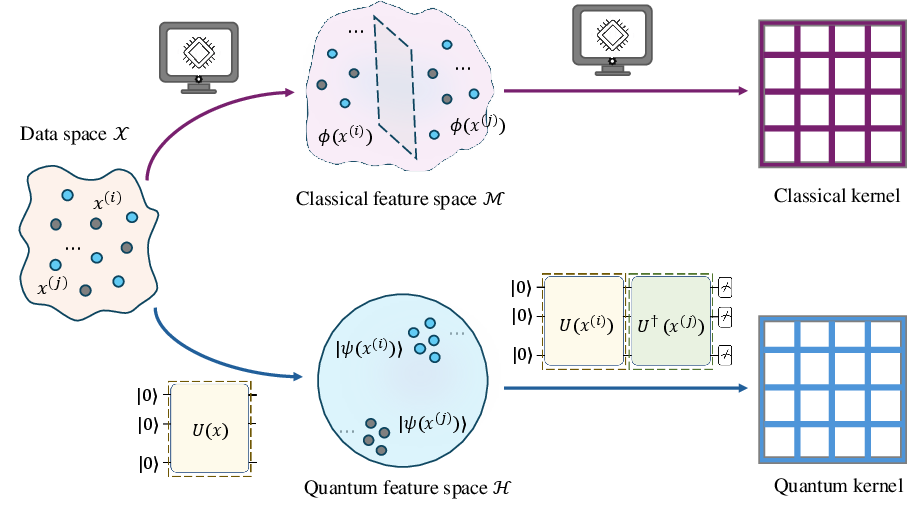

Quantum Kernel Methods

The transition from classical kernel approaches to quantum versions is developed in detail. Quantum feature maps are constructed via data-dependent quantum circuits, and quantum kernel functions are defined via inner products in Hilbert space. The theoretical universality and the construction of efficient quantum kernels are analyzed, with explicit proofs provided for the conditions under which quantum kernels can (approximately) realize arbitrary kernel functions.

Figure 7: Paradigm shift from classical to quantum kernel methods, demonstrating the mapping and similarity evaluation in high-dimensional Hilbert spaces.

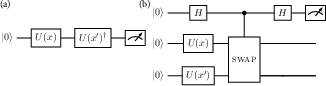

Figure 8: Representative quantum circuits for evaluating kernel inner products: Loschmidt echo (a) and Swap test (b) architectures.
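The kernel construction can be sketched classically for a small feature map. The example below assumes a simple angle-encoding feature map (one RY rotation per feature) and computes the fidelity kernel k(x, y) = |<phi(x)|phi(y)>|^2 exactly with NumPy; on hardware this quantity would instead be estimated from measurement statistics of a Loschmidt-echo or swap-test circuit. The feature map and function names are assumptions chosen for illustration, not necessarily those used in the tutorial.

```python
import numpy as np
from functools import reduce


def feature_state(x):
    """Product state from applying RY(x_i) to |0> on qubit i (angle encoding)."""
    qubits = [np.array([np.cos(xi / 2), np.sin(xi / 2)]) for xi in x]
    return reduce(np.kron, qubits)


def fidelity_kernel(x, y):
    """k(x, y) = |<phi(x)|phi(y)>|^2, the state overlap used as a similarity."""
    return np.abs(np.vdot(feature_state(x), feature_state(y))) ** 2


def kernel_matrix(X):
    """Gram matrix of the fidelity kernel over the rows of X."""
    n = len(X)
    return np.array([[fidelity_kernel(X[i], X[j]) for j in range(n)]
                     for i in range(n)])


# Two nearby points and one distant point (toy data, two features each).
X = np.array([[0.10, 0.20],
              [0.15, 0.25],
              [2.50, 2.80]])
K = kernel_matrix(X)
print(np.round(K, 3))
```

The resulting matrix is symmetric with ones on the diagonal, and nearby inputs receive a larger kernel value than distant ones. A precomputed matrix of this form is the kind of input a classical kernel machine such as an SVM accepts.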

Strong theoretical results include:

- Proof that any Mercer kernel can (in principle) be approximated by quantum kernels to arbitrary precision with polynomial quantum resources, up to additive/multiplicative normalization factors.

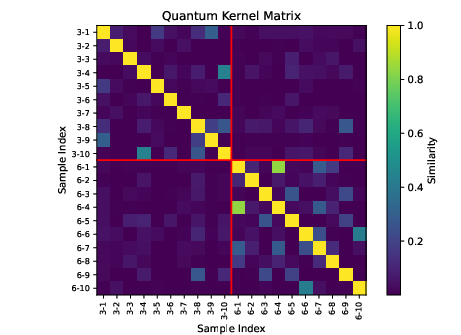

- Quantitative assessment and visualization of quantum kernel matrices and feature-induced similarities in standard benchmarks (e.g., MNIST), with clear block-structure indicating capacity for discrimination.

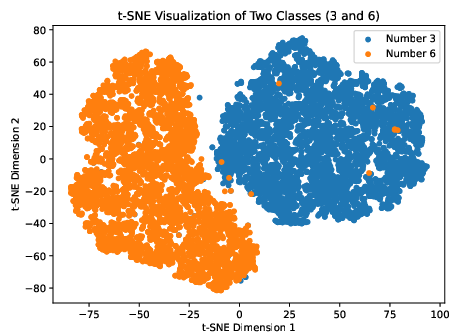

Figure 9: t-SNE visualization of the feature space induced by quantum kernels on MNIST "3" vs "6" digit instances.

Figure 10: Quantum kernel matrix for 20 samples, highlighting intra-class similarity and inter-class separation in kernel structure.

Practical evaluation is demonstrated via SVM performance as a function of training set size, and the tutorial discusses the effect of kernel expressivity, the problem of exponential concentration, and empirical generalization in noisy regimes.

Figure 11: Quantum SVM test accuracy as training set size varies, illustrating data efficiency in quantum kernel regimes.
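Exponential concentration can be seen in a toy setting. The sketch below assumes an angle-encoded product-state feature map, for which the fidelity kernel has the closed form prod_i cos^2((x_i - y_i)/2), and draws inputs uniformly at random. This is a deliberately simple, classically simulable stand-in chosen for illustration; it is not the setting analyzed in the tutorial, where concentration is tied to embedding expressivity, entanglement, and noise.

```python
import numpy as np


def product_kernel(x, y):
    """Fidelity kernel of an angle-encoded product state: prod_i cos^2((x_i - y_i)/2)."""
    return np.prod(np.cos((x - y) / 2) ** 2, axis=-1)


def kernel_statistics(n_qubits, n_pairs, rng):
    """Mean and variance of kernel values over random input pairs in [0, 2*pi)^n."""
    x = rng.uniform(0, 2 * np.pi, size=(n_pairs, n_qubits))
    y = rng.uniform(0, 2 * np.pi, size=(n_pairs, n_qubits))
    values = product_kernel(x, y)
    return values.mean(), values.var()


rng = np.random.default_rng(0)
for n in (1, 2, 4, 8):
    mean, var = kernel_statistics(n, 20_000, rng)
    print(f"n_qubits={n}: mean={mean:.4f}, variance={var:.5f}")
```

In this toy model each qubit contributes an independent factor with mean 1/2, so the average kernel value decays as (1/2)^n and the spread of values shrinks with it. Resolving such small differences from measurement statistics would then require a rapidly growing number of shots.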

Quantum Neural Networks

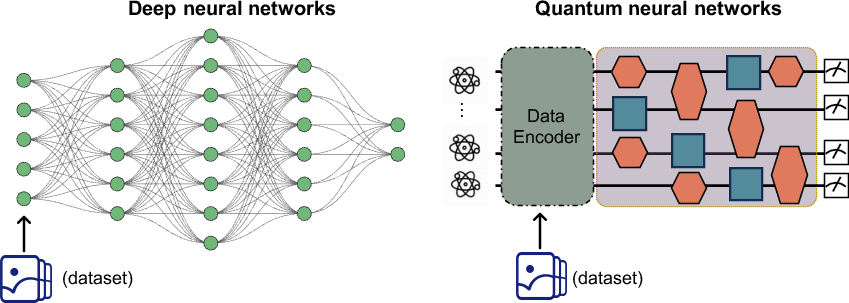

QNNs are positioned as variational models constructed via parameterized quantum circuits, with architectures paralleling deep neural networks but implemented as quantum gates. Practical instantiations of encoding, circuit construction, observable measurement, optimization (via gradient descent and the parameter-shift rule), and code implementation (e.g., with PennyLane) are given.

Figure 12: Structural comparison of DNNs and QNNs, emphasizing the replacement of linear layers with quantum circuits and the iterative optimization process.
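The encode, evolve, measure, and optimize loop can be sketched on a single simulated qubit. The example below uses plain NumPy rather than PennyLane, and the one-parameter circuit, loss, and hyperparameters are illustrative assumptions. It encodes an input with RY(x), applies a trainable RY(theta), measures <Z>, and obtains gradients from the parameter-shift rule, which for this gate evaluates the circuit at theta ± pi/2.

```python
import numpy as np


def ry(angle):
    """Matrix of the single-qubit rotation RY(angle) = exp(-i * angle * Y / 2)."""
    c, s = np.cos(angle / 2), np.sin(angle / 2)
    return np.array([[c, -s], [s, c]])


Z = np.diag([1.0, -1.0])


def qnn_output(x, theta):
    """Encode x with RY(x), apply trainable RY(theta), and return <Z>."""
    state = ry(theta) @ ry(x) @ np.array([1.0, 0.0])
    return state @ Z @ state


def parameter_shift_grad(x, theta):
    """Gradient of <Z> with respect to theta from two shifted circuit evaluations."""
    return 0.5 * (qnn_output(x, theta + np.pi / 2)
                  - qnn_output(x, theta - np.pi / 2))


def train(xs, ys, theta=0.0, lr=0.2, steps=200):
    """Gradient descent on mean squared error using parameter-shift gradients."""
    for _ in range(steps):
        grad = np.mean([2 * (qnn_output(x, theta) - y) * parameter_shift_grad(x, theta)
                        for x, y in zip(xs, ys)])
        theta -= lr * grad
    return theta


# Toy regression task: targets generated by the same circuit with theta = 0.7.
xs = np.linspace(0, np.pi, 8, endpoint=False)
ys = np.cos(xs + 0.7)
theta_fit = train(xs, ys)
print(f"fitted theta = {theta_fit:.4f}")
```

For this circuit the output is cos(x + theta), so the parameter-shift gradient can be checked against the analytic derivative -sin(x + theta), and training on the toy data should recover a value close to 0.7. Unlike finite differences, the shift rule is exact for gates of this form, which is why it is widely used when gradients must be estimated on hardware.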

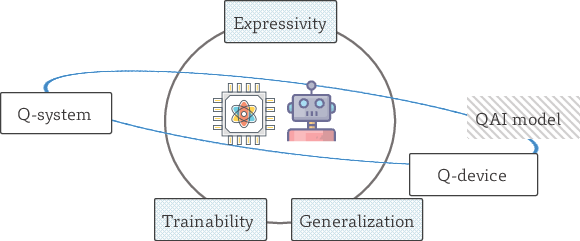

The learnability triangle—expressivity, generalization, trainability—is analyzed both in practice and theoretically (Figure 13):

- Covering number theory is developed for QNN hypothesis spaces, with explicit dependence on circuit depth, parameter count, and qubit number.

- Generalization bounds are provided, with sublinear dependence on parameterization and explicit sensitivity to QNN architectural choices.

- The barren plateau phenomenon is treated in depth, including analytic scaling of vanishing gradients in randomly-parameterized circuits and strategies for mitigation.

Figure 13: Schematic of the trade-offs defining learnability in QML: expressivity (hypothesis space), trainability (optimization landscape), generalization (prediction on unseen data).

Quantum Transformers

Quantum extensions of Transformer architectures are presented for the FTQC regime. Each subcomponent (embedding, self-attention, normalization, feed-forward layers) is translated into quantum algorithmic primitives using block-encoding, quantum linear algebra, and polynomial approximations (e.g., for softmax and activation functions). The tutorial provides both circuit-level descriptions and formal scaling analyses.

Significant results include:

- Derivation of end-to-end runtime scaling for quantum Transformers, with quadratic speedup over classical inference under certain QRAM and block encoding models.

- Empirical studies of matrix norm scaling for commonly used embeddings in LLMs, supporting the feasibility of the quantum speedup in practical regimes.

Data Encoding, Read-in/Read-out, and Linear Algebra Primitives

The authors devote considerable attention to practical bottlenecks: state preparation (basis, angle, amplitude, and QRAM-based encoding), quantum measurement, and block-encoding as the foundational primitive for quantum linear algebra.

Figure 14: Example circuit for basis encoding—the integer 6 mapped to computational basis state ∣110⟩.
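The encoding schemes can be compared by writing out the state vectors they produce. The sketch below is a NumPy illustration that returns the target states only; it does not construct the preparation circuits, and the function names are assumptions made for this example.

```python
import numpy as np
from functools import reduce


def basis_encode(value, n_qubits):
    """Map an integer to a computational basis state, e.g. 6 -> |110> on 3 qubits."""
    if not 0 <= value < 2 ** n_qubits:
        raise ValueError("value does not fit in n_qubits bits")
    state = np.zeros(2 ** n_qubits)
    state[value] = 1.0
    return format(value, f"0{n_qubits}b"), state


def angle_encode(x):
    """One qubit per feature: RY(x_i)|0> = cos(x_i/2)|0> + sin(x_i/2)|1>."""
    return reduce(np.kron, [np.array([np.cos(xi / 2), np.sin(xi / 2)]) for xi in x])


def amplitude_encode(x):
    """Store a length-2^n vector in the amplitudes of n qubits after normalization."""
    x = np.asarray(x, dtype=float)
    if len(x) == 0 or len(x) & (len(x) - 1):
        raise ValueError("length must be a power of two")
    norm = np.linalg.norm(x)
    if norm == 0:
        raise ValueError("cannot encode the zero vector")
    return x / norm


bits, basis_state = basis_encode(6, 3)
print(bits)                                         # bit string labelling the basis state
print(angle_encode([0.0, np.pi]).round(3))          # two features -> two qubits
print(amplitude_encode([3.0, 4.0, 0.0, 0.0]))       # four values -> two qubits
```

The trade-off is visible in the shapes: angle encoding uses one qubit per feature, while amplitude encoding packs 2^n values into n qubits at the price of a state that is generally much harder to prepare.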

Figure 15: QRAM encoding example—storing and superposing classical dataset items in quantum memory for parallel access.

Figure 16: Quantum circuit schematic for block encoding, enabling matrix operations as subroutines within quantum algorithms.
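Block encoding embeds a non-unitary matrix as the top-left block of a larger unitary. The sketch below builds one such unitary numerically for the special case of a Hermitian matrix with spectral norm at most 1, using a single ancilla qubit. This textbook dilation is shown only as an illustration; the tutorial's constructions and normalization conventions may differ, and the helper names are assumptions.

```python
import numpy as np


def psd_sqrt(M):
    """Square root of a Hermitian positive semidefinite matrix via eigendecomposition."""
    vals, vecs = np.linalg.eigh(M)
    vals = np.clip(vals, 0.0, None)  # guard against tiny negative round-off
    return (vecs * np.sqrt(vals)) @ vecs.conj().T


def block_encode(A):
    """Unitary U = [[A, S], [S, -A]] with S = sqrt(I - A^2), for Hermitian ||A|| <= 1."""
    A = np.asarray(A, dtype=complex)
    if not np.allclose(A, A.conj().T):
        raise ValueError("this sketch assumes a Hermitian matrix")
    if np.linalg.norm(A, 2) > 1 + 1e-12:
        raise ValueError("rescale A so that its spectral norm is at most 1")
    S = psd_sqrt(np.eye(len(A)) - A @ A)
    return np.block([[A, S], [S, -A]])


A = np.array([[0.5, 0.2],
              [0.2, -0.3]])
U = block_encode(A)

# Applying U to |0>_ancilla (x) |psi> and keeping the ancilla-0 block yields A|psi>.
psi = np.array([1.0, 0.0])
out = U @ np.concatenate([psi, np.zeros(2)])
print(np.round(out[:2].real, 3), "equals A @ psi =", A @ psi)
```

Because S is a function of A, the two commute, which is what makes U unitary here. A general matrix would first be rescaled by a normalization factor, and that factor is where the matrix-norm dependence of quantum linear algebra runtimes enters.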

The limitations of input/output are carefully delineated: circuit depth that can grow exponentially with the number of qubits for generic amplitude encoding, the necessity of measurement reduction, and general strategies for hybrid classical/quantum preprocessing.

Hands-On Implementation and Code Narratives

Throughout the tutorial, code workflows (primarily via PennyLane) are provided for all discussed techniques: encoding protocols, kernel computation, QNN construction, GANs and classification workflows, and full end-to-end pipelines on classical datasets (e.g., MNIST, Wine). Furthermore, advanced topics such as measurement frugality, adaptive shot allocation, and variational circuit pruning are positioned as practical concerns for current and near-term hardware.

Bibliographic Remarks, Open Problems, and Future Outlook

Each chapter concludes with a section reviewing key literature, survey papers, and recent advances—handing the reader a curated entry point into specialized research areas. The final section discusses open questions:

- Hard resource barriers in quantum data loading and state preparation.

- Limitations imposed by NISQ-era hardware, and strategies for QML algorithms under noise and decoherence.

- Existence and identification of real-world datasets where QML provides provable or empirical advantage over classical approaches.

- Scalability of quantum neural and transformer architectures, and the challenge of implementing deep, multi-layer quantum models in the absence of efficient tomography or error-correction.

Conclusion

This tutorial establishes a rigorous reference point for QML, providing the technical depth, mathematical formalism, and practical guidance required for researchers aiming to both understand and implement quantum-enhanced machine learning algorithms. The authoritative treatment of both theory and implementation enables critical evaluation of claims of quantum advantage, and the explicit treatment of limitations is essential for realistic assessment of the field.

Future developments are expected to focus on scalable data encoding protocols, error correction for deep models, and the empirical identification of problem classes where QML surpasses classical baselines. The modularity of the architectures and the generality of the framework outlined here provide a robust foundation for such extensions.
