- The paper introduces a novel approach using Conditional Diffusion Models to generalize on unseen state combinations in complex decision-making tasks.

- It demonstrates superior performance over traditional RL methods through experiments in maze navigation, driving scenarios, and multi-agent settings.

- The study emphasizes the importance of latent vector conditioning and cross-attention in enhancing combinatorial generalization in decision-making.

State Combinatorial Generalization In Decision Making With Conditional Diffusion Models

Introduction

The paper addresses the challenge of zero-shot generalization in combinatorial decision-making tasks by leveraging Conditional Diffusion Models (CDMs). Real-world environments often present states as combinations of fundamental elements (e.g., vehicles, pedestrians), producing a combinatorial state space too large to explore fully during training. Traditional RL methods often fail to generalize to unseen combinations of these elements. The authors propose training CDMs on expert trajectories and report that the resulting models generalize to states formed by novel combinations of previously seen elements.

In environments characterized by combinatorial complexity, the state space is defined by combinations of basic elements with distinct attributes. The central challenge lies in generalizing to out-of-combination (OOC) states—those composed of new element combinations not encountered during training. The paper formalizes this OOC generalization as requiring algorithms to handle out-of-support states, thereby addressing a more realistic and challenging facet of distribution shift than typically explored in RL.
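To make the OOC definition concrete, the following minimal sketch treats a state as a set of element labels and checks whether a test state is out-of-combination relative to a training set. The set-based representation, the function name `is_out_of_combination`, and the example labels are illustrative assumptions, not the paper's formalism.

```python
def is_out_of_combination(state, train_states):
    """Return True if every element of `state` appeared in training,
    but this exact combination of elements did not.

    Assumption: a state is modeled as an unordered collection of element labels.
    """
    seen_elements = set()
    for s in train_states:
        seen_elements.update(s)
    seen_combos = {frozenset(s) for s in train_states}
    combo = frozenset(state)
    # All elements are familiar, yet the combination lies outside the training support.
    return combo <= seen_elements and combo not in seen_combos


# Hypothetical training data: two observed combinations of three elements.
train_states = [("car", "pedestrian"), ("car", "cyclist")]

novel_combo = is_out_of_combination(("pedestrian", "cyclist"), train_states)
seen_combo = is_out_of_combination(("car", "pedestrian"), train_states)
unseen_element = is_out_of_combination(("car", "truck"), train_states)
```

Under this toy definition, a state containing an element never seen in training (here `"truck"`) is not counted as OOC, which matches the summary's focus on new combinations of previously seen elements.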

Limitations of Traditional RL

Traditional RL algorithms estimate expected cumulative rewards, but their value predictions become unreliable in out-of-support states under distribution shift. State distribution shifts that lead to OOC states cannot be remedied merely by increasing exploration or data collection. Diffusion models, by contrast, are presented as naturally suited to generalizing across combinations because of their manifold learning abilities.

Conditional Diffusion Models

CDMs denoise trajectories conditioned on the current state and on latent vectors that encode compositionality. The diffusion process relies on a learned manifold structure that captures the combinatorial nature of the state space. The paper offers theoretical and empirical evidence that CDMs can generate OOC states with non-zero probability, which supports generalization across the state space.
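The conditional denoising idea can be sketched with a standard DDPM-style reverse sampling loop. This is a minimal illustration under stated assumptions, not the authors' implementation: the linear noise schedule, the scalar `state` and `latent`, and `toy_noise_model` (an exact noise predictor for a toy case where the clean trajectory is the constant `state + latent`) are all hypothetical stand-ins for a trained network.

```python
import math
import random


def make_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule and the cumulative products alpha_bar_t."""
    betas = [beta_start + (beta_end - beta_start) * t / (num_steps - 1)
             for t in range(num_steps)]
    alpha_bars, running = [], 1.0
    for b in betas:
        running *= 1.0 - b
        alpha_bars.append(running)
    return betas, alpha_bars


def toy_noise_model(x, alpha_bar, state, latent):
    """Hypothetical stand-in for a trained eps-predictor conditioned on (state, latent).

    Assumes the only clean trajectory is the constant state + latent, for which
    the noise in x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * eps is known exactly.
    """
    x0 = state + latent
    return [(xi - math.sqrt(alpha_bar) * x0) / math.sqrt(1.0 - alpha_bar) for xi in x]


def sample_trajectory(noise_model, state, latent, horizon=8, num_steps=50, seed=0):
    """DDPM ancestral sampling: start from Gaussian noise, denoise step by step."""
    rng = random.Random(seed)
    betas, alpha_bars = make_schedule(num_steps)
    x = [rng.gauss(0.0, 1.0) for _ in range(horizon)]
    for t in reversed(range(num_steps)):
        eps = noise_model(x, alpha_bars[t], state, latent)
        coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        mean = [(xi - coef * ei) / math.sqrt(1.0 - betas[t]) for xi, ei in zip(x, eps)]
        sigma = math.sqrt(betas[t]) if t > 0 else 0.0  # no noise on the final step
        x = [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
    return x


trajectory = sample_trajectory(toy_noise_model, state=1.0, latent=0.5)
```

In a real system the noise model would be a neural network trained on expert trajectories, and the trajectory, state, and latent would be vectors rather than scalars; the sampling loop itself keeps the same shape.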

Experimental Evaluation

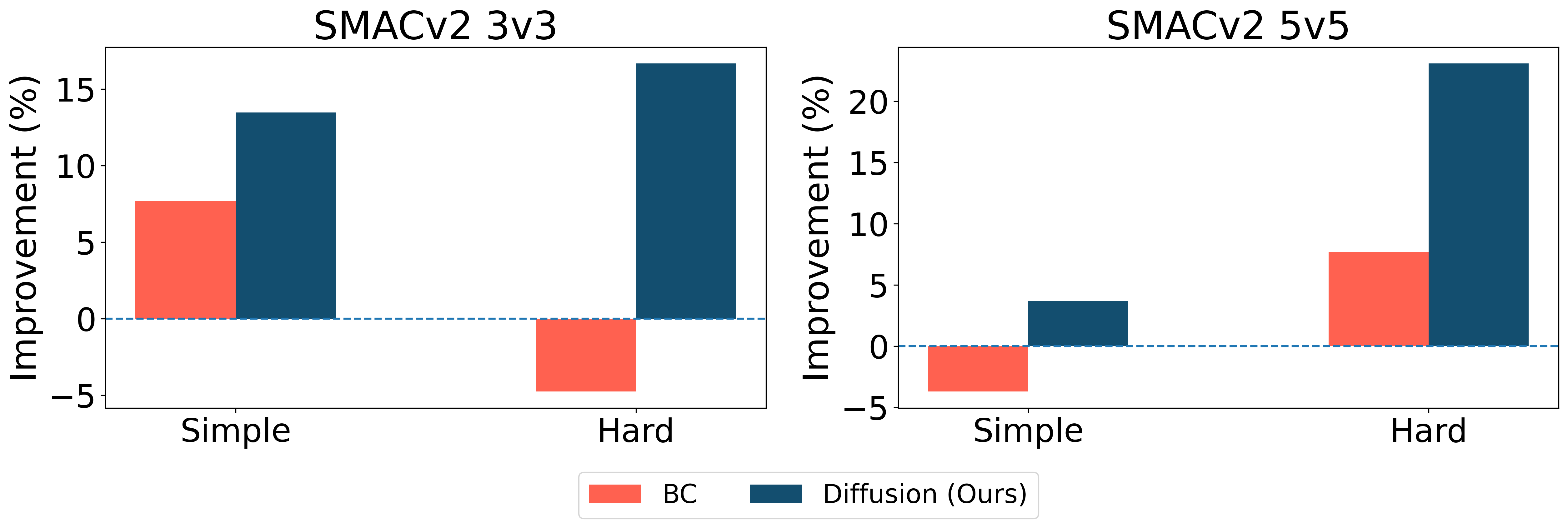

The method is evaluated in three domains: maze navigation, driving (Roundabout environment), and multi-agent settings (StarCraft). CDMs outperform baseline methods, including traditional RL and behavior cloning, across scenarios that vary in environmental complexity and novelty.

Ablation Studies and Architectural Considerations

The inclusion of combinatorial inductive bias through latent vectors is crucial. The ablation study shows that cross-attention between these latent vectors and the diffusion model’s outputs yields better generalization than simple concatenation. The authors highlight the importance of effective model conditioning to leverage the diffusion model fully.
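The difference between the two conditioning styles can be illustrated with a small pure-Python sketch. It is an assumption-laden toy rather than the paper's architecture: learned query, key, and value projections are omitted, the latents serve as both keys and values, and the names `cross_attend` and `concatenate` are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross_attend(queries, latents):
    """Each query (a trajectory feature) takes a softmax-weighted average of the latents.

    Simplification: no learned projections; latents act as both keys and values.
    """
    d = len(latents[0])
    out = []
    for q in queries:
        weights = softmax([dot(q, k) / math.sqrt(d) for k in latents])
        out.append([sum(w * v[j] for w, v in zip(weights, latents)) for j in range(d)])
    return out


def concatenate(queries, latents):
    """Baseline conditioning: append all latents, flattened, to every query."""
    flat = [x for v in latents for x in v]
    return [q + flat for q in queries]


queries = [[1.0, 0.0], [0.0, 1.0]]
latents = [[1.0, 2.0], [3.0, -1.0], [0.5, 0.5]]

attended = cross_attend(queries, latents)
attended_reordered = cross_attend(queries, latents[::-1])
concat = concatenate(queries, latents)
concat_reordered = concatenate(queries, latents[::-1])
```

One property visible in this toy is that the attention output does not depend on the order in which the latents are listed, whereas the concatenated vector does. Whether that property accounts for the generalization gap reported in the ablation is not stated in the summary above, so treat it as a plausible intuition only.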

Conclusion

This paper outlines a path toward combinatorial generalization in decision-making tasks using Conditional Diffusion Models, addressing key limitations of standard RL methods. It frames state generalization as a manifold learning problem within diffusion processes, which offers a way to handle combinatorial state spaces. Future work may refine these models to improve computational efficiency and extend them to more complex scenarios involving unseen fundamental elements.