DAMAGE: Detecting Adversarially Modified AI Generated Text

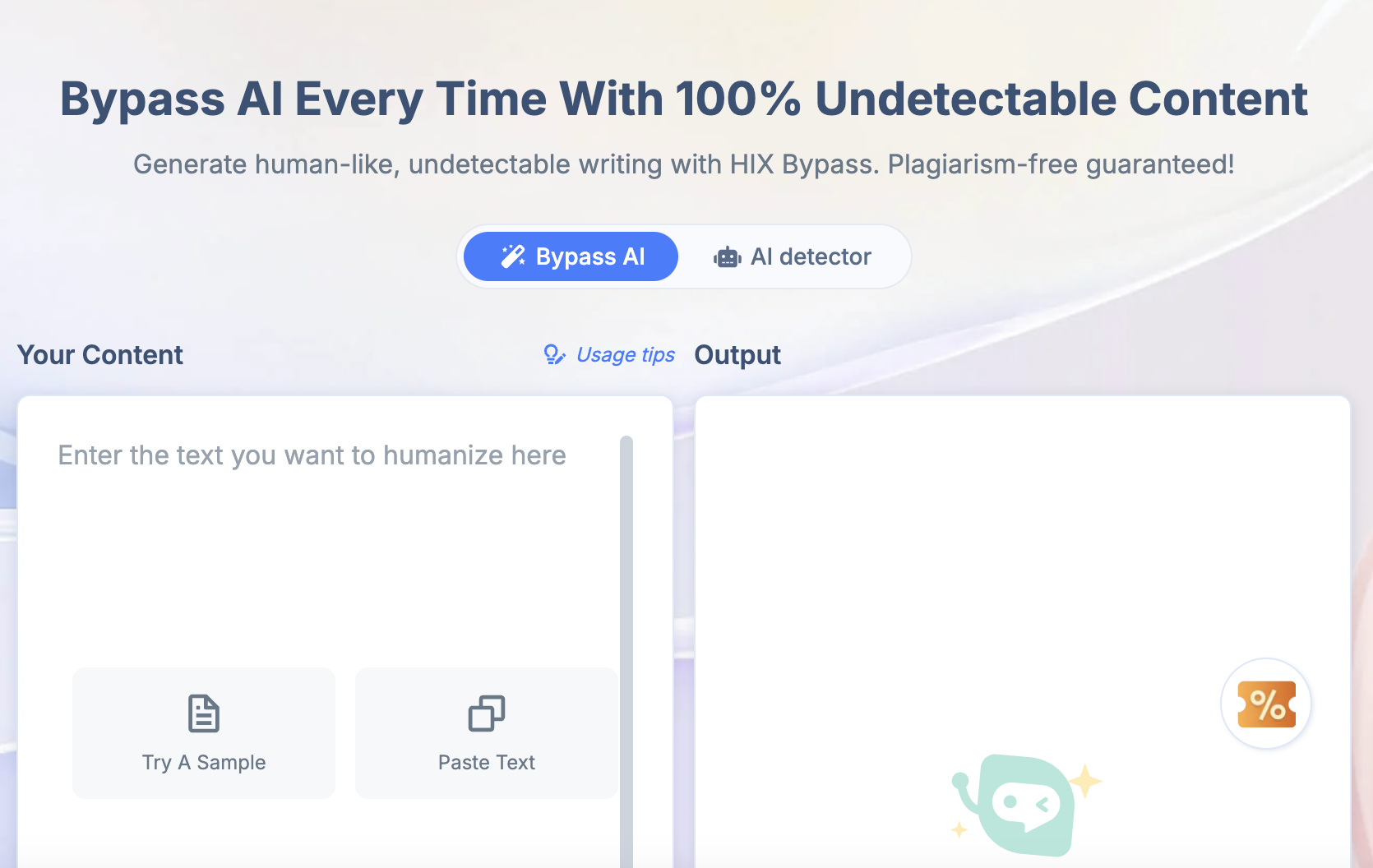

Abstract: AI humanizers are a new class of online software tools meant to paraphrase and rewrite AI-generated text so that it evades AI detection software. We study 19 AI humanizer and paraphrasing tools and qualitatively assess their effects and their faithfulness in preserving the meaning of the original text. We show that many existing AI detectors fail to detect humanized text. Finally, we demonstrate a robust model that can detect humanized AI text while maintaining a low false positive rate, using a data-centric augmentation approach. We then attack our own detector by fine-tuning a model optimized against its predictions, and show that the detector's cross-humanizer generalization is sufficient for it to remain robust to this attack.


Explain it Like I'm 14

What is this paper about?

This paper looks at “AI humanizers” — online tools that rewrite AI‑generated text so it looks more like a person wrote it. The authors test many of these tools, show that a lot of current AI detectors can be fooled by them, and present their own detector, called DAMAGE, that is much better at catching humanized AI text without wrongly accusing real human writing.

What questions did the researchers ask?

To guide your reading, here are the main questions they wanted to answer:

- What exactly do AI humanizers do to text, and how good are they at keeping the original meaning?

- Do popular AI detectors miss AI text after it’s been “humanized”?

- Can we build a detector that still works even when the AI text has been rewritten by these tools?

- If someone trains a custom humanizer specifically to fool a detector, can the detector still catch it?

How did they study it?

The authors used a mix of hands-on testing and machine learning. Here’s the approach in everyday terms:

1) Auditing humanizer tools

They picked 19 humanizer/paraphrasing tools (some simple synonym-swappers, some full AI models) and ran lots of samples through them. They looked for:

- How the words and sentences were changed

- Whether meaning stayed the same

- Whether the writing sounded natural or became weird/nonsensical

They grouped tools into three quality levels:

- L1 (best): tends to keep tone and meaning; reads fairly well

- L2 (average): keeps the message but makes the writing worse

- L3 (worst): often adds errors or nonsense
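The paper quantifies the gap between L1, L2 and L3 humanizers with the Fluency Win Rate from Nicks et al. (see the glossary). A minimal sketch of the arithmetic, assuming pairwise fluency judgments (humanized output vs. reference text) have already been collected as "win", "tie" or "loss" labels; how the judge works and the half-point tie rule are assumptions here, not the paper's protocol:

```python
from typing import Sequence

# Points per pairwise fluency judgment. Counting a tie as half a win is an
# assumption of this sketch, not a rule taken from the paper.
POINTS = {"win": 1.0, "tie": 0.5, "loss": 0.0}


def fluency_win_rate(judgments: Sequence[str]) -> float:
    """Share of comparisons in which the humanized text was judged more fluent.

    Each judgment is "win", "tie", or "loss" for the humanized output against
    its reference text.
    """
    if not judgments:
        raise ValueError("need at least one judgment")
    return sum(POINTS[j] for j in judgments) / len(judgments)


# Hypothetical judgments for one humanizer over five text pairs.
print(fluency_win_rate(["win", "loss", "loss", "tie", "loss"]))  # 0.3
```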

They also found many humanizers are just AI models with special instructions like “write in a conversational tone.” Some could even be “jailbroken” to reveal their hidden prompts.

2) Building and training a stronger detector (DAMAGE)

Think of an AI detector as a spam filter, but for AI writing. The key idea was to train the detector to “ignore the disguise.” In other words, teach it to recognize AI writing even after it’s been rewritten.

To do this, they:

- Gathered lots of real human writing (especially school essays) and created “twin” AI essays on the same topics and lengths. These twins are called synthetic mirrors — like asking an AI to write an essay similar to a specific human one.

- “Humanized” both sides: they ran some human essays and some AI essays through high‑quality humanizers (L1). This trains the detector to be invariant to the humanizer’s changes — it learns that the rewrite is just a disguise, not a new author.

- Split long documents into smaller chunks (about 300 words) so the detector could focus on short passages.

- Used active learning: they found cases where the detector was wrong, added those cases and their AI twins back into training, and retrained the detector so it learned from its mistakes.
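The active-learning step is what the glossary calls hard negative mining with synthetic mirrors. A minimal sketch of one round, assuming a detector that returns the probability a text is AI-written and a hypothetical `make_mirror` helper that asks an LLM for a same-topic, same-length twin; the threshold, budget, labels and toy stand-ins are illustrative, not values from the paper:

```python
from typing import Callable, List, Tuple

Example = Tuple[str, int]  # (text, label) with 0 = human, 1 = AI


def mine_hard_negatives(
    human_pool: List[str],
    score: Callable[[str], float],
    make_mirror: Callable[[str], str],
    threshold: float = 0.5,
    budget: int = 1000,
) -> List[Example]:
    """One round of hard negative mining with synthetic mirrors.

    score:       detector's probability that a text is AI-written.
    make_mirror: hypothetical helper returning an AI "twin" of a human text.
    """
    # Human texts the detector wrongly flags as AI (false positives).
    scored = [(score(t), t) for t in human_pool]
    false_positives = [(s, t) for s, t in scored if s >= threshold]
    # Most confident mistakes first, capped at the budget.
    false_positives.sort(key=lambda pair: pair[0], reverse=True)
    new_examples: List[Example] = []
    for _, text in false_positives[:budget]:
        new_examples.append((text, 0))               # the human text, labeled human
        new_examples.append((make_mirror(text), 1))  # its AI twin, labeled AI
    return new_examples


def toy_score(text: str) -> float:
    # Stand-in detector that is fooled by a formal connective.
    return 0.9 if "furthermore" in text else 0.1


def toy_mirror(text: str) -> str:
    # Stand-in for an LLM call that writes the synthetic mirror.
    return "AI twin of: " + text


pool = ["furthermore, the results show", "my dog ate my homework"]
print(mine_hard_negatives(pool, toy_score, toy_mirror))
# [('furthermore, the results show', 0), ('AI twin of: furthermore, the results show', 1)]
```

The returned pairs would then be appended to the training set before retraining; pairing each false positive with its mirror keeps the human and AI sides matched on topic and length.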

Note: They carefully used only a small, high‑quality set of humanized samples so the detector stayed accurate and didn’t start flagging honest human writing too often.
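The chunking step and the small-but-upweighted humanized set can be sketched together. The roughly 300-word chunks and the oversampling factor of 18 come from the glossary quotes; whitespace splitting, list repetition as the oversampling method, keeping a short trailing chunk, and the function names are assumptions:

```python
from typing import List, Tuple

Example = Tuple[str, int]  # (text, label) with 0 = human, 1 = AI


def chunk_words(text: str, chunk_size: int = 300) -> List[str]:
    """Split a document into consecutive chunks of about `chunk_size` words.

    Whitespace splitting and keeping a short trailing chunk are simplifying
    assumptions of this sketch.
    """
    words = text.split()
    return [" ".join(words[i:i + chunk_size]) for i in range(0, len(words), chunk_size)]


def build_training_set(
    base: List[Example], humanized: List[Example], oversample: int = 18
) -> List[Example]:
    """Chunk all documents, then repeat the small humanized set `oversample` times."""
    def chunked(docs: List[Example]) -> List[Example]:
        return [(chunk, label) for doc, label in docs for chunk in chunk_words(doc)]

    return chunked(base) + chunked(humanized) * oversample


essay = " ".join(["word"] * 650)
print([len(c.split()) for c in chunk_words(essay)])  # [300, 300, 50]
print(len(build_training_set([(essay, 0)], [("a humanized AI paragraph", 1)])))  # 21
```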

3) Testing against several challenges

They checked the detector on:

- Regular AI essays

- AI essays after humanization

- Public “attack” benchmarks that use paraphrasing and synonym swaps

- “Watermarks” (hidden statistical signals some AI text carries): they showed that paraphrasing can erase these signals

- A special test where they trained a custom humanizer directly against their detector to see if it could still be fooled
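Results in the paper are reported as TPR at a fixed 5% FPR, with 1000 bootstrap iterations for confidence intervals (see the glossary). A minimal NumPy sketch of that metric, assuming the detector outputs one scalar AI score per document; resampling both classes (so the threshold is re-estimated each iteration) and the 95% percentile interval are choices made here, not details confirmed by the paper:

```python
import numpy as np


def tpr_at_fpr(human_scores, ai_scores, fpr: float = 0.05) -> float:
    """Share of AI texts flagged at the threshold that flags `fpr` of human texts."""
    human = np.asarray(human_scores, dtype=float)
    ai = np.asarray(ai_scores, dtype=float)
    # Scores above the (1 - fpr) quantile of human scores count as "AI".
    threshold = np.quantile(human, 1.0 - fpr)
    return float(np.mean(ai > threshold))


def bootstrap_tpr(human_scores, ai_scores, fpr=0.05, iters=1000, seed=0):
    """Point estimate plus a 95% percentile interval from bootstrap resampling."""
    rng = np.random.default_rng(seed)
    human = np.asarray(human_scores, dtype=float)
    ai = np.asarray(ai_scores, dtype=float)
    samples = [
        tpr_at_fpr(
            rng.choice(human, size=human.size, replace=True),  # re-estimates threshold
            rng.choice(ai, size=ai.size, replace=True),
            fpr,
        )
        for _ in range(iters)
    ]
    low, high = np.percentile(samples, [2.5, 97.5])
    return tpr_at_fpr(human, ai, fpr), (float(low), float(high))


# Hypothetical detector scores (higher = more likely AI).
rng = np.random.default_rng(1)
human = rng.beta(2, 8, size=500)
ai = rng.beta(8, 2, size=500)
print(bootstrap_tpr(human, ai))
```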

What did they find?

Here are the key takeaways and why they matter:

- Many humanizers hurt quality. Most tools make the writing worse. The best ones (L1) usually keep meaning and tone; the worst (L3) sometimes add nonsense like fake citations or random symbols.

- Watermarking isn’t enough. A modern watermark system (Google’s SynthID) detected most watermarked AI text at first. But after paraphrasing, detection dropped to almost zero. This means “erase-the-watermark” attacks are easy with humanizers.

- Many detectors fail after humanization. Popular detectors that work on raw AI text miss a lot once the text is humanized. In one comparison, a leading method’s detection rate of AI text fell sharply after humanization.

- DAMAGE stays accurate even after humanization. The new detector kept very high accuracy on humanized AI text while keeping a low rate of false accusations against real human writing. It also did well on tough public tests involving paraphrase and synonym attacks.

- Still strong against targeted attacks. Even when they trained a custom humanizer specifically to fool DAMAGE, the detector still caught the large majority of the humanized AI text. This suggests the “AI fingerprints” are hard to hide completely.

Why this is impressive: The detector learned to treat humanization as a “disguise” and look past it, rather than treating humanized text as a totally different kind of writing.
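The watermark result is easier to see with the green-token scheme described in the glossary (Kirchenbauer et al.). The sketch below is a detection test in that style, not SynthID's, which the paper only describes as working in a similar fashion. The hash-based green list, `gamma=0.25`, integer token IDs and the toy "model" are assumptions for illustration. Watermarked text scores a large z; paraphrasing replaces tokens, so fewer (previous, current) pairs land in the green list and the z-score falls back toward chance:

```python
import hashlib
import math
from typing import List


def is_green(prev_token: int, token: int, gamma: float = 0.25) -> bool:
    """Pseudorandomly assign `token` to the green list seeded by `prev_token`.

    Hashing the (previous, current) pair puts each token in the green list with
    probability about `gamma`, approximating a seeded vocabulary partition.
    """
    digest = hashlib.sha256(f"{prev_token}:{token}".encode()).digest()
    return int.from_bytes(digest[:8], "big") / 2**64 < gamma


def watermark_z_score(tokens: List[int], gamma: float = 0.25) -> float:
    """One-proportion z-test for more green tokens than chance would give."""
    n = len(tokens) - 1  # the first token has no predecessor to seed its list
    if n <= 0:
        raise ValueError("need at least two tokens")
    green = sum(is_green(p, t, gamma) for p, t in zip(tokens, tokens[1:]))
    return (green - gamma * n) / math.sqrt(n * gamma * (1 - gamma))


def toy_watermarked(length: int, gamma: float = 0.25) -> List[int]:
    """Hypothetical 'model' that always emits the first green token ID."""
    tokens = [0]
    while len(tokens) < length:
        prev = tokens[-1]
        tokens.append(next(t for t in range(1, 10_000) if is_green(prev, t, gamma)))
    return tokens


print(round(watermark_z_score(toy_watermarked(200)), 1))  # every token green: 24.4
print(round(watermark_z_score(list(range(200))), 1))      # unwatermarked, for comparison
```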

Why does this matter?

- For schools: Many students can access humanizers that help them cheat. A detector that can still catch AI writing after humanization helps teachers and schools keep grading fair.

- For the web and search: Some marketers use AI to mass‑produce content and then humanize it to dodge AI checks. Stronger detection helps keep search results trustworthy.

- For policy and safety: Since paraphrasing can remove watermarks, relying on watermarks alone won’t prevent misuse. Robust detection methods and better guidelines are needed.

- For research: This work shows a practical way to build detectors that generalize across many humanizers, even new or adversarial ones, by training on “disguised” examples and teaching the model to ignore the disguise.

In short: Humanizers can fool many detectors and can even erase watermarks, but with the right training strategy, detectors like DAMAGE can still spot AI writing reliably and help protect academic integrity and information quality online.

Glossary

- AdamW optimizer: A variant of the Adam optimization algorithm that decouples weight decay from the gradient-based update, which regularizes more effectively than adding an L2 penalty to the loss. "We train the model to convergence using 8 A100 GPUs with a batch size of 24 using a weighted cross entropy loss and the AdamW optimizer for 1 epoch."

- Adversarial humanizer: A paraphrasing model intentionally optimized to evade a specific detector’s predictions. "After training the detector-specific humanizer, we use GPT-4o to create synthetic mirrors of 2000 examples from the test set and pass them through the adversarial humanizer."

- Autoregressive LLM: A model that generates tokens sequentially, each conditioned on previously produced tokens. "Following the usual convention for sequence classification modeling using an autoregressive LLM, the hidden state from the final token in the sequence is used as the input to both classification heads."

- Bootstrap sampling: A resampling technique for estimating statistics by sampling with replacement, often used to compute confidence intervals. "TPR @ FPR=5% for Academic Text with 1000 iterations of bootstrap sampling."

- Burstiness: A statistical property of text reflecting how much its predictability (per-sentence perplexity) varies across a document; human writing is often claimed to vary more, which makes it a detection signal. "claiming that more recent models like GPT-4 are less detectable because perplexity and burstiness are less useful evidence markers."

- Chunking: Splitting a document into smaller segments to facilitate processing or training. "After humanizing both human and AI essays, we apply chunking logic to divide each document into roughly 300-word chunks before adding both the human and the AI humanized documents back into the training set."

- Classification head: A trainable layer on top of a pretrained model used to make final predictions for a classification task. "We use the Mistral NeMo architecture \cite{Mistral_NeMo_2024} which has approximately 12 billion parameters, with an untrained linear classification head."

- Context window: The maximum number of tokens a model can attend to for a single input sequence. "We truncate the context window to 512 tokens to constrain the model to using only short-range features."

- Cross-humanizer generalization: The ability of a detector to remain effective across multiple, unseen humanizer tools. "show that our detector's cross-humanizer generalization is sufficient to remain robust to this attack."

- Cross-perplexity: A detection signal measuring how surprising one LLM's next-token predictions are to a second LLM (the cross-entropy between their predicted distributions, averaged over the text); Binoculars divides a text's perplexity by this quantity. "Binoculars \cite{hans2024spottingllmsbinocularszeroshot} is an even more effective recent approach which uses the cross-perplexity between two different LLMs as a signal that text is LLM-generated."

- Deep learning based methods: Detector approaches that use neural networks trained on labeled human and AI text to classify authorship. "Open-source methods typically fall into two categories: perplexity-based detection methods and deep learning based methods."

- DetectGPT: A perplexity-based detection method that compares a text's log-probability with those of slightly perturbed rewrites; LLM-generated text tends to sit near a local maximum of the model's log-probability, i.e. in a region of negative curvature. "DetectGPT \cite{mitchell2023detectgpt} and FastDetectGPT \cite{bao2024fastdetectgptefficientzeroshotdetection} are earlier examples of perplexity-based methods which look at the local curvature in probability space around a given example."

- Direct Preference Optimization (DPO): A fine-tuning technique that trains a model on pairs of preferred and dispreferred outputs, raising the relative likelihood of the preferred one without training a separate reward model. "and then using DPO to optimize the LLM to prefer undetected outputs \cite{nicks2024language}."

- DIPPER: A T5-based paraphraser designed to evade AI detectors by rewriting input text while preserving meaning. "A study from Google Research \cite{krishna2023paraphrasingevadesdetectorsaigenerated} released DIPPER: a paraphrasing T5-based model that is able to bypass some of the above-mentioned detectors by rewriting the input text."

- ELI5 dataset: A long-form question-answering dataset built from the Reddit forum r/explainlikeimfive, whose questions served as prompts for generating watermarked text in the experiments. "using the ELI5 dataset \cite{fan2019eli5longformquestion} as prompts."

- False positive rate (FPR): The proportion of human-written text incorrectly classified as AI-generated by a detector. "We used the unwatermarked text to set a FPR threshold and evaluated SynthID watermark detection TPR at a fixed FPR."

- Fluency Win Rate: A metric that measures how often a model’s output is judged more fluent than a reference sample. "To quantify the difference between L1, L2, and L3 humanizers, we use the Fluency Win Rate metric introduced in \cite{nicks2024language}."

- Gemma-2B-IT: A specific instruction-tuned LLM used to generate watermarked texts for evaluation. "We used Gemma-2B-IT \cite{gemmateam2024gemmaopenmodelsbased} to generate 200 tokens for each example with a temperature of 1.0, using the ELI5 dataset \cite{fan2019eli5longformquestion} as prompts."

- GPT-4o fine-tuning API: An interface for fine-tuning GPT-4o on task-specific datasets, used to train the detector-specific humanizer. "We then use the GPT-4o fine-tuning API to train a new model on only these pairs."

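- Green tokens: A pseudorandomly chosen subset of the vocabulary, re-drawn at each step from a hash of the preceding token, whose logits are boosted during sampling; watermarked text therefore contains more green tokens than chance would predict, which a detector can test for. "One watermarking scheme \cite{kirchenbauer2023watermark} introduces the idea of \"green tokens\", which are sampled with higher probability than other tokens in a traceable way."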

- Hard negative mining: An active learning strategy that focuses training on examples the model gets wrong to improve performance. "After training, following the procedure in \cite{emi2024technicalreportpangramaigenerated}, we run hard negative mining with synthetic mirrors."

- Hidden state: The internal representation produced by a model for a token, often used for downstream classification. "the hidden state from the final token in the sequence is used as the input to both classification heads."

- Instruction-tuned and post-trained: Describes models whose pretrained base has been further trained to follow instructions and then refined with additional post-training, such as preference tuning. "It is notable that we only use modern LLMs that are instruction-tuned and post-trained."

- Jailbreaks: Techniques to bypass guardrails or constraints in an LLM, often to reveal prompts or elicit restricted behavior. "we found that some of them are susceptible to popular jailbreaks."

- Learned invariance: A property where a model is trained to be insensitive to specific input transformations, maintaining consistent predictions. "We describe the necessity of treating humanizer robustness as a learned invariance rather than a separate domain."

- LoRA adapters: Low-Rank Adaptation modules that enable parameter-efficient fine-tuning of large models. "As is common practice in LLM fine-tuning, we use trainable LoRA \cite{hu2022lora} adapters while keeping the base model frozen."

- LLM (large language model): A neural language model, typically with billions of parameters, trained on large text corpora and capable of generating coherent text. "The ability of LLMs such as ChatGPT \cite{openai2023gpt4} to generate realistic and fluent text has spurred the need for AI text detection software."

- Mirror prompt: A prompt constructed from an original example to generate a synthetic text that mirrors its topic and length. "We define the term \"mirror prompt\" to be a prompt based on the original example that is used to generated a \"synthetic mirror\" example."

- Mistral NeMo architecture: A specific 12B-parameter architecture used as the backbone for the detector. "We use the Mistral NeMo architecture \cite{Mistral_NeMo_2024} which has approximately 12 billion parameters, with an untrained linear classification head."

- Oversampling: Increasing the frequency of certain examples in training to compensate for limited data volume. "To compensate for the small data volume, we oversample the humanizer data by a factor of 18."

- Perplexity: A measure of how well an LLM predicts a sample; lower perplexity means the model finds the text more predictable. "Perplexity-based methods attempt to leverage the fact that the tokens in LLM-based outputs in general will be predicted as consistently more likely by the LLM itself."

- RADAR: A framework that adversarially trains detectors and paraphrasers to improve robustness. "RADAR \cite{hu2023radar} adversarially trains a LLM detector and a paraphraser against each other to create a more robust detector."

- RAID: A benchmarking leaderboard that evaluates AI text detectors across domains, models, and attacks. "RAID \cite{dugan2024raidsharedbenchmarkrobust} is a live leaderboard measuring the performance of AI-generated text detection methods against each other on multiple domains, models, and adversarial attacks."

- RoBERTa: A transformer encoder language model (a robustly optimized variant of BERT) commonly fine-tuned for classification tasks. "They used a RoBERTa based model to classify human text and GPT-2 written text."

- SeqXGPT: A detection method targeting sentence-level identification of AI-generated text. "SeqXGPT \cite{wang2023seqxgpt} attempts to solve this by using an architecture which is able to detect AI on the sentence level rather than the document level."

- SynthID: A watermarking system that embeds detectable signals in generated text. "Google's recently released SynthID \cite{synthid2024} works in a similar fashion."

- Tekken tokenizer: The tokenizer that ships with Mistral NeMo, noted for its strong multilingual performance; the detector uses it unmodified. "We use the Tekken tokenizer out of the box, which is noted for its strong multilingual performance."

- True positive rate (TPR): The proportion of AI-generated text correctly identified as AI by a detector. "Results are presented as true positive rate at a fixed false positive rate of 5\%."

- Watermarking: Embedding signals in generated text to enable later detection of AI authorship. "Watermarking AI-generated text is another relevant subfield of research."

- Weighted cross entropy loss: A loss function that assigns different weights to classes to handle imbalance during training. "We train the model to convergence using 8 A100 GPUs with a batch size of 24 using a weighted cross entropy loss and the AdamW optimizer for 1 epoch."
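Several glossary entries (perplexity, burstiness, cross-perplexity) are simple statistics over per-token probabilities. A minimal NumPy sketch, assuming per-token log-probabilities and next-token distributions have already been obtained from some LLM (model loading is omitted). The burstiness definition is one common operationalization, and the final ratio follows the general form of Binoculars (perplexity divided by cross-perplexity) without reproducing its exact model roles or implementation:

```python
import numpy as np


def log_perplexity(token_logprobs) -> float:
    """Mean negative log-likelihood of the observed tokens (log of perplexity)."""
    return float(-np.mean(token_logprobs))


def burstiness(sentence_logprobs) -> float:
    """Spread of per-sentence log-perplexity; uniform predictability gives 0."""
    return float(np.std([log_perplexity(s) for s in sentence_logprobs]))


def log_cross_perplexity(probs_a, probs_b) -> float:
    """Average cross-entropy H(A, B) between two models' next-token distributions.

    probs_a, probs_b: arrays of shape (num_positions, vocab_size) whose rows sum
    to 1; each row is a model's prediction for the same position in the text.
    """
    p_a = np.asarray(probs_a, dtype=float)
    p_b = np.clip(np.asarray(probs_b, dtype=float), 1e-12, 1.0)  # avoid log(0)
    return float(np.mean(-np.sum(p_a * np.log(p_b), axis=-1)))


def binoculars_style_score(token_logprobs, probs_a, probs_b) -> float:
    """Perplexity normalized by cross-perplexity; lower values suggest LLM text."""
    return log_perplexity(token_logprobs) / log_cross_perplexity(probs_a, probs_b)


# Toy inputs: 4 positions, vocabulary of 2, both models uniform.
uniform = np.full((4, 2), 0.5)
print(round(binoculars_style_score(np.log([0.5] * 4), uniform, uniform), 6))  # 1.0
```

The normalization is the design point: a raw perplexity threshold confuses "predictable because an LLM wrote it" with "predictable because the prompt made it so", and dividing by how predictable the models expect text at those positions to be is meant to factor the second effect out.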
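The glossary's architecture notes (an untrained linear classification head reading the final token's hidden state, trained with a weighted cross-entropy loss) can be illustrated without the 12B backbone. A NumPy sketch, assuming right-padded batches and a single two-class head; the paper feeds the same hidden state to two heads, and the random hidden states, head weights and class weights below are placeholders rather than the paper's values:

```python
import numpy as np


def last_token_logits(hidden_states, attention_mask, weight, bias):
    """Apply a linear head to the hidden state of each sequence's final real token.

    hidden_states:  (batch, seq_len, d) from the backbone (omitted here)
    attention_mask: (batch, seq_len), 1 for real tokens, right-padded with 0
    weight, bias:   (d, num_classes) and (num_classes,)
    """
    last_idx = attention_mask.sum(axis=1) - 1  # position of the final real token
    pooled = hidden_states[np.arange(hidden_states.shape[0]), last_idx]  # (batch, d)
    return pooled @ weight + bias


def weighted_cross_entropy(logits, labels, class_weights) -> float:
    """Cross entropy where each example counts with the weight of its true class.

    Normalizes by the total weight, the same convention as PyTorch's
    CrossEntropyLoss(weight=...) with mean reduction.
    """
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    nll = -log_probs[np.arange(len(labels)), labels]
    w = class_weights[labels]
    return float((w * nll).sum() / w.sum())


# Toy batch: 2 sequences of length 4, hidden size 3, classes 0 = human, 1 = AI.
rng = np.random.default_rng(0)
hidden = rng.normal(size=(2, 4, 3))
mask = np.array([[1, 1, 0, 0], [1, 1, 1, 1]])
logits = last_token_logits(hidden, mask, rng.normal(size=(3, 2)), np.zeros(2))
print(weighted_cross_entropy(logits, np.array([0, 1]), np.array([2.0, 1.0])))
```

Reading the last real token is the usual choice for decoder-only models because, under causal attention, it is the only position that has seen the whole input.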
