- The paper introduces UniKD, combining Adaptive Features Fusion and Feature Distribution Prediction to unify feature and logits-based distillation.

- It demonstrates superior performance improvements on datasets like CIFAR-100 and ImageNet by effectively transferring knowledge to student models.

- Experimental and ablation studies confirm that harmonized knowledge transfer yields robust convergence and efficient neural network training.

Harmonizing Knowledge Transfer in Neural Networks with Unified Distillation

Introduction

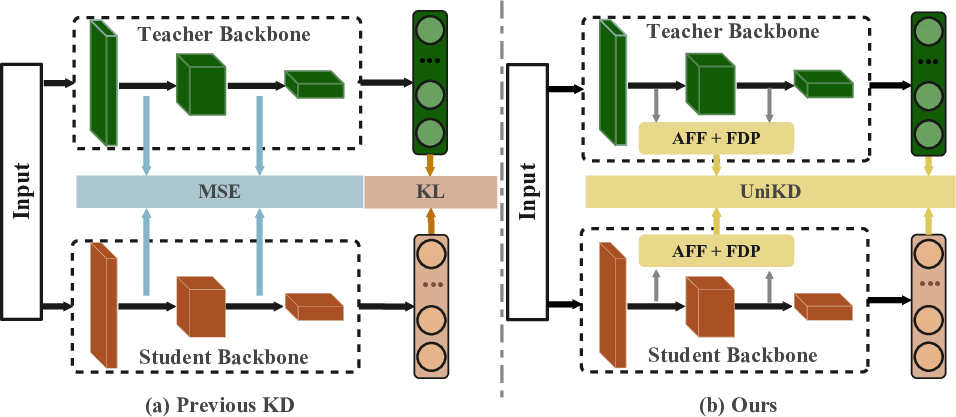

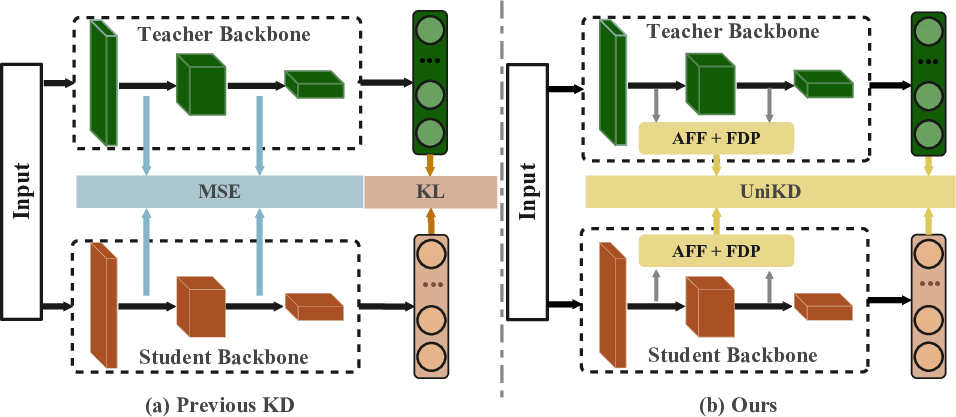

The paper introduces a novel approach to optimize knowledge transfer in neural networks using a unified distillation framework, termed UniKD. Knowledge distillation (KD) enables transferring learned information from a large, high-capacity teacher network to a smaller, more efficient student network. Conventional KD techniques primarily fall into feature-based and logits-based categories. Feature-based approaches emphasize alignment of features from intermediate network layers, while logits-based strategies focus on probabilistic matching of network output. This paper proposes UniKD, which aggregates semantic information across various network stages, unifying disparate knowledge forms under a singular constraint mechanism.

Figure 1: Comparison of methodologies in feature-based and logits-based knowledge distillation against the proposed UniKD.

Methodology

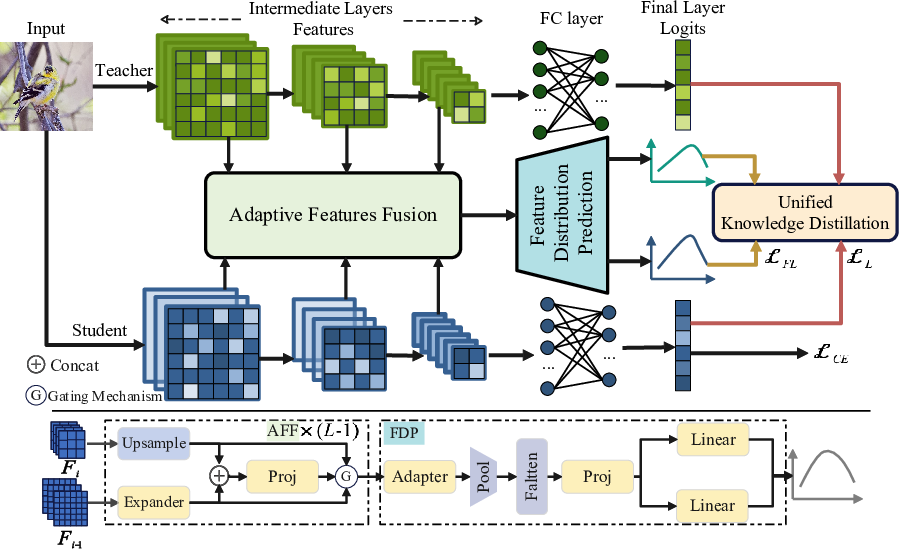

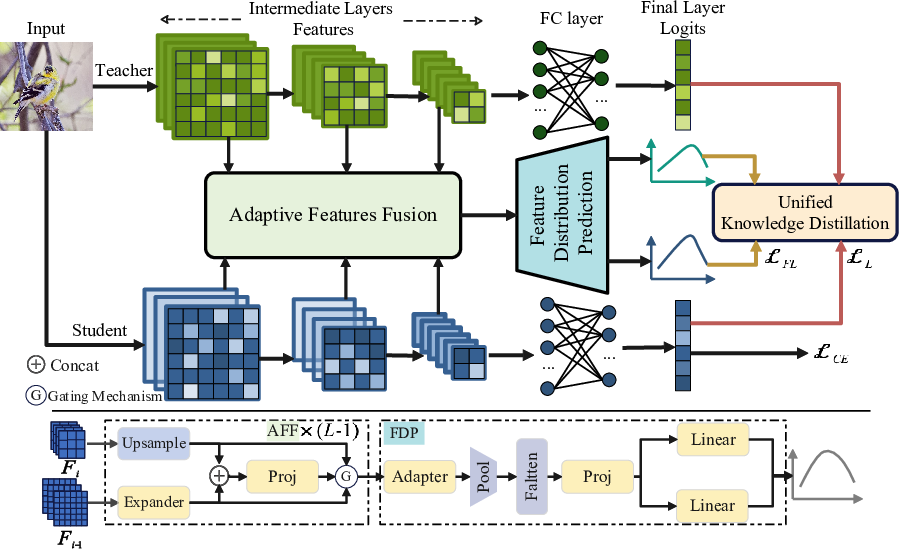

UniKD operates through two fundamental modules: Adaptive Features Fusion (AFF) and Feature Distribution Prediction (FDP). Initially, AFF integrates features from several intermediate layers, synthesizing multi-scale information. This avoids operational overhead associated with per-layer feature transformation, efficiently encoding diverse semantic data.

Subsequently, FDP converts this comprehensive feature representation into a distribution format akin to logits. By aligning feature distributions to multivariate Gaussian forms, FDP ensures scalable and effective KD through distribution-level alignment. FDP employs KL divergence for distillation loss calculation, adapting intermediate layer features using affine mappings to project onto the network's task-specific output space.

Figure 2: The overall framework of the proposed UniKD approach.

Both modules are pivotal in UniKD's ability to harmonize knowledge from intermediate layers and final output logits, circumventing incoherence typical in conventional hybrid approaches.

Experimental Results

The efficacy of the UniKD framework was validated across multiple datasets and network architectures, demonstrating considerable improvements over existing KD mechanisms. Performance was evaluated using classification tasks on CIFAR-100, ImageNet, and object detection on MS-COCO.

Convergence and Ablation Analysis

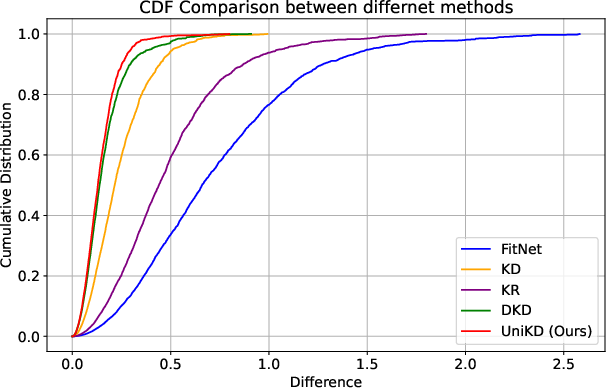

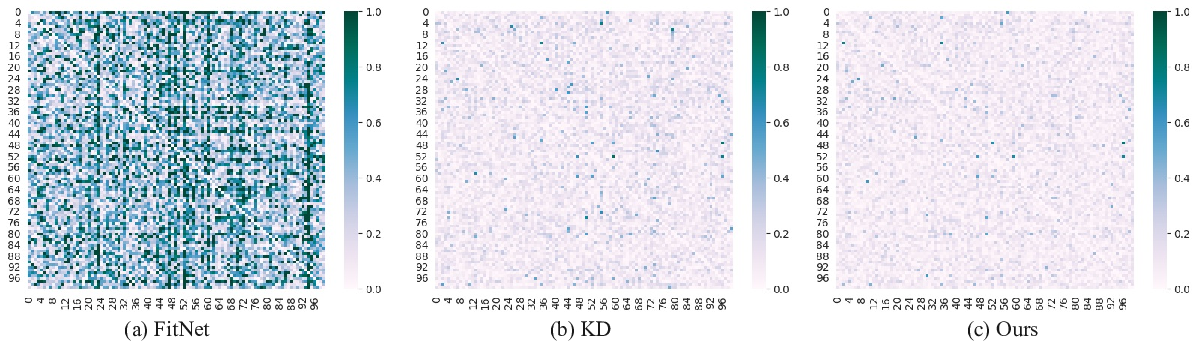

UniKD demonstrated consistent and stable convergence across various distillation scenarios. Comparative analyses with hybrid distillation methods showed UniKD's capacity to achieve a balanced optimization trajectory for network training, aligning outputs of student models closer to teachers.

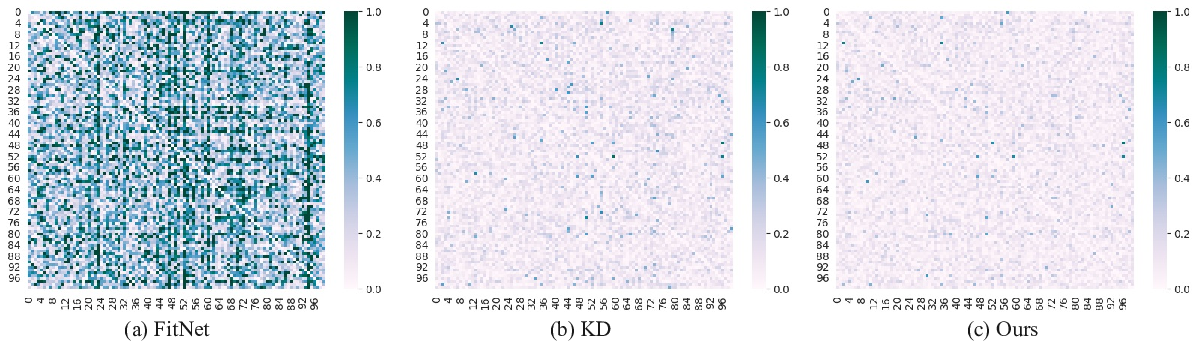

Figure 4: Difference of correlation matrices of student and teacher logits.

Ablation studies confirmed the integral roles of AFF and FDP modules in enhancing the distillation capability, underscoring how feature aggregation and distribution prediction collectively mitigate layer-wise inconsistencies.

Conclusion

The UniKD framework presents a significant advancement in knowledge transfer strategies for neural networks. By unifying disparate knowledge types into a singular framework, UniKD promotes coherent and comprehensive distillation across network layers. This method not only enhances the performance and scalability of student models but also provides a robust template for future explorations in neural network distillation methodologies. Integrating such techniques could facilitate further developments in efficient network compression and optimization for diverse AI applications.