- The paper introduces a Stackelberg game formulation to model bandwidth allocation challenges in hierarchical federated learning systems.

- It proposes both centralized and distributed heuristic algorithms that achieve near-optimal fairness and efficiency across diverse non-iid settings.

- Experimental evaluations on non-iid MNIST benchmarks validate the approach's adaptability to varying FL server funds and per-client bandwidth requirements.

Fair Bandwidth Allocation in Concurrent Hierarchical Federated Learning Systems

Introduction

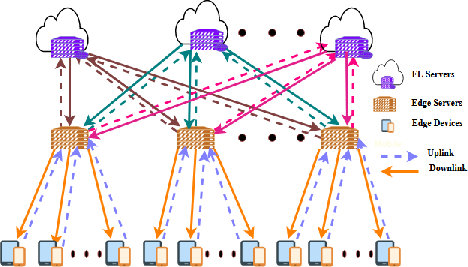

The paper "Fair Allocation of Bandwidth At Edge Servers For Concurrent Hierarchical Federated Learning" (2409.04921) analyzes the bandwidth allocation problem in hierarchical federated learning (FL) systems. It addresses a core bottleneck: distributing limited uplink bandwidth at edge servers efficiently and fairly among multiple concurrent FL processes. The authors develop a three-tier system architecture, formalize the problem as a Stackelberg game, and propose both centralized and distributed heuristic algorithms to approximate Nash Equilibria. The approach is evaluated on non-iid MNIST benchmarks under heterogeneous client distributions, FL server funds, and per-client bandwidth requirements.

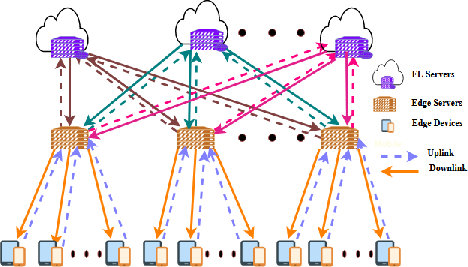

Figure 1: Overview of the proposed three-tier FL architecture with clients, edge servers, and cloud FL servers.

System Model and Problem Definition

The system is composed of three hierarchical tiers:

- FL Servers (Cloud): Each server orchestrates one FL process and seeks to engage as many clients as possible, subject to funding constraints.

- Edge Servers: These serve as intermediaries, aggregating model updates and managing uplink bandwidth. Their total uplink capacity is a critical shared resource.

- FL Clients (Edge Devices): Resource-constrained devices providing local data and computation.

Bandwidth scarcity at edge servers, exacerbated by diverse and overlapping client sets across multiple concurrent FL tasks, creates contention. The objective is twofold:

- Bandwidth Utility: Maximize utilization so no bandwidth is idle.

- Fairness: Allocate bandwidth such that FL servers with equal funds receive equal resources, and those with more funds receive proportionally more, avoiding biased allocations arising from client distribution heterogeneity.
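The two objectives can be made concrete with a small check. The sketch below is an illustration only, not the paper's metric: the function names `bandwidth_utility` and `fund_share_gap` and the sample numbers are hypothetical. It compares each FL server's share of allocated bandwidth with its share of total funds, and reports the fraction of edge capacity in use.

```python
def bandwidth_utility(allocated_per_edge, capacity_per_edge):
    """Fraction of total edge uplink capacity that is actually allocated."""
    return sum(allocated_per_edge) / sum(capacity_per_edge)


def fund_share_gap(funds, bandwidth):
    """Per FL server: (share of allocated bandwidth) - (share of total funds).

    All zeros means the allocation is exactly proportional to funds;
    a positive value means the server got more than its funds warrant.
    """
    total_funds = sum(funds)
    total_bw = sum(bandwidth)
    if total_funds <= 0 or total_bw <= 0:
        raise ValueError("funds and bandwidth must have positive totals")
    return [b / total_bw - f / total_funds for f, b in zip(funds, bandwidth)]


# Hypothetical numbers: three FL servers, the third with twice the funds.
gaps = fund_share_gap(funds=[1, 1, 2], bandwidth=[10, 10, 20])
utility = bandwidth_utility(allocated_per_edge=[18, 22], capacity_per_edge=[20, 20])
```

In this example every gap is zero, so the allocation is proportional to funds, and all of the edge capacity is in use.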

This challenge is especially acute in scenarios where FL servers compete for resources of the same edge servers due to overlapping geographic coverage or similar client pools.

The bandwidth allocation is modeled as a Stackelberg game:

- Leaders: Edge servers, setting bandwidth prices to maximize their profit (price × allocated bandwidth).

- Followers: FL servers, distributing their budgets across edge servers to maximize the number of supported clients.
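The leader and follower objectives can be sketched as two small functions. This is a simplified reading of the description above, not the paper's exact utility definitions: it counts clients directly and ignores any diminishing-returns term. The names `edge_profit` and `clients_supported` and all parameters are assumptions made for illustration.

```python
import math


def edge_profit(price, allocated_bandwidth):
    """Leader objective: price times the bandwidth the edge server sells."""
    return price * allocated_bandwidth


def clients_supported(spend_per_edge, prices, per_client_bw, clients_per_edge):
    """Follower objective: clients an FL server can support with its spending.

    spend_per_edge[e]   money this FL server spends at edge server e
    prices[e]           bandwidth price at edge server e
    per_client_bw       bandwidth each client of this FL server needs
    clients_per_edge[e] how many of this server's clients sit behind edge e
    """
    total = 0
    for spend, price, available in zip(spend_per_edge, prices, clients_per_edge):
        bandwidth = spend / price
        # Bandwidth buys whole clients only, capped by clients present.
        total += min(available, math.floor(bandwidth / per_client_bw))
    return total


# Hypothetical example: the first edge is pricier and has only 2 clients.
n = clients_supported([6, 4], prices=[2, 1], per_client_bw=1, clients_per_edge=[2, 10])
```

Here the FL server buys 3 units at the first edge but can use only 2 of them, which shows why a follower has an incentive to shift budget toward edges where it has unserved clients.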

Key features of the model include:

- Nash Equilibrium analysis establishes that, in equilibrium, all edge servers must converge to the same bandwidth price.

- Feasibility and optimality constraints are explicitly encoded: allocations must be integer multiples of per-client bandwidth, not exceed client or edge server capacity, and respect FL server funding.

- The utility functions for edge and FL servers are specifically defined to reflect realistic diminishing returns and stakeholder objectives.
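The feasibility constraints listed above can be expressed as a checker. The sketch below is a minimal illustration under assumed data layouts (`alloc[s][e]` is the bandwidth FL server `s` receives at edge server `e`); the function name `is_feasible` and its parameters are hypothetical and do not come from the paper.

```python
def is_feasible(alloc, prices, per_client_bw, clients, capacity, funds, tol=1e-9):
    """Check the constraints on a candidate bandwidth allocation.

    alloc[s][e]      bandwidth given to FL server s at edge server e
    prices[e]        bandwidth price at edge server e
    per_client_bw[s] bandwidth needed by each client of FL server s
    clients[s][e]    number of clients of FL server s behind edge server e
    capacity[e]      uplink capacity of edge server e
    funds[s]         budget of FL server s
    """
    n_servers, n_edges = len(funds), len(capacity)
    for s in range(n_servers):
        for e in range(n_edges):
            if alloc[s][e] < 0:
                return False
            units = alloc[s][e] / per_client_bw[s]
            # Allocation must be an integer multiple of per-client bandwidth.
            if abs(units - round(units)) > tol:
                return False
            # Cannot serve more clients than exist behind this edge server.
            if units > clients[s][e] + tol:
                return False
        # Total payment must stay within the FL server's funds.
        cost = sum(prices[e] * alloc[s][e] for e in range(n_edges))
        if cost > funds[s] + tol:
            return False
    for e in range(n_edges):
        # Edge server uplink capacity must not be exceeded.
        if sum(alloc[s][e] for s in range(n_servers)) > capacity[e] + tol:
            return False
    return True
```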

Algorithms

Two classes of heuristic schemes are detailed:

Centralized Algorithm

Assuming global state knowledge, the algorithm iteratively assigns bandwidth according to a probabilistic ranking and allocation mechanism, ultimately setting a uniform bandwidth price. This acts as an idealized performance reference.

Distributed Algorithm

Edge servers and FL servers iteratively adjust their bandwidth requests and offers using message passing and local observations:

- Each FL server initially requests bandwidth for all its clients proportional to its fund.

- Edge servers estimate current equilibrium prices based on aggregate demand/supply ratios.

- Requests and allocations are updated iteratively, with convergence detected when all edge servers' prices are sufficiently close (within a ratio Δ).

This fully decentralized approach is designed for scalability and robustness in practical hierarchical FL deployments.
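The iteration described above can be illustrated with a simplified price-adjustment loop. This is a sketch under stated assumptions, not the paper's algorithm: it ignores the integer and per-client constraints, assumes each FL server initially splits its fund across edge servers in proportion to its client counts, sets each edge price to offered money divided by capacity, and uses a damped multiplicative update (the `step` parameter) that is an assumption of this example. The name `distributed_pricing` is hypothetical.

```python
def distributed_pricing(funds, clients, capacity, delta=0.01, step=0.5, max_rounds=1000):
    """Iterate until all edge prices agree within a ratio of (1 + delta).

    funds[s]      budget of FL server s
    clients[s][e] clients of FL server s behind edge server e
    capacity[e]   uplink capacity of edge server e
    Returns (prices, spend, converged).
    """
    n_servers, n_edges = len(funds), len(capacity)
    # Assumed initial request: split each fund in proportion to client counts.
    spend = [
        [funds[s] * clients[s][e] / sum(clients[s]) for e in range(n_edges)]
        for s in range(n_servers)
    ]
    prices = []
    for _ in range(max_rounds):
        # Each edge server estimates its price as demand (money) over supply.
        prices = [
            sum(spend[s][e] for s in range(n_servers)) / capacity[e]
            for e in range(n_edges)
        ]
        if min(prices) <= 0:
            raise ValueError("every edge server needs at least one paying FL server")
        if max(prices) <= (1 + delta) * min(prices):
            return prices, spend, True
        # Each FL server shifts money toward cheaper edge servers,
        # keeping its total spending equal to its fund.
        for s in range(n_servers):
            weights = [spend[s][e] * prices[e] ** (-step) for e in range(n_edges)]
            scale = funds[s] / sum(weights)
            spend[s] = [w * scale for w in weights]
    return prices, spend, False


# Hypothetical scenario: two FL servers with equal funds, two edge servers.
prices, spend, converged = distributed_pricing(
    funds=[10, 10], clients=[[8, 2], [5, 5]], capacity=[10, 10]
)
```

Because the update is multiplicative, an FL server never spends at an edge server where it has no clients. If client placement makes equal prices unreachable, the loop stops after `max_rounds` and reports `converged` as `False`.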

Experimental Evaluation

The evaluation uses a federated MNIST image classification setup over a simulated hierarchical topology. Both one-class and two-class non-iid client data distributions are assessed. The following aspects of heterogeneity are systematically explored:

- Client Distribution: Varying the overlap and concentration of client populations across edge servers.

- FL Server Funding: Testing the fairness mechanism's response to varying FL server budgets.

- Bandwidth-Per-Client Needs: Exploring scenarios where different FL servers have different per-client bandwidth requirements.
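A one-class or two-class non-iid split can be produced by restricting each client to a fixed set of labels. The sketch below shows one common way to do this and is not taken from the paper; the function name `partition_by_class`, the round-robin class assignment, and the synthetic labels are assumptions for illustration.

```python
import random


def partition_by_class(labels, num_clients, classes_per_client, seed=0):
    """Split sample indices so each client holds only a few classes.

    Client i is assigned classes_per_client consecutive classes (wrapping
    around); each class's samples are dealt out among the clients holding it.
    """
    rng = random.Random(seed)
    classes = sorted(set(labels))
    client_classes = [
        {classes[(i * classes_per_client + j) % len(classes)]
         for j in range(classes_per_client)}
        for i in range(num_clients)
    ]
    parts = [[] for _ in range(num_clients)]
    for c in classes:
        holders = [i for i, cs in enumerate(client_classes) if c in cs]
        if not holders:
            continue  # no client was assigned this class
        idx = [k for k, y in enumerate(labels) if y == c]
        rng.shuffle(idx)
        for pos, k in enumerate(idx):
            parts[holders[pos % len(holders)]].append(k)
    return parts


# Synthetic stand-in for MNIST labels: 1000 samples over 10 digit classes.
labels = [k % 10 for k in range(1000)]
one_class = partition_by_class(labels, num_clients=20, classes_per_client=1)
two_class = partition_by_class(labels, num_clients=20, classes_per_client=2)
```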

Results

Fairness and Efficiency

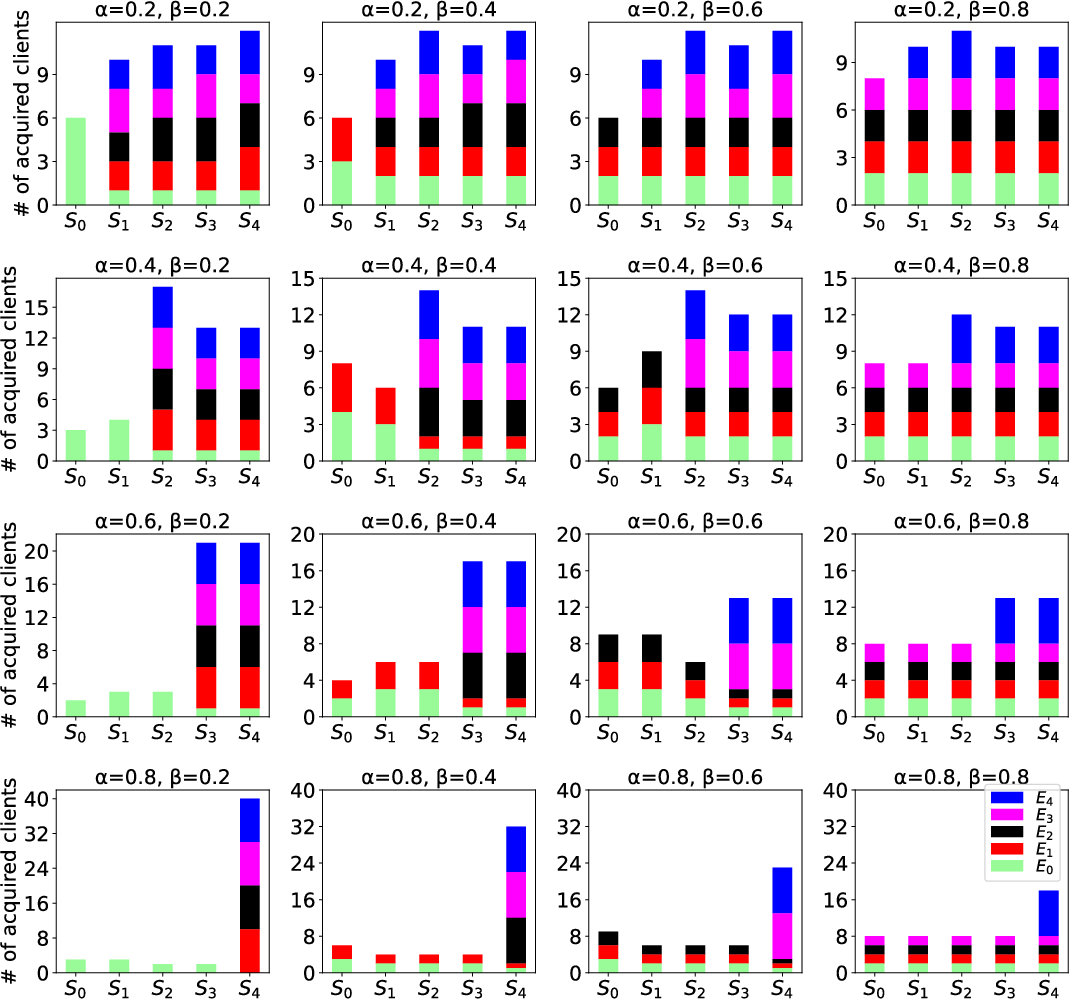

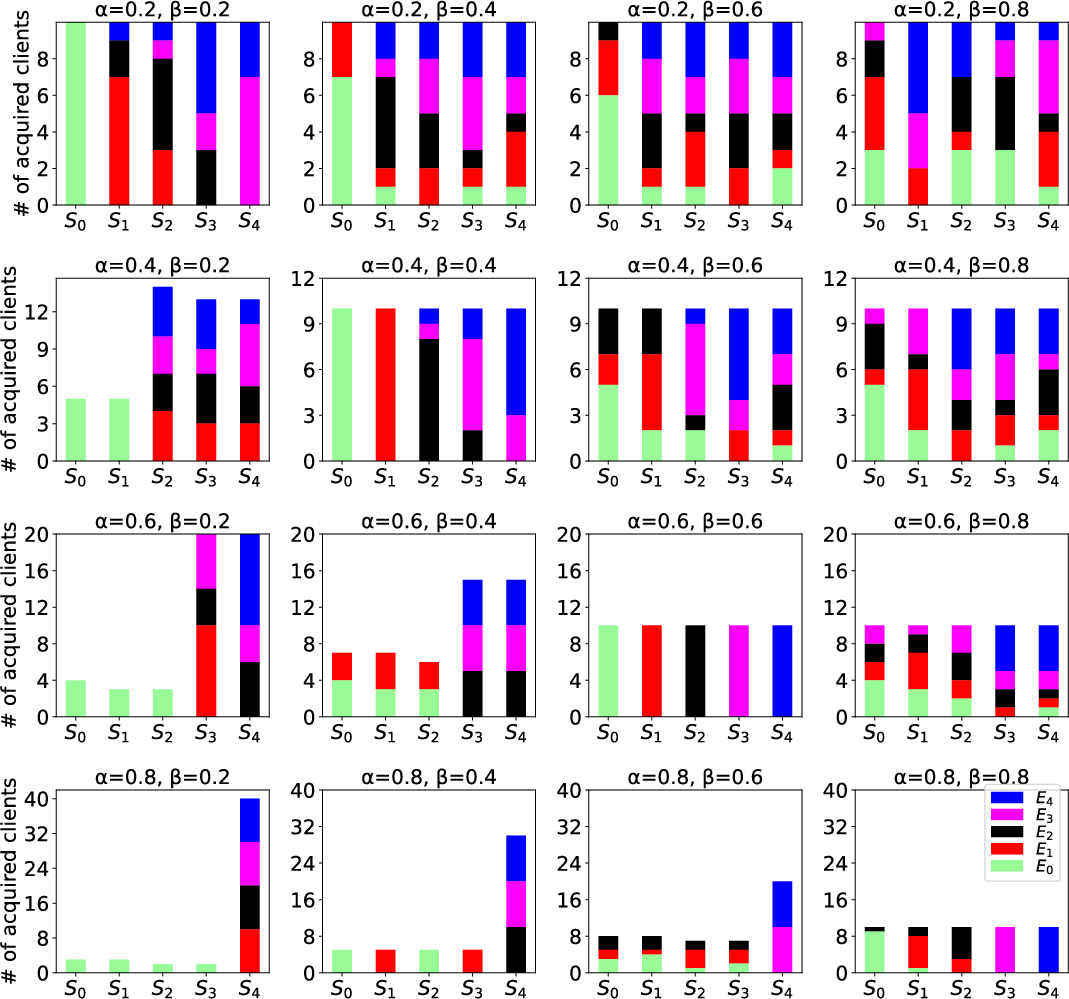

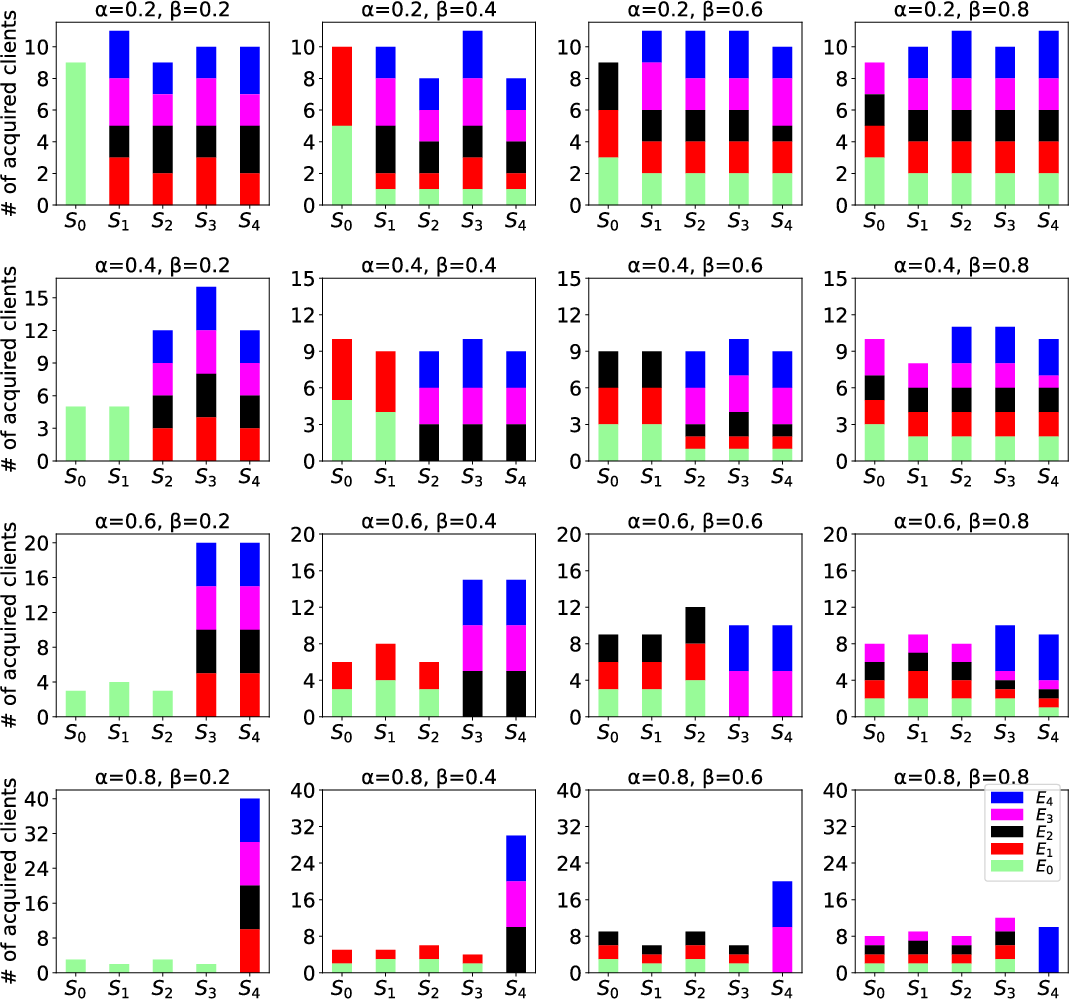

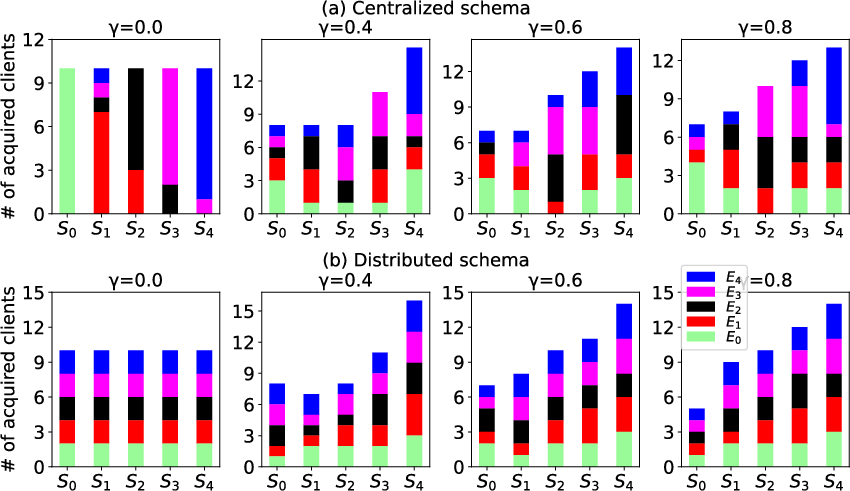

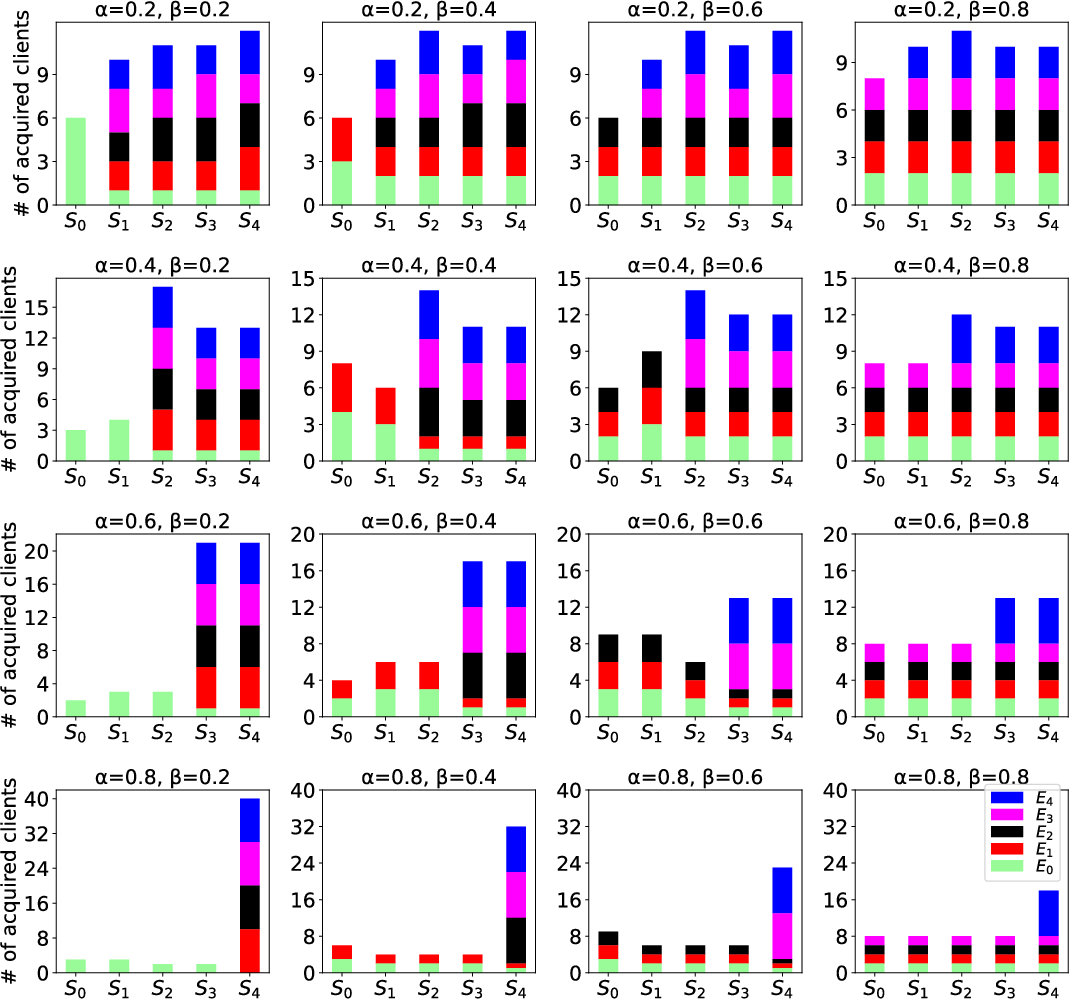

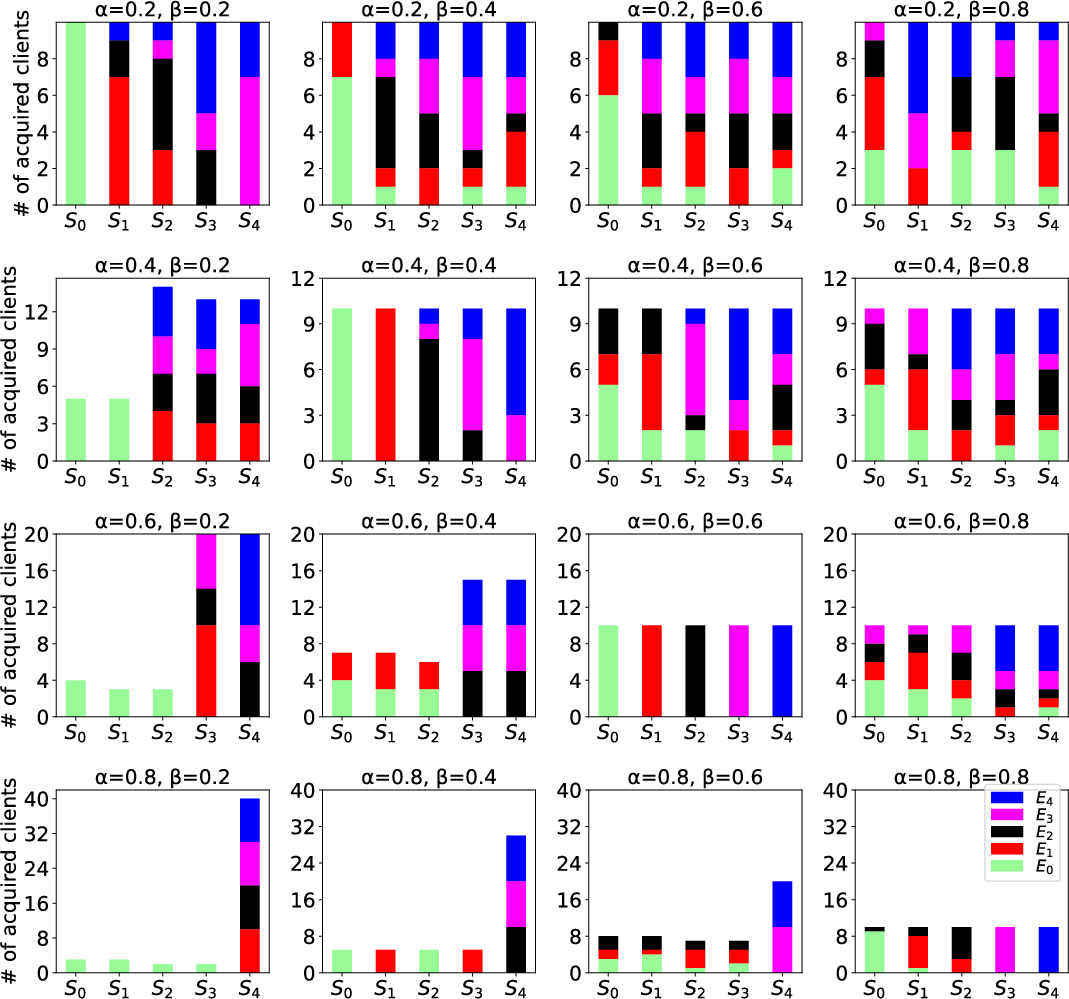

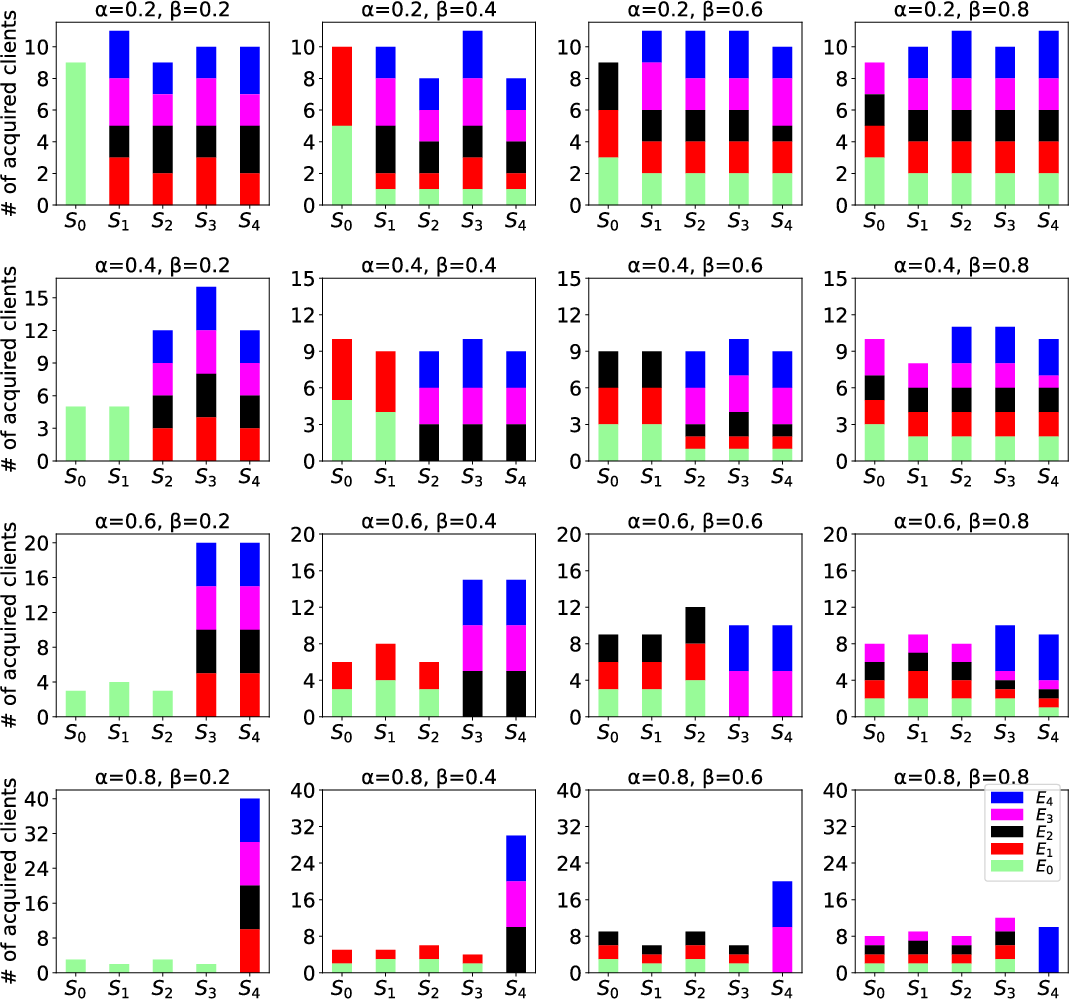

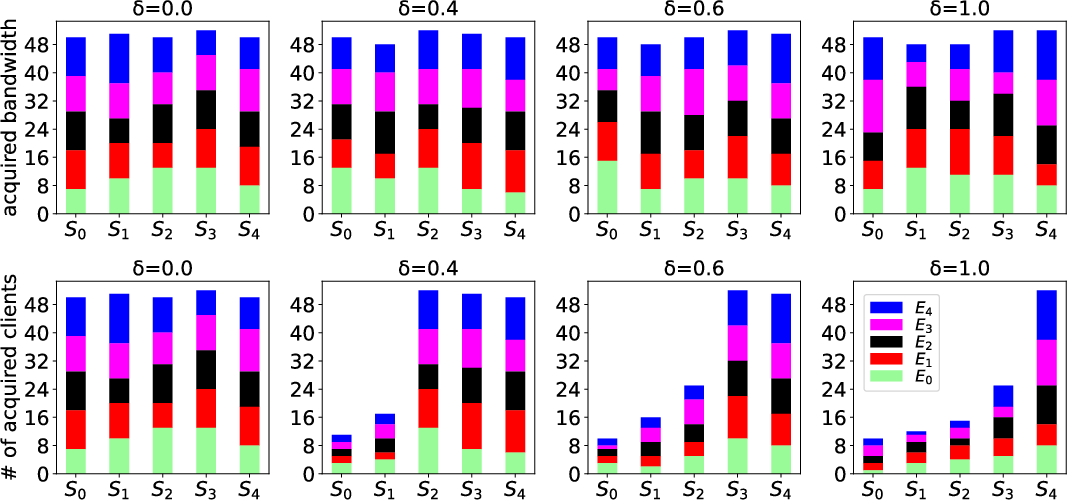

- The proposed distributed and centralized schemes consistently achieve near-identical performance, both significantly outperforming the baseline proportional allocation (Figures 2, 3, and 4). In all but the most extreme heterogeneity conditions, all FL servers receive a fair allocation reflective of their declared funds.

- Near-optimal bandwidth utility is maintained; nearly all available edge bandwidth is consumed productively.

Figure 2: Numbers of clients acquired by FL servers in the proportional (baseline) scheme, demonstrating unfairness under client heterogeneity.

Figure 3: Numbers of clients acquired by FL servers with the proposed centralized, game-theoretic scheme, illustrating improved fairness.

Figure 4: Numbers of clients acquired by FL servers in the distributed, game-theoretic scheme, matching centralized scheme outcome.

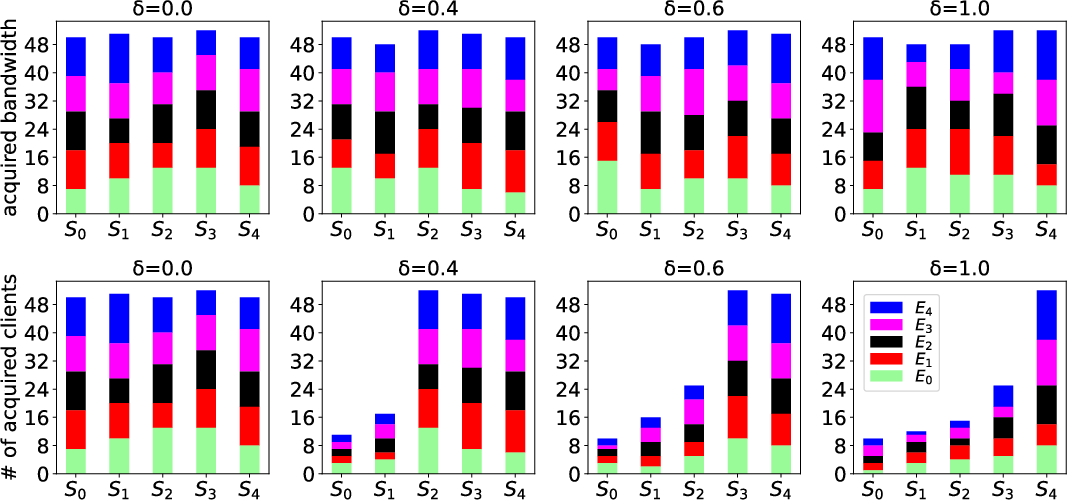

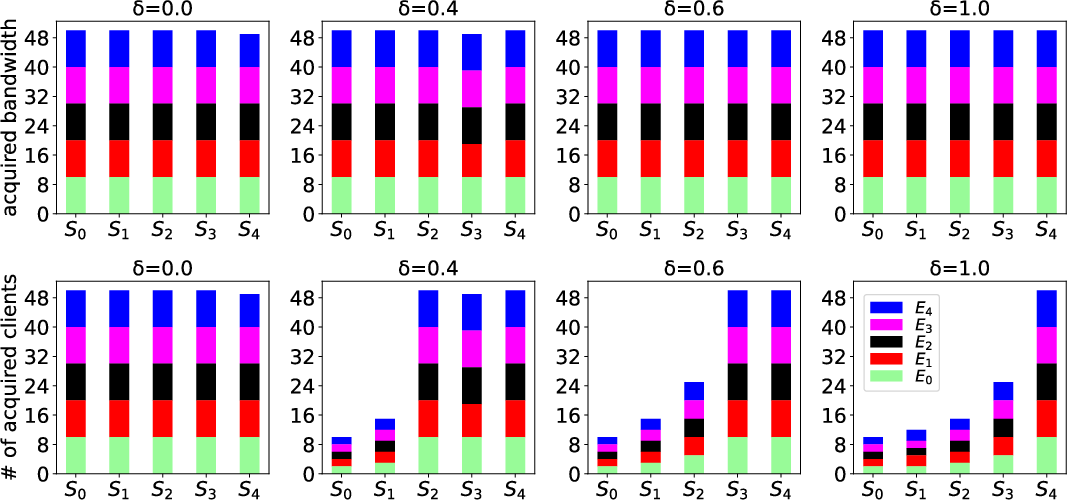

Adaptation to Funds and Per-Client Bandwidth

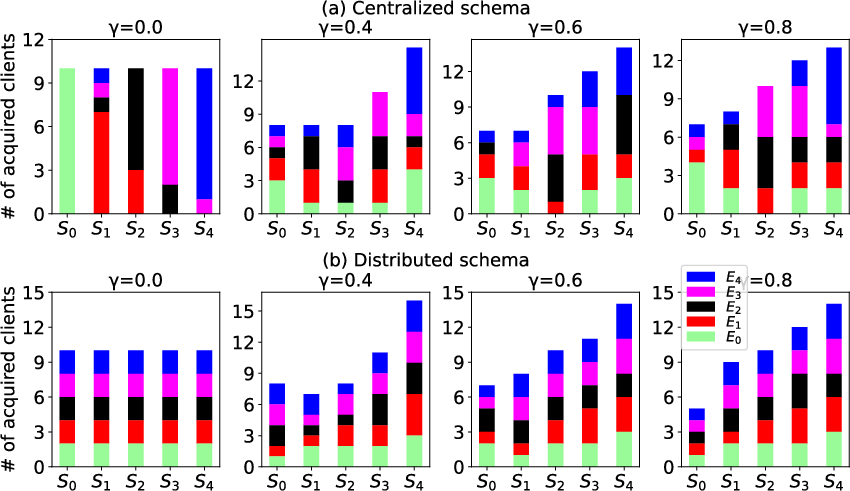

- FL servers with larger funds consistently acquire proportionally more bandwidth and can therefore include more clients; the relationship holds for both the centralized and distributed approaches (Figure 5).

Figure 5: Number of clients per FL server as a function of server fund, confirming proportional fairness.

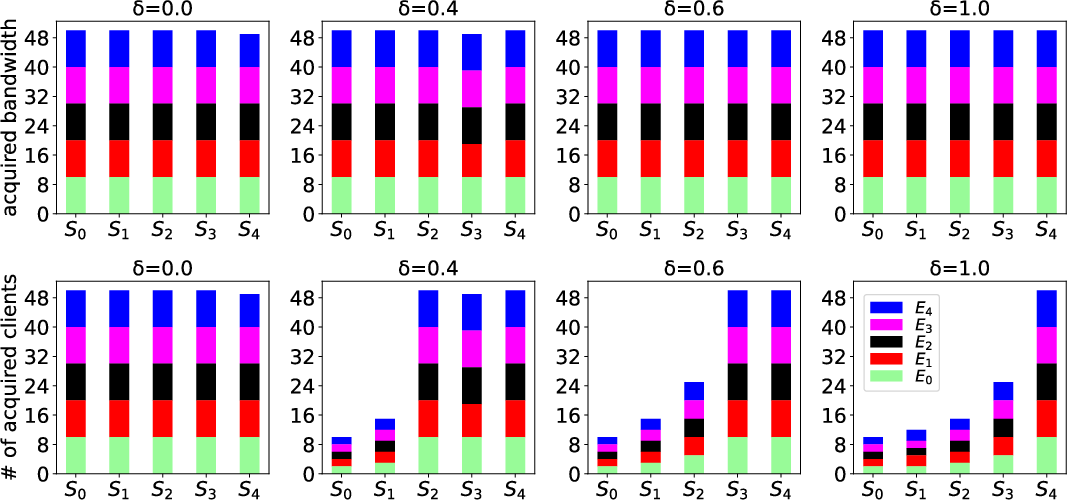

- When the required per-client bandwidth varies among FL servers, all servers are granted comparable bandwidth, so FL servers with lower per-client requirements can support more clients (Figures 6 and 7).

Figure 6: Bandwidth and number of clients versus required per-client bandwidth in the centralized scheme.

Figure 7: Bandwidth and number of clients versus required per-client bandwidth in the distributed scheme.
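The relationship between granted bandwidth and client count is simple arithmetic: with equal bandwidth, the number of supportable clients is the bandwidth divided by the per-client requirement, rounded down. The numbers below are hypothetical and are not taken from the paper's figures.

```python
def clients_from_bandwidth(bandwidth, per_client_bw):
    """Whole number of clients that a bandwidth grant can support."""
    return int(bandwidth // per_client_bw)


# Three FL servers receive the same 12 units but need 1, 2, and 3 units per client.
counts = [clients_from_bandwidth(12, need) for need in (1, 2, 3)]
# counts == [12, 6, 4]
```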

Impact on Test Accuracy

- In scenarios with uniform or mildly heterogeneous client distributions, both game-theoretic schemes achieve balanced and high test accuracy across FL servers, for both one-class and two-class per-client setups.

- Under severe heterogeneity, some degradation in fairness and accuracy can occur, as predicted by theory, but even then, game-theoretic schemes maintain clear superiority over non-game-theoretic baseline methods.

Theoretical Implications

This work establishes several significant theoretical properties:

- The Nash Equilibrium structure for this setting ensures that decentralized coordination is possible, even in highly competitive environments.

- The requirement that all edge servers settle on identical pricing emerges as a necessary equilibrium property, which may be relevant for real-world FL resource marketplaces.

- Formal fairness guarantees are rigorously defined and achieved as long as resource distribution is not extremely skewed.

Practical Implications and Future Prospects

The decentralized algorithm's design makes it directly deployable in realistic hierarchical FL systems, where edge servers are independently operated. As federated learning expands in geographically and administratively distributed settings, the need for robust, fair resource arbitration protocols will increase.

Potential extensions and future directions include:

- Generalization to asynchronous FL with dynamic client churn.

- Extension to multi-resource settings (e.g., combined compute and bandwidth).

- Integration with privacy-preserving protocols at the bandwidth allocation level.

- Market mechanisms for real-world deployment using the equilibrium insights provided.

Conclusion

This work formally establishes, implements, and empirically validates decentralized and fair bandwidth allocation for concurrent FL tasks in hierarchical systems. The combination of Stackelberg game modeling, efficient Nash Equilibrium approximation, and practical distributed coordination mechanisms concretely advances the design and operation of multi-tenant, hierarchical FL infrastructures.

Reference: "Fair Allocation of Bandwidth At Edge Servers For Concurrent Hierarchical Federated Learning" (2409.04921).