Robust Coordination under Misaligned Communication via Power Regularization

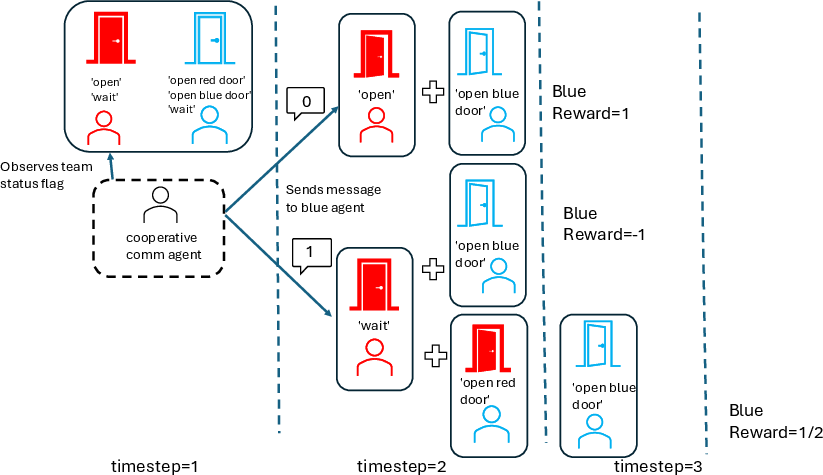

Abstract: Effective communication in Multi-Agent Reinforcement Learning (MARL) can significantly enhance coordination and collaborative performance in complex, partially observable environments. However, reliance on communication also introduces vulnerabilities when agents are misaligned, potentially leading to adversarial interactions that exploit implicit assumptions of cooperative intent. Prior work has addressed adversarial behavior through power regularization, which controls the influence one agent exerts over another, but has largely overlooked the role of communication in these dynamics. This paper introduces Communicative Power Regularization (CPR), which extends power regularization specifically to communication channels. By explicitly quantifying and constraining agents' communicative influence during training, CPR mitigates vulnerabilities arising from misaligned or adversarial communication. Evaluations across three benchmark environments (Red-Door-Blue-Door, Predator-Prey, and Grid Coverage) demonstrate that the approach significantly enhances robustness to adversarial communication while preserving cooperative performance, offering a practical framework for secure and resilient cooperative MARL systems.
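The abstract does not give CPR's exact formulation, so the following is only a rough sketch of what "quantifying and constraining communicative influence during training" could look like. It assumes, hypothetically, that influence is measured as the KL divergence between a receiver's action distribution with and without an incoming message, and that this quantity is added to the task loss with a weight `lam`. The function names, the KL-based proxy, and `lam` are all illustrative assumptions, not the paper's definitions.

```python
import math


def softmax(logits):
    """Convert raw action logits into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))


def communicative_influence(logits_with_msg, logits_without_msg):
    """Hypothetical influence proxy (an assumption, not the paper's measure):
    how far a message shifts the receiver's action distribution."""
    p = softmax(logits_with_msg)
    q = softmax(logits_without_msg)
    return kl_divergence(p, q)


def regularized_loss(task_loss, influence, lam=0.1):
    """Task loss plus a penalty on communicative influence.
    `lam` is an assumed trade-off weight."""
    return task_loss + lam * influence


# Receiver logits over three actions, with and without a sender's message.
with_msg = [2.0, 0.5, 0.1]
without_msg = [0.4, 0.5, 0.3]
influence = communicative_influence(with_msg, without_msg)
loss = regularized_loss(task_loss=1.0, influence=influence, lam=0.1)
```

Under this assumed proxy, a message that leaves the receiver's action distribution unchanged contributes no penalty, while a message that sharply shifts it is penalized in proportion to `lam`. Larger `lam` would trade cooperative performance for lower dependence on any single message.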

