Stable Code Technical Report

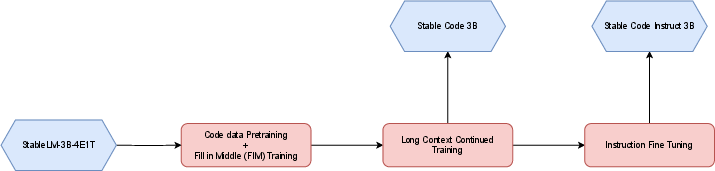

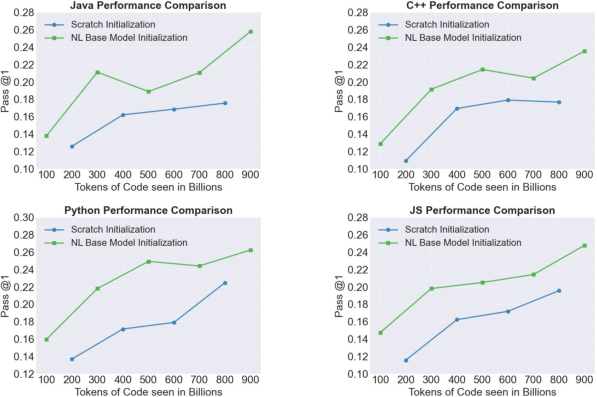

Abstract: We introduce Stable Code, the first in our new-generation of code LLMs series, which serves as a general-purpose base code LLM targeting code completion, reasoning, math, and other software engineering-based tasks. Additionally, we introduce an instruction variant named Stable Code Instruct that allows conversing with the model in a natural chat interface for performing question-answering and instruction-based tasks. In this technical report, we detail the data and training procedure leading to both models. Their weights are available via Hugging Face for anyone to download and use at https://huggingface.co/stabilityai/stable-code-3b and https://huggingface.co/stabilityai/stable-code-instruct-3b. This report contains thorough evaluations of the models, including multilingual programming benchmarks, and the MT benchmark focusing on multi-turn dialogues. At the time of its release, Stable Code is the state-of-the-art open model under 3B parameters and even performs comparably to larger models of sizes 7 billion and 15 billion parameters on the popular Multi-PL benchmark. Stable Code Instruct also exhibits state-of-the-art performance on the MT-Bench coding tasks and on Multi-PL completion compared to other instruction tuned models. Given its appealing small size, we also provide throughput measurements on a number of edge devices. In addition, we open source several quantized checkpoints and provide their performance metrics compared to the original model.

- Stable code complete alpha.

- Learning to represent programs with graphs. ArXiv, abs/1711.00740, 2017.

- code2seq: Generating sequences from structured representations of code. ArXiv, abs/1808.01400, 2018.

- code2vec: learning distributed representations of code. Proceedings of the ACM on Programming Languages, 3:1 – 29, 2018.

- Program synthesis with large language models. arXiv preprint arXiv:2108.07732, 2021.

- Llemma: An open language model for mathematics, 2023.

- Layer normalization, 2016.

- Qwen technical report, 2023.

- Training a helpful and harmless assistant with reinforcement learning from human feedback, 2022.

- Efficient training of language models to fill in the middle. ArXiv, abs/2207.14255, 2022.

- Stable lm 2 1.6b technical report, 2024.

- A framework for the evaluation of code generation models. https://github.com/bigcode-project/bigcode-evaluation-harness, 2022.

- Gpt-neox-20b: An open-source autoregressive language model, 2022.

- Multipl-e: A scalable and polyglot approach to benchmarking neural code generation. IEEE Transactions on Software Engineering, 49(7):3675–3691, 2023.

- Teaching large language models to self-debug, 2023.

- Together Computer. Redpajama: An open source recipe to reproduce llama training dataset, 2023.

- Ultrafeedback: Boosting language models with high-quality feedback, 2023.

- Cursor. Cursor: The ai-first code editor, 2024.

- Premkumar T. Devanbu. On the naturalness of software. 2012 34th International Conference on Software Engineering (ICSE), pages 837–847, 2012.

- GitHub. Github copilot: The world’s most widely adopted ai developer tool., 2024.

- Deepseek-coder: When the large language model meets programming – the rise of code intelligence, 2024.

- MLX: Efficient and flexible machine learning on apple silicon, 2023.

- Large language models for software engineering: A systematic literature review, 2023.

- Camels in a changing climate: Enhancing lm adaptation with tulu 2, 2023.

- The stack: 3 tb of permissively licensed source code. Preprint, 2022.

- StarCoder: may the source be with you!, 2023.

- Starcoder 2 and the stack v2: The next generation. arXiv preprint arXiv:2402.19173, 2024.

- Octopack: Instruction tuning code large language models. arXiv preprint arXiv:2308.07124, 2023.

- Codegen: An open large language model for code with multi-turn program synthesis. In International Conference on Learning Representations, 2022.

- The refinedweb dataset for falcon llm: Outperforming curated corpora with web data, and web data only, 2023.

- Improving language understanding by generative pre-training, 2018.

- Direct preference optimization: Your language model is secretly a reward model, 2023.

- Zero: Memory optimizations toward training trillion parameter models, 2020.

- Code llama: Open foundation models for code, 2023.

- StackOverFlow. Stackoverflow developer survey - 2022, 2022.

- Roformer: Enhanced transformer with rotary position embedding, 2023.

- Llama: Open and efficient foundation language models, 2023.

- Stablelm 3b 4e1t, 2023.

- Zephyr: Direct distillation of lm alignment. arXiv preprint arXiv:2310.16944, 2023.

- Wizardlm: Empowering large language models to follow complex instructions. arXiv preprint arXiv:2304.12244, 2023.

- If llm is the wizard, then code is the wand: A survey on how code empowers large language models to serve as intelligent agents.

- Metamath: Bootstrap your own mathematical questions for large language models. arXiv preprint arXiv:2309.12284, 2023.

- Root mean square layer normalization, 2019.

- Judging llm-as-a-judge with mt-bench and chatbot arena, 2023.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.