How well can a large language model explain business processes as perceived by users?

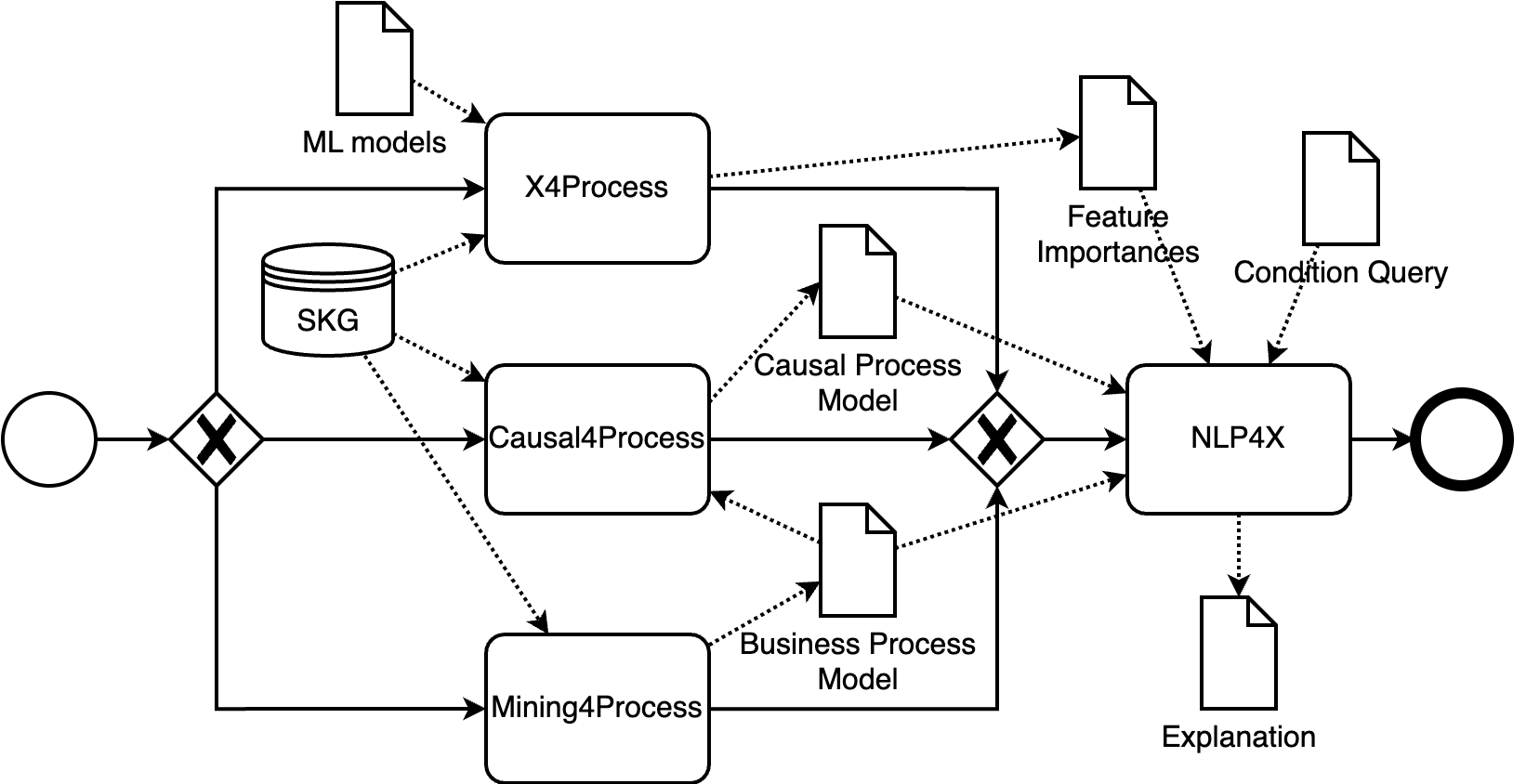

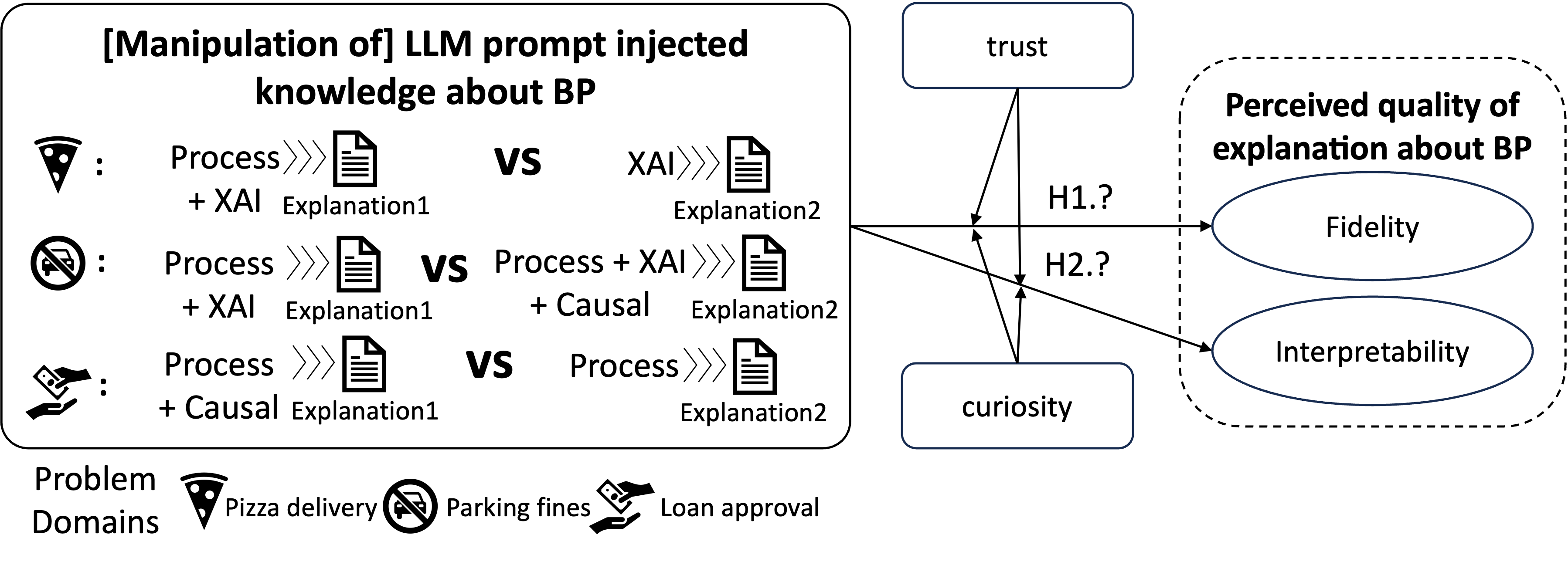

Abstract: Large language models (LLMs) are trained on vast amounts of text to interpret and generate human-like textual content. They are becoming a vital vehicle for realizing the vision of the autonomous enterprise, with organizations actively adopting LLMs to automate many aspects of their operations. LLMs are likely to play a prominent role in future AI-augmented business process management systems, providing functionality across all stages of the system lifecycle. One such functionality is Situation-Aware eXplainability (SAX), which concerns generating causally sound and human-interpretable explanations. In this paper, we present the SAX4BPM framework, developed to generate SAX explanations. The SAX4BPM suite consists of a set of services and a central knowledge repository. These services elicit the various knowledge ingredients that underlie SAX explanations. A key innovative component among these ingredients is the causal process execution view. In this work, we integrate the framework with an LLM to leverage its power to synthesize the various input ingredients into improved SAX explanations. Because the use of LLMs for SAX is accompanied by doubts about their capacity to adequately fulfill SAX, along with their tendency to hallucinate and their lack of an inherent capacity to reason, we pursued a methodological evaluation of the perceived quality of the generated explanations. We developed a designated scale and conducted a rigorous user study. Our findings show that the input presented to the LLM helped guard-rail its performance, yielding SAX explanations with better perceived fidelity. This improvement is moderated by the perception of trust and curiosity. Moreover, this improvement comes at the cost of the perceived interpretability of the explanation.

