Improving the performance of weak supervision searches using transfer and meta-learning

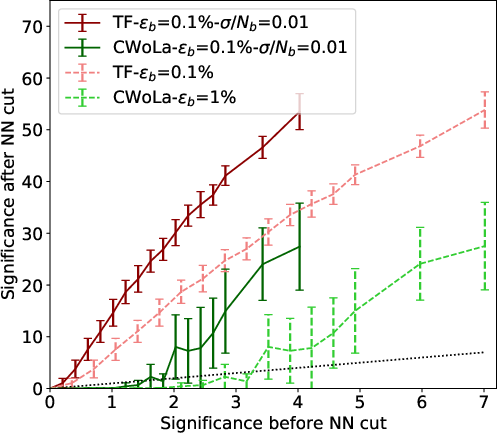

Abstract: In principle, weak supervision searches combine two advantages: they can be trained on experimental data, and they can learn distinctive signal properties. In practice, their applicability is limited because successfully training a neural network via weak supervision can require a large amount of signal. In this work, we seek to create neural networks that can learn from less experimental signal by using transfer and meta-learning. The general idea is to first train a neural network on simulations, so that it either learns concepts that can be reused or becomes a more efficient learner. The neural network is then trained on experimental data and should require less signal because of its previous training. We find that transfer and meta-learning can substantially improve the performance of weak supervision searches.
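
The two-stage idea described in the abstract (pretrain on simulation, then continue training on data with weak labels) can be illustrated with a minimal sketch. This is not the authors' setup: it swaps the neural network for a toy logistic regression on two Gaussian features, and it assumes that weak supervision here means separating two mixed samples with different signal fractions. All names (`sample_events`, `train`, `w_pre`, `w_fine`) and all numbers (sample sizes, signal fractions, learning rates) are hypothetical choices made for illustration.

```python
import math
import random


def sigmoid(z):
    # Numerically safe logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def sample_events(n, signal_fraction, rng, shift=2.0):
    """Toy events with two features; signal is shifted along feature 0 only."""
    events, truth = [], []
    for _ in range(n):
        is_signal = rng.random() < signal_fraction
        x0 = rng.gauss(shift if is_signal else 0.0, 1.0)
        x1 = rng.gauss(0.0, 1.0)
        events.append((x0, x1))
        truth.append(1 if is_signal else 0)
    return events, truth


def train(weights, events, labels, lr=0.1, epochs=50):
    """Full-batch gradient descent on binary cross-entropy; returns new weights."""
    w0, w1, b = weights
    n = len(events)
    for _ in range(epochs):
        g0 = g1 = gb = 0.0
        for (x0, x1), y in zip(events, labels):
            err = sigmoid(w0 * x0 + w1 * x1 + b) - y
            g0 += err * x0
            g1 += err * x1
            gb += err
        w0 -= lr * g0 / n
        w1 -= lr * g1 / n
        b -= lr * gb / n
    return [w0, w1, b]


rng = random.Random(0)

# Stage 1: pretrain on simulation, where truth labels are available.
sim_events, sim_truth = sample_events(2000, 0.5, rng)
w_pre = train([0.0, 0.0, 0.0], sim_events, sim_truth)

# Stage 2: fine-tune on "data" using only weak labels (assumed setup).
# Mixed sample A (label 1) contains a little signal; sample B (label 0) has none.
mixed_a, _ = sample_events(1000, 0.05, rng)
mixed_b, _ = sample_events(1000, 0.0, rng)
data_events = mixed_a + mixed_b
weak_labels = [1] * len(mixed_a) + [0] * len(mixed_b)

# Start from the pretrained weights instead of zeros, with a smaller step size.
w_fine = train(w_pre, data_events, weak_labels, lr=0.01, epochs=20)
```

In this sketch, the transfer step is simply the choice to initialize the second `train` call with `w_pre` rather than with zeros. A meta-learning variant would instead optimize the initialization or the training procedure itself across many simulated tasks, which this toy example does not attempt.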

