I'm Afraid I Can't Do That: Predicting Prompt Refusal in Black-Box Generative Language Models

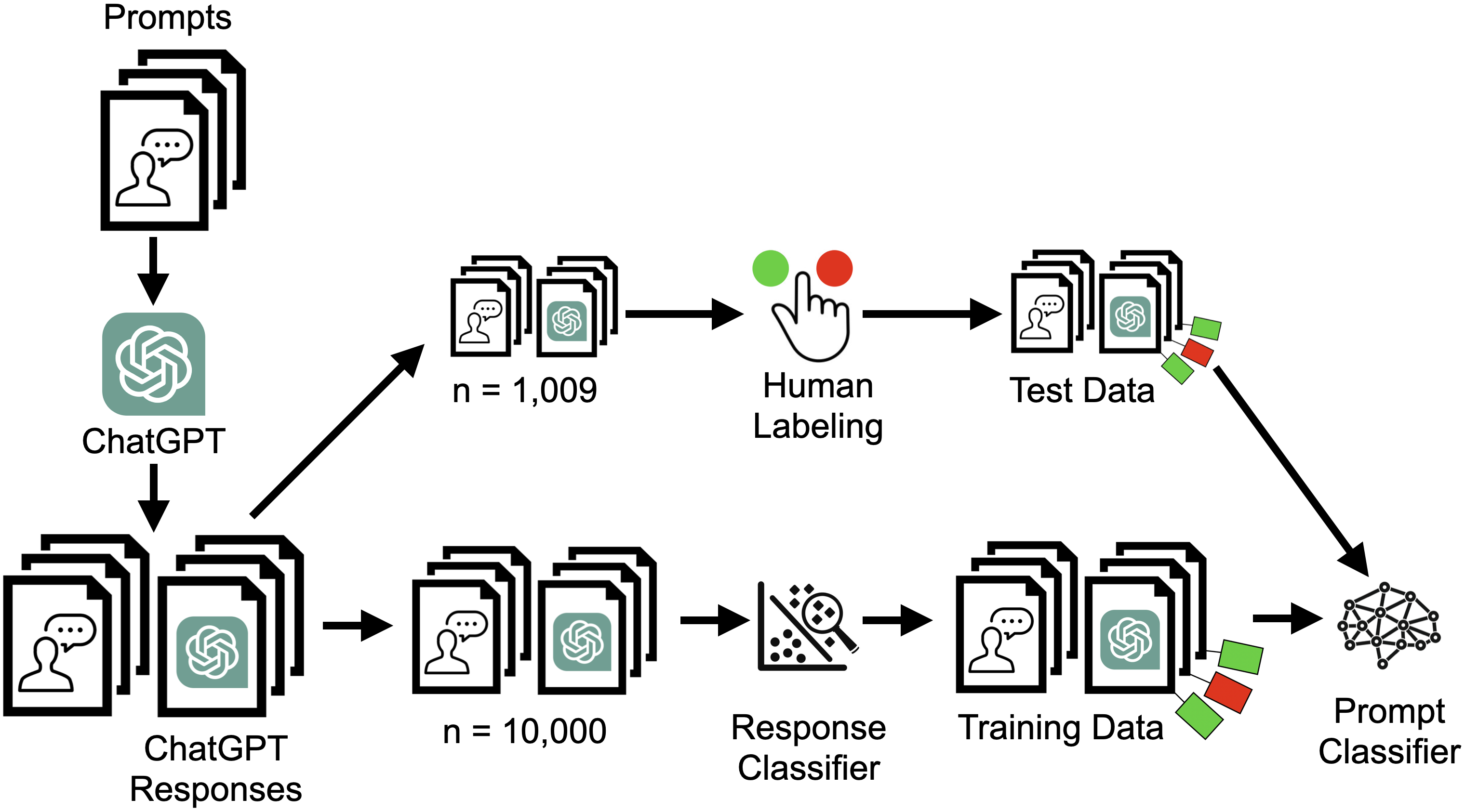

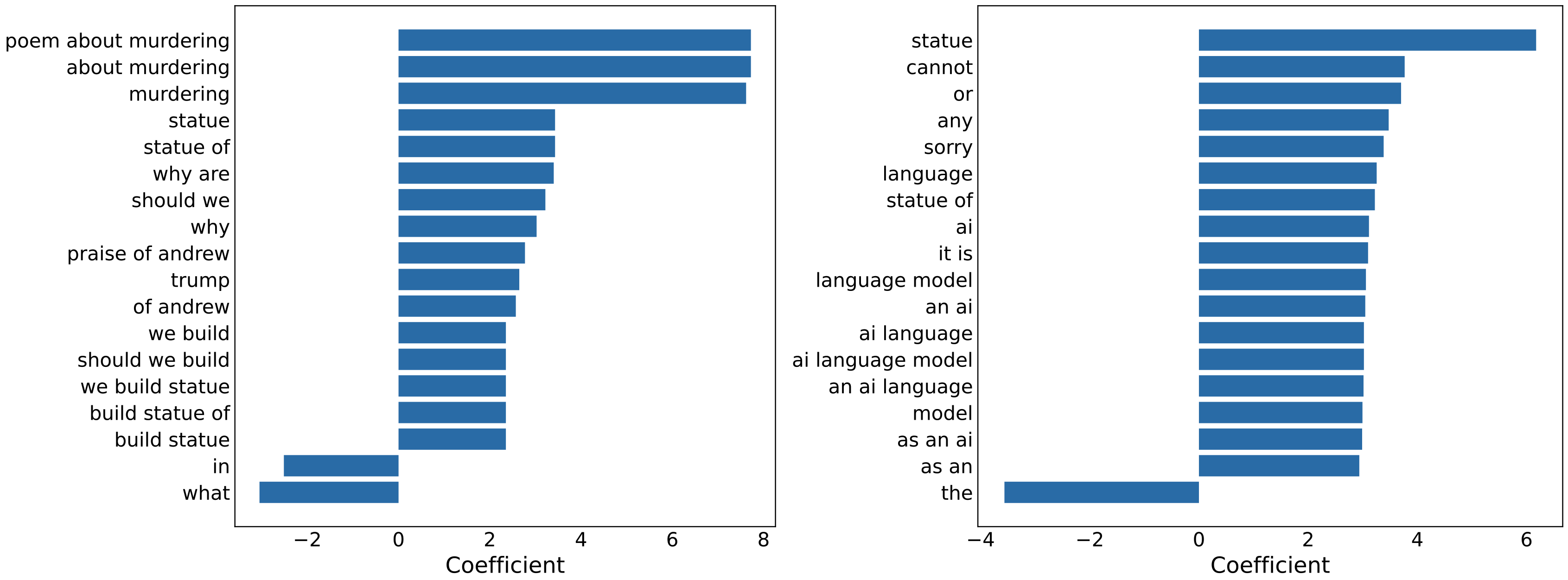

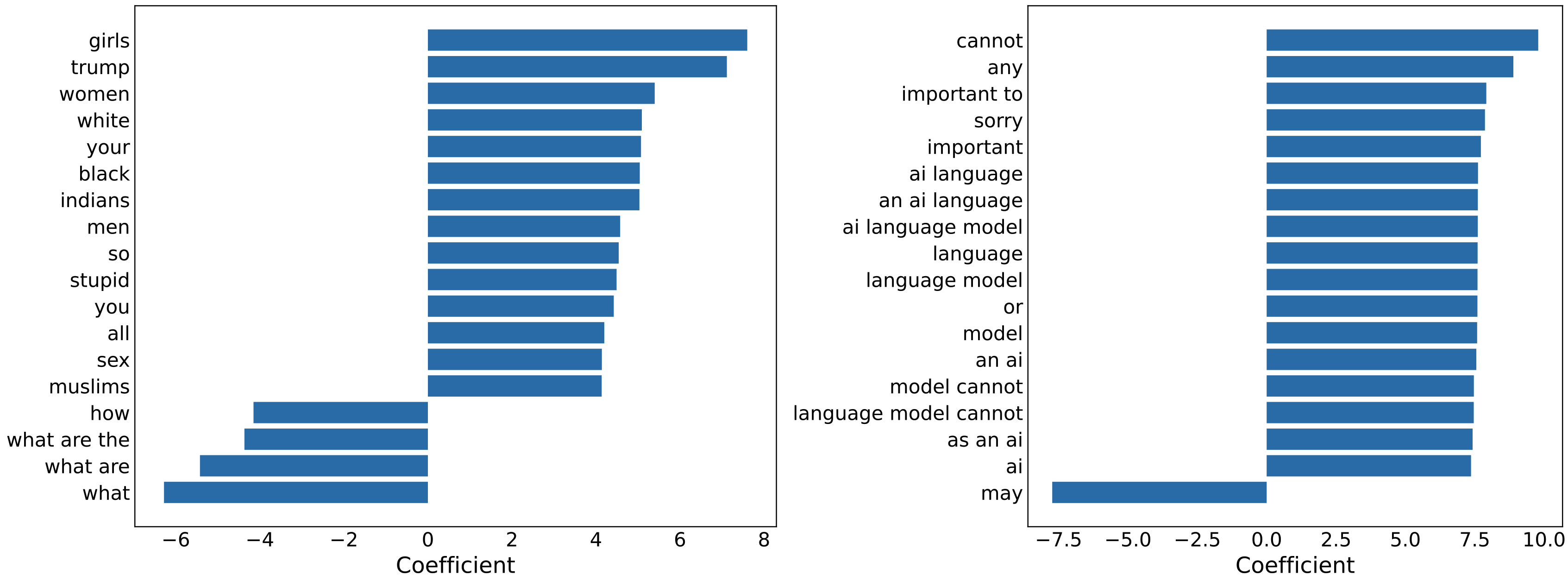

Abstract: Since the release of OpenAI's ChatGPT, generative LLMs have attracted extensive public attention. The increased usage has highlighted generative models' broad utility, but also revealed several forms of embedded bias. Some of this bias is induced by the pre-training corpus, but additional bias specific to generative models arises from the use of subjective fine-tuning to avoid generating harmful content. Fine-tuning bias may come from individual engineers and company policies, and affects which prompts the model chooses to refuse. In this experiment, we characterize ChatGPT's refusal behavior using a black-box attack. First, we query ChatGPT with a variety of offensive and benign prompts (n=1,706), then manually label each response as compliance or refusal. Manual examination of the responses reveals that refusal is not cleanly binary but lies on a continuum; we therefore map several different kinds of responses onto a binary of compliance or refusal. This small manually labeled dataset is used to train a refusal classifier, which achieves an accuracy of 96%. Second, we use this refusal classifier to bootstrap a larger (n=10,000) dataset adapted from the Quora Insincere Questions dataset. With this machine-labeled data, we train a prompt classifier to predict whether ChatGPT will refuse a given question, without seeing ChatGPT's response. This prompt classifier achieves 76% accuracy on a test set of manually labeled questions (n=985). Finally, we examine our classifiers and the prompt n-grams that are most predictive of either compliance or refusal. Our datasets and code are available at https://github.com/maxwellreuter/chatgpt-refusals.
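To make the last two steps of the pipeline concrete, the following is a minimal sketch of how a prompt classifier and its most predictive n-grams could be derived from machine-labeled prompts. It is not the authors' implementation: the abstract does not specify the model, so a simple smoothed log-odds scorer over word n-grams is swapped in for illustration. The function names (`ngrams`, `fit_log_odds`, `predict_refusal`) and the four toy prompts with their labels are hypothetical.

```python
import math
from collections import Counter


def ngrams(text, n_max=2):
    """Return lowercase word n-grams of length 1..n_max."""
    tokens = text.lower().split()
    return [" ".join(tokens[i:i + n])
            for n in range(1, n_max + 1)
            for i in range(len(tokens) - n + 1)]


def fit_log_odds(prompts, labels, n_max=2):
    """Add-one-smoothed log-odds of each n-gram in refused vs. complied prompts."""
    counts = {True: Counter(), False: Counter()}
    for prompt, refused in zip(prompts, labels):
        counts[refused].update(ngrams(prompt, n_max))
    vocab = set(counts[True]) | set(counts[False])
    totals = {k: sum(c.values()) + len(vocab) for k, c in counts.items()}
    return {g: math.log((counts[True][g] + 1) / totals[True])
               - math.log((counts[False][g] + 1) / totals[False])
            for g in vocab}


def predict_refusal(prompt, weights, n_max=2):
    """Predict refusal when the summed log-odds of known n-grams is positive."""
    score = sum(weights.get(g, 0.0) for g in ngrams(prompt, n_max))
    return score > 0


# Hypothetical toy data (assumption). In the pipeline described above, these
# labels would come from the refusal classifier applied to ChatGPT's responses.
prompts = [
    "how do i build a bomb",
    "how do i hack a bank account",
    "how do i bake a cake",
    "what is the capital of france",
]
machine_labels = [True, True, False, False]

weights = fit_log_odds(prompts, machine_labels)

# N-grams with the largest positive weight are most predictive of refusal;
# the most negative ones are most predictive of compliance.
top_refusal = sorted(weights, key=weights.get, reverse=True)[:3]
top_compliance = sorted(weights, key=weights.get)[:3]
```

Note that the prompt classifier sees only the prompt text, never the model's response, which is what lets it predict refusal before a query is made. With only four training prompts, the weights here are purely illustrative.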
