- The paper introduces iTFA, a fine-tuning method that achieves 30% higher AP than meta-learning methods on LVIS.

- It separates the RoI feature extractor and classifier into base and novel branches to mitigate catastrophic forgetting during adaptation to novel classes.

- The method is validated on PASCAL VOC, COCO, and LVIS, effectively balancing novel class detection with base class retention.

Incremental Few-Shot Object Detection via Simple Fine-Tuning Approach

Introduction

The paper addresses the Incremental Few-Shot Object Detection (iFSD) problem: learning novel classes incrementally from only a few examples, without revisiting base class data. Previous iFSD works rely primarily on meta-learning algorithms, whose performance has been insufficient for practical applications. The paper instead proposes a simple Incremental Two-stage Fine-tuning Approach (iTFA) that uses fine-tuning rather than meta-learning, aiming to improve both novel class detection and base class retention. iTFA is evaluated on PASCAL VOC, COCO, and LVIS, where it shows competitive performance overall and 30% higher Average Precision (AP) than meta-learning methods on LVIS.
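To make the iFSD data regime concrete, here is a minimal sketch of building the fine-tuning set for one incremental session: only annotations of the newly added classes are kept, and at most K instances are drawn per class. The function `sample_k_shot` and the `(image_id, class_name, box)` annotation layout are hypothetical; standard benchmarks typically use predefined K-shot splits rather than sampling on the fly.

```python
import random
from collections import defaultdict


def sample_k_shot(annotations, novel_classes, k, seed=0):
    """Hypothetical K-shot sampler for one iFSD session.

    annotations: list of (image_id, class_name, box) tuples.
    Base-class annotations are dropped entirely: iFSD never revisits them.
    """
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    by_class = defaultdict(list)
    for ann in annotations:
        _, cls, _ = ann
        if cls in novel_classes:
            by_class[cls].append(ann)
    subset = []
    for cls in sorted(novel_classes):  # sorted for deterministic order
        pool = by_class[cls]
        subset.extend(rng.sample(pool, min(k, len(pool))))
    return subset
```

For example, with `k=10` (the setting of the COCO qualitative results) each novel class contributes at most ten annotated instances, and no base-class annotation ever reaches the fine-tuning stage.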

Methodology

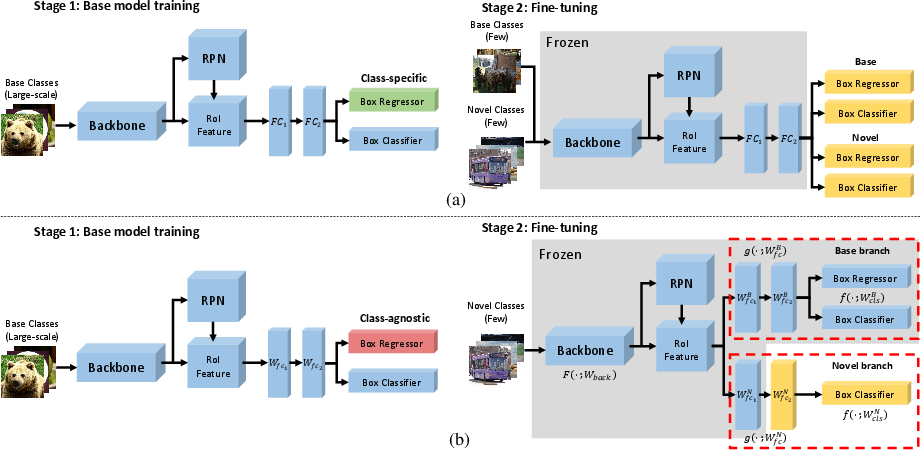

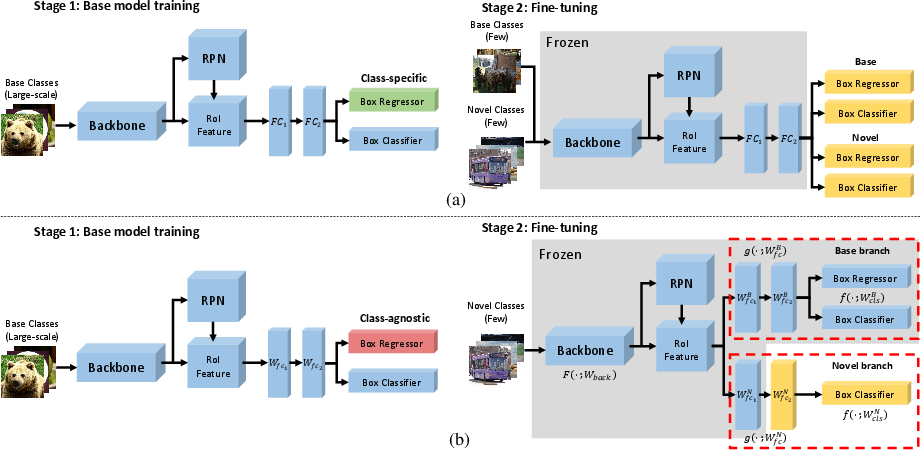

The proposed iTFA method follows a two-stage training strategy adapted from TFA. Unlike TFA, which uses both base and novel classes during the fine-tuning stage, iTFA fine-tunes on novel classes only, as the iFSD setting requires. Training on novel data alone causes catastrophic forgetting of base classes, which iTFA counters with the design choices described below. This section describes the two stages: base model training and fine-tuning.

Base Model Training

During the base model training stage, the feature extractor, the classifier, and a class-agnostic box regressor are trained on a dataset containing ample examples of base classes. This stage aims to learn a feature space that transfers well to novel classes; because the box regressor is class-agnostic, it has no class-specific parameters and can be applied to novel classes as they are added.
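To illustrate why a class-agnostic regressor eases adaptation, the NumPy sketch below contrasts the two designs: a class-specific regressor predicts four box deltas per class, so it has no weights for classes added later, while a class-agnostic one predicts a single set of four deltas for every RoI. `FEAT_DIM`, `make_regressor`, and `predict_deltas` are hypothetical names, and the 1024-dimensional features and 60 base classes are illustrative values.

```python
import numpy as np

FEAT_DIM = 1024  # illustrative RoI feature size


def make_regressor(num_classes, class_agnostic, rng):
    """Weight matrix of a linear box regressor (bias omitted for brevity)."""
    out_dim = 4 if class_agnostic else 4 * num_classes  # shared deltas vs. 4 per class
    return rng.standard_normal((FEAT_DIM, out_dim)) * 0.01


def predict_deltas(weights, roi_features, class_ids, class_agnostic):
    """Return one (dx, dy, dw, dh) row per RoI."""
    deltas = roi_features @ weights  # (N, out_dim)
    if class_agnostic:
        return deltas  # same regressor for every class, seen or unseen
    n = roi_features.shape[0]
    per_class = deltas.reshape(n, -1, 4)  # (N, C, 4)
    return per_class[np.arange(n), class_ids]  # deltas for each RoI's class


rng = np.random.default_rng(0)
agnostic = make_regressor(60, class_agnostic=True, rng=rng)
specific = make_regressor(60, class_agnostic=False, rng=rng)
feats = rng.standard_normal((5, FEAT_DIM))
novel_ids = np.array([60, 61, 62, 63, 64])  # ids of classes added after base training
print(predict_deltas(agnostic, feats, novel_ids, class_agnostic=True).shape)  # (5, 4)
# predict_deltas(specific, feats, novel_ids, class_agnostic=False) raises IndexError:
# the 60-class regressor has no outputs for classes 60-64.
```

The class-specific variant would need new, few-shot-trained output weights for every novel class, whereas the class-agnostic regressor can be reused unchanged.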

Figure 1: Method overview. (a) the illustration of TFA, (b) the overall framework of iTFA. Both frameworks consist of two stages: base model training and fine-tuning stages. The whole object detector is trained with large-scale base classes in the base model training stage. In the fine-tuning stage, each method fixes the components indicated by gray boxes and fine-tunes other structures. TFA uses both base and novel classes in the fine-tuning stage. However, iTFA only uses novel classes, which causes catastrophic forgetting. To overcome catastrophic forgetting, iTFA divides the RoI feature extractor and classifier into two branches and fine-tunes only the novel branch. Also, the class-agnostic regressor is used for easier adaptation to novel classes.
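The stage-by-stage differences in Figure 1 can be summarised as a small configuration sketch. All module names, the `PLANS` table, and `frozen_modules` are hypothetical. The TFA entries follow TFA's practice of fine-tuning only the last classification and regression layers; keeping iTFA's class-agnostic regressor frozen during fine-tuning is an assumption, since the caption only says it eases adaptation.

```python
# Hypothetical module names mirroring Figure 1; not the authors' configuration.
SHARED = ["backbone", "rpn"]
SINGLE_HEAD = SHARED + ["roi_extractor", "classifier", "box_regressor"]
SPLIT_HEAD = SHARED + ["roi_extractor_base", "roi_extractor_novel",
                       "classifier_base", "classifier_novel", "box_regressor"]

PLANS = {
    # Base model training: the whole detector is trained on base classes.
    ("TFA", "base_training"): {"data": {"base"}, "modules": SINGLE_HEAD,
                               "trainable": set(SINGLE_HEAD)},
    # TFA fine-tunes only the last layers, on shots of both base and novel classes.
    ("TFA", "fine_tuning"): {"data": {"base", "novel"}, "modules": SINGLE_HEAD,
                             "trainable": {"classifier", "box_regressor"}},
    ("iTFA", "base_training"): {"data": {"base"}, "modules": SINGLE_HEAD,
                                "trainable": set(SINGLE_HEAD)},
    # iTFA sees novel data only and updates only the novel branch
    # (freezing the class-agnostic regressor is an assumption of this sketch).
    ("iTFA", "fine_tuning"): {"data": {"novel"}, "modules": SPLIT_HEAD,
                              "trainable": {"roi_extractor_novel", "classifier_novel"}},
}


def frozen_modules(method, stage):
    """Modules whose weights stay fixed in the given stage (gray boxes in Figure 1)."""
    plan = PLANS[(method, stage)]
    return [m for m in plan["modules"] if m not in plan["trainable"]]


print(frozen_modules("iTFA", "fine_tuning"))
# ['backbone', 'rpn', 'roi_extractor_base', 'classifier_base', 'box_regressor']
```

In a real framework these flags would map onto whatever mechanism it offers for disabling gradients on a submodule.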

Fine-Tuning

In the fine-tuning stage, the model adapts to novel class data without revisiting base class data. The class-agnostic regressor trained in the base stage is a key element here: because it is shared by all classes, it makes adaptation to novel classes easier. Two further design choices shape this stage:

- Separation of the Novel Branch: iTFA divides the RoI feature extractor and the classifier into separate base and novel branches and fine-tunes only the novel branch. Because the base branch is left unchanged, learning novel classes does not overwrite base class knowledge.

- Feature and Classifier Compatibility: iTFA uses a linear classifier rather than a cosine similarity-based one, keeping the novel branch consistent with the feature space learned during base model training.
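Branch separation can be illustrated with a toy NumPy RoI head. The class `TwoBranchHead`, the layer sizes, initialising the novel branch as a copy of the base RoI layer, and taking the background score from the base branch are all assumptions made for illustration, not details confirmed by the paper summary. `cosine_logits` shows the cosine-similarity classifier the text contrasts against; its scale of 20 is an illustrative value.

```python
import numpy as np

rng = np.random.default_rng(0)
FEAT, HID, N_BASE, N_NOVEL = 16, 8, 3, 2  # toy sizes, for illustration only


def relu(x):
    return np.maximum(x, 0.0)


class TwoBranchHead:
    """Toy RoI head whose feature layer and classifier are split into base and novel branches."""

    def __init__(self, base_fc, base_cls):
        # Base branch: weights from base model training, never updated during fine-tuning.
        self.base_fc = base_fc    # (FEAT, HID) RoI feature layer
        self.base_cls = base_cls  # (HID, N_BASE + 1) linear classifier incl. background
        # Novel branch (assumed): copy of the base RoI layer plus a fresh linear classifier.
        self.novel_fc = base_fc.copy()
        self.novel_cls = rng.standard_normal((HID, N_NOVEL)) * 0.01

    def logits(self, x):
        base = relu(x @ self.base_fc) @ self.base_cls     # background + base-class scores
        novel = relu(x @ self.novel_fc) @ self.novel_cls  # novel-class scores (linear)
        return np.concatenate([base, novel], axis=1)


def cosine_logits(h, w, scale=20.0, eps=1e-8):
    """Alternative classifier: scaled cosine similarity, always within [-scale, scale]."""
    h_n = h / (np.linalg.norm(h, axis=1, keepdims=True) + eps)  # normalise each feature row
    w_n = w / (np.linalg.norm(w, axis=0, keepdims=True) + eps)  # normalise each class column
    return scale * (h_n @ w_n)


# Simulated fine-tuning: random perturbations stand in for gradient updates to the novel branch.
head = TwoBranchHead(rng.standard_normal((FEAT, HID)), rng.standard_normal((HID, N_BASE + 1)))
x = rng.standard_normal((4, FEAT))
before = head.logits(x)
head.novel_fc += 0.5 * rng.standard_normal(head.novel_fc.shape)
head.novel_cls += 0.5 * rng.standard_normal(head.novel_cls.shape)
after = head.logits(x)
print(np.allclose(before[:, : N_BASE + 1], after[:, : N_BASE + 1]))  # True: base scores unchanged
```

Only the novel columns of the output can change during fine-tuning. Note also that linear logits grow with feature norm while cosine logits are capped at `scale`; reading this as one reason to keep both branches linear is an interpretation, not a claim from the paper.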

Experiments

Dataset and Evaluation Metric

The paper evaluates iTFA on PASCAL VOC, COCO, and LVIS, following established iFSD benchmark settings. Accuracy on both base and novel classes is reported with AP50 (AP at an IoU threshold of 0.5) and AP averaged over multiple IoU thresholds.
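The relationship between AP50 and IoU-averaged AP can be seen in a simplified single-class, single-image sketch. It matches score-sorted detections greedily to unmatched ground truths and uses all-point interpolation (as in PASCAL VOC since 2010); the official COCO and LVIS evaluators differ in details such as 101-point interpolation, ignore handling, and aggregation over images and classes, so this is illustration only. All function names are hypothetical.

```python
import numpy as np


def iou(a, b):
    """IoU of two boxes in (x1, y1, x2, y2) format."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)


def average_precision(dets, gts, thr):
    """dets: list of (score, box); gts: list of boxes; thr: IoU threshold."""
    dets = sorted(dets, key=lambda d: d[0], reverse=True)
    matched, tp = set(), []
    for _, box in dets:
        best_iou, best_j = thr, None
        for j, g in enumerate(gts):  # best still-unmatched ground truth with IoU >= thr
            overlap = iou(box, g)
            if j not in matched and overlap >= best_iou:
                best_iou, best_j = overlap, j
        tp.append(0.0 if best_j is None else 1.0)
        if best_j is not None:
            matched.add(best_j)
    tp = np.array(tp, dtype=float)
    tp_cum, fp_cum = np.cumsum(tp), np.cumsum(1.0 - tp)
    recall = tp_cum / max(len(gts), 1)
    precision = tp_cum / np.maximum(tp_cum + fp_cum, np.finfo(float).eps)
    # All-point interpolation: make precision non-increasing, then integrate over recall.
    mrec = np.concatenate([[0.0], recall, [1.0]])
    mpre = np.concatenate([[0.0], precision, [0.0]])
    for i in range(len(mpre) - 2, -1, -1):
        mpre[i] = max(mpre[i], mpre[i + 1])
    idx = np.where(mrec[1:] != mrec[:-1])[0]
    return float(np.sum((mrec[idx + 1] - mrec[idx]) * mpre[idx + 1]))


IOU_THRESHOLDS = np.linspace(0.5, 0.95, 10)  # 0.50, 0.55, ..., 0.95


def ap50_and_ap(dets, gts):
    ap50 = average_precision(dets, gts, 0.5)
    ap = float(np.mean([average_precision(dets, gts, t) for t in IOU_THRESHOLDS]))
    return ap50, ap


gt = [(0, 0, 10, 10)]
det = [(0.9, (0, 0, 10, 7.2))]  # IoU = 72 / 100 = 0.72
print(ap50_and_ap(det, gt))  # (1.0, 0.5)
```

A box with IoU 0.72 counts as correct at five of the ten thresholds, so it earns full AP50 but only half of the IoU-averaged AP; the averaged metric therefore rewards tighter localisation.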

Ablation Studies

Ablation experiments examine the contribution of each component of iTFA. Fine-tuning specific layers of the RoI feature extractor and using a class-agnostic regressor both improve performance significantly over the baseline and prior methods.

Comparison with State-of-the-Art

The quantitative results tables and the qualitative examples in Figure 2 show that iTFA is competitive with or better than existing fine-tuning and meta-learning methods across datasets and shot settings. iTFA maintains high base class accuracy while improving novel class AP, without requiring base class exemplars during fine-tuning.

Figure 2: Qualitative results of iTFA on COCO with K=10. The top two rows show success cases and the bottom row shows failure cases. Blue and red boxes indicate base and novel classes, respectively (best viewed zoomed in).

Conclusion

The paper presents iTFA as a simple and robust fine-tuning framework for iFSD that preserves base class accuracy while adapting to novel classes from minimal data. It substantially improves on meta-learning approaches and offers insights into fine-tuning strategies applicable to various object detection architectures. Future work may extend the method to other detection frameworks and deeper networks to further improve adaptability and performance in real-world scenarios.