- The paper introduces a hybrid CNN-Transformer model with belief matching loss that achieves patient-independent, robust seizure detection in EEG.

- It employs a three-level detection pipeline and a novel MOES metric to handle cross-dataset variations and clinically realistic evaluations.

- The model generalizes efficiently across diverse EEG datasets with minimal parameters, outperforming larger neural architectures and commercial tools.

Introduction

The paper "Six-center Assessment of CNN-Transformer with Belief Matching Loss for Patient-independent Seizure Detection in EEG" (2208.00025) presents a methodologically rigorous and systematically validated approach to automated patient-independent seizure detection in EEGs, leveraging a hybrid CNN-transformer model with belief matching (BM) loss, cross-dataset generalization, and a novel minimum overlap evaluation scoring (MOES) metric. The architecture is designed to be robust across variations in patient population, acquisition modality (scalp EEG, iEEG), and electrode configuration, addressing crucial barriers in clinical deployment of automated seizure detection systems.

Seizure Detector Pipeline and Model Architecture

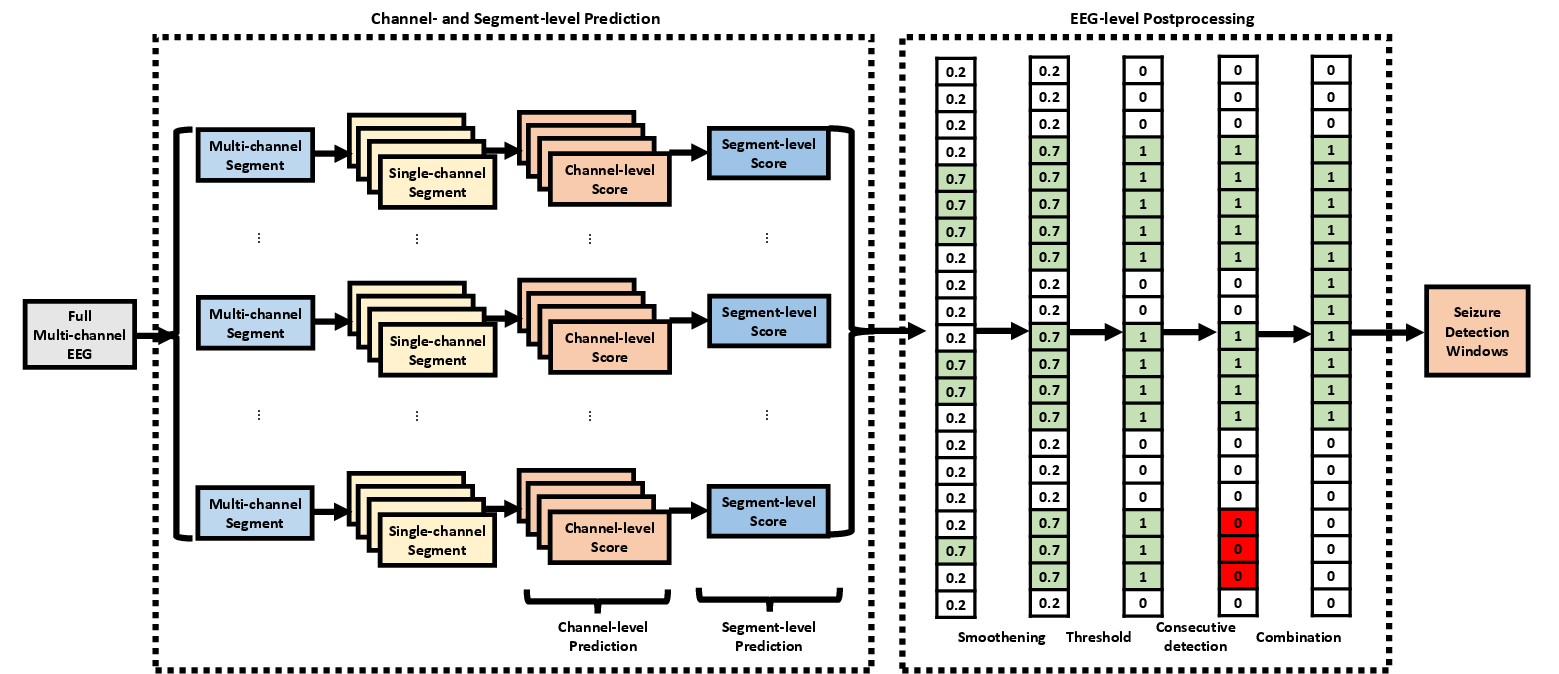

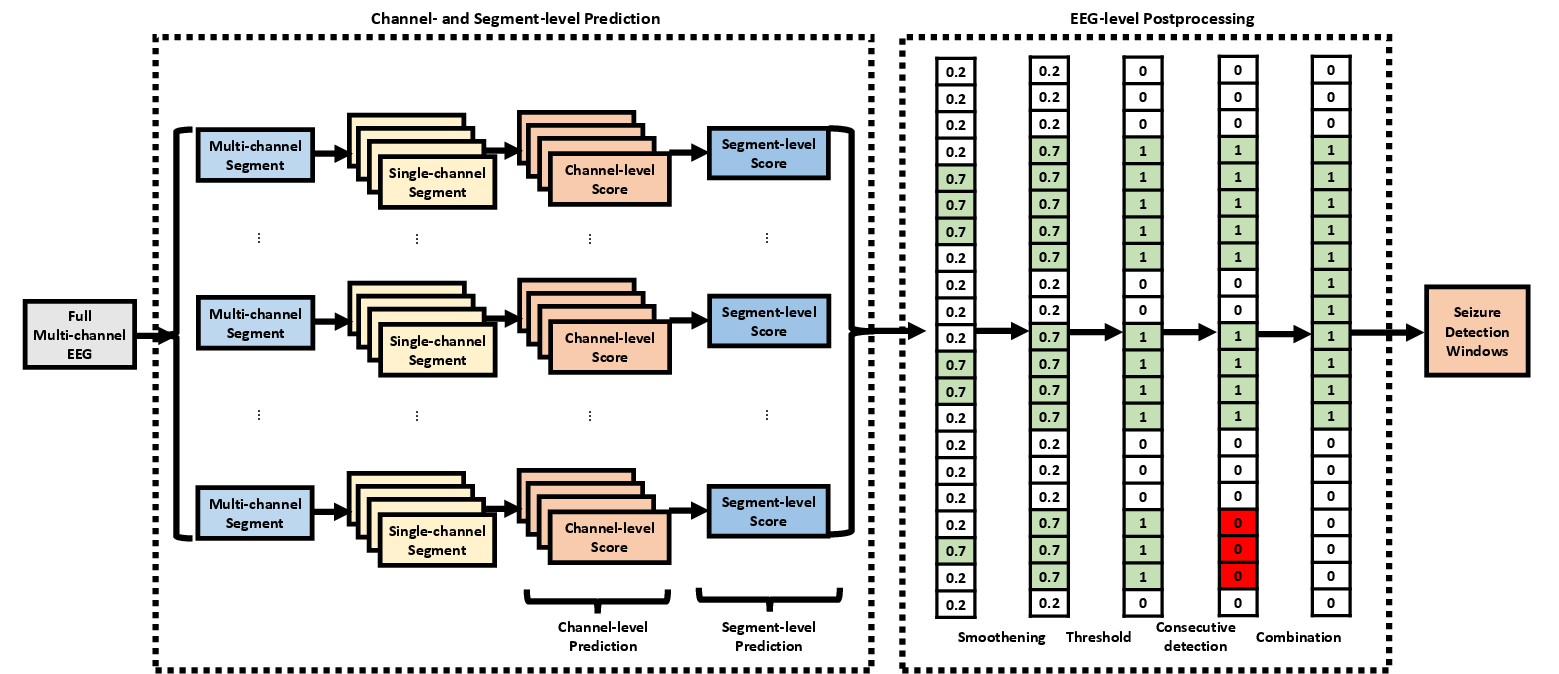

The proposed pipeline operates hierarchically at three levels: channel, segment, and EEG. Detection starts on single-channel EEG windows; the channel-level predictions are then aggregated through statistical feature extraction and regional grouping to form segment-level detections. These outputs are post-processed at the EEG level using filtering, thresholding, chain identification, and window merging to resolve the final seizure onset and offset predictions (Figure 1).

Figure 1: The seizure detector pipeline executes detection at channel, segment, and EEG levels using deep learning, feature aggregation, and multi-stage postprocessing.
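The EEG-level post-processing can be illustrated with a minimal sketch. It assumes that segment-level probabilities arrive on a regular grid, and it stands in for the filtering, thresholding, chain identification, and merging steps with a moving average, a fixed threshold, and a minimum chain length. The function names, the default values, and the `hop_s`/`win_s` parameters are hypothetical and are not taken from the paper.

```python
from typing import List, Tuple


def moving_average(probs: List[float], width: int) -> List[float]:
    """Smooth probabilities with a centered moving average (shorter span at the edges)."""
    half = width // 2
    out = []
    for i in range(len(probs)):
        lo, hi = max(0, i - half), min(len(probs), i + half + 1)
        out.append(sum(probs[lo:hi]) / (hi - lo))
    return out


def detect_events(
    probs: List[float],
    hop_s: float,
    win_s: float,
    threshold: float = 0.5,   # assumed decision threshold
    smooth_width: int = 3,    # assumed filter width, in segments
    min_chain: int = 2,       # assumed minimum number of consecutive positive segments
) -> List[Tuple[float, float]]:
    """Return (onset_s, offset_s) pairs from per-segment seizure probabilities."""
    flags = [p >= threshold for p in moving_average(probs, smooth_width)]
    events, start = [], None
    # A trailing False closes any chain that runs to the end of the recording.
    for i, flag in enumerate(flags + [False]):
        if flag and start is None:
            start = i
        elif not flag and start is not None:
            if i - start >= min_chain:
                # Merge the chain into one event spanning its first to last window.
                events.append((start * hop_s, (i - 1) * hop_s + win_s))
            start = None
    return events
```

For example, `detect_events([0.1, 0.1, 0.9, 0.9, 0.9, 0.1, 0.1], hop_s=5.0, win_s=20.0)` merges the three consecutive positive segments into a single event, while an isolated one-segment spike is smoothed away before thresholding.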

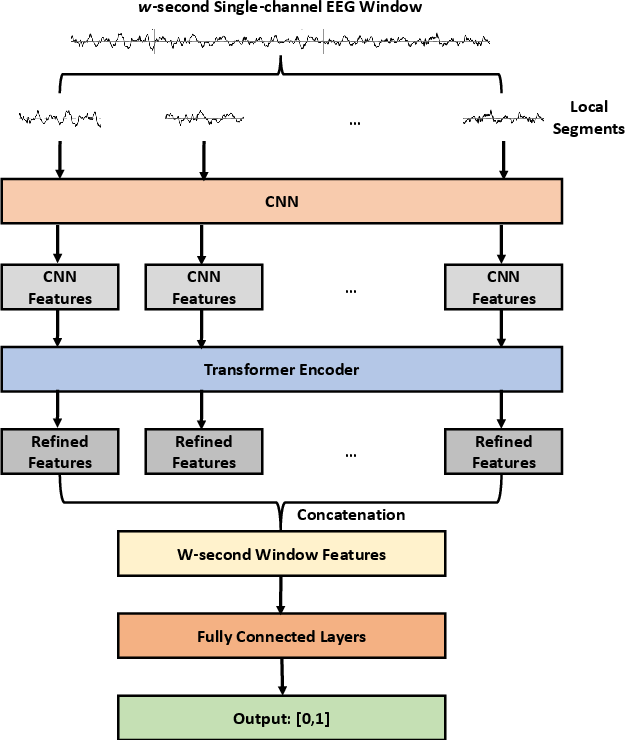

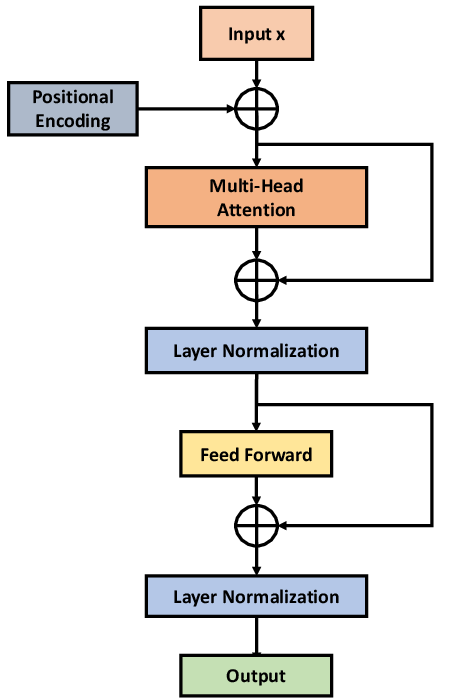

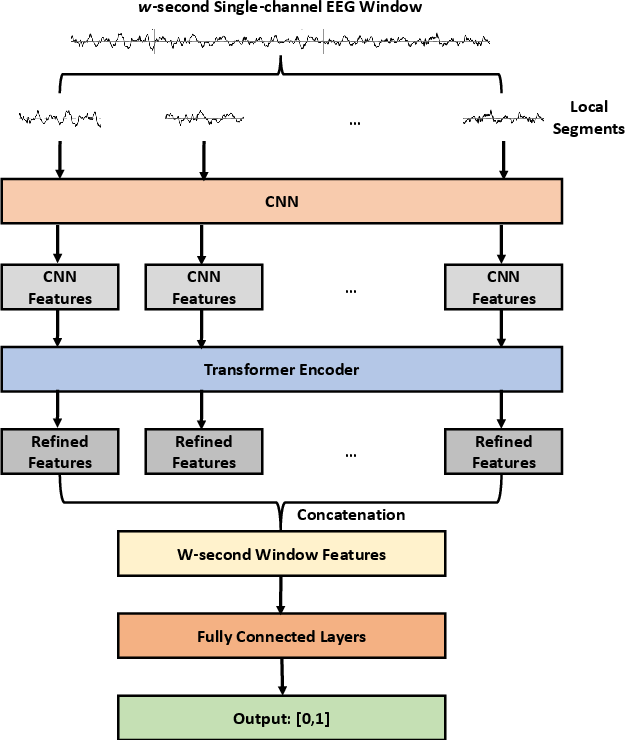

At its core, the CNN-TRF-BM model uses convolutional layers for local feature extraction, followed by a transformer encoder that models long-range sequence dependencies often missed by pure CNNs. The channel-level classifier is trained with a belief matching loss, which aligns the predicted and target distributions and yields better-calibrated uncertainty estimates than a conventional softmax-based loss, a property that matters for high-stakes decision making. The transformer is attached after the last convolutional layer and takes as input 1 s-interval features from the W-second window with 25% overlap (Figure 2).

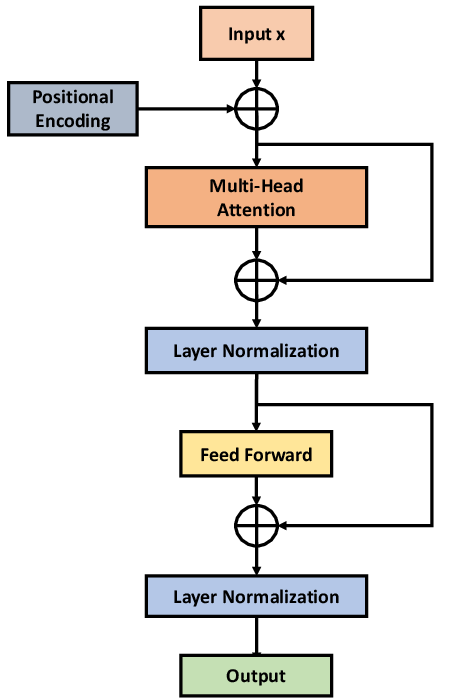

Figure 2: (a) CNN-TRF-BM architecture with convolutional frontend and transformer encoder; (b) transformer encoder details.
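For orientation, the belief matching idea can be written compactly. The following is a sketch of one common formulation of the loss, not necessarily the exact variant used in the paper; the symbols $f$, $\alpha$, $\beta$, and $\lambda$ are notation introduced here. The network output $f(x)$ is read as the log-concentrations of a Dirichlet belief over the class probabilities $z$, which is matched against a Dirichlet prior:

$$
\alpha = \exp\big(f(x)\big), \qquad q(z \mid x) = \mathrm{Dir}(z;\, \alpha), \qquad p(z) = \mathrm{Dir}(z;\, \beta)
$$

$$
\mathcal{L}(x, y) = -\,\mathbb{E}_{q(z \mid x)}\big[\log z_y\big] + \lambda\, \mathrm{KL}\big(q(z \mid x) \,\|\, p(z)\big), \qquad \mathbb{E}_{q(z \mid x)}\big[\log z_y\big] = \psi(\alpha_y) - \psi\Big(\sum_k \alpha_k\Big)
$$

Here $\psi$ is the digamma function and $y$ is the target class. Under this reading, the model outputs a distribution over class probabilities rather than a single point estimate, which is where the calibrated uncertainty comes from.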

Following channel-wise probability computation, five regional statistical summaries (frontal, central, occipital, parietal, and global) are extracted per segment. These 40-dimensional features are input to an XGBoost segment-level detector. The architecture is dataset-independent, able to function across arbitrary channel counts, and supports both scalp EEG (converted to bipolar montage) and iEEG (processed in native monopolar montage).
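The regional aggregation can be sketched as follows. The summary states only that five regions yield a 40-dimensional feature vector, which is consistent with eight statistics per region; the eight statistics chosen here, the channel-to-region mapping, and the zero fill for empty regions are assumptions made for illustration.

```python
import statistics
from typing import Dict, List

REGIONS = ("frontal", "central", "occipital", "parietal", "global")


def summarize(values: List[float]) -> List[float]:
    """Eight summary statistics of channel-level probabilities (hypothetical choice)."""
    if not values:
        return [0.0] * 8  # assumed fill when a region has no channels in this montage
    if len(values) >= 2:
        q1, _, q3 = statistics.quantiles(values, n=4)
    else:
        q1 = q3 = values[0]
    return [
        statistics.fmean(values),
        statistics.median(values),
        statistics.pstdev(values),
        min(values),
        max(values),
        max(values) - min(values),
        q1,
        q3,
    ]


def segment_features(channel_probs: Dict[str, float],
                     region_of: Dict[str, str]) -> List[float]:
    """Concatenate per-region summaries into one 5 x 8 = 40-dimensional vector."""
    features: List[float] = []
    for region in REGIONS:
        if region == "global":
            values = list(channel_probs.values())  # all channels, regardless of region
        else:
            values = [p for ch, p in channel_probs.items() if region_of.get(ch) == region]
        features.extend(summarize(values))
    return features
```

Because every region is reduced to a fixed number of statistics, the output length does not depend on how many channels a recording has. That is one way to obtain the channel-count independence described above before handing the vector to a segment-level classifier such as XGBoost.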

Evaluation Metric: Minimum Overlap Evaluation Scoring (MOES)

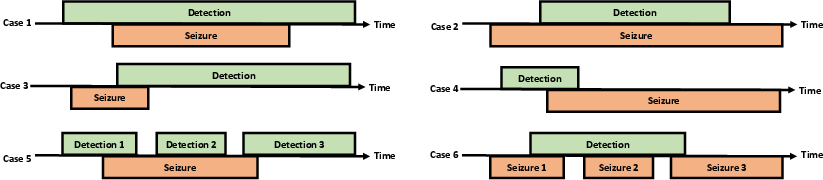

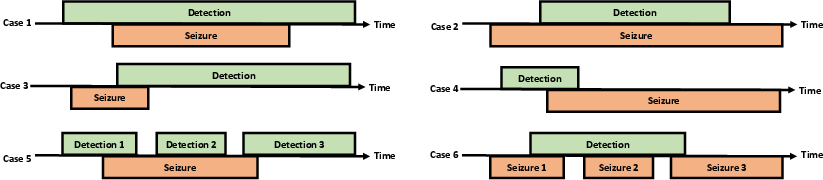

A substantial limitation of prior work is the lack of realistic evaluation metrics. Existing metrics such as any-overlap (OVLP), time-aligned event scoring (TAES), and increased margin scoring (IMS) are either overly permissive or overly stringent, so they can inflate or understate performance in ways that are clinically irrelevant. The MOES criterion accepts a detection only if both the detection overlap (DOL) and the seizure overlap (SOL) exceed 30% and the intersection lasts at least 10 seconds, aligning with real-world clinical requirements (Figure 3).

Figure 3: Illustration of canonical detection/annotation overlap scenarios and how MOES adjudicates cases.
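The MOES acceptance rule described above can be sketched in a few lines. The sketch assumes that DOL is the intersection divided by the detection duration and that SOL is the intersection divided by the annotated seizure duration; these definitions, the function name, and the interval representation are assumptions, while the 30% and 10-second thresholds come from the text.

```python
from typing import Tuple

Interval = Tuple[float, float]  # (onset_s, offset_s)


def moes_accepts(detection: Interval, seizure: Interval,
                 min_frac: float = 0.30, min_overlap_s: float = 10.0) -> bool:
    """Return True if the detection counts as a hit for the annotated seizure."""
    inter = min(detection[1], seizure[1]) - max(detection[0], seizure[0])
    if inter <= 0:
        return False  # no temporal overlap at all
    dol = inter / (detection[1] - detection[0])  # assumed: share of the detection that overlaps
    sol = inter / (seizure[1] - seizure[0])      # assumed: share of the seizure that is covered
    return dol > min_frac and sol > min_frac and inter >= min_overlap_s
```

Under these assumptions, a detection spanning 0 to 1000 s around a seizure at 100 to 160 s is rejected because only 6% of the detection overlaps the seizure, whereas an any-overlap rule would count it as a hit.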

Experimental Setup

The methodology is validated via training on the Temple University Hospital Seizure (TUH-SZ) dataset, with blinded performance evaluation on five independent datasets: CHB-MIT (pediatric scalp EEG), HUH (neonatal scalp EEG), SWEC-ETHZ (adult iEEG), IEEGP (human/dog iEEG), and EIM (adult iEEG). All signals are harmonized in sampling rate and montage preprocessing to eliminate confounds. Comprehensive comparisons are made to alternative losses (softmax vs. BM), architectures (with/without transformer), different window lengths, and competing 2D CNN designs.

Results

At the channel level, CNN-TRF-BM consistently outperforms CNN-BM and CNN-SM, with peak balanced accuracy (BAC) on TUH-SZ at W = 20 s (BAC = 0.858, SEN = 0.828, SPE = 0.889). Similar trends are observed for the segment-level detectors, albeit with higher expected calibration error (ECE), since the segment classifier is not trained explicitly for probability calibration.
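The two metrics named above can be made concrete with a short sketch. BAC is the mean of sensitivity and specificity, and ECE is computed here in its standard binned form for a binary classifier. The bin count and the function names are assumptions; the paper's exact ECE settings are not given in this summary.

```python
from typing import List


def balanced_accuracy(sen: float, spe: float) -> float:
    """Mean of sensitivity and specificity."""
    return (sen + spe) / 2


def expected_calibration_error(probs: List[float], labels: List[int],
                               n_bins: int = 10) -> float:
    """Binned ECE for binary predictions, where probs are P(seizure)."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        pred = 1 if p >= 0.5 else 0
        conf = max(p, 1 - p)  # confidence in the predicted class
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, 1.0 if pred == y else 0.0))
    total = len(probs)
    ece = 0.0
    for members in bins:
        if members:
            avg_conf = sum(c for c, _ in members) / len(members)
            accuracy = sum(a for _, a in members) / len(members)
            # Weight each bin's confidence-accuracy gap by its share of samples.
            ece += len(members) / total * abs(accuracy - avg_conf)
    return ece
```

With the reported values, `balanced_accuracy(0.828, 0.889)` gives 0.8585, which agrees with the reported BAC of 0.858 up to rounding of the inputs.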

EEG-level Seizure Detection

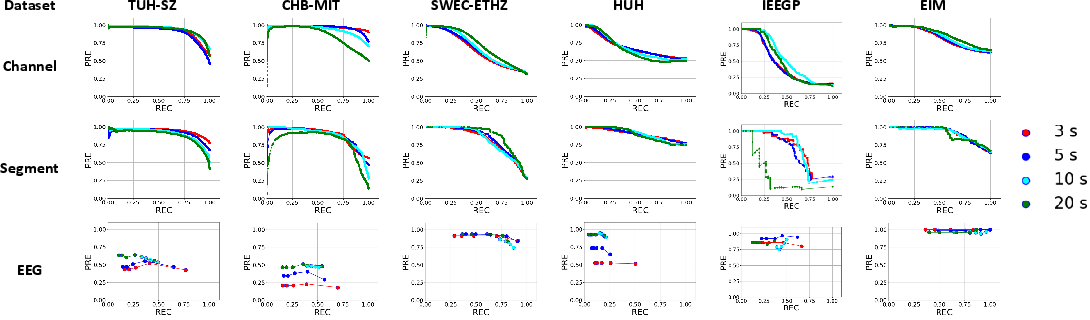

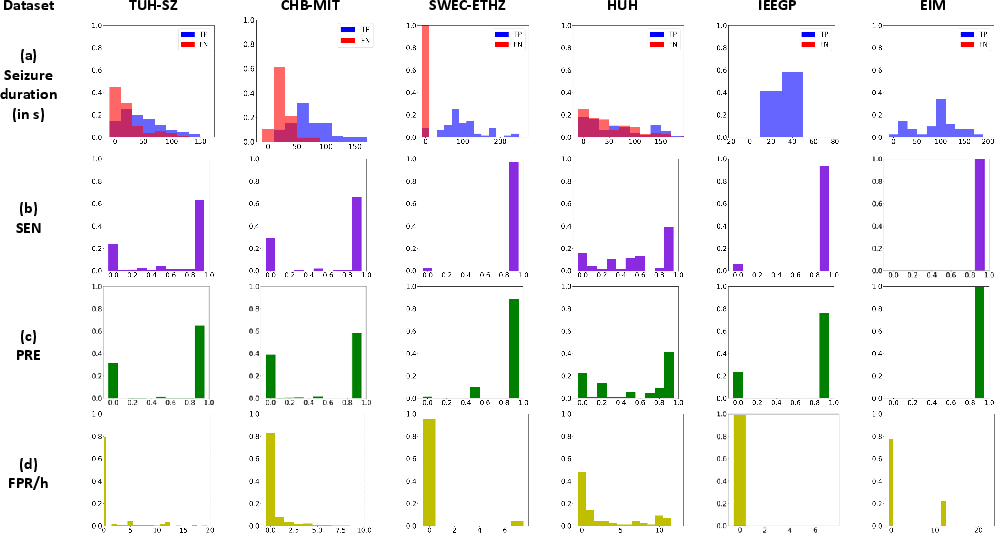

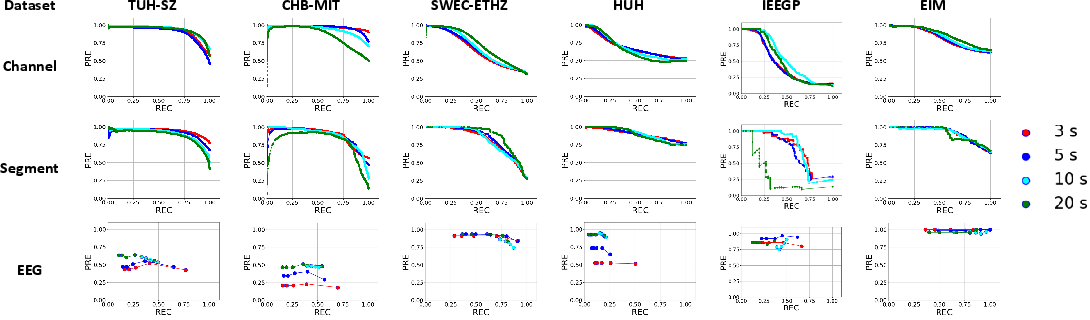

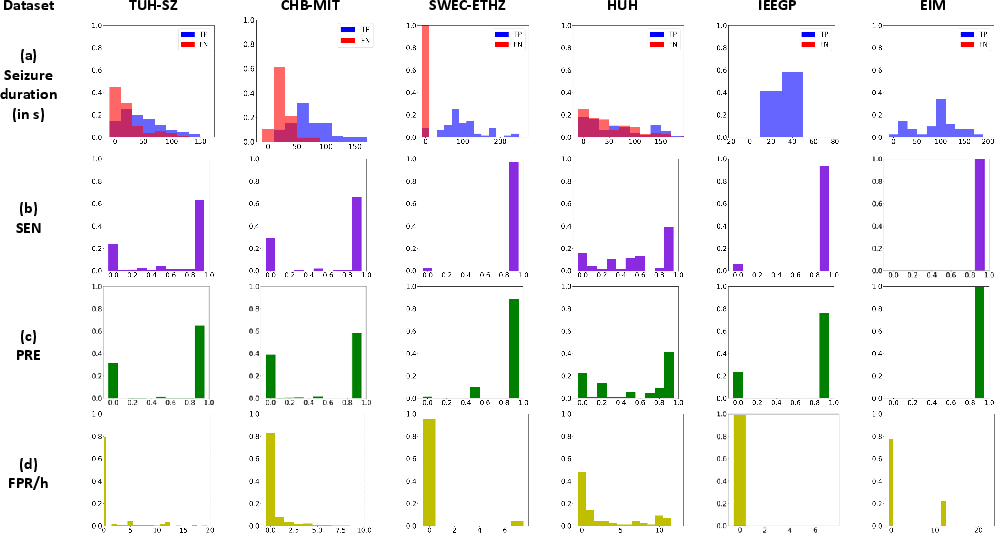

Using MOES as the reference, EEG-level sensitivity (SEN) ranges from 0.617 to 1.00, precision (PRE) from 0.534 to 1.00, and median false positive rates (mFPR/h) near zero for scalp and iEEG (except for HUH/IEEGP, where performance is depressed due to significant domain shift and lack of channel-level seizure annotations). Figure 4 and Figure 5 summarize PR curves and the distribution of event- and subject-level detection statistics, demonstrating high system precision and minimal false alarms on most EEGs.

Figure 4: Precision-recall profiles at each detection scale across datasets using the proposed CNN-TRF-BM discriminator.

Figure 5: EEG-level detection with the CNN-TRF-BM system: (a) distribution of true/false positives by event duration, (b) histogram of sensitivity, (c) histogram of precision, (d) distribution of false positive rates per hour.
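The EEG-level figures of merit reported above follow from event counts per recording. The sketch below is a simplified illustration: it assumes one true positive per detected seizure and a positive recording duration, and the function names and dictionary keys are hypothetical.

```python
import statistics
from typing import Dict, List


def event_metrics(tp: int, fn: int, fp: int, hours: float) -> Dict[str, float]:
    """Sensitivity, precision, and false positives per hour for one recording."""
    sen = tp / (tp + fn) if tp + fn else float("nan")  # undefined without seizures
    pre = tp / (tp + fp) if tp + fp else float("nan")  # undefined without detections
    return {"SEN": sen, "PRE": pre, "FPR/h": fp / hours}


def median_fpr_per_hour(recordings: List[Dict[str, float]]) -> float:
    """Median false positive rate per hour across recordings."""
    return statistics.median(r["FPR/h"] for r in recordings)
```

The median explains how a system can report a median FPR/h of zero while still producing false alarms: if most recordings have no false positives, a few noisy recordings do not move the median.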

System performance for long-duration (> 10 s) seizures is consistently higher than for short events. This highlights a key trade-off in the choice of analysis window: shorter windows improve short-seizure detection at the expense of a higher FPR/h.

Complexity and Generalization

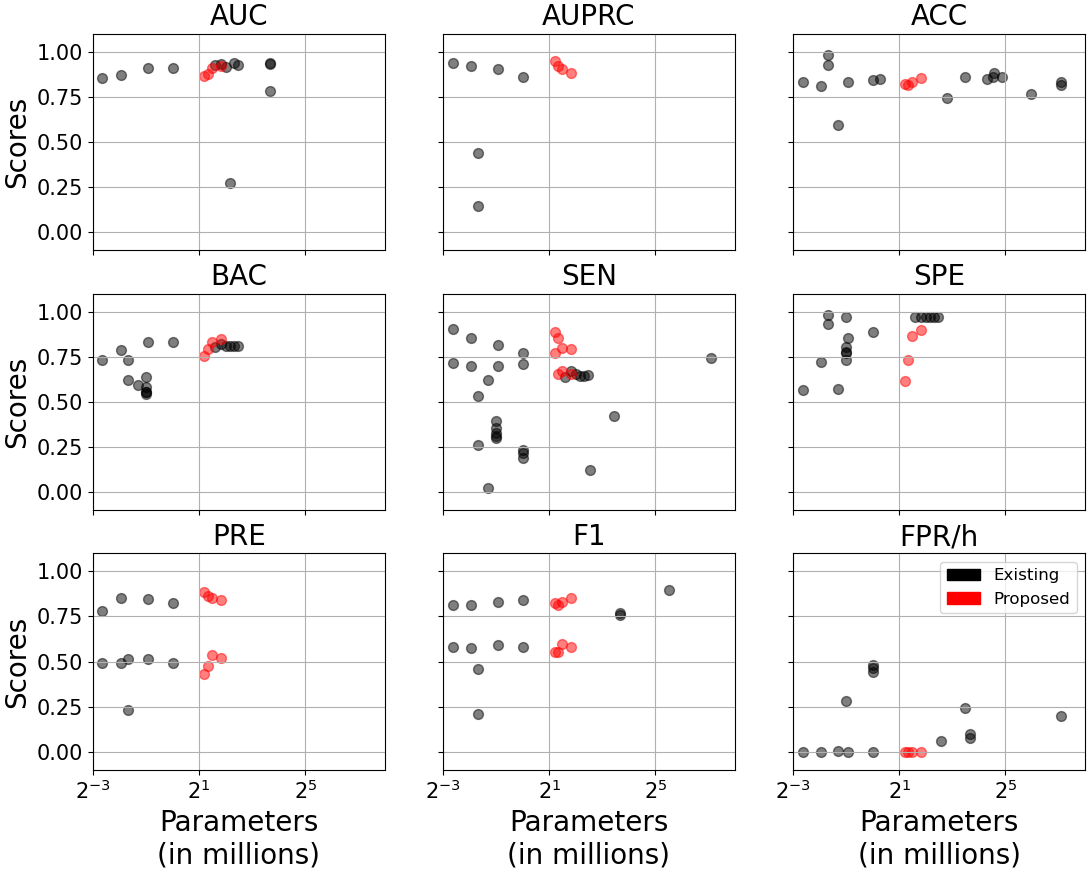

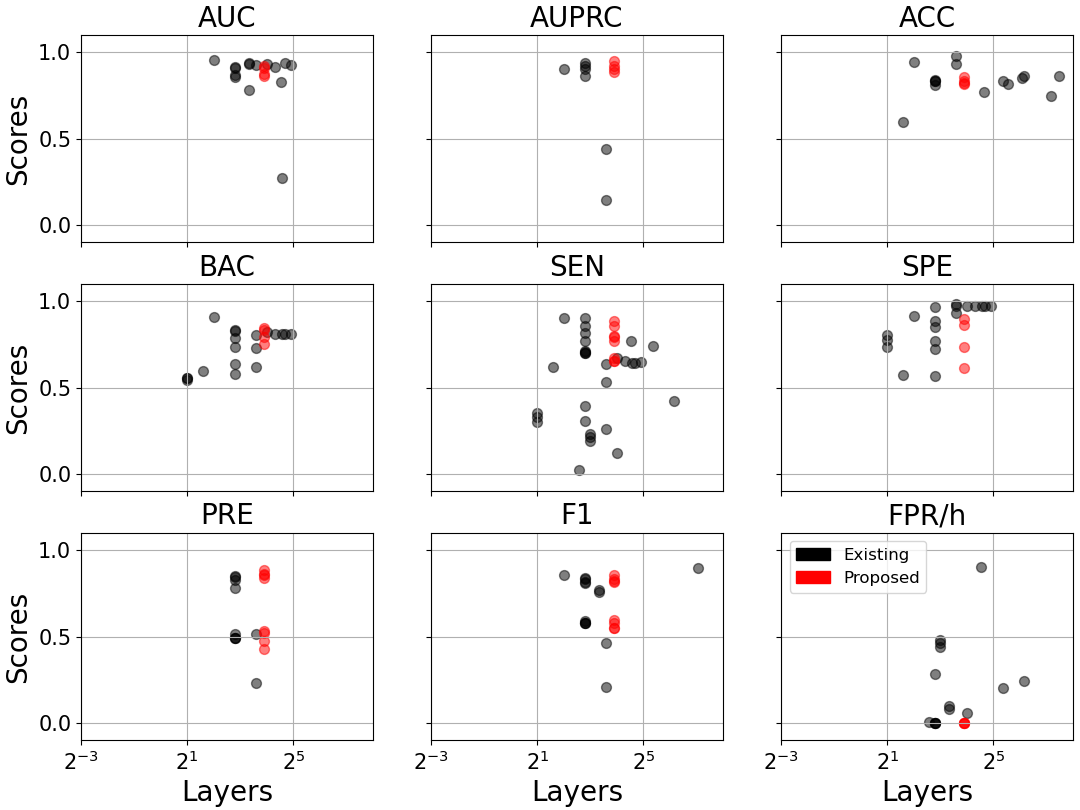

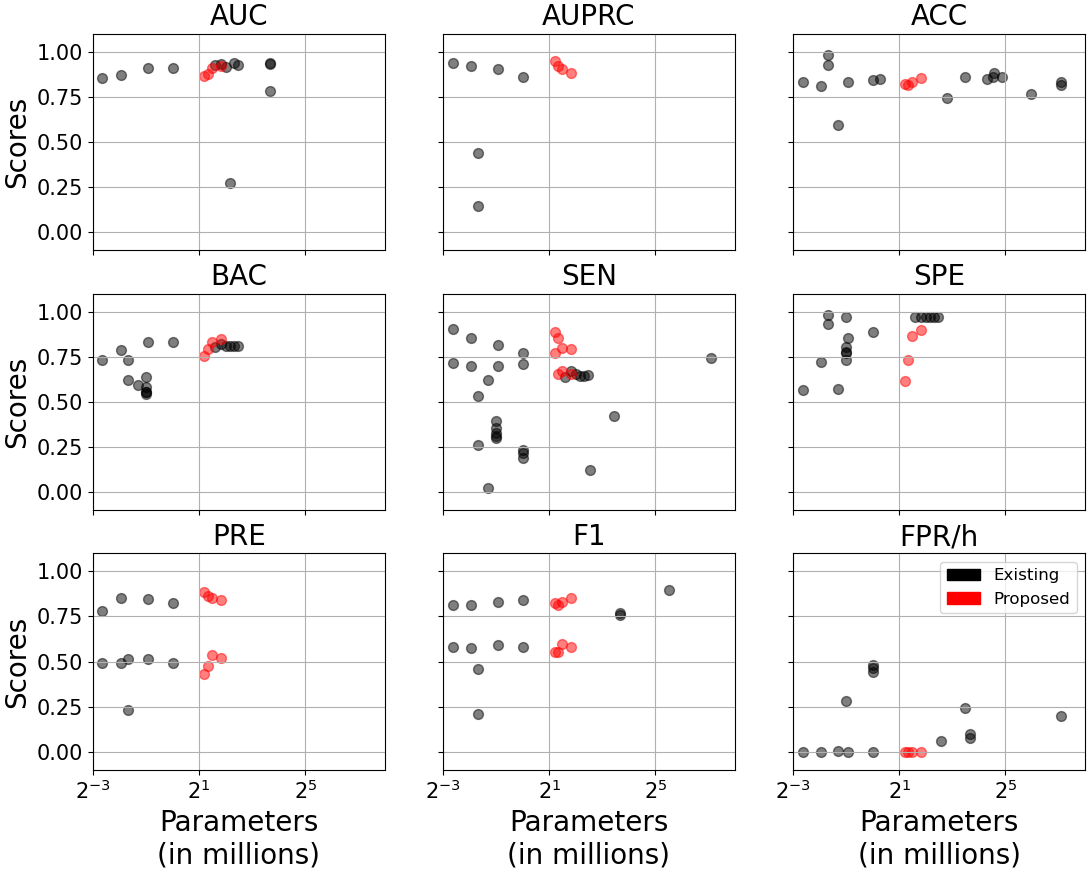

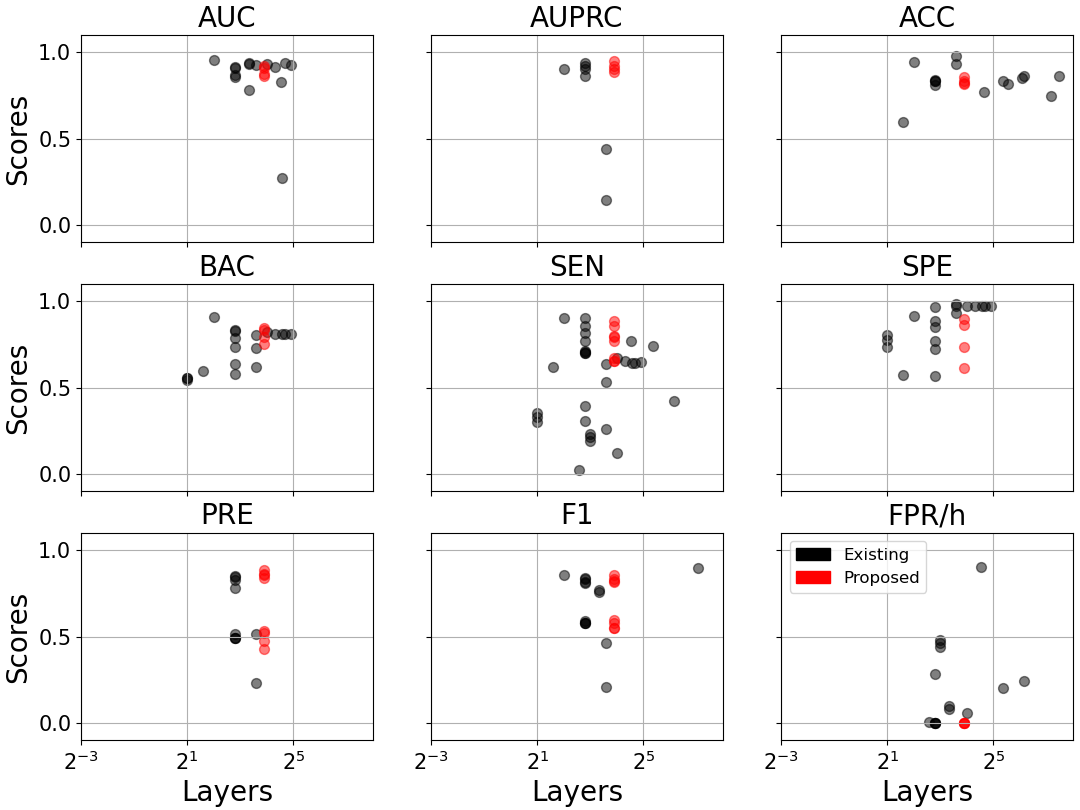

An ablation study on model complexity shows no performance gains from deeper or wider architectures: CNN-TRF-BM uses only 2.3–3.5M parameters and 7–15 layers, yet outperforms prior works with up to 138M parameters and 700+ layers (Figure 6).

Figure 6: Model performance versus parameter count (a) and number of network layers (b) across literature and this study. The CNN-TRF-BM achieves high sensitivity and low FPR/h at minimal complexity.

Detectors trained solely on TUH-SZ generalize robustly to CHB-MIT and SWEC-ETHZ: systems trained on large, heterogeneous datasets yield better cross-domain sensitivity and precision than those trained on limited local data. In direct comparison, 2D-CNN segment-level detectors generalize less well across variable montage and dataset conditions and suffer from severe overfitting or channel-count inflexibility.

Implications and Future Directions

This research advances patient-independent, dataset-agnostic seizure detection pipelines by merging local and sequential feature modeling using transformers with principled distributional calibration (BM loss). The ability to function without retraining and across arbitrary montages, combined with MOES for clinically meaningful evaluation, bridges the key translational gap toward robust clinical application.

The headline numerical results are a median FPR/h of zero and sensitivity up to 1.0 on adult iEEG datasets. With these, the CNN-TRF-BM model outperforms both contemporary deep neural networks with much larger parameter budgets and commercial solutions (e.g., Persyst, Encevis, BESA) evaluated with realistic metrics.

Theoretically, the calibrated uncertainty provided by the BM loss improves deployment trustworthiness, while architectural simplicity reduces the risk of overfitting. Practically, the detector reaches clinically actionable throughput, processing 30-minute traces in under 15 seconds (more than 120 times faster than real time), which facilitates routine EEG interpretation at scale.

Limitations

The main performance limitation appears on neonatal and highly heterogeneous (animal) datasets and is attributable to the adult-centric training set. Short seizures remain harder to detect, which requires managing the trade-off between window length and FPR. The system’s reliance on deep learning also limits the interpretability of its decisions.

Future Prospects

Potential directions include the introduction of frequency-band feature extraction, explicit artifact rejection modules, hybrid supervised and weak supervision frameworks, and exploration of advanced classifiers (finite element machines, dynamic ensemble algorithms). Addressing cross-age/animal domain shift via meta-learning or multi-task adaptation may further improve universality.

Conclusion

This paper provides a computationally efficient, flexible, and robust EEG seizure detection framework that meets the clinical requirements for sensitivity, precision, and false alarm control, validated extensively across multiple modalities, centers, and montages. Its combination of deep sequence modeling with calibrated uncertainty and dataset/montage agnosticism represents a notable advance in the deployment of patient-independent EEG biomarker systems (2208.00025).