- The paper proposes a multi-domain deep learning framework that integrates virtual and real IMU data for enhanced human activity recognition.

- It employs hybrid 2D and 1D CNNs combined with uncertainty-aware feature fusion to extract and merge spatio-temporal features.

- The framework utilizes transfer learning from a large synthetic dataset to significantly improve accuracy and convergence on real-world tasks.

MARS: Mixed Virtual and Real Wearable Sensors for Human Activity Recognition with Multi-Domain Deep Learning Model

This paper addresses the challenges of human activity recognition (HAR) using wearable inertial measurement units (IMUs) by proposing a novel multi-domain deep learning framework. The method leverages a large database of virtual IMUs to overcome limitations in the quantity and quality of real-world data. The framework integrates hybrid convolutional neural networks (CNNs), uncertainty-aware feature fusion, and transfer learning to achieve robust and efficient HAR.

Data Synthesis and Preparation

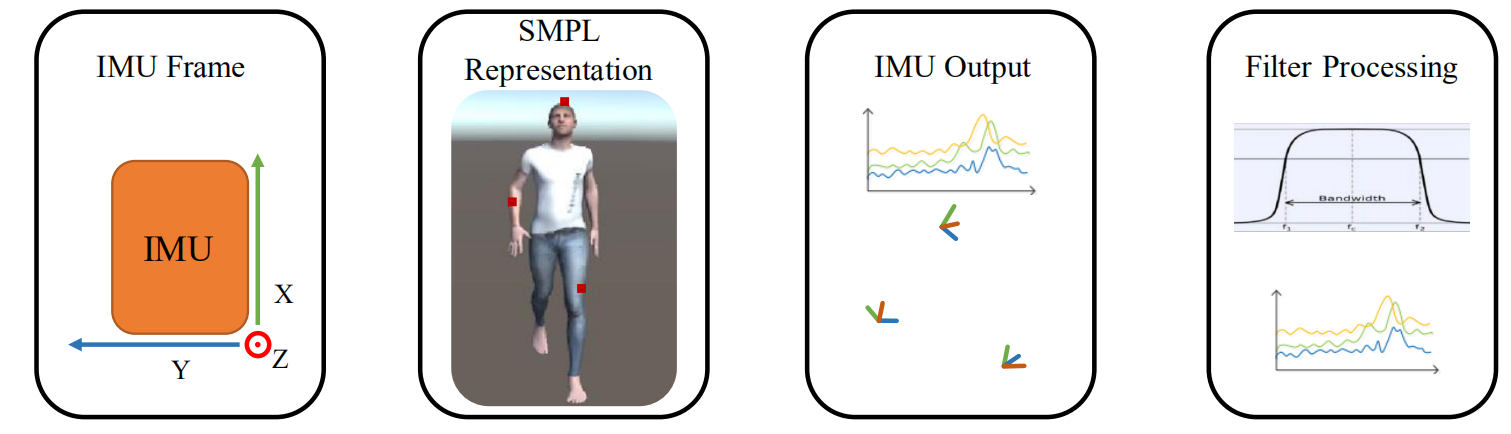

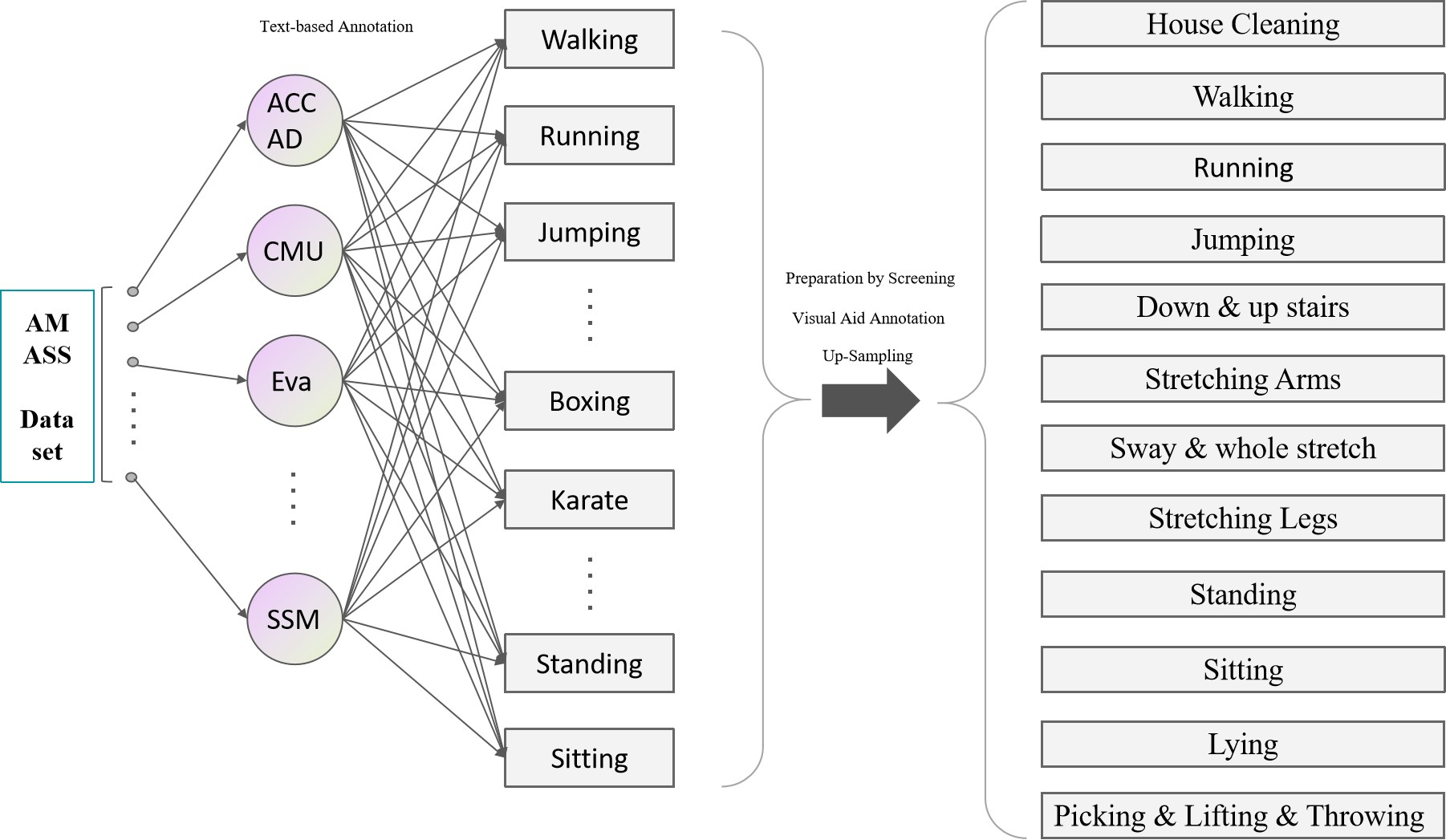

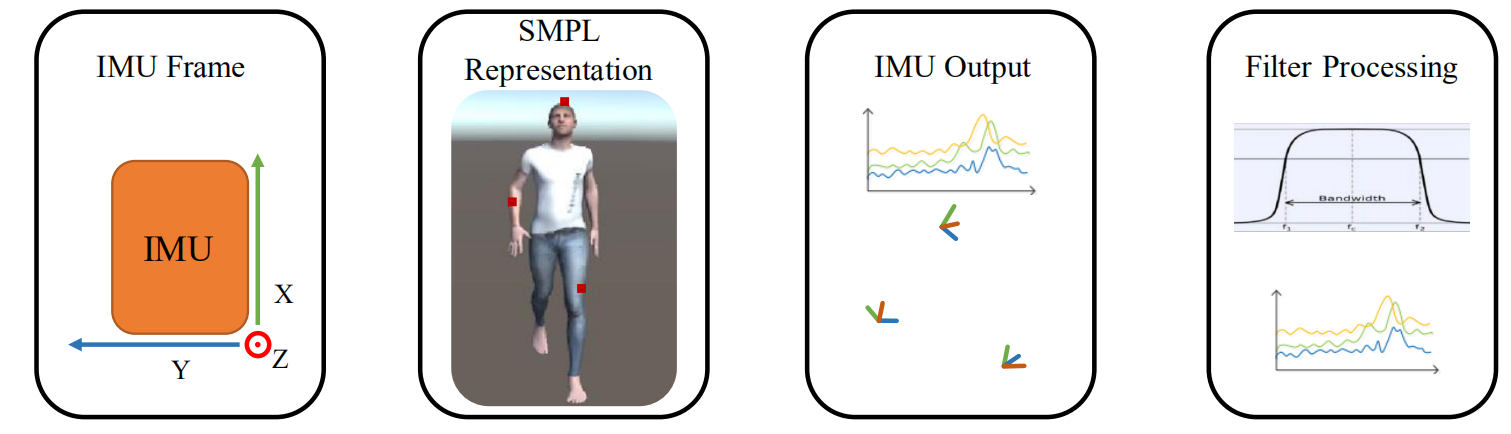

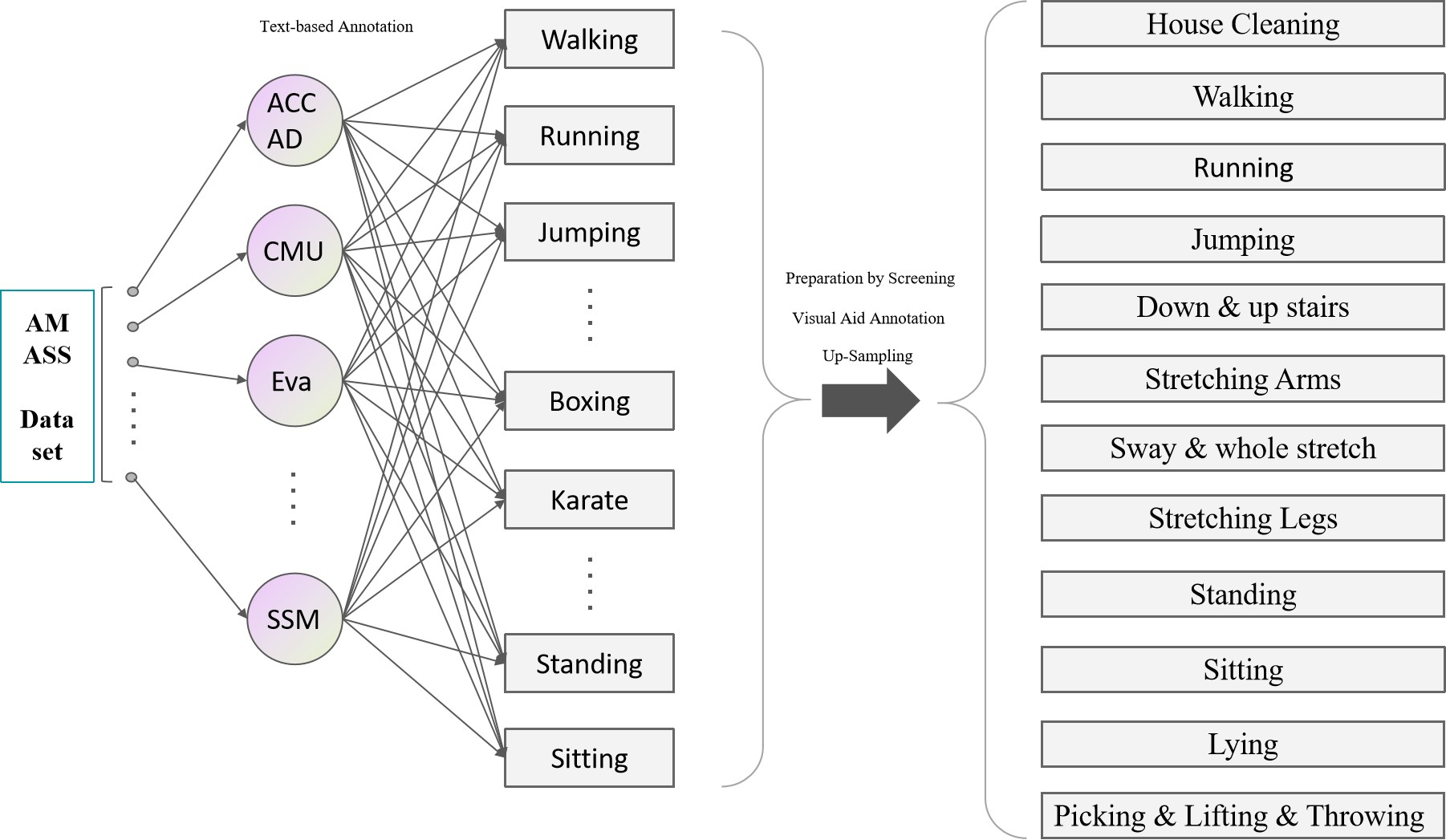

The authors address data scarcity by constructing an extensive database of virtual IMU data based on the Archive of Motion Capture as Surface Shapes (AMASS) dataset (Mahmood et al., 2019) and the Deep Inertial Poser (DIP) dataset. The AMASS data, which covers a diverse range of human poses, is converted into the SMPL body model, and virtual IMU data is generated by simulating sensor placement on the model's surface. Forward kinematics is then used to calculate the orientation and acceleration of each virtual IMU. Data labeling proceeds in three steps: text description division, common motion category screening, and visual auxiliary annotation. The result is a dataset covering 12 HAR categories.
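The acceleration part of this synthesis can be illustrated with a central finite difference over sensor positions. This is a minimal sketch, not the authors' implementation: it assumes the per-frame positions of one virtual sensor have already been obtained from forward kinematics, that frames are uniformly sampled, and that gravity and the sensor's local frame are ignored. The function name `virtual_acceleration` and the example trajectory are hypothetical.

```python
def virtual_acceleration(positions, dt):
    """Approximate acceleration from a sequence of 3D sensor positions.

    positions: list of (x, y, z) tuples for one virtual sensor, one per frame.
    dt: time between consecutive frames, in seconds (assumed constant).
    Returns one (ax, ay, az) tuple per interior frame, so len(positions) - 2 items.
    """
    if len(positions) < 3:
        raise ValueError("need at least three frames")
    accels = []
    for prev, cur, nxt in zip(positions, positions[1:], positions[2:]):
        # Central second difference: (p[t-1] + p[t+1] - 2 * p[t]) / dt^2
        accels.append(tuple((p + n - 2.0 * c) / (dt * dt)
                            for p, c, n in zip(prev, cur, nxt)))
    return accels


# Hypothetical trajectory: constant 2 m/s^2 acceleration along x, sampled at 10 Hz.
dt = 0.1
frames = [(0.5 * 2.0 * (k * dt) ** 2, 0.0, 0.0) for k in range(5)]
acc = virtual_acceleration(frames, dt)
```

A real pipeline would additionally rotate the result into the sensor's local frame and account for gravity; those steps are omitted here because the summary does not describe them.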

Figure 1: IMU output and SMPL body model.

Figure 2: Data labeling flowchart.

Figure 3: SMPL models with Unity visual action.

Multi-Domain Deep Learning Framework

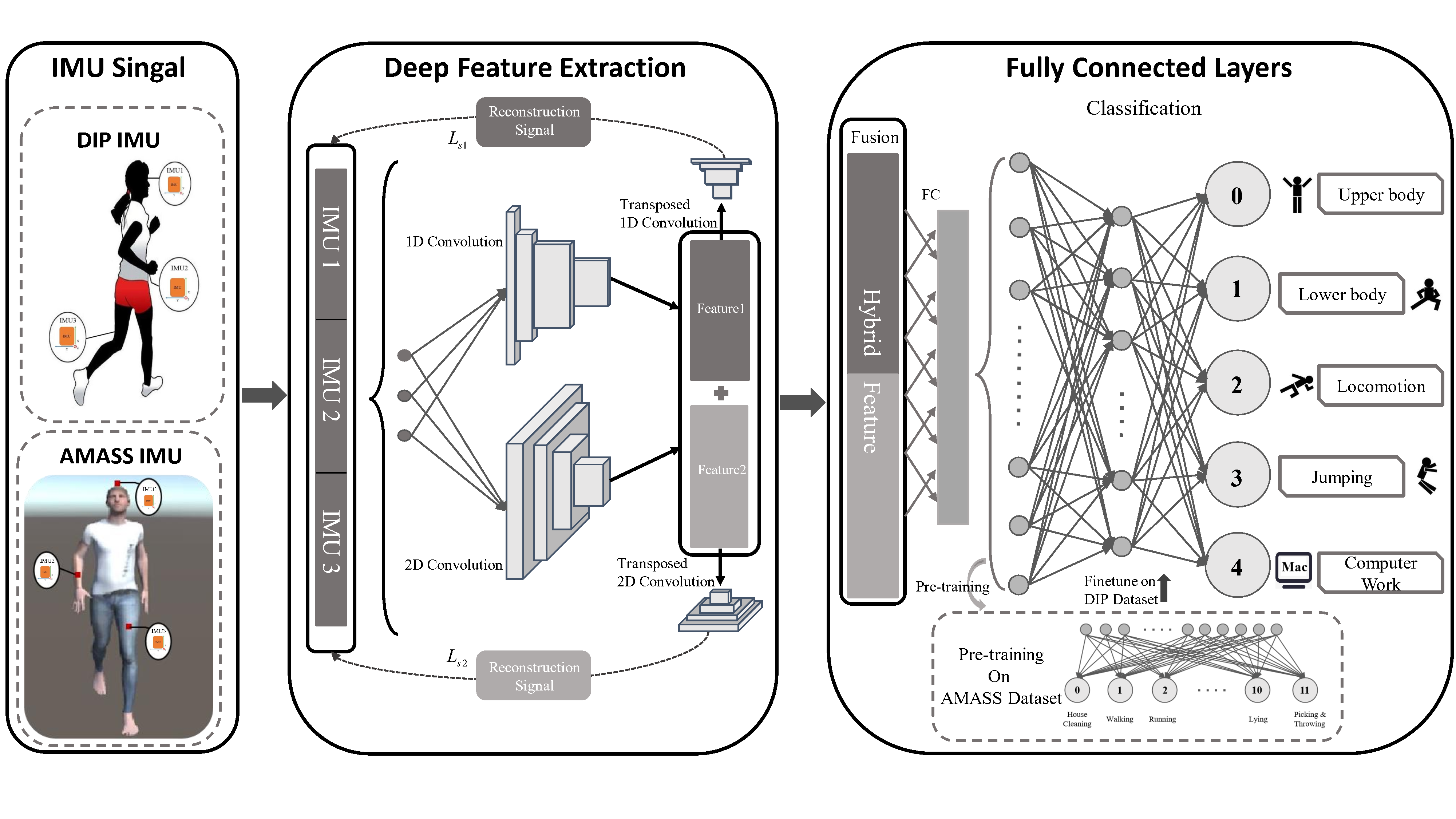

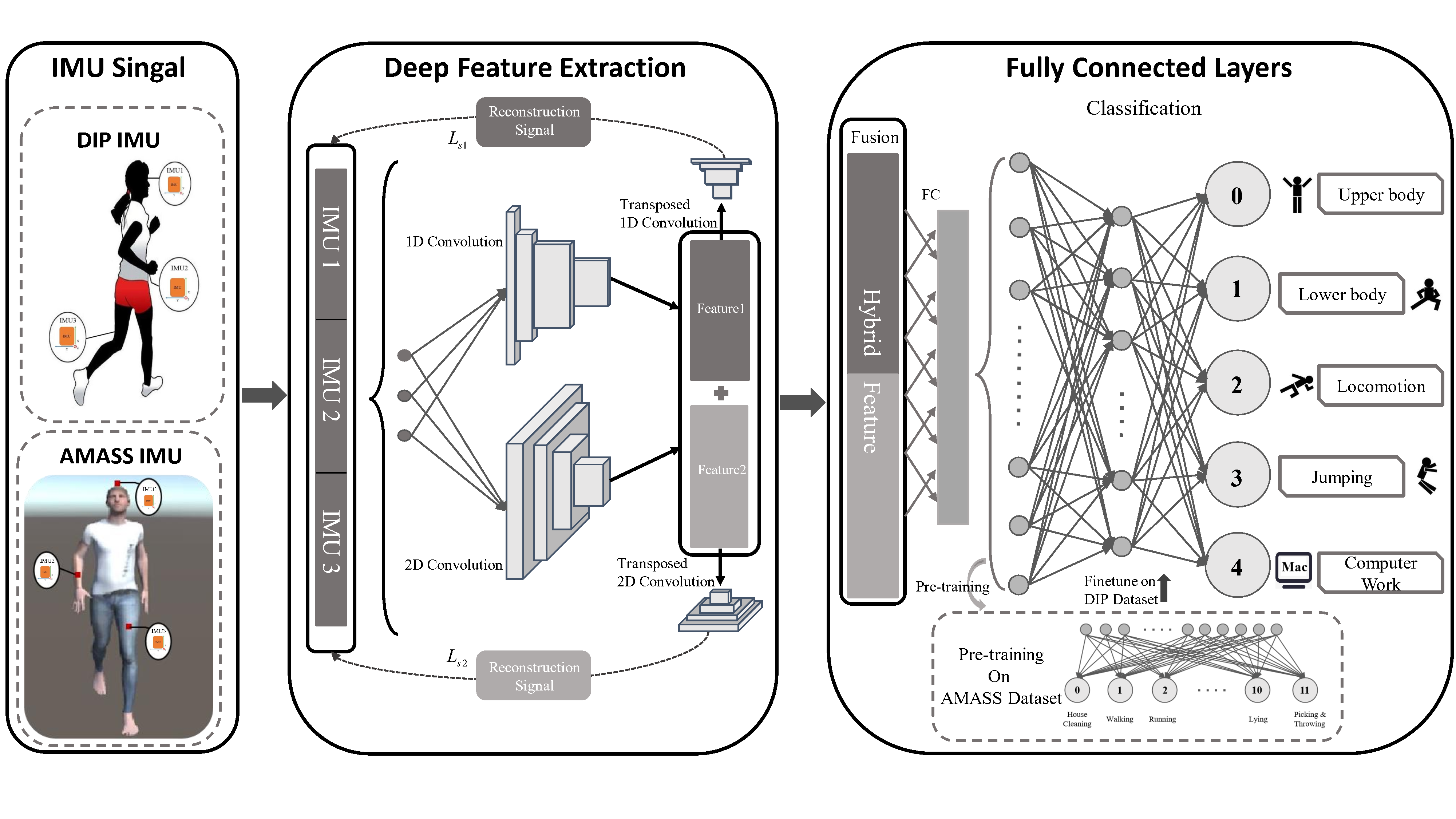

The proposed framework consists of three key modules: feature extraction, feature fusion, and cross-domain knowledge transfer. The feature extraction module employs hybrid CNNs (both 2D and 1D) to learn spatio-temporal features from the IMU data. The 2D-CNNs capture connectivity patterns among multi-modal signals, while the 1D-CNNs identify characteristics in individual channels.
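The difference between the two branches can be made concrete with plain convolution loops. The sketch below is only an illustration of the idea, not the paper's architecture: the window size, kernel values, and function names are assumptions, and a real model would use learned kernels in a deep learning library.

```python
def conv1d_valid(signal, kernel):
    """Slide a 1D kernel along one channel (cross-correlation, no padding)."""
    k = len(kernel)
    return [sum(signal[t + i] * kernel[i] for i in range(k))
            for t in range(len(signal) - k + 1)]


def conv2d_valid(grid, kernel):
    """Slide a 2D kernel over a channels x time grid (no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    rows, cols = len(grid), len(grid[0])
    return [[sum(grid[r + i][c + j] * kernel[i][j]
                 for i in range(kh) for j in range(kw))
             for c in range(cols - kw + 1)]
            for r in range(rows - kh + 1)]


# Hypothetical input window: 3 signal channels x 5 time steps.
window = [
    [1.0, 2.0, 3.0, 4.0, 5.0],
    [0.0, 1.0, 0.0, 1.0, 0.0],
    [2.0, 2.0, 2.0, 2.0, 2.0],
]

# 1D branch: each channel is filtered on its own (here a temporal difference).
per_channel = [conv1d_valid(channel, [-1.0, 1.0]) for channel in window]

# 2D branch: one 2x2 kernel spans two adjacent channels and two time steps,
# so its output depends on how neighbouring channels co-vary.
cross_channel = conv2d_valid(window, [[1.0, 0.0], [0.0, 1.0]])
```

The 1D outputs keep one row per input channel, while each 2D output row mixes two channels, which is the sense in which the 2D branch can capture relationships among signals.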

Figure 4: Proposed overview.

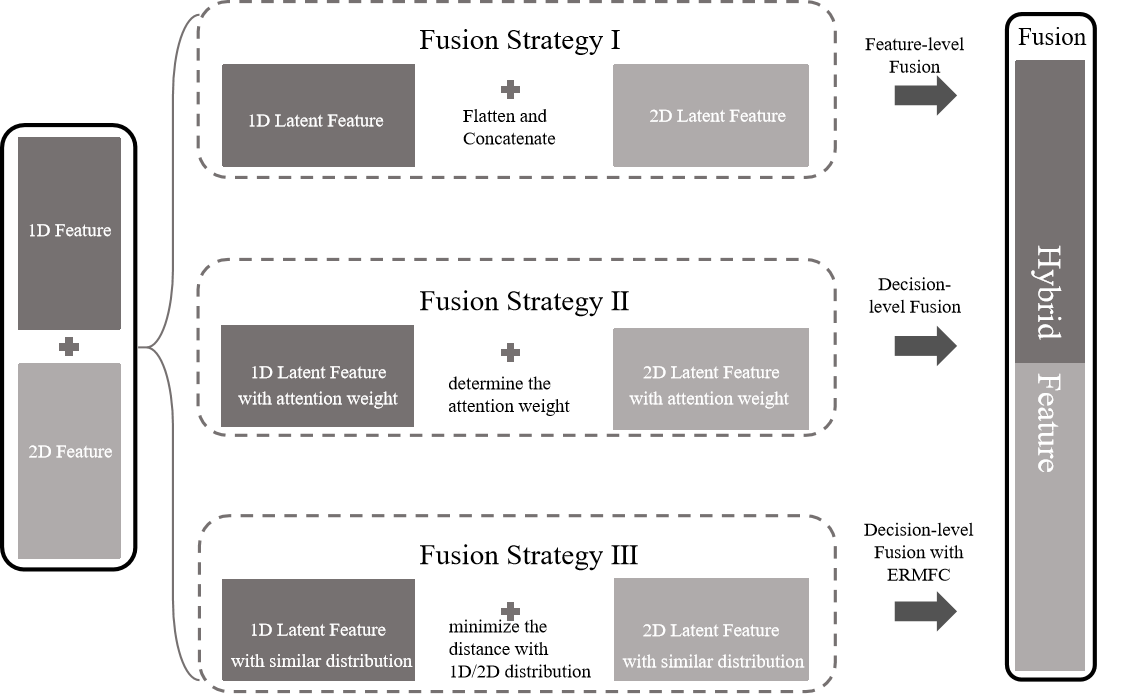

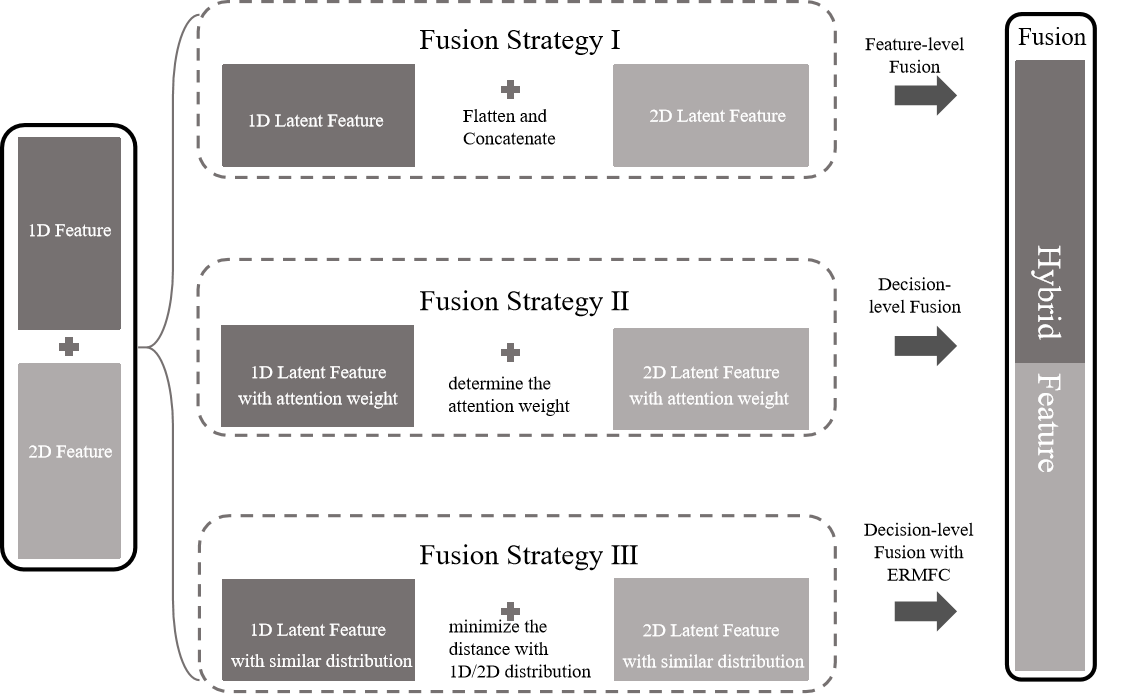

Uncertainty-Aware Feature Fusion

To combine the features extracted from different domains, the authors propose an uncertainty-aware consistency approach. This method fuses the latent representations obtained from five single-domain feature extractors, weighting each feature according to its uncertainty. Three fusion strategies are explored: feature-level fusion, decision-level fusion, and decision-level fusion with fairness consideration. The decision-level fusion strategy uses a sigmoid function to determine the attention weight of each feature being merged. To enhance fairness, the authors minimize the Kullback-Leibler (KL) divergence between the distributions of different features, which encourages the features to follow similar distributions. Because the KL divergence is not symmetric in its arguments, the symmetrized version is used:

$$SD_{KL}(P, Q) = D_{KL}(P, Q) + D_{KL}(Q, P)$$
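As a small numerical illustration of the symmetrized divergence, the sketch below evaluates it for two discrete distributions. Treating the feature distributions as discrete probability lists is an assumption made for the example, as are the function names and the `eps` smoothing term; the summary does not state how the authors parameterize the distributions.

```python
import math


def kl_divergence(p, q, eps=1e-12):
    """D_KL(P, Q) for two discrete distributions given as probability lists."""
    # Terms with p_i == 0 contribute nothing; eps guards against log(0).
    return sum(pi * math.log((pi + eps) / (qi + eps))
               for pi, qi in zip(p, q) if pi > 0.0)


def symmetric_kl(p, q):
    """Symmetrized divergence: D_KL(P, Q) + D_KL(Q, P)."""
    return kl_divergence(p, q) + kl_divergence(q, p)


# Hypothetical distributions over two outcomes.
p = [0.5, 0.5]
q = [0.9, 0.1]

forward = kl_divergence(p, q)
backward = kl_divergence(q, p)
both = symmetric_kl(p, q)
```

Here `forward` and `backward` differ, while `symmetric_kl(p, q)` and `symmetric_kl(q, p)` agree, which is why the symmetrized form is a convenient penalty when neither feature should be treated as the reference.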

Figure 5: Illustration of the proposed feature fusion strategies.
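The sigmoid-based weighting can be sketched as follows. This is a hedged illustration rather than the authors' formulation: the per-feature `scores` (for instance, a learned value that decreases as uncertainty grows), the normalization by the sum of weights, and the function names are all assumptions introduced for the example.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def fuse(features, scores):
    """Weighted combination of equal-length feature vectors.

    features: list of feature vectors, one per domain.
    scores: one scalar per feature vector; a higher score means more trust.
    Returns (fused_vector, weights).
    """
    if len(features) != len(scores):
        raise ValueError("one score is required per feature vector")
    weights = [sigmoid(s) for s in scores]
    total = sum(weights)
    dim = len(features[0])
    # Normalizing by the weight sum is an assumption, not taken from the paper.
    fused = [sum(w * f[d] for w, f in zip(weights, features)) / total
             for d in range(dim)]
    return fused, weights


# Hypothetical two-domain example with 2-dimensional features.
features = [[1.0, 0.0], [0.0, 1.0]]
equal_fused, equal_weights = fuse(features, [0.0, 0.0])
skewed_fused, skewed_weights = fuse(features, [2.0, -2.0])
```

With equal scores the result is a plain average; with unequal scores the fused vector leans toward the feature that received the higher score.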

Cross-Domain Knowledge Transfer

To leverage the synthetic IMU data for real-world HAR, the framework incorporates transfer learning. The network is first pre-trained on the virtual IMU data derived from AMASS and then fine-tuned on the real DIP dataset. This transfers knowledge from the synthetic domain to the real domain, improving performance and generalization on real data.
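The pre-train-then-fine-tune pattern can be shown on a deliberately tiny problem. The sketch below is a toy stand-in, not the paper's network or data: it fits a single slope by gradient descent, uses made-up "synthetic" and "real" targets that differ slightly, and compares fine-tuning from the pre-trained value against training from scratch. All names and numbers are hypothetical.

```python
def fit_slope(xs, ys, w_init, lr=0.05, tol=1e-6, max_iters=1000):
    """Gradient descent on mean squared error for the model y = w * x.

    Returns (w, iterations), where iterations counts the updates performed
    before the loss dropped below tol.
    """
    w = w_init
    n = len(xs)
    for it in range(max_iters):
        loss = sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / n
        if loss < tol:
            return w, it
        grad = 2.0 * sum((w * x - y) * x for x, y in zip(xs, ys)) / n
        w -= lr * grad
    return w, max_iters


xs = [1.0, 2.0, 3.0]
synthetic_ys = [2.0 * x for x in xs]  # stand-in for the synthetic domain
real_ys = [2.2 * x for x in xs]       # stand-in for the related real domain

# Pre-train on the synthetic task, then fine-tune on the real task.
w_pre, _ = fit_slope(xs, synthetic_ys, w_init=0.0)
w_warm, warm_iters = fit_slope(xs, real_ys, w_init=w_pre)

# Baseline: train on the real task from scratch.
w_cold, cold_iters = fit_slope(xs, real_ys, w_init=0.0)
```

Both runs reach the same solution, but the fine-tuned run needs fewer updates because it starts close to it. This only illustrates the mechanism; it says nothing about the size of the effect in the paper's experiments.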

Experimental Evaluation and Results

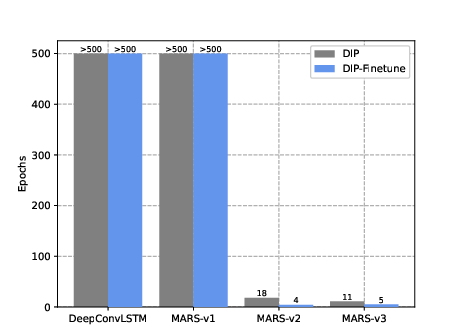

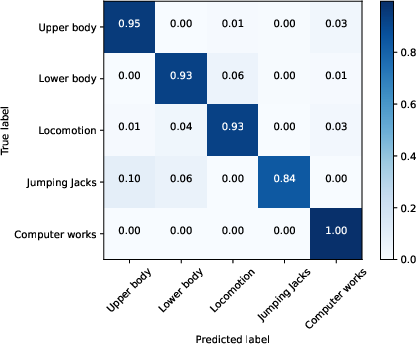

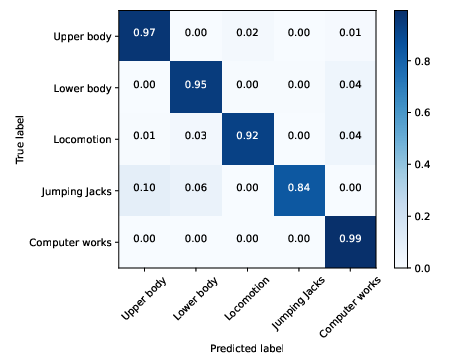

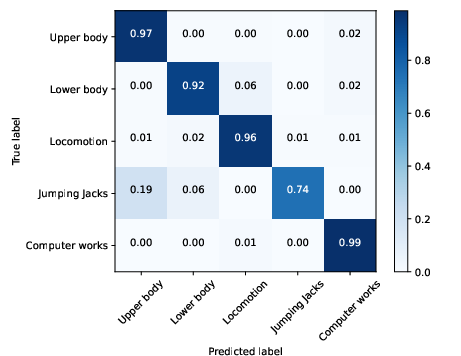

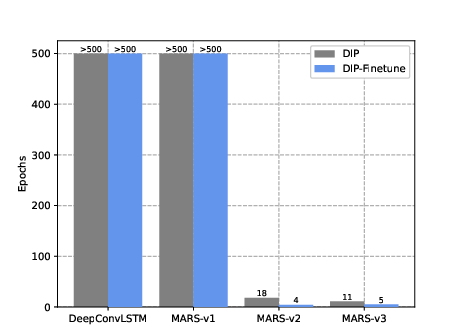

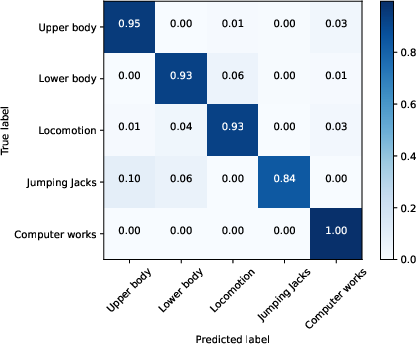

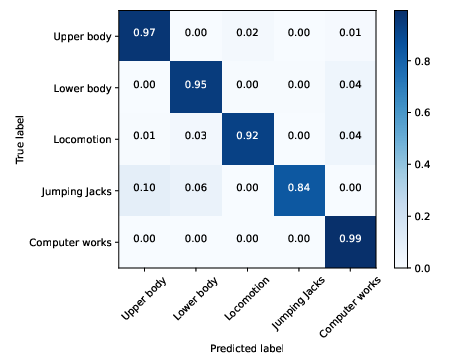

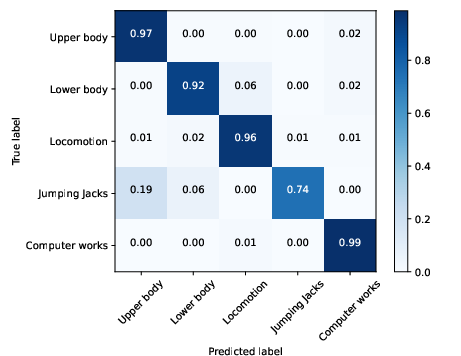

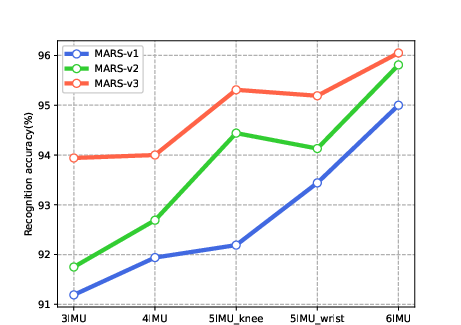

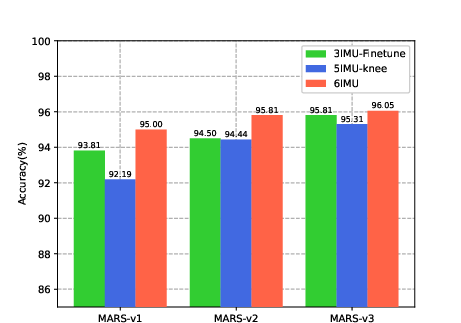

The authors evaluate the proposed method on the DIP dataset, comparing it against several competing HAR algorithms: Random Forest (RF), LSTM, Deep ConvLSTM, and a deep convolutional autoencoder-based network. The proposed methods outperform all of these baselines in accuracy, precision, and F1-score. They also converge quickly: MARS-v2 and MARS-v3, for example, converge after only 4 iterations when fine-tuned from a model pre-trained on AMASS. Furthermore, pre-training on AMASS improves the performance of all deep learning-based HAR algorithms, not only the proposed ones. The confusion matrices show the per-activity classification performance of the proposed methods and highlight how fine-tuning affects the recognition accuracy of different activities.
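For reference, the reported metrics can all be derived from a confusion matrix. The sketch below uses a made-up two-class matrix, not results from the paper, and assumes rows index the true class and columns the predicted class; the function name is hypothetical.

```python
def per_class_metrics(confusion):
    """Compute overall accuracy and per-class precision, recall, and F1.

    confusion[i][j] = number of samples with true class i predicted as class j.
    """
    n = len(confusion)
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[i][i] for i in range(n))
    accuracy = correct / total
    metrics = []
    for c in range(n):
        tp = confusion[c][c]
        predicted = sum(confusion[r][c] for r in range(n))  # column sum
        actual = sum(confusion[c])                          # row sum
        precision = tp / predicted if predicted else 0.0
        recall = tp / actual if actual else 0.0
        denom = precision + recall
        f1 = 2.0 * precision * recall / denom if denom else 0.0
        metrics.append({"precision": precision, "recall": recall, "f1": f1})
    return accuracy, metrics


# Made-up confusion matrix for two activities (illustration only).
confusion = [
    [8, 2],
    [1, 9],
]
accuracy, metrics = per_class_metrics(confusion)
```

For a multi-class task such as the 12-category setting described earlier, the per-class values would typically be averaged; which averaging scheme the authors use is not stated in this summary.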

Figure 6: Convergence performance comparison.

Figure 7: Confusion matrices illustration in the second case (6 IMUs).

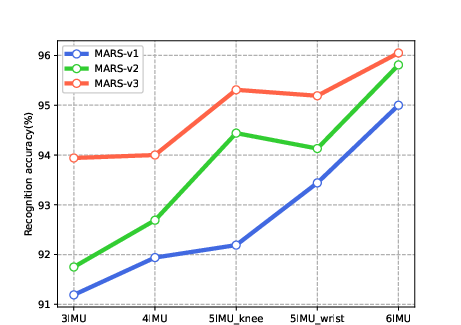

Figure 8: HAR accuracy comparison using different numbers of IMUs.

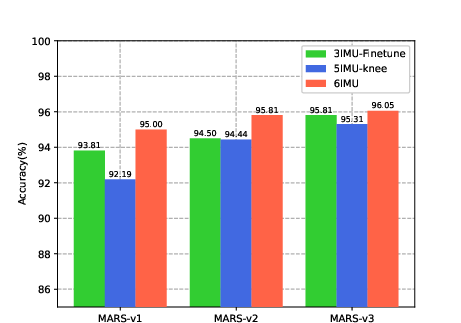

Figure 9: Human activity recognition accuracy comparison histogram.

Impact of Sensor Number

The authors also investigate how the number of sensors affects HAR performance. Increasing the number of IMUs generally improves accuracy, but sensor placement is also crucial. Notably, with three IMUs, all three proposed methods reach the accuracy obtained with six IMUs. This can be explained by the abundant action features in AMASS, which encourage effective feature extraction.

Conclusion

The authors present a comprehensive framework for HAR that addresses the challenges of data scarcity and domain adaptation. By leveraging virtual IMU data, hybrid CNNs, uncertainty-aware feature fusion, and transfer learning, the proposed method achieves state-of-the-art performance on real-world HAR tasks. The experimental results demonstrate the effectiveness of the framework and its potential for practical applications.