- The paper presents DNN refactoring that transforms neural architectures to improve verification efficiency while preserving accuracy.

- It employs architectural transformation and knowledge distillation to simplify networks, reducing verification runtimes by up to 82%.

- The method balances the trade-off between error and verifiability by adjusting layer structures so that the refactored network remains accurate enough for safety-critical use.

Refactoring Neural Networks for Verification

Refactoring neural networks is an approach to making deep neural networks (DNNs) used in safety-critical systems easier to verify. Traditional code refactoring guarantees behavioral equivalence. DNN refactoring does not: it transforms the architecture so that accuracy is preserved while verification becomes more efficient.

Introduction

Deep learning has expanded the capabilities of applied systems, most visibly in autonomous systems such as self-driving cars and drones. DNNs drive these advances, but verifying cost-effectively that their behavior meets crucial correctness properties remains a significant challenge, and that weakens the safety arguments for such systems. This paper introduces DNN refactoring as a way to make DNN verification more applicable and more scalable.

Concept and Framework

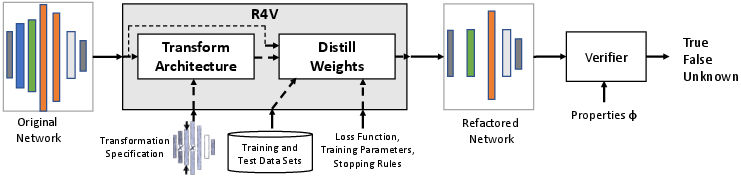

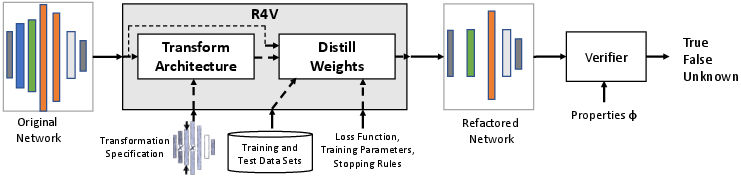

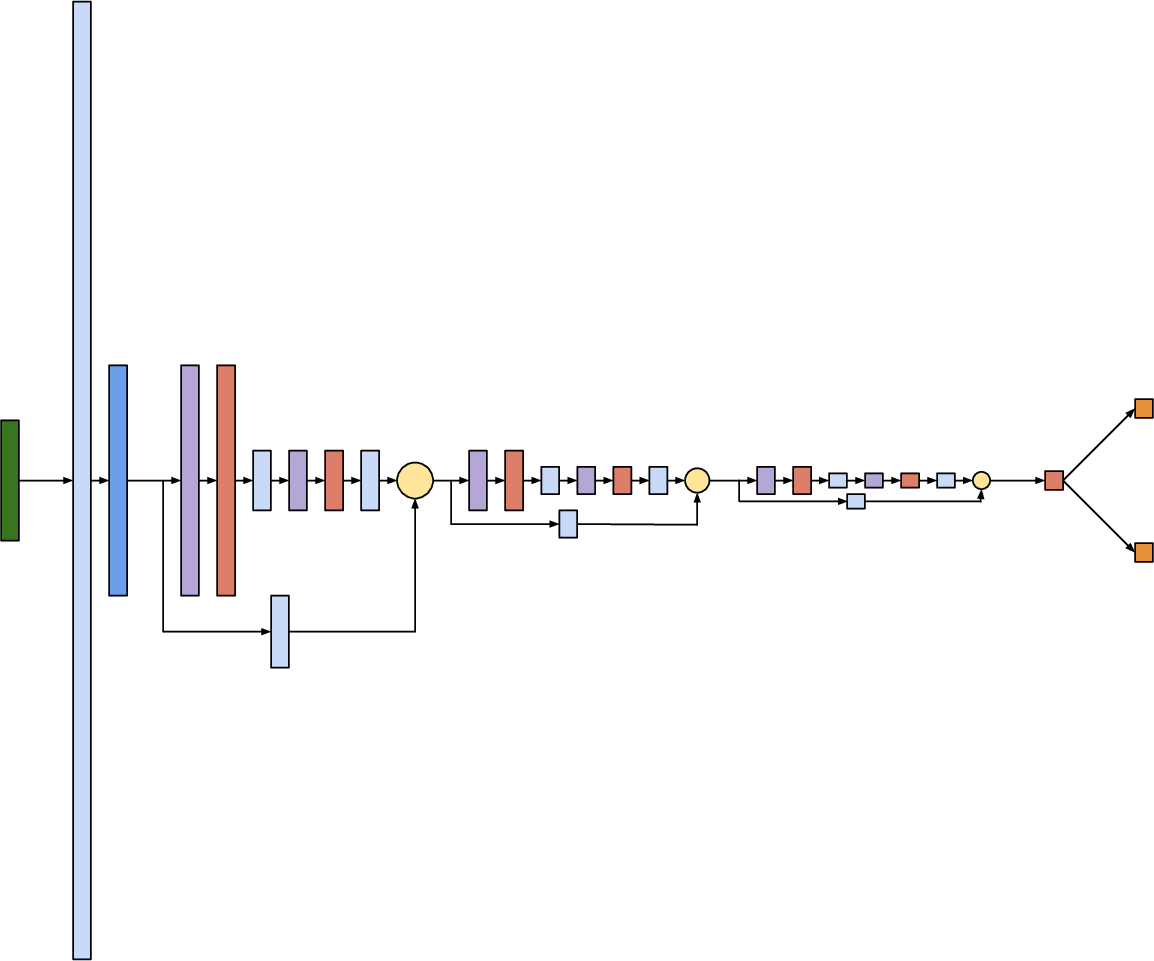

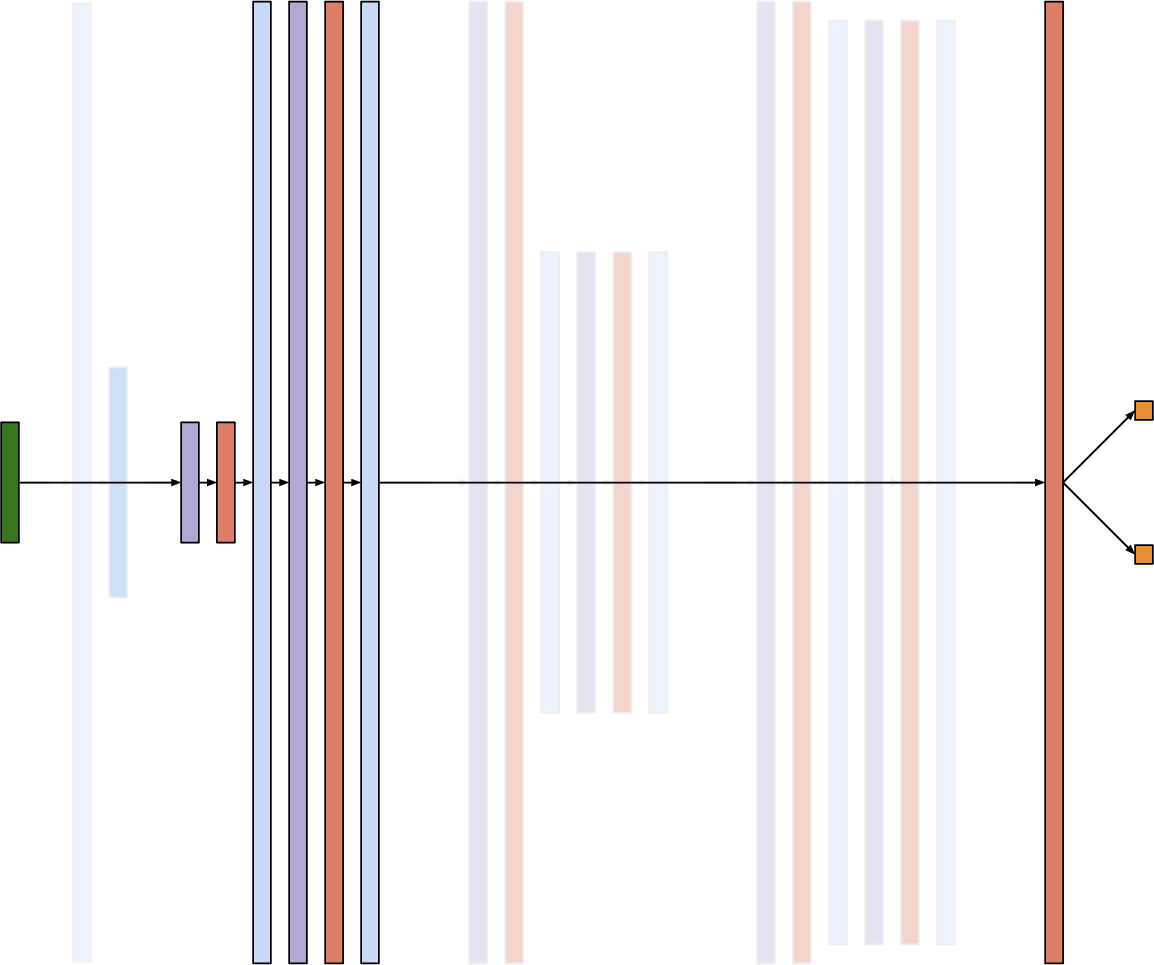

DNN refactoring combines architectural transformation and knowledge distillation. Architectural transformation reduces network complexity by changing the layer structure, for example the number of layers or their size, and then adapts the neighboring layers so that their inputs and outputs still connect correctly (Figure 1).

Figure 1: The R4V approach, which splits refactoring into architectural transformation and knowledge distillation.
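The architectural transformation described above can be illustrated with a minimal sketch. It is not R4V's implementation: the `Dense` layer spec and the `drop_layer`, `scale_layer`, and `parameter_count` helpers are hypothetical names introduced here, and the network is assumed to be a plain chain of fully connected layers. The point is only to show how removing or shrinking a layer forces an adjustment in the layer that follows it.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Dense:
    """Hypothetical spec for a fully connected layer (not an R4V type)."""
    in_features: int
    out_features: int


def drop_layer(layers: List[Dense], index: int) -> List[Dense]:
    """Remove layers[index] and repair the input size of its successor."""
    if not 0 <= index < len(layers) - 1:
        raise ValueError("can only drop a layer that has a successor")
    dropped = layers[index]
    result = layers[:index] + layers[index + 1:]
    # The successor now receives whatever the dropped layer used to receive.
    result[index] = replace(result[index], in_features=dropped.in_features)
    return result


def scale_layer(layers: List[Dense], index: int, factor: float) -> List[Dense]:
    """Scale the width of layers[index] and adjust the next layer's input."""
    if not 0 <= index < len(layers) - 1:
        raise ValueError("can only scale a layer that has a successor")
    new_width = max(1, int(layers[index].out_features * factor))
    result = list(layers)
    result[index] = replace(result[index], out_features=new_width)
    result[index + 1] = replace(result[index + 1], in_features=new_width)
    return result


def parameter_count(layers: List[Dense]) -> int:
    """Weights plus biases, used here as a rough proxy for complexity."""
    return sum(l.in_features * l.out_features + l.out_features for l in layers)


original = [Dense(8, 16), Dense(16, 16), Dense(16, 4)]
refactored = drop_layer(original, 1)
narrowed = scale_layer(original, 0, 0.5)
print(parameter_count(original), parameter_count(refactored))
```

In this toy setting the refactored architecture has fewer parameters, but its weights are untrained. Recovering the original network's behavior is the job of the distillation step.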

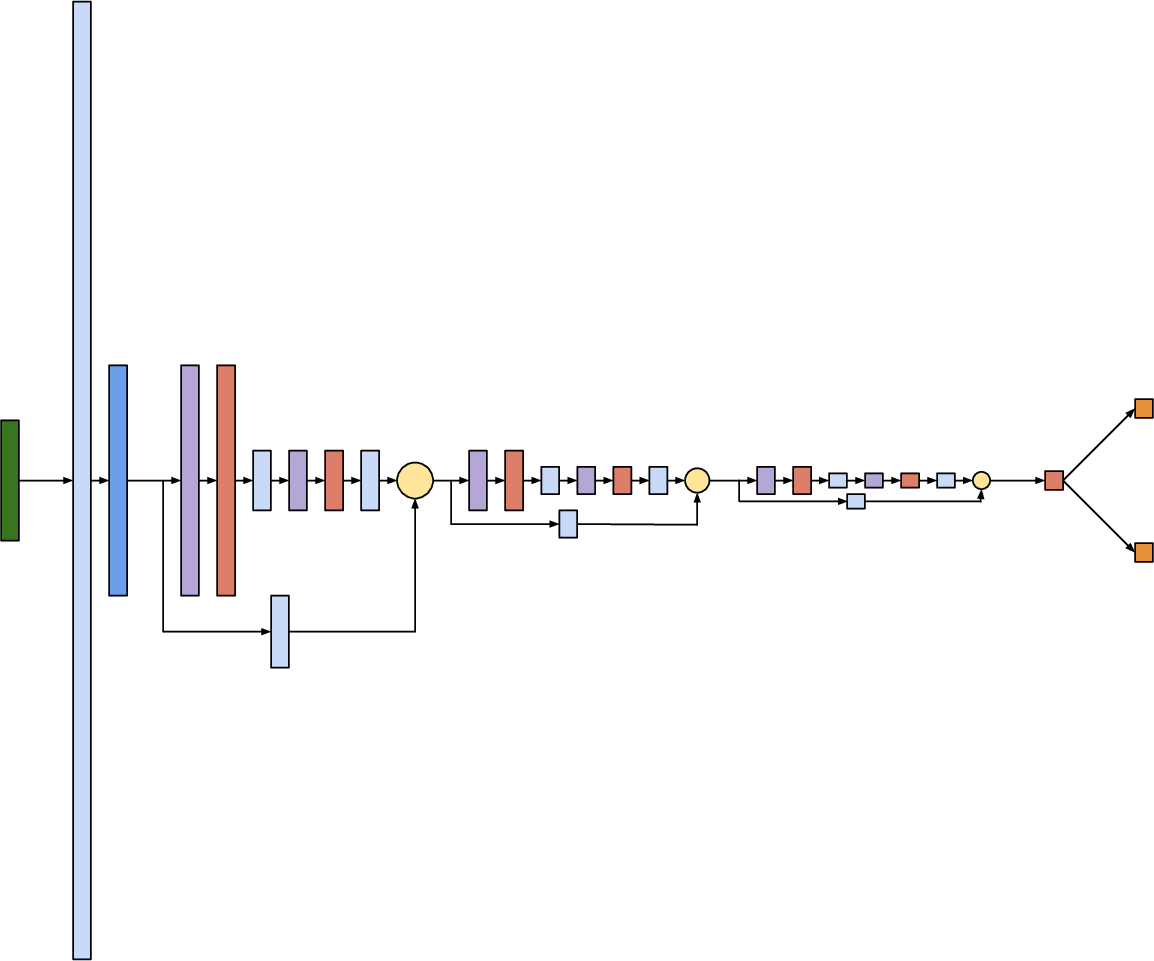

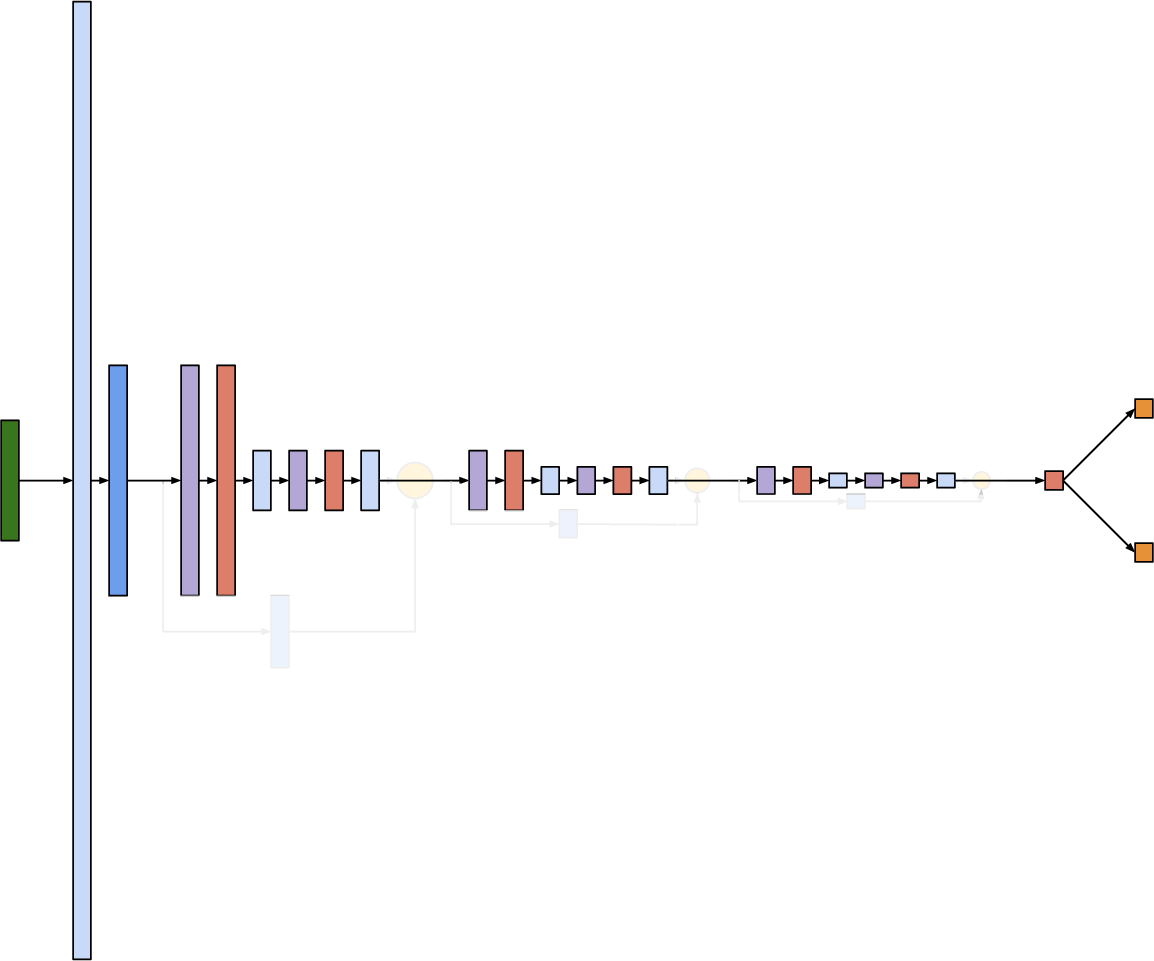

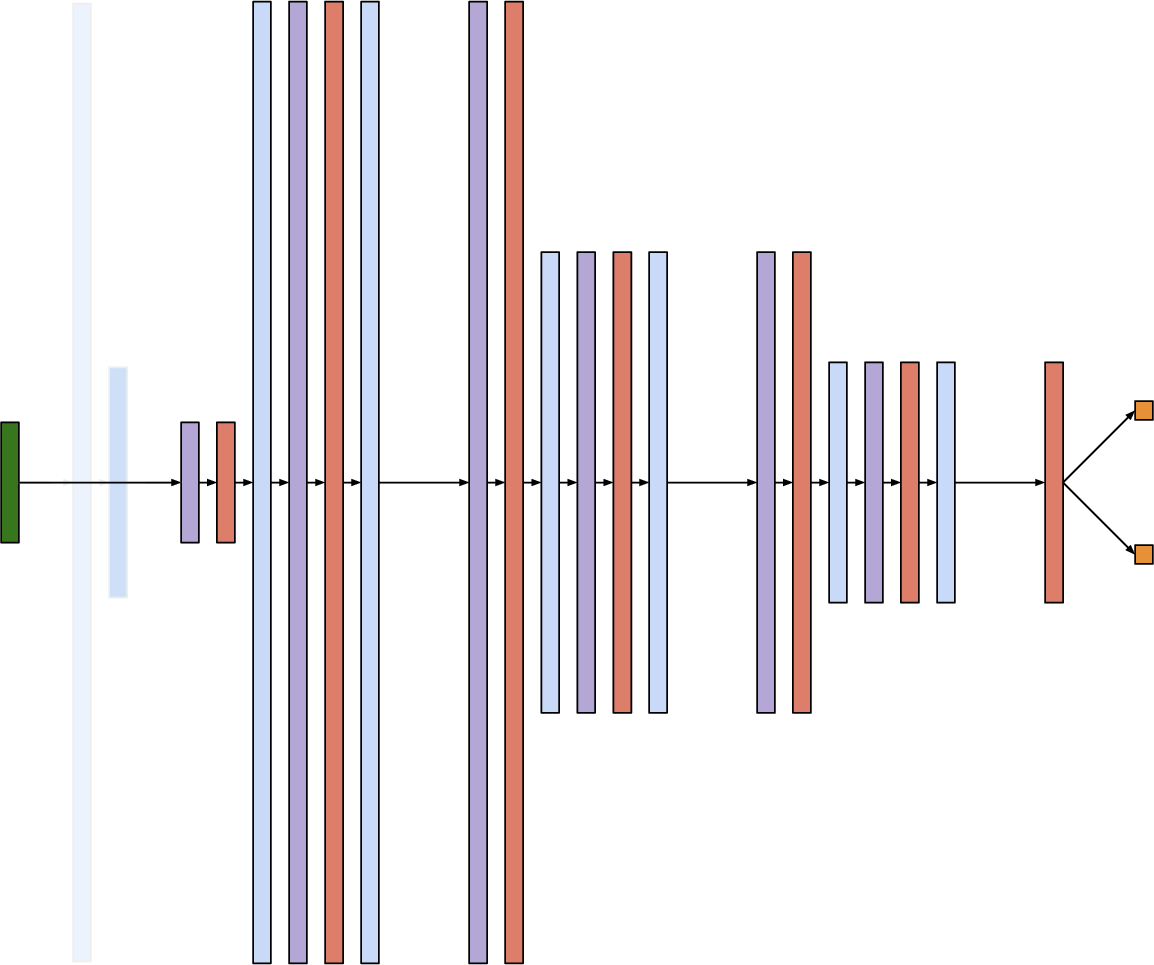

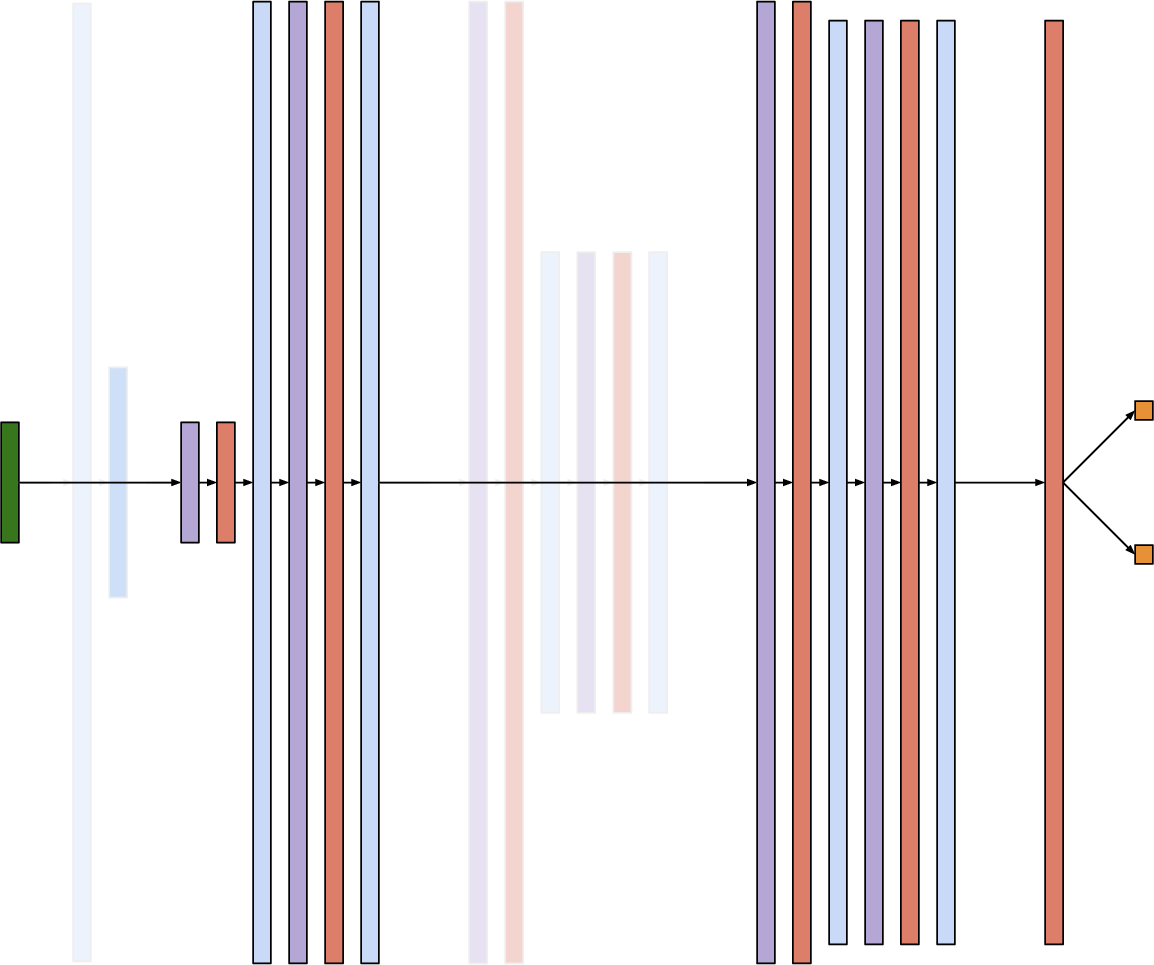

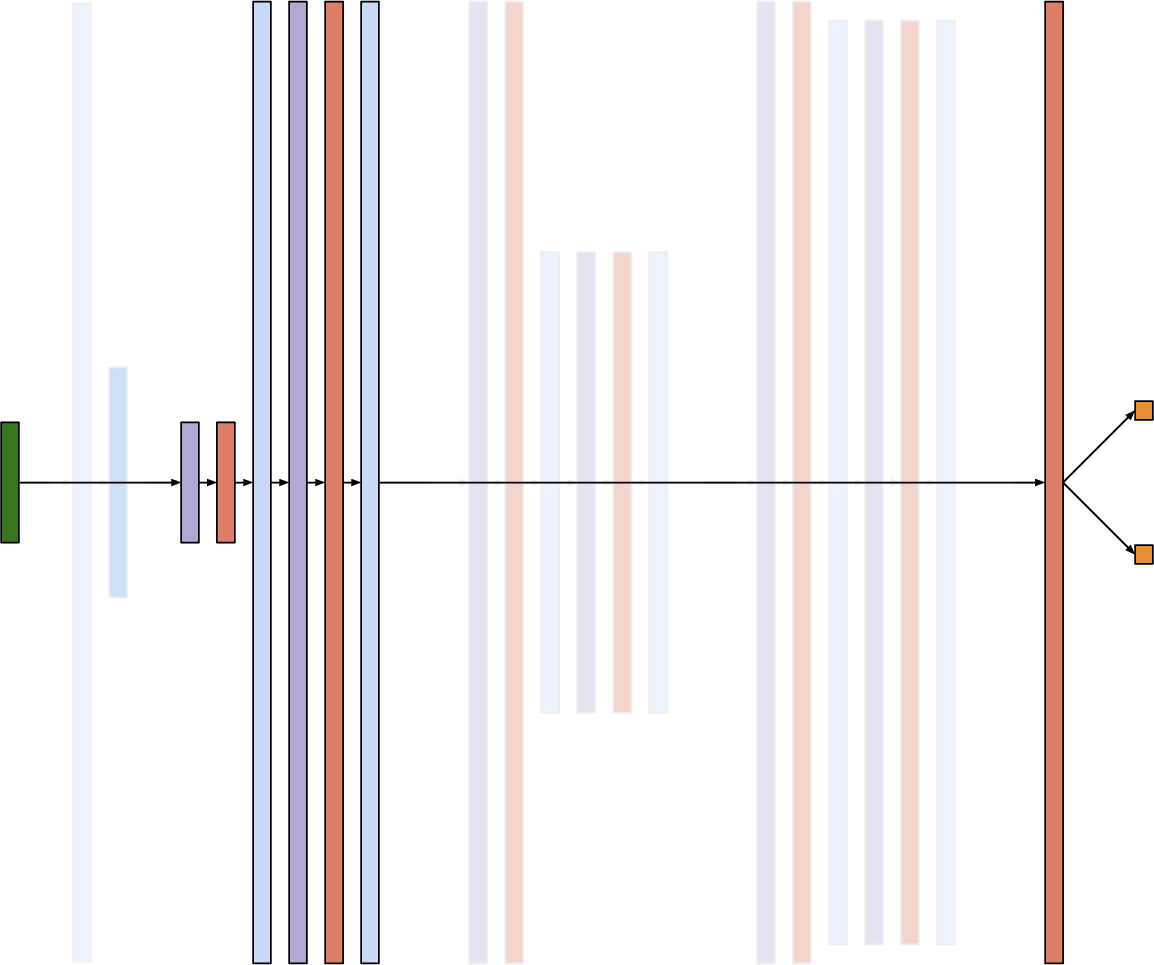

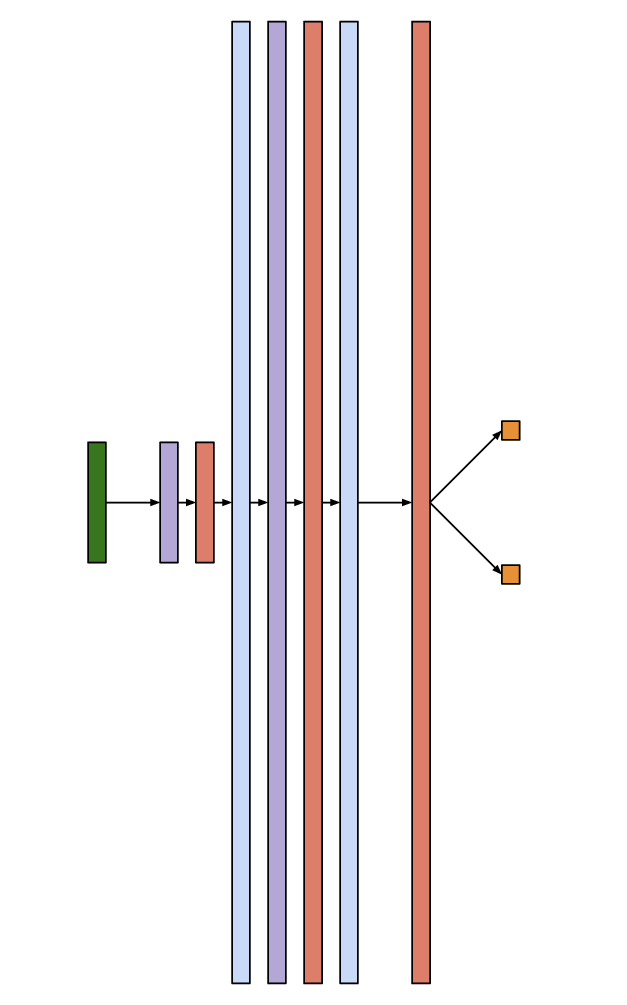

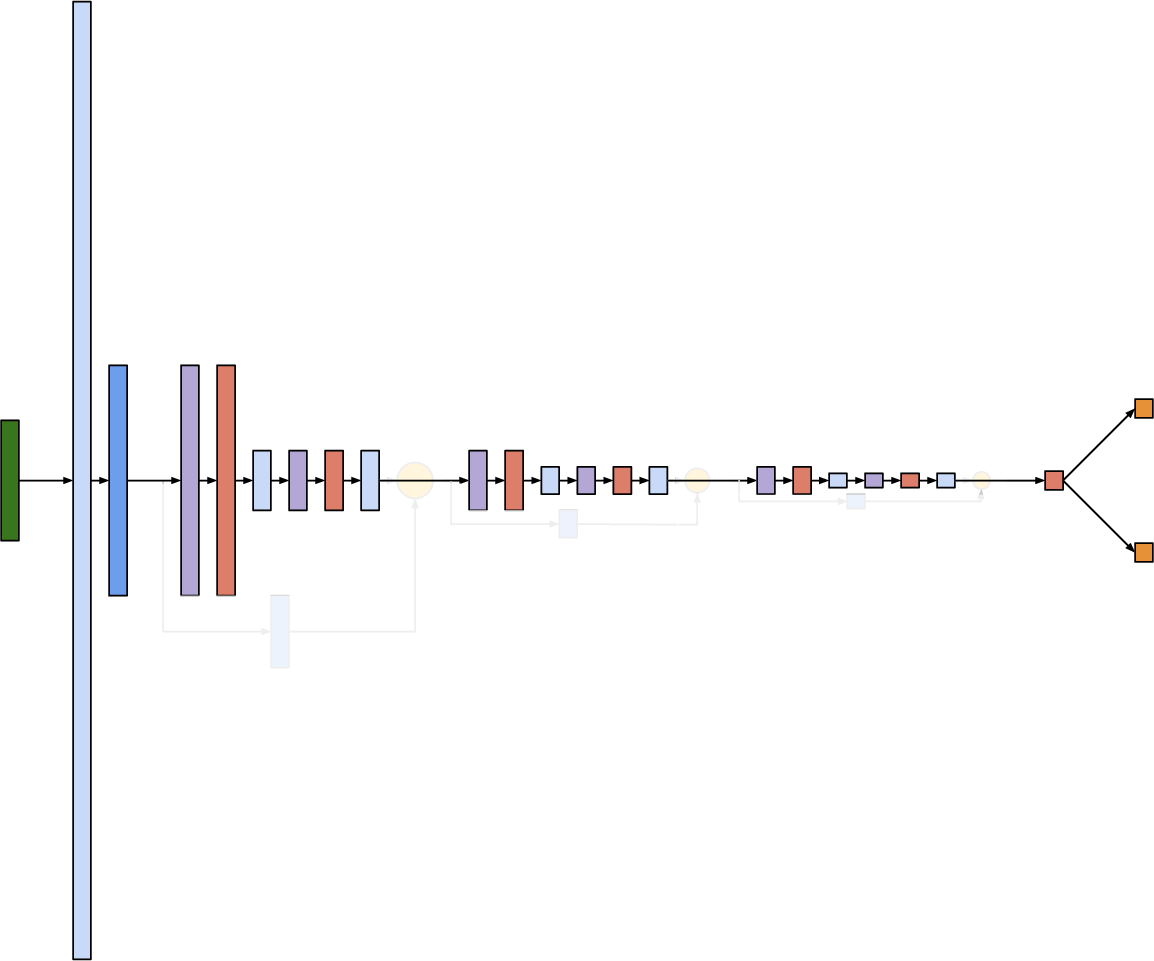

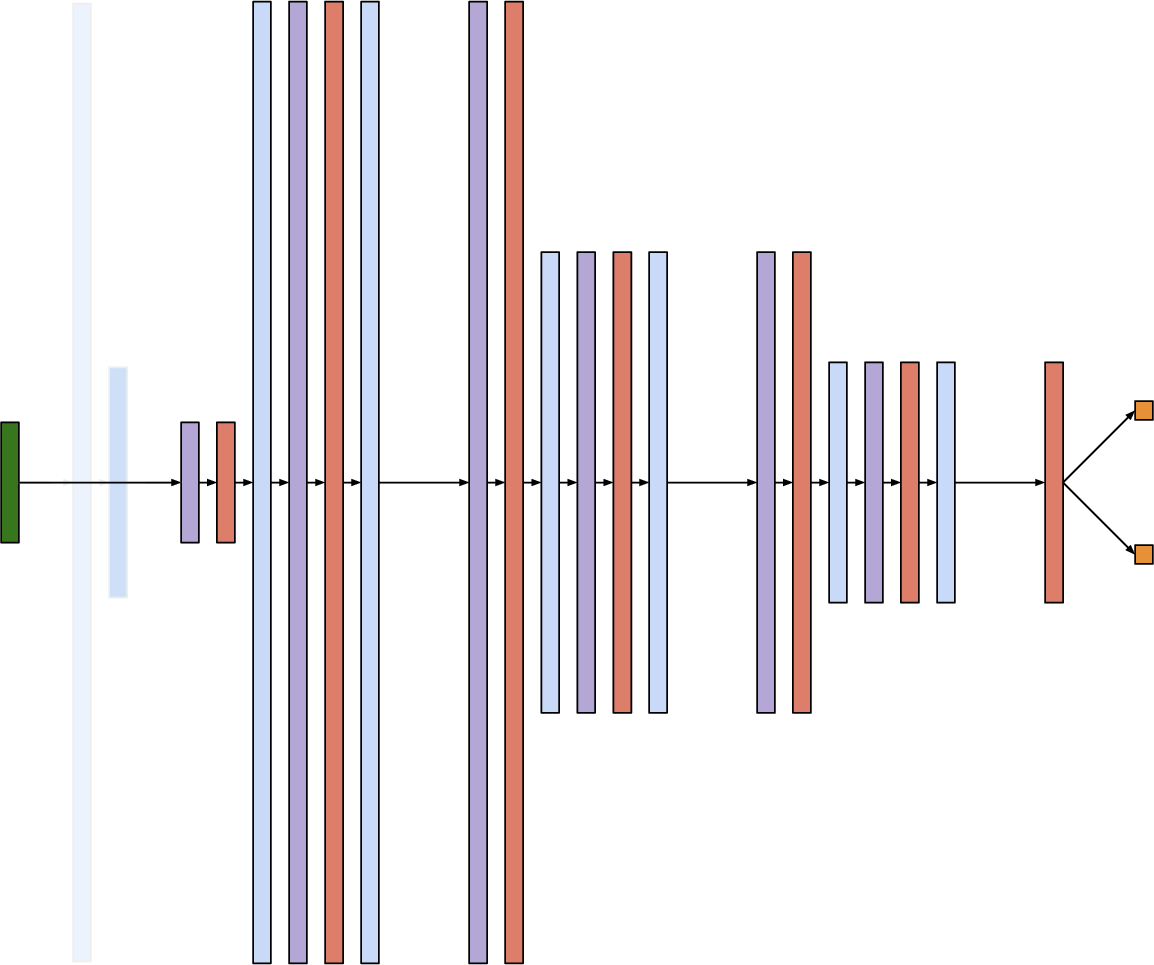

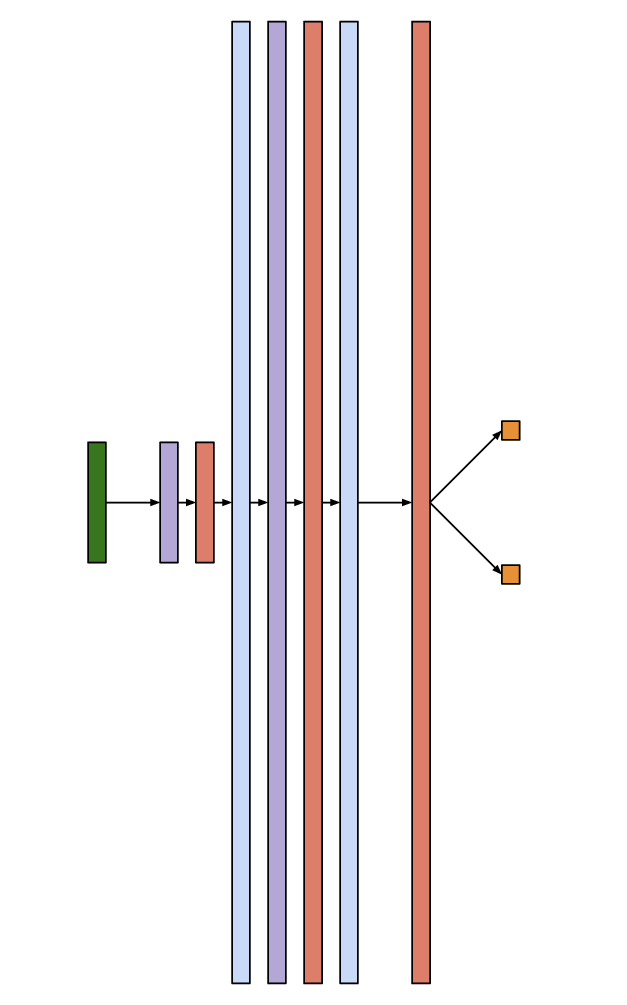

Knowledge distillation extracts and transfers learned representations from the original network to a simplified refactored structure, preserving functional accuracy (Figure 2).

Figure 2: Refactoring the DroNet DNN by linearizing its residual blocks and adjusting the computational paths.
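The knowledge distillation step described above can be sketched in a few lines. This is a deliberately small illustration under stated assumptions, not the training procedure used by R4V: the `teacher` function is a made-up stand-in for the original network, the student is a single linear unit, and the loss is plain mean squared error between student and teacher outputs, minimized by full-batch gradient descent.

```python
import math
import random
from typing import List, Tuple


def teacher(x: float) -> float:
    """Stand-in for the original network: a fixed two-unit tanh model."""
    return 0.8 * math.tanh(1.5 * x) + 0.3 * math.tanh(-0.5 * x + 0.2)


def distill(inputs: List[float], epochs: int = 200,
            lr: float = 0.05) -> Tuple[float, float, List[float]]:
    """Fit a linear student y = w*x + b to the teacher's outputs."""
    w, b = 0.0, 0.0
    # The student never sees ground-truth labels, only teacher outputs.
    targets = [teacher(x) for x in inputs]
    n = len(inputs)
    history = []
    for _ in range(epochs):
        grad_w = grad_b = loss = 0.0
        for x, t in zip(inputs, targets):
            err = (w * x + b) - t
            loss += err * err / n
            grad_w += 2 * err * x / n
            grad_b += 2 * err / n
        w -= lr * grad_w
        b -= lr * grad_b
        history.append(loss)  # loss measured before this epoch's update
    return w, b, history


rng = random.Random(0)
inputs = [rng.uniform(-1.0, 1.0) for _ in range(64)]
w, b, history = distill(inputs)
print(f"student: y = {w:.3f}*x + {b:.3f}, final loss = {history[-1]:.5f}")
```

The student here is far simpler than the teacher, so it can only approximate it. The gap that remains after training is the error side of the error-verifiability trade-off discussed in the case studies.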

Case Studies

Three case studies demonstrate the effectiveness of DNN refactoring:

Verifier Applicability

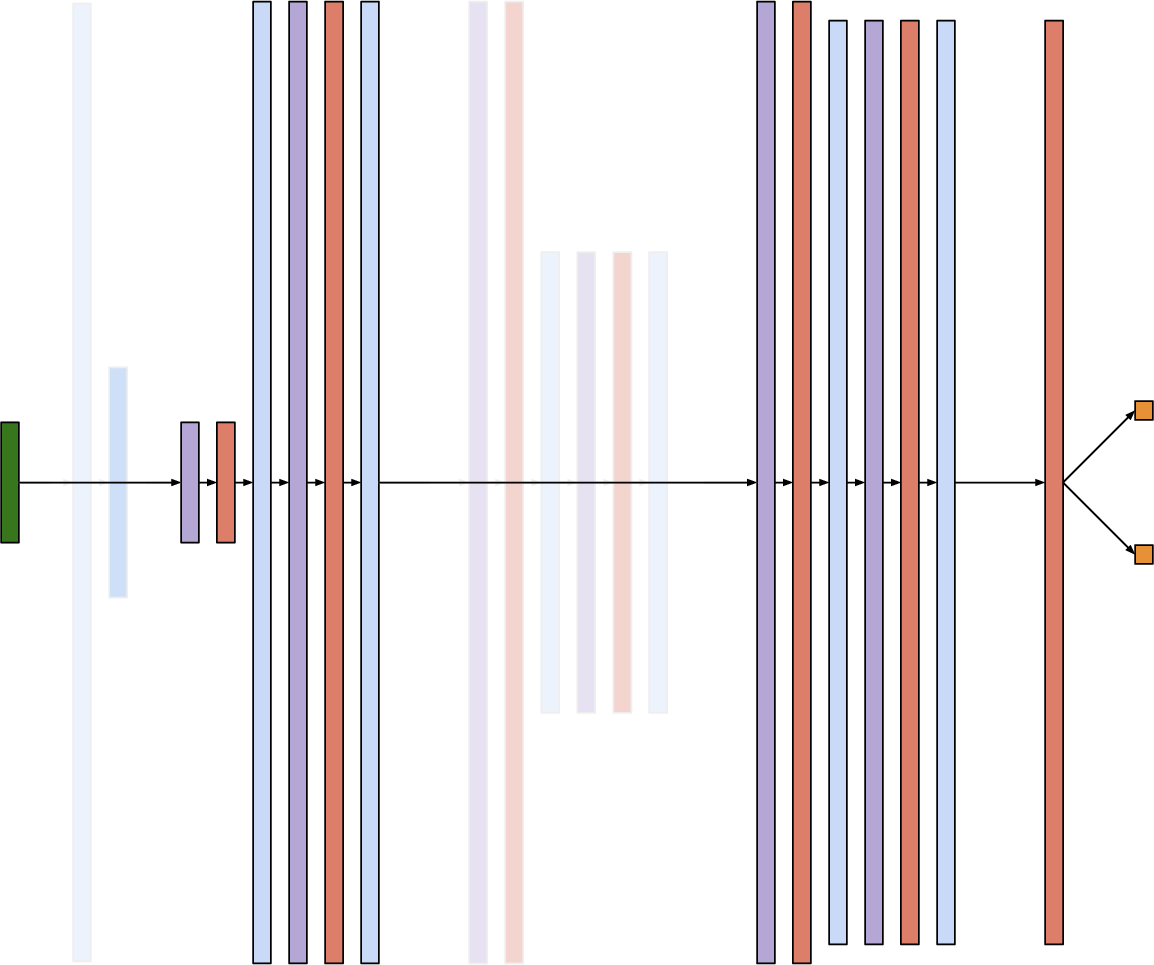

R4V enabled verification of DNNs such as DAVE-2 and DroNet, which verifiers such as Reluplex and CROWN could not otherwise handle because of the networks' complexity and their use of unsupported layer types. Dropping residual connections and convolutional layers made the networks compatible with state-of-the-art verifiers.

Verification Efficiency

Refactoring substantially reduced verification times. For DAVE-2, verifier runtime fell by 82% while property verification accuracy was maintained. Systematically dropping layers lowered overall verification time, which shows R4V's potential to streamline safety assessments.

Error-Verifiability Trade-offs

A binary search over refactored versions located a complexity sweet spot that balances verification efficiency against network accuracy. Strategically removing layers produced verifiable DNNs without compromising the original network's error rate, which highlights R4V's ability to find verification-friendly architectures.
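One way to picture the binary search described above is the following sketch. It rests on assumptions that the text does not state: candidates are indexed by the number of dropped layers, verifiability is monotone (once a candidate verifies, every smaller candidate does too), and error does not decrease as more layers are dropped. The `find_sweet_spot` function and the `verifiable` and `error` callbacks are hypothetical; in practice each call would mean training and verifying a refactored network.

```python
from typing import Callable, Optional


def find_sweet_spot(max_dropped: int,
                    verifiable: Callable[[int], bool],
                    error: Callable[[int], float],
                    max_error: float) -> Optional[int]:
    """Return the fewest dropped layers that verifies within the error budget.

    Returns None if even the smallest candidate fails to verify, or if the
    first verifiable candidate already exceeds max_error.
    """
    lo, hi = 0, max_dropped
    if not verifiable(hi):
        return None
    # Invariant: verifiable(hi) is True; every k < lo is known to fail.
    while lo < hi:
        mid = (lo + hi) // 2
        if verifiable(mid):
            hi = mid
        else:
            lo = mid + 1
    # Under the monotonicity assumptions, lo has the lowest error among
    # all verifiable candidates, so it is the only one worth checking.
    return lo if error(lo) <= max_error else None


# Toy oracles standing in for real training and verification runs.
def toy_verifiable(k: int) -> bool:
    return k >= 3


def toy_error(k: int) -> float:
    return 0.02 + 0.01 * k


best = find_sweet_spot(8, toy_verifiable, toy_error, max_error=0.06)
too_strict = find_sweet_spot(8, toy_verifiable, toy_error, max_error=0.04)
print(best, too_strict)
```

Binary search keeps the number of expensive train-and-verify runs logarithmic in the number of candidates, which matters when each run can take hours. If the monotonicity assumptions do not hold for a given network, a linear sweep is the safer choice.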

Implications and Future Developments

R4V extends the reach of existing verification techniques, allowing developers to obtain networks that can actually be verified. Future work will focus on expanding the set of supported refactorings, improving training efficiency, and automating the search for the complexity sweet spot to broaden applicability.

Conclusion

R4V makes DNN property checking more efficient and more widely applicable while retaining the required accuracy. By enabling safety verification under defined complexity constraints, it supports more reliable deployment of neural networks in mission-critical systems.