- The paper presents a framework that combines YOLO-based damage detection with deep feature extraction, achieving 81.7% recall on car damage identification.

- It introduces a feature fusion method combining local VGG-16 features and global vehicle context, resulting in a rank-1 matching rate of 56.52% for fraud detection.

- The detector is fine-tuned on a dedicated car damage dataset and targets real-time analysis, with the aim of automating fraud checks for insurance claims.

An Anti-fraud System for Car Insurance Claim Based on Visual Evidence

This paper introduces an approach to fraudulent car insurance claims based on visual evidence analysis. The proposed system uses object detection to identify damage on vehicles and to flag repeated claims. Its main components are a deep learning-based damage detector and a feature extraction method that integrates local and global features to improve fraud detection.

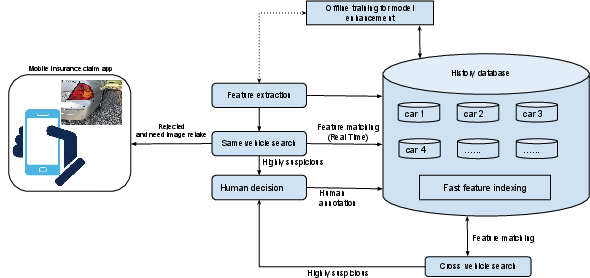

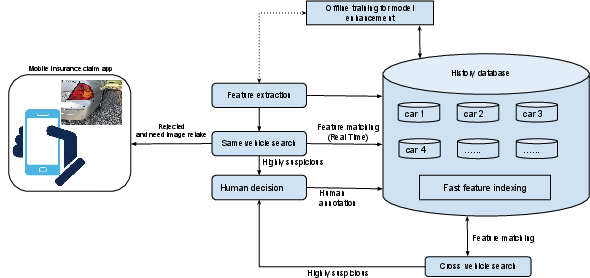

System Architecture

The anti-fraud system for car insurance claims consists of two primary components: a damage detector and a deep feature extractor. The system architecture is depicted in the following overview, illustrating its integration with the claim history database.

Figure 1: Anti-fraud system overview.

Damage Detection

The damage detection component uses the YOLO object detection framework. YOLO was chosen for its balance of speed and accuracy, which is crucial for real-time processing. The original YOLO model is fine-tuned on a newly created car damage dataset. This dataset contains images of car damage such as scratches, dents, and cracks, sourced from both online collections and real-world parking lots.

Figure 2: Example images collected from the internet.

Figure 3: Example images collected from a local public parking lot.

Damage Re-Identification

Upon detecting damages, the system extracts relevant features to identify potential fraud in claims. The process involves:

- Local Deep Feature Extraction: A VGG-16 model pre-trained for object recognition extracts localized features from the area around each detected damage.

- Global Feature Integration: Global features from the full vehicle image, together with color histograms, capture the overall context.

- Feature Fusion: The local and global features are combined into a single descriptor for each claim image.

The descriptors enable effective cross-vehicle and same-vehicle fraud detection by comparing new claims against the historical claim database.
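The fusion and comparison steps above can be illustrated with a minimal sketch. The summary does not say how the features are combined or which distance is used, so the choices here (L2-normalizing each part, concatenating them, and ranking by cosine similarity) are assumptions, and the function names `color_histogram`, `fuse`, and `rank_history` are hypothetical. The local and global vectors stand in for VGG-16 features, which are not computed here.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length; an all-zero vector is returned unchanged."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def color_histogram(pixels, bins=4):
    """Per-channel histogram of (r, g, b) pixels in 0..255, divided by pixel count."""
    hist = [0.0] * (3 * bins)
    count = 0
    for r, g, b in pixels:
        for channel, value in enumerate((r, g, b)):
            idx = min(value * bins // 256, bins - 1)
            hist[channel * bins + idx] += 1.0
        count += 1
    return [h / count for h in hist] if count else hist


def fuse(local_feat, global_feat, hist):
    """Assumed fusion: normalize each part, then concatenate into one descriptor."""
    return l2_normalize(local_feat) + l2_normalize(global_feat) + l2_normalize(hist)


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_history(query, history):
    """Sort historical claims (claim_id -> descriptor) by similarity to the query."""
    scored = [(cid, cosine_similarity(query, desc)) for cid, desc in history.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)
```

A new claim whose top-ranked historical match scores above some chosen threshold would then be passed on for review; where to set that threshold is outside what the summary specifies.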

Experimental Results

A significant aspect of the system's effectiveness is the accuracy of the damage detection module. The experiments showed that the YOLO model with a Local Response Normalization (LRN) layer achieved the highest recall of 81.7% on a test dataset, outperforming other model configurations.
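For reference, detection recall is the fraction of annotated damages that the detector finds. The sketch below shows one common way to compute it, assuming boxes in `(x1, y1, x2, y2)` form and a match criterion of intersection-over-union (IoU) of at least 0.5. The summary does not state the matching rule used in the experiments, so both the threshold and the greedy one-to-one matching are assumptions.

```python
def iou(box_a, box_b):
    """Intersection-over-union of two (x1, y1, x2, y2) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def recall(ground_truth, detections, iou_threshold=0.5):
    """Fraction of ground-truth boxes matched by a distinct detection.

    Each ground-truth box greedily claims the unused detection with the
    highest IoU, provided that IoU reaches the (assumed) threshold.
    """
    used = set()
    hits = 0
    for gt in ground_truth:
        best_idx, best_iou = None, iou_threshold
        for i, det in enumerate(detections):
            if i in used:
                continue
            overlap = iou(gt, det)
            if overlap >= best_iou:
                best_idx, best_iou = i, overlap
        if best_idx is not None:
            used.add(best_idx)
            hits += 1
    return hits / len(ground_truth) if ground_truth else 0.0
```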

Figure 4: Detection result on real data collected in the wild.

Figure 5: Detection examples on data collected online.

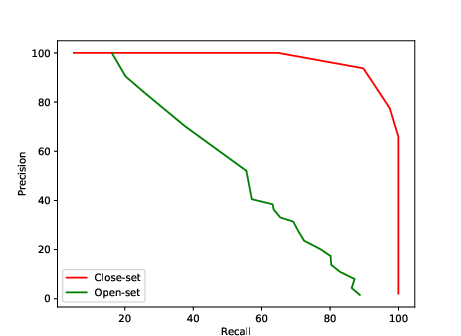

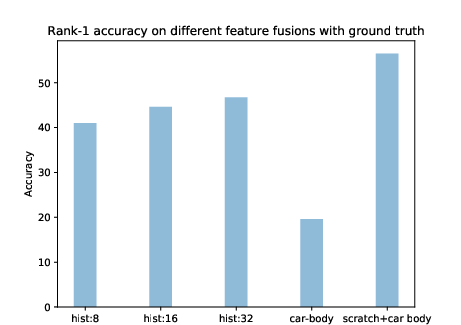

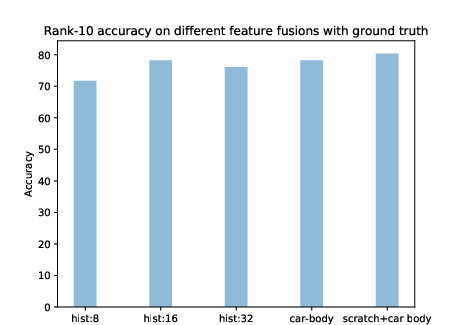

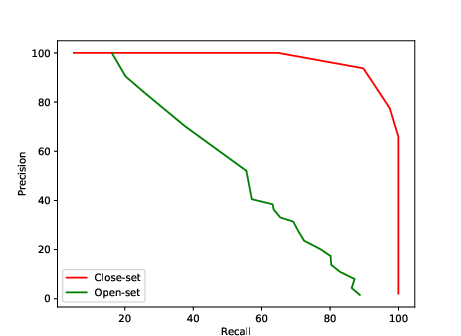

Fraud Detection Efficacy

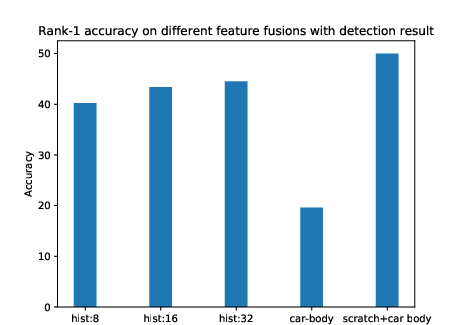

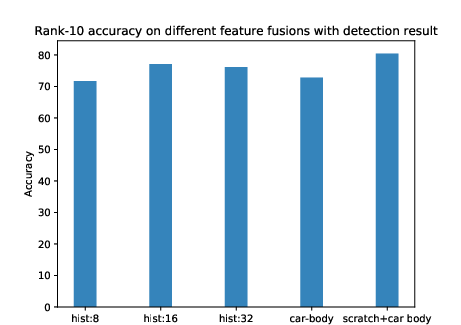

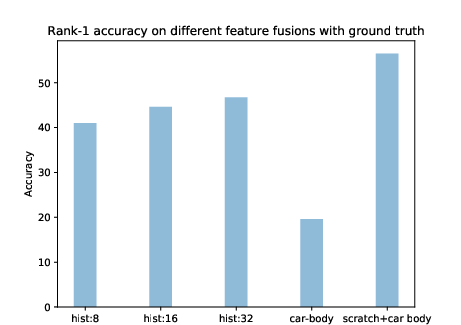

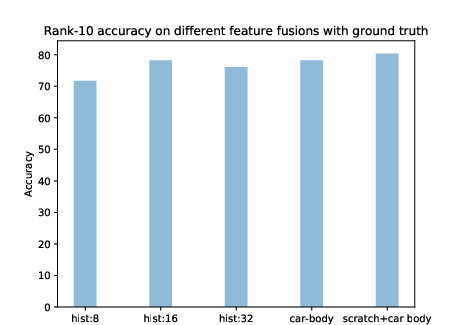

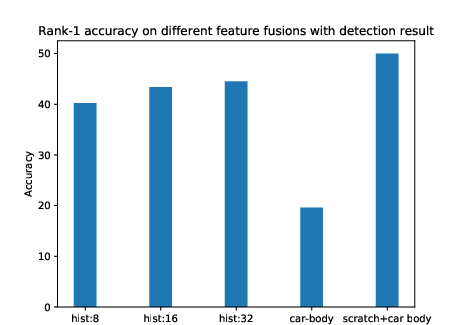

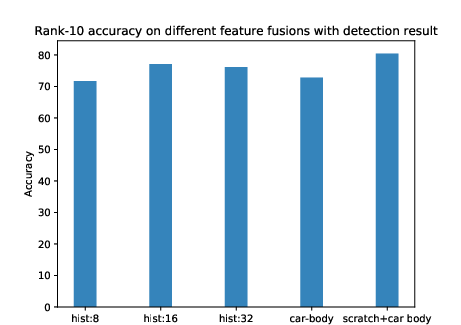

The system's fraud detection component was evaluated using rank-based retrieval metrics. Feature fusion improved fraud detection rates, reaching a rank-1 matching rate of up to 56.52% when local damage features were combined with global vehicle images. The evaluation also compared damage regions taken from ground-truth annotations with regions produced by the automatic detector; feature extraction remained robust in the latter case, which supports the practical viability of the proposed approach.

Figure 6: Fraud detection rank-1 and rank-10 rates for ground-truth and detected damage regions under different feature fusions.
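A rank-k matching rate is the share of query claims whose true earlier claim appears among the k most similar entries retrieved from the history. The sketch below computes it under the assumption that each query has exactly one true match; the function name `rank_k_rate` and the claim identifiers in the usage note are hypothetical.

```python
def rank_k_rate(ranked_lists, true_matches, k):
    """Share of queries whose true match is within the top-k retrieved claims.

    ranked_lists: query_id -> list of claim ids, most similar first.
    true_matches: query_id -> the single claim id that is the correct match.
    """
    if not ranked_lists:
        return 0.0
    hits = sum(
        1 for qid, ranked in ranked_lists.items()
        if true_matches[qid] in ranked[:k]
    )
    return hits / len(ranked_lists)
```

For instance, with three queries whose true matches sit at positions 2, 1, and outside the list, the rank-1 rate is 1/3 and the rank-2 rate is 2/3.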

Conclusion

The presented work outlines an anti-fraud system for automating car insurance claim verification. It applies deep learning to damage detection and feature extraction in order to identify fraudulent claims. Its main contributions to computer vision applications in the insurance industry are a dedicated car damage dataset and the use of YOLO for real-time damage detection. Future work could explore improvements to the feature extraction process and adaptation to other types of insurance claims involving visual evidence.