- The paper presents a framework integrating epistemic norms and structured logic to ensure AI systems maintain internal consistency and commitment to truth.

- It combines methodologies from epistemology, formal logic, and blockchain technology to create verifiable belief structures and robust contradiction resolution.

- The research outlines a paradigm shift towards trustworthy AI agents, offering potential advancements in high-stakes fields like scientific research and legal reasoning.

Summary of "Beyond Prediction -- Structuring Epistemic Integrity in Artificial Reasoning Systems"

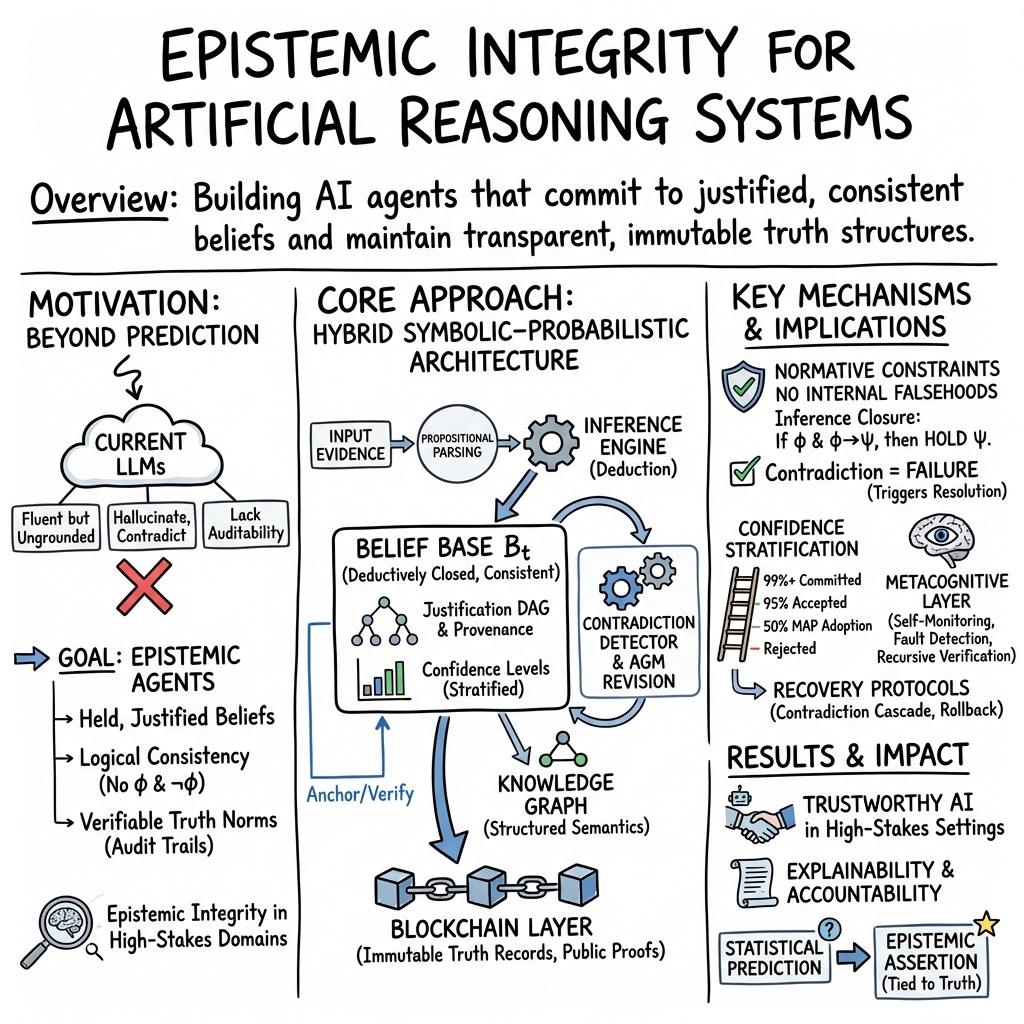

The paper "Beyond Prediction -- Structuring Epistemic Integrity in Artificial Reasoning Systems" (2506.17331) presents a framework for moving artificial intelligence beyond stochastic large language models (LLMs) toward epistemically grounded reasoning agents. The proposed architecture targets systems that reason in a structured way, with propositional commitment, metacognitive reasoning, contradiction detection, and the maintenance of normative truth. By integrating insights from epistemology, formal logic, and blockchain technology, the paper seeks to create AI systems that operate under verifiable constraints on belief, justification, and truth.

Epistemic Integrity and Commitment

The paper underscores the necessity for artificial reasoning agents to adhere to epistemic norms that surpass mere prediction. Current AI models, particularly LLMs, excel at generating syntactically coherent text but lack the capacity for principled semantic interpretation and truth preservation. The proposed system mandates internal consistency, forbidding assertions that contradict internal beliefs, while establishing confidence thresholds governed by logical interpretations.
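The two constraints named above, internal consistency and a confidence threshold, can be illustrated with a small sketch. This is not the paper's implementation: the `BeliefStore` class, the `assert_proposition` method, and the 0.8 threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class BeliefStore:
    """Toy belief store mapping a proposition to (truth value, confidence)."""

    threshold: float = 0.8  # hypothetical minimum confidence for a commitment
    beliefs: dict = field(default_factory=dict)

    def assert_proposition(self, proposition: str, value: bool, confidence: float) -> bool:
        """Commit to a proposition only if it passes both checks."""
        if confidence < self.threshold:
            return False  # not confident enough to commit
        held = self.beliefs.get(proposition)
        if held is not None and held[0] != value:
            return False  # would contradict an existing commitment
        self.beliefs[proposition] = (value, confidence)
        return True


store = BeliefStore()
accepted = store.assert_proposition("water boils at 100 C at sea level", True, 0.95)
rejected_low = store.assert_proposition("it will rain tomorrow", True, 0.55)
rejected_conflict = store.assert_proposition("water boils at 100 C at sea level", False, 0.99)
```

Here the first assertion is accepted, the second is refused for falling below the threshold, and the third is refused because it contradicts a belief the store already holds.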

The framework formalizes belief structures, propositional attitudes, metacognitive loops, and recursive verification processes. The architecture rejects paraconsistent logic, which tolerates contradictions, in favor of dynamic reasoning that resolves them, so that the agent's commitments remain truth-preserving.
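A minimal sketch of resolving a contradiction rather than tolerating it might look like the following. The resolution policy here (keep whichever claim has the higher confidence) is an assumption made for illustration; the summary does not state which policy the paper uses, and the `resolve` function is a hypothetical name.

```python
def resolve(beliefs: dict, proposition: str, value: bool, confidence: float) -> dict:
    """Return a new belief dict in which `proposition` holds exactly one truth value."""
    updated = dict(beliefs)
    held = updated.get(proposition)
    if held is None or held[0] == value:
        # No conflict: record the claim, keeping the stronger confidence.
        best = confidence if held is None else max(held[1], confidence)
        updated[proposition] = (value, best)
    elif confidence > held[1]:
        # Conflict, and the incoming claim is better supported: retract the old belief.
        updated[proposition] = (value, confidence)
    # Otherwise the incoming claim loses and the existing belief stays.
    return updated


beliefs = {"the sample is contaminated": (True, 0.6)}
revised = resolve(beliefs, "the sample is contaminated", False, 0.9)
kept = resolve(revised, "the sample is contaminated", True, 0.7)
```

After either call the store holds a single truth value for the proposition, which is the property that distinguishes this approach from a paraconsistent one, where both values could coexist.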

Knowledge Structures and Blockchain Verification

Knowledge graphs and symbolic reasoning are integral to the design: beliefs are embedded as justified positions rather than as mere data tokens. Knowledge is recursively tracked and revised according to normative standards, while immutable blockchain records provide external anchoring, verification, and auditability.

This approach introduces a paradigm shift toward AI systems characterized as epistemic agents, designed not merely to generate plausible textual continuations but to assert justified propositions under logical constraints.
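The auditability idea in this section can be sketched with a local hash chain, in which each belief record stores the hash of its predecessor so that later edits become detectable. This is a simplified stand-in for a blockchain, not the paper's mechanism; `append_record`, `verify_chain`, and the record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first record


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(chain: list, proposition: str, justification: str) -> dict:
    """Append a belief and its justification, linked to the previous record."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    body = {"proposition": proposition, "justification": justification, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    chain.append(record)
    return record


def verify_chain(chain: list) -> bool:
    """Recompute every hash and link; any edited record breaks verification."""
    prev_hash = GENESIS
    for record in chain:
        body = {key: record[key] for key in ("proposition", "justification", "prev_hash")}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


ledger = []
append_record(ledger, "compound X is stable at 300 K", "direct measurement")
append_record(ledger, "compound X can be stored at room temperature", "follows from stability at 300 K")
intact = verify_chain(ledger)

ledger[0]["justification"] = "edited after the fact"
tamper_detected = not verify_chain(ledger)
```

A real deployment would anchor these hashes on an external ledger so that the agent itself cannot rewrite its history; the local list here only demonstrates the tamper-evidence property.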

Implications and Future Developments

The paper delineates pathways for constructing epistemically grounded AI systems, discussing philosophical implications concerning artificial truthfulness and responsibility. By redefining the role of truth in AI, the research proposes new opportunities for reliable, trustworthy cognitive systems in high-stakes domains such as scientific research, decision-making, and legal reasoning.

Future developments are likely to focus on refining this architectural blueprint and on implementing systems that can reason about their own knowledge states through transparent, verifiable processes. The framework brings philosophical and technical work together as a step toward a next generation of reasoning agents committed to epistemic integrity.