- The paper introduces an AR system overlaying a virtual arm on MoCap-based teleoperation to bridge human-robot control mapping gaps.

- It evaluates various AR configurations, with the HumanVertical setup yielding preferred alignment and enhanced system understanding.

- Significant reductions in perceived physical demand, effort, and frustration were observed during posture-control tasks when using AR feedback.

Assisting MoCap-Based Teleoperation of Robot Arm using Augmented Reality Visualisations

Introduction

The paper "Assisting MoCap-Based Teleoperation of Robot Arm using Augmented Reality Visualisations" (2501.05153) explores the integration of augmented reality (AR) into the teleoperation of robot arms, specifically addressing the challenges posed by the inconsistencies between human and robotic arm orientations and appearances. By utilizing AR to render virtual arms as visual references, the authors aim to mediate these inconsistencies, enhancing user performance and experience during teleoperation tasks.

MoCap-Based AR Teleoperation System

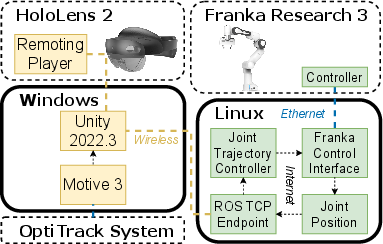

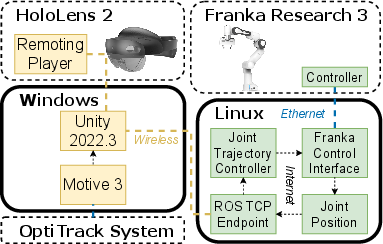

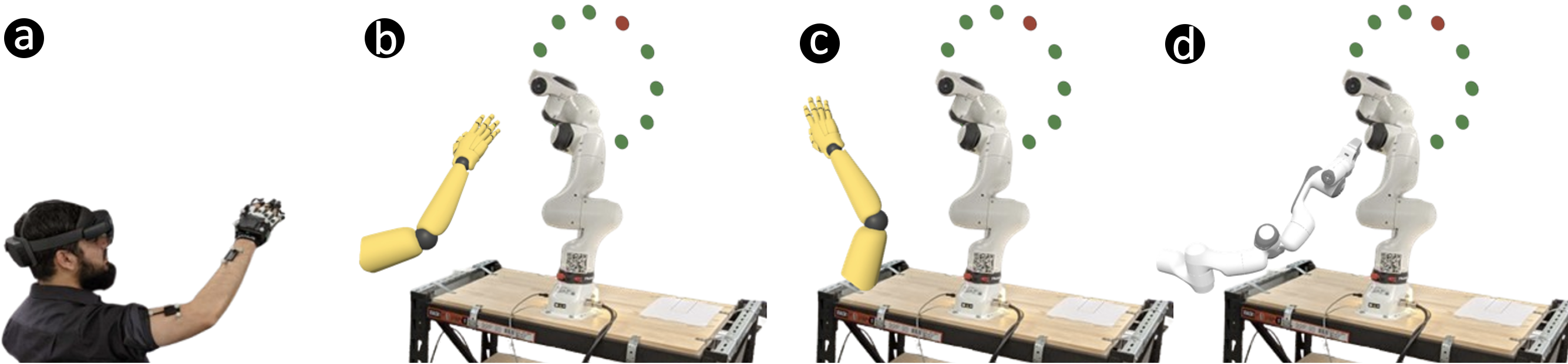

The teleoperation system couples a 7-DOF robotic arm, the Franka Research 3 (FR3), with a motion capture system and an AR display. As Figure 1 shows, a Linux machine runs ROS 2 and the Franka Control Interface, a Windows machine runs Unity and Motive, and a HoloLens 2 renders the AR content.

Figure 1: System architecture: a Linux machine running ROS 2 and the Franka Control Interface, a Windows machine running Unity and Motive, a Franka Research 3 robot, and a HoloLens 2 that renders through a remoting player.

The AR system renders a virtual arm that follows the physical robot arm's movements, giving visual feedback on how human arm joint rotations map to robot arm joint rotations. The goal is to let users control the robot with natural arm movements while making the control mapping easier to understand.
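To make the idea of a joint-rotation mapping concrete, the following minimal sketch derives an elbow angle from three tracked points and clamps it to a joint range before it would be sent to a robot. The summary does not describe the actual mapping, so the marker layout, the function names `joint_angle` and `clamp_to_limits`, and the numeric limits are illustrative assumptions, not the authors' implementation or the FR3's real joint limits.

```python
import math

def joint_angle(proximal, joint, distal):
    """Return the angle (radians) at `joint` between the two adjoining segments."""
    u = [p - j for p, j in zip(proximal, joint)]
    v = [d - j for d, j in zip(distal, joint)]
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(x * x for x in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("segment has zero length; tracked points coincide")
    cosine = sum(a * b for a, b in zip(u, v)) / (norm_u * norm_v)
    # Guard against rounding pushing the cosine slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, cosine)))

def clamp_to_limits(angle, lower, upper):
    """Keep a commanded angle inside a joint's allowed range."""
    return max(lower, min(upper, angle))

# Hypothetical tracked positions in metres (shoulder, elbow, wrist).
shoulder = (0.0, 0.0, 0.0)
elbow = (0.3, 0.0, 0.0)
wrist = (0.3, 0.25, 0.0)

elbow_angle = joint_angle(shoulder, elbow, wrist)

# Placeholder joint range in radians, chosen only for illustration.
command = clamp_to_limits(elbow_angle, 0.2, 3.0)
```

Clamping is shown because a human arm can reach angles a given robot joint cannot; how the real system handles that mismatch is not stated in the summary.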

Study 1: Configuring the AR Visualisation

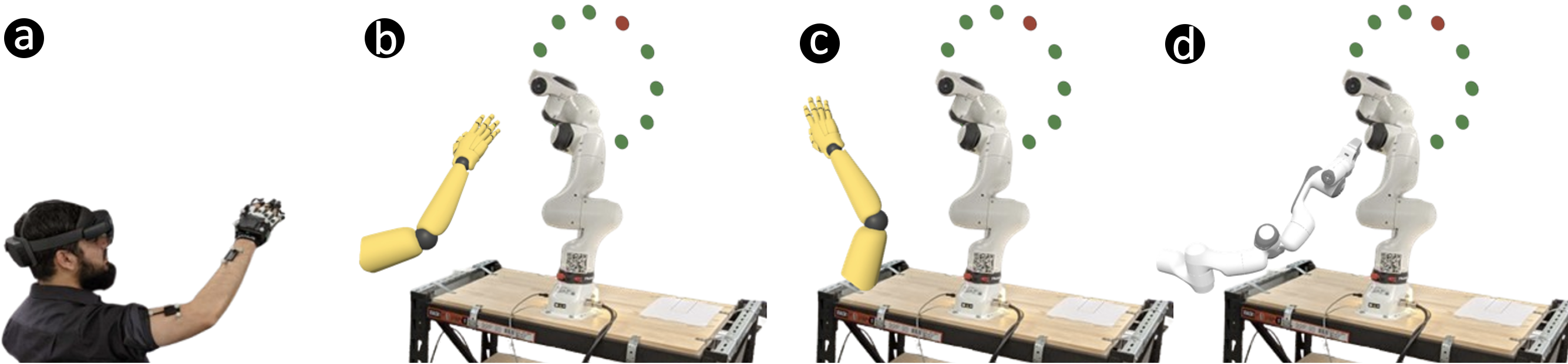

In the first study, the authors investigate various configurations of AR visualisation to determine the optimal setup for assisting with MoCap-based teleoperation. The study compares four visualisation conditions: HumanHorizontal (HH), HumanVertical (HV), RobotHorizontal (RH), and a Baseline with no AR visualisation (Figure 2).

Figure 2: AR Visualisation conditions and apparatus: (a) Participant situated away from the robot; (b) HumanHorizontal arm in AR next to the physical robot; (c) HumanVertical; (d) RobotHorizontal.

The study employs a target-reaching task to evaluate user performance. While no statistically significant differences were found in movement time or NASA-TLX scores among the visualisation conditions, qualitative feedback indicated a preference for the HumanVertical configuration. Participants reported that this setup provided a consistent visual reference with the physical robot, aiding in their understanding of control mappings.
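For readers unfamiliar with NASA-TLX, the sketch below shows how an overall workload score can be computed in the common unweighted ("raw TLX") variant, which averages the six subscale ratings. The summary does not say whether the authors used raw or weighted scores, so this is an assumption, and the example ratings are invented for illustration only.

```python
from statistics import mean

# The six standard NASA-TLX subscales.
TLX_SUBSCALES = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)

def raw_tlx(ratings):
    """Return the unweighted mean of the six subscale ratings."""
    missing = [name for name in TLX_SUBSCALES if name not in ratings]
    if missing:
        raise ValueError(f"missing subscales: {missing}")
    return mean(ratings[name] for name in TLX_SUBSCALES)

# Invented ratings on a 0-100 scale, not data from the paper.
example_ratings = {
    "mental_demand": 40,
    "physical_demand": 60,
    "temporal_demand": 30,
    "performance": 20,
    "effort": 50,
    "frustration": 40,
}

overall = raw_tlx(example_ratings)
```

Per-subscale comparisons, such as physical demand alone, use the individual ratings directly rather than this aggregate.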

Study 2: Posture Control

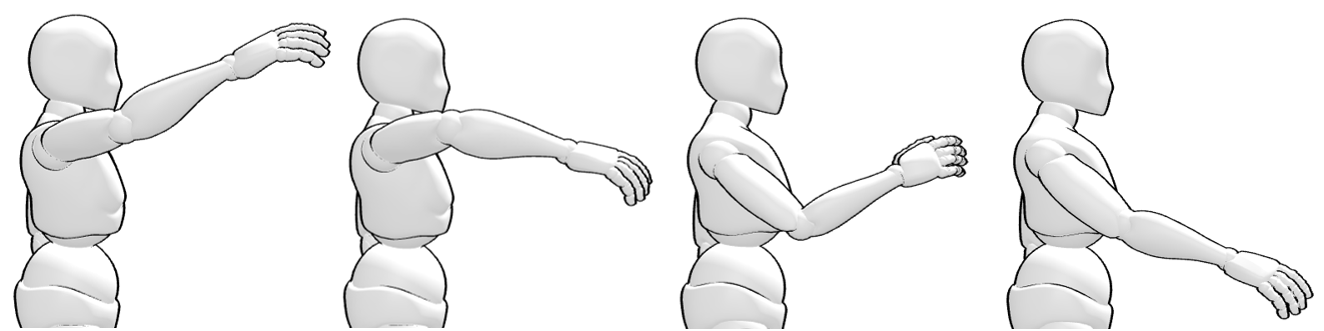

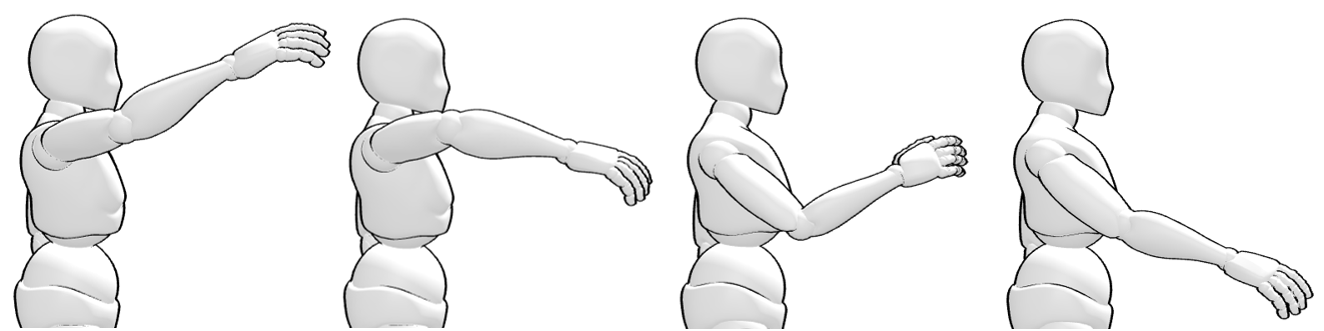

The second study further explores the efficacy of the AR visualisation in a posture-matching task, comparing two conditions: AR Arm and No Arm. Participants were required to match predefined postures using the robot arm, with visual feedback provided through spheres rendered on the robot's key joints (Figures 3 and 4).

Figure 3: S2 postures: a) Elbow up, wrist up; b) Elbow up, wrist down; c) Elbow down, wrist up; d) Elbow down, wrist down.

Figure 4: Study 2: participant, condition No Arm and AR Arm. Blue and red sphere rendered on elbow and wrist respectively. Lighter blue and red denote targets to match posture.
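One simple way to decide whether a posture has been matched is to compare the current elbow and wrist sphere positions against their targets. The sketch below assumes a Euclidean distance threshold; the function name `posture_matched`, the 5 cm tolerance, and the coordinates are hypothetical, since the summary does not state how a match was determined.

```python
import math

def posture_matched(elbow, wrist, elbow_target, wrist_target, tolerance_m=0.05):
    """Return True when both joints lie within `tolerance_m` of their targets."""
    elbow_error = math.dist(elbow, elbow_target)
    wrist_error = math.dist(wrist, wrist_target)
    return elbow_error <= tolerance_m and wrist_error <= tolerance_m

# Hypothetical positions in metres, in the robot's base frame.
elbow_target = (0.00, 0.00, 0.50)
wrist_target = (0.30, 0.00, 0.80)

close_enough = posture_matched(
    (0.01, 0.00, 0.50), (0.30, 0.02, 0.80), elbow_target, wrist_target
)
too_far = posture_matched(
    (0.01, 0.00, 0.50), (0.30, 0.20, 0.80), elbow_target, wrist_target
)
```

Requiring both joints to be within tolerance reflects the task's use of separate elbow and wrist targets, so a correct wrist position with the wrong elbow configuration does not count as a match.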

The results indicated significant reductions in perceived physical demand, effort, and frustration when using the AR Arm configuration compared to the No Arm baseline. Qualitative feedback suggested that the AR visualisation served as an effective learning tool for understanding teleoperation control mappings, particularly during the initial stages of learning.

Conclusion

The research demonstrates the potential of AR visualisations to enhance MoCap-based teleoperation of robot arms by bridging the perceptual gap between human operators and robotic systems. Although Study 1 found no statistically significant performance differences between configurations, participants preferred HumanVertical for its visual alignment with the physical robot. The findings also position AR as a transitional learning aid rather than a permanent guidance tool. This work lays a foundation for future research into AR for robotic teleoperation, particularly in contexts requiring intuitive and seamless human-robot interaction.