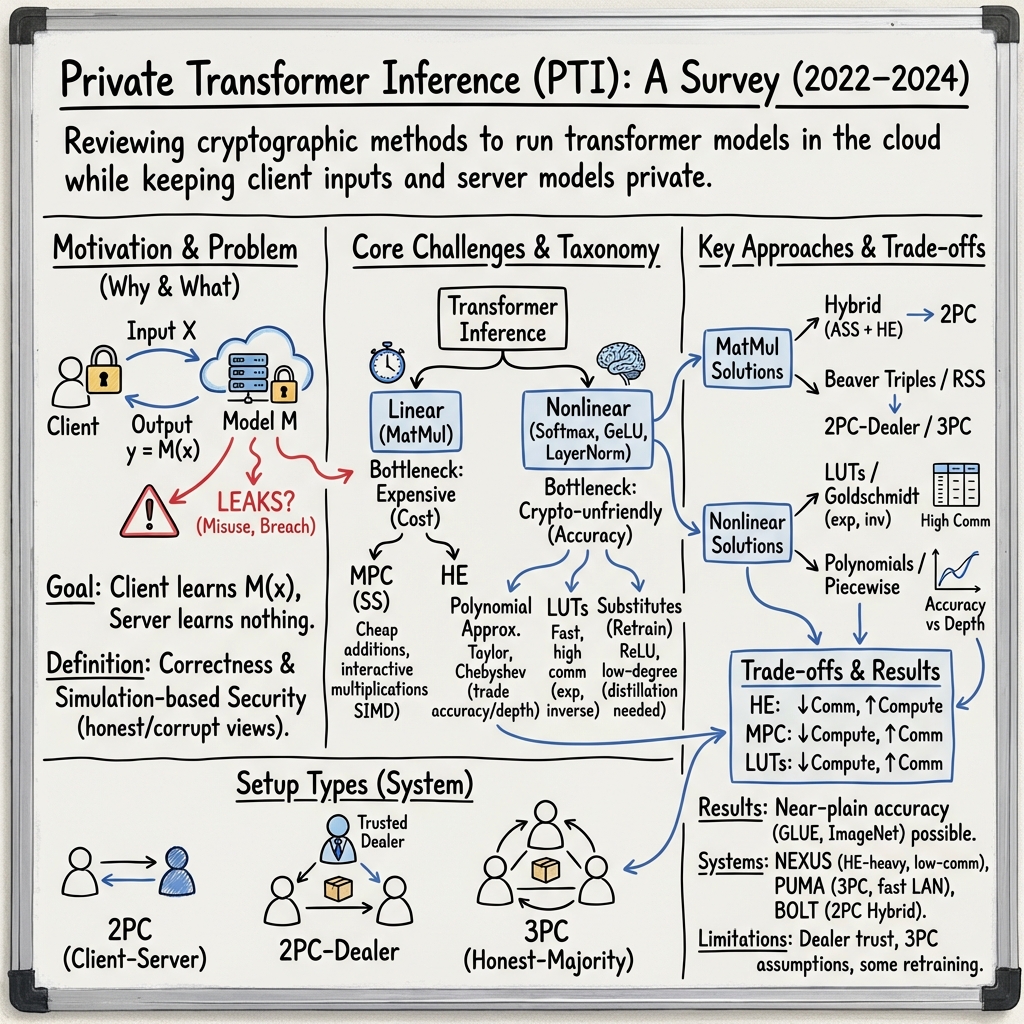

- The survey shows how cryptographic methods such as homomorphic encryption (HE) and secure multi-party computation (MPC) are integrated to secure transformer inference with minimal accuracy loss.

- It evaluates trade-offs between privacy and computational efficiency, addressing challenges like large-scale matrix multiplications and non-linear operations.

- The survey outlines future research directions including hybrid cryptographic schemes and algorithmic optimizations for scalable, secure NLP applications.

Introduction

Transformer models such as BERT and GPT have reshaped natural language processing (NLP). Their strong performance, however, comes with significant privacy concerns, particularly in Machine Learning as a Service (MLaaS) deployments, where clients send their inputs to a model hosted by a provider. This survey covers Private Transformer Inference (PTI) and the key cryptographic techniques used to keep inference private while preserving computational efficiency and model performance.

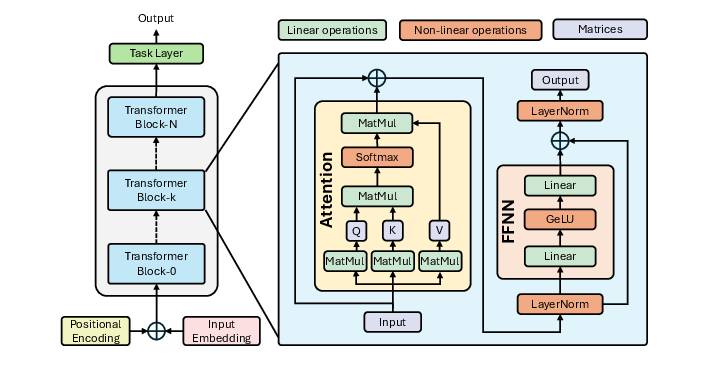

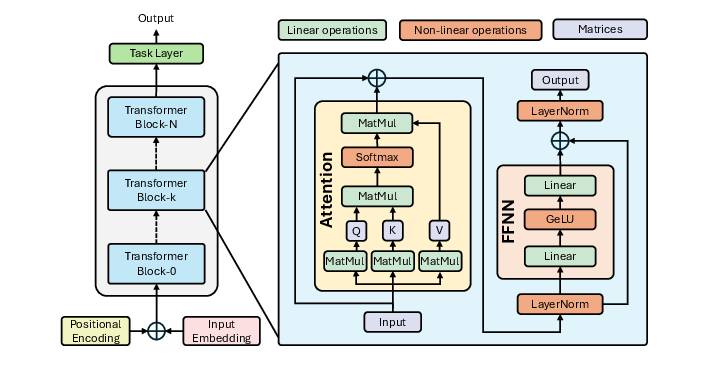

Figure 1: Structure and workflow of a Transformer.

Background and Key Concepts

Private Inference

Private inference is a cryptographic protocol for running model inference without compromising data privacy. The server must learn nothing about the client's input, and the client must learn nothing about the model beyond the inference result. This requirement is crucial when sensitive data is involved, as is often the case in medical or financial domains.

Transformers leverage attention mechanisms to model dependencies in input sequences, supporting various applications from sentiment analysis to machine translation. The architecture's reliance on large matrix multiplications and non-linear operations like Softmax, GeLU, and LayerNorm poses unique challenges to privacy-preserving computation, necessitating sophisticated cryptographic strategies.
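To make the split between linear and non-linear operations concrete, the following is a minimal plaintext sketch of one encoder block in NumPy. It is an illustration under simplifying assumptions (single attention head, no biases, no learned LayerNorm scale, tanh-based GeLU), and names such as `encoder_block` and the weight keys are hypothetical rather than taken from the survey.

```python
import numpy as np

def softmax(z):
    # Non-linear: needs exp, a max, and a division.
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def gelu(x):
    # Non-linear: tanh-based GeLU approximation.
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))

def layer_norm(x, eps=1e-5):
    # Non-linear: needs a reciprocal square root.
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

def encoder_block(x, p):
    # Linear: projections of the private input by the model weights.
    q, k, v = x @ p["Wq"], x @ p["Wk"], x @ p["Wv"]
    # Matmul between two input-dependent values (both private in PTI).
    scores = q @ k.T / np.sqrt(q.shape[-1])
    attn = softmax(scores) @ v
    h = layer_norm(x + attn @ p["Wo"])
    # Feed-forward: linear, GeLU, linear.
    ff = gelu(h @ p["W1"]) @ p["W2"]
    return layer_norm(h + ff)

rng = np.random.default_rng(0)
d, d_ff, seq = 8, 32, 4
shapes = {"Wq": (d, d), "Wk": (d, d), "Wv": (d, d),
          "Wo": (d, d), "W1": (d, d_ff), "W2": (d_ff, d)}
params = {name: rng.normal(scale=0.1, size=shape) for name, shape in shapes.items()}
x = rng.normal(size=(seq, d))
out = encoder_block(x, params)
print(out.shape)
```

In a private setting, every `@` above becomes a matrix-multiplication protocol over encrypted or secret-shared data, and each of `softmax`, `gelu`, and `layer_norm` needs an approximation or a dedicated secure protocol.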

Cryptographic Techniques for PTI

The primary cryptographic methods employed in PTI include:

- Homomorphic Encryption (HE): Enables computation directly on encrypted data, without decrypting it, preserving privacy at the cost of substantially higher computational cost.

- Secure Multi-Party Computation (MPC): Facilitates collaborative computation over private inputs from multiple parties, typically incurring substantial communication overhead.

The survey examines the integration of these techniques in PTI, highlighting the trade-offs between privacy, computational efficiency, and inference accuracy.
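As a rough illustration of the two primitives, the sketch below implements a toy Paillier cryptosystem (an additively homomorphic scheme, used here as a simple stand-in; PTI systems typically rely on lattice-based HE) and two-party additive secret sharing, the basic building block of many MPC protocols. The tiny primes, the modulus `MOD`, and all function names are assumptions chosen for readability, and the parameters are far too small to be secure.

```python
import math
import secrets

# --- Toy Paillier (additively homomorphic). Tiny primes: insecure, illustration only.
P, Q = 1009, 1013
N = P * Q
N2 = N * N
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # private key: lcm(P-1, Q-1)
MU = pow(LAM, -1, N)                               # valid for generator g = N + 1

def encrypt(m):
    # Pick a random r that is invertible modulo N.
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return pow(1 + N, m, N2) * pow(r, N, N2) % N2

def decrypt(c):
    u = pow(c, LAM, N2)
    return (u - 1) // N * MU % N

# Multiplying ciphertexts adds the plaintexts.
c_sum = encrypt(20) * encrypt(22) % N2
he_sum = decrypt(c_sum)             # 42
# Raising a ciphertext to a public power scales the plaintext.
c_scaled = pow(encrypt(7), 6, N2)
he_scaled = decrypt(c_scaled)       # 42

# --- Two-party additive secret sharing over the integers modulo 2^32.
MOD = 2**32

def share(x):
    s0 = secrets.randbelow(MOD)
    return s0, (x - s0) % MOD       # each share alone is uniformly random

def reconstruct(s0, s1):
    return (s0 + s1) % MOD

a0, a1 = share(20)
b0, b1 = share(22)
# Each party adds its own shares locally; no communication is needed.
mpc_sum = reconstruct((a0 + b0) % MOD, (a1 + b1) % MOD)  # 42
print(he_sum, he_scaled, mpc_sum)
```

The contrast matches the trade-off described above: the HE path costs modular exponentiations on large numbers but needs no interaction, while secret sharing is cheap locally and pays in communication as soon as shared values must be multiplied.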

Significant challenges include:

- Matrix Multiplications: Transformers' reliance on large-scale matrix multiplications necessitates efficient protocols to handle encrypted data without introducing prohibitive overhead.

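- Complex Non-linear Operations: Functions like GeLU and Softmax are not crypto-friendly. HE schemes natively support only additions and multiplications, and exponentials, divisions, and comparisons are expensive under MPC, so these functions require approximation (for example, by polynomials) or re-implementation to be feasible under secure computation frameworks.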
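Both challenges can be sketched in a few lines. The first part below multiplies two additively secret-shared matrices using a Beaver triple, a standard MPC technique; the second fits a polynomial to GeLU so that it can be evaluated with additions and multiplications only. This is a sketch under stated assumptions: a trusted dealer generates the triple, both parties are simulated in one process, the small prime modulus `Q`, the interval `[-4, 4]`, and the degree 8 are arbitrary illustrative choices, and none of the names come from the survey.

```python
import math
import numpy as np

Q = 65521  # small prime modulus, chosen so int64 products cannot overflow
rng = np.random.default_rng(0)  # not cryptographically secure; illustration only

def share(m):
    # Split m into two additive shares modulo Q.
    s0 = rng.integers(0, Q, size=m.shape, dtype=np.int64)
    return s0, (m - s0) % Q

def beaver_matmul(x_sh, w_sh):
    (x0, x1), (w0, w1) = x_sh, w_sh
    # Assumption: a trusted dealer hands out shares of random A, B and C = A @ B.
    a = rng.integers(0, Q, size=x0.shape, dtype=np.int64)
    b = rng.integers(0, Q, size=w0.shape, dtype=np.int64)
    (a0, a1), (b0, b1), (c0, c1) = share(a), share(b), share(a @ b % Q)
    # The parties open E = X - A and F = W - B; these reveal nothing about X or W.
    e = (x0 - a0 + x1 - a1) % Q
    f = (w0 - b0 + w1 - b1) % Q
    # X @ W = E @ F + E @ B + A @ F + C, computed share-wise.
    z0 = (e @ f + e @ b0 + a0 @ f + c0) % Q
    z1 = (e @ b1 + a1 @ f + c1) % Q
    return z0, z1

X = rng.integers(0, 100, size=(3, 4), dtype=np.int64)  # client's private input
W = rng.integers(0, 100, size=(4, 2), dtype=np.int64)  # server's private weights
z0, z1 = beaver_matmul(share(X), share(W))
product = (z0 + z1) % Q  # equals (X @ W) % Q after reconstruction

def gelu(x):
    # Exact GeLU via the error function.
    return 0.5 * x * (1.0 + np.vectorize(math.erf)(x / math.sqrt(2.0)))

# Least-squares polynomial fit on a bounded interval; outside it the
# polynomial diverges, so inputs must be kept in range.
xs = np.linspace(-4.0, 4.0, 801)
poly_gelu = np.poly1d(np.polyfit(xs, gelu(xs), deg=8))
max_err = float(np.max(np.abs(poly_gelu(xs) - gelu(xs))))
print(np.array_equal(product, (X @ W) % Q), max_err)
```

The polynomial degree trades accuracy against cost: each extra multiplication level adds multiplicative depth under HE or extra communication rounds under MPC.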

Empirical Evaluation and Discussion

The survey reports on the empirical efficacy of various PTI approaches, drawing on studies that implement PTI across different transformer models and datasets. Notable findings include:

- Accuracy Retention: Several approaches stay close to the accuracy of the unprotected model while keeping both the client's input and the model private. For instance, studies leveraging HE often report minimal accuracy loss, underscoring HE's potential in privacy-critical applications.

- Efficiency Considerations: While HE offers strong privacy guarantees, its computational demands call for optimizations such as ciphertext packing and parallel processing. MPC methods, by contrast, are more communication-intensive, though fast modern networks can mitigate the resulting latency.
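Ciphertext packing can be illustrated without any encryption library. In packed HE schemes, one ciphertext holds a vector of slots and supports slot-wise addition, slot-wise multiplication, and cyclic rotation. The sketch below simulates those three operations on plain NumPy arrays and uses the well-known diagonal method to compute a matrix-vector product with them; `rotate` and `diagonal_matvec` are hypothetical names, and the choice of this particular packing strategy is an assumption for illustration rather than something the survey prescribes.

```python
import numpy as np

def rotate(slots, k):
    # Stands in for a homomorphic rotation: result[j] = slots[(j + k) % n].
    return np.roll(slots, -k)

def diagonal_matvec(M, x):
    # Computes M @ x using only slot-wise multiply, add, and rotate.
    # x plays the role of the client's packed ciphertext;
    # the diagonals of M are plaintext vectors held by the server.
    n = len(x)
    acc = np.zeros(n)
    for i in range(n):
        diag = np.array([M[j, (j + i) % n] for j in range(n)])
        acc += diag * rotate(x, i)
    return acc

rng = np.random.default_rng(0)
M = rng.normal(size=(4, 4))
x = rng.normal(size=4)
print(np.allclose(diagonal_matvec(M, x), M @ x))
```

For an n-by-n matrix this needs n rotations and n slot-wise multiplications on a single packed ciphertext, instead of one ciphertext operation per matrix entry.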

Future Directions

The survey identifies several open directions for PTI:

- Hybrid Approaches: Combining MPC and HE could harness the strengths of both, potentially offsetting individual weaknesses.

- Algorithmic Optimizations: Developing more efficient algorithms for handling non-linear transformations within the cryptographic domain remains a critical area of research.

- Scalable Deployments: As transformer models grow in size, scalable PTI solutions will be essential for real-world applicability, especially in cloud-based environments.

Conclusion

Privacy-preserving transformer inference sits at the intersection of cryptography and AI, offering frameworks that mitigate privacy risks without giving up the capabilities of large-scale models. The survey underscores the need for continued research into efficient, scalable, and secure inference mechanisms to support responsible AI deployments across diverse sectors.